Meng Yue

From Natural Language to Solver-Ready Power System Optimization: An LLM-Assisted, Validation-in-the-Loop Framework

Aug 11, 2025Abstract:This paper introduces a novel Large Language Models (LLMs)-assisted agent that automatically converts natural-language descriptions of power system optimization scenarios into compact, solver-ready formulations and generates corresponding solutions. In contrast to approaches that rely solely on LLM to produce solutions directly, the proposed method focuses on discovering a mathematically compatible formulation that can be efficiently solved by off-the-shelf optimization solvers. Directly using LLMs to produce solutions often leads to infeasible or suboptimal results, as these models lack the numerical precision and constraint-handling capabilities of established optimization solvers. The pipeline integrates a domain-aware prompt and schema with an LLM, enforces feasibility through systematic validation and iterative repair, and returns both solver-ready models and user-facing results. Using the unit commitment problem as a representative case study, the agent produces optimal or near-optimal schedules along with the associated objective costs. Results demonstrate that coupling the solver with task-specific validation significantly enhances solution reliability. This work shows that combining AI with established optimization frameworks bridges high-level problem descriptions and executable mathematical models, enabling more efficient decision-making in energy systems

Mitigating Parameter Degeneracy using Joint Conditional Diffusion Model for WECC Composite Load Model in Power Systems

Nov 15, 2024

Abstract:Data-driven modeling for dynamic systems has gained widespread attention in recent years. Its inverse formulation, parameter estimation, aims to infer the inherent model parameters from observations. However, parameter degeneracy, where different combinations of parameters yield the same observable output, poses a critical barrier to accurately and uniquely identifying model parameters. In the context of WECC composite load model (CLM) in power systems, utility practitioners have observed that CLM parameters carefully selected for one fault event may not perform satisfactorily in another fault. Here, we innovate a joint conditional diffusion model-based inverse problem solver (JCDI), that incorporates a joint conditioning architecture with simultaneous inputs of multi-event observations to improve parameter generalizability. Simulation studies on the WECC CLM show that the proposed JCDI effectively reduces uncertainties of degenerate parameters, thus the parameter estimation error is decreased by 42.1% compared to a single-event learning scheme. This enables the model to achieve high accuracy in predicting power trajectories under different fault events, including electronic load tripping and motor stalling, outperforming standard deep reinforcement learning and supervised learning approaches. We anticipate this work will contribute to mitigating parameter degeneracy in system dynamics, providing a general parameter estimation framework across various scientific domains.

Conformalized Prediction of Post-Fault Voltage Trajectories Using Pre-trained and Finetuned Attention-Driven Neural Operators

Oct 31, 2024Abstract:This paper proposes a new data-driven methodology for predicting intervals of post-fault voltage trajectories in power systems. We begin by introducing the Quantile Attention-Fourier Deep Operator Network (QAF-DeepONet), designed to capture the complex dynamics of voltage trajectories and reliably estimate quantiles of the target trajectory without any distributional assumptions. The proposed operator regression model maps the observed portion of the voltage trajectory to its unobserved post-fault trajectory. Our methodology employs a pre-training and fine-tuning process to address the challenge of limited data availability. To ensure data privacy in learning the pre-trained model, we use merging via federated learning with data from neighboring buses, enabling the model to learn the underlying voltage dynamics from such buses without directly sharing their data. After pre-training, we fine-tune the model with data from the target bus, allowing it to adapt to unique dynamics and operating conditions. Finally, we integrate conformal prediction into the fine-tuned model to ensure coverage guarantees for the predicted intervals. We evaluated the performance of the proposed methodology using the New England 39-bus test system considering detailed models of voltage and frequency controllers. Two metrics, Prediction Interval Coverage Probability (PICP) and Prediction Interval Normalized Average Width (PINAW), are used to numerically assess the model's performance in predicting intervals. The results show that the proposed approach offers practical and reliable uncertainty quantification in predicting the interval of post-fault voltage trajectories.

Federated contrastive learning models for prostate cancer diagnosis and Gleason grading

Feb 17, 2023

Abstract:The application effect of artificial intelligence (AI) in the field of medical imaging is remarkable. Robust AI model training requires large datasets, but data collection faces communication, ethics, and privacy protection constraints. Fortunately, federated learning can solve the above problems by coordinating multiple clients to train the model without sharing the original data. In this study, we design a federated contrastive learning framework (FCL) for large-scale pathology images and the heterogeneity challenges. It enhances the model's generalization ability by maximizing the attention consistency between the local client and server models. To alleviate the privacy leakage problem when transferring parameters and verify the robustness of FCL, we use differential privacy to further protect the model by adding noise. We evaluate the effectiveness of FCL on the cancer diagnosis task and Gleason grading task on 19,635 prostate cancer WSIs from multiple clients. In the diagnosis task, the average AUC of 7 clients is 95% when the categories are relatively balanced, and our FCL achieves 97%. In the Gleason grading task, the average Kappa of 6 clients is 0.74, and the Kappa of FCL reaches 0.84. Furthermore, we also validate the robustness of the model on external datasets(one public dataset and two private datasets). In addition, to better explain the classification effect of the model, we show whether the model focuses on the lesion area by drawing a heatmap. Finally, FCL brings a robust, accurate, low-cost AI training model to biomedical research, effectively protecting medical data privacy.

On Approximating the Dynamic Response of Synchronous Generators via Operator Learning: A Step Towards Building Deep Operator-based Power Grid Simulators

Jan 29, 2023

Abstract:This paper designs an Operator Learning framework to approximate the dynamic response of synchronous generators. One can use such a framework to (i) design a neural-based generator model that can interact with a numerical simulator of the rest of the power grid or (ii) shadow the generator's transient response. To this end, we design a data-driven Deep Operator Network~(DeepONet) that approximates the generators' infinite-dimensional solution operator. Then, we develop a DeepONet-based numerical scheme to simulate a given generator's dynamic response over a short/medium-term horizon. The proposed numerical scheme recursively employs the trained DeepONet to simulate the response for a given multi-dimensional input, which describes the interaction between the generator and the rest of the system. Furthermore, we develop a residual DeepONet numerical scheme that incorporates information from mathematical models of synchronous generators. We accompany this residual DeepONet scheme with an estimate for the prediction's cumulative error. We also design a data aggregation (DAgger) strategy that allows (i) employing supervised learning to train the proposed DeepONets and (ii) fine-tuning the DeepONet using aggregated training data that the DeepONet is likely to encounter during interactive simulations with other grid components. Finally, as a proof of concept, we demonstrate that the proposed DeepONet frameworks can effectively approximate the transient model of a synchronous generator.

DeepGraphONet: A Deep Graph Operator Network to Learn and Zero-shot Transfer the Dynamic Response of Networked Systems

Sep 21, 2022

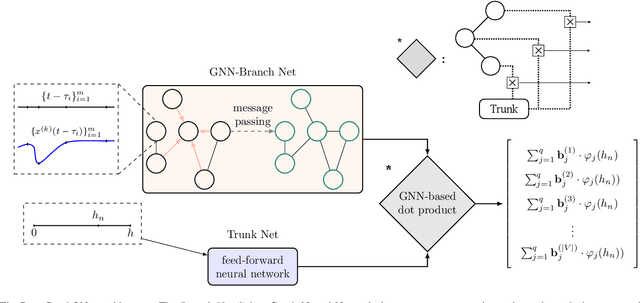

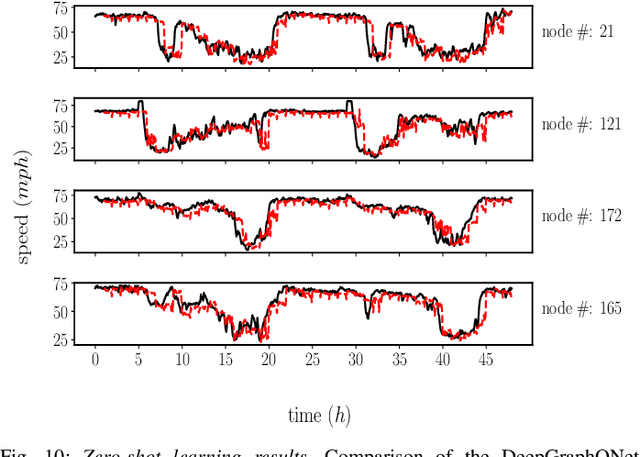

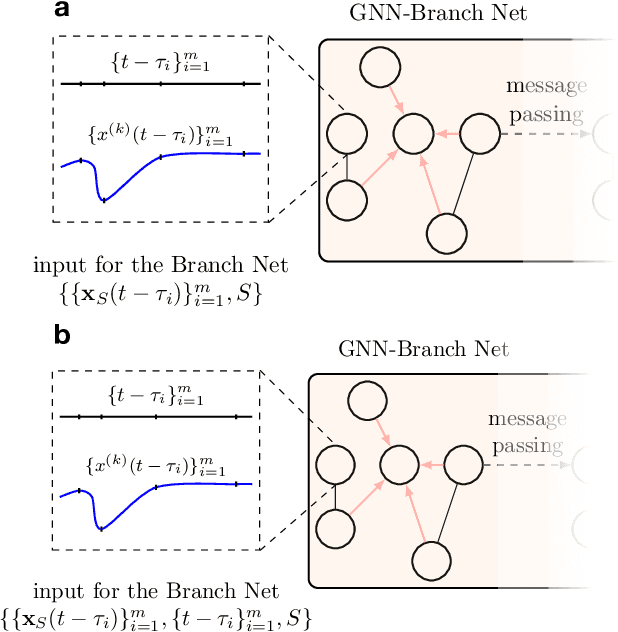

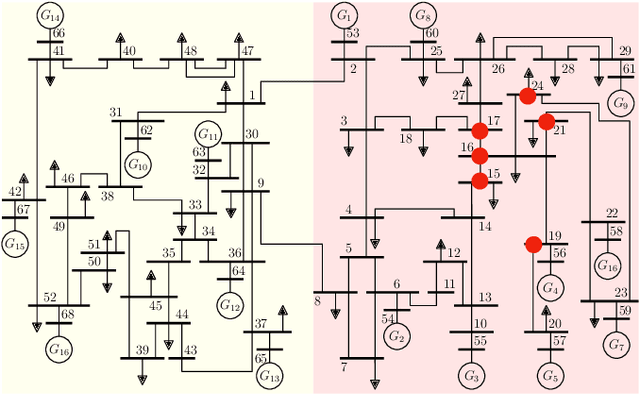

Abstract:This paper develops a Deep Graph Operator Network (DeepGraphONet) framework that learns to approximate the dynamics of a complex system (e.g. the power grid or traffic) with an underlying sub-graph structure. We build our DeepGraphONet by fusing the ability of (i) Graph Neural Networks (GNN) to exploit spatially correlated graph information and (ii) Deep Operator Networks~(DeepONet) to approximate the solution operator of dynamical systems. The resulting DeepGraphONet can then predict the dynamics within a given short/medium-term time horizon by observing a finite history of the graph state information. Furthermore, we design our DeepGraphONet to be resolution-independent. That is, we do not require the finite history to be collected at the exact/same resolution. In addition, to disseminate the results from a trained DeepGraphONet, we design a zero-shot learning strategy that enables using it on a different sub-graph. Finally, empirical results on the (i) transient stability prediction problem of power grids and (ii) traffic flow forecasting problem of a vehicular system illustrate the effectiveness of the proposed DeepGraphONet.

DeepONet-Grid-UQ: A Trustworthy Deep Operator Framework for Predicting the Power Grid's Post-Fault Trajectories

Feb 15, 2022Abstract:This paper proposes a new data-driven method for the reliable prediction of power system post-fault trajectories. The proposed method is based on the fundamentally new concept of Deep Operator Networks (DeepONets). Compared to traditional neural networks that learn to approximate functions, DeepONets are designed to approximate nonlinear operators. Under this operator framework, we design a DeepONet to (1) take as inputs the fault-on trajectories collected, for example, via simulation or phasor measurement units, and (2) provide as outputs the predicted post-fault trajectories. In addition, we endow our method with a much-needed ability to balance efficiency with reliable/trustworthy predictions via uncertainty quantification. To this end, we propose and compare two methods that enable quantifying the predictive uncertainty. First, we propose a \textit{Bayesian DeepONet} (B-DeepONet) that uses stochastic gradient Hamiltonian Monte-Carlo to sample from the posterior distribution of the DeepONet parameters. Then, we propose a \textit{Probabilistic DeepONet} (Prob-DeepONet) that uses a probabilistic training strategy to equip DeepONets with a form of automated uncertainty quantification, at virtually no extra computational cost. Finally, we validate the predictive power and uncertainty quantification capability of the proposed B-DeepONet and Prob-DeepONet using the IEEE 16-machine 68-bus system.

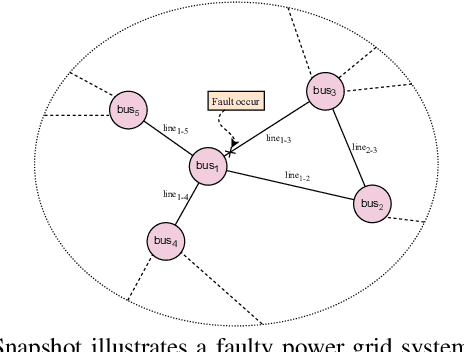

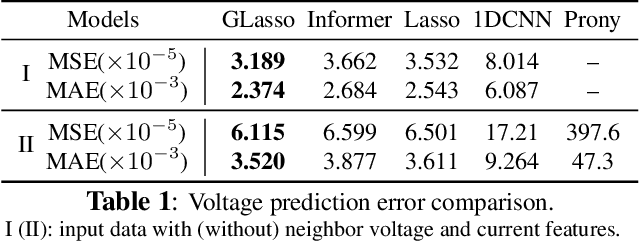

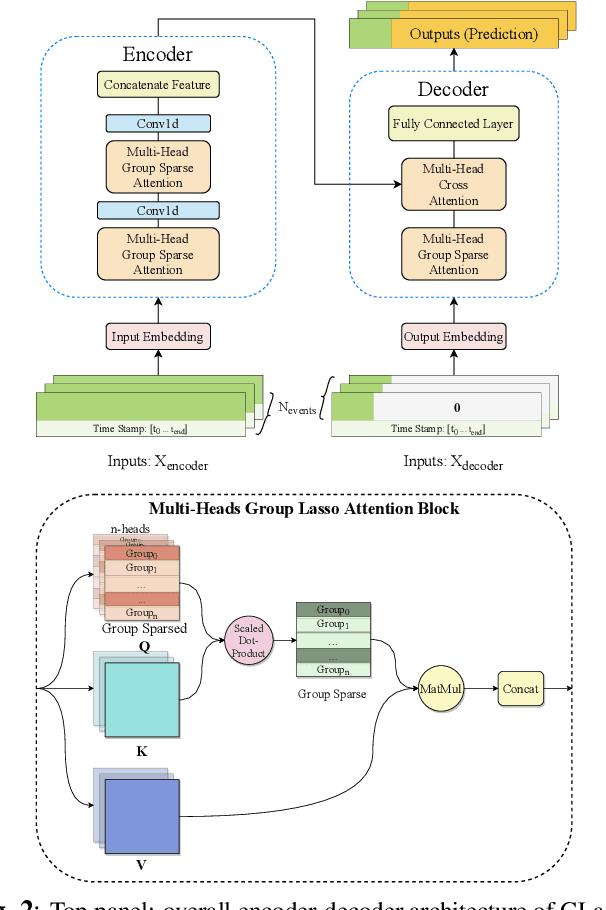

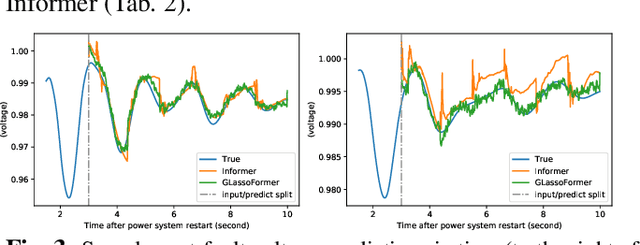

glassoformer: a query-sparse transformer for post-fault power grid voltage prediction

Jan 22, 2022

Abstract:We propose GLassoformer, a novel and efficient transformer architecture leveraging group Lasso regularization to reduce the number of queries of the standard self-attention mechanism. Due to the sparsified queries, GLassoformer is more computationally efficient than the standard transformers. On the power grid post-fault voltage prediction task, GLassoformer shows remarkably better prediction than many existing benchmark algorithms in terms of accuracy and stability.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge