Lee Martie

Rapid Development of Compositional AI

Feb 12, 2023

Abstract: Compositional AI systems, which combine multiple artificial intelligence components with other application components to solve a larger problem, have no known pattern of development and are often built in a bespoke, ad hoc style. This slows development and makes the resulting components harder to reuse in future applications. To support the full rapid development cycle of compositional AI applications, we have developed a novel framework called (Bee)* (written as a regular expression and pronounced "beestar"). We illustrate how (Bee)* supports building integrated, scalable, and interactive compositional AI applications with a simplified developer experience.

* Accepted to ICSE 2023, NIER track

Distributed Adversarial Training to Robustify Deep Neural Networks at Scale

Jun 13, 2022

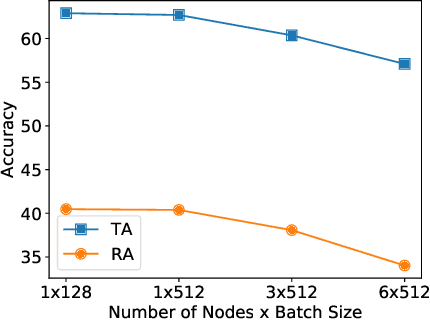

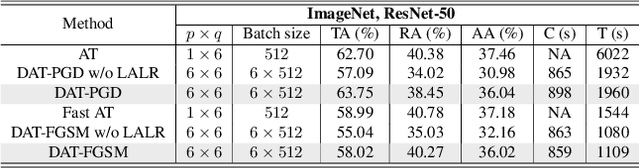

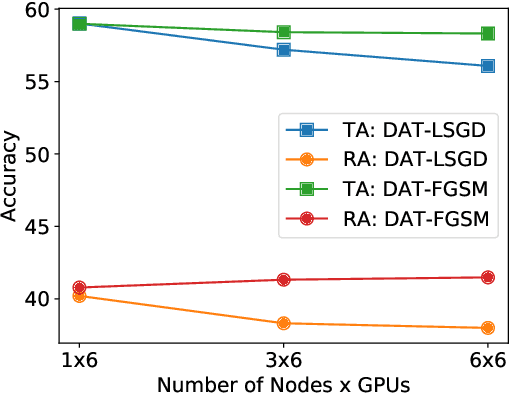

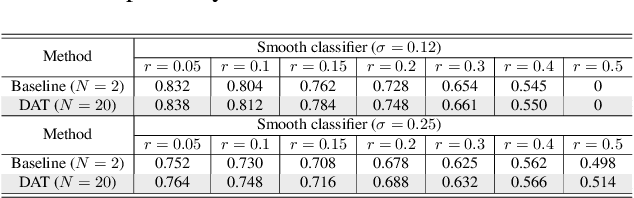

Abstract: Current deep neural networks (DNNs) are vulnerable to adversarial attacks, in which adversarial perturbations to the inputs can change or manipulate the classification. To defend against such attacks, an effective and popular approach known as adversarial training (AT) has been shown to mitigate the negative impact of adversarial attacks by means of a min-max robust training method. While AT is effective, it remains unclear whether it can be successfully adapted to the distributed learning context. The power of distributed optimization over multiple machines enables us to scale up robust training to large models and datasets. Spurred by that, we propose distributed adversarial training (DAT), a large-batch adversarial training framework implemented over multiple machines. We show that DAT is general: it supports training over labeled and unlabeled data, multiple types of attack generation methods, and the gradient compression operations favored for distributed optimization. Theoretically, we provide, under standard conditions in optimization theory, the convergence rate of DAT to first-order stationary points in general non-convex settings. Empirically, we demonstrate that DAT either matches or outperforms state-of-the-art robust accuracies and achieves a graceful training speedup (e.g., on ResNet-50 under ImageNet). Code is available at https://github.com/dat-2022/dat.
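The min-max idea behind adversarial training can be illustrated with a small sketch. The code below is not the DAT implementation and does not come from the paper's repository; it is a single-machine toy that assumes a linear classifier with logistic loss, labels in {+1, -1}, and an L-infinity perturbation budget `eps`. All function names and default values are hypothetical. The inner loop approximately maximizes the loss over the perturbation (projected signed-gradient ascent), and the outer step minimizes the loss on the perturbed batch. In a distributed setting, the batch would be sharded across machines and the gradients aggregated, which this sketch does not attempt.

```python
import math

def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))

def logistic_loss(w, x, y):
    """Logistic loss of a linear classifier on one example; y is +1 or -1."""
    return math.log1p(math.exp(-y * dot(w, x)))

def loss_slope(w, x, y):
    """Derivative of the loss with respect to the score w.x."""
    return -y / (1.0 + math.exp(y * dot(w, x)))

def sign(v):
    return (v > 0) - (v < 0)

def pgd_perturbation(w, x, y, eps=0.1, step=0.05, iters=10):
    """Inner maximization (assumed attack): signed-gradient ascent on the
    input, projected back onto the L-infinity ball of radius eps."""
    delta = [0.0] * len(x)
    for _ in range(iters):
        x_adv = [xi + di for xi, di in zip(x, delta)]
        slope = loss_slope(w, x_adv, y)
        grad_x = [slope * wi for wi in w]  # gradient with respect to the input
        delta = [max(-eps, min(eps, di + step * sign(gi)))
                 for di, gi in zip(delta, grad_x)]
    return delta

def adversarial_training_step(w, batch, lr=0.1, eps=0.1):
    """Outer minimization: one gradient step on the perturbed batch."""
    avg_grad = [0.0] * len(w)
    for x, y in batch:
        delta = pgd_perturbation(w, x, y, eps=eps)
        x_adv = [xi + di for xi, di in zip(x, delta)]
        slope = loss_slope(w, x_adv, y)
        for i, xi in enumerate(x_adv):
            avg_grad[i] += slope * xi / len(batch)  # gradient with respect to w
    return [wi - lr * gi for wi, gi in zip(w, avg_grad)]
```

For a linear model the inner maximization has a closed-form solution, so the iterative attack here is only meant to mirror the general structure used with deep networks, where no closed form exists.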

Reflecting After Learning for Understanding

Oct 18, 2019

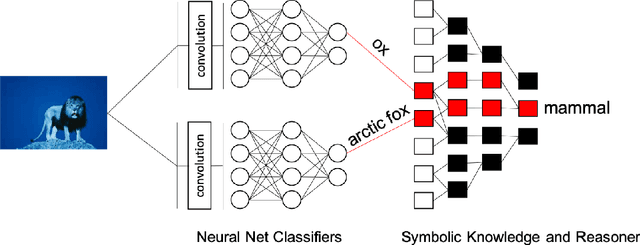

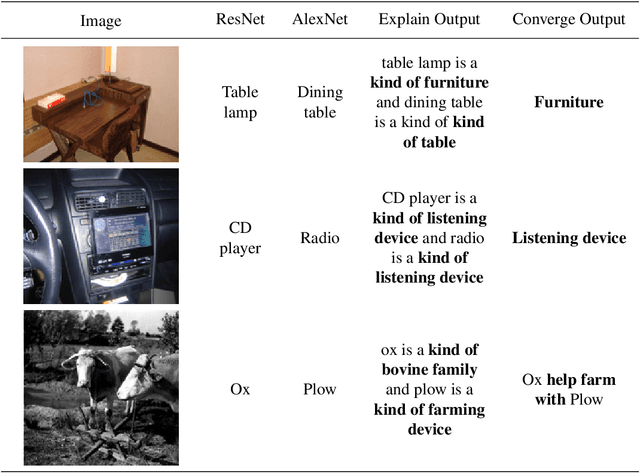

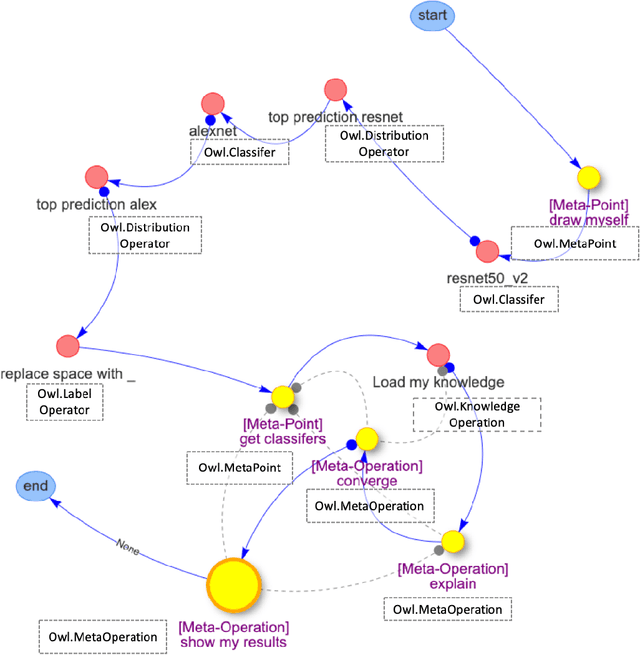

Abstract: Today, image classification is a common way for systems to process visual content. Although neural network approaches to classification have made great progress in reducing error rates, it is not clear what this means for a cognitive system that needs to make sense of the multiple and competing predictions from its own classifiers. As a step toward addressing this, we present a novel framework that uses meta-reasoning and meta-operations to unify predictions into abstractions, properties, or relationships. Using the framework on images from ImageNet, we demonstrate systems that unify 41% to 46% of predictions in general and 67% to 75% of predictions when the systems can explain their conceptual differences. We also demonstrate a system "in the wild" by feeding live video images through it, and show it unifying 51% of predictions in general and 69% of predictions when the system can explain their differences conceptually. In a survey given to 24 participants, we found that 87% of the unified predictions describe their corresponding images.
