Jianhang Tang

Prototype-Regularized Federated Learning for Cross-Domain Aspect Sentiment Triplet Extraction

Apr 10, 2026

Abstract: Aspect Sentiment Triplet Extraction (ASTE) aims to extract all sentiment triplets, each consisting of an aspect term, an opinion term, and a sentiment polarity, from a sentence. Existing methods are typically trained on individual datasets in isolation and therefore fail to jointly capture the feature representations shared across domains. Moreover, data privacy constraints prevent centralized data aggregation. To address these challenges, we propose Prototype-based Cross-Domain Span Prototype extraction (PCD-SpanProto), a prototype-regularized federated learning framework that enables distributed clients to exchange class-level prototypes instead of full model parameters. Specifically, we design a weighted performance-aware aggregation strategy to improve the global prototype under domain heterogeneity, and a contrastive regularization module to promote intra-class compactness and inter-class separability across clients. Extensive experiments on four ASTE datasets demonstrate that our method outperforms baselines and reduces communication costs, validating the effectiveness of prototype-based cross-domain knowledge transfer.
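The prototype exchange described in this abstract can be illustrated with a minimal sketch. The abstract does not give the actual aggregation rule, so everything below is an assumption: a prototype is taken to be the mean embedding of a class on one client, and the hypothetical `client_scores` (for example, each client's validation F1) stand in for the "performance-aware" weights. The function names `class_prototypes` and `aggregate_prototypes` are illustrative and do not come from the paper.

```python
from typing import Dict, List

Vector = List[float]


def class_prototypes(embeddings: List[Vector], labels: List[int]) -> Dict[int, Vector]:
    """Compute one prototype per class as the mean embedding (assumed definition)."""
    sums: Dict[int, Vector] = {}
    counts: Dict[int, int] = {}
    for vec, label in zip(embeddings, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vec)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {c: [s / counts[c] for s in sums[c]] for c in sums}


def aggregate_prototypes(
    client_protos: List[Dict[int, Vector]],
    client_scores: List[float],
) -> Dict[int, Vector]:
    """Score-weighted average of client prototypes, class by class.

    Weights are normalized over the clients that actually hold a class,
    so a client without examples of that class does not dilute it.
    """
    classes = set().union(*[p.keys() for p in client_protos])
    global_protos: Dict[int, Vector] = {}
    for c in classes:
        holders = [(p[c], s) for p, s in zip(client_protos, client_scores) if c in p]
        total = sum(score for _, score in holders)
        if total > 0:
            weights = [score / total for _, score in holders]
        else:
            # Fall back to a uniform average if all scores are zero.
            weights = [1.0 / len(holders)] * len(holders)
        dim = len(holders[0][0])
        global_protos[c] = [
            sum(w * vec[i] for (vec, _), w in zip(holders, weights))
            for i in range(dim)
        ]
    return global_protos


# Two hypothetical clients; only the second has examples of class 1.
protos_a = class_prototypes([[1.0, 0.0], [3.0, 0.0]], [0, 0])
protos_b = {0: [0.0, 4.0], 1: [1.0, 1.0]}
global_protos = aggregate_prototypes([protos_a, protos_b], [0.75, 0.25])
```

Only one vector per class is exchanged in this sketch, which is the reason a prototype-based scheme can cost less communication than sending full model parameters.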

LMM-Incentive: Large Multimodal Model-based Incentive Design for User-Generated Content in Web 3.0

Oct 06, 2025

Abstract: Web 3.0 represents the next generation of the Internet and is widely recognized as a decentralized ecosystem that focuses on value expression and data ownership. By leveraging blockchain and artificial intelligence technologies, Web 3.0 offers unprecedented opportunities for users to create, own, and monetize their content, thereby elevating User-Generated Content (UGC) to an entirely new level. However, some self-interested users may exploit the limitations of content curation mechanisms and generate low-quality content with less effort, obtaining platform rewards under information asymmetry. Such behavior can undermine Web 3.0 performance. To this end, we propose LMM-Incentive, a novel Large Multimodal Model (LMM)-based incentive mechanism for UGC in Web 3.0. Specifically, we propose an LMM-based contract-theoretic model to motivate users to generate high-quality UGC, thereby mitigating the adverse selection problem that arises from information asymmetry. To alleviate potential moral hazards after contract selection, we leverage LMM agents to evaluate UGC quality, which is the primary component of the contract, and use prompt engineering techniques to improve the evaluation performance of the LMM agents. Recognizing that traditional contract design methods cannot effectively adapt to the dynamic environment of Web 3.0, we develop an improved Mixture of Experts (MoE)-based Proximal Policy Optimization (PPO) algorithm for optimal contract design. Simulation results demonstrate the superiority of the proposed MoE-based PPO algorithm over representative benchmarks in the context of contract design. Finally, we deploy the designed contract within an Ethereum smart contract framework, further validating the effectiveness of the proposed scheme.
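To make the adverse-selection setting concrete, the sketch below checks the two standard constraints of a contract menu: individual rationality (each user type gets non-negative utility from its own item) and incentive compatibility (no type prefers another type's item). The abstract does not state the utility model, so the form used here, reward minus an effort cost that falls as the user's type rises, is purely an assumption, as are the names `utility` and `is_feasible`.

```python
from typing import List, Tuple

Contract = Tuple[float, float]  # (required quality, reward)


def utility(theta: float, quality: float, reward: float, unit_cost: float = 1.0) -> float:
    """Hypothetical user utility: reward minus effort cost.

    Assumption: a higher type (larger theta) reaches the same quality
    at a lower cost.
    """
    return reward - unit_cost * quality / theta


def is_feasible(types: List[float], contracts: List[Contract], eps: float = 1e-12) -> bool:
    """Check individual rationality (IR) and incentive compatibility (IC).

    contracts[i] is the menu item intended for types[i].
    """
    for i, theta in enumerate(types):
        own = utility(theta, *contracts[i])
        if own < -eps:
            return False  # IR violated: this type would rather not participate
        for quality, reward in contracts:
            if utility(theta, quality, reward) > own + eps:
                return False  # IC violated: this type prefers another item
    return True


types = [1.0, 2.0]  # hypothetical low and high user types
good_menu = [(1.0, 1.0), (2.0, 1.5)]
bad_menu = [(1.0, 1.0), (2.0, 1.2)]  # high type would pick the low-type item

good_ok = is_feasible(types, good_menu)
bad_ok = is_feasible(types, bad_menu)
```

In `bad_menu` the high type earns 0.2 from its own item but 0.5 from the low-type item, so the menu fails incentive compatibility. A contract designer, whether analytical or learned, searches for menus that pass such checks while maximizing the platform's objective.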

Cross Task Neural Architecture Search for EEG Signal Classifications

Oct 01, 2022

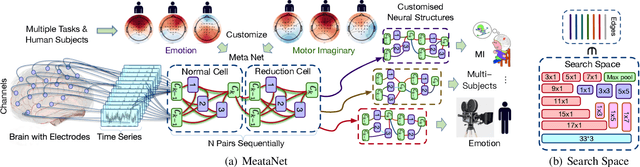

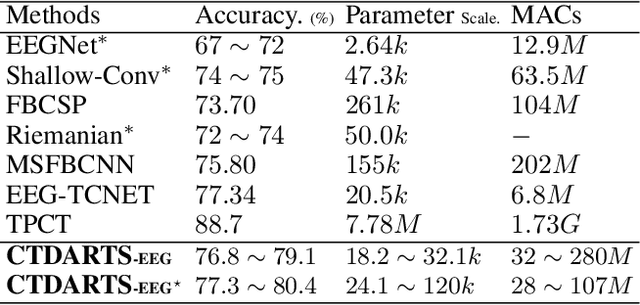

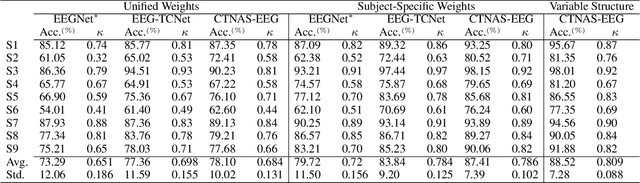

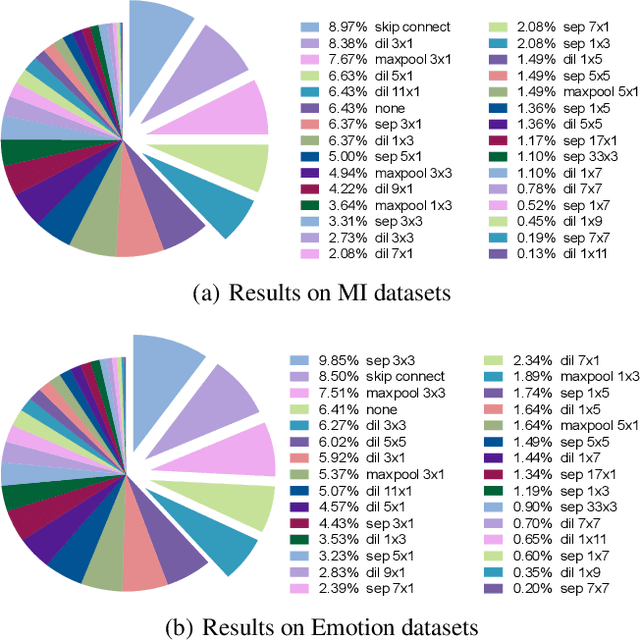

Abstract: Electroencephalograms (EEGs) are recordings of brain dynamics measured outside the brain and have been widely used in non-invasive brain-computer interface applications. Recently, various neural network approaches have been proposed to improve the accuracy of EEG signal recognition. However, these approaches rely heavily on manually designed network structures for different tasks, and these structures generally do not share the same empirical design across tasks. In this paper, we propose a cross-task neural architecture search (CTNAS-EEG) framework for EEG signal recognition, which can automatically design the network structure across tasks and improve the recognition accuracy of EEG signals. Specifically, a compatible search space for cross-task searching and an efficient constrained searching method are proposed to overcome the challenges posed by EEG signals. By unifying structure search across different EEG tasks, this work is the first to explore and analyze how the searched structures differ across tasks. Moreover, by introducing architecture search, this work is the first to analyze model performance when the model structure is customized for each human subject. Detailed experimental results suggest that the proposed CTNAS-EEG can reach state-of-the-art performance on different EEG tasks, such as Motor Imagery (MI) and emotion recognition. Extensive experiments and detailed analysis are provided as a reference for follow-up research.
