Jia Guo

Cycle Inverse-Consistent TransMorph: A Balanced Deep Learning Framework for Brain MRI Registration

Mar 23, 2026

Abstract: Deformable image registration plays a fundamental role in medical image analysis by enabling spatial alignment of anatomical structures across subjects. While recent deep learning-based approaches have significantly improved computational efficiency, many existing methods remain limited in capturing long-range anatomical correspondence and maintaining deformation consistency. In this work, we present a cycle inverse-consistent transformer-based framework for deformable brain MRI registration. The model integrates a Swin-UNet architecture with bidirectional consistency constraints, enabling the joint estimation of forward and backward deformation fields. This design allows the framework to capture both local anatomical details and global spatial relationships while improving deformation stability. We conduct a comprehensive evaluation of the proposed framework on a large multi-center dataset consisting of 2851 T1-weighted brain MRI scans aggregated from 13 public datasets. Experimental results demonstrate that the proposed framework achieves strong and balanced performance across multiple quantitative evaluation metrics while maintaining stable and physically plausible deformation fields. Detailed quantitative comparisons with baseline methods, including ANTs, ICNet, and VoxelMorph, are provided in the appendix. These properties make the proposed framework suitable for large-scale neuroimaging datasets where both accuracy and deformation stability are critical.
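The bidirectional consistency idea in this abstract can be illustrated with a toy 1D example. The abstract does not give the loss, so the following is only a sketch under assumptions: displacement fields are plain Python lists, `sample` and `inverse_consistency_error` are hypothetical helper names, and the penalty is the mean squared residual left after applying the forward displacement and then the backward one.

```python
def sample(field, pos):
    """Linearly interpolate a 1D displacement field at a fractional position.

    Positions outside the grid are clamped to the nearest border.
    """
    pos = min(max(pos, 0.0), len(field) - 1.0)
    lo = int(pos)
    hi = min(lo + 1, len(field) - 1)
    t = pos - lo
    return (1.0 - t) * field[lo] + t * field[hi]


def inverse_consistency_error(forward, backward):
    """Mean squared residual of composing forward and backward displacements.

    A point x is moved to x + forward[x]; the backward field sampled there
    should move it back to x, so the residual forward[x] + backward(x + forward[x])
    is zero for a perfectly inverse-consistent pair (assumed penalty form).
    """
    total = 0.0
    for x, u in enumerate(forward):
        residual = u + sample(backward, x + u)
        total += residual ** 2
    return total / len(forward)


# Toy fields: a uniform shift of +1 voxel and two candidate backward fields.
forward = [1.0] * 8
consistent_backward = [-1.0] * 8
inconsistent_backward = [-0.5] * 8

print(inverse_consistency_error(forward, consistent_backward))
print(inverse_consistency_error(forward, inconsistent_backward))
```

In a learned registration model a term of this kind would typically be added to the similarity and smoothness losses for both directions; the 3D case replaces the linear interpolation with trilinear sampling.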

Implicit Neural Representation for Multiuser Continuous Aperture Array Beamforming

Mar 17, 2026

Abstract: Implicit neural representations (INRs) can parameterize continuous beamforming functions in continuous aperture arrays (CAPAs) and thus enable efficient online inference. Existing INR-based beamforming methods for CAPAs, however, typically suffer from high training complexity and limited generalizability. To address these issues, we first derive a closed-form expression for the achievable sum rate in multiuser multi-CAPA systems where both the base station and the users are equipped with CAPAs. For sum-rate maximization, we then develop a functional weighted minimum mean-squared error (WMMSE) algorithm by using orthonormal basis expansion to convert the functional optimization into an equivalent parameter optimization problem. Based on this functional WMMSE algorithm, we further propose BeamINR, an INR-based beamforming method implemented with a graph neural network to exploit the permutation-equivariant structure of the optimal beamforming policy; its update equation is designed from the structure of the functional WMMSE iterations. Simulation results show that the functional WMMSE algorithm achieves the highest sum rate at the cost of high online complexity. Compared with baseline INRs, BeamINR substantially reduces inference latency, lowers training complexity, and generalizes better across the number of users and carrier frequency.

Active Inference for Micro-Gesture Recognition: EFE-Guided Temporal Sampling and Adaptive Learning

Mar 08, 2026

Abstract: Micro-gestures are subtle and transient movements triggered by unconscious neural and emotional activities, holding great potential for human-computer interaction and clinical monitoring. However, their low amplitude, short duration, and strong inter-subject variability make existing deep models prone to degradation under low-sample, noisy, and cross-subject conditions. This paper presents an active inference-based framework for micro-gesture recognition, featuring Expected Free Energy (EFE)-guided temporal sampling and uncertainty-aware adaptive learning. The model actively selects the most discriminative temporal segments under EFE guidance, enabling dynamic observation and information gain maximization. Meanwhile, sample weighting driven by predictive uncertainty mitigates the effects of label noise and distribution shift. Experiments on the SMG dataset demonstrate the effectiveness of the proposed method, achieving consistent improvements across multiple mainstream backbones. Ablation studies confirm that both the EFE-guided observation and the adaptive learning mechanism are crucial to the performance gains. This work offers an interpretable and scalable paradigm for temporal behavior modeling under low-resource and noisy conditions, with broad applicability to wearable sensing, HCI, and clinical emotion monitoring.

A Survey of Pinching-Antenna Systems (PASS)

Jan 26, 2026

Abstract: The pinching-antenna system (PASS), recently proposed as a flexible-antenna technology, has been regarded as a promising solution for several challenges in next-generation wireless networks. It provides large-scale antenna reconfiguration, establishes stable line-of-sight links, mitigates signal blockage, and exploits near-field advantages through its distinctive architecture. This article aims to present a comprehensive overview of the state of the art in PASS. The fundamental principles of PASS are first discussed, including its hardware architecture, circuit and physical models, and signal models. Several emerging PASS designs, such as segmented PASS (S-PASS), center-fed PASS (C-PASS), and multi-mode PASS (M-PASS), are subsequently introduced, and their design features are discussed. In addition, the properties and promising applications of PASS for wireless sensing are reviewed. On this basis, recent progress in the performance analysis of PASS for both communications and sensing is surveyed, and the performance gains achieved by PASS are highlighted. Existing research contributions in optimization and machine learning are also summarized, with the practical challenges of beamforming and resource allocation being identified in relation to the unique transmission structure and propagation characteristics of PASS. Finally, several variants of PASS are presented, and key implementation challenges that remain open for future study are discussed.

Native Intelligence Emerges from Large-Scale Clinical Practice: A Retinal Foundation Model with Deployment Efficiency

Dec 16, 2025

Abstract: Current retinal foundation models remain constrained by curated research datasets that lack authentic clinical context, and require extensive task-specific optimization for each application, limiting their deployment efficiency in low-resource settings. Here, we show that these barriers can be overcome by building clinical native intelligence directly from real-world medical practice. Our key insight is that large-scale telemedicine programs, where expert centers provide remote consultations across distributed facilities, represent a natural reservoir for learning clinical image interpretation. We present ReVision, a retinal foundation model that learns from the natural alignment between 485,980 color fundus photographs and their corresponding diagnostic reports, accumulated through a decade-long telemedicine program spanning 162 medical institutions across China. Through extensive evaluation across 27 ophthalmic benchmarks, we demonstrate that ReVision enables deployment efficiency with minimal local resources. Without any task-specific training, ReVision achieves zero-shot disease detection with an average AUROC of 0.946 across 12 public benchmarks and 0.952 on 3 independent clinical cohorts. When minimal adaptation is feasible, ReVision matches extensively fine-tuned alternatives while requiring orders of magnitude fewer trainable parameters and labeled examples. The learned representations also transfer effectively to new clinical sites, imaging domains, imaging modalities, and systemic health prediction tasks. In a prospective reader study with 33 ophthalmologists, ReVision's zero-shot assistance improved diagnostic accuracy by 14.8% across all experience levels. These results demonstrate that clinical native intelligence can be directly extracted from clinical archives without any further annotation to build medical AI systems suited to various low-resource settings.

Collaborative Reconstruction and Repair for Multi-class Industrial Anomaly Detection

Dec 12, 2025

Abstract: Industrial anomaly detection is a challenging open-set task that aims to identify unknown anomalous patterns deviating from the normal data distribution. To avoid the significant memory consumption and limited generalizability brought by building separate models per class, we focus on developing a unified framework for multi-class anomaly detection. However, under this challenging setting, conventional reconstruction-based networks often suffer from an identity mapping problem, where they directly replicate input features regardless of whether they are normal or anomalous, resulting in detection failures. To address this issue, this study proposes a novel framework termed Collaborative Reconstruction and Repair (CRR), which transforms reconstruction into repair. First, we optimize the decoder to reconstruct normal samples while repairing synthesized anomalies. Consequently, it generates distinct representations for anomalous regions and similar representations for normal areas compared to the encoder's output. Second, we implement feature-level random masking to ensure that the representations from the decoder contain sufficient local information. Finally, to minimize detection errors arising from the discrepancies between feature representations from the encoder and decoder, we train a segmentation network supervised by synthetic anomaly masks, thereby enhancing localization performance. Extensive experiments on industrial datasets demonstrate that CRR effectively mitigates the identity mapping issue and achieves state-of-the-art performance in multi-class industrial anomaly detection.

Every Step Evolves: Scaling Reinforcement Learning for Trillion-Scale Thinking Model

Oct 21, 2025

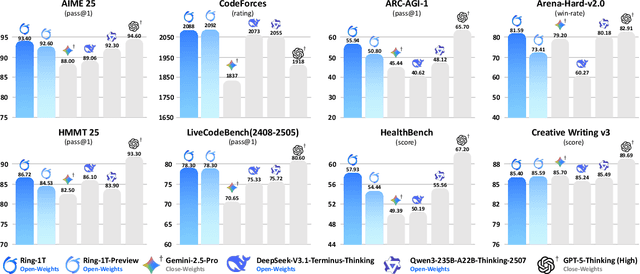

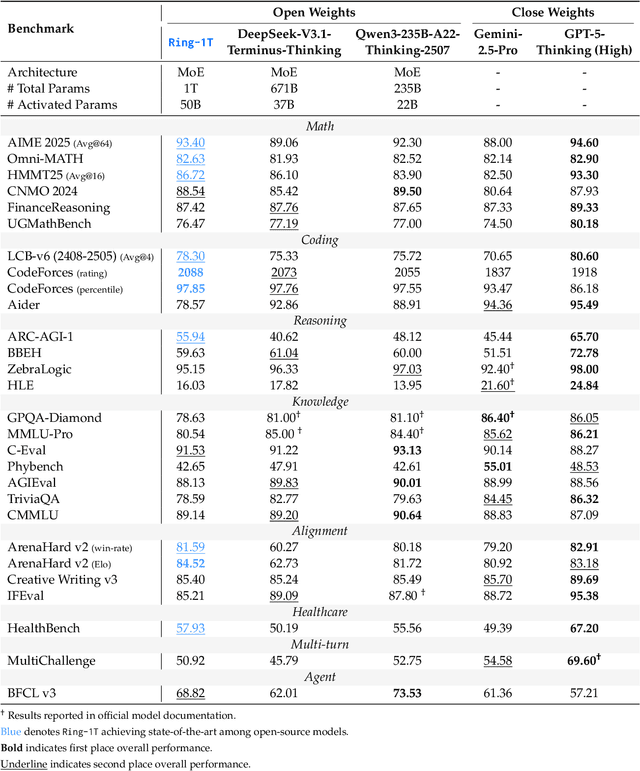

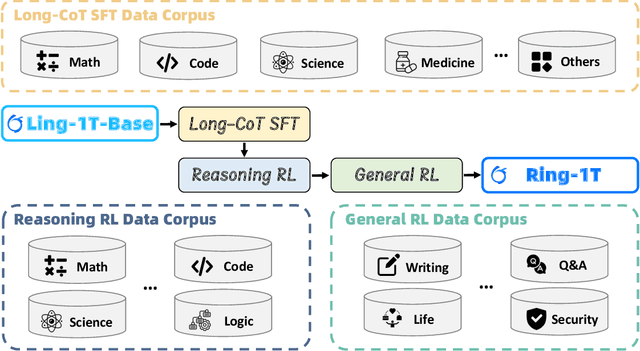

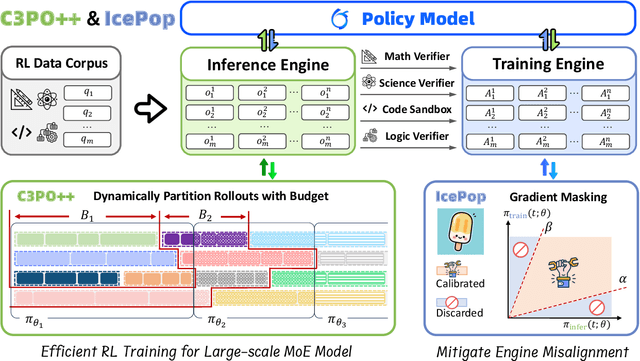

Abstract: We present Ring-1T, the first open-source, state-of-the-art thinking model with trillion-scale parameters. It features 1 trillion total parameters and activates approximately 50 billion per token. Training such models at a trillion-parameter scale introduces unprecedented challenges, including train-inference misalignment, inefficiencies in rollout processing, and bottlenecks in the RL system. To address these, we pioneer three interconnected innovations: (1) IcePop stabilizes RL training via token-level discrepancy masking and clipping, resolving instability from training-inference mismatches; (2) C3PO++ improves resource utilization for long rollouts under a token budget by dynamically partitioning them, thereby obtaining high time efficiency; and (3) ASystem, a high-performance RL framework designed to overcome the systemic bottlenecks that impede trillion-parameter model training. Ring-1T delivers breakthrough results across critical benchmarks: 93.4 on AIME-2025, 86.72 on HMMT-2025, 2088 on CodeForces, and 55.94 on ARC-AGI-v1. Notably, it attains a silver medal-level result on the IMO-2025, underscoring its exceptional reasoning capabilities. By releasing the complete 1T parameter MoE model, we provide the research community with direct access to cutting-edge reasoning capabilities. This contribution marks a significant milestone in democratizing large-scale reasoning intelligence and establishes a new baseline for open-source model performance.
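The abstract describes IcePop only as token-level discrepancy masking and clipping, so the following is a loose sketch of that general idea rather than the authors' method. It assumes each token carries a log-probability from the training engine and one from the inference engine, and that a token is dropped from the update when the probability ratio leaves a band. The function name `masked_token_weights` and the bounds `low` and `high` are hypothetical.

```python
import math


def masked_token_weights(train_logprobs, infer_logprobs, low=0.5, high=2.0):
    """Per-token weights from the train/inference probability ratio.

    Assumed rule (not taken from the abstract): keep the ratio as the weight
    when it lies inside [low, high], and set the weight to 0 otherwise so the
    token contributes nothing to the policy update.
    """
    weights = []
    for lp_train, lp_infer in zip(train_logprobs, infer_logprobs):
        ratio = math.exp(lp_train - lp_infer)
        weights.append(ratio if low <= ratio <= high else 0.0)
    return weights


# Three tokens: engines agree, training engine far higher, training engine far lower.
train = [math.log(0.5), math.log(0.9), math.log(0.1)]
infer = [math.log(0.5), math.log(0.3), math.log(0.4)]
weights = masked_token_weights(train, infer)
print(weights)
```

Only the first token keeps a nonzero weight here; the other two fall outside the assumed band and are masked.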

Pinching-Antenna Systems (PASS): A Tutorial

Aug 11, 2025

Abstract: Pinching antenna systems (PASS) present a breakthrough among the flexible-antenna technologies, and distinguish themselves by facilitating large-scale antenna reconfiguration, line-of-sight creation, scalable implementation, and near-field benefits, thus bringing wireless communications from the last mile to the last meter. A comprehensive tutorial is presented in this paper. First, the fundamentals of PASS are discussed, including PASS signal models, hardware models, power radiation models, and pinching antenna activation methods. Building upon this, the information-theoretic capacity limits achieved by PASS are characterized, and several typical performance metrics of PASS-based communications are analyzed to demonstrate its superiority over conventional antenna technologies. Next, the pinching beamforming design is investigated. The corresponding power scaling law is first characterized. For the joint transmit and pinching design in the general multiple-waveguide case, 1) a pair of transmission strategies is proposed for PASS-based single-user communications to validate the superiority of PASS, namely sub-connected and fully connected structures; and 2) three practical protocols are proposed for facilitating PASS-based multi-user communications, namely waveguide switching, waveguide division, and waveguide multiplexing. A possible implementation of PASS in wideband communications is further highlighted. Moreover, the channel state information acquisition in PASS is elaborated with a pair of promising solutions. To overcome the high complexity and suboptimality inherent in conventional convex-optimization-based approaches, machine-learning-based methods for operating PASS are also explored, focusing on selected deep neural network architectures and training algorithms. Finally, several promising applications of PASS in next-generation wireless networks are highlighted.

When Attention is Beneficial for Learning Wireless Resource Allocation Efficiently?

Jul 03, 2025

Abstract: Owing to the use of the attention mechanism to leverage the dependency across tokens, Transformers are efficient for natural language processing. By harnessing the permutation properties that broadly exist in resource allocation policies, each mapping measurable environmental parameters (e.g., channel matrix) to optimized variables (e.g., precoding matrix), graph neural networks (GNNs) are promising for learning these policies efficiently in terms of scalability and generalizability. To reap the benefits of both architectures, there is a recent trend of incorporating attention mechanisms into GNNs for learning wireless policies. Nevertheless, is the attention mechanism really needed for resource allocation? In this paper, we strive to answer this question by analyzing the structures of functions defined on sets and of numerical algorithms, given that the permutation properties of wireless policies are induced by the involved sets (e.g., the user set). In particular, we prove that the permutation equivariant functions on a single set can be recursively expressed by two types of functions: one involves attention, and the other does not. We proceed to re-express the numerical algorithms for optimizing several representative resource allocation problems in recursive forms. We find that when interference (e.g., multi-user or inter-data stream interference) is not reflected in the measurable parameters of a policy, attention needs to be used to model the interference. With this insight, we establish a framework for designing GNNs that align with these structures. Taking reconfigurable intelligent surface-aided hybrid precoding as an example, the learning efficiency of the proposed GNN is validated via simulations.
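The distinction between set functions with and without attention can be made concrete with a toy example. This sketch is not the paper's recursive construction; it assumes scalar per-element features and shows one standard permutation-equivariant layer of each kind, with hypothetical names `mean_pool_layer` and `attention_layer` and illustrative scalar weights `a` and `b`. Reordering the input elements reorders the outputs in the same way for both layers.

```python
import math


def mean_pool_layer(xs, a, b):
    """Equivariant layer without attention: y_i = a*x_i + b*mean_j(x_j)."""
    mean = sum(xs) / len(xs)
    return [a * x + b * mean for x in xs]


def attention_layer(xs, a, b):
    """Equivariant layer with attention.

    y_i = a*x_i + b*sum_j w_ij*x_j, where w_ij is a softmax over j of x_i*x_j,
    so the aggregation weights depend on each pair of elements.
    """
    out = []
    for xi in xs:
        scores = [xi * xj for xj in xs]
        top = max(scores)  # subtract the maximum for numerical stability
        exps = [math.exp(s - top) for s in scores]
        norm = sum(exps)
        pooled = sum(e * xj for e, xj in zip(exps, xs)) / norm
        out.append(a * xi + b * pooled)
    return out


def permute(xs, order):
    """Reorder a list according to a list of indices."""
    return [xs[k] for k in order]


xs = [0.3, -1.2, 2.0, 0.7]
order = [2, 0, 3, 1]
for layer in (mean_pool_layer, attention_layer):
    lhs = layer(permute(xs, order), 0.5, 1.5)
    rhs = permute(layer(xs, 0.5, 1.5), order)
    print(layer.__name__, all(abs(p - q) < 1e-9 for p, q in zip(lhs, rhs)))
```

The mean-pooling layer treats all other elements identically, while the attention layer weights them pairwise, which is the kind of dependence a policy would need when interference between elements is not already visible in its inputs.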

Ring-lite: Scalable Reasoning via C3PO-Stabilized Reinforcement Learning for LLMs

Jun 18, 2025

Abstract: We present Ring-lite, a Mixture-of-Experts (MoE)-based large language model optimized via reinforcement learning (RL) to achieve efficient and robust reasoning capabilities. Built upon the publicly available Ling-lite model, a 16.8 billion parameter model with 2.75 billion activated parameters, our approach matches the performance of state-of-the-art (SOTA) small-scale reasoning models on challenging benchmarks (e.g., AIME, LiveCodeBench, GPQA-Diamond) while activating only one-third of the parameters required by comparable models. To accomplish this, we introduce a joint training pipeline integrating distillation with RL, revealing undocumented challenges in MoE RL training. First, we identify optimization instability during RL training, and we propose Constrained Contextual Computation Policy Optimization (C3PO), a novel approach that enhances training stability and improves computational throughput via an algorithm-system co-design methodology. Second, we empirically demonstrate that selecting distillation checkpoints based on entropy loss, rather than validation metrics, yields superior performance-efficiency trade-offs in subsequent RL training. Finally, we develop a two-stage training paradigm to harmonize multi-domain data integration, addressing domain conflicts that arise in training with mixed datasets. We will release the model, dataset, and code.
