Huafeng Li

Breaking Degradation Coupling: A Structural Entropy Guided Decoupled Framework and Benchmark for Infrared Enhancement

Apr 24, 2026

Abstract: Thermal infrared image enhancement aims to restore high-quality images from complex compound degradations. Existing all-in-one approaches typically employ a single shared backbone to handle diverse degradations, which causes gradient interference and parameter competition. To address this, we propose a Structural Entropy-Guided Decoupled (SEGD) Framework. Unlike unified modeling paradigms, SEGD decomposes compound degradations into independent sub-processes and models them in a divide-and-conquer manner through Degradation-Specific Residual Modules (DRMs). Each DRM focuses on residual estimation for a specific degradation, enabling task decoupling while remaining jointly trainable, which mitigates parameter contention. A Degradation-Aware Evidential Network further estimates degradation type and intensity, providing priors that adaptively regulate DRM restoration strength. To handle compound cases, DRMs are composed in varying orders to form multiple restoration paths, from which the most informative features are aggregated under a structural-entropy criterion, yielding decoder-ready representations with structural fidelity and degradation awareness. Integrating divide-and-conquer restoration, evidential perception, and entropy-guided adaptation, SEGD achieves fine-grained and interpretable enhancement. We also construct a nighttime thermal infrared (TIR) benchmark for evaluation under real low-light conditions. Experimental results demonstrate that SEGD surpasses state-of-the-art methods while achieving higher efficiency with fewer parameters.

Degradation-Robust Fusion: An Efficient Degradation-Aware Diffusion Framework for Multimodal Image Fusion in Arbitrary Degradation Scenarios

Apr 10, 2026

Abstract: Complex degradations like noise, blur, and low resolution are typical challenges in real-world image fusion tasks, limiting the performance and practicality of existing methods. End-to-end neural-network-based approaches are generally simple to design and highly efficient in inference, but their black-box nature leads to limited interpretability. Diffusion-based methods alleviate this to some extent by providing powerful generative priors and a more structured inference process. However, they are trained to learn a single-domain target distribution, whereas fusion lacks natural fused data and relies on modeling complementary information from multiple sources, making diffusion hard to apply directly in practice. To address these challenges, this paper proposes an efficient degradation-aware diffusion framework for image fusion under arbitrary degradation scenarios. Specifically, instead of explicitly predicting noise as in conventional diffusion models, our method performs implicit denoising by directly regressing the fused image, enabling flexible adaptation to diverse fusion tasks under complex degradations with limited steps. Moreover, we design a joint observation-model correction mechanism that simultaneously imposes degradation and fusion constraints during sampling to ensure high reconstruction accuracy. Experiments on diverse fusion tasks and degradation configurations demonstrate the superiority of the proposed method under complex degradation scenarios.
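
The contrast drawn above between predicting noise and directly regressing the clean image can be illustrated with a single sampling update. The sketch below assumes a deterministic DDIM-style step and a standard cumulative noise schedule (`ab_t`, `ab_prev`); the abstract does not state which sampler or schedule the method uses, and the function name `ddim_step_from_x0` and the toy values are hypothetical. The joint observation-model correction is not modeled here.

```python
import math

def ddim_step_from_x0(x_t, x0_pred, ab_t, ab_prev):
    """One deterministic DDIM-style update driven by a clean-image estimate.

    x_t      -- current noisy sample (flat list of floats)
    x0_pred  -- direct estimate of the clean (fused) image
    ab_t     -- cumulative signal level at the current step, 0 < ab_t < 1
    ab_prev  -- cumulative signal level at the next, less noisy step
    """
    out = []
    for xt, x0 in zip(x_t, x0_pred):
        # Noise implied by the clean-image estimate (no explicit noise head).
        eps = (xt - math.sqrt(ab_t) * x0) / math.sqrt(1.0 - ab_t)
        # Re-noise the estimate to the lower noise level.
        out.append(math.sqrt(ab_prev) * x0 + math.sqrt(1.0 - ab_prev) * eps)
    return out

# Toy example (assumed values): build x_t from a known signal and known noise.
x0_true = [0.5, -0.25]
noise = [1.0, -2.0]
ab_t, ab_prev = 0.5, 0.9
x_t = [math.sqrt(ab_t) * a + math.sqrt(1.0 - ab_t) * n
       for a, n in zip(x0_true, noise)]

# With a perfect estimate, the step yields the same signal and noise
# mixed at the lower noise level.
x_prev = ddim_step_from_x0(x_t, x0_true, ab_t, ab_prev)
```

Because the update is driven by the image estimate itself, setting `ab_prev` to 1.0 on the last step returns that estimate unchanged, which is one reason image regression can work with few sampling steps.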

Customized Fusion: A Closed-Loop Dynamic Network for Adaptive Multi-Task-Aware Infrared-Visible Image Fusion

Apr 10, 2026

Abstract: Infrared-visible image fusion aims to integrate complementary information for robust visual understanding, but existing fusion methods struggle to adapt to multiple downstream tasks simultaneously. To address this issue, we propose a Closed-Loop Dynamic Network (CLDyN) that can adaptively respond to the semantic requirements of diverse downstream tasks for task-customized image fusion. Specifically, CLDyN introduces a closed-loop optimization mechanism that establishes a semantic transmission chain to achieve explicit feedback from downstream tasks to the fusion network through a Requirement-driven Semantic Compensation (RSC) module. The RSC module leverages a Basis Vector Bank (BVB) and an Architecture-Adaptive Semantic Injection (A2SI) block to customize the network architecture according to task requirements, thereby enabling task-specific semantic compensation and allowing the fusion network to actively adapt to diverse tasks without retraining. To promote semantic compensation, a reward-penalty strategy is introduced to reward or penalize the RSC module based on task performance variations. Experiments on the M3FD, FMB, and VT5000 datasets demonstrate that CLDyN not only maintains high fusion quality but also exhibits strong multi-task adaptability. The code is available at https://github.com/YR0211/CLDyN.

MAVFusion: Efficient Infrared and Visible Video Fusion via Motion-Aware Sparse Interaction

Apr 02, 2026

Abstract: Infrared and visible video fusion combines the object saliency from infrared images with the texture details from visible images to produce semantically rich fusion results. However, most existing methods are designed for static image fusion and cannot effectively handle frame-to-frame motion in videos. Current video fusion methods improve temporal consistency by introducing interactions across frames, but they often incur high computational cost. To mitigate these challenges, we propose MAVFusion, an end-to-end video fusion framework featuring a motion-aware sparse interaction mechanism that enhances efficiency while maintaining superior fusion quality. Specifically, we leverage optical flow to identify dynamic regions in multi-modal sequences, adaptively allocating computationally intensive cross-modal attention to these sparse areas to capture salient transitions and facilitate inter-modal information exchange. For static background regions, a lightweight weak interaction module is employed to maintain structural and appearance integrity. By decoupling the processing of dynamic and static regions, MAVFusion simultaneously preserves temporal consistency and fine-grained details while significantly accelerating inference. Extensive experiments demonstrate that MAVFusion achieves state-of-the-art performance on multiple infrared and visible video benchmarks and runs at 14.16 FPS at 640 × 480 resolution. The source code will be available at https://github.com/ixilai/MAVFusion.
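
The routing idea described above, spending heavy attention only where motion occurs, can be sketched as a mask over an optical-flow field. This is a minimal sketch, assuming that dynamic regions are selected by thresholding per-pixel flow magnitude; the abstract does not give the actual selection rule, and `dynamic_mask`, `route_pixels`, and the threshold value are hypothetical names and settings.

```python
import math

def dynamic_mask(flow, threshold=1.0):
    """Mark pixels whose flow magnitude exceeds the threshold.

    flow -- H x W grid (list of rows) of (dx, dy) displacement tuples
    Returns an H x W grid of booleans (True = dynamic).
    """
    return [[math.hypot(dx, dy) > threshold for dx, dy in row] for row in flow]

def route_pixels(mask):
    """Split pixel coordinates into dynamic and static groups."""
    dynamic, static = [], []
    for r, row in enumerate(mask):
        for c, is_dynamic in enumerate(row):
            (dynamic if is_dynamic else static).append((r, c))
    return dynamic, static

# Toy 2 x 3 flow field (assumed values): two pixels move noticeably.
flow = [
    [(0.0, 0.0), (0.1, 0.0), (3.0, 4.0)],
    [(0.0, 0.2), (2.0, 0.0), (0.0, 0.0)],
]
mask = dynamic_mask(flow, threshold=1.0)
dynamic, static = route_pixels(mask)
```

In a full model, the `dynamic` coordinates would be sent to the expensive cross-modal attention branch and the `static` ones to the lightweight module, so the attention cost scales with the amount of motion rather than with the frame size.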

Missing No More: Dictionary-Guided Cross-Modal Image Fusion under Missing Infrared

Mar 09, 2026

Abstract: Infrared-visible (IR-VIS) image fusion is vital for perception and security, yet most methods rely on the availability of both modalities during training and inference. When the infrared modality is absent, pixel-space generative substitutes become hard to control and inherently lack interpretability. We address missing-IR fusion by proposing a dictionary-guided, coefficient-domain framework built upon a shared convolutional dictionary. The pipeline comprises three key components: (1) Joint Shared-dictionary Representation Learning (JSRL) learns a unified and interpretable atom space shared by both IR and VIS modalities; (2) VIS-Guided IR Inference (VGII) transfers VIS coefficients to pseudo-IR coefficients in the coefficient domain and performs a one-step closed-loop refinement guided by a frozen large language model as a weak semantic prior; and (3) Adaptive Fusion via Representation Inference (AFRI) merges VIS structures and inferred IR cues at the atom level through window attention and convolutional mixing, followed by reconstruction with the shared dictionary. This encode-transfer-fuse-reconstruct pipeline avoids uncontrolled pixel-space generation while ensuring prior preservation within an interpretable dictionary-coefficient representation. Experiments under missing-IR settings demonstrate consistent improvements in perceptual quality and downstream detection performance. To our knowledge, this is the first framework that jointly learns a shared dictionary and performs coefficient-domain inference-fusion to tackle missing-IR fusion. The source code is publicly available at https://github.com/harukiv/DCMIF.

Adaptive Dynamic Dehazing via Instruction-Driven and Task-Feedback Closed-Loop Optimization for Diverse Downstream Task Adaptation

Mar 04, 2026

Abstract: In real-world vision systems, haze removal is required not only to enhance image visibility but also to meet the specific needs of diverse downstream tasks. To address this challenge, we propose a novel adaptive dynamic dehazing framework that incorporates a closed-loop optimization mechanism. It enables feedback-driven refinement based on downstream task performance and user instruction-guided adjustment during inference, allowing the model to satisfy the specific requirements of multiple downstream tasks without retraining. Technically, our framework integrates two complementary and innovative mechanisms: (1) a task feedback loop that dynamically modulates dehazing outputs based on performance across multiple downstream tasks, and (2) a text instruction interface that allows users to specify high-level task preferences. This dual-guidance strategy enables the model to adapt its dehazing behavior after training, tailoring outputs in real time to the evolving needs of multiple tasks. Extensive experiments across various vision tasks demonstrate the strong effectiveness, robustness, and generalizability of our approach. These results establish a new paradigm for interactive, task-adaptive dehazing that actively collaborates with downstream applications.

Hierarchical Prompt Learning for Image- and Text-Based Person Re-Identification

Nov 17, 2025

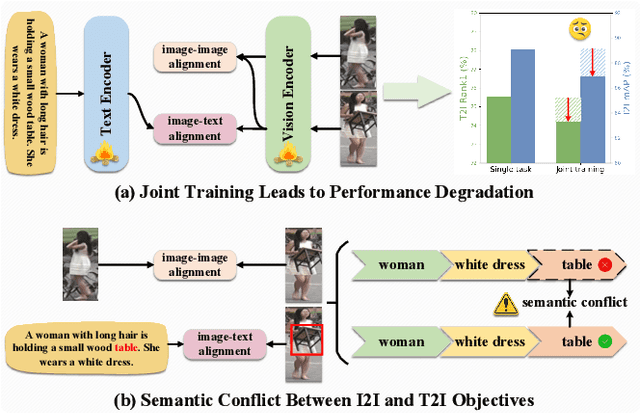

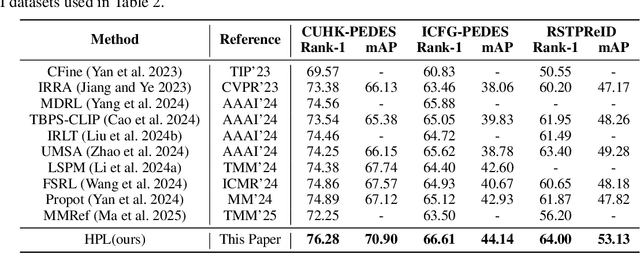

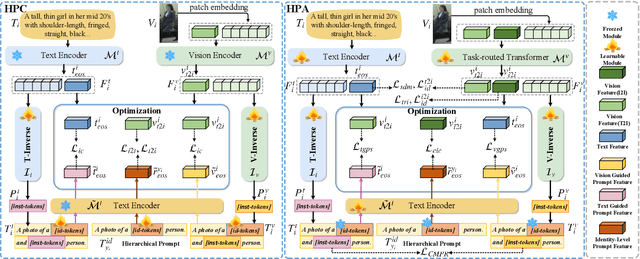

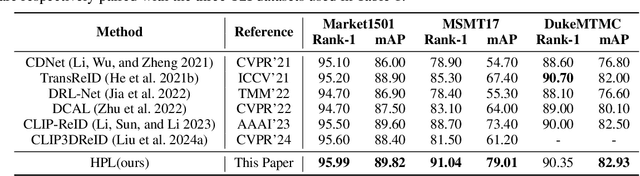

Abstract: Person re-identification (ReID) aims to retrieve target pedestrian images given either visual queries (image-to-image, I2I) or textual descriptions (text-to-image, T2I). Although both tasks share a common retrieval objective, they pose distinct challenges: I2I emphasizes discriminative identity learning, while T2I requires accurate cross-modal semantic alignment. Existing methods often treat these tasks separately, which may lead to representation entanglement and suboptimal performance. To address this, we propose a unified framework named Hierarchical Prompt Learning (HPL), which leverages task-aware prompt modeling to jointly optimize both tasks. Specifically, we first introduce a Task-Routed Transformer, which incorporates dual classification tokens into a shared visual encoder to route features to the I2I and T2I branches, respectively. On top of this, we develop a hierarchical prompt generation scheme that integrates identity-level learnable tokens with instance-level pseudo-text tokens. These pseudo-tokens are derived from image or text features via modality-specific inversion networks, injecting fine-grained, instance-specific semantics into the prompts. Furthermore, we propose a Cross-Modal Prompt Regularization strategy to enforce semantic alignment in the prompt token space, ensuring that pseudo-prompts preserve source-modality characteristics while enhancing cross-modal transferability. Extensive experiments on multiple ReID benchmarks validate the effectiveness of our method, achieving state-of-the-art performance on both I2I and T2I tasks.

DINOv2 Driven Gait Representation Learning for Video-Based Visible-Infrared Person Re-identification

Nov 06, 2025

Abstract: Video-based Visible-Infrared person re-identification (VVI-ReID) aims to retrieve the same pedestrian across visible and infrared modalities from video sequences. Existing methods tend to exploit modality-invariant visual features but largely overlook gait features, which are not only modality-invariant but also rich in temporal dynamics, thus limiting their ability to model the spatiotemporal consistency essential for cross-modal video matching. To address these challenges, we propose a DINOv2-Driven Gait Representation Learning (DinoGRL) framework that leverages the rich visual priors of DINOv2 to learn gait features complementary to appearance cues, facilitating robust sequence-level representations for cross-modal retrieval. Specifically, we introduce a Semantic-Aware Silhouette and Gait Learning (SASGL) model, which generates and enhances silhouette representations with general-purpose semantic priors from DINOv2 and jointly optimizes them with the ReID objective to achieve semantically enriched and task-adaptive gait feature learning. Furthermore, we develop a Progressive Bidirectional Multi-Granularity Enhancement (PBMGE) module, which progressively refines feature representations by enabling bidirectional interactions between gait and appearance streams across multiple spatial granularities, fully leveraging their complementarity to enhance global representations with rich local details and produce highly discriminative features. Extensive experiments on the HITSZ-VCM and BUPT datasets demonstrate the superiority of our approach, significantly outperforming existing state-of-the-art methods.

FS-Diff: Semantic guidance and clarity-aware simultaneous multimodal image fusion and super-resolution

Sep 11, 2025

Abstract: As an influential information fusion and low-level vision technique, image fusion integrates complementary information from source images to yield an informative fused image. A few attempts have been made in recent years to jointly realize image fusion and super-resolution. However, in real-world applications such as military reconnaissance and long-range detection missions, the target and background structures in multimodal images are easily corrupted, with low resolution and weak semantic information, which leads to suboptimal results in current fusion techniques. In response, we propose FS-Diff, a semantic guidance and clarity-aware joint image fusion and super-resolution method. FS-Diff unifies image fusion and super-resolution as a conditional generation problem. It leverages semantic guidance from the proposed clarity sensing mechanism for adaptive low-resolution perception and cross-modal feature extraction. Specifically, we initialize the desired fused result as pure Gaussian noise and introduce the bidirectional feature Mamba to extract the global features of the multimodal images. Moreover, utilizing the source images and semantics as conditions, we implement a random iterative denoising process via a modified U-Net network. This network is trained for denoising at multiple noise levels to produce high-resolution fusion results with cross-modal features and abundant semantic information. We also construct a powerful aerial view multiscene (AVMS) benchmark covering 600 pairs of images. Extensive joint image fusion and super-resolution experiments on six public datasets and our AVMS dataset demonstrate that FS-Diff outperforms the state-of-the-art methods at multiple magnifications and can recover richer details and semantics in the fused images. The code is available at https://github.com/XylonXu01/FS-Diff.

FlexiD-Fuse: Flexible number of inputs multi-modal medical image fusion based on diffusion model

Sep 11, 2025

Abstract: Different modalities of medical images provide unique physiological and anatomical information for diseases. Multi-modal medical image fusion integrates useful information from different complementary medical images with different modalities, producing a fused image that comprehensively and objectively reflects lesion characteristics to assist doctors in clinical diagnosis. However, existing fusion methods can only handle a fixed number of modality inputs, such as accepting only two-modal or tri-modal inputs, and cannot directly process varying input quantities, which hinders their application in clinical settings. To tackle this issue, we introduce FlexiD-Fuse, a diffusion-based image fusion network designed to accommodate flexible quantities of input modalities. It can process two-modal and tri-modal medical image fusion end to end with the same set of weights. FlexiD-Fuse transforms the diffusion fusion problem, which supports only fixed-condition inputs, into a maximum likelihood estimation problem based on the diffusion process and hierarchical Bayesian modeling. By incorporating the Expectation-Maximization algorithm into the diffusion sampling iteration process, FlexiD-Fuse can generate high-quality fused images with cross-modal information from source images, independently of the number of input images. We compared our method with the latest two-modal and tri-modal medical image fusion methods on Harvard datasets and evaluated the results using nine popular metrics. The experimental results show that our method achieves the best performance in medical image fusion with varying inputs. Meanwhile, we conducted extensive extension experiments on infrared-visible, multi-exposure, and multi-focus image fusion tasks with arbitrary numbers of inputs, and compared our method with the respective SOTA methods. The results of the extension experiments consistently demonstrate the effectiveness and superiority of our method.
