Dario Floreano

Perching by hugging: an initial feasibility study

Jun 08, 2023

Abstract: Current UAVs capable of perching require added structures and mechanisms to do so. These take the form of hooks, claws, needles, etc., which add weight and usually drag. In this paper, we propose the dual use of structures already on the vehicle to enable perching, thus reducing the weight and drag costs associated with perching UAVs. We propose a wing design capable of passively wrapping around a vertical pole to perch. We experimentally investigate the feasibility of the design, presenting results on minimum required perching speeds as well as the effect of weight distribution on the success rate of the wing wrapping. Finally, we comment on design requirements for holding onto the pole based on our findings.

Design and manufacture of edible microfluidic logic gates

Apr 05, 2023

Abstract: Edible robotics is an emerging research field with potential applications in environmental, food, and medical scenarios. In this context, the design of edible control circuits could increase the behavioral complexity of edible robots and reduce their dependence on inedible components. Here we describe a method to design and manufacture edible control circuits based on microfluidic logic gates. We focus on the choice of materials and the fabrication procedure to produce edible logic gates based on recently available soft microfluidic logic. We validate the proposed design with the production of a functional NOT gate and suggest further research avenues for scaling up the method to more complex circuits.

Avian-Inspired Claws Enable Robot Perching and Walking

Mar 29, 2023

Abstract: Multimodal UAVs (Unmanned Aerial Vehicles) are rarely capable of more than two modalities, e.g., flying and walking or flying and perching. However, being able to fly, perch, and walk could further improve their usefulness by expanding their operating envelope. For instance, an aerial robot could fly a long distance, perch in a high place to survey the surroundings, then walk to avoid obstacles that could inhibit flight. Birds are capable of all three tasks and so offer a practical example of how a robot might be developed to do the same. In this paper, we present a specialized avian-inspired claw design that enables UAVs to passively perch and walk. The key innovation is the combination of a Hoberman linkage leg with a Fin Ray claw that uses the weight of the UAV to wrap the claw around a perch, or to hyperextend it in the opposite direction to form a ball shape for stable terrestrial locomotion. Because the design uses the weight of the vehicle, the underactuated design is lightweight and low power. With the inclusion of talons, the 45 g claws are capable of holding a 700 g UAV at an angle of almost 20 degrees on a perch. In scenarios where cluttered environments impede flight and long mission times are required, such a combination of flying, perching, and walking is critical.

Reconfigurable Drone System for Transportation of Parcels With Variable Mass and Size

Nov 16, 2022

Abstract: Cargo drones are designed to carry payloads with a predefined shape, size, and/or mass. This lack of flexibility requires a fleet of diverse drones tailored to specific cargo dimensions. Here we propose a new reconfigurable drone based on a modular design that adapts to different cargo shapes, sizes, and masses. We also propose a method for the automatic generation of drone configurations and suitable parameters for the flight controller. The parcel becomes the drone's body, to which several individual propulsion modules are attached. We demonstrate the reconfigurable hardware and the accompanying software by transporting parcels of different masses and sizes that require different numbers and positions of propulsion modules. The experiments are conducted indoors (with a motion capture system) and outdoors (with an RTK-GNSS sensor). The proposed design is a cheaper and more versatile alternative to solutions involving several drones for parcel transportation.
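The abstract does not detail how flight-controller parameters are generated for a given parcel. As one ingredient such a method plausibly needs, the sketch below assumes a simplified model (modules produce vertical thrust only, yaw is ignored) and computes the minimum-norm thrust each module must produce for the parcel to hover level. The names `hover_thrusts` and `solve3` and the module layouts in the checks are hypothetical, not the authors' code.

```python
from typing import List, Sequence, Tuple


def solve3(M: List[List[float]], b: List[float]) -> List[float]:
    """Solve the 3x3 system M z = b by Gaussian elimination with partial pivoting."""
    A = [row[:] + [rhs] for row, rhs in zip(M, b)]  # augmented matrix
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("modules must not all lie on one line")
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(col + 1, 3):
            factor = A[r][col] / A[col][col]
            for c in range(col, 4):
                A[r][c] -= factor * A[col][c]
    z = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):  # back substitution
        z[r] = (A[r][3] - sum(A[r][c] * z[c] for c in range(r + 1, 3))) / A[r][r]
    return z


def hover_thrusts(positions: Sequence[Tuple[float, float]], mass: float,
                  g: float = 9.81) -> List[float]:
    """Minimum-norm per-module thrusts (N) that hold the parcel level in hover.

    positions: hypothetical (x, y) offsets in metres of each propulsion module
    from the combined centre of mass; mass: total mass in kg.
    """
    n = len(positions)
    # Rows: total thrust, roll moment (sum y_i T_i), pitch moment (sum -x_i T_i).
    A = [[1.0] * n, [y for _, y in positions], [-x for x, _ in positions]]
    b = [mass * g, 0.0, 0.0]
    # Minimum-norm solution T = A^T (A A^T)^{-1} b.
    AAt = [[sum(A[i][k] * A[j][k] for k in range(n)) for j in range(3)] for i in range(3)]
    lam = solve3(AAt, b)
    return [sum(A[i][k] * lam[i] for i in range(3)) for k in range(n)]
```

A negative entry in the result signals a layout that cannot hover level with thrust-only modules; a full allocation would add yaw torque and attitude-controller gains on top of this.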

Towards edible drones for rescue missions: design and flight of nutritional wings

Nov 08, 2022

Abstract: Drones have been shown to be useful for unmanned transport missions such as food and medical supply delivery. This capability can be leveraged to deliver life-saving nutrition and medicine to people in emergencies. However, commercial drones can generally carry only 10-30% of their own mass as payload, which limits the amount of food delivered in a single flight. One novel way to noticeably increase a drone's food-carrying ratio is to rebuild some of its structures, such as the wings, from edible materials. We thus propose a drone that is not only a food-transporting aircraft but is itself partially edible, increasing its food-carrying mass ratio to 50% thanks to its edible wings. Furthermore, should the edible drone be left behind in the environment after performing its task in an emergency, it will be more biodegradable than its non-edible counterpart, leaving less waste in the environment. Here we describe the choice of materials and the scalable design of edible wings, and validate the method in a flight-capable prototype that can provide 300 kcal and carry a payload of 80 g of water.
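For reference, one plausible way to define the food-carrying mass ratio, assumed here rather than stated in the abstract, is to count both the food payload and the edible structure as delivered food:

$$
r_{\text{food}} = \frac{m_{\text{payload}} + m_{\text{edible}}}{m_{\text{total}}}
$$

Under this definition a conventional drone has $m_{\text{edible}} = 0$ and the ratio reduces to its payload fraction, while building the wings from food moves their mass into the numerator without adding to the denominator.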

Training Efficient Controllers via Analytic Policy Gradient

Sep 26, 2022

Abstract: Control design for robotic systems is complex and often requires solving an optimization problem to follow a trajectory accurately. Online optimization approaches like Model Predictive Control (MPC) have been shown to achieve excellent tracking performance but require high computing power. Conversely, learning-based offline optimization approaches, such as Reinforcement Learning (RL), allow fast and efficient execution on the robot but rarely match the accuracy of MPC in trajectory tracking tasks. In systems with limited compute, such as aerial vehicles, a controller that is both accurate and efficient at execution time is imperative. We propose an Analytic Policy Gradient (APG) method to tackle this problem. APG exploits the availability of differentiable simulators by training a controller offline with gradient descent on the tracking error. We address training instabilities that frequently occur with APG through curriculum learning, and experiment on a widely used control benchmark, the CartPole, and two common aerial robots, a quadrotor and a fixed-wing drone. Our proposed method outperforms both model-based and model-free RL methods in terms of tracking error. At the same time, it achieves performance similar to MPC while requiring more than an order of magnitude less computation time. Our work provides insights into the potential of APG as a promising control method for robotics. To facilitate the exploration of APG, we open-source our code and make it available at https://github.com/lis-epfl/apg_trajectory_tracking.
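To illustrate the core idea, gradient descent on tracking error through a differentiable simulator, here is a minimal sketch under strong simplifying assumptions: a 1-D double integrator stands in for the robot, a two-gain PD law stands in for the neural-network controller, the gradient is computed exactly by hand-propagated forward-mode sensitivities rather than by an autodiff framework, and a growing rollout horizon serves as one possible curriculum. The functions `rollout` and `train`, the time step, and the gains are hypothetical and not taken from the linked repository.

```python
def rollout(kp, kd, horizon, dt=0.1, ref=1.0):
    """Roll out a 1-D double integrator under the PD policy u = kp*(ref - x) - kd*v.

    Returns the mean squared tracking error over the horizon and its exact
    gradient with respect to (kp, kd), obtained by propagating forward-mode
    sensitivities through the explicit-Euler simulator.
    """
    x, v = 0.0, 0.0
    dx, dv = [0.0, 0.0], [0.0, 0.0]  # d(x)/d(kp, kd) and d(v)/d(kp, kd)
    loss, grad = 0.0, [0.0, 0.0]
    for _ in range(horizon):
        u = kp * (ref - x) - kd * v
        # Chain rule through the policy: explicit term plus dependence via the state.
        du = [(ref - x) - kp * dx[0] - kd * dv[0],
              -v - kp * dx[1] - kd * dv[1]]
        # Euler step for the state and, with the same rule, for its sensitivities.
        x, v = x + dt * v, v + dt * u
        dx = [dx[i] + dt * dv[i] for i in range(2)]
        dv = [dv[i] + dt * du[i] for i in range(2)]
        err = x - ref
        loss += err * err / horizon
        grad = [grad[i] + 2.0 * err * dx[i] / horizon for i in range(2)]
    return loss, grad


def train(kp, kd, horizons=(10, 25, 50), steps=200, lr=0.5):
    """Gradient descent on the tracking error with a growing-horizon curriculum."""
    for horizon in horizons:  # curriculum: short rollouts first, then longer ones
        for _ in range(steps):
            loss, g = rollout(kp, kd, horizon)
            step = lr
            # Backtracking line search keeps each update from increasing the loss.
            while step > 1e-10:
                new_kp, new_kd = kp - step * g[0], kd - step * g[1]
                if rollout(new_kp, new_kd, horizon)[0] < loss:
                    kp, kd = new_kp, new_kd
                    break
                step *= 0.5
    return kp, kd
```

The backtracking line search is an addition of this sketch to keep single updates from increasing the loss; the abstract attributes the stabilization of the original method to curriculum learning.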

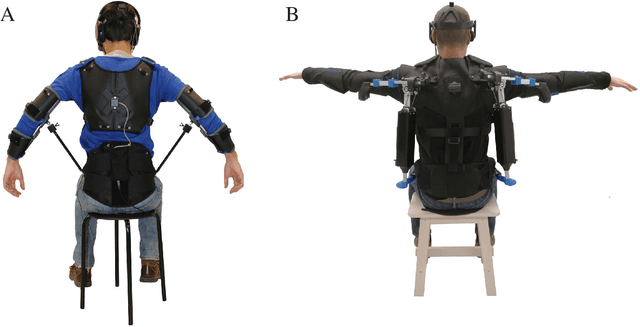

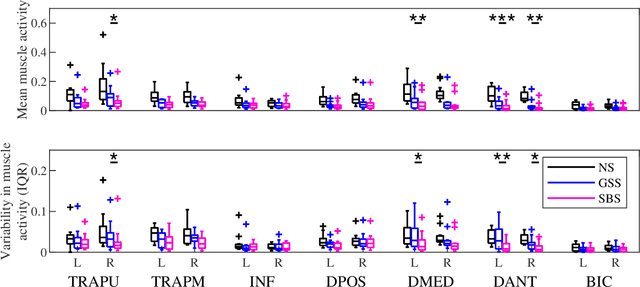

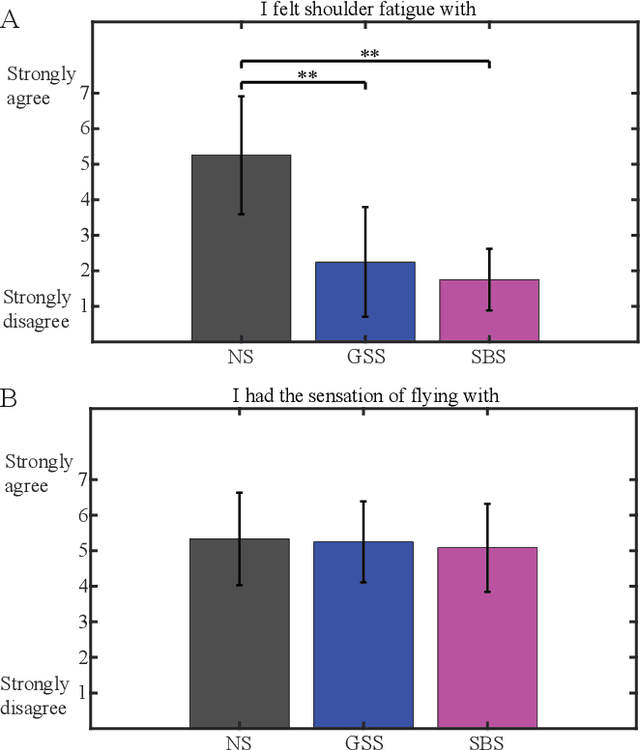

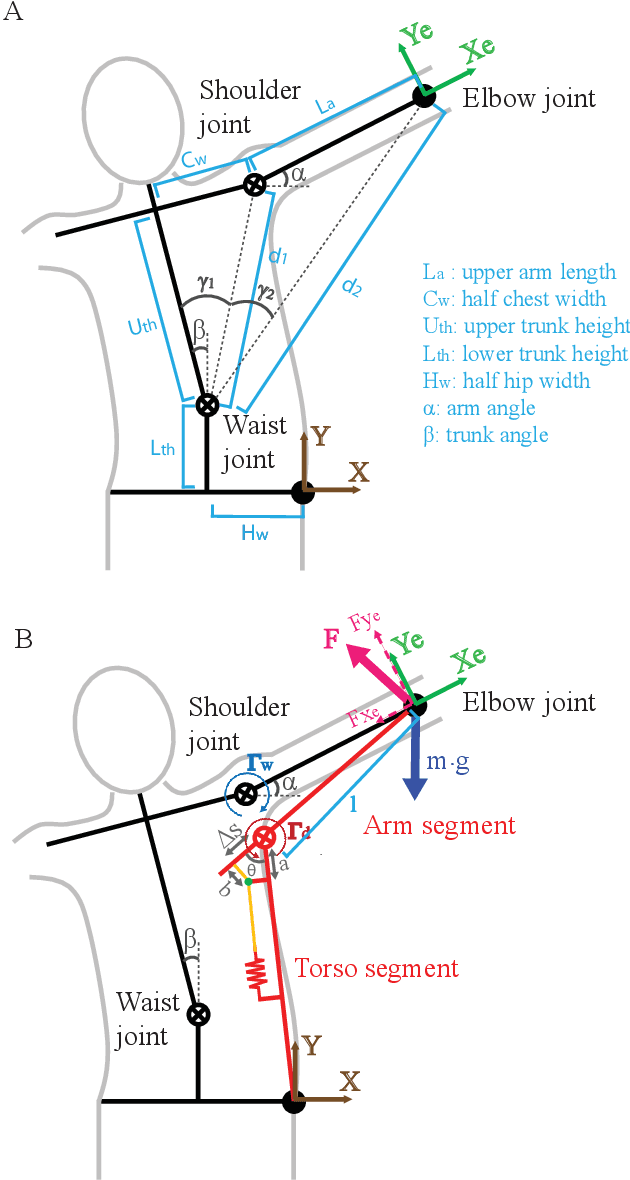

A Portable and Passive Gravity Compensation Arm Support for Drone Teleoperation

Nov 10, 2021

Abstract: Gesture-based interfaces are often used to achieve more natural and intuitive teleoperation of robots. Yet gesture control sometimes requires postures or movements that cause significant user fatigue. In a previous user study, we demonstrated that naïve users can control a fixed-wing drone with torso movements while their arms are spread out. However, this posture induced significant arm fatigue. In this work, we present a passive arm support that compensates the arm weight with a mean torque error smaller than 0.005 N/kg for more than 97% of the range of motion used by subjects to fly, thereby reducing shoulder muscle fatigue by 58% on average. In addition, this arm support is designed to fit users ranging from the body dimensions of a 1st-percentile female to those of a 99th-percentile male. The performance of the arm support is analyzed with a mechanical model, and its implementation is validated with both a mechanical characterization and a user study measuring flight performance, shoulder muscle activity, and user acceptance.
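The abstract does not describe the compensation mechanism. A textbook way to balance a link passively, assumed here purely for illustration, is a zero-free-length spring: anchored $a$ above the pivot and attached $b$ along the arm, it produces a torque $k a b \sin\varphi$ that exactly cancels the arm's gravity torque $m g r \sin\varphi$ whenever $k a b = m g r$. The sketch below checks this numerically and reports the mean torque error per kilogram of body mass; `net_torque`, `mean_error_per_kg`, and all parameter values in the checks are hypothetical.

```python
import math


def net_torque(phi, arm_mass, com_dist, k, a, b, free_length=0.0, g=9.81):
    """Net shoulder torque (N·m) of the arm's weight plus a spring balancer.

    phi: arm angle from the upward vertical (rad); the arm's centre of mass sits
    com_dist metres from the pivot. A spring of stiffness k (N/m) runs from a
    point a metres above the pivot to a point b metres along the arm (a != b).
    Hypothetical mechanism, not the paper's design.
    """
    ex, ey = math.sin(phi), math.cos(phi)               # unit vector along the arm
    tau_gravity = com_dist * ex * (-arm_mass * g)       # z-torque of the weight at the CoM
    bx, by = b * ex, b * ey                             # spring attachment on the arm
    dx, dy = -bx, a - by                                # vector from attachment to anchor
    length = math.hypot(dx, dy)
    scale = k * (length - free_length) / length         # spring force = scale * (dx, dy)
    tau_spring = bx * (scale * dy) - by * (scale * dx)  # z-torque of the spring force
    return tau_gravity + tau_spring


def mean_error_per_kg(body_mass, phis, **params):
    """Mean absolute net torque over the sampled angles, divided by body mass."""
    return sum(abs(net_torque(p, **params)) for p in phis) / len(phis) / body_mass
```

A real spring has a non-zero free length, which leaves a residual error that a practical design must keep small over the range of motion actually used.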

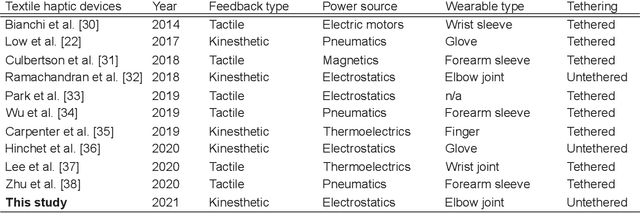

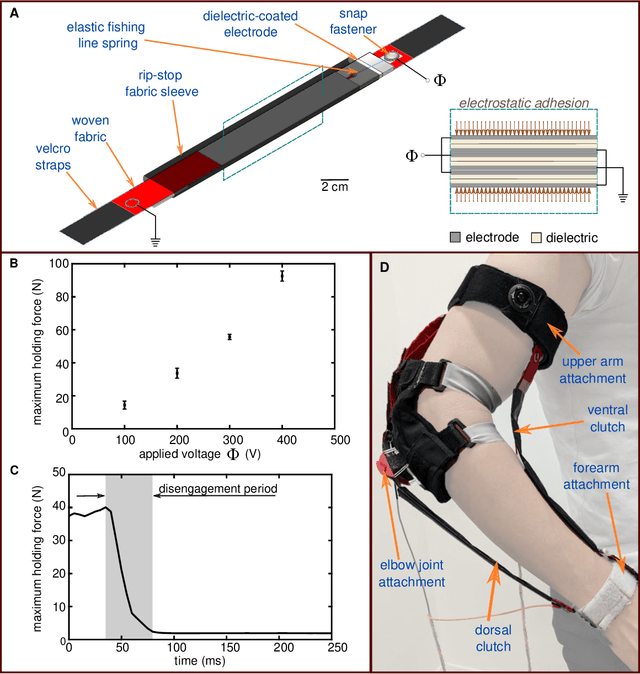

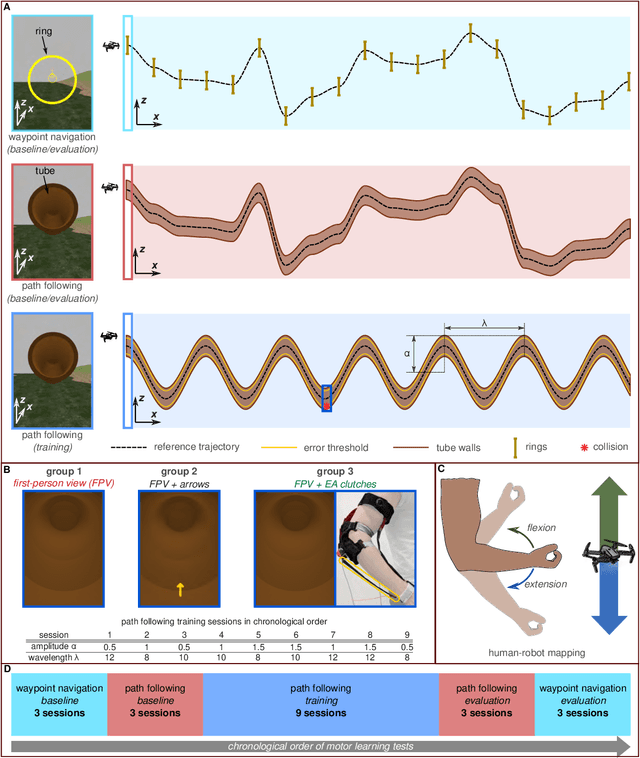

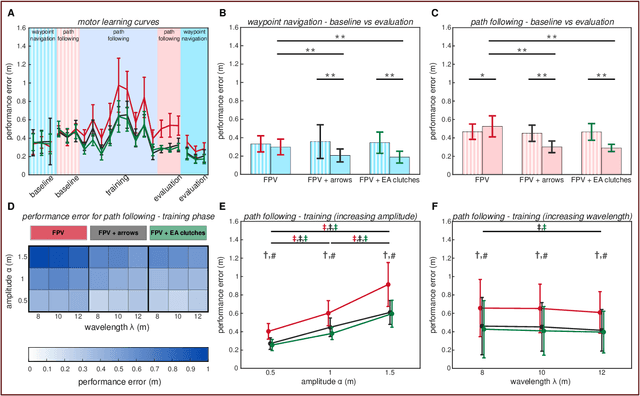

Smart Textiles that Teach: Fabric-Based Haptic Device Improves the Rate of Motor Learning

Jul 12, 2021

Abstract: People learn motor activities best when they are conscious of their errors and make a concerted effort to correct them. While haptic interfaces can facilitate motor training, existing interfaces are often bulky and do not always ensure post-training skill retention. Here, we describe a programmable haptic sleeve composed of textile-based electroadhesive clutches for skill acquisition and retention. We show its functionality in a motor learning study where users control a drone's movement using elbow joint rotation. Haptic feedback is used to restrain elbow motion and make users aware of their errors. This helps users consciously learn to avoid errors. While all subjects exhibited similar performance during the baseline phase of motor learning, subjects who received haptic feedback from the sleeve committed 23.5% fewer errors than subjects in the control group during the evaluation phase. The results show that the sleeve helps users retain and transfer motor skills better than visual feedback alone. This work demonstrates the potential of fabric-based haptic interfaces as training aids for motor tasks in rehabilitation and teleoperation.

Seeking Quality Diversity in Evolutionary Co-design of Morphology and Control of Soft Tensegrity Modular Robots

Apr 25, 2021

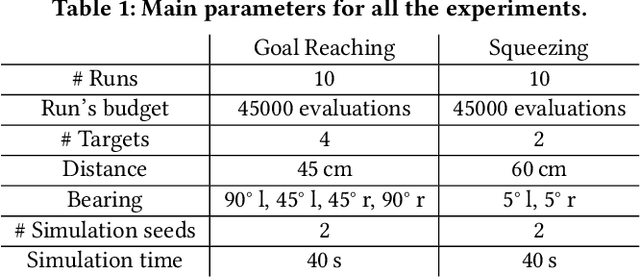

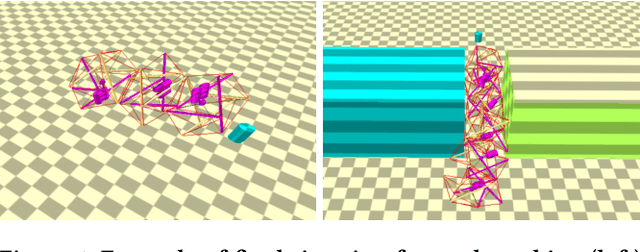

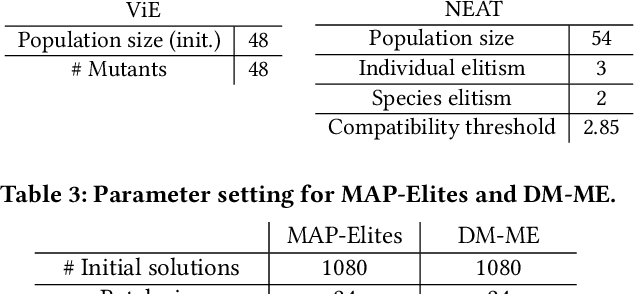

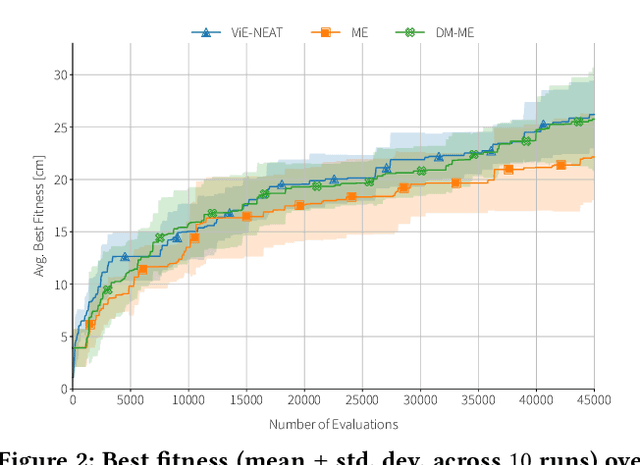

Abstract: Designing optimal soft modular robots is difficult due to non-trivial interactions between morphology and controller. Evolutionary algorithms (EAs), combined with physics simulators, are a valid tool to overcome this issue. In this work, we investigate algorithmic solutions to improve the Quality Diversity of co-evolved designs of Tensegrity Soft Modular Robots (TSMRs) for two robotic tasks, namely goal reaching and squeezing through a narrow passage. To this end, we use three different EAs: MAP-Elites and two custom algorithms, one based on Viability Evolution (ViE) and NEAT (ViE-NEAT), the other named Double Map MAP-Elites (DM-ME) and devised to seek diversity while co-evolving robot morphologies and neural network (NN)-based controllers. In detail, DM-ME extends MAP-Elites in that it uses two distinct feature maps, referring to morphologies and controllers respectively, and integrates a mechanism to automatically define the NN-related feature descriptor. In terms of fitness, in the goal-reaching task ViE-NEAT outperforms MAP-Elites and performs equivalently to DM-ME. When considering diversity in terms of "illumination" of the feature space, however, DM-ME outperforms the other two algorithms on both tasks, providing a richer pool of possible robotic designs, whereas ViE-NEAT performs comparably to MAP-Elites on goal reaching, although it does not exploit any map.
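For readers unfamiliar with MAP-Elites, the baseline that DM-ME extends, the following is a minimal sketch of the standard algorithm on a toy problem: the feature space is discretized into a grid, each cell keeps only its fittest genome (its elite), and new candidates are produced by mutating randomly chosen elites. The toy fitness, descriptor, helper names, and parameters are hypothetical; DM-ME's second map and its automatically defined NN descriptor are not modeled.

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple

Genome = List[float]


def map_elites(fitness: Callable[[Genome], float],
               descriptor: Callable[[Genome], Sequence[float]],
               genome_len: int, bins: int = 10, init: int = 100,
               iterations: int = 2000, sigma: float = 0.1,
               seed: int = 0) -> Dict[Tuple[int, ...], Tuple[float, Genome]]:
    """Basic MAP-Elites: keep the fittest genome found in each cell of a feature grid.

    descriptor must map a genome to features in [0, 1]; genes are kept in [0, 1].
    """
    rng = random.Random(seed)
    archive: Dict[Tuple[int, ...], Tuple[float, Genome]] = {}

    def try_insert(genome: Genome) -> None:
        cell = tuple(min(int(f * bins), bins - 1) for f in descriptor(genome))
        score = fitness(genome)
        if cell not in archive or score > archive[cell][0]:
            archive[cell] = (score, genome)  # new elite for this niche

    for _ in range(init):  # random bootstrap
        try_insert([rng.random() for _ in range(genome_len)])
    for _ in range(iterations):
        _, parent = rng.choice(list(archive.values()))  # uniform selection over elites
        child = [min(1.0, max(0.0, x + rng.gauss(0.0, sigma))) for x in parent]
        try_insert(child)
    return archive


def illumination(archive, bins: int, n_features: int) -> float:
    """Fraction of feature-space cells that hold an elite."""
    return len(archive) / bins ** n_features
```

`illumination` returns the fraction of filled cells, one simple way to quantify how well an algorithm illuminates the feature space; in the TSMR setting the genome would instead encode a morphology and a controller.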

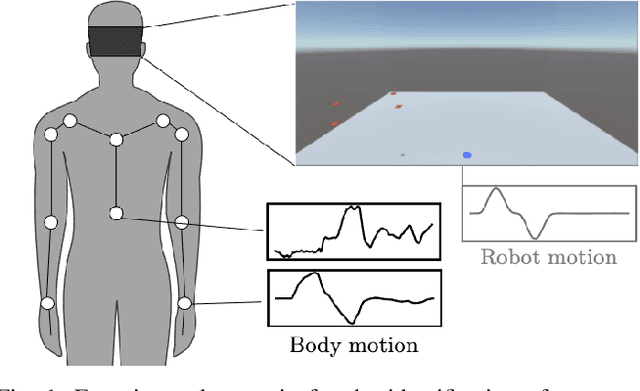

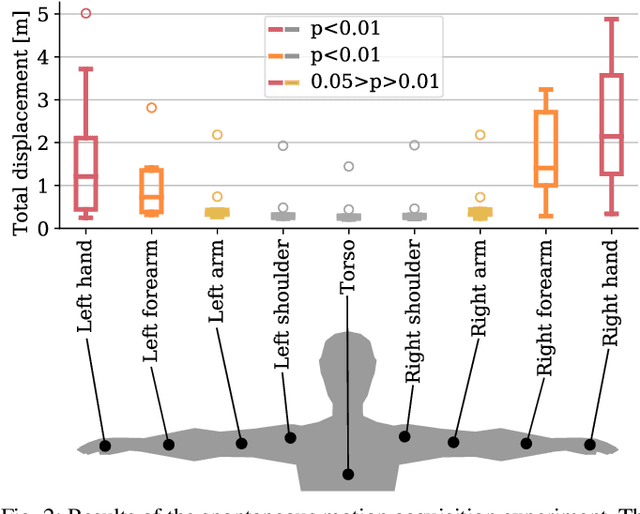

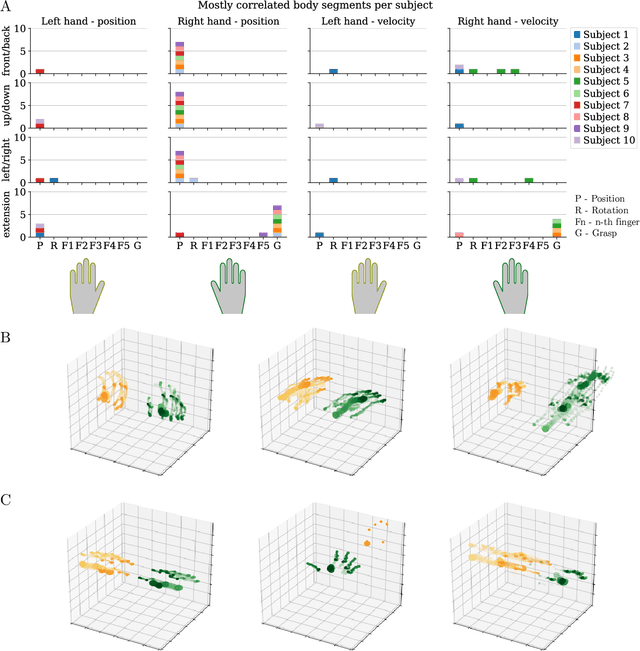

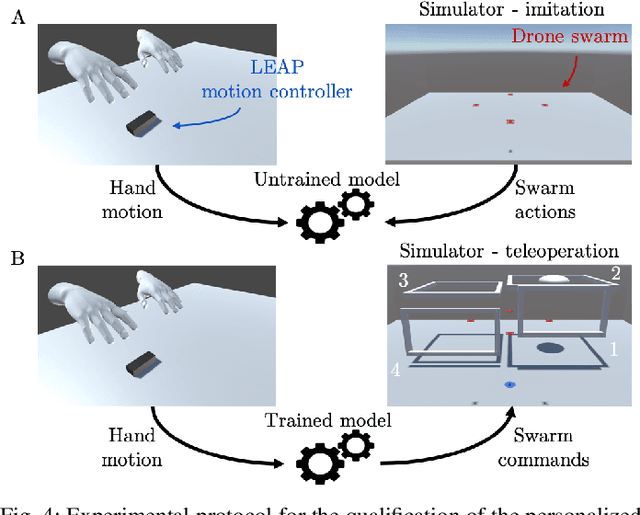

Personalized Human-Swarm Interaction through Hand Motion

Mar 13, 2021

Abstract: The control of collective robotic systems, such as drone swarms, is often delegated to autonomous navigation algorithms due to their high dimensionality. However, like other robotic entities, drone swarms can still benefit from teleoperation by human operators, whose perception and decision-making capabilities are still beyond the reach of autonomous systems. Drone swarm teleoperation is in its early days, and a standard human-swarm interface (HRI) is missing to date. In this study, we analyzed the spontaneous interaction strategies of naive users with a swarm of drones. We implemented a machine-learning algorithm to define a personalized Body-Machine Interface (BoMI) based only on a short calibration procedure. During this procedure, the human operator is asked to move spontaneously as if they were controlling a simulated drone swarm. We found that the hands are the most commonly adopted body segment, and thus we chose a LEAP Motion controller to track them and let the users control the aerial drone swarm. This choice makes our interface portable, since it does not rely on a centralized system for tracking the human body. We validated our algorithm to define personalized HRIs for a set of participants in a realistic simulated environment, showing promising results in performance and user experience. Our method leaves the user unprecedented freedom to choose between position and velocity control based only on their body motion preferences.
