Athanasios Vasilakos

Test-Time Adaptation for Nighttime Color-Thermal Semantic Segmentation

Jul 10, 2023

Abstract: The ability to understand scenes in adverse visual conditions, e.g., nighttime, has sparked active research on RGB-Thermal (RGB-T) semantic segmentation. However, it is hampered by two critical problems: 1) the day-night gap of RGB images is larger than that of thermal images, and 2) the class-wise performance of RGB images at night is not consistently higher or lower than that of thermal images. We propose the first test-time adaptation (TTA) framework, dubbed Night-TTA, to address these problems for nighttime RGB-T semantic segmentation without access to the source (daytime) data during adaptation. Our method has three key technical components. First, because one modality (e.g., RGB) suffers from a larger domain gap than the other (e.g., thermal), Imaging Heterogeneity Refinement (IHR) adds an interaction branch on top of the RGB and thermal branches to prevent cross-modal discrepancy and performance degradation. Second, Class Aware Refinement (CAR) is introduced to obtain reliable ensemble logits based on pixel-level distribution aggregation of the three branches. Third, we design a specific learning scheme for our TTA framework, which enables the ensemble logits and the three student logits to learn collaboratively and improve the quality of predictions during the testing phase of Night-TTA. Extensive experiments show that our method achieves state-of-the-art (SoTA) performance with a 13.07% boost in mIoU.

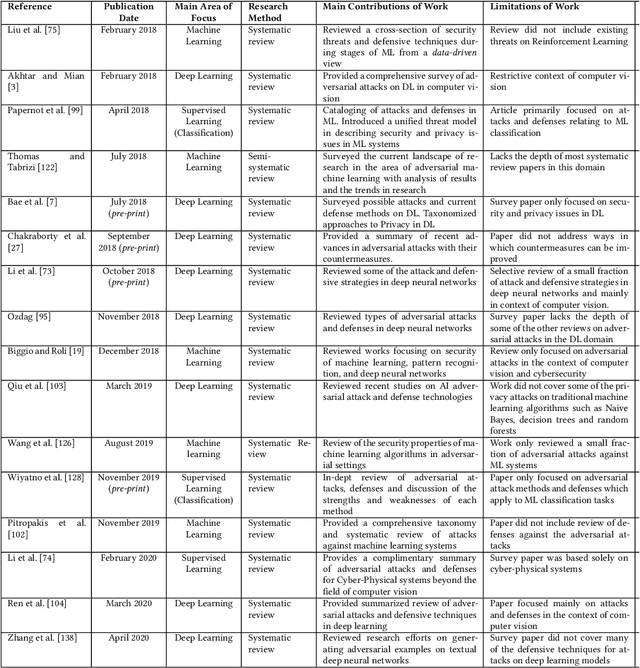

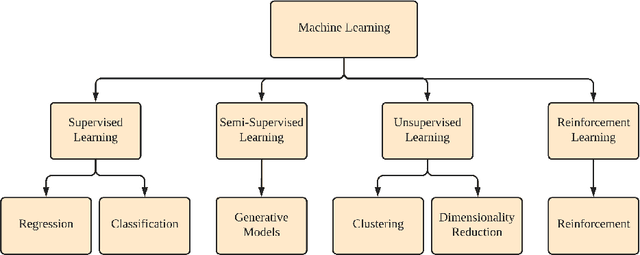

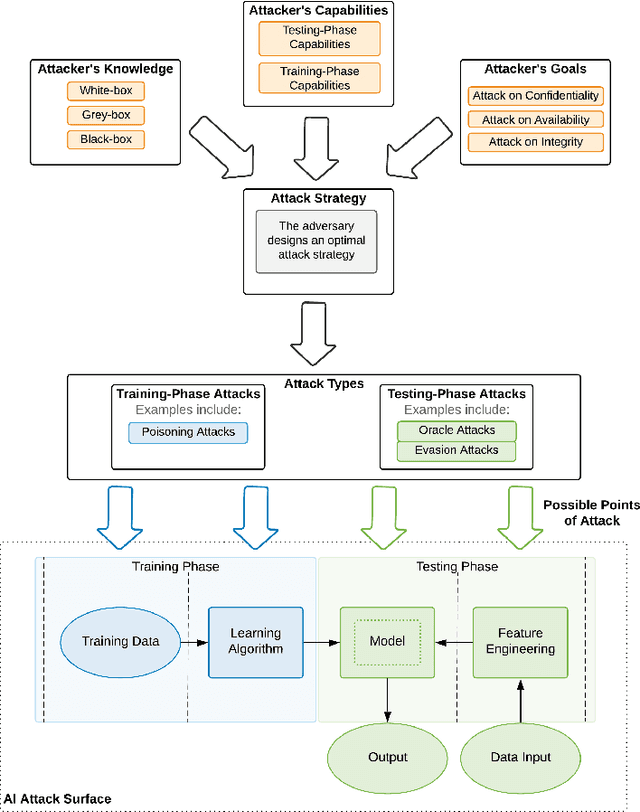

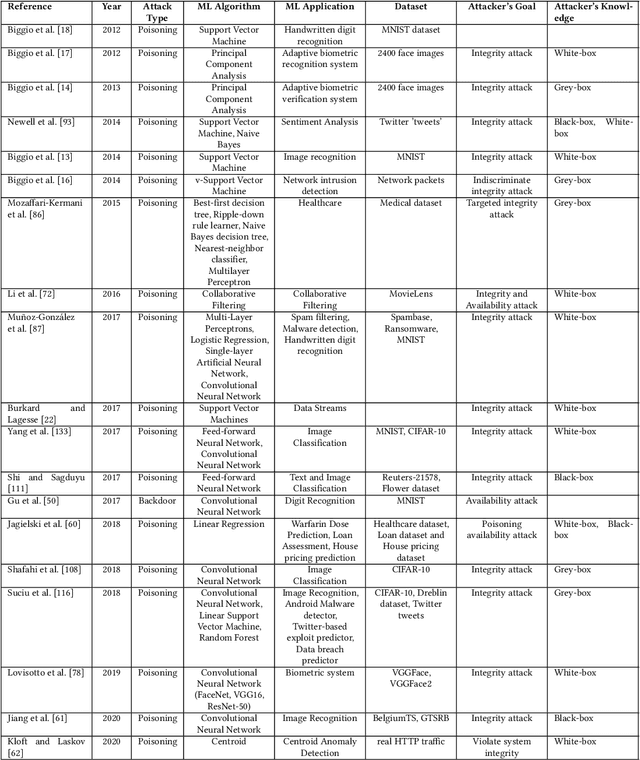

Security and Privacy for Artificial Intelligence: Opportunities and Challenges

Feb 09, 2021

Abstract: The increased adoption of Artificial Intelligence (AI) presents an opportunity to solve many socio-economic and environmental challenges; however, this cannot happen without securing AI-enabled technologies. In recent years, most AI models have proven vulnerable to advanced and sophisticated hacking techniques. This challenge has motivated concerted research efforts into adversarial AI, with the aim of developing robust machine and deep learning models that are resilient to different types of adversarial scenarios. In this paper, we present a holistic cyber security review that demonstrates adversarial attacks against AI applications, including aspects such as adversarial knowledge and capabilities, as well as existing methods for generating adversarial examples and existing cyber defence models. We explain mathematical AI models, especially new variants of reinforcement and federated learning, to demonstrate how attack vectors would exploit vulnerabilities of AI models. We also propose a systematic framework for demonstrating attack techniques against AI applications and review several cyber defences that would protect AI applications against those attacks. We also highlight the importance of understanding adversarial goals and capabilities, especially in recent attacks against industry applications, in order to develop adaptive defences that secure AI applications. Finally, we describe the main challenges and future research directions in the domain of security and privacy of AI technologies.

Progressive Defense Against Adversarial Attacks for Deep Learning as a Service in Internet of Things

Oct 15, 2020

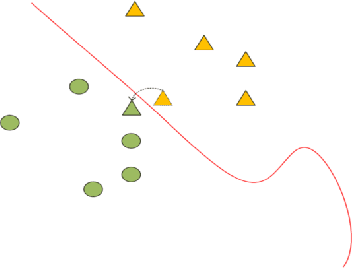

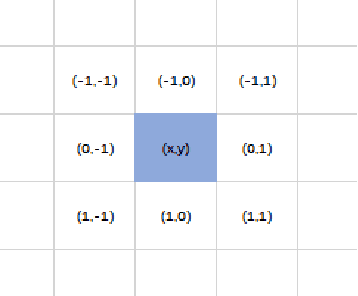

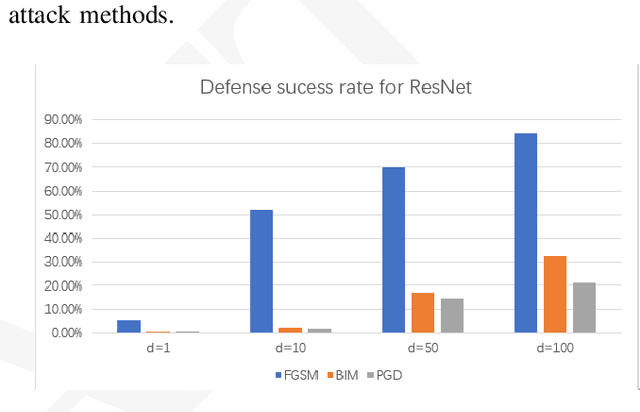

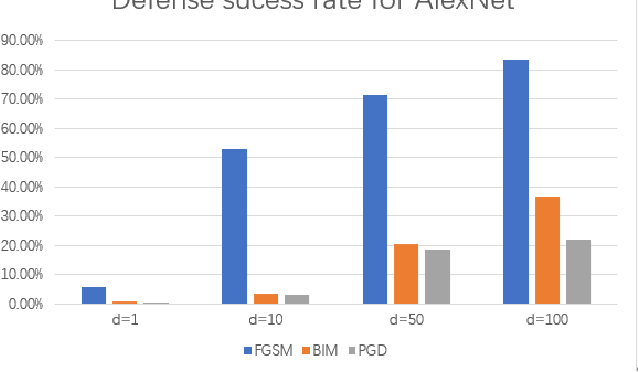

Abstract: Nowadays, Deep Learning as a service can be deployed in the Internet of Things (IoT) to provide smart services and sensor data processing. However, recent research has revealed that some Deep Neural Networks (DNNs) can be easily misled by adding relatively small but adversarial perturbations to the input (e.g., pixel mutation in input images). One challenge in defending DNNs against these attacks is to efficiently identify and filter out the adversarial pixels. State-of-the-art defense strategies with good robustness often require additional model training for specific attacks. To reduce the computational cost without loss of generality, we present a defense strategy called progressive defense against adversarial attacks (PDAAA), which efficiently and effectively filters out the adversarial pixel mutations that could mislead the neural network towards erroneous outputs, without a priori knowledge of the attack type. We evaluated our progressive defense strategy against various attack methods on two well-known datasets. The results show that it outperforms the state-of-the-art while reducing the cost of model training by 50% on average.
