"Time": models, code, and papers

Unitary-Precoded Single-Carrier Waveforms for High Mobility: Detection and Channel Estimation

Jan 25, 2022

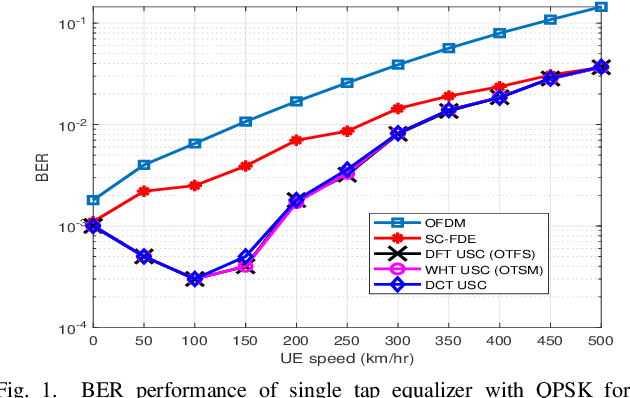

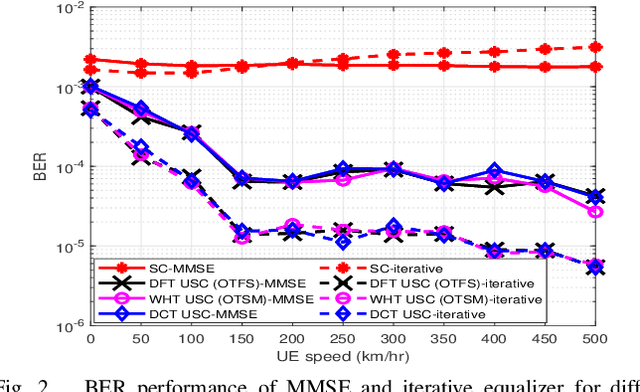

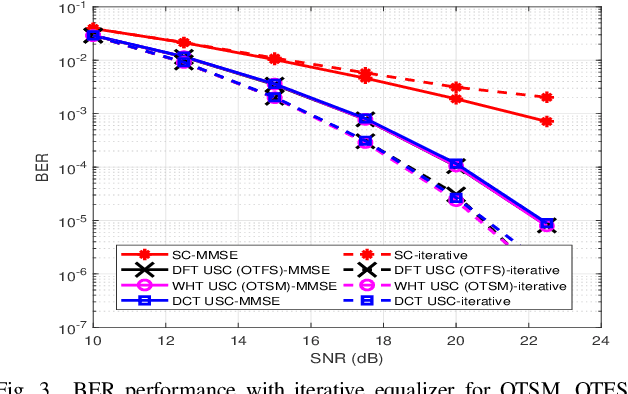

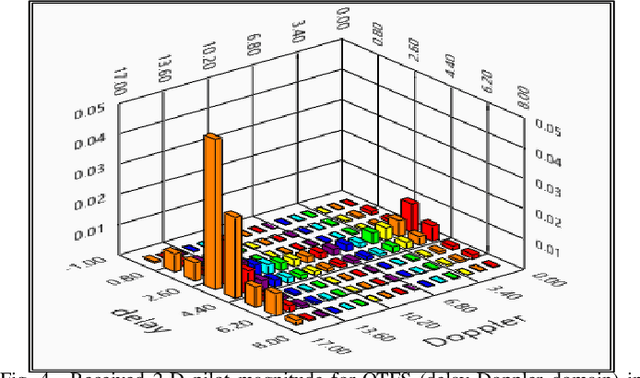

This paper presents unitary-precoded single-carrier (USC) modulation as a family of waveforms based on multiplexing the information symbols on time-domain unitary basis functions. The common property of these basis functions is that they span the entire time-frequency plane. The recently proposed orthogonal time frequency space (OTFS) and orthogonal time sequency multiplexing (OTSM) waveforms, based on the discrete Fourier transform (DFT) and the Walsh-Hadamard transform (WHT), respectively, fall within the general framework of USC waveforms. In this work, we present channel estimation and detection methods that work for any USC waveform and numerically show that any choice of unitary precoding results in the same error performance. Lastly, we implement some USC systems and compare their performance with OFDM in a real-time indoor setting using an SDR platform.
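To make the idea of unitary precoding concrete, here is a minimal NumPy sketch. It is a deliberate simplification and not the paper's transceiver: it precodes a single block of symbols with a normalized DFT matrix or a normalized Walsh-Hadamard matrix and inverts the precoding with the conjugate transpose. The function names, the block length of 8, and the QPSK symbols are assumptions made for illustration; no channel, estimation, or detection step is modeled.

```python
import numpy as np

def dft_matrix(n: int) -> np.ndarray:
    """Normalized n-point DFT matrix, which is unitary."""
    k = np.arange(n)
    return np.exp(-2j * np.pi * np.outer(k, k) / n) / np.sqrt(n)

def walsh_hadamard_matrix(n: int) -> np.ndarray:
    """Normalized Hadamard matrix in natural order; n must be a power of two."""
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of two")
    h = np.array([[1.0]])
    base = np.array([[1.0, 1.0], [1.0, -1.0]])
    while h.shape[0] < n:
        h = np.kron(base, h)  # Sylvester construction doubles the size
    return h / np.sqrt(n)

def precode(symbols: np.ndarray, u: np.ndarray) -> np.ndarray:
    """Spread the information symbols over the columns of the unitary matrix u."""
    return u @ symbols

def deprecode(samples: np.ndarray, u: np.ndarray) -> np.ndarray:
    """Invert the precoding; for a unitary u the inverse is its conjugate transpose."""
    return u.conj().T @ samples

rng = np.random.default_rng(0)
n = 8  # hypothetical block length
qpsk = (rng.choice([-1, 1], n) + 1j * rng.choice([-1, 1], n)) / np.sqrt(2)

recovered = {}
for name, u in {"dft": dft_matrix(n), "wht": walsh_hadamard_matrix(n)}.items():
    tx = precode(qpsk, u)
    recovered[name] = deprecode(tx, u)
```

Both matrices preserve signal energy and are inverted in the same way, which is the structural property the USC framework relies on; the sketch says nothing about error performance over a real channel.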

Sampling Complexity of Path Integral Methods for Trajectory Optimization

Mar 18, 2022

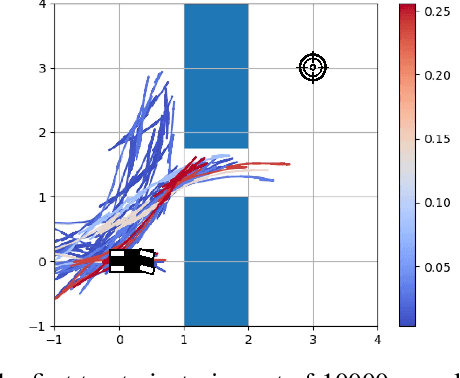

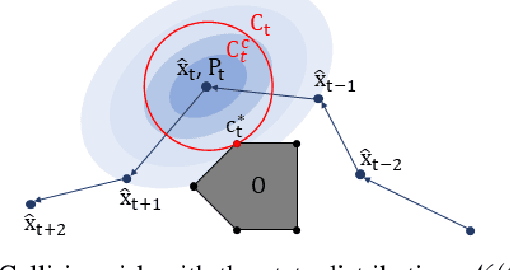

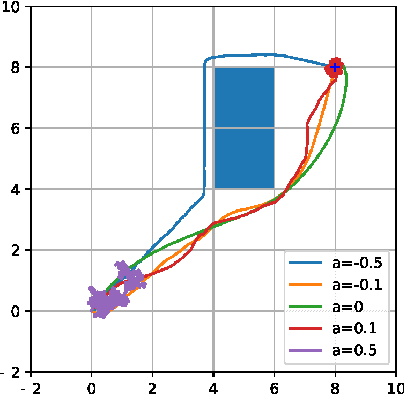

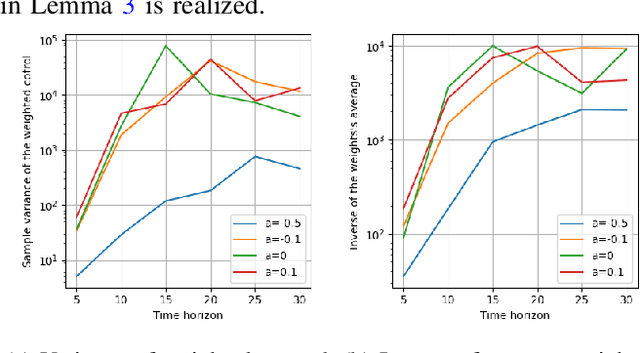

The use of random sampling in decision-making and control has become popular with the ease of access to graphics processing units that can generate and evaluate multiple random trajectories for real-time robotic applications. In contrast to sequential optimization, the sampling-based method can take advantage of parallel computing to maintain constant control loop frequencies. Inspired by its wide applicability in robotic applications, we derive a sampling complexity result applicable to general nonlinear systems considered in the path integral method, which is a sampling-based method. The result determines the number of samples required for the estimated control signal to stay within given error bounds of the optimal value at a predefined risk probability. The sampling complexity result shows that the variance of the estimated control value is upper-bounded in terms of the expectation of the cost. We then apply the result to a linear time-varying dynamical system with a quadratic cost and an indicator function cost to avoid constraint sets.
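The sampling-based estimate that such a complexity result concerns can be sketched as follows. This is a generic path-integral style estimator (exponentially weighted average of sampled perturbations), written under assumptions that are not taken from the paper: a scalar toy cost, unit-variance Gaussian perturbations, and a hypothetical `temperature` parameter.

```python
import numpy as np

def path_integral_control(costs, noises, temperature):
    """Monte Carlo control estimate: sum_i w_i * eps_i with w_i proportional to exp(-S_i / temperature)."""
    costs = np.asarray(costs, dtype=float)
    noises = np.asarray(noises, dtype=float)
    # Subtracting the minimum cost leaves the normalized weights unchanged
    # and avoids underflow in the exponential.
    weights = np.exp(-(costs - costs.min()) / temperature)
    weights /= weights.sum()
    return weights @ noises

rng = np.random.default_rng(0)
num_samples = 1000  # the quantity a sampling complexity bound would prescribe
noises = rng.normal(0.0, 1.0, size=(num_samples, 1))

# Toy trajectory cost (assumption): squared distance of the perturbed input from a target of 1.0.
costs = (noises[:, 0] - 1.0) ** 2

u_hat = path_integral_control(costs, noises, temperature=0.5)
```

Because every sample is evaluated independently, the cost computation parallelizes naturally, which is the property the abstract attributes to GPU-based implementations. The estimate fluctuates with the number of samples, which is why a bound on the required sample count is useful.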

Time-varying Gaussian Process Bandit Optimization with Non-constant Evaluation Time

Mar 11, 2020

The Gaussian process bandit is a problem in which we want to find a maximizer of a black-box function with the minimum number of function evaluations. If the black-box function varies with time, then time-varying Bayesian optimization is a promising framework. However, a drawback of current methods is the assumption that the evaluation time for every observation is constant, which can be unrealistic for many practical applications, e.g., recommender systems and environmental monitoring. As a result, the performance of current methods can degrade when this assumption is violated. To cope with this problem, we propose a novel time-varying Bayesian optimization algorithm that can effectively handle non-constant evaluation time. Furthermore, we theoretically establish a regret bound for our algorithm. Our bound shows that the pattern of the evaluation time sequence can strongly affect the difficulty of the problem. We also provide experimental results to validate the practical effectiveness of the proposed method.
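One way to picture how non-constant evaluation time enters a time-varying Gaussian process model is to index observations by their actual timestamps instead of by evaluation count. The sketch below does this with a product kernel: an RBF kernel over a one-dimensional input times a forgetting factor over elapsed time. The kernel form, the `epsilon` forgetting rate, and all function names are assumptions chosen for illustration; the abstract does not specify the model the authors use.

```python
import numpy as np

def tv_kernel(x1, t1, x2, t2, length_scale=1.0, epsilon=0.1):
    """RBF kernel in x multiplied by a forgetting factor in elapsed time (assumed form)."""
    x1, t1, x2, t2 = (np.asarray(a, dtype=float) for a in (x1, t1, x2, t2))
    spatial = np.exp(-0.5 * (x1[:, None] - x2[None, :]) ** 2 / length_scale**2)
    temporal = (1.0 - epsilon) ** (np.abs(t1[:, None] - t2[None, :]) / 2.0)
    return spatial * temporal

def posterior_mean(x_obs, t_obs, y_obs, x_query, t_query, noise=1e-2):
    """GP posterior mean at (x_query, t_query) given observations taken at real timestamps t_obs."""
    k_oo = tv_kernel(x_obs, t_obs, x_obs, t_obs) + noise * np.eye(len(x_obs))
    k_qo = tv_kernel(x_query, t_query, x_obs, t_obs)
    return k_qo @ np.linalg.solve(k_oo, np.asarray(y_obs, dtype=float))

# One observation at time 0; query the same input after a short and a long delay.
means = posterior_mean(
    x_obs=[0.0], t_obs=[0.0], y_obs=[1.0],
    x_query=[0.0, 0.0], t_query=[1.0, 10.0],
)
```

In this sketch an observation followed by a long evaluation delay carries less weight at the next decision than one followed by a short delay, which illustrates why the pattern of evaluation times can change how hard the problem is.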

UBERT: A Novel Language Model for Synonymy Prediction at Scale in the UMLS Metathesaurus

Apr 27, 2022

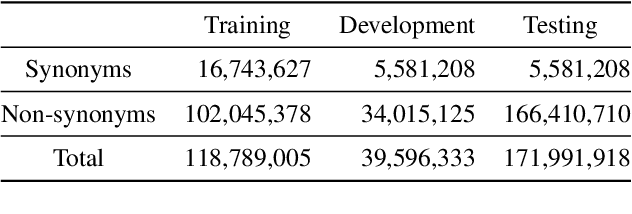

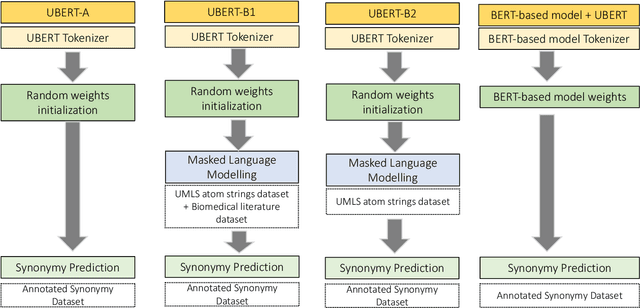

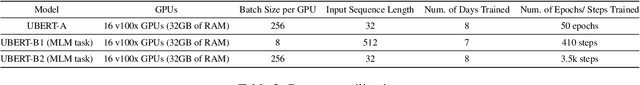

The UMLS Metathesaurus integrates more than 200 biomedical source vocabularies. During the Metathesaurus construction process, synonymous terms are clustered into concepts by human editors, assisted by lexical similarity algorithms. This process is error-prone and time-consuming. Recently, a deep learning model (LexLM) has been developed for the UMLS Vocabulary Alignment (UVA) task. This work introduces UBERT, a BERT-based language model pretrained on UMLS terms via a supervised Synonymy Prediction (SP) task that replaces the original Next Sentence Prediction (NSP) task. The effectiveness of UBERT for the UMLS Metathesaurus construction process is evaluated using the UVA task. We show that UBERT outperforms LexLM, as well as biomedical BERT-based models. Key to the performance of UBERT are the synonymy prediction task specifically developed for UBERT, the tight alignment of training data to the UVA task, and the similarity of the models used for pretrained UBERT.

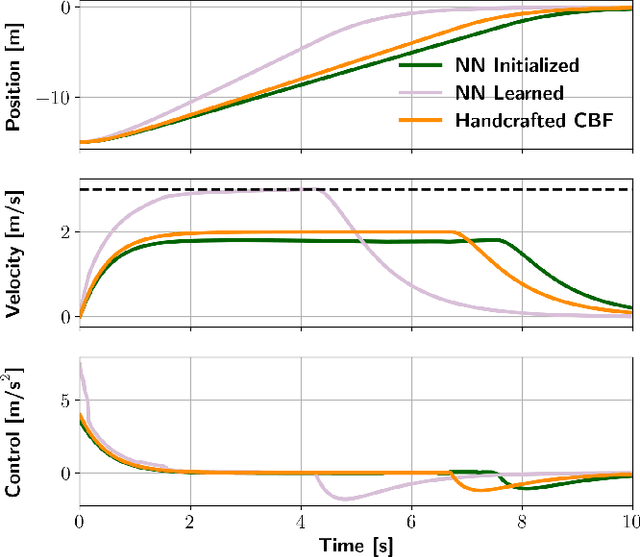

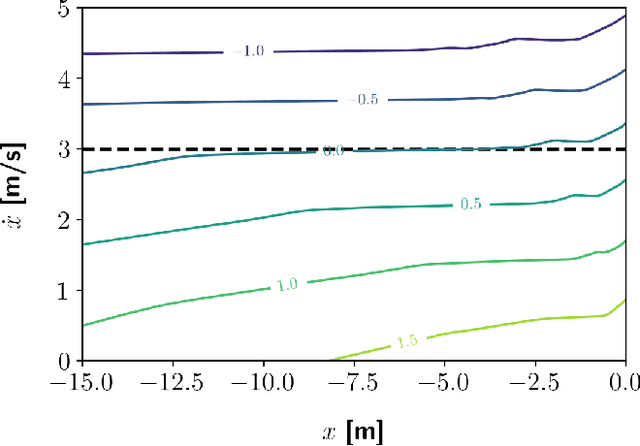

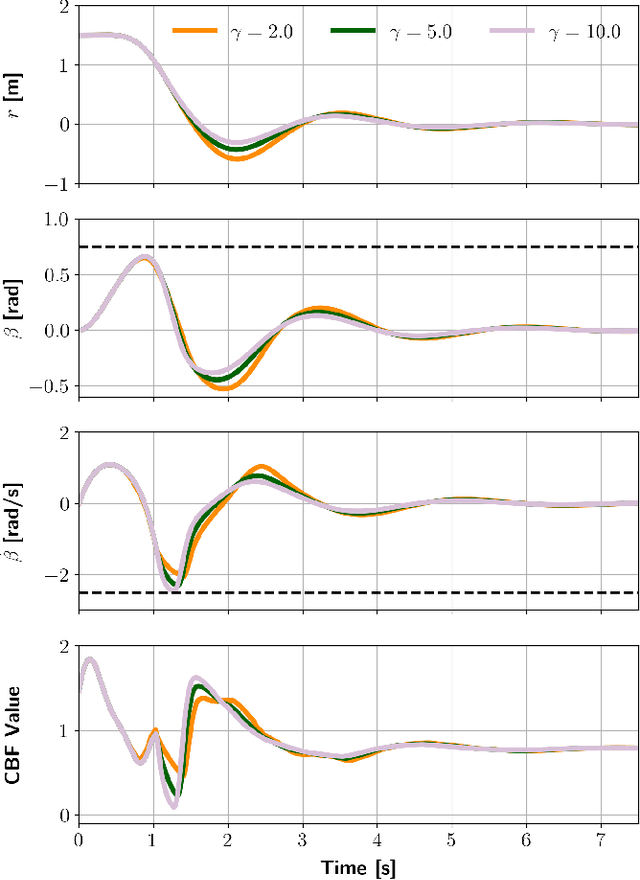

Learning a Better Control Barrier Function

May 11, 2022

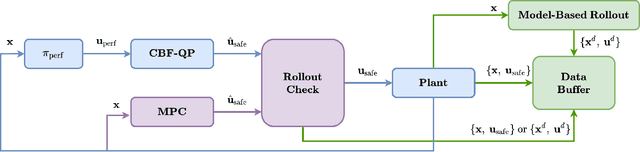

Control barrier functions (CBFs) are widely used in safety-critical controllers. However, the construction of valid CBFs is well known to be challenging, especially for nonlinear or non-convex constraints and high relative degree systems. On the other hand, finding a conservative CBF that only recovers a portion of the true safe set is usually possible. In this work, starting from a "conservative" handcrafted control barrier function (HCBF), we develop a method to find a control barrier function that recovers a reasonably larger portion of the safe set. Alternatively, by incorporating the hard constraints into an optimal control problem, e.g., MPC, we can safely generate solutions within the true safe set. Nevertheless, such an approach is usually computationally expensive and may not lend itself to real-time implementations. We propose to combine the two methods. During training, we utilize MPC to collect safe trajectory data. Thereafter, we train a neural network to estimate the difference between the HCBF and the CBF that recovers a closer approximation of the true safe set. Using the proposed approach, we can generate a safe controller that is less conservative and computationally efficient. We validate our approach on three systems: a second-order integrator, ball-on-beam, and unicycle.
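For readers unfamiliar with how a CBF is used inside a controller, the following sketch shows a closed-form safety filter on a toy system. It assumes a single integrator x_dot = u with the handcrafted barrier h(x) = x_max - x, which is simpler than any of the three systems in the paper, and it does not include the learned correction term. The names `cbf_filter`, `simulate`, and `alpha` are hypothetical.

```python
def cbf_filter(u_nominal: float, x: float, x_max: float, alpha: float = 1.0) -> float:
    """Minimally modify u_nominal so that h(x) = x_max - x satisfies h_dot + alpha * h >= 0.

    For x_dot = u we have h_dot = -u, so the condition reduces to u <= alpha * (x_max - x).
    """
    u_bound = alpha * (x_max - x)
    return min(u_nominal, u_bound)

def simulate(x0: float, u_nominal: float, x_max: float, steps: int = 200, dt: float = 0.01) -> float:
    """Forward-Euler rollout of the filtered single integrator; returns the final state."""
    x = x0
    for _ in range(steps):
        x += dt * cbf_filter(u_nominal, x, x_max)
    return x

# An aggressive nominal input that would cross x_max = 1.0 without the filter.
x_final = simulate(x0=0.0, u_nominal=5.0, x_max=1.0)
```

The filter leaves the nominal input untouched when it is already safe and clips it otherwise. A conservative barrier shrinks the set where the nominal input passes through unchanged, which is the conservatism that a learned correction to the HCBF aims to reduce.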

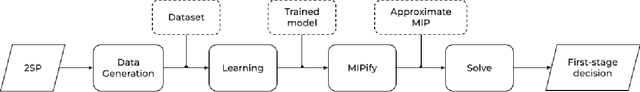

Neur2SP: Neural Two-Stage Stochastic Programming

May 20, 2022

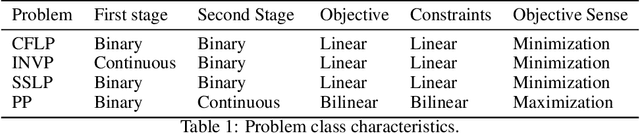

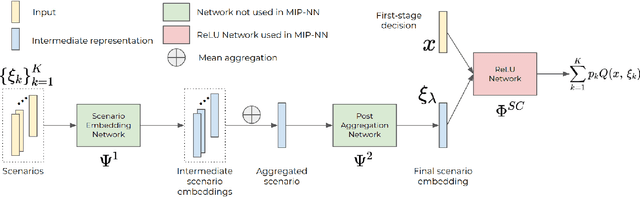

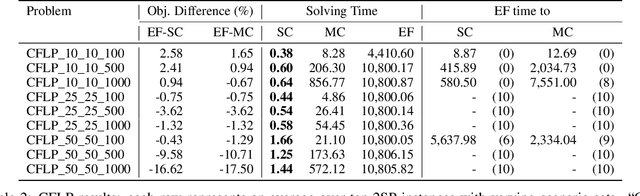

Stochastic programming is a powerful modeling framework for decision-making under uncertainty. In this work, we tackle two-stage stochastic programs (2SPs), the most widely applied and studied class of stochastic programming models. Solving 2SPs exactly requires evaluation of an expected value function that is computationally intractable. Additionally, having a mixed-integer linear program (MIP) or a nonlinear program (NLP) in the second stage further aggravates the problem difficulty. In such cases, solving them can be prohibitively expensive even if specialized algorithms that exploit problem structure are employed. Finding high-quality (first-stage) solutions without leveraging problem structure can be crucial in such settings. We develop Neur2SP, a new method that approximates the expected value function via a neural network to obtain a surrogate model that can be solved more efficiently than the traditional extensive formulation approach. Moreover, Neur2SP makes no assumptions about the problem structure, in particular about the second-stage problem, and can be implemented using an off-the-shelf solver and open-source libraries. Our extensive computational experiments on benchmark 2SP datasets from four problem classes with different structures (containing MIP and NLP second-stage problems) show the efficiency (time) and efficacy (solution quality) of Neur2SP. Specifically, the proposed method takes less than 1.66 seconds across all problems, achieving high-quality solutions even as the number of scenarios increases, an ideal property that is difficult to have for traditional 2SP solution techniques. In comparison, the most generic baseline method typically requires minutes to hours to find solutions of comparable quality.
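For reference, a two-stage stochastic program is commonly written in the textbook form below. The symbols are assumptions introduced here and do not come from the abstract: $x$ is the first-stage decision, $\xi$ the random scenario, $y$ the second-stage (recourse) decision, and $Q$ the second-stage value function whose expectation is what a neural surrogate would approximate.

```latex
\min_{x \in \mathcal{X}} \; c^\top x + \mathbb{E}_{\xi}\!\left[ Q(x, \xi) \right],
\qquad
Q(x, \xi) = \min_{y \in \mathcal{Y}(x, \xi)} \; g(y, \xi).

% Sample-average (extensive-form) approximation with K scenarios:
\min_{x \in \mathcal{X}} \; c^\top x + \frac{1}{K} \sum_{k=1}^{K} Q(x, \xi_k).
```

The extensive form adds one copy of the second-stage problem per scenario, so its size grows with $K$. Replacing the expectation with a learned function of $x$ is one way to avoid that growth.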

WikiOmnia: generative QA corpus on the whole Russian Wikipedia

Apr 29, 2022

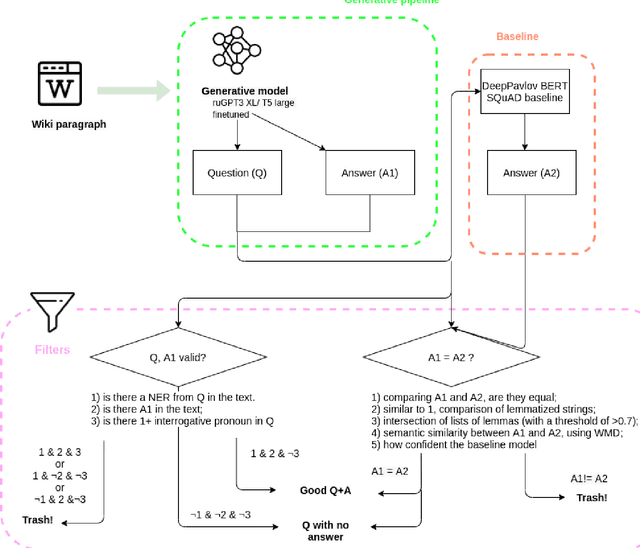

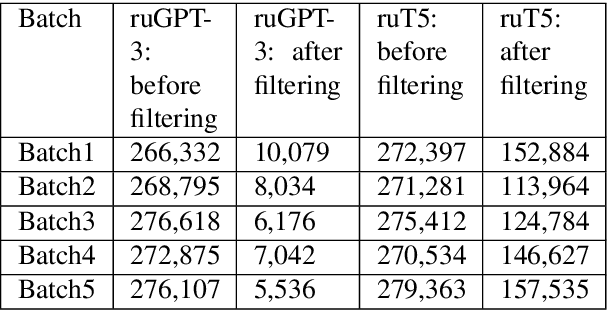

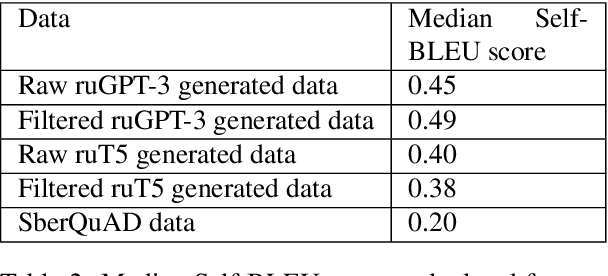

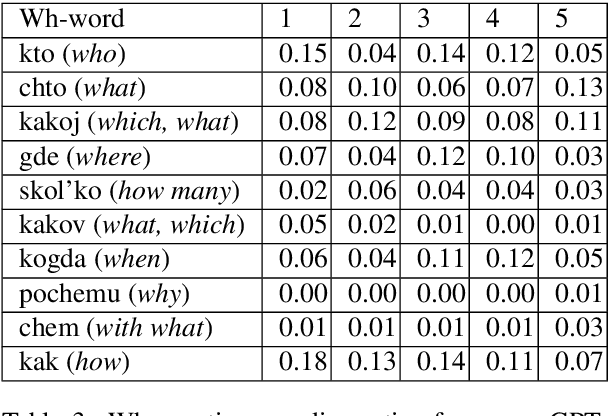

General question answering (QA) research has developed its methodology with the Stanford Question Answering Dataset (SQuAD) as the main benchmark. However, compiling factual questions requires time- and labour-consuming annotation, which limits the potential size of the training data. We present the WikiOmnia dataset, a new publicly available set of QA pairs and corresponding Russian Wikipedia article summary sections, composed with a fully automated generative pipeline. The dataset includes every available article from Wikipedia for the Russian language. The WikiOmnia pipeline is available open-source and has also been tested for creating SQuAD-formatted QA on other domains, such as news texts, fiction, and social media. The resulting dataset includes two parts: raw data on the whole Russian Wikipedia (7,930,873 QA pairs with paragraphs for ruGPT-3 XL and 7,991,040 QA pairs with paragraphs for ruT5-large) and cleaned data with strict automatic verification (over 160,000 QA pairs with paragraphs for ruGPT-3 XL and over 3,400,000 QA pairs with paragraphs for ruT5-large).

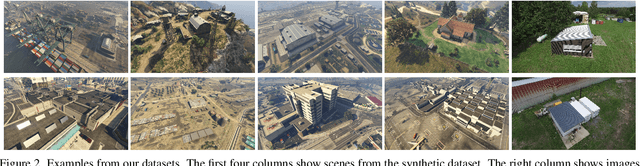

Real-time dense 3D Reconstruction from monocular video data captured by low-cost UAVs

Apr 21, 2021

Real-time 3D reconstruction enables fast dense mapping of the environment, which benefits numerous applications, such as navigation or live evaluation of an emergency. In contrast to most real-time capable approaches, our approach does not need an explicit depth sensor. Instead, we rely only on a video stream from a camera and its intrinsic calibration. By exploiting the self-motion of the unmanned aerial vehicle (UAV) flying with an oblique view around buildings, we estimate both camera trajectory and depth for selected images with enough novel content. To create a 3D model of the scene, we rely on a three-stage processing chain. First, we estimate the rough camera trajectory using a simultaneous localization and mapping (SLAM) algorithm. Once a suitable constellation is found, we estimate depth for local bundles of images using a Multi-View Stereo (MVS) approach and then fuse this depth into a global surfel-based model. For our evaluation, we use 55 video sequences with diverse settings, consisting of both synthetic and real scenes. We evaluate not only the generated reconstruction but also the intermediate products and achieve competitive results both qualitatively and quantitatively. At the same time, our method can keep up with a 30 fps video at a resolution of 768x448 pixels.

Intelligent Autonomous Intersection Management

Feb 09, 2022

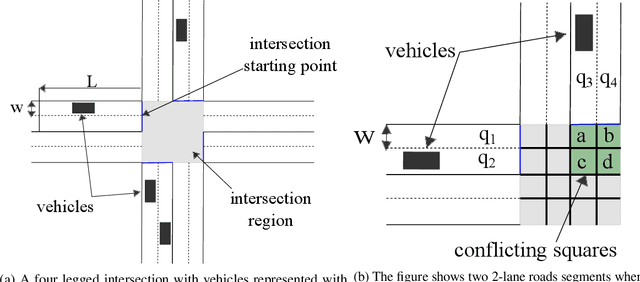

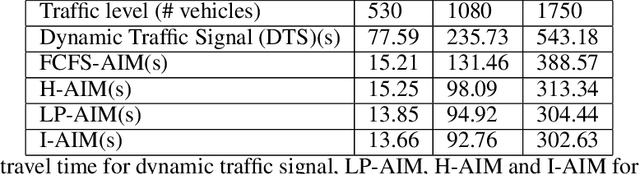

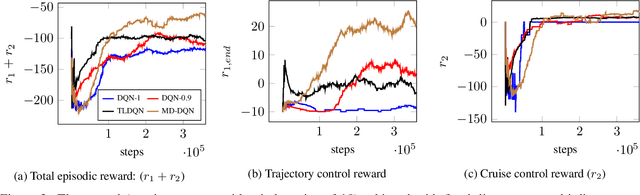

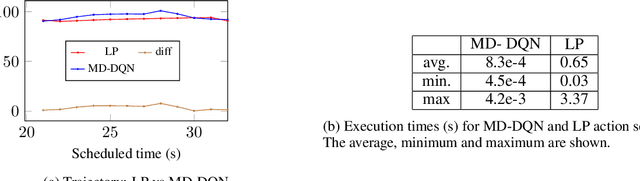

Connected Autonomous Vehicles will make autonomous intersection management a reality, replacing traditional traffic signal control. Autonomous intersection management requires time and speed adjustment of vehicles arriving at an intersection so that they pass through it without collisions. Due to its computational complexity, this problem has been studied only when vehicle arrival times in the vicinity of the intersection are known beforehand, which limits the applicability of these solutions for real-time deployment. To solve the real-time autonomous traffic intersection management problem, we propose a reinforcement learning (RL) based multiagent architecture and a novel RL algorithm coined multi-discount Q-learning. In multi-discount Q-learning, we introduce a simple yet effective way to solve a Markov Decision Process by preserving both short-term and long-term goals, which is crucial for collision-free speed control. Our empirical results show that our RL-based multiagent solution can achieve near-optimal performance efficiently when minimizing the travel time through an intersection.

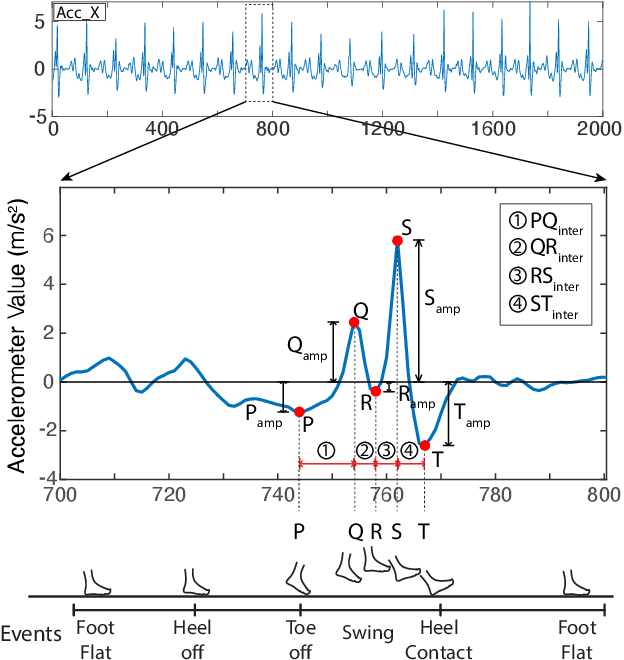

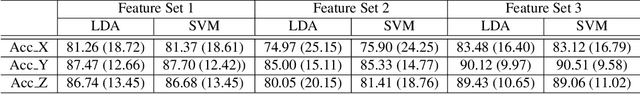

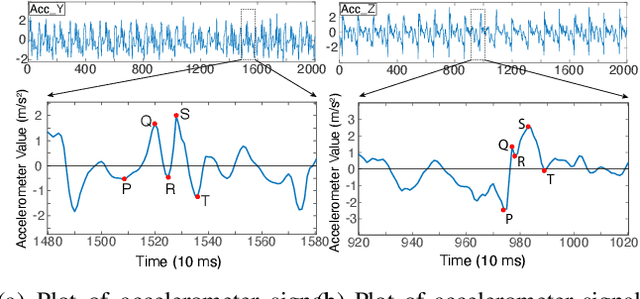

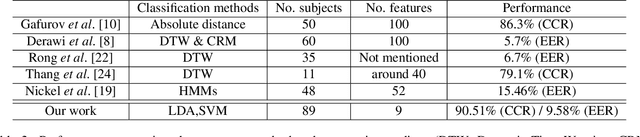

Formalizing PQRST Complex in Accelerometer-based Gait Cycle for Authentication

May 14, 2022

Accelerometer signals generated through gait present a new frontier of human interface with mobile devices. Gait cycle detection based on these signals has applications in various areas, including authentication, health monitoring, and activity detection. Template-based studies focus on how the entire gait cycle represents walking patterns, but these are compute-intensive. Aggregate feature-based studies extract features in the time domain and frequency domain from the entire gait cycle to reduce the number of features. However, these methods may miss critical structural information needed to appropriately represent the intricacies of walking patterns. To the best of our knowledge, no study has formally proposed a structure to capture variations within gait cycles or phases from accelerometer readings. We propose a new structure named the PQRST Complex, which corresponds to the swing phase in a gait cycle and matches the foot movements during this phase, thus capturing the changes in foot position. In our experiments, based on the nine features derived from this structure, the accelerometer-based gait authentication system outperforms many state-of-the-art gait cycle-based authentication systems. Our work introduces a new paradigm for capturing the structure of gait, analogous to the "QRS complex" structure of ECG signals related to the heart, and opens multiple areas of research and practice using gait.
