Zhu Han

SAFE: Semantic Adaptive Feature Extraction with Rate Control for 6G Wireless Communications

Oct 02, 2024

Abstract:Most current Deep Learning-based Semantic Communication (DeepSC) systems are designed and trained exclusively for particular single-channel conditions, which restricts their adaptability and overall bandwidth utilization. To address this, we propose an innovative Semantic Adaptive Feature Extraction (SAFE) framework, which significantly improves bandwidth efficiency by allowing users to select different sub-semantic combinations based on their channel conditions. This paper also introduces three advanced learning algorithms to optimize the performance of the SAFE framework as a whole. Through a series of simulation experiments, we demonstrate that the SAFE framework can effectively and adaptively extract and transmit semantics under different channel bandwidth conditions; its effectiveness is verified through objective and subjective quality evaluations.

Enhancing Spectrum Efficiency in 6G Satellite Networks: A GAIL-Powered Policy Learning via Asynchronous Federated Inverse Reinforcement Learning

Sep 27, 2024

Abstract:In this paper, a novel generative adversarial imitation learning (GAIL)-powered policy learning approach is proposed for optimizing beamforming, spectrum allocation, and remote user equipment (RUE) association in non-terrestrial networks (NTNs). Traditional reinforcement learning (RL) methods for wireless network optimization often rely on manually designed reward functions, which can require extensive parameter tuning. To overcome these limitations, we employ inverse RL (IRL), specifically leveraging the GAIL framework, to automatically learn reward functions without manual design. We augment this framework with an asynchronous federated learning approach, enabling decentralized multi-satellite systems to collaboratively derive optimal policies. The proposed method aims to maximize spectrum efficiency (SE) while meeting minimum information rate requirements for RUEs. To address the non-convex, NP-hard nature of this problem, we combine many-to-one matching theory with a multi-agent asynchronous federated IRL (MA-AFIRL) framework. This allows agents to learn through asynchronous environmental interactions, improving training efficiency and scalability. The expert policy is generated using the whale optimization algorithm (WOA), providing data to train the automatic reward function within GAIL. Simulation results show that the proposed MA-AFIRL method outperforms traditional RL approaches, achieving a 14.6% improvement in convergence and reward value. The GAIL-driven policy learning establishes a new benchmark for 6G NTN optimization.

Leaky Wave Antenna-Equipped RF Chipless Tags for Orientation Estimation

Aug 31, 2024

Abstract:Accurate orientation estimation of an object in a scene is critical in robotics, aerospace, augmented reality, and medicine, as it supports scene understanding. This paper introduces a novel orientation estimation approach leveraging radio frequency (RF) sensing technology and leaky-wave antennas (LWAs). Specifically, we propose a framework for a radar system to estimate the orientation of a "dumb" LWA-equipped backscattering tag, marking the first exploration of this method in the literature. Our contributions include a comprehensive framework for signal modeling and orientation estimation with multi-subcarrier transmissions, and the formulation of a maximum likelihood estimator (MLE). Moreover, we analyze the impact of imperfect tag location information, revealing that it minimally affects estimation accuracy. Exploiting related results, we propose an approximate MLE and introduce a low-complexity radiation-pointing angle-based estimator with near-optimal performance. We derive the feasible orientation estimation region of the latter and show that it depends mainly on the system bandwidth. Our analytical results are validated through Monte Carlo simulations and reveal that the low-complexity estimator achieves near-optimal accuracy and that its feasible orientation estimation region is also approximately shared by the other estimators. Finally, we show that the optimal number of subcarriers increases with sensing time under a power budget constraint.

Active STAR-RIS Empowered Edge System for Enhanced Energy Efficiency and Task Management

Aug 23, 2024

Abstract:The proliferation of data-intensive and low-latency applications has driven the development of multi-access edge computing (MEC) as a viable solution to meet the increasing demands for high-performance computing and storage capabilities at the network edge. Despite the benefits of MEC, challenges persist, such as obstructions that cause non-line-of-sight (NLoS) communication. Reconfigurable intelligent surfaces (RISs) and the more advanced simultaneously transmitting and reflecting (STAR)-RISs have emerged to address these challenges; however, practical limitations and multiplicative fading effects hinder their efficacy. We propose an active STAR-RIS-assisted MEC system to overcome these obstacles, leveraging the advantages of active STAR-RIS. The main contributions consist of formulating an optimization problem to minimize energy consumption with task queue stability by jointly optimizing the partial task offloading, amplitude, phase shift coefficients, amplification coefficients, transmit power of the base station (BS), and admitted tasks. Furthermore, we decompose the non-convex problem into manageable sub-problems, employing sequential fractional programming for transmit power control, a convex optimization technique for task offloading, and Lyapunov optimization with a double deep Q-network (DDQN) for joint amplitude, phase shift, amplification, and task admission. Extensive performance evaluations demonstrate the superiority of the proposed system over benchmark schemes, highlighting its potential for enhancing MEC system performance. Numerical results indicate that our proposed system outperforms the conventional STAR-RIS-assisted system by 18.64% and the conventional RIS-assisted system by 30.43%.

Human-In-The-Loop Machine Learning for Safe and Ethical Autonomous Vehicles: Principles, Challenges, and Opportunities

Aug 22, 2024

Abstract:Rapid advances in Machine Learning (ML) have triggered new trends in Autonomous Vehicles (AVs). ML algorithms play a crucial role in interpreting sensor data, predicting potential hazards, and optimizing navigation strategies. However, achieving full autonomy in cluttered and complex situations, such as intricate intersections, diverse sceneries, varied trajectories, and complex missions, is still challenging, and the cost of data labeling remains a significant bottleneck. The adaptability and robustness of humans in complex scenarios motivate the inclusion of humans in the ML process, leveraging their creativity, ethical power, and emotional intelligence to improve ML effectiveness. The scientific community knows this approach as Human-In-The-Loop Machine Learning (HITL-ML). Towards safe and ethical autonomy, we present a review of HITL-ML for AVs, focusing on Curriculum Learning (CL), Human-In-The-Loop Reinforcement Learning (HITL-RL), Active Learning (AL), and ethical principles. In CL, human experts systematically train ML models by starting with simple tasks and gradually progressing to more difficult ones. HITL-RL significantly enhances the RL process by incorporating human input through techniques like reward shaping, action injection, and interactive learning. AL streamlines the annotation process by targeting specific instances that need to be labeled with human oversight, reducing the overall time and cost associated with training. Ethical principles must be embedded in AVs to align their behavior with societal values and norms. In addition, we provide insights and specify future research directions.
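
As a rough illustration of how active learning can target the instances most worth sending to a human annotator, the sketch below ranks unlabeled samples by predictive entropy. Entropy-based uncertainty sampling is an assumption chosen here for illustration; the abstract does not say which selection criterion the review covers, and the names `entropy` and `select_for_labeling` are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of one predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions, budget):
    """Return indices of the `budget` most uncertain samples.

    `predictions` is a list of per-sample class-probability lists produced
    by the current model (hypothetical input format). Higher entropy means
    the model is less certain, so those samples go to the human first.
    """
    ranked = sorted(
        range(len(predictions)),
        key=lambda i: entropy(predictions[i]),
        reverse=True,
    )
    return ranked[:budget]


if __name__ == "__main__":
    model_outputs = [[0.9, 0.1], [0.5, 0.5], [0.99, 0.01], [0.6, 0.4]]
    print(select_for_labeling(model_outputs, budget=2))
```

With a labeling budget of two, the samples the model splits most evenly between classes are queued for annotation, which is one simple way to cut labeling cost.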

IRS-Assisted Lossy Communications Under Correlated Rayleigh Fading: Outage Probability Analysis and Optimization

Aug 13, 2024

Abstract:This paper focuses on an intelligent reflecting surface (IRS)-assisted lossy communication system with correlated Rayleigh fading. We analyze the correlated channel model and derive the outage probability of the system. Then, we design a deep reinforcement learning (DRL) method to optimize the phase shift of the IRS in order to maximize the received signal power. Moreover, this paper presents results of the simulations conducted to evaluate the performance of the DRL-based method. The simulation results indicate that the outage probability of the considered system increases significantly with more correlated channel coefficients. Moreover, the performance gap between the DRL-based method and the theoretical limit increases with higher transmit power and/or a larger distortion requirement.

Large Models for Aerial Edges: An Edge-Cloud Model Evolution and Communication Paradigm

Aug 09, 2024

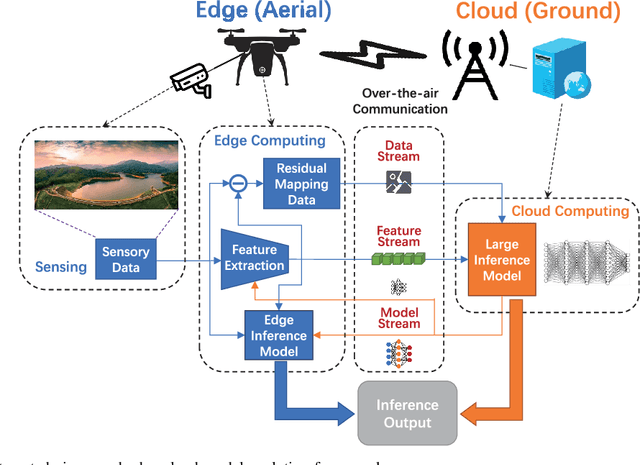

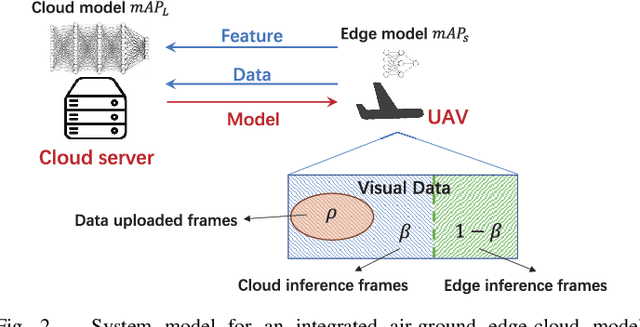

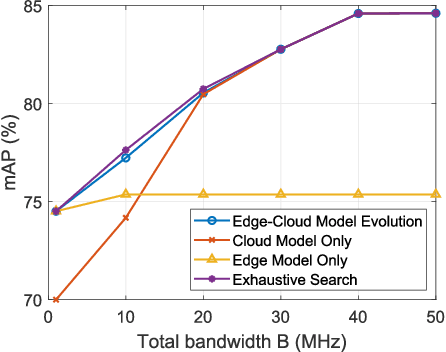

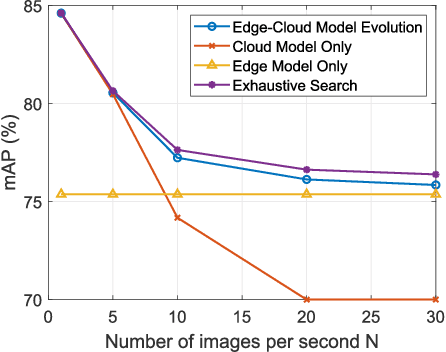

Abstract:The future sixth-generation (6G) of wireless networks is expected to surpass its predecessors by offering ubiquitous coverage through integrated air-ground facility deployments in both communication and computing domains. In this network, aerial facilities, such as unmanned aerial vehicles (UAVs), conduct artificial intelligence (AI) computations based on multi-modal data to support diverse applications including surveillance and environment construction. However, these multi-domain inference and content generation tasks require large AI models, demanding powerful computing capabilities, thus posing significant challenges for UAVs. To tackle this problem, we propose an integrated edge-cloud model evolution framework, where UAVs serve as edge nodes for data collection and edge model computation. Through wireless channels, UAVs collaborate with ground cloud servers, providing cloud model computation and model updating for edge UAVs. With limited wireless communication bandwidth, the proposed framework faces the challenge of information exchange scheduling between the edge UAVs and the cloud server. To tackle this, we present joint task allocation, transmission resource allocation, transmission data quantization design, and edge model update design to enhance the inference accuracy of the integrated air-ground edge-cloud model evolution framework by mean average precision (mAP) maximization. A closed-form lower bound on the mAP of the proposed framework is derived, and the solution to the mAP maximization problem is optimized accordingly. Simulations, based on results from vision-based classification experiments, consistently demonstrate that the mAP of the proposed framework outperforms both a centralized cloud model framework and a distributed edge model framework across various communication bandwidths and data sizes.

Semantic Successive Refinement: A Generative AI-aided Semantic Communication Framework

Jul 31, 2024

Abstract:Semantic Communication (SC) is an emerging technology aiming to surpass the Shannon limit. Traditional SC strategies often minimize signal distortion between the original and reconstructed data, neglecting perceptual quality, especially in low Signal-to-Noise Ratio (SNR) environments. To address this issue, we introduce a novel Generative AI Semantic Communication (GSC) system for single-user scenarios. This system leverages deep generative models to establish a new paradigm in SC. Specifically, at the transmitter end, it employs a joint source-channel coding mechanism based on the Swin Transformer for efficient semantic feature extraction and compression. At the receiver end, an advanced Diffusion Model (DM) reconstructs high-quality images from degraded signals, enhancing perceptual details. Additionally, we present a Multi-User Generative Semantic Communication (MU-GSC) system utilizing an asynchronous processing model. This model effectively manages multiple user requests and optimally utilizes system resources for parallel processing. Simulation results on public datasets demonstrate that our generative AI semantic communication systems achieve superior transmission efficiency and enhanced communication content quality across various channel conditions. Compared to CNN-based DeepJSCC, our methods improve the Peak Signal-to-Noise Ratio (PSNR) by 17.75% in Additive White Gaussian Noise (AWGN) channels and by 20.86% in Rayleigh channels.
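
For readers unfamiliar with the metric, the following minimal sketch shows how PSNR is conventionally computed from the mean squared error between an original and a reconstructed image, and how a percentage gain over a baseline could be derived. It assumes 8-bit pixel values flattened into lists, and it assumes the percentage is taken relative to the baseline PSNR in dB; the abstract does not state how its figures were calculated. The function names are hypothetical.

```python
import math


def psnr(original, reconstructed, max_value=255.0):
    """Peak Signal-to-Noise Ratio in dB for two equal-length pixel sequences."""
    if not original or len(original) != len(reconstructed):
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(original, reconstructed)) / len(original)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)


def relative_gain_percent(baseline_db, improved_db):
    """Percentage improvement of one PSNR value over a baseline (assumed definition)."""
    return 100.0 * (improved_db - baseline_db) / baseline_db


if __name__ == "__main__":
    reference = [52, 55, 61, 59]
    decoded = [54, 55, 60, 57]
    print(f"PSNR: {psnr(reference, decoded):.2f} dB")
    print(f"Gain: {relative_gain_percent(20.0, 25.0):.1f}%")
```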

Semantic Enabled 6G LEO Satellite Communication for Earth Observation: A Resource-Constrained Network Optimization

Jul 31, 2024

Abstract:Earth observation satellites generate large amounts of real-time data for monitoring and managing time-critical events such as disaster relief missions. This presents a major challenge for satellite-to-ground communications operating under limited bandwidth capacities. This paper explores semantic communication (SC) as a potential alternative to traditional communication methods. The rationale for adopting SC is its inherent ability to reduce communication costs and improve spectrum efficiency for 6G non-terrestrial networks (6G-NTNs). We focus on optimizing the latency of the critical satellite imagery downlink for Earth observation through SC techniques. We formulate the latency minimization problem with SC quality-of-service (SC-QoS) constraints and address this problem with a meta-heuristic discrete whale optimization algorithm (DWOA) and a one-to-one matching game. The proposed approach for captured image processing and transmission includes the integration of joint semantic and channel encoding to ensure downlink sum-rate optimization and latency minimization. Empirical results from experiments demonstrate the efficiency of the proposed framework for latency optimization while preserving high-quality data transmission when compared to baselines.

FSSC: Federated Learning of Transformer Neural Networks for Semantic Image Communication

Jul 31, 2024

Abstract:In this paper, we address the problem of image semantic communication in a multi-user deployment scenario and propose a federated learning (FL) strategy for a Swin Transformer-based semantic communication system (FSSC). Firstly, we demonstrate that the adoption of a Swin Transformer for joint source-channel coding (JSCC) effectively extracts semantic information in the communication system. Next, the FL framework is introduced to collaboratively learn a global model by aggregating local model parameters, rather than directly sharing clients' data. This approach enhances user privacy protection and reduces the workload on the server or mobile edge. Simulation evaluations indicate that our method outperforms the typical JSCC algorithm and traditional separation-based communication algorithms. In particular, after integrating local semantics, the global aggregation model further increases the Peak Signal-to-Noise Ratio (PSNR) by more than 2 dB, demonstrating the effectiveness of our algorithm.
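
The idea of learning a global model by aggregating local parameters rather than sharing data can be sketched with a sample-weighted average in the style of FedAvg. This is an assumption made for illustration: the abstract does not specify the aggregation rule used in FSSC, and the parameter layout (a dictionary of flat float lists) and the name `federated_average` are hypothetical.

```python
def federated_average(client_params, client_sizes):
    """Aggregate local model parameters into global ones.

    client_params: list of dicts mapping a parameter name to a flat list of
                   floats (hypothetical layout; real models hold tensors).
    client_sizes:  number of local training samples per client, used as
                   aggregation weights (FedAvg-style, assumed here).
    """
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one sample count per client")
    total = sum(client_sizes)
    global_params = {}
    for name, reference in client_params[0].items():
        global_params[name] = [
            sum(params[name][i] * n for params, n in zip(client_params, client_sizes)) / total
            for i in range(len(reference))
        ]
    return global_params


if __name__ == "__main__":
    # Only parameters leave each client; raw images stay local.
    clients = [{"w": [1.0, 2.0]}, {"w": [3.0, 4.0]}]
    print(federated_average(clients, client_sizes=[1, 3]))
```

Weighting by local sample count gives clients with more data more influence on the global model; an unweighted mean is the special case where all counts are equal.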
