Yuntao Qian

Deep Neural Network Based Hyperspectral Pixel Classification With Factorized Spectral-Spatial Feature Representation

Apr 16, 2019

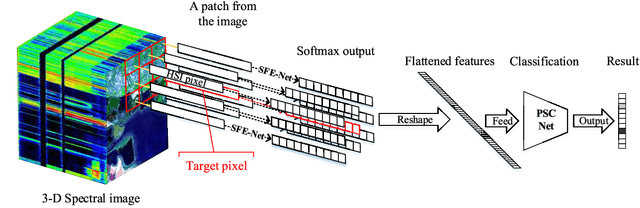

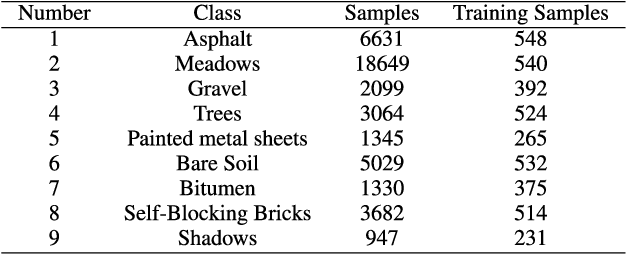

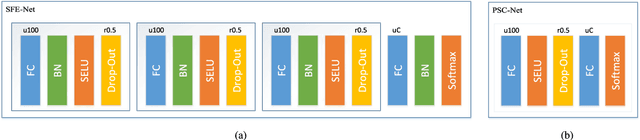

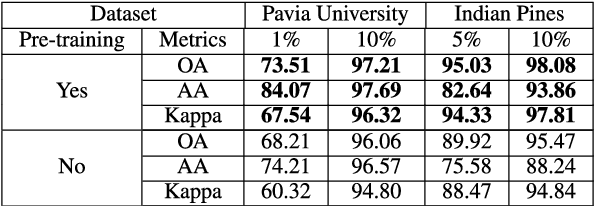

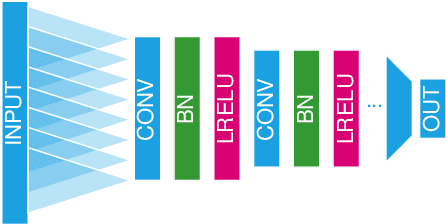

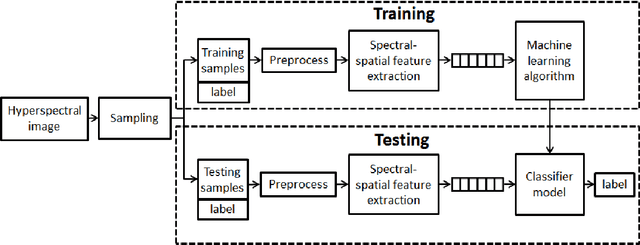

Abstract: Deep learning has been widely used for hyperspectral pixel classification due to its ability to generate deep feature representations. However, how to construct an efficient and powerful network suitable for hyperspectral data is still under exploration. In this paper, a novel neural network model is designed to take full advantage of the spectral-spatial structure of hyperspectral data. First, we extract pixel-based intrinsic features from rich yet redundant spectral bands using a subnetwork with a supervised pre-training scheme. Second, in order to utilize the local spatial correlation among pixels, we share the previous subnetwork as a spectral feature extractor for each pixel in an image patch, after which the spectral features of all pixels in the patch are combined and fed into the subsequent classification subnetwork. Finally, the whole network is further fine-tuned to improve its classification performance. In particular, the spectral-spatial factorization scheme applied in our model architecture makes the network size and the number of parameters much smaller than those of existing spectral-spatial deep networks for hyperspectral image classification. Experiments on hyperspectral data sets show that, compared with some state-of-the-art deep learning methods, our method achieves better classification results while having a smaller network size and fewer parameters.
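The parameter saving from sharing one spectral extractor across all pixels of a patch can be illustrated with simple arithmetic. The sketch below is not the paper's architecture: the layer sizes (`BANDS`, `PATCH_PIXELS`, `SPECTRAL_DIM`, `HIDDEN`, `CLASSES`) are hypothetical, and each subnetwork is reduced to a single fully connected layer purely for counting purposes.

```python
# Hypothetical sizes; none of these numbers come from the paper.
BANDS = 200         # spectral bands per pixel
PATCH_PIXELS = 25   # a 5 x 5 spatial patch
SPECTRAL_DIM = 16   # per-pixel feature size produced by the shared extractor
HIDDEN = 64         # hidden units in the classification subnetwork
CLASSES = 16        # number of land-cover classes


def dense_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def factorized_params():
    """Shared per-pixel spectral layer, then a layer over the combined features."""
    # The spectral extractor is shared by every pixel, so it is counted once.
    spectral = dense_params(BANDS, SPECTRAL_DIM)
    spatial = dense_params(PATCH_PIXELS * SPECTRAL_DIM, HIDDEN)
    head = dense_params(HIDDEN, CLASSES)
    return spectral + spatial + head


def joint_params():
    """One layer that sees every band of every pixel in the patch at once."""
    first = dense_params(PATCH_PIXELS * BANDS, HIDDEN)
    head = dense_params(HIDDEN, CLASSES)
    return first + head


if __name__ == "__main__":
    print("factorized:", factorized_params())
    print("joint:", joint_params())
```

Under these assumed sizes the factorized layout needs roughly a tenth of the parameters of the joint layout, because the expensive band-wise weights are stored only once.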

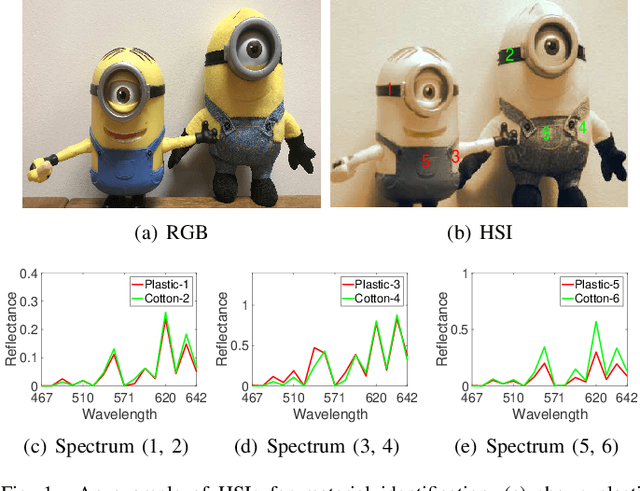

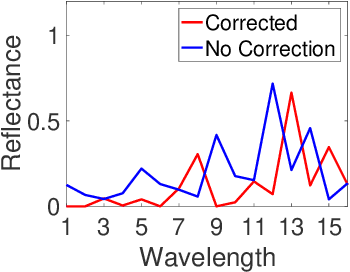

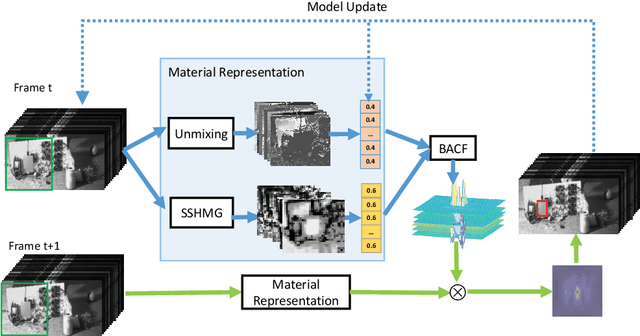

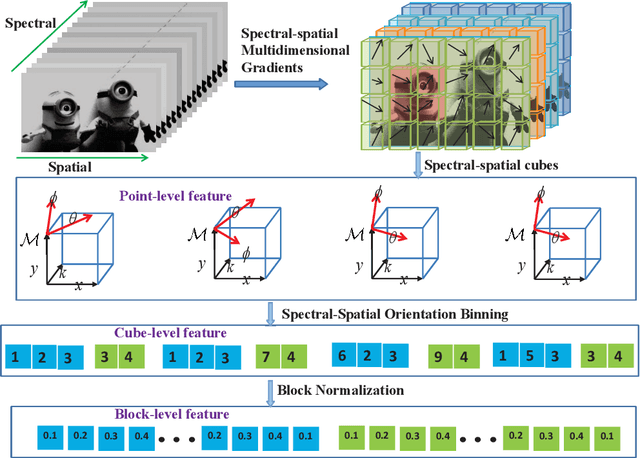

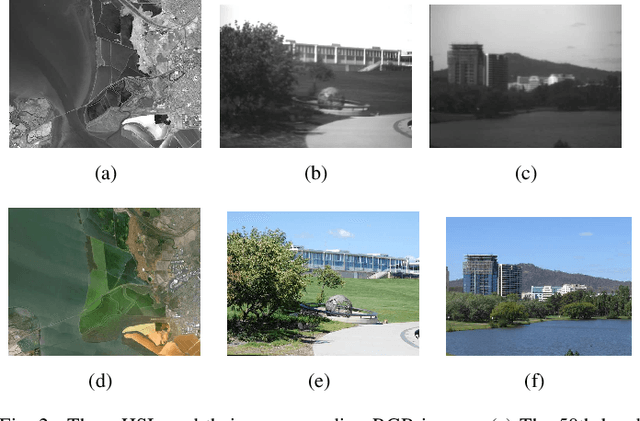

Spectral-spatial features for material based object tracking in hyperspectral videos

Dec 23, 2018

Abstract: Traditional color images only depict color intensities in red, green and blue channels, often making object trackers fail when a target shares similar color or texture with its surrounding environment. In contrast, the material information of targets contained in the large number of bands of hyperspectral images (HSIs) is more robust to these challenging conditions. In this paper, we conduct a comprehensive study on how HSIs can be utilized to boost object tracking from three aspects: benchmark dataset, material feature representation and material based tracking. In terms of the benchmark, we construct a dataset of fully annotated videos which contain both hyperspectral and color sequences of the same scene. We extract two types of material features from these videos. We first introduce a novel 3D spectral-spatial histogram of gradients to describe the local spectral-spatial structure in an HSI. Then an HSI is decomposed into its constituent materials and the associated abundances, i.e., the proportions of materials at each location, to encode the underlying information on material distribution. These two types of features are embedded into correlation filters, yielding material based tracking. Experimental results on the collected benchmark dataset show the potential and advantages of material based object tracking.
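The notion of abundances as per-pixel material proportions can be made concrete with the linear mixing model, in which a pixel spectrum is a weighted sum of material spectra. The sketch below is a minimal illustration under strong assumptions, not the decomposition used in the paper: it handles only two known materials, and the function name `unmix_two` and the example spectra are hypothetical.

```python
def unmix_two(pixel, e1, e2):
    """Least-squares abundances (a1, a2) with a1 + a2 = 1 and both in [0, 1].

    Models the pixel as a1 * e1 + a2 * e2, where e1 and e2 are the spectra
    of two known materials (endmembers).
    """
    diff = [u - v for u, v in zip(e1, e2)]
    denom = sum(d * d for d in diff)
    if denom == 0:
        raise ValueError("endmembers must differ")
    # Project (pixel - e2) onto (e1 - e2); this is the unconstrained optimum.
    a1 = sum((p - v) * d for p, v, d in zip(pixel, e2, diff)) / denom
    # Clipping a one-dimensional quadratic's optimum gives the constrained one.
    a1 = min(1.0, max(0.0, a1))
    return a1, 1.0 - a1


if __name__ == "__main__":
    material_a = [0.8, 0.6, 0.2]   # made-up three-band spectra
    material_b = [0.1, 0.3, 0.9]
    mixed = [0.25 * a + 0.75 * b for a, b in zip(material_a, material_b)]
    print(unmix_two(mixed, material_a, material_b))
```

With more than two materials the same idea becomes a constrained least-squares problem per pixel, and the resulting abundance maps are what serve as features.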

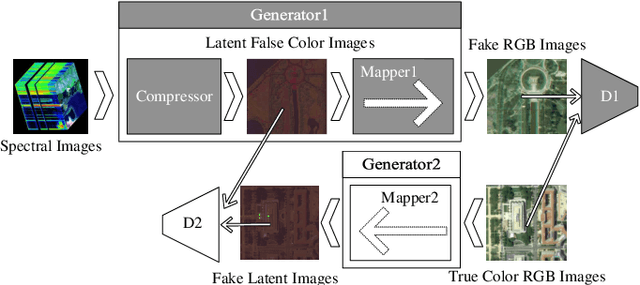

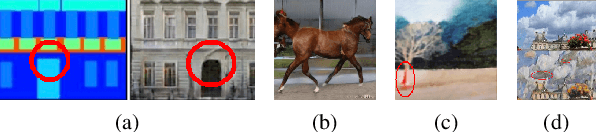

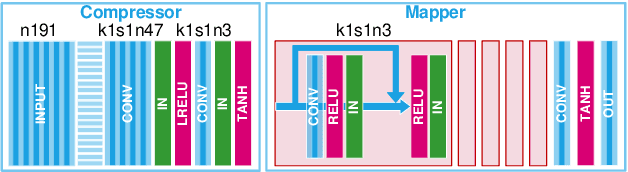

Spectral Image Visualization Using Generative Adversarial Networks

Feb 07, 2018

Abstract: Spectral images captured by satellites and radio-telescopes are analyzed to obtain information about the distribution of geological compositions, distant asters as well as undersea terrain. Spectral images usually contain tens to hundreds of contiguous narrow spectral bands and are widely used in various fields. But the vast majority of those image signals are beyond the visible range, which calls for special visualization techniques. The visualization of a spectral image should convey as much information as possible from the original signal and facilitate image interpretation. However, most of the existing visualization methods display spectral images in false colors, which contradict human experience and expectation. In this paper, we present a novel visualization generative adversarial network (GAN) to display spectral images in natural colors. To achieve our goal, we propose a loss function which consists of an adversarial loss and a structure loss. The adversarial loss pushes our solution toward the natural image distribution using a discriminator network that is trained to differentiate between false-color images and natural-color images. We also use a cycle loss as the structure constraint to guarantee structure consistency. Experimental results show that our method is able to generate structure-preserving and natural-looking visualizations.
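A loss that combines an adversarial term with a cycle term might be assembled as in the sketch below. The abstract does not give the exact formulation, so everything here is an assumption: the non-saturating form of the adversarial term, the L1 form of the cycle term, the weight `lam`, and all function names.

```python
import math


def adversarial_loss(d_scores):
    """Mean of -log D(G(x)) over a batch (non-saturating generator loss).

    d_scores holds the discriminator's probability that each generated
    visualization is a natural-color image.
    """
    eps = 1e-12  # guards against log(0)
    return -sum(math.log(max(s, eps)) for s in d_scores) / len(d_scores)


def cycle_loss(original, reconstructed):
    """Mean absolute error between input spectra and their reconstructions."""
    total, count = 0.0, 0
    for x, y in zip(original, reconstructed):
        for a, b in zip(x, y):
            total += abs(a - b)
            count += 1
    return total / count


def generator_loss(d_scores, original, reconstructed, lam=10.0):
    """Adversarial term plus a weighted structure (cycle) term.

    lam is a hypothetical trade-off weight, not a value from the paper.
    """
    return adversarial_loss(d_scores) + lam * cycle_loss(original, reconstructed)


if __name__ == "__main__":
    scores = [0.7, 0.4]                       # made-up discriminator outputs
    spectra = [[0.1, 0.5, 0.9], [0.2, 0.2, 0.3]]
    rebuilt = [[0.1, 0.4, 0.9], [0.3, 0.2, 0.3]]
    print(generator_loss(scores, spectra, rebuilt))
```

The adversarial term rewards outputs the discriminator accepts as natural, while the cycle term penalizes visualizations from which the original spectra cannot be recovered.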

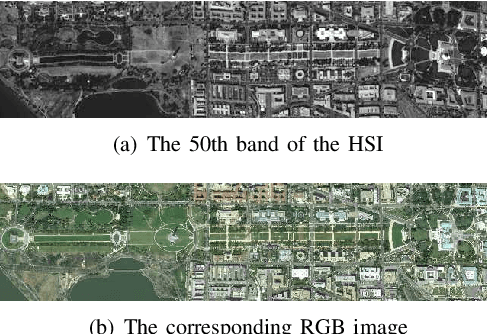

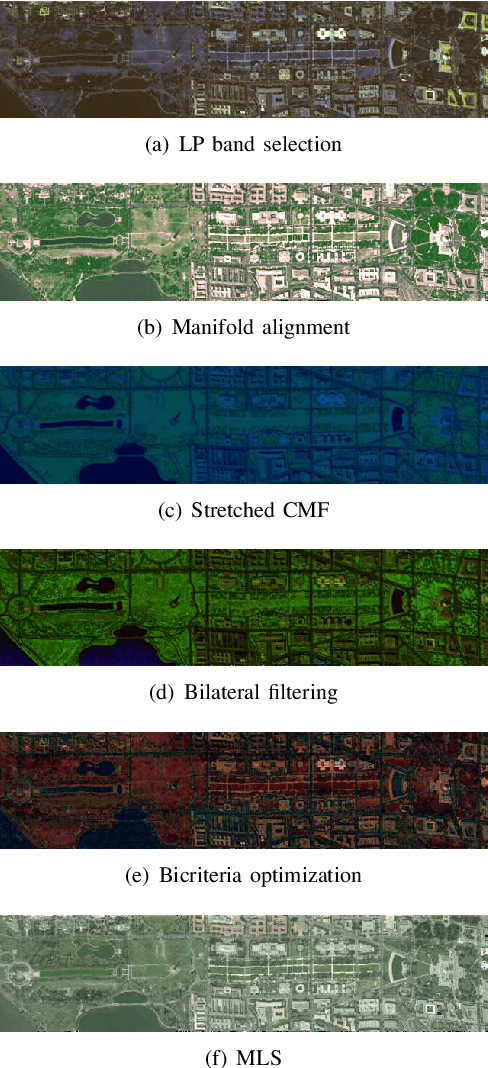

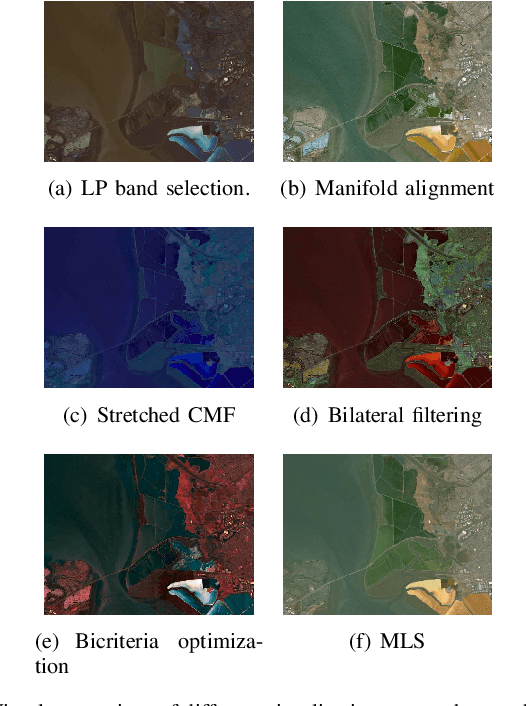

Visualization of Hyperspectral Images Using Moving Least Squares

Jan 20, 2018

Abstract: Displaying the large number of bands in a hyperspectral image (HSI) on a trichromatic monitor has been an active research topic. The visualized image should convey as much information as possible from the original data and facilitate image interpretation. Most existing methods display HSIs in false colors, which contradict human experience and expectation. In this paper, we propose a nonlinear approach to visualize an input HSI with natural colors by taking advantage of a corresponding RGB image. Our approach is based on Moving Least Squares (MLS), an interpolation scheme for reconstructing a surface from a set of control points, which in our case is a set of matching pixels between the HSI and the corresponding RGB image. Based on MLS, the proposed method solves for a unique transformation for each spectral signature so that the nonlinear structure of the HSI can be preserved. The matching pixels between a pair of HSI and RGB images can be reused to display other HSIs captured by the same imaging sensor with natural colors. Experiments show that the output image of the proposed method not only has natural colors but also maintains the visual information necessary for human analysis.
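The idea of fitting a separate, locally weighted mapping for every spectral signature can be sketched in its simplest form. The code below is a deliberate simplification rather than the paper's method: it uses a constant (degree-zero) basis, for which the weighted least-squares solution reduces to a weighted average of the control colors. The Gaussian weight, the bandwidth `sigma`, and the name `mls_color` are all assumptions.

```python
import math


def mls_color(spectrum, control_spectra, control_rgb, sigma=0.1):
    """Map one spectrum to RGB with degree-zero moving least squares.

    Each control point is a matching (spectrum, RGB) pair. Control points
    close to the query in spectral space receive larger weights, so every
    query spectrum gets its own locally fitted color.
    """
    d2 = [sum((s - t) ** 2 for s, t in zip(spectrum, c)) for c in control_spectra]
    # Subtracting the minimum distance avoids underflow of every weight to zero
    # and leaves the normalized result unchanged.
    d2_min = min(d2)
    weights = [math.exp(-(d - d2_min) / (2 * sigma ** 2)) for d in d2]
    total = sum(weights)
    return tuple(
        sum(w * rgb[ch] for w, rgb in zip(weights, control_rgb)) / total
        for ch in range(3)
    )


if __name__ == "__main__":
    spectra = [[0.0, 0.0], [1.0, 1.0]]             # made-up two-band spectra
    colors = [(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)]    # their matching RGB values
    print(mls_color([0.5, 0.5], spectra, colors))
```

A fuller version would fit an affine transformation per query by solving a weighted least-squares system, which is closer to the per-signature transformation described above.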

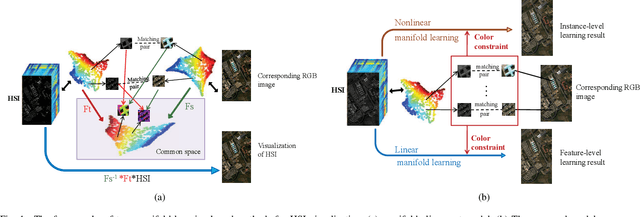

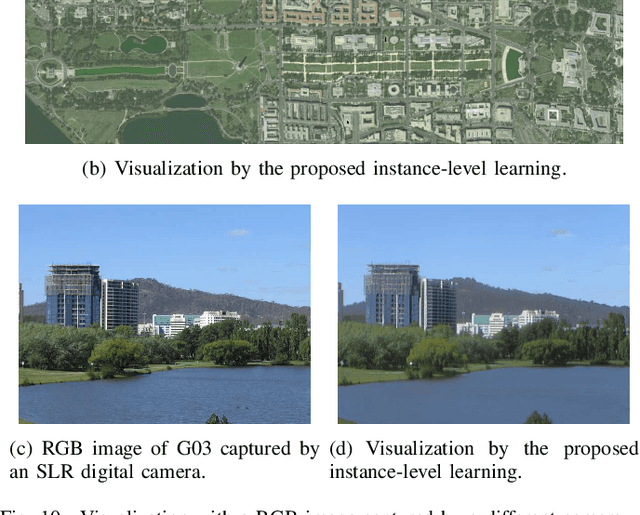

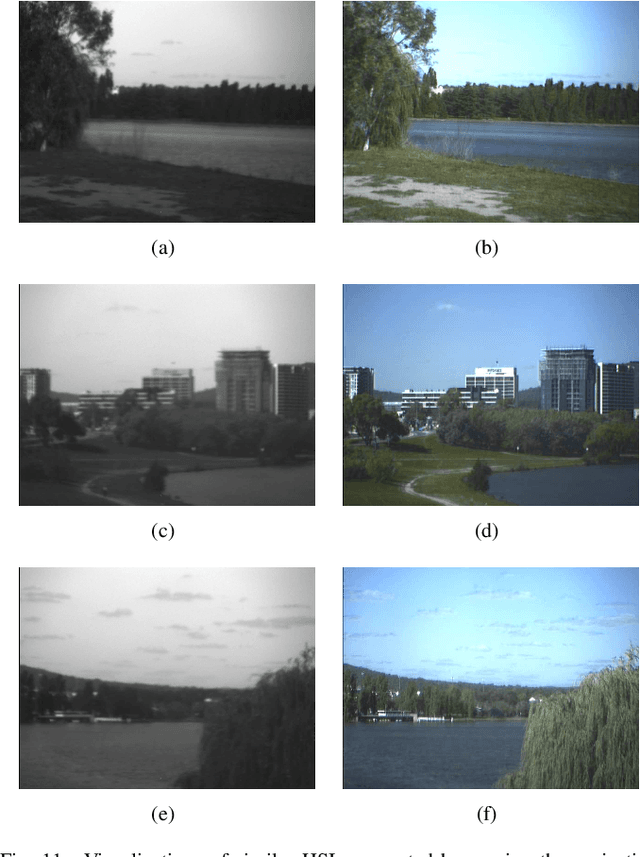

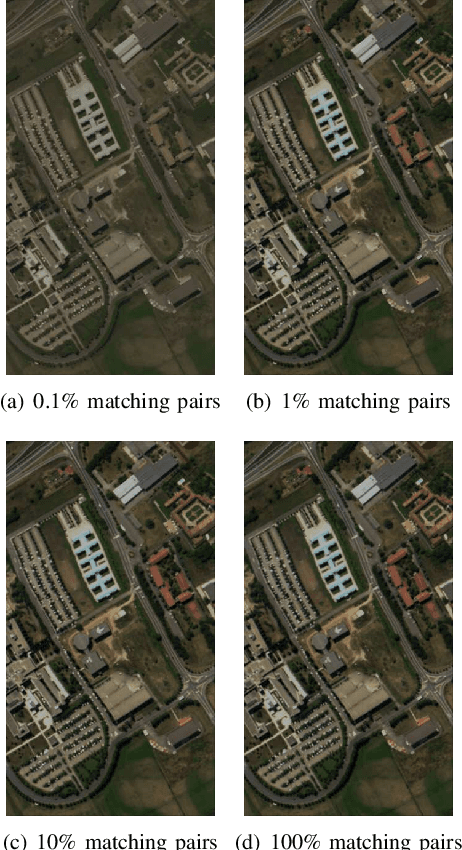

Constrained Manifold Learning for Hyperspectral Imagery Visualization

Nov 24, 2017

Abstract: Displaying the large number of bands in a hyperspectral image (HSI) on a trichromatic monitor is important for HSI processing and analysis systems. The visualized image should convey as much information as possible from the original HSI and at the same time facilitate image interpretation. However, most existing methods display HSIs in false color, which contradicts user experience and expectation. In this paper, we propose a visualization approach based on constrained manifold learning, whose goal is to learn a visualized image that not only preserves the manifold structure of the HSI but also has natural colors. Manifold learning preserves the image structure by forcing pixels with similar signatures to be displayed with similar colors. A composite kernel is applied in manifold learning to incorporate both the spatial and spectral information of the HSI in the embedded space. The colors of the output image are constrained by a corresponding natural-looking RGB image, which can either be generated from the HSI itself (e.g., by band selection from the visible wavelengths) or be captured by a separate device. Our method can be applied at the instance level and at the feature level. Instance-level learning directly obtains the RGB coordinates for the pixels in the HSI, while feature-level learning learns an explicit mapping function from the high-dimensional spectral space to the RGB space. Experimental results demonstrate the advantage of the proposed method in information preservation and natural color visualization.
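One common way to build a composite kernel is a weighted sum of a spectral kernel and a spatial kernel. The abstract does not state which form is used, so the sketch below is an assumption throughout: the weighted-sum form, the Gaussian kernels, the use of pixel coordinates as the spatial feature, and the parameters `mu`, `sigma_spec` and `sigma_spat` are all hypothetical.

```python
import math


def rbf(u, v, sigma):
    """Gaussian (RBF) similarity between two equal-length vectors."""
    d2 = sum((a - b) ** 2 for a, b in zip(u, v))
    return math.exp(-d2 / (2 * sigma ** 2))


def composite_kernel(px_a, px_b, mu=0.5, sigma_spec=1.0, sigma_spat=5.0):
    """Weighted sum of spectral and spatial similarity.

    Each pixel is a (spectrum, (row, col)) pair; mu balances the two terms.
    """
    spec_a, pos_a = px_a
    spec_b, pos_b = px_b
    k_spec = rbf(spec_a, spec_b, sigma_spec)
    k_spat = rbf(pos_a, pos_b, sigma_spat)
    return mu * k_spec + (1.0 - mu) * k_spat


if __name__ == "__main__":
    p = ([0.2, 0.4, 0.6], (10, 10))   # made-up pixels
    q = ([0.2, 0.5, 0.6], (10, 12))
    print(composite_kernel(p, q))
```

Pixels that are both spectrally similar and spatially close receive the highest similarity, which is what pulls them toward similar colors in the embedding.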

On the Sampling Strategy for Evaluation of Spectral-spatial Methods in Hyperspectral Image Classification

May 19, 2016

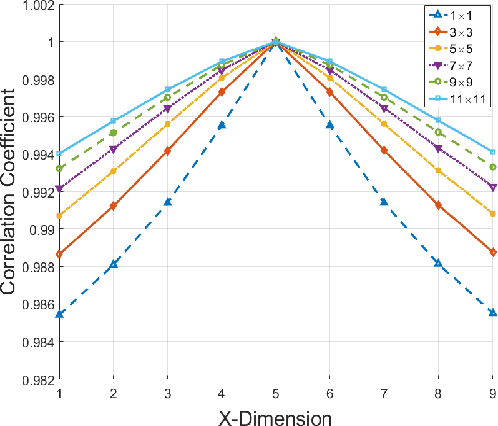

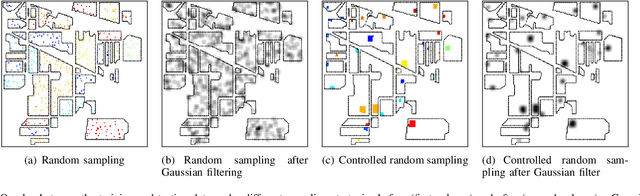

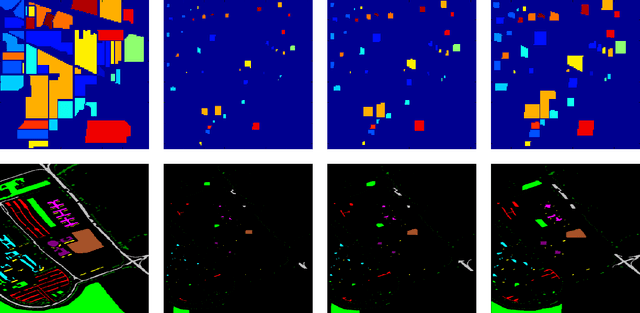

Abstract: Spectral-spatial processing has been increasingly explored in remote sensing hyperspectral image classification. While extensive studies have focused on developing methods to improve classification accuracy, the experimental setting and design for method evaluation have drawn little attention. In the scope of supervised classification, we find that traditional experimental designs for spectral processing are often improperly used in the spectral-spatial processing context, leading to unfair or biased performance evaluation. This is especially the case when training and testing samples are randomly drawn from the same image - a practice that has been commonly adopted in experiments. Under such a setting, the dependence caused by overlap between the training and testing samples may be artificially enhanced by some spatial information processing methods such as spatial filtering and morphological operations. Such interaction between training and testing sets violates the data independence assumption on which supervised learning theory and performance evaluation rely. Therefore, the widely adopted pixel-based random sampling strategy is not always suitable for evaluating spectral-spatial classification algorithms, because it is difficult to determine whether an improvement in classification accuracy is caused by incorporating spatial information into the classifier or by increasing the overlap between training and testing samples. To partially solve this problem, we propose a novel controlled random sampling strategy for spectral-spatial methods. It can greatly reduce the overlap between training and testing samples and provides a more objective and accurate evaluation.
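The abstract does not describe how the controlled sampling works, so the sketch below shows only one plausible way to limit train/test overlap: grow each class's training set as a spatially connected region from a random seed pixel, instead of scattering training pixels across the image. The function name `region_growing_split` and its parameters are hypothetical.

```python
import random
from collections import deque


def region_growing_split(labels, n_train_per_class, seed=0):
    """Split a labeled image into spatially clustered train and test pixels.

    labels is a 2D list of class ids. Returns (train, test) as sets of
    (row, col) coordinates.
    """
    rng = random.Random(seed)
    rows, cols = len(labels), len(labels[0])
    by_class = {}
    for r in range(rows):
        for c in range(cols):
            by_class.setdefault(labels[r][c], []).append((r, c))

    train = set()
    for cls in sorted(by_class):
        pixels = by_class[cls]
        quota = min(n_train_per_class, len(pixels))
        picked = set()
        while len(picked) < quota:
            # Start (or restart, if a region was exhausted) from a random seed.
            start = rng.choice([p for p in pixels if p not in picked])
            picked.add(start)
            queue = deque([start])
            while queue and len(picked) < quota:
                r, c = queue.popleft()
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                    inside = 0 <= nr < rows and 0 <= nc < cols
                    if (inside and labels[nr][nc] == cls
                            and (nr, nc) not in picked and len(picked) < quota):
                        picked.add((nr, nc))
                        queue.append((nr, nc))
        train |= picked

    everything = {(r, c) for r in range(rows) for c in range(cols)}
    return train, everything - train


if __name__ == "__main__":
    image = [[0, 0, 1, 1] for _ in range(4)]   # made-up two-class label map
    train, test = region_growing_split(image, n_train_per_class=3)
    print(sorted(train))
```

Because training pixels form compact regions, far fewer test pixels have neighborhoods that contain training pixels than under pixel-wise random sampling.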
