Xinzhu Bei

Efficient Sequential Recommendation for Long Term User Interest Via Personalization

Jan 07, 2026

Abstract: Recent years have witnessed the success of sequential modeling, generative recommenders, and large language models for recommendation. Although scaling laws have been validated for sequential models, these models are computationally inefficient in real-world applications such as recommendation, because the cost of the transformer grows non-linearly (quadratically) with sequence length. To improve the efficiency of sequential models, we introduce a novel approach to sequential recommendation that leverages personalization techniques to enhance both efficiency and performance. Our method compresses long user interaction histories into learnable tokens, which are then combined with recent interactions to generate recommendations. This approach significantly reduces computational cost while maintaining high recommendation accuracy. Our method can be applied to existing transformer-based recommendation models, e.g., HSTU and HLLM. Extensive experiments on multiple sequential models demonstrate its versatility and effectiveness. Source code is available at https://github.com/facebookresearch/PerSRec.
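To give a rough sense of why replacing a long history with a few tokens helps, the sketch below counts query-key pairs in one self-attention pass, which grows quadratically with sequence length. It is only back-of-the-envelope arithmetic, not the paper's implementation: the function names, the idea of compressing fixed-size segments, and all numbers are hypothetical assumptions chosen for illustration, and the cost of producing the compressed tokens is ignored.

```python
def attention_pairs(seq_len: int) -> int:
    """Query-key pairs scored by one full self-attention pass over seq_len tokens."""
    return seq_len * seq_len


def compressed_length(history_len: int, recent_len: int,
                      segment_len: int, tokens_per_segment: int) -> int:
    """Sequence length after older history is replaced by a few tokens per segment.

    Assumption (not from the paper): the history older than the most recent
    `recent_len` interactions is split into segments of `segment_len`, and
    each segment is summarized by `tokens_per_segment` learnable tokens.
    """
    kept_recent = min(recent_len, history_len)
    older = history_len - kept_recent
    num_segments = -(-older // segment_len)  # ceiling division
    return num_segments * tokens_per_segment + kept_recent


# Hypothetical sizes, chosen only to illustrate the quadratic effect.
full_len = 8192
short_len = compressed_length(history_len=full_len, recent_len=512,
                              segment_len=1024, tokens_per_segment=8)

full_cost = attention_pairs(full_len)
short_cost = attention_pairs(short_len)
print(f"sequence length: {full_len} -> {short_len}")
print(f"attention pairs: {full_cost} -> {short_cost} "
      f"(about {full_cost / short_cost:.0f}x fewer)")
```

Under these assumed sizes the final pass attends over 576 tokens instead of 8192, so the pair count drops by roughly two orders of magnitude; actual savings depend on how the compressed tokens are computed and on the model's other costs.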

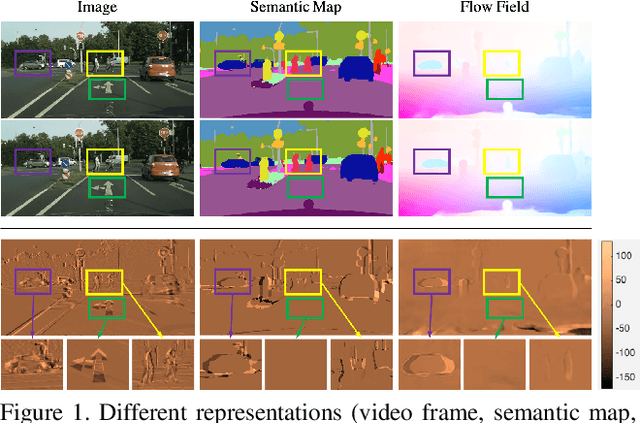

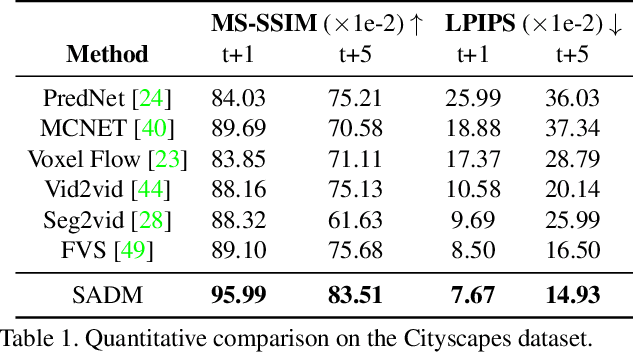

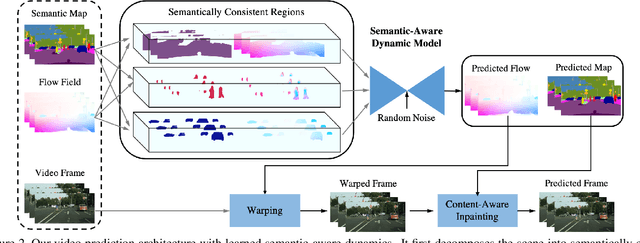

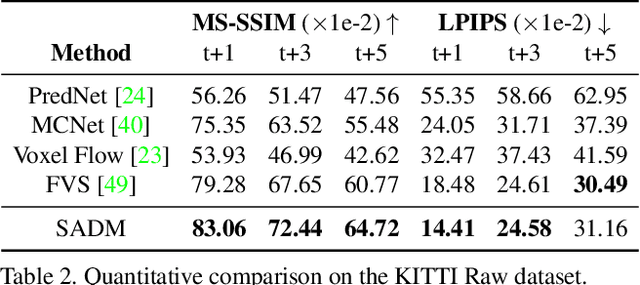

Learning Semantic-Aware Dynamics for Video Prediction

Apr 20, 2021

Abstract: We propose an architecture and training scheme to predict video frames by explicitly modeling dis-occlusions and capturing the evolution of semantically consistent regions in the video. The scene layout (semantic map) and motion (optical flow) are decomposed into layers, which are predicted and fused with their context to generate future layouts and motions. The appearance of the scene is warped from past frames using the predicted motion in co-visible regions; dis-occluded regions are synthesized with content-aware inpainting utilizing the predicted scene layout. The result is a predictive model that explicitly represents objects and learns their class-specific motion, which we evaluate on video prediction benchmarks.