Xilong Wang

Model-Free Co-Optimization of Manufacturable Sensor Layouts and Deformation Proprioception

Mar 09, 2026

Abstract: Flexible sensors are increasingly employed in soft robotics and wearable devices to provide proprioception of freeform deformations. Although supervised learning can train shape predictors from sensor signals, prediction accuracy strongly depends on sensor layout, which is typically determined heuristically or through trial-and-error. This work introduces a model-free, data-driven computational pipeline that jointly optimizes the number, length, and placement of flexible length-measurement sensors together with the parameters of a shape prediction network for large freeform deformations. Unlike model-based approaches, the proposed method relies solely on datasets of deformed shapes, without requiring physical simulation models, and is therefore broadly applicable to diverse robotic sensing tasks. The pipeline incorporates differentiable loss functions that account for both prediction accuracy and manufacturability constraints. By co-optimizing sensor layouts and network parameters, the method significantly improves deformation prediction accuracy over unoptimized layouts while ensuring practical feasibility. The effectiveness and generality of the approach are validated through numerical and physical experiments on multiple soft robotic and wearable systems.

WebSentinel: Detecting and Localizing Prompt Injection Attacks for Web Agents

Feb 03, 2026

Abstract: Prompt injection attacks manipulate webpage content to cause web agents to execute attacker-specified tasks instead of the user's intended ones. Existing methods for detecting and localizing such attacks achieve limited effectiveness, as their underlying assumptions often do not hold in the web-agent setting. In this work, we propose WebSentinel, a two-step approach for detecting and localizing prompt injection attacks in webpages. Given a webpage, Step I extracts segments of interest that may be contaminated, and Step II evaluates each segment by checking its consistency with the webpage content as context. We show that WebSentinel is highly effective, substantially outperforming baseline methods across multiple datasets of both contaminated and clean webpages that we collected. Our code is available at: https://github.com/wxl-lxw/WebSentinel.

WAInjectBench: Benchmarking Prompt Injection Detections for Web Agents

Oct 01, 2025

Abstract: Multiple prompt injection attacks have been proposed against web agents. At the same time, various methods have been developed to detect general prompt injection attacks, but none have been systematically evaluated for web agents. In this work, we bridge this gap by presenting the first comprehensive benchmark study on detecting prompt injection attacks targeting web agents. We begin by introducing a fine-grained categorization of such attacks based on the threat model. We then construct datasets containing both malicious and benign samples: malicious text segments generated by different attacks, benign text segments from four categories, malicious images produced by attacks, and benign images from two categories. Next, we systematize both text-based and image-based detection methods. Finally, we evaluate their performance across multiple scenarios. Our key findings show that while some detectors can identify attacks that rely on explicit textual instructions or visible image perturbations with moderate to high accuracy, they largely fail against attacks that omit explicit instructions or employ imperceptible perturbations. Our datasets and code are released at: https://github.com/Norrrrrrr-lyn/WAInjectBench.

EnvInjection: Environmental Prompt Injection Attack to Multi-modal Web Agents

May 16, 2025

Abstract: Multi-modal large language model (MLLM)-based web agents interact with webpage environments by generating actions based on screenshots of the webpages. Environmental prompt injection attacks manipulate the environment to induce the web agent to perform a specific, attacker-chosen action, referred to as the target action. However, existing attacks suffer from limited effectiveness or stealthiness, or are impractical in real-world settings. In this work, we propose EnvInjection, a new attack that addresses these limitations. Our attack adds a perturbation to the raw pixel values of the rendered webpage, which can be implemented by modifying the webpage's source code. After these perturbed pixels are mapped into a screenshot, the perturbation induces the web agent to perform the target action. We formulate the task of finding the perturbation as an optimization problem. A key challenge in solving this problem is that the mapping between raw pixel values and the screenshot is non-differentiable, making it difficult to backpropagate gradients to the perturbation. To overcome this, we train a neural network to approximate the mapping and apply projected gradient descent to solve the reformulated optimization problem. Extensive evaluation on multiple webpage datasets shows that EnvInjection is highly effective and significantly outperforms existing baselines.
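
As a rough illustration of the projected gradient descent step mentioned in the EnvInjection abstract, the sketch below runs PGD on a toy problem. It is not the authors' implementation: the quadratic `surrogate_loss_grad` merely stands in for the gradient obtained through a trained neural approximation of the pixel-to-screenshot mapping, and the L-infinity budget `eps`, the step size, and all function names are assumptions made for this example.

```python
# Toy projected gradient descent (PGD) under an assumed L-infinity budget.
# The quadratic loss is a hypothetical stand-in for a differentiable surrogate.

def sign(x):
    """Return -1, 0, or 1 depending on the sign of x."""
    return (x > 0) - (x < 0)

def surrogate_loss_grad(pixels, target):
    """Gradient of sum((p - t)^2) with respect to each pixel."""
    return [2.0 * (p - t) for p, t in zip(pixels, target)]

def project(delta, clean, eps):
    """Clip delta into [-eps, eps] and keep clean + delta inside [0, 1]."""
    projected = []
    for d, c in zip(delta, clean):
        d = max(-eps, min(eps, d))   # perturbation budget
        d = max(-c, min(1.0 - c, d)) # valid pixel range
        projected.append(d)
    return projected

def pgd(clean, target, eps=0.1, step=0.02, iters=50):
    """Minimize the surrogate loss over the perturbation delta."""
    delta = [0.0] * len(clean)
    for _ in range(iters):
        perturbed = [c + d for c, d in zip(clean, delta)]
        grad = surrogate_loss_grad(perturbed, target)
        # Signed gradient step in the loss-decreasing direction.
        delta = [d - step * sign(g) for d, g in zip(delta, grad)]
        # Projection back onto the feasible set.
        delta = project(delta, clean, eps)
    return delta

if __name__ == "__main__":
    print(pgd([0.5, 0.5, 0.5], [1.0, 0.5, 0.0]))
```

The projection after every step is what distinguishes PGD from plain gradient descent: the perturbation can move toward the target only as far as the budget allows.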

Provably Robust Federated Reinforcement Learning

Feb 12, 2025

Abstract: Federated reinforcement learning (FRL) allows agents to jointly learn a global decision-making policy under the guidance of a central server. While FRL has advantages, its decentralized design makes it prone to poisoning attacks. To mitigate this, Byzantine-robust aggregation techniques tailored for FRL have been introduced. Yet, in our work, we reveal that these current Byzantine-robust techniques are not immune to our newly introduced Normalized attack. Distinct from previous attacks that aimed to enlarge the distance between policy updates before and after an attack, our Normalized attack focuses on maximizing the angle of deviation between these updates. To counter these threats, we develop an ensemble FRL approach that is provably secure against both known and our newly proposed attacks. Our ensemble method involves training multiple global policies, where each is learnt by a group of agents using any foundational aggregation rule. These well-trained global policies then individually predict the action for a specific test state. The ultimate action is chosen based on a majority vote for discrete action systems or the geometric median for continuous ones. Our experimental results across different settings show that the Normalized attack can greatly disrupt non-ensemble Byzantine-robust methods, and our ensemble approach offers substantial resistance against poisoning attacks.
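
The final aggregation step described in this abstract (majority vote for discrete actions, geometric median for continuous ones) can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's code: the function names are hypothetical, and the geometric median is approximated with a smoothed Weiszfeld iteration, which is one common choice that the abstract does not specify.

```python
import math
from collections import Counter

def majority_vote(actions):
    """Most common discrete action; ties go to the action seen first."""
    return Counter(actions).most_common(1)[0][0]

def geometric_median(points, iters=200, tol=1e-9, eps=1e-12):
    """Approximate the point minimizing the summed Euclidean distance
    to all inputs, using a smoothed Weiszfeld iteration."""
    dim = len(points[0])
    # Start from the coordinate-wise mean.
    est = [sum(p[i] for p in points) / len(points) for i in range(dim)]
    for _ in range(iters):
        num = [0.0] * dim
        den = 0.0
        for p in points:
            # Clamp the distance so a coincident point cannot divide by zero.
            w = 1.0 / max(math.dist(p, est), eps)
            den += w
            for i in range(dim):
                num[i] += w * p[i]
        new = [n / den for n in num]
        if math.dist(new, est) < tol:
            return new
        est = new
    return est

def ensemble_action(predictions, discrete):
    """Combine one prediction per global policy into a single action."""
    if discrete:
        return majority_vote(predictions)
    return geometric_median(predictions)

if __name__ == "__main__":
    print(ensemble_action(["left", "right", "left"], discrete=True))
    print(ensemble_action([(0, 0), (0, 0), (0, 0), (10, 10)], discrete=False))
```

In the second call, one outlying prediction barely moves the result away from the value the other three policies agree on, which is the intuition behind using a geometric median rather than a mean.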

StringLLM: Understanding the String Processing Capability of Large Language Models

Oct 02, 2024

Abstract: String processing, which mainly involves the analysis and manipulation of strings, is a fundamental component of modern computing. Despite the significant advancements of large language models (LLMs) in various natural language processing (NLP) tasks, their capability in string processing remains underexplored and underdeveloped. To bridge this gap, we present a comprehensive study of LLMs' string processing capability. In particular, we first propose StringLLM, a method to construct datasets for benchmarking string processing capability of LLMs. We use StringLLM to build a series of datasets, referred to as StringBench. It encompasses a wide range of string processing tasks, allowing us to systematically evaluate LLMs' performance in this area. Our evaluations indicate that LLMs struggle with accurately processing strings compared to humans. To uncover the underlying reasons for this limitation, we conduct an in-depth analysis and subsequently propose an effective approach that significantly enhances LLMs' string processing capability via fine-tuning. This work provides a foundation for future research to understand LLMs' string processing capability. Our code and data are available at https://github.com/wxl-lxw/StringLLM.

Exploring the Benefits of Differentially Private Pre-training and Parameter-Efficient Fine-tuning for Table Transformers

Sep 12, 2023

Abstract: For machine learning with tabular data, Table Transformer (TabTransformer) is a state-of-the-art neural network model, while Differential Privacy (DP) is an essential component to ensure data privacy. In this paper, we explore the benefits of combining the two in the scenario of transfer learning: differentially private pre-training and fine-tuning of TabTransformers with a variety of parameter-efficient fine-tuning (PEFT) methods, including Adapter, LoRA, and Prompt Tuning. Our extensive experiments on the ACSIncome dataset show that these PEFT methods outperform traditional approaches in terms of the accuracy of the downstream task and the number of trainable parameters, thus achieving an improved trade-off among parameter efficiency, privacy, and accuracy. Our code is available at github.com/IBM/DP-TabTransformer.

OpenPneu: Compact platform for pneumatic actuation with multi-channels

Sep 22, 2022

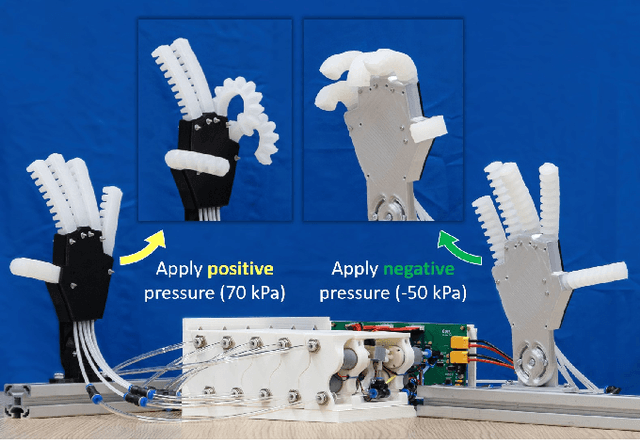

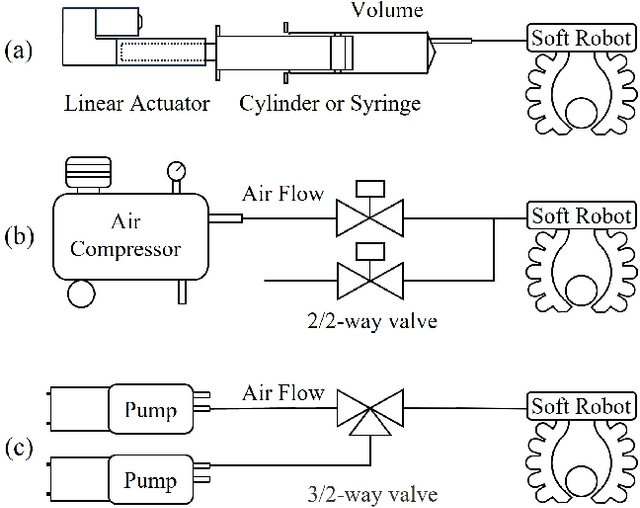

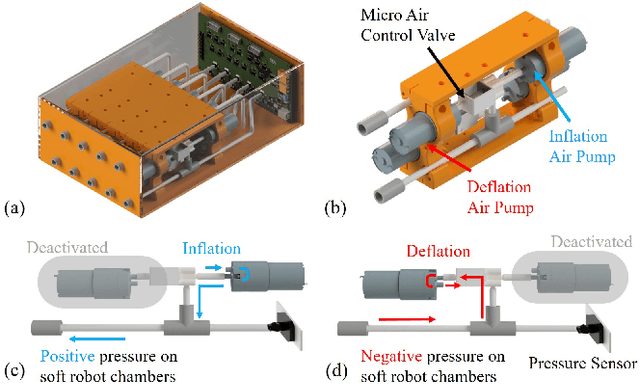

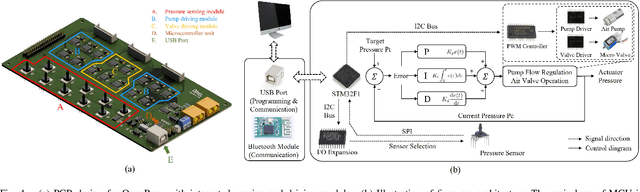

Abstract: This paper presents OpenPneu, a compact system that supports pneumatic actuation of multi-chamber soft robots. Micro-pumps are employed in the system to generate airflow, so no external compressed-air input is needed. The system adopts a modular design for good scalability, which has been demonstrated on a prototype with ten air channels. Each air channel of OpenPneu provides both inflation and deflation, supplying a full pressure range from positive to negative with a maximal flow rate of 1.7 L/min. High-precision closed-loop pressure control is built into the system to achieve stable and efficient dynamic performance in actuation. An open-source control interface and a Python API are provided. We also demonstrate the functionality of OpenPneu on three soft robotic systems with up to 10 chambers.
