Utsav Patel

GrASPE: Graph based Multimodal Fusion for Robot Navigation in Unstructured Outdoor Environments

Sep 13, 2022

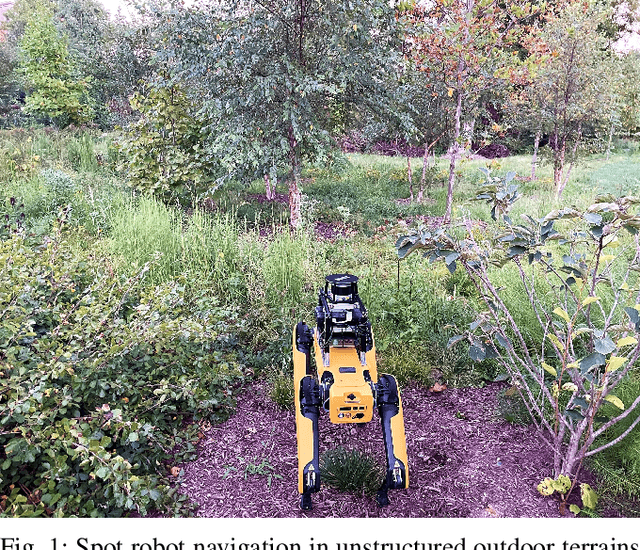

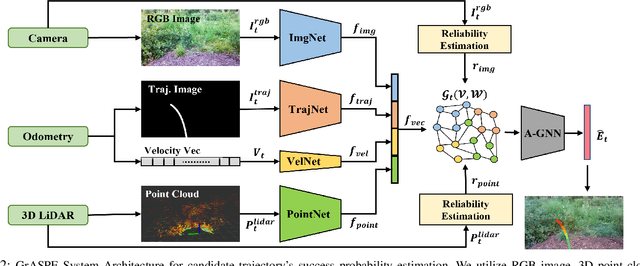

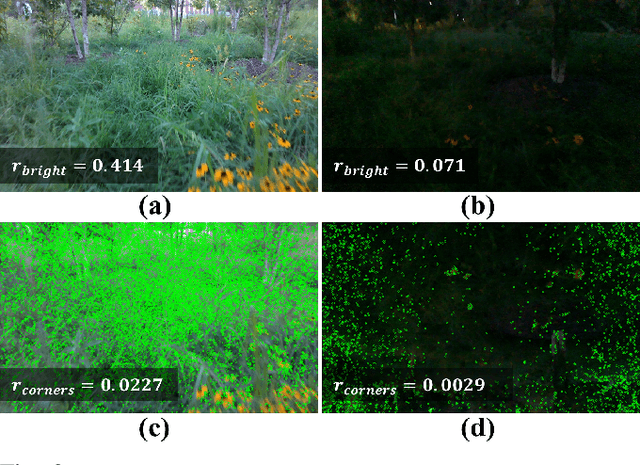

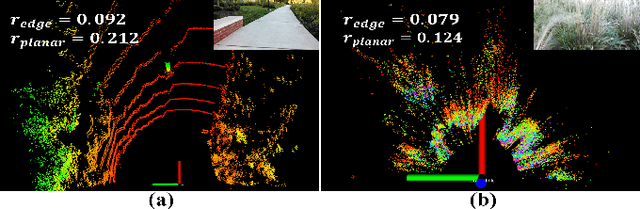

Abstract: We present a novel trajectory traversability estimation and planning algorithm for robot navigation in complex outdoor environments. We incorporate multimodal sensory inputs from an RGB camera, a 3D LiDAR, and the robot's odometry sensor to train a prediction model that estimates candidate trajectories' success probabilities from partially reliable multi-modal sensor observations. We encode the high-dimensional multi-modal sensory inputs into low-dimensional feature vectors using encoder networks and represent them as a connected graph, which we use to train an attention-based Graph Neural Network (GNN) model to predict trajectory success probabilities. We further analyze the image and point cloud data separately to quantify sensor reliability, and use this to augment the weights of the feature graph representation used in our GNN. At runtime, our model uses multi-sensor inputs to predict the success probabilities of the trajectories generated by a local planner in order to avoid potential collisions and failures. Our algorithm demonstrates robust predictions when one or more sensor modalities are unreliable or unavailable in complex outdoor environments. We evaluate our algorithm's navigation performance using a Spot robot in real-world outdoor environments.

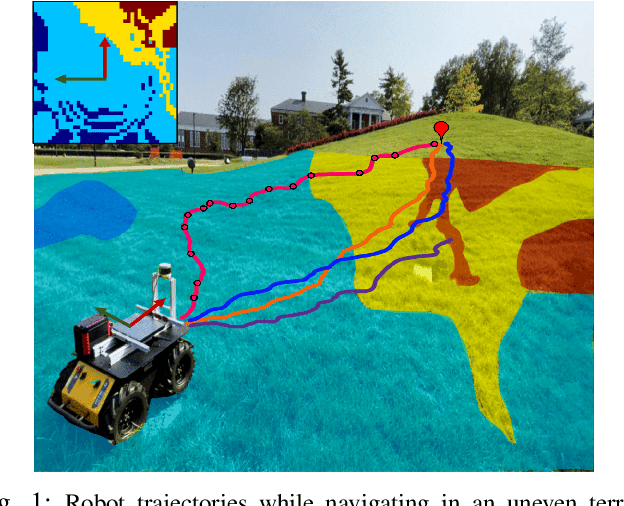

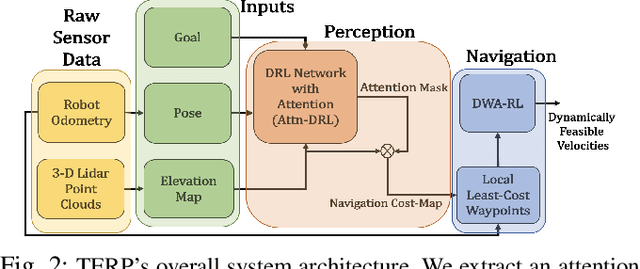

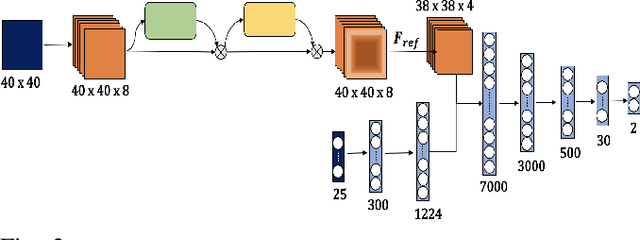

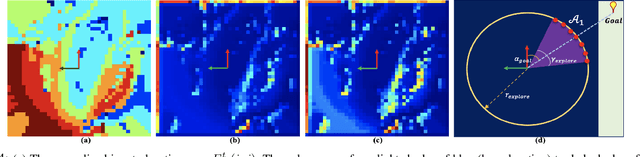

TERP: Reliable Planning in Uneven Outdoor Environments using Deep Reinforcement Learning

Sep 23, 2021

Abstract: We present a novel method for reliable robot navigation in uneven outdoor terrains. Our approach employs a novel fully trained Deep Reinforcement Learning (DRL) network that uses elevation maps of the environment, the robot pose, and the goal as inputs to compute an attention mask of the environment. The attention mask is used to identify reduced-stability regions in the elevation map and is computed using channel and spatial attention modules and a novel reward function. We continuously compute and update a navigation cost-map that encodes the elevation information or the level-of-flatness of the terrain using the attention mask. We then generate locally least-cost waypoints on the cost-map and compute the final dynamically feasible trajectory using another DRL-based method. Our approach guarantees safe, locally least-cost paths and dynamically feasible robot velocities in uneven terrains. We observe an increase of 35.18% in success rate and a decrease of 26.14% in the cumulative elevation gradient of the robot's trajectory compared to prior navigation methods in high-elevation regions. We evaluate our method on a Husky robot in real-world uneven terrains (~4 m of elevation gain) and demonstrate its benefits.
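The waypoint step described above can be illustrated with a minimal sketch. It assumes the cost-map is a plain 2-D grid of non-negative costs and that a "locally least-cost waypoint" is the cheapest cell inside a square window around the robot that is also closer to the goal than the robot is. The function name `least_cost_waypoint`, the `radius` parameter, and the tie-breaking rule are hypothetical choices made for illustration; the abstract does not specify how TERP selects waypoints.

```python
from math import hypot


def least_cost_waypoint(costmap, robot, goal, radius=2):
    """Pick the cheapest cell near the robot that makes progress to the goal.

    costmap: list of rows of non-negative costs (hypothetical layout).
    robot, goal: (row, col) grid cells.
    radius: half-width of the square search window, in cells (assumed).
    Returns a (row, col) cell, or None if no cell is closer to the goal.
    """
    rows, cols = len(costmap), len(costmap[0])
    r0, c0 = robot
    robot_dist = hypot(goal[0] - r0, goal[1] - c0)

    best_key, best_cell = None, None
    for r in range(max(0, r0 - radius), min(rows, r0 + radius + 1)):
        for c in range(max(0, c0 - radius), min(cols, c0 + radius + 1)):
            dist = hypot(goal[0] - r, goal[1] - c)
            if dist >= robot_dist:
                continue  # only consider cells that make progress
            # Lowest cost wins; ties are broken by distance to the goal.
            key = (costmap[r][c], dist)
            if best_key is None or key < best_key:
                best_key, best_cell = key, (r, c)
    return best_cell


# A high-cost cell (9) sits directly between the robot and the goal.
costmap = [
    [1, 5, 1],
    [1, 9, 1],
    [1, 5, 1],
]
waypoint = least_cost_waypoint(costmap, robot=(1, 0), goal=(1, 2), radius=1)
```

With a window of one cell, the sketch steers around the high-cost center cell instead of heading straight through it. Restricting candidates to cells that reduce the distance to the goal is one simple way to avoid oscillation, and other progress criteria would work as well.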

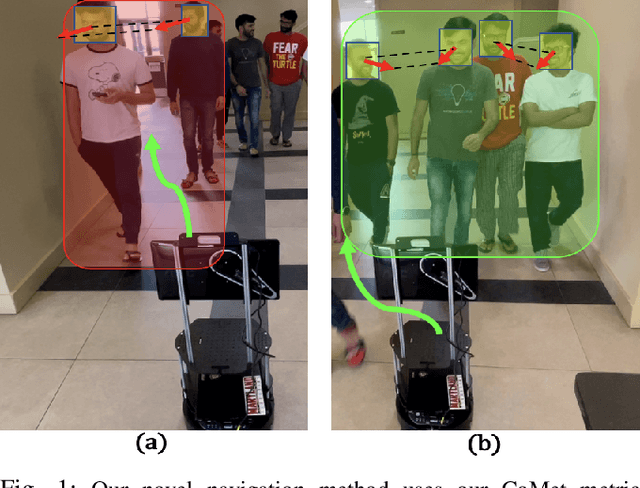

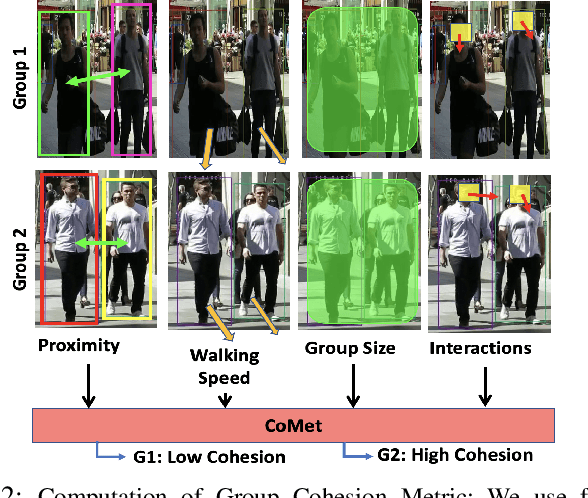

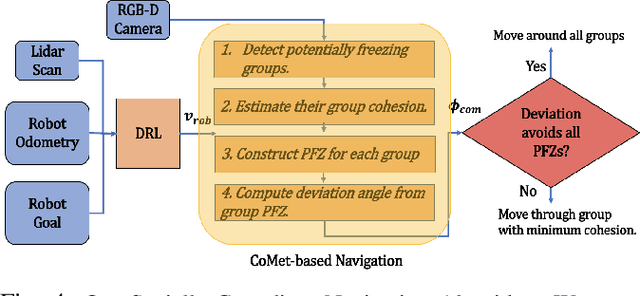

CoMet: Modeling Group Cohesion for Socially Compliant Robot Navigation in Crowded Scenes

Aug 26, 2021

Abstract: We present CoMet, a novel approach for computing a group's cohesion and using it to improve a robot's navigation in crowded scenes. Our approach uses a novel cohesion-metric that builds on prior work in social psychology. We compute this metric using various visual features of pedestrians captured by an RGB-D camera on board a robot. Specifically, we detect characteristics corresponding to the proximity between people, their relative walking speeds, the group size, and interactions between group members. We use our cohesion-metric to design and improve a navigation scheme that accounts for different levels of group cohesion while a robot moves through a crowd. We evaluate the precision and recall of our cohesion-metric based on perceptual evaluations. We highlight the performance of our social navigation algorithm on a Turtlebot robot and demonstrate its benefits in terms of multiple metrics: freezing rate (57% decrease), deviation (35.7% decrease), and path length of the trajectory (23.2% decrease).

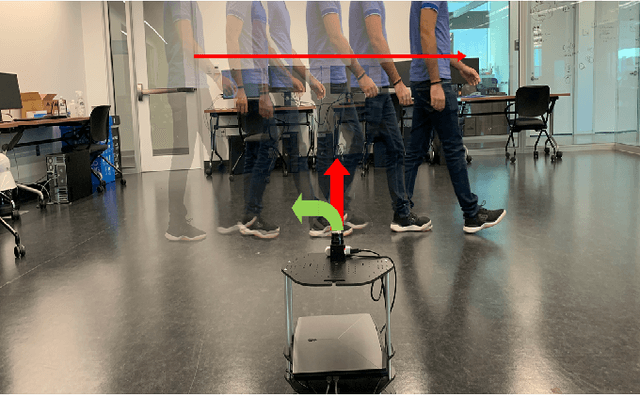

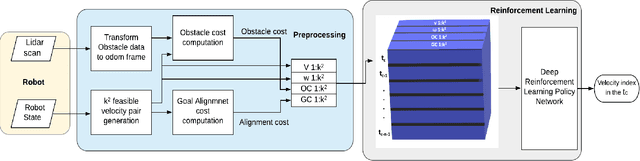

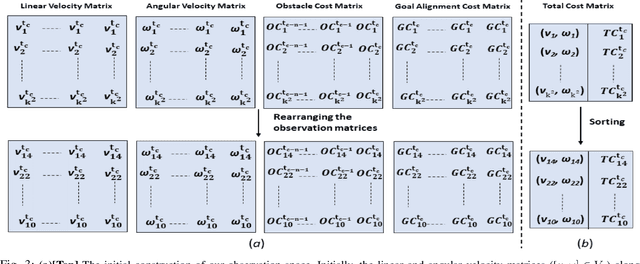

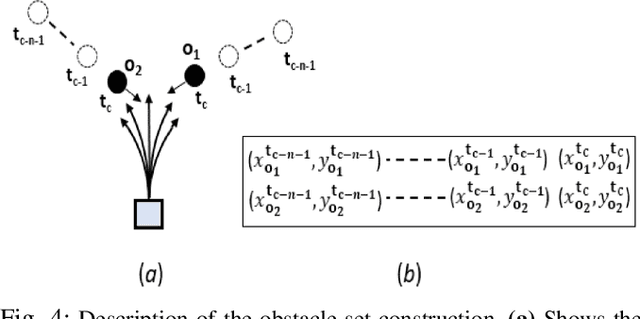

Dynamically Feasible Deep Reinforcement Learning Policy for Robot Navigation in Dense Mobile Crowds

Oct 28, 2020

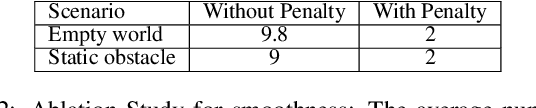

Abstract: We present a novel Deep Reinforcement Learning (DRL) based policy for mobile robot navigation in dynamic environments that computes dynamically feasible and spatially aware robot velocities. Our method addresses two primary issues associated with the Dynamic Window Approach (DWA) and DRL-based navigation policies, and solves them by using the benefits of one method to fix the issues of the other. The issues are: (1) DWA does not utilize the time evolution of the environment while choosing velocities from the dynamically feasible velocity set, leading to sub-optimal dynamic collision avoidance behaviors, and (2) DRL-based navigation policies compute velocities that often violate dynamics constraints such as the non-holonomic and acceleration constraints of the robot. Our DRL-based method generates velocities that are dynamically feasible while accounting for the motion of the obstacles in the environment. This is done by embedding the changes in the environment's state in a novel observation space and a reward function formulation that reinforces spatially aware obstacle avoidance maneuvers. We evaluate our method in realistic 3-D simulation and on a real differential drive robot in challenging indoor scenarios with crowds of varying densities. We make comparisons with traditional and current state-of-the-art collision avoidance methods and observe significant improvements in terms of collision rate, number of dynamics constraint violations, and smoothness. We also conduct ablation studies to highlight the advantages and explain the rationale behind our observation space construction, reward structure, and network architecture.
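To make the "dynamically feasible velocity set" concrete, here is a minimal sketch of the classical dynamic window for a differential drive robot: the linear and angular velocities reachable within one control step under acceleration limits. This is the standard DWA reachable set, not the learned policy from the abstract. The function names and all numeric limits are hypothetical values chosen for illustration.

```python
def dynamic_window(v, w, v_max, w_max, a_max, alpha_max, dt):
    """Return ((v_lo, v_hi), (w_lo, w_hi)) reachable within one step dt.

    v, w: current linear (m/s) and angular (rad/s) velocity.
    v_max, w_max: absolute velocity limits of the robot.
    a_max, alpha_max: linear and angular acceleration limits.
    A differential drive robot has no lateral velocity, so the
    non-holonomic constraint is captured by using only (v, w).
    """
    v_lo = max(0.0, v - a_max * dt)        # assume no reversing
    v_hi = min(v_max, v + a_max * dt)
    w_lo = max(-w_max, w - alpha_max * dt)
    w_hi = min(w_max, w + alpha_max * dt)
    return (v_lo, v_hi), (w_lo, w_hi)


def clamp_to_window(cmd_v, cmd_w, window):
    """Project a raw velocity command onto the dynamic window."""
    (v_lo, v_hi), (w_lo, w_hi) = window
    return (min(max(cmd_v, v_lo), v_hi), min(max(cmd_w, w_lo), w_hi))


# Hypothetical limits: 1 m/s, 1 rad/s, 0.5 m/s^2, 1 rad/s^2, 0.2 s step.
window = dynamic_window(0.5, 0.0, v_max=1.0, w_max=1.0,
                        a_max=0.5, alpha_max=1.0, dt=0.2)
safe_cmd = clamp_to_window(2.0, -1.0, window)
```

A command outside the window, such as the `(2.0, -1.0)` request above, would violate the acceleration limits if executed directly. Clamping is only one way to enforce feasibility; the abstract describes restricting the policy's choices through its observation space and reward instead.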

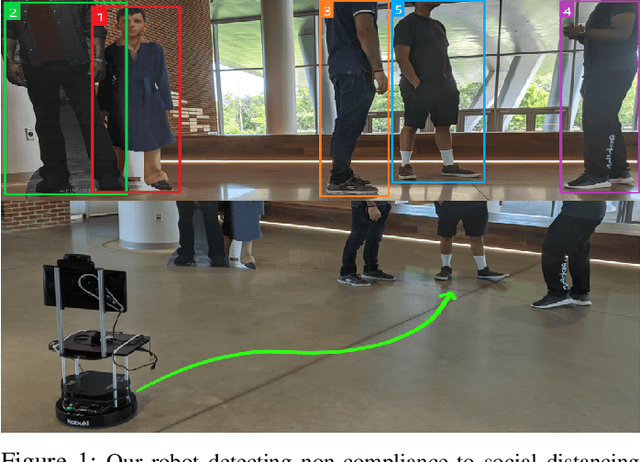

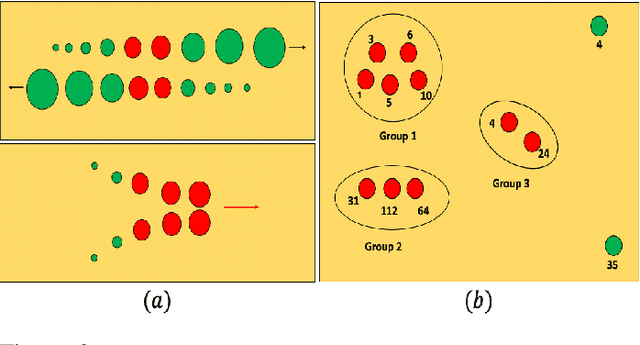

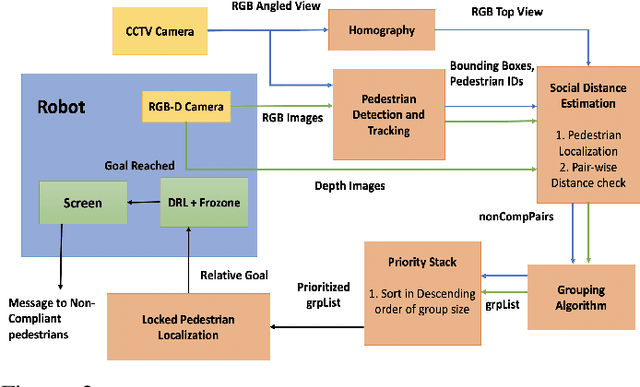

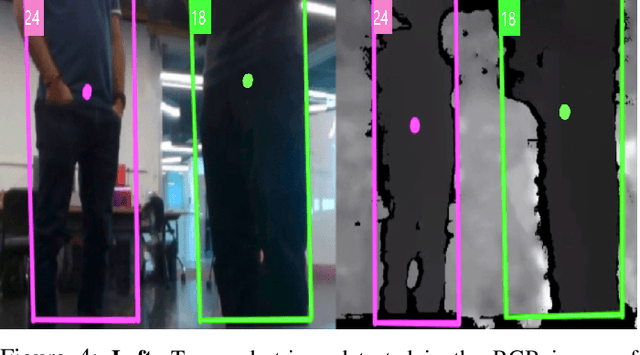

COVID-Robot: Monitoring Social Distancing Constraints in Crowded Scenarios

Aug 21, 2020

Abstract: Maintaining social distancing norms between humans has become an indispensable precaution to slow down the transmission of COVID-19. We present a novel method to automatically detect pairs of humans in a crowded scenario who are not adhering to the social distance constraint, i.e., about 6 feet of space between them. Our approach makes no assumption about the crowd density or pedestrian walking directions. We use a mobile robot with commodity sensors, namely an RGB-D camera and a 2-D lidar, to perform collision-free navigation in a crowd and estimate the distance between all detected individuals in the camera's field of view. In addition, we equip the robot with a thermal camera that wirelessly transmits thermal images to security/healthcare personnel, who monitor whether any individual exhibits a higher than normal temperature. In indoor scenarios, our mobile robot can also be combined with statically mounted CCTV cameras to further improve performance in terms of the number of social distancing breaches detected, accurately pursuing walking pedestrians, etc. We highlight the performance benefits of our approach in different static and dynamic indoor scenarios.
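The pairwise check described above can be sketched in a few lines. The sketch assumes each detected person has already been localized as a 2-D ground-plane position in meters; how those positions are obtained from the RGB-D camera is outside its scope. The function name `find_breaches` and the sample positions are hypothetical.

```python
from itertools import combinations
from math import hypot

SIX_FEET_M = 1.8288  # 6 ft expressed in meters


def find_breaches(positions, threshold=SIX_FEET_M):
    """Return (i, j, distance) for every pair closer than the threshold.

    positions: list of (x, y) ground-plane coordinates in meters
    (assumed to come from an upstream person detector).
    """
    breaches = []
    for (i, p), (j, q) in combinations(enumerate(positions), 2):
        d = hypot(p[0] - q[0], p[1] - q[1])
        if d < threshold:
            breaches.append((i, j, d))
    return breaches


# Three hypothetical pedestrians: the first two stand 1 m apart.
people = [(0.0, 0.0), (1.0, 0.0), (5.0, 5.0)]
breaches = find_breaches(people)
```

Checking every pair is quadratic in the number of detected people, which is usually acceptable for the handful of pedestrians inside a single camera's field of view.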

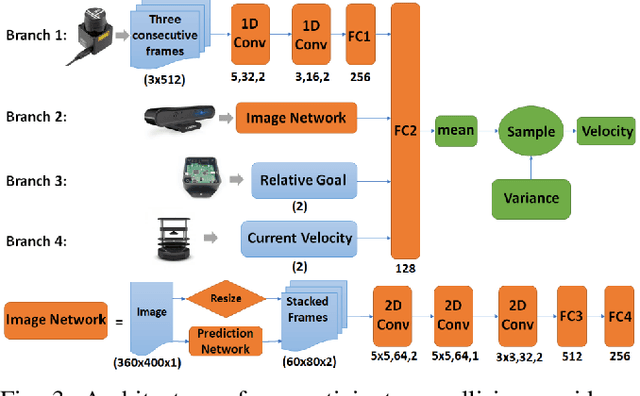

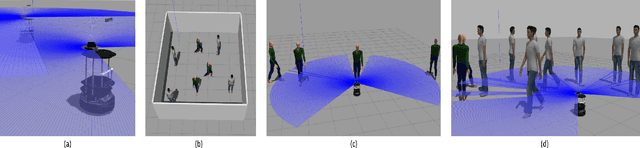

Realtime Collision Avoidance for Mobile Robots in Dense Crowds using Implicit Multi-sensor Fusion and Deep Reinforcement Learning

Apr 28, 2020

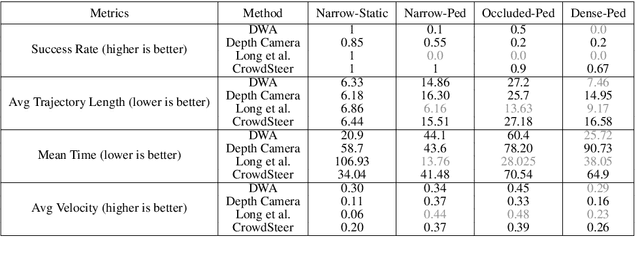

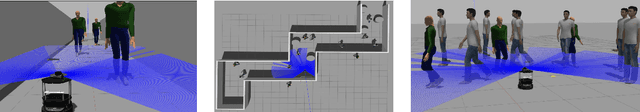

Abstract: We present a novel learning-based collision avoidance algorithm, CrowdSteer, for mobile robots operating in dense and crowded environments. Our approach is end-to-end and uses multiple perception sensors, such as a 2-D lidar along with a depth camera, to sense surrounding dynamic agents and compute collision-free velocities. Our training approach is based on the sim-to-real paradigm and uses high fidelity 3-D simulations of pedestrians and the environment to train a policy using Proximal Policy Optimization (PPO). We show that our learned navigation model is directly transferable to previously unseen virtual and dense real-world environments. We have integrated our algorithm with differential drive robots and evaluated its performance in narrow scenarios such as dense crowds, narrow corridors, T-junctions, L-junctions, etc. In practice, our approach can perform real-time collision avoidance and generate smooth trajectories in such complex scenarios. We also compare the performance with prior methods based on metrics such as trajectory length, mean time to goal, success rate, and smoothness, and observe considerable improvement.

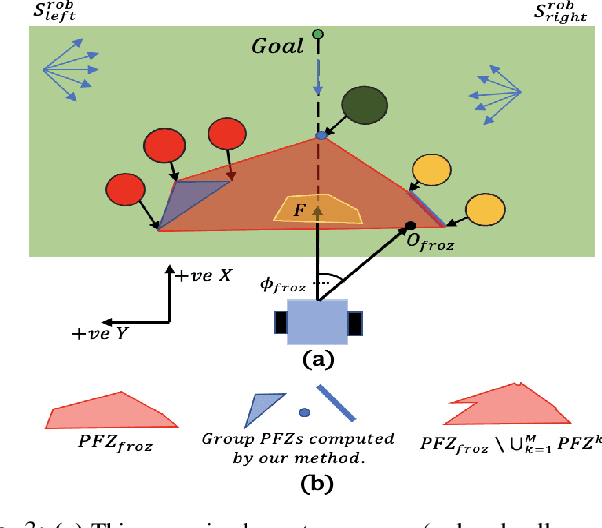

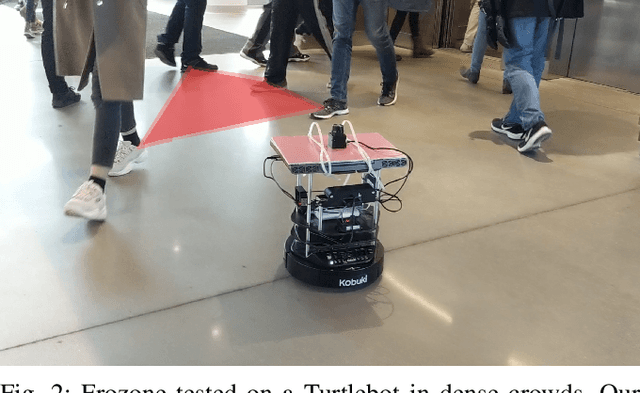

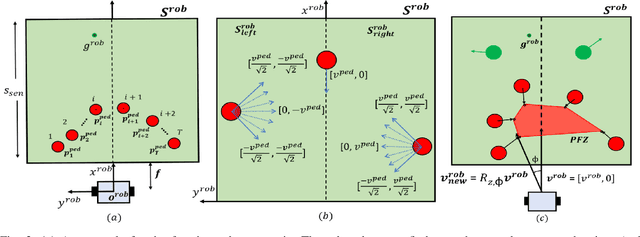

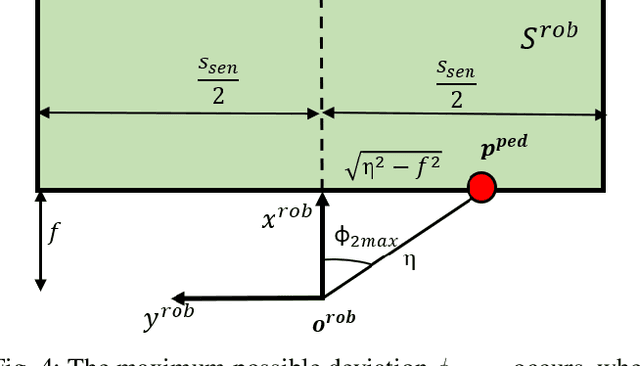

Frozone: Freezing-Free, Pedestrian-Friendly Navigation in Human Crowds

Mar 11, 2020

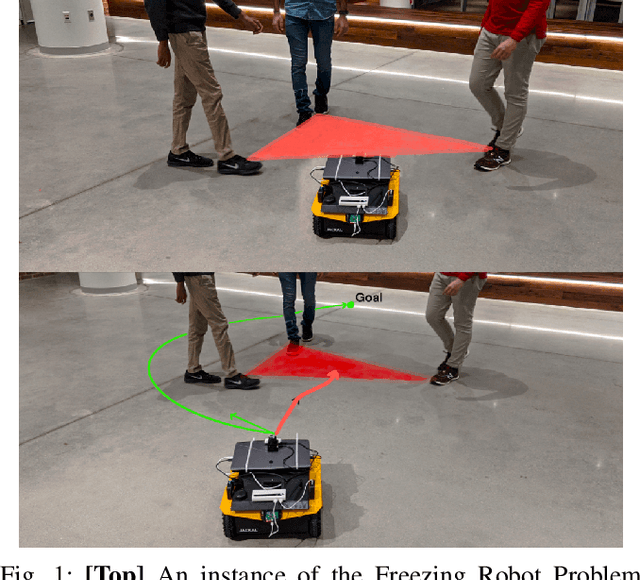

Abstract: We present Frozone, a novel algorithm to deal with the Freezing Robot Problem (FRP) that arises when a robot navigates through dense scenarios and crowds. Our method senses and explicitly predicts the trajectories of pedestrians and constructs a Potential Freezing Zone (PFZ), a spatial zone where the robot could freeze or be obtrusive to humans. Our formulation computes a deviation velocity to avoid the PFZ, which also accounts for social constraints. Furthermore, Frozone is designed for robots equipped with sensors with a limited sensing range and field of view. We ensure that the robot's deviation is bounded, thus avoiding sudden angular motion which could lead to the loss of perception data of the surrounding obstacles. We have combined Frozone with a Deep Reinforcement Learning-based (DRL) collision avoidance method and use our hybrid approach to handle crowds of varying densities. Our overall approach results in smooth and collision-free navigation in dense environments. We have evaluated our method's performance in simulation and on real differential drive robots in challenging indoor scenarios. We highlight the benefits of our approach over prior methods in terms of success rates (up to 50% increase), pedestrian-friendliness (100% increase), and the rate of freezing (> 80% decrease) in challenging scenarios.

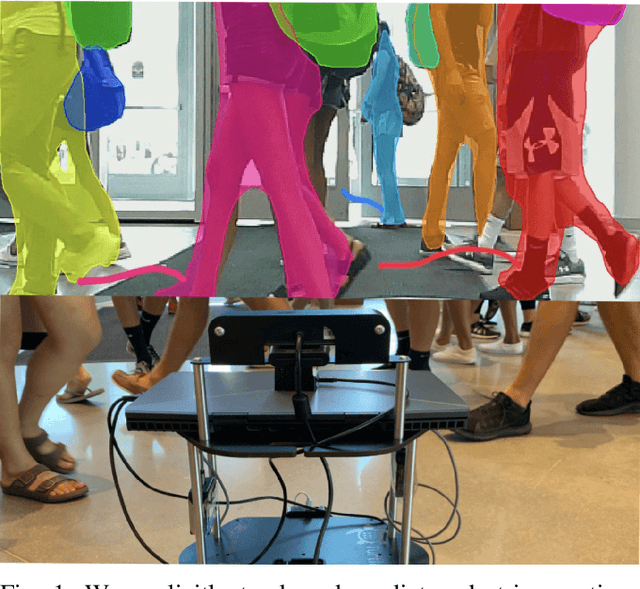

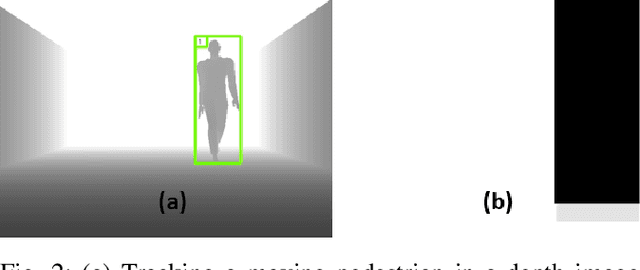

DenseCAvoid: Real-time Navigation in Dense Crowds using Anticipatory Behaviors

Feb 07, 2020

Abstract: We present DenseCAvoid, a novel algorithm for navigating a robot through dense crowds and avoiding collisions by anticipating pedestrian behaviors. Our formulation uses visual sensors and a pedestrian trajectory prediction algorithm to track pedestrians in a set of input frames and provide bounding boxes that extrapolate the pedestrian positions at a future time. Our hybrid approach combines this trajectory prediction with a Deep Reinforcement Learning-based collision avoidance method to train a policy that generates smoother, safer, and more robust trajectories at run-time. We train our policy in realistic 3-D simulations of static and dynamic scenarios with multiple pedestrians. In practice, our hybrid approach generalizes well to unseen, real-world scenarios and can navigate a robot through dense crowds (~1-2 humans per square meter) in indoor scenarios, including narrow corridors and lobbies. Compared to cases where prediction was not used, we observe that our method reduces the occurrence of the robot freezing in a crowd by up to 48%, and performs comparably with respect to trajectory lengths and mean arrival times to goal.
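As a rough illustration of extrapolating a pedestrian's bounding box to a future time, the sketch below swaps in a plain constant-velocity model in place of the trajectory prediction algorithm mentioned in the abstract, which is not specified here. The `(cx, cy, w, h)` box layout, the function name `extrapolate_box`, and the sample values are all assumptions.

```python
def extrapolate_box(prev_box, curr_box, dt, horizon):
    """Shift a bounding box forward in time at constant velocity.

    prev_box, curr_box: (cx, cy, w, h) boxes from two consecutive
    frames, with (cx, cy) the box center (assumed layout).
    dt: time between the two frames, in seconds.
    horizon: how far ahead to predict, in seconds.
    The box size is kept unchanged; only the center moves.
    """
    vx = (curr_box[0] - prev_box[0]) / dt
    vy = (curr_box[1] - prev_box[1]) / dt
    return (curr_box[0] + vx * horizon,
            curr_box[1] + vy * horizon,
            curr_box[2],
            curr_box[3])


# Hypothetical track: the center moved (1.0, 0.5) units in 0.5 s.
future_box = extrapolate_box((0.0, 0.0, 1.0, 2.0),
                             (1.0, 0.5, 1.0, 2.0),
                             dt=0.5, horizon=1.0)
```

A constant-velocity model is a common baseline for short horizons and ignores interactions between pedestrians, which a learned predictor would be expected to capture.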
