Tianfu Li

P$^{3}$Nav: End-to-End Perception, Prediction and Planning for Vision-and-Language Navigation

Mar 18, 2026

Abstract:In Vision-and-Language Navigation (VLN), an agent is required to plan a path to the target specified by the language instruction, using its visual observations. Consequently, prevailing VLN methods primarily focus on building powerful planners through visual-textual alignment. However, these approaches often bypass the imperative of comprehensive scene understanding prior to planning, leaving the agent with insufficient perception or prediction capabilities. Thus, we propose P$^{3}$Nav, a novel end-to-end framework integrating perception, prediction, and planning in a unified pipeline to strengthen the VLN agent's scene understanding and boost navigation success. Specifically, P$^{3}$Nav augments perception by extracting complementary cues from object-level and map-level perspectives. Subsequently, our P$^{3}$Nav predicts waypoints to model the agent's potential future states, endowing the agent with intrinsic awareness of candidate positions during navigation. Conditioned on these future waypoints, P$^{3}$Nav further forecasts semantic map cues, enabling proactive planning and reducing the strict reliance on purely historical context. Integrating these perceptual and predictive cues, a holistic planning module finally carries out the VLN tasks. Extensive experiments demonstrate that our P$^{3}$Nav achieves new state-of-the-art performance on the REVERIE, R2R-CE, and RxR-CE benchmarks.

Enhancing Vision-Language Navigation with Multimodal Event Knowledge from Real-World Indoor Tour Videos

Feb 27, 2026

Abstract:Vision-Language Navigation (VLN) agents often struggle with long-horizon reasoning in unseen environments, particularly when facing ambiguous, coarse-grained instructions. While recent advances use knowledge graphs to enhance reasoning, the potential of multimodal event knowledge inspired by human episodic memory remains underexplored. In this work, we propose an event-centric knowledge enhancement strategy for automated process knowledge mining and feature fusion to address coarse-grained instructions and long-horizon reasoning in VLN tasks. First, we construct YE-KG, the first large-scale multimodal spatiotemporal knowledge graph, with over 86k nodes and 83k edges, derived from real-world indoor videos. By leveraging multimodal large language models (i.e., LLaVa, GPT4), we extract unstructured video streams into structured semantic-action-effect events to serve as explicit episodic memory. Second, we introduce STE-VLN, which integrates the above graph into VLN models via a Coarse-to-Fine Hierarchical Retrieval mechanism. This allows agents to retrieve causal event sequences and dynamically fuse them with egocentric visual observations. Experiments on REVERIE, R2R, and R2R-CE benchmarks demonstrate the efficiency of our event-centric strategy, outperforming state-of-the-art approaches across diverse action spaces. Our data and code are available on the project website https://sites.google.com/view/y-event-kg/.

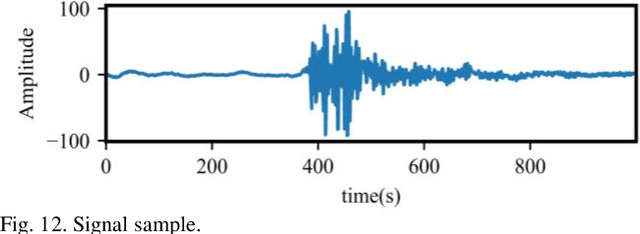

A Novel Unsupervised Graph Wavelet Autoencoder for Mechanical System Fault Detection

Jul 20, 2023

Abstract:Reliable fault detection is an essential requirement for safe and efficient operation of complex mechanical systems in various industrial applications. Despite the abundance of existing approaches and the maturity of the fault detection research field, the interdependencies between condition monitoring data have often been overlooked. Recently, graph neural networks have been proposed as a solution for learning the interdependencies among data, and the graph autoencoder (GAE) architecture, similar to standard autoencoders, has gained widespread use in fault detection. However, both the GAE and the graph variational autoencoder (GVAE) have fixed receptive fields, limiting their ability to extract multiscale features and model performance. To overcome these limitations, we propose two graph neural network models: the graph wavelet autoencoder (GWAE), and the graph wavelet variational autoencoder (GWVAE). GWAE consists mainly of the spectral graph wavelet convolutional (SGWConv) encoder and a feature decoder, while GWVAE is the variational form of GWAE. The developed SGWConv is built upon the spectral graph wavelet transform which can realize multiscale feature extraction by decomposing the graph signal into one scaling function coefficient and several spectral graph wavelet coefficients. To achieve unsupervised mechanical system fault detection, we transform the collected system signals into PathGraph by considering the neighboring relationships of each data sample. Fault detection is then achieved by evaluating the reconstruction errors of normal and abnormal samples. We carried out experiments on two condition monitoring datasets collected from fuel control systems and one acoustic monitoring dataset from a valve. The results show that the proposed methods improve the performance by around 3%~4% compared to the comparison methods.
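The last two steps of this abstract (linking neighboring samples into a graph, then scoring samples by reconstruction error) can be illustrated with a minimal sketch. It is not the authors' implementation. The function names, the `hops` parameter, and the mean-plus-`k`-standard-deviations threshold rule are all assumptions chosen for illustration, and the reconstruction errors are assumed to come from some already trained autoencoder.

```python
import statistics


def build_path_graph(num_samples, hops=1):
    """Link each sample to the samples up to `hops` positions after it.

    Hypothetical helper: one plausible reading of "neighboring
    relationships", returned as a list of undirected (i, j) edges.
    """
    edges = []
    for i in range(num_samples):
        last = min(i + hops, num_samples - 1)
        for j in range(i + 1, last + 1):
            edges.append((i, j))
    return edges


def fit_threshold(normal_errors, k=3.0):
    """Assumed rule: threshold = mean + k * std of errors on normal data."""
    mean = statistics.fmean(normal_errors)
    std = statistics.pstdev(normal_errors)
    return mean + k * std


def detect_faults(errors, threshold):
    """Flag a sample as faulty when its reconstruction error exceeds the threshold."""
    return [error > threshold for error in errors]


# Usage with made-up reconstruction errors.
edges = build_path_graph(num_samples=4)
threshold = fit_threshold([0.10, 0.12, 0.11, 0.09, 0.08])
flags = detect_faults([0.09, 0.50, 0.11], threshold)
```

Because the threshold is fitted on normal data only, no fault labels are needed, which matches the unsupervised setting described in the abstract.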

Smart filter aided domain adversarial neural network: An unsupervised domain adaptation method for fault diagnosis in noisy industrial scenarios

Jul 04, 2023

Abstract:The application of unsupervised domain adaptation (UDA)-based fault diagnosis methods has shown significant efficacy in industrial settings, facilitating the transfer of operational experience and fault signatures between different operating conditions, different units of a fleet or between simulated and real data. However, in real industrial scenarios, unknown levels and types of noise can amplify the difficulty of domain alignment, thus severely affecting the diagnostic performance of deep learning models. To address this issue, we propose a UDA method called Smart Filter-Aided Domain Adversarial Neural Network (SFDANN) for fault diagnosis in noisy industrial scenarios. The proposed methodology comprises two steps. In the first step, we develop a smart filter that dynamically enforces similarity between the source and target domain data in the time-frequency domain. This is achieved by combining a learnable wavelet packet transform network (LWPT) and a traditional wavelet packet transform module. In the second step, we input the data reconstructed by the smart filter into a domain adversarial neural network (DANN). To learn domain-invariant and discriminative features, the learnable modules of SFDANN are trained in a unified manner with three objectives: time-frequency feature proximity, domain alignment, and fault classification. We validate the effectiveness of the proposed SFDANN method based on two fault diagnosis cases: one involving fault diagnosis of bearings in noisy environments and another involving fault diagnosis of slab tracks in a train-track-bridge coupling vibration system, where the transfer task involves transferring from numerical simulations to field measurements. Results show that compared to other representative state-of-the-art UDA methods, SFDANN exhibits superior performance and remarkable stability.

Filter-informed Spectral Graph Wavelet Networks for Multiscale Feature Extraction and Intelligent Fault Diagnosis

Mar 27, 2023

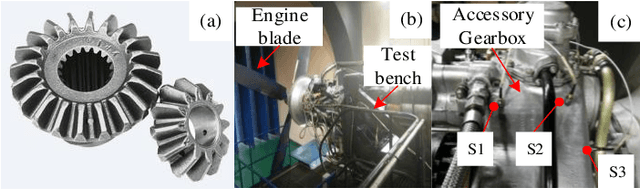

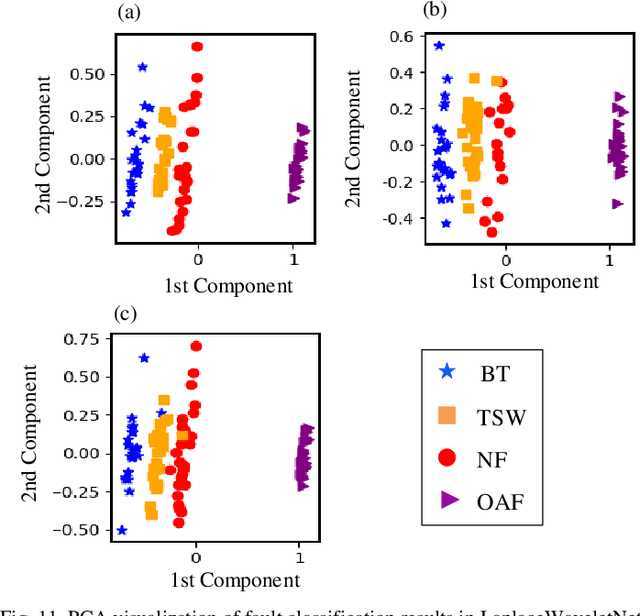

Abstract:Intelligent fault diagnosis has been increasingly improved with the evolution of deep learning (DL) approaches. Recently, the emerging graph neural networks (GNNs) have also been introduced in the field of fault diagnosis with the goal of making better use of the inductive bias of the interdependencies between the different sensor measurements. However, there are some limitations with these GNN-based fault diagnosis methods. First, they lack the ability to realize multiscale feature extraction due to the fixed receptive field of GNNs. Second, they eventually encounter the over-smoothing problem as model depth increases. Lastly, the extracted features of these GNNs are hard to understand owing to the black-box nature of GNNs. To address these issues, a filter-informed spectral graph wavelet network (SGWN) is proposed in this paper. In SGWN, the spectral graph wavelet convolutional (SGWConv) layer is established upon the spectral graph wavelet transform, which can decompose a graph signal into scaling function coefficients and spectral graph wavelet coefficients. With the help of SGWConv, SGWN is able to prevent the over-smoothing problem caused by long-range low-pass filtering, by simultaneously extracting low-pass and band-pass features. Furthermore, to speed up the computation of SGWN, the scaling kernel function and graph wavelet kernel function in SGWConv are approximated by the Chebyshev polynomials. The effectiveness of the proposed SGWN is evaluated on the collected solenoid valve dataset and aero-engine intershaft bearing dataset. The experimental results show that SGWN can outperform the comparative methods in both diagnostic accuracy and the ability to prevent over-smoothing. Moreover, its extracted features are also interpretable with domain knowledge.
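The Chebyshev approximation mentioned in this abstract can be illustrated on a scalar kernel. The sketch below is not the SGWN code: the heat-style kernel `exp(-2 * lam)`, the spectrum bound `lam_max = 2.0`, the order, and the function names are assumptions for illustration. It fits Chebyshev coefficients of a kernel on `[0, lam_max]` and evaluates the truncated series with the three-term recurrence. In the graph setting the same recurrence is typically applied to a rescaled Laplacian, which is what avoids an explicit eigendecomposition.

```python
import math


def chebyshev_coefficients(func, order, lam_max, num_nodes=64):
    """Chebyshev coefficients of func on [0, lam_max] via Gauss-Chebyshev quadrature."""
    coeffs = []
    for k in range(order + 1):
        total = 0.0
        for j in range(num_nodes):
            theta = math.pi * (j + 0.5) / num_nodes
            # Map the quadrature node cos(theta) in [-1, 1] to [0, lam_max].
            lam = 0.5 * lam_max * (math.cos(theta) + 1.0)
            total += func(lam) * math.cos(k * theta)
        coeffs.append(2.0 * total / num_nodes)
    return coeffs


def chebyshev_evaluate(coeffs, lam, lam_max):
    """Evaluate c0/2 + sum_k c_k T_k(x) using T_k = 2 x T_{k-1} - T_{k-2}."""
    x = 2.0 * lam / lam_max - 1.0  # rescale lam to [-1, 1]
    t_prev, t_curr = 1.0, x
    result = 0.5 * coeffs[0]
    if len(coeffs) > 1:
        result += coeffs[1] * t_curr
    for c in coeffs[2:]:
        t_prev, t_curr = t_curr, 2.0 * x * t_curr - t_prev
        result += c * t_curr
    return result


def heat_kernel(lam):
    """Assumed example kernel; the paper's scaling and wavelet kernels may differ."""
    return math.exp(-2.0 * lam)


coeffs = chebyshev_coefficients(heat_kernel, order=10, lam_max=2.0)
approx = chebyshev_evaluate(coeffs, 0.7, lam_max=2.0)
```

For a smooth kernel like this one, a low-order expansion already tracks the exact function closely, which is why a polynomial filter can stand in for the spectral one.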

Deep Learning Algorithms for Rotating Machinery Intelligent Diagnosis: An Open Source Benchmark Study

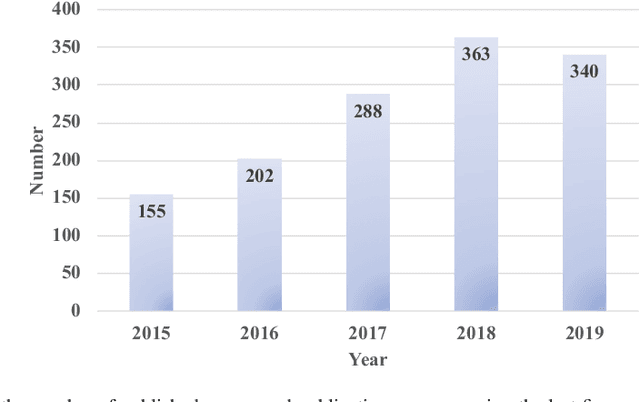

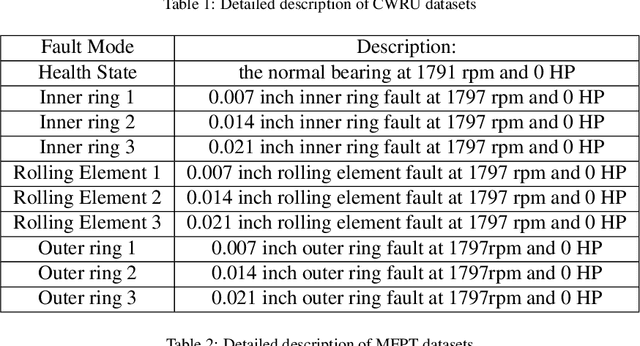

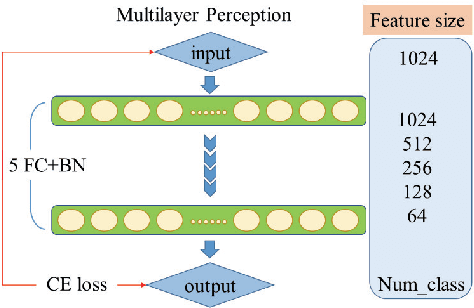

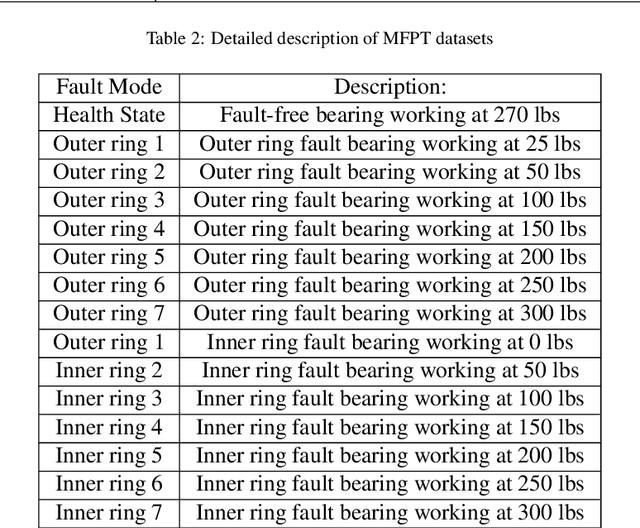

Mar 06, 2020

Abstract:With the development of artificial intelligence and deep learning (DL) techniques, rotating machinery intelligent diagnosis has gone through tremendous progress with verified success and the classification accuracies of many DL-based intelligent diagnosis algorithms are tending to 100%. However, different datasets, configurations, and hyper-parameters are often recommended to be used in performance verification for different types of models, and few open source codes are made public for evaluation and comparisons. Therefore, unfair comparisons and ineffective improvement may exist in rotating machinery intelligent diagnosis, which limits the advancement of this field. To address these issues, we perform an extensive evaluation of four kinds of models with various datasets to provide a benchmark study within the same framework. In this paper, we first gather most of the publicly available datasets and give the complete benchmark study of DL-based intelligent algorithms under two data split strategies, five input formats, three normalization methods, and four augmentation methods. Second, we integrate the whole evaluation codes into a code library and release this code library to the public for better development of this field. Third, we use the specific-designed cases to point out the existing issues, including class imbalance, generalization ability, interpretability, few-shot learning, and model selection. By these works, we release a unified code framework for comparing and testing models fairly and quickly, emphasize the importance of open source codes, provide the baseline accuracy (a lower bound) to avoid useless improvement, and discuss potential future directions in this field. The code library is available at https://github.com/ZhaoZhibin/DL-based-Intelligent-Diagnosis-Benchmark.

WaveletKernelNet: An Interpretable Deep Neural Network for Industrial Intelligent Diagnosis

Nov 23, 2019

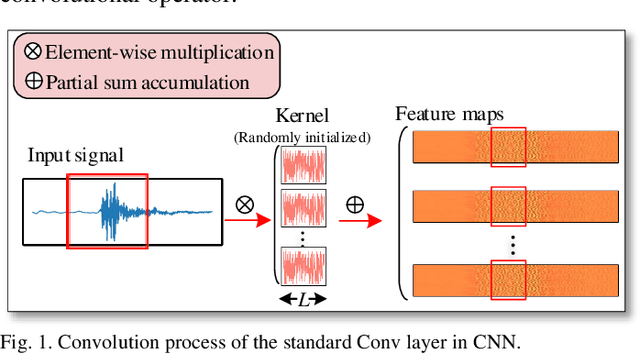

Abstract:The convolutional neural network (CNN), with its ability of feature learning and nonlinear mapping, has demonstrated its effectiveness in prognostics and health management (PHM). However, explanation of the physical meaning of a CNN architecture has rarely been studied. In this paper, a novel wavelet-driven deep neural network termed WaveletKernelNet (WKN) is presented, where a continuous wavelet convolutional (CWConv) layer is designed to replace the first convolutional layer of the standard CNN. This enables the first CWConv layer to discover more meaningful filters. Furthermore, only the scale parameter and translation parameter are directly learned from raw data at this CWConv layer. This provides a very effective way to obtain a customized filter bank, specifically tuned for extracting the defect-related impact component embedded in the vibration signal. In addition, three experimental verifications using data from laboratory environments are carried out to verify the effectiveness of the proposed method for mechanical fault diagnosis. The results show the importance of the designed CWConv layer and that the output of the CWConv layer is interpretable. Besides, it is found that WKN has fewer parameters, higher fault classification accuracy and faster convergence speed than the standard CNN.
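The idea of a convolution filter defined only by a scale and a translation can be sketched as follows. This is an illustration under stated assumptions, not the WKN implementation: the Mexican-hat mother wavelet, the time grid on `[-1, 1]`, the kernel size, and the function names are all hypothetical choices. The filter is generated as psi((t - u) / s) / sqrt(s), so a layer built this way has two parameters per filter instead of one weight per tap.

```python
import math


def mexican_hat(t):
    """Unnormalized Mexican-hat mother wavelet (an assumed choice of psi)."""
    return (1.0 - t * t) * math.exp(-0.5 * t * t)


def wavelet_kernel(scale, translation, kernel_size=33):
    """Sample psi((t - translation) / scale) / sqrt(scale) on a grid over [-1, 1]."""
    if scale <= 0:
        raise ValueError("scale must be positive")
    grid = [-1.0 + 2.0 * i / (kernel_size - 1) for i in range(kernel_size)]
    norm = math.sqrt(scale)
    return [mexican_hat((t - translation) / scale) / norm for t in grid]


def convolve_valid(signal, kernel):
    """Sliding dot product without padding (cross-correlation, as in CNN layers)."""
    n, m = len(signal), len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(m))
        for i in range(n - m + 1)
    ]


# Usage: one filter generated from two parameters, applied to a toy signal.
kernel = wavelet_kernel(scale=0.1, translation=0.0)
toy_signal = [math.sin(0.3 * n) for n in range(40)]
response = convolve_valid(toy_signal, kernel)
```

In a trainable version, `scale` and `translation` would be the learned quantities and the filter taps would be recomputed from them on each forward pass, which is consistent with the abstract's remark that WKN has fewer parameters than a standard CNN.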
