Thien Hoang Nguyen

Towards Autonomous In-situ Soil Sampling and Mapping in Large-Scale Agricultural Environments

Jun 06, 2025

Abstract: Traditional soil sampling and analysis methods are labor-intensive, time-consuming, and limited in spatial resolution, making them unsuitable for large-scale precision agriculture. To address these limitations, we present a robotic solution for real-time sampling, analysis, and mapping of key soil properties. Our system consists of two main sub-systems: a Sample Acquisition System (SAS) for precise, automated in-field soil sampling; and a Sample Analysis Lab (Lab) for real-time soil property analysis. The system's performance was validated through extensive field trials at a large-scale Australian farm. Experimental results show that the SAS can consistently acquire soil samples with a mass of 50 g at a depth of 200 mm, while the Lab can process each sample within 10 minutes to accurately measure pH and macronutrients. These results demonstrate the potential of the system to provide farmers with timely, data-driven insights for more efficient and sustainable soil management and fertilizer application.

MCD: Diverse Large-Scale Multi-Campus Dataset for Robot Perception

Mar 18, 2024

Abstract: Perception plays a crucial role in various robot applications. However, existing well-annotated datasets are biased towards autonomous driving scenarios, while unlabelled SLAM datasets are quickly overfitted and often lack environment and domain variations. To expand the frontier of these fields, we introduce a comprehensive dataset named MCD (Multi-Campus Dataset), featuring a wide range of sensing modalities, high-accuracy ground truth, and diverse challenging environments across three Eurasian university campuses. MCD comprises both CCS (Classical Cylindrical Spinning) and NRE (Non-Repetitive Epicyclic) lidars, high-quality IMUs (Inertial Measurement Units), cameras, and UWB (Ultra-WideBand) sensors. Furthermore, in a pioneering effort, we introduce semantic annotations of 29 classes over 59k sparse NRE lidar scans across three domains, thus providing a novel challenge to existing semantic segmentation research upon this largely unexplored lidar modality. Finally, we propose, for the first time to the best of our knowledge, continuous-time ground truth based on optimization-based registration of lidar-inertial data on large survey-grade prior maps, which are also publicly released, each several times the size of existing ones. We conduct a rigorous evaluation of numerous state-of-the-art algorithms on MCD, report their performance, and highlight the challenges awaiting solutions from the research community.

A Cost-Effective Cooperative Exploration and Inspection Strategy for Heterogeneous Aerial System

Mar 02, 2024

Abstract: In this paper, we propose a cost-effective strategy for heterogeneous UAV swarm systems performing cooperative aerial inspection. Unlike previous swarm inspection works, the proposed method does not rely on precise prior knowledge of the environment and can achieve full 3D surface coverage of objects of any shape. Agents are partitioned into teams, with each drone assigned a different task, including mapping, exploration, and inspection. Task allocation is facilitated by assigning optimal inspection volumes to each team following best-first rules. A voxel map-based representation of the environment is used for pathfinding, and a rule-based path-planning method is the core of this approach. The proposed approach achieved the best performance in all challenging experiments, surpassing all benchmark methods for similar tasks across multiple evaluation trials. The proposed method is open source at https://github.com/ntu-aris/caric_baseline and was used as the baseline of the Cooperative Aerial Robots Inspection Challenge (CARIC) at the 62nd IEEE Conference on Decision and Control 2023.
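The abstract says only that a voxel map supports pathfinding; it does not name the search algorithm. As a minimal sketch, assuming a bounded 6-connected occupancy grid with unit step cost and using A* as a stand-in planner (not necessarily what caric_baseline implements), shortest-path search over voxels could look like this. The function name `astar_voxel` and the grid representation are hypothetical.

```python
import heapq
from typing import Dict, List, Optional, Set, Tuple

Voxel = Tuple[int, int, int]

# 6-connected neighborhood: one voxel step along +/- x, y, or z
MOVES = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def astar_voxel(start: Voxel, goal: Voxel, occupied: Set[Voxel],
                bounds: Tuple[int, int, int]) -> Optional[List[Voxel]]:
    """Hypothetical A* search on a bounded voxel grid.

    Returns the voxels from start to goal (inclusive), or None if the goal
    is unreachable. Every step costs 1.
    """
    def heuristic(v: Voxel) -> int:
        # Manhattan distance is admissible and consistent for unit-cost 6-connected moves
        return sum(abs(a - b) for a, b in zip(v, goal))

    def free(v: Voxel) -> bool:
        inside = all(0 <= c < b for c, b in zip(v, bounds))
        return inside and v not in occupied

    open_heap = [(heuristic(start), 0, start)]  # entries: (f = g + h, g, voxel)
    best_g: Dict[Voxel, int] = {start: 0}
    parent: Dict[Voxel, Voxel] = {}
    closed: Set[Voxel] = set()

    while open_heap:
        _, g, v = heapq.heappop(open_heap)
        if v in closed:
            continue  # stale heap entry
        if v == goal:
            path = [v]
            while v in parent:  # walk back to the start
                v = parent[v]
                path.append(v)
            return path[::-1]
        closed.add(v)
        for dx, dy, dz in MOVES:
            n = (v[0] + dx, v[1] + dy, v[2] + dz)
            if not free(n) or n in closed:
                continue
            if g + 1 < best_g.get(n, float("inf")):
                best_g[n] = g + 1
                parent[n] = v
                heapq.heappush(open_heap, (g + 1 + heuristic(n), g + 1, n))
    return None


# Usage: a 5x5x1 slab with a wall at x == 2 for y in 0..3
wall = {(2, y, 0) for y in range(4)}
path = astar_voxel((0, 0, 0), (4, 0, 0), wall, (5, 5, 1))
print(len(path) - 1)  # number of moves: 12
```

The path must detour through the single gap at (2, 4, 0). A rule-based inspection planner would typically call a search like this repeatedly between viewpoints; how the actual baseline orders those viewpoints is not described in the abstract.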

MMAUD: A Comprehensive Multi-Modal Anti-UAV Dataset for Modern Miniature Drone Threats

Feb 06, 2024

Abstract: In response to the evolving challenges posed by small unmanned aerial vehicles (UAVs), which can transport harmful payloads or independently cause damage, we introduce MMAUD: a comprehensive Multi-Modal Anti-UAV Dataset. MMAUD addresses a critical gap in contemporary threat detection methodologies by focusing on drone detection, UAV-type classification, and trajectory estimation. MMAUD stands out by combining diverse sensory inputs, including stereo vision, various lidars, radars, and audio arrays. It offers a unique overhead aerial detection perspective, vital for addressing real-world scenarios with higher fidelity than datasets captured from specific vantage points using thermal and RGB cameras. Additionally, MMAUD provides accurate Leica-generated ground truth data, a feature not seen in other datasets, which enhances credibility and enables confident refinement of algorithms and models. Since most existing works do not disclose their datasets, MMAUD is an invaluable resource for developing accurate and efficient solutions. Our proposed modalities are cost-effective and highly adaptable, allowing users to experiment with and implement new UAV threat detection tools. Our dataset closely simulates real-world scenarios by incorporating ambient heavy machinery sounds. This approach enhances the dataset's applicability, capturing the exact challenges faced during proximate vehicular operations. MMAUD is expected to play a pivotal role in advancing UAV threat detection, classification, trajectory estimation, and beyond. Our dataset, code, and designs will be available at https://github.com/ntu-aris/MMAUD.

VR-SLAM: A Visual-Range Simultaneous Localization and Mapping System using Monocular Camera and Ultra-wideband Sensors

Mar 20, 2023

Abstract: In this work, we propose a simultaneous localization and mapping (SLAM) system using a monocular camera and Ultra-wideband (UWB) sensors. Our system, referred to as VR-SLAM, is a multi-stage framework that leverages the strengths and compensates for the weaknesses of each sensor. Firstly, we introduce a UWB-aided 7 degree-of-freedom (scale factor, 3D position, and 3D orientation) global alignment module to initialize the visual odometry (VO) system in the world frame defined by the UWB anchors. This module loosely fuses up-to-scale VO and ranging data using either a quadratically constrained quadratic programming (QCQP) or a nonlinear least squares (NLS) algorithm, depending on whether a good initial guess is available. Secondly, we provide an accompanying theoretical analysis that includes the derivation and interpretation of the Fisher Information Matrix (FIM) and its determinant. Thirdly, we present UWB-aided bundle adjustment (UBA) and UWB-aided pose graph optimization (UPGO) modules to improve short-term odometry accuracy, reduce long-term drift, and correct any alignment and scale errors. Extensive simulations and experiments show that our solution outperforms UWB-only, camera-only, and previous approaches, can quickly recover from tracking failure without relying on visual relocalization, and can effortlessly obtain a global map even if there are no loop closures.
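The alignment module can be read as a range-only registration problem: find a scale s, rotation R, and translation t such that each up-to-scale VO position p, mapped to s R p + t, lies at the measured UWB range d from its anchor a, giving residuals r = ||s R p + t - a|| - d. The sketch below only evaluates those NLS residuals and the resulting cost under that assumed model. The axis-angle parameterization and the names `rotvec_to_matrix`, `vo_to_world`, `range_residuals`, and `nls_cost` are hypothetical, and the sketch reproduces neither the paper's QCQP formulation nor its solver.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def rotvec_to_matrix(w: Sequence[float]) -> List[List[float]]:
    """Rodrigues' formula: axis-angle vector (radians) -> 3x3 rotation matrix."""
    theta = math.sqrt(sum(c * c for c in w))
    if theta < 1e-12:
        return [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    kx, ky, kz = (c / theta for c in w)
    c, s, v = math.cos(theta), math.sin(theta), 1.0 - math.cos(theta)
    return [
        [c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s],
        [ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s],
        [kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v],
    ]


def vo_to_world(params: Sequence[float], p: Vec3) -> Vec3:
    """Apply the assumed 7-DoF similarity; params = (s, wx, wy, wz, tx, ty, tz)."""
    s, w, t = params[0], params[1:4], params[4:7]
    R = rotvec_to_matrix(w)
    return tuple(s * sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))


def range_residuals(params, vo_positions, anchors, ranges) -> List[float]:
    """One residual per UWB measurement: ||s R p + t - a|| - d."""
    return [math.dist(vo_to_world(params, p), a) - d
            for p, a, d in zip(vo_positions, anchors, ranges)]


def nls_cost(params, vo_positions, anchors, ranges) -> float:
    """Half the sum of squared residuals, i.e. what an NLS solver would minimize."""
    return 0.5 * sum(r * r for r in range_residuals(params, vo_positions, anchors, ranges))


# Usage with synthetic, noise-free data
true_params = (2.0, 0.0, 0.0, math.pi / 4, 1.0, 2.0, 0.5)
vo = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.2), (1.0, 1.0, 0.4), (0.0, 1.0, 0.6)]
anchors = [(0.0, 0.0, 3.0), (5.0, 0.0, 3.0), (5.0, 5.0, 3.0), (0.0, 5.0, 3.0)]
ranges = [math.dist(vo_to_world(true_params, p), a) for p, a in zip(vo, anchors)]
print(nls_cost(true_params, vo, anchors, ranges))  # 0.0
```

With the true parameters the cost is zero, and any error in scale, rotation, or translation raises it; an iterative NLS solver needs a starting point near that minimum, which is why the abstract pairs NLS with the availability of a good initial guess.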

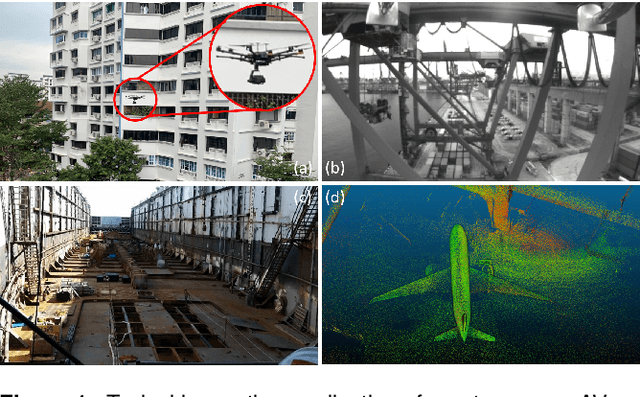

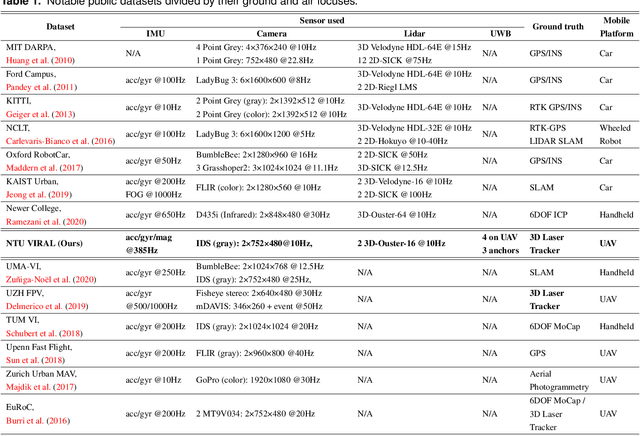

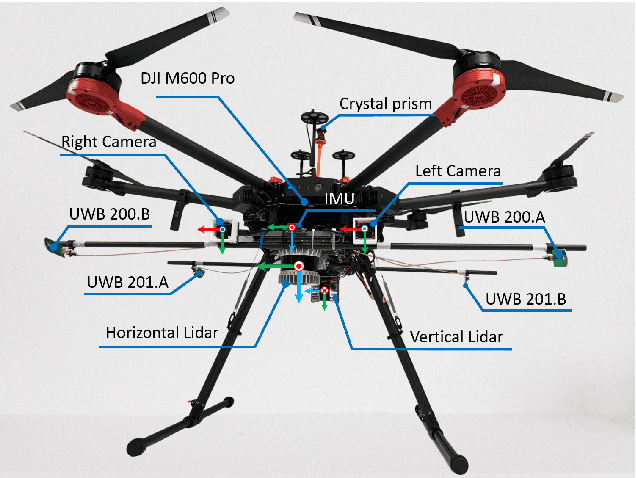

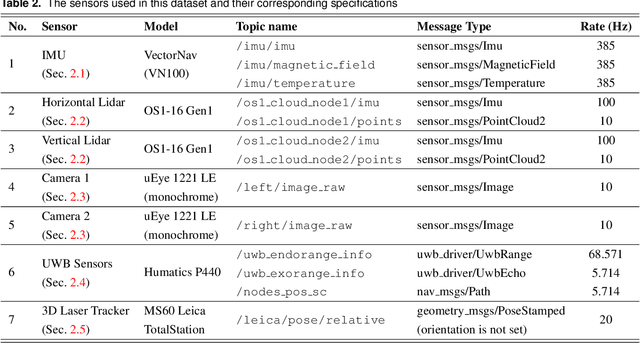

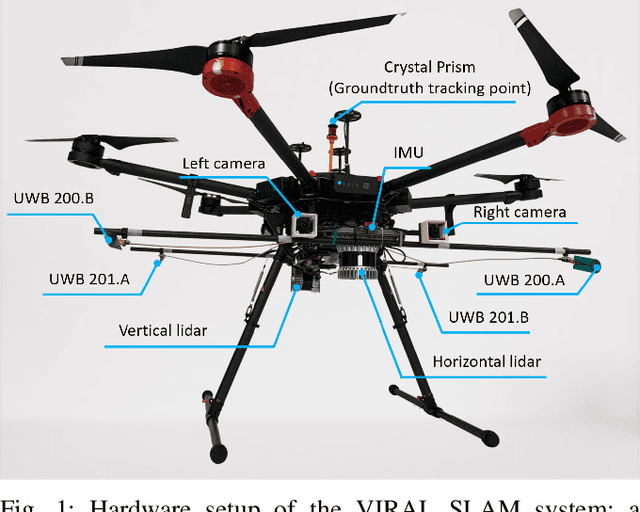

NTU VIRAL: A Visual-Inertial-Ranging-Lidar Dataset, From an Aerial Vehicle Viewpoint

Feb 01, 2022

Abstract: In recent years, autonomous robots have become ubiquitous in research and daily life. Among many factors, public datasets play an important role in the progress of this field, as they remove the need for a heavy initial investment in hardware and manpower. However, for research on autonomous aerial systems, there appears to be a relative lack of public datasets on par with those used for autonomous driving and ground robots. To fill this gap, we conduct a data collection exercise on an aerial platform equipped with an extensive and unique set of sensors: two 3D lidars, two hardware-synchronized global-shutter cameras, multiple Inertial Measurement Units (IMUs), and especially, multiple Ultra-wideband (UWB) ranging units. The comprehensive sensor suite resembles that of an autonomous driving car, but features distinct and challenging characteristics of aerial operations. We record multiple datasets in several challenging indoor and outdoor conditions. Calibration results and ground truth from a high-accuracy laser tracker are also included in each package. All resources can be accessed via our webpage https://ntu-aris.github.io/ntu_viral_dataset.

Relative Transformation Estimation Based on Fusion of Odometry and UWB Ranging Data

Feb 01, 2022

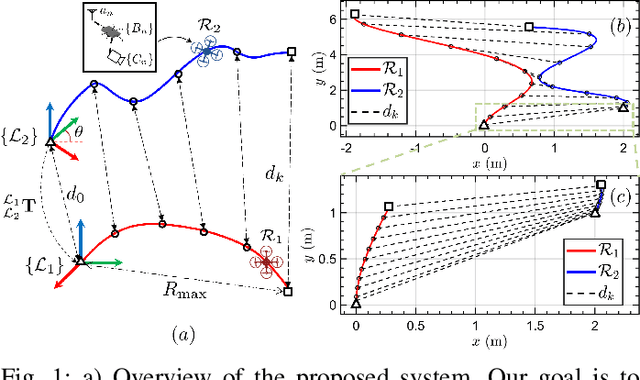

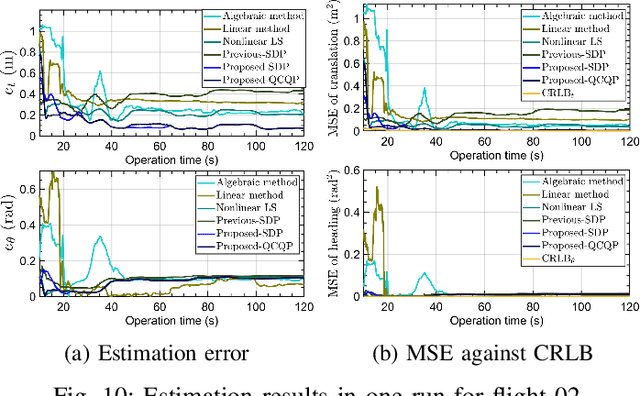

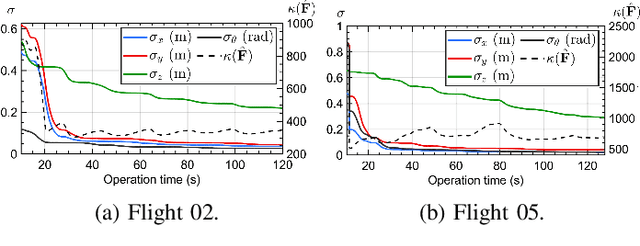

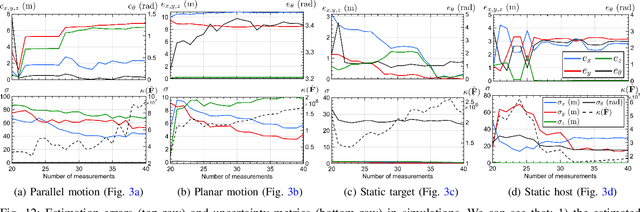

Abstract: In this work, we study the problem of estimating the 4 degree-of-freedom (3D position and heading) robot-to-robot relative frame transformation using onboard odometry and inter-robot distance measurements. Firstly, we present a theoretical analysis of the problem, namely the derivation and interpretation of the Cramér-Rao Lower Bound (CRLB), the Fisher Information Matrix (FIM), and its determinant. Secondly, we propose optimization-based methods to solve the problem, including a quadratically constrained quadratic programming (QCQP) formulation and the corresponding semidefinite programming (SDP) relaxation. Moreover, we address practical issues that are ignored in previous works, such as accounting for spatial-temporal offsets between the ultra-wideband (UWB) and odometry sensors, rejecting UWB outliers, and checking for singular configurations before commencing operation. Lastly, extensive simulations and real-life experiments with aerial robots show that the proposed QCQP and SDP methods outperform state-of-the-art methods, especially in geometrically poor or high measurement noise conditions. In general, the QCQP method provides the best results at the expense of computational time, while the SDP method runs much faster and is sufficiently accurate in most cases.
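To illustrate why checking for singular configurations matters, here is a minimal sketch under an assumed measurement model: d_k = ||p1_k - (R_z(psi) p2_k + t)|| + n_k, with Gaussian noise of standard deviation sigma, where p1_k and p2_k are the two robots' odometry positions in their own frames and (t, psi) is the 4-DoF transform. The FIM is then the sum of J_k^T J_k / sigma^2, and a (near-)zero determinant flags an unobservable configuration, for example when both robots stay still. The function names and test trajectories are hypothetical; this is not the paper's derivation.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def range_jacobian_row(p1: Vec3, p2: Vec3, t: Vec3, yaw: float) -> List[float]:
    """Gradient of d = ||R_z(yaw) p2 + t - p1|| w.r.t. (tx, ty, tz, yaw)."""
    c, s = math.cos(yaw), math.sin(yaw)
    q = (c * p2[0] - s * p2[1] + t[0], s * p2[0] + c * p2[1] + t[1], p2[2] + t[2])
    diff = [q[i] - p1[i] for i in range(3)]
    norm = math.sqrt(sum(x * x for x in diff))
    u = [x / norm for x in diff]  # unit line-of-sight vector
    # d(R_z p2)/d(yaw); the z component is unaffected by heading
    dq_dyaw = (-s * p2[0] - c * p2[1], c * p2[0] - s * p2[1], 0.0)
    return u + [sum(u[i] * dq_dyaw[i] for i in range(3))]


def fisher_information(p1s, p2s, t, yaw, sigma) -> List[List[float]]:
    """FIM = sum_k J_k^T J_k / sigma^2 for the 4-DoF relative transform."""
    fim = [[0.0] * 4 for _ in range(4)]
    for p1, p2 in zip(p1s, p2s):
        j = range_jacobian_row(p1, p2, t, yaw)
        for a in range(4):
            for b in range(4):
                fim[a][b] += j[a] * j[b] / sigma ** 2
    return fim


def determinant(m: Sequence[Sequence[float]]) -> float:
    """Determinant via Gaussian elimination with partial pivoting."""
    a = [list(row) for row in m]
    n, det = len(a), 1.0
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[piv][col]) < 1e-12:
            return 0.0  # numerically singular
        if piv != col:
            a[col], a[piv] = a[piv], a[col]
            det = -det
        det *= a[col][col]
        for r in range(col + 1, n):
            f = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= f * a[col][c]
    return det


# Usage: stationary robots vs. robots moving along different curves
t_true, yaw_true, sigma = (5.0, -3.0, 1.0), 0.7, 0.1
still = fisher_information([(0.0, 0.0, 0.0)] * 20, [(1.0, 1.0, 0.0)] * 20,
                           t_true, yaw_true, sigma)
p1s = [(0.5 * k, 2.0 * math.sin(0.5 * k), 0.2 * k) for k in range(20)]
p2s = [(3.0 * math.cos(0.4 * k), 3.0 * math.sin(0.4 * k), 0.1 * k) for k in range(20)]
moving = fisher_information(p1s, p2s, t_true, yaw_true, sigma)
print(determinant(still), determinant(moving))
```

When both robots are stationary, every measurement contributes the same Jacobian row, so the FIM has rank one and its determinant collapses to (numerically) zero; a practical check would compare the determinant against a tuned threshold before starting estimation.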

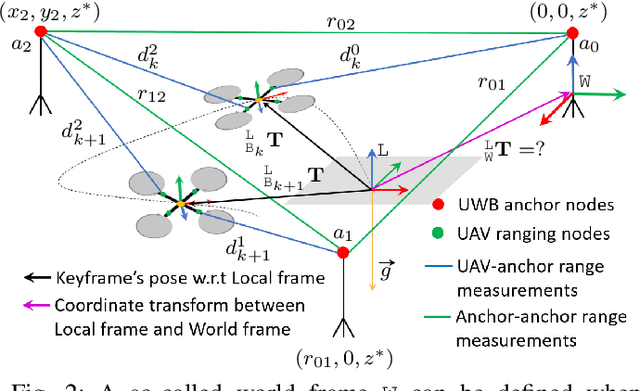

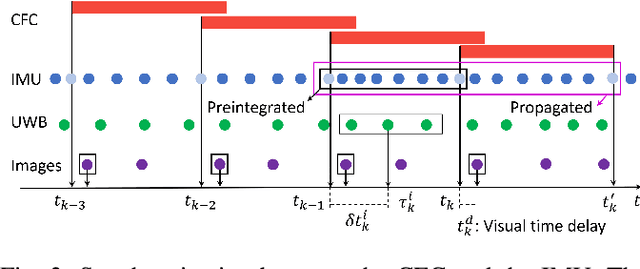

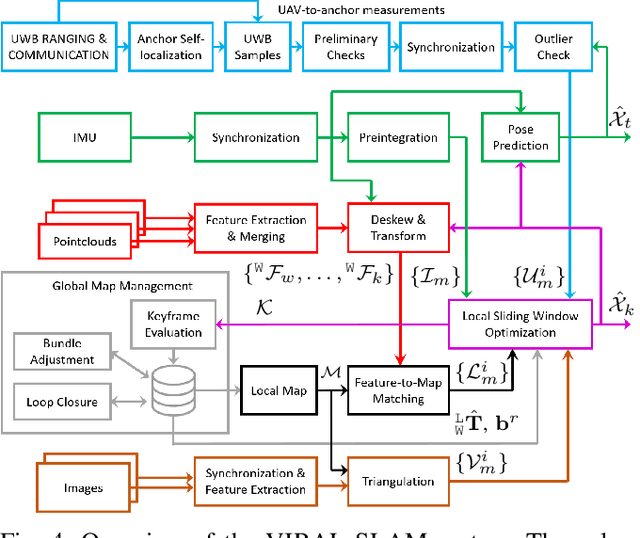

VIRAL SLAM: Tightly Coupled Camera-IMU-UWB-Lidar SLAM

May 16, 2021

Abstract: In this paper, we propose a tightly coupled, multi-modal simultaneous localization and mapping (SLAM) framework integrating an extensive set of sensors: IMU, cameras, multiple lidars, and Ultra-wideband (UWB) range measurements, hence referred to as VIRAL (visual-inertial-ranging-lidar) SLAM. To achieve such a comprehensive sensor fusion system, one has to tackle several challenges, such as data synchronization, multi-threaded programming, bundle adjustment (BA), and conflicting coordinate frames between UWB and the onboard sensors, so as to ensure real-time localization and smooth updates of the state estimates. To this end, we propose a two-stage approach. In the first stage, lidar, camera, and IMU data in a local sliding window are processed in a core odometry thread. From this local graph, new keyframes are evaluated for admission to a global map. Visual feature-based loop closure is also performed to supplement the global factor graph with loop constraints. When the global factor graph satisfies a condition on spatial diversity, the BA process is triggered, which updates the coordinate transform between the UWB and onboard SLAM systems. The system then seamlessly transitions to the second stage, where all sensors are tightly integrated in the odometry thread. The capability of our system is demonstrated via several experiments on high-fidelity graphical-physical simulation and public datasets.

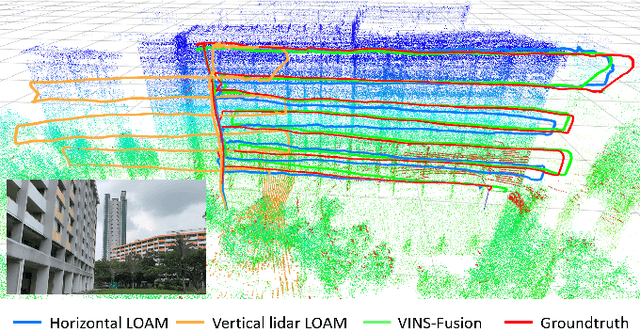

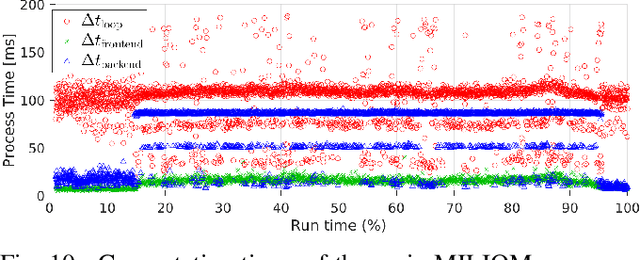

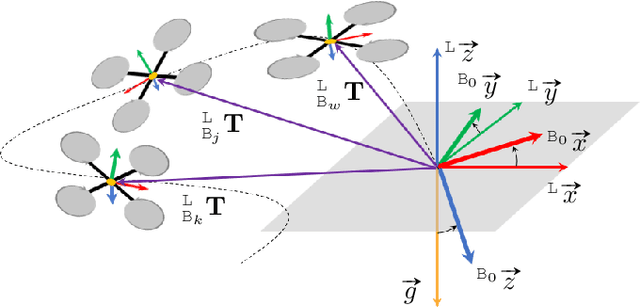

MILIOM: Tightly Coupled Multi-Input Lidar-Inertia Odometry and Mapping

Apr 24, 2021

Abstract: In this paper, we investigate a tightly coupled Lidar-Inertia Odometry and Mapping (LIOM) scheme with the capability to incorporate multiple lidars with complementary fields of view (FOV). In essence, we devise a time-synchronized scheme to combine extracted features from separate lidars into a single pointcloud, which is then used to construct a local map and compute the feature-map matching (FMM) coefficients. These coefficients, along with the IMU preintegration observations, are then used to construct a factor graph that is optimized to produce an estimate of the sliding window trajectory. We also propose a keyframe-based map management strategy to marginalize certain poses and pointclouds in the sliding window to grow a global map, which is used to assemble the local map in the later stage. The use of multiple lidars with complementary FOV and the global map ensures that our estimate has low drift and can sustain good localization in situations where a single lidar gives poor results or even fails to work. Multi-threaded computation is also adopted to reduce the computation time and ensure real-time performance. We demonstrate the efficacy of our system via a series of experiments on public datasets collected from an aerial vehicle.
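The feature-combination step can be pictured as transforming each lidar's points into a common body frame with its extrinsic calibration and keeping only points stamped inside a shared time window. The sketch below assumes known extrinsics and per-point timestamps, uses hypothetical names (`LidarScan`, `merge_scans`), and omits motion compensation and feature extraction, so it illustrates the idea rather than MILIOM's implementation.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass
class LidarScan:
    points: List[Point]                   # points in this lidar's own frame
    stamps: List[float]                   # per-point timestamps in seconds
    rotation: Sequence[Sequence[float]]   # 3x3 body_R_lidar extrinsic (assumed known)
    translation: Point                    # body_t_lidar extrinsic (assumed known)


def merge_scans(scans: List[LidarScan], t_start: float, t_end: float) -> List[Point]:
    """Stack points from several lidars into one body-frame cloud.

    Only points with t_start <= stamp < t_end are kept, so every lidar
    contributes data from the same synchronization window.
    """
    merged: List[Point] = []
    for scan in scans:
        R, t = scan.rotation, scan.translation
        for p, stamp in zip(scan.points, scan.stamps):
            if not (t_start <= stamp < t_end):
                continue
            merged.append(tuple(
                sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)))
    return merged


# Usage: a forward lidar and a second lidar yawed by 90 degrees
identity = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
yaw90 = ((0.0, -1.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 1.0))
front = LidarScan([(1.0, 0.0, 0.0), (2.0, 0.0, 0.0)], [0.01, 0.20], identity, (0.0, 0.0, 0.1))
side = LidarScan([(1.0, 0.0, 0.0)], [0.05], yaw90, (0.0, 0.0, -0.1))
cloud = merge_scans([front, side], 0.0, 0.1)
print(cloud)  # [(1.0, 0.0, 0.1), (0.0, 1.0, -0.1)]
```

The second point of the forward lidar falls outside the 0.1 s window and is dropped; in a full system, points near the window edges would also need deskewing with the IMU motion estimate.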

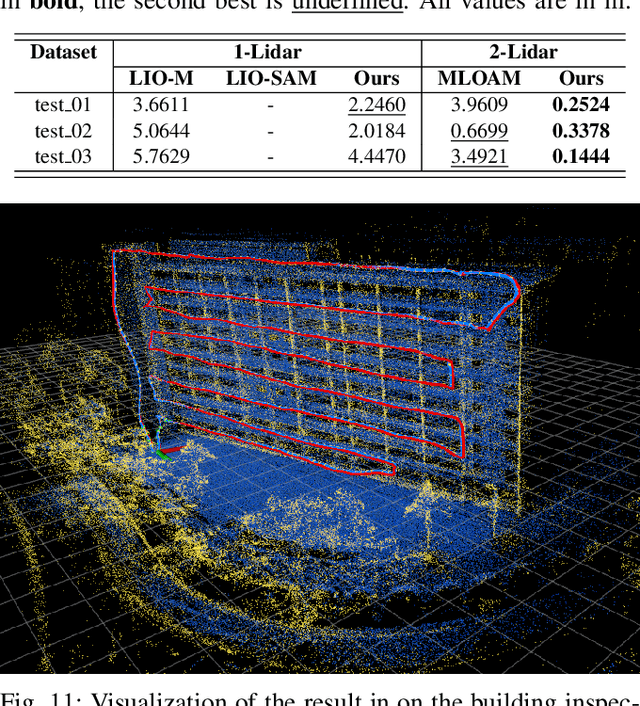

SPINS: Structure Priors aided Inertial Navigation System

Dec 28, 2020

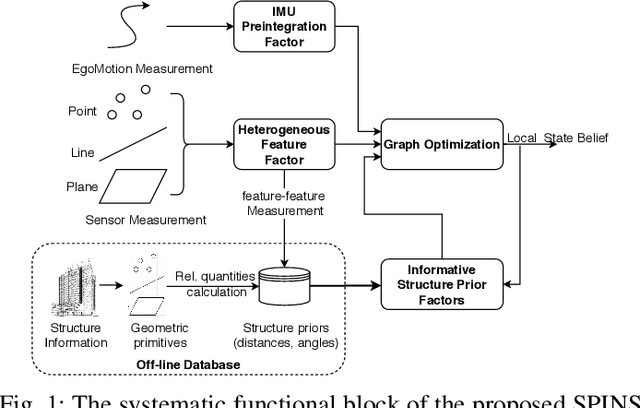

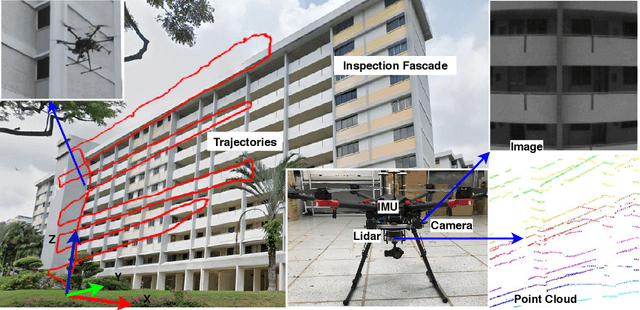

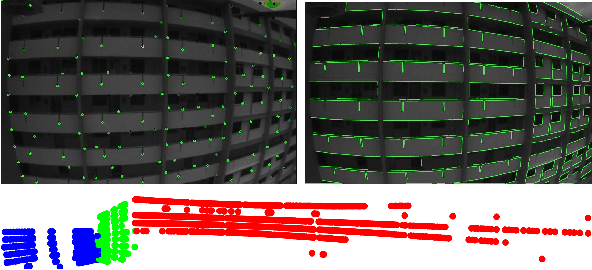

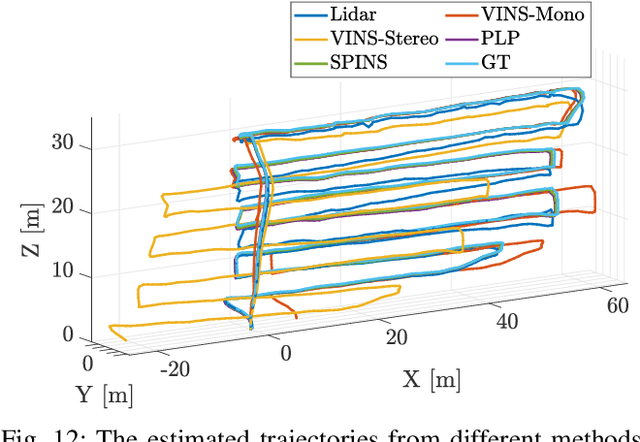

Abstract: Although Simultaneous Localization and Mapping (SLAM) has been an active research topic for decades, current state-of-the-art methods still suffer from instability or inaccuracy in many civilian environments due to feature insufficiency or inherent estimation drift. To resolve these issues, we propose a navigation system combining SLAM and prior-map-based localization. Specifically, we consider additional integration of line and plane features, which are ubiquitous and more structurally salient in civilian environments, into the SLAM to ensure feature sufficiency and localization robustness. More importantly, we incorporate general prior map information into the SLAM to restrain its drift and improve accuracy. To avoid rigorous association between prior information and local observations, we parameterize the prior knowledge as low-dimensional structural priors defined as relative distances/angles between different geometric primitives. The localization is formulated as a graph-based optimization problem that contains sliding-window-based variables and factors, including IMU, heterogeneous features, and structure priors. We also derive analytical expressions of the Jacobians of the different factors to avoid the overhead of automatic differentiation. To further alleviate the computational burden of incorporating structural prior factors, a selection mechanism based on the so-called information gain is adopted to incorporate only the most effective structure priors in the graph optimization. Finally, the proposed framework is extensively tested on synthetic data, public datasets, and, more importantly, real UAV flight data obtained from a building inspection task. The results show that the proposed scheme can effectively improve the accuracy and robustness of localization for autonomous robots in civilian applications.
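As an illustration of priors stated as relative angles and distances rather than absolute map coordinates, the residuals below compare two estimated planes against a prior angle between them and, for parallel planes, a prior separation; each residual would become one factor in the sliding-window optimization. Planes are assumed to be in Hessian form n . x + d = 0, only plane-to-plane priors are sketched, and the function names and this exact parameterization are hypothetical rather than taken from the paper.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
Plane = Tuple[Vec3, float]  # (normal n, offset d) with n . x + d = 0


def _normalize(plane: Plane) -> Plane:
    """Scale a plane so that its normal has unit length."""
    n, d = plane
    norm = math.sqrt(sum(c * c for c in n))
    return tuple(c / norm for c in n), d / norm


def angle_prior_residual(p1: Plane, p2: Plane, prior_angle: float) -> float:
    """Measured angle between the plane normals minus the prior angle (radians)."""
    (n1, _), (n2, _) = _normalize(p1), _normalize(p2)
    cos_angle = sum(a * b for a, b in zip(n1, n2))
    return math.acos(max(-1.0, min(1.0, cos_angle))) - prior_angle


def distance_prior_residual(p1: Plane, p2: Plane, prior_distance: float) -> float:
    """Separation of two (near-)parallel planes minus the prior distance."""
    (n1, d1), (n2, d2) = _normalize(p1), _normalize(p2)
    if sum(a * b for a, b in zip(n1, n2)) < 0.0:
        d2 = -d2  # flip plane 2 so both normals point the same way
    return abs(d1 - d2) - prior_distance


# Usage: a floor, a wall, and an opposite wall 3 m away
floor = ((0.0, 0.0, 1.0), 0.0)            # z = 0
wall = ((1.0, 0.0, 0.0), 0.0)             # x = 0
opposite_wall = ((-2.0, 0.0, 0.0), 6.0)   # -2x + 6 = 0, i.e. x = 3
print(angle_prior_residual(floor, wall, math.pi / 2))
print(distance_prior_residual(wall, opposite_wall, 3.0))
```

Because these residuals depend only on the relative geometry of two primitives, they can be applied without associating local observations to specific elements of a global map, which is the motivation the abstract gives for this parameterization.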
