Shubhankar Agarwal

Context Matters: Leveraging Contextual Features for Time Series Forecasting

Oct 17, 2024

Abstract:Time series forecasts are often influenced by exogenous contextual features in addition to their corresponding history. For example, in financial settings, it is hard to accurately predict a stock price without considering public sentiments and policy decisions in the form of news articles, tweets, etc. Though this is common knowledge, the current state-of-the-art (SOTA) forecasting models fail to incorporate such contextual information, owing to its heterogeneity and multimodal nature. To address this, we introduce ContextFormer, a novel plug-and-play method to surgically integrate multimodal contextual information into existing pre-trained forecasting models. ContextFormer effectively distills forecast-specific information from rich multimodal contexts, including categorical, continuous, time-varying, and even textual information, to significantly enhance the performance of existing base forecasters. ContextFormer outperforms SOTA forecasting models by up to 30% on a range of real-world datasets spanning energy, traffic, environmental, and financial domains.

Constrained Posterior Sampling: Time Series Generation with Hard Constraints

Oct 16, 2024

Abstract:Generating realistic time series samples is crucial for stress-testing models and protecting user privacy by using synthetic data. In engineering and safety-critical applications, these samples must meet certain hard constraints that are domain-specific or naturally imposed by physics or nature. Consider, for example, generating electricity demand patterns with constraints on peak demand times. This can be used to stress-test the functioning of power grids during adverse weather conditions. Existing approaches for generating constrained time series are either not scalable or degrade sample quality. To address these challenges, we introduce Constrained Posterior Sampling (CPS), a diffusion-based sampling algorithm that aims to project the posterior mean estimate into the constraint set after each denoising update. Notably, CPS scales to a large number of constraints (~100) without requiring additional training. We provide theoretical justifications highlighting the impact of our projection step on sampling. Empirically, CPS outperforms state-of-the-art methods in sample quality and similarity to real time series by around 10% and 42%, respectively, on real-world stocks, traffic, and air quality datasets.
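The project-after-each-denoising-update idea described above can be illustrated with a minimal sketch. This is not the CPS implementation: the denoiser is a hypothetical stand-in (the exact posterior mean for standard Gaussian data), the constraint set is assumed to be affine so the projection has a closed form, and the noise schedule, update rule, and all function names are illustrative choices.

```python
import numpy as np

def project_affine(x, A, b):
    """Euclidean projection of x onto {z : A z = b}; assumes A has full row rank."""
    correction = A.T @ np.linalg.solve(A @ A.T, A @ x - b)
    return x - correction

def toy_posterior_mean(x_t, alpha_bar_t):
    """Stand-in denoiser (assumption): exact E[x0 | x_t] when x0 ~ N(0, I)."""
    return np.sqrt(alpha_bar_t) * x_t

def sample_with_projection(A, b, length=24, steps=50, seed=0):
    """Deterministic, DDIM-style sampling with a projection after each denoising update."""
    rng = np.random.default_rng(seed)
    # alpha_bar rises from almost pure noise (0.01) to clean data (1.0).
    alpha_bars = np.linspace(0.01, 1.0, steps)
    x = rng.standard_normal(length)
    for i in range(steps - 1):
        a_t, a_next = alpha_bars[i], alpha_bars[i + 1]
        x0_hat = toy_posterior_mean(x, a_t)                  # posterior mean estimate
        eps_hat = (x - np.sqrt(a_t) * x0_hat) / np.sqrt(1.0 - a_t)
        x0_proj = project_affine(x0_hat, A, b)               # project into the constraint set
        x = np.sqrt(a_next) * x0_proj + np.sqrt(1.0 - a_next) * eps_hat
    return x

# Illustrative hard constraints on a 24-step series:
# total demand equals 10, and the value at step 5 equals 3.
A = np.zeros((2, 24))
A[0, :] = 1.0
A[1, 5] = 1.0
b = np.array([10.0, 3.0])
x = sample_with_projection(A, b)
```

Because the final noise level in this toy schedule is zero, the returned sample equals the last projected estimate and therefore satisfies `A @ x == b` up to floating-point error. Inequality or non-convex constraints would need a numerical projection instead of the closed form used here.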

Time Weaver: A Conditional Time Series Generation Model

Mar 05, 2024

Abstract:Imagine generating a city's electricity demand pattern based on weather, the presence of an electric vehicle, and location, which could be used for capacity planning during a winter freeze. Such real-world time series are often enriched with paired heterogeneous contextual metadata (weather, location, etc.). Current approaches to time series generation often ignore this paired metadata, and its heterogeneity poses several practical challenges in adapting existing conditional generation approaches from the image, audio, and video domains to the time series domain. To address this gap, we introduce Time Weaver, a novel diffusion-based model that leverages the heterogeneous metadata in the form of categorical, continuous, and even time-variant variables to significantly improve time series generation. Additionally, we show that naive extensions of standard evaluation metrics from the image to the time series domain are insufficient. These metrics do not penalize conditional generation approaches for their poor specificity in reproducing the metadata-specific features in the generated time series. Thus, we propose a novel evaluation metric that accurately captures the specificity of conditional generation and the realism of the generated time series. We show that Time Weaver outperforms state-of-the-art benchmarks, such as Generative Adversarial Networks (GANs), by up to 27% in downstream classification tasks on real-world energy, medical, air quality, and traffic data sets.

GPSINDy: Data-Driven Discovery of Equations of Motion

Sep 20, 2023

Abstract:In this paper, we consider the problem of discovering dynamical system models from noisy data. The presence of noise is known to be a significant problem for symbolic regression algorithms. We combine Gaussian process regression, a nonparametric learning method, with SINDy, a parametric learning approach, to identify nonlinear dynamical systems from data. The key advantages of our proposed approach are its simplicity coupled with the fact that it demonstrates improved robustness properties with noisy data over SINDy. We demonstrate our proposed approach on a Lotka-Volterra model and a unicycle dynamic model in simulation and on an NVIDIA JetRacer system using hardware data. We demonstrate improved performance over SINDy for discovering the system dynamics and predicting future trajectories.
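A rough sketch of the combination described above, Gaussian process smoothing followed by sparse regression over a library of candidate terms, is given below. It is a toy under stated assumptions, not the paper's pipeline: the test system (dx/dt = -2x), the RBF kernel and its fixed hyperparameters, the analytic differentiation of the GP posterior mean, and the sequentially thresholded least-squares solver are all illustrative choices, and the function names are hypothetical.

```python
import numpy as np

def gp_smooth(t, y, length=0.5, noise_var=1e-4):
    """GP regression with an RBF kernel: returns the posterior mean and its time derivative."""
    d = t[:, None] - t[None, :]
    K = np.exp(-0.5 * (d / length) ** 2)
    alpha = np.linalg.solve(K + noise_var * np.eye(len(t)), y)
    x_smooth = K @ alpha
    dK = -(d / length ** 2) * K          # derivative of k(t_i, t_j) with respect to t_i
    dx_smooth = dK @ alpha
    return x_smooth, dx_smooth

def stlsq(theta, dx, threshold=0.3, iters=10):
    """Sequentially thresholded least squares, the sparse regression commonly used in SINDy."""
    coef = np.linalg.lstsq(theta, dx, rcond=None)[0]
    for _ in range(iters):
        small = np.abs(coef) < threshold
        coef[small] = 0.0
        big = ~small
        if not big.any():
            break
        coef[big] = np.linalg.lstsq(theta[:, big], dx, rcond=None)[0]
    return coef

# Noisy measurements of x(t) = exp(-2 t), which satisfies dx/dt = -2 x.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 2.0, 100)
y = np.exp(-2.0 * t) + 0.01 * rng.standard_normal(t.size)

x_s, dx_s = gp_smooth(t, y)
theta = np.column_stack([np.ones_like(x_s), x_s, x_s ** 2])   # candidate library: 1, x, x^2
coef = stlsq(theta, dx_s)                                     # coefficients for [1, x, x^2]
```

The GP step replaces finite differencing of the raw measurements, which amplifies noise; the regression then only sees the smoothed state and derivative. In practice the kernel hyperparameters would be fitted to the data rather than fixed.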

Data Games: A Game-Theoretic Approach to Swarm Robotic Data Collection

Mar 07, 2023

Abstract:Fleets of networked autonomous vehicles (AVs) collect terabytes of sensory data, which is often transmitted to central servers (the "cloud") for training machine learning (ML) models. Ideally, these fleets should upload all their data, especially from rare operating contexts, in order to train robust ML models. However, this is infeasible due to prohibitive network bandwidth and data labeling costs. Instead, we propose a cooperative data sampling strategy where geo-distributed AVs collaborate to collect a diverse ML training dataset in the cloud. Since the AVs have a shared objective but minimal information about each other's local data distribution and perception model, we can naturally cast cooperative data collection as an $N$-player mathematical game. We show that our cooperative sampling strategy uses minimal information to converge to a centralized oracle policy with complete information about all AVs. Moreover, we theoretically characterize the performance benefits of our game-theoretic strategy compared to greedy sampling. Finally, we experimentally demonstrate that our method outperforms standard benchmarks by up to 21.9% on 4 perception datasets, including for autonomous driving in adverse weather conditions. Crucially, our experimental results on real-world datasets closely align with our theoretical guarantees.

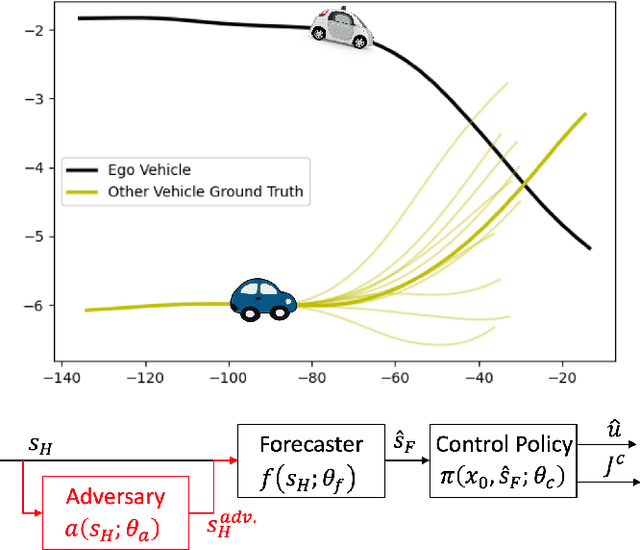

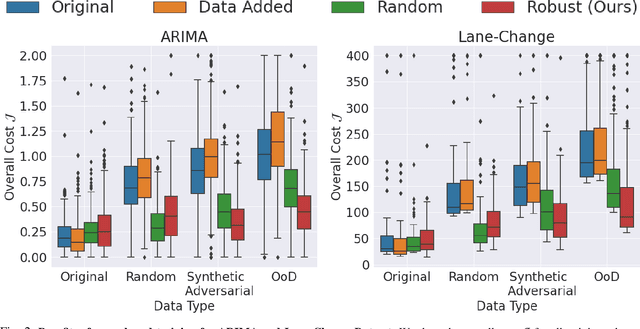

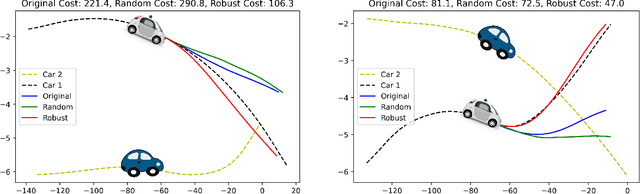

Robust Forecasting for Robotic Control: A Game-Theoretic Approach

Sep 22, 2022

Abstract:Modern robots require accurate forecasts to make optimal decisions in the real world. For example, self-driving cars need an accurate forecast of other agents' future actions to plan safe trajectories. Current methods rely heavily on historical time series to accurately predict the future. However, relying entirely on the observed history is problematic since it could be corrupted by noise, have outliers, or not completely represent all possible outcomes. To solve this problem, we propose a novel framework for generating robust forecasts for robotic control. In order to model real-world factors affecting future forecasts, we introduce the notion of an adversary, which perturbs observed historical time series to increase a robot's ultimate control cost. Specifically, we model this interaction as a zero-sum two-player game between a robot's forecaster and this hypothetical adversary. We show that our proposed game may be solved to a local Nash equilibrium using gradient-based optimization techniques. Furthermore, we show that a forecaster trained with our method performs 30.14% better on out-of-distribution real-world lane change data than baselines.
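The forecaster-versus-adversary game described above can be sketched with simultaneous gradient descent-ascent on a toy problem. Everything here is an assumption made for illustration: the forecaster is linear, the adversary's payoff is the mean squared forecast error rather than a downstream control cost, perturbations are confined to a box of radius `eps`, and the step sizes and function names are hypothetical.

```python
import numpy as np

def loss_and_grads(w, delta, H, y):
    """Mean squared forecast error on perturbed histories, with analytic gradients."""
    resid = (H + delta) @ w - y                       # one residual per sample
    loss = np.mean(resid ** 2)
    grad_w = 2.0 * (H + delta).T @ resid / len(y)     # gradient for the forecaster
    grad_delta = 2.0 * np.outer(resid, w) / len(y)    # gradient for the adversary
    return loss, grad_w, grad_delta

def train_robust(H, y, eps=0.1, lr_w=0.05, lr_delta=0.05, steps=500):
    """Zero-sum game: the forecaster minimizes the loss, the adversary maximizes it."""
    w = np.zeros(H.shape[1])
    delta = np.zeros_like(H)
    for _ in range(steps):
        _, grad_w, grad_delta = loss_and_grads(w, delta, H, y)
        w = w - lr_w * grad_w                                        # descent step
        delta = np.clip(delta + lr_delta * grad_delta, -eps, eps)    # projected ascent step
    return w, delta

# Synthetic histories (rows of H) and targets from a hypothetical linear ground truth.
rng = np.random.default_rng(0)
H = rng.standard_normal((200, 3))
w_true = np.array([0.5, -1.0, 0.25])
y = H @ w_true + 0.05 * rng.standard_normal(200)

w, delta = train_robust(H, y)
final_loss, _, _ = loss_and_grads(w, delta, H, y)
```

The clipping step keeps the adversary's perturbation inside its allowed set, which is what makes the inner maximization well posed in this toy. Gradient-based play of this kind is only expected to reach a local equilibrium in general, consistent with the abstract's claim.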

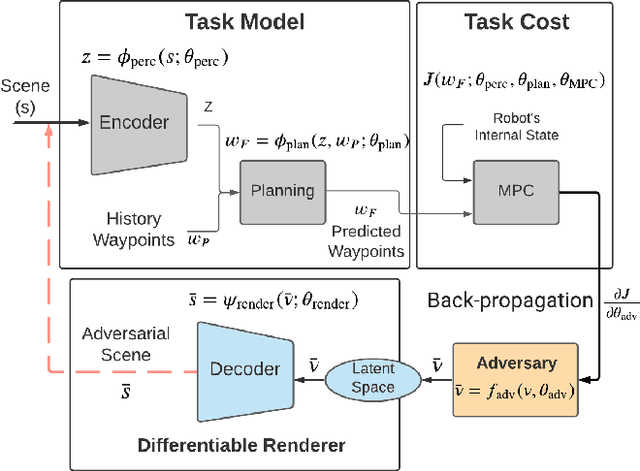

Task-Driven Data Augmentation for Vision-Based Robotic Control

Apr 26, 2022

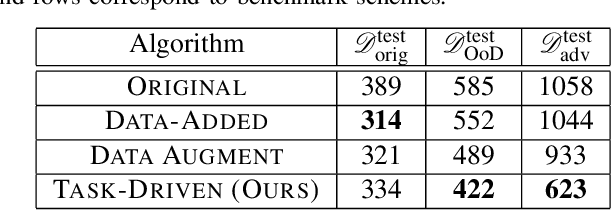

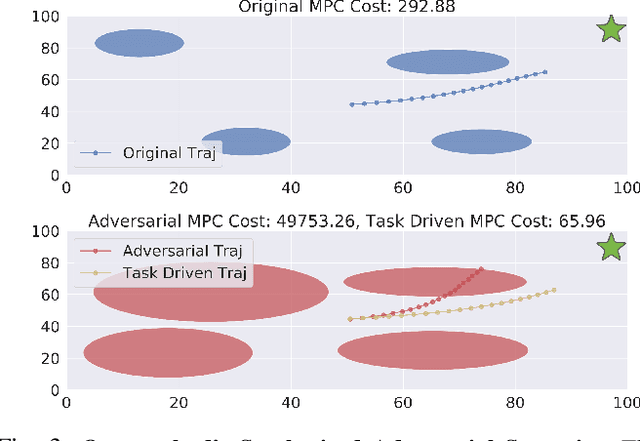

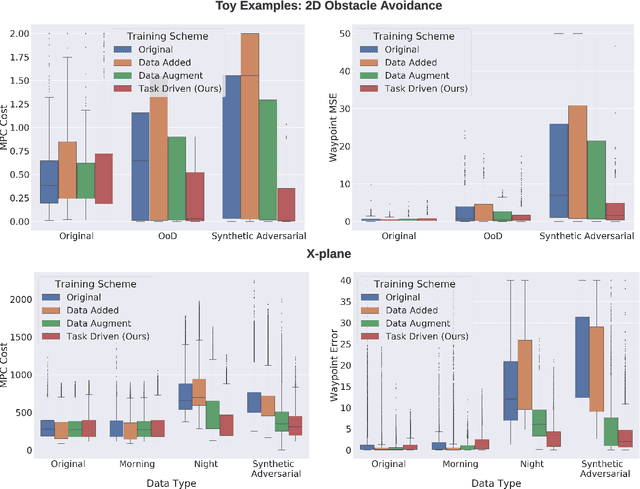

Abstract:Today's robots often interface data-driven perception and planning models with classical model-based controllers. For example, drones often use computer vision models to estimate navigation waypoints that are tracked by model predictive control (MPC). Often, such learned perception/planning models produce erroneous waypoint predictions on out-of-distribution (OoD) or even adversarial visual inputs, which increase control cost. However, today's methods to train robust perception models are largely task-agnostic: they augment a dataset using random image transformations or adversarial examples targeted at the vision model in isolation. As such, they often introduce pixel perturbations that are ultimately benign for control, while missing those that are most adversarial. In contrast to prior work that synthesizes adversarial examples for single-step vision tasks, our key contribution is to efficiently synthesize adversarial scenarios for multi-step, model-based control. To do so, we leverage differentiable MPC methods to calculate the sensitivity of a model-based controller to errors in state estimation, which in turn guides how we synthesize adversarial inputs. We show that re-training vision models on these adversarial datasets improves control performance on OoD test scenarios by up to 28.2% compared to standard task-agnostic data augmentation. Our system is tested on examples of robotic navigation and vision-based control of an autonomous air vehicle.

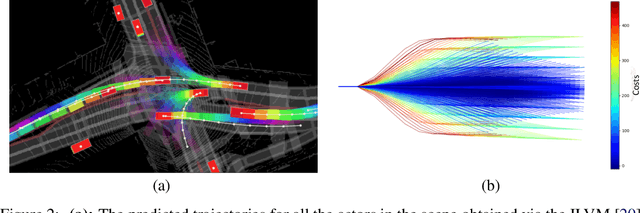

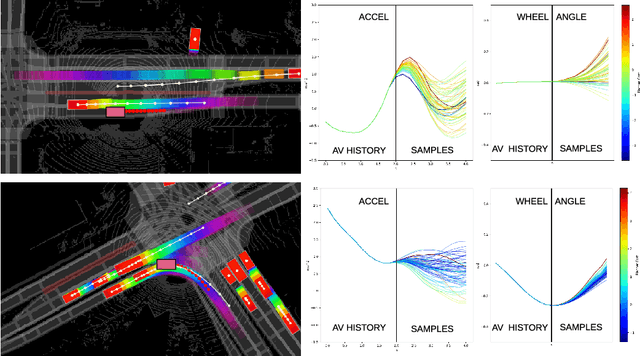

Imitative Planning using Conditional Normalizing Flow

Aug 26, 2020

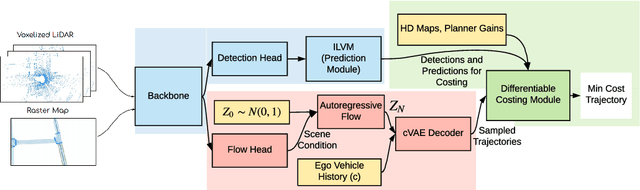

Abstract:We explore the application of normalizing flows for improving the performance of trajectory planning for autonomous vehicles (AVs). Normalizing flows provide an invertible mapping from a known prior distribution to a potentially complex, multi-modal target distribution and allow for fast sampling with exact PDF inference. By modeling a trajectory planner's cost manifold as an energy function, we learn a scene-conditioned mapping from the prior to a Boltzmann distribution over the AV control space. This mapping allows for control samples and their associated energy to be generated jointly and in parallel. We propose using neural autoregressive flow (NAF) as part of an end-to-end deep learned system that allows for utilizing sensors, map, and route information to condition the flow mapping. Finally, we demonstrate the effectiveness of our approach on real-world datasets over imitation learning (IL) and hand-constructed trajectory sampling techniques.
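For reference, the exact density evaluation mentioned above follows from the standard change-of-variables identity for an invertible map. The notation below is introduced here for illustration and is not taken from the paper: $z$ is a sample from the prior $p_Z$, $s$ is the scene context, $u = f(z; s)$ is the resulting control sample, and $E(u, s)$ is the planner's energy (cost).

$$
\log p_U(u \mid s) = \log p_Z(z) - \log \left| \det \frac{\partial f(z; s)}{\partial z} \right|, \qquad u = f(z; s)
$$

$$
p^{*}(u \mid s) \propto \exp\bigl(-E(u, s)\bigr)
$$

The first line gives the flow's density at a generated sample. The second is the Boltzmann target that the flow is trained to match, so that low-cost controls receive high probability.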
