Robert Dyro

Performance assessment of ADAS in a representative subset of critical traffic situations

Sep 25, 2024

Abstract: As a variety of automated collision prevention systems gain presence within personal vehicles, rating and differentiating the automated safety performance of car models has become increasingly important for consumers, manufacturers, and insurers. In 2023, Swiss Re and partners initiated an eight-month-long vehicle testing campaign conducted on a recognized UNECE type approval authority and Euro NCAP accredited proving ground in Germany. The campaign exposed twelve mass-produced vehicle models and one prototype vehicle fitted with collision prevention systems to a selection of safety-critical traffic scenarios representative of the United States and European Union accident landscapes. In this paper, we compare and evaluate the relative safety performance of these thirteen collision prevention systems (hardware and software stack) as demonstrated by this testing campaign. We first introduce a new scoring system which represents a test system's predicted impact on overall real-world collision frequency and reduction of collision impact energy, weighted based on the real-world relevance of the test scenario. Next, we introduce a novel metric that quantifies the realism of the protocol and confirm that our test protocol is a plausible representation of real-world driving. Finally, we find that the prototype system in its pre-release state outperforms the mass-produced (post-consumer-release) vehicles in the majority of the tested scenarios on the test track.

Realistic Extreme Behavior Generation for Improved AV Testing

Sep 16, 2024

Abstract: This work introduces a framework to diagnose the strengths and shortcomings of Autonomous Vehicle (AV) collision avoidance technology with synthetic yet realistic potential collision scenarios adapted from real-world, collision-free data. Our framework generates counterfactual collisions with diverse crash properties, e.g., crash angle and velocity, between an adversary and a target vehicle by adding perturbations to the adversary's predicted trajectory from a learned AV behavior model. Our main contribution is to ground these adversarial perturbations in realistic behavior as defined through the lens of data-alignment in the behavior model's parameter space. Then, we cluster these synthetic counterfactuals to identify plausible and representative collision scenarios to form the basis of a test suite for downstream AV system evaluation. We demonstrate our framework using two state-of-the-art behavior prediction models as sources of realistic adversarial perturbations, and show that our scenario clustering evokes interpretable failure modes from a baseline AV policy under evaluation.

Learning Deep SDF Maps Online for Robot Navigation and Exploration

Aug 02, 2022

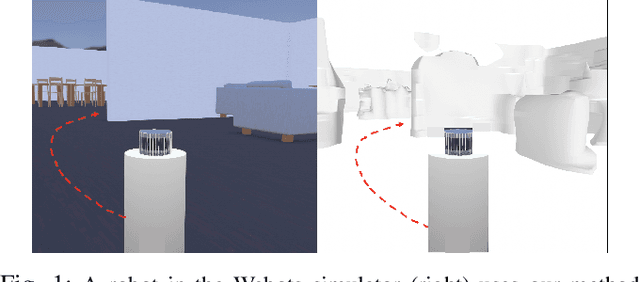

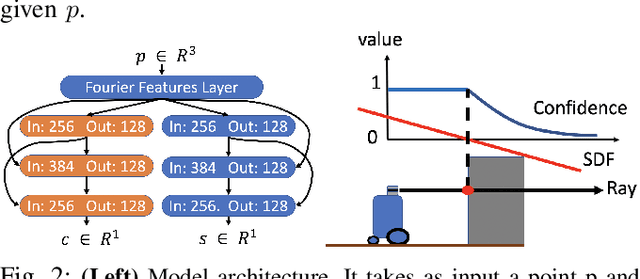

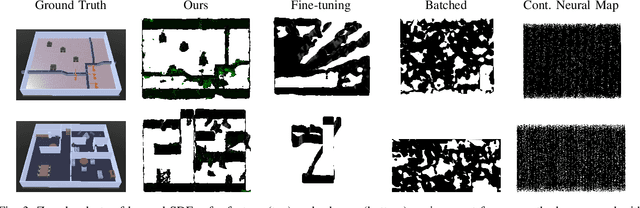

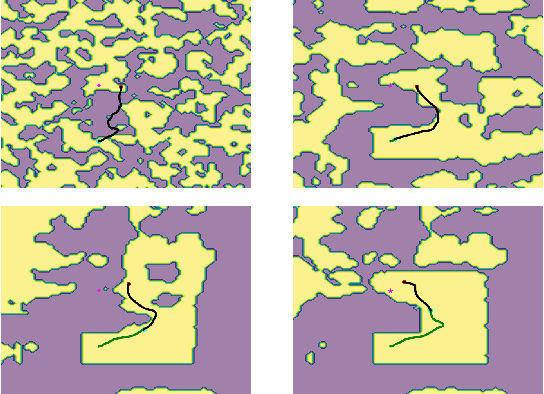

Abstract: We propose an algorithm to (i) learn online a deep signed distance function (SDF) with a LiDAR-equipped robot to represent the 3D environment geometry, and (ii) plan collision-free trajectories given this deep learned map. Our algorithm takes a stream of incoming LiDAR scans and continually optimizes a neural network to represent the SDF of the environment in its current vicinity. When the SDF network quality saturates, we cache a copy of the network, along with a learned confidence metric, and initialize a new SDF network to continue mapping new regions of the environment. We then combine all the cached local SDFs through a confidence-weighted scheme to give a global SDF for planning. For planning, we make use of a sequential convex model predictive control (MPC) algorithm. The MPC planner optimizes a dynamically feasible trajectory for the robot while enforcing no collisions with obstacles mapped in the global SDF. We show that our online mapping algorithm produces higher-quality maps than existing methods for online SDF training. In the Webots simulator, we further showcase the combined mapper and planner running online -- navigating autonomously and without collisions in an unknown environment.
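To make the idea of a confidence-weighted global SDF concrete, here is a minimal sketch. It assumes the simplest possible scheme, a confidence-weighted average of the local SDF values at a query point; the abstract does not specify the actual weighting, so this is an illustration rather than the paper's method. The analytic sphere SDFs and Gaussian confidences stand in for the learned networks, and all function names are hypothetical.

```python
import math


def sphere_sdf(center, radius):
    """Stand-in for a cached local SDF network: signed distance to a sphere."""
    def sdf(point):
        return math.dist(point, center) - radius
    return sdf


def gaussian_confidence(center, scale):
    """Stand-in for a learned confidence metric: high near the map's own region."""
    def confidence(point):
        return math.exp(-(math.dist(point, center) / scale) ** 2)
    return confidence


def blend_sdfs(point, local_maps, min_total_confidence=1e-12):
    """Confidence-weighted average of local SDF values (an assumed scheme).

    local_maps is a list of (sdf, confidence) callables.
    Returns None when no cached map is confident about the query point.
    """
    weighted_sum = 0.0
    total_confidence = 0.0
    for sdf, confidence in local_maps:
        weight = confidence(point)
        weighted_sum += weight * sdf(point)
        total_confidence += weight
    if total_confidence < min_total_confidence:
        return None
    return weighted_sum / total_confidence


# Two hypothetical local maps covering different regions of the environment.
map_a = (sphere_sdf((0.0, 0.0, 0.0), 1.0), gaussian_confidence((0.0, 0.0, 0.0), 2.0))
map_b = (sphere_sdf((10.0, 0.0, 0.0), 1.0), gaussian_confidence((10.0, 0.0, 0.0), 2.0))

query = (1.5, 0.0, 0.0)
global_distance = blend_sdfs(query, [map_a, map_b])
```

Near the first map's region, the blended value is dominated by that map's SDF, which is the behavior one would want from any confidence-weighted combination.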

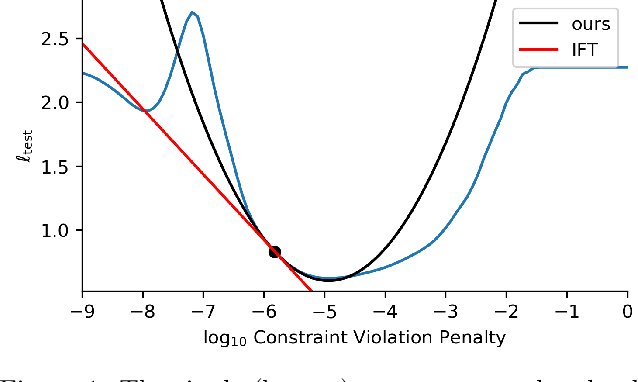

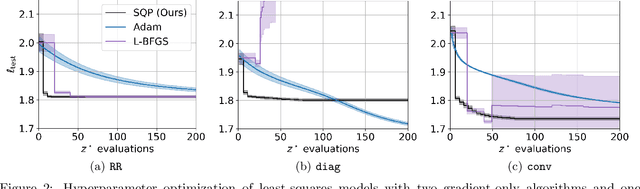

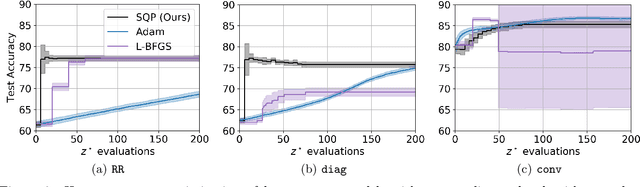

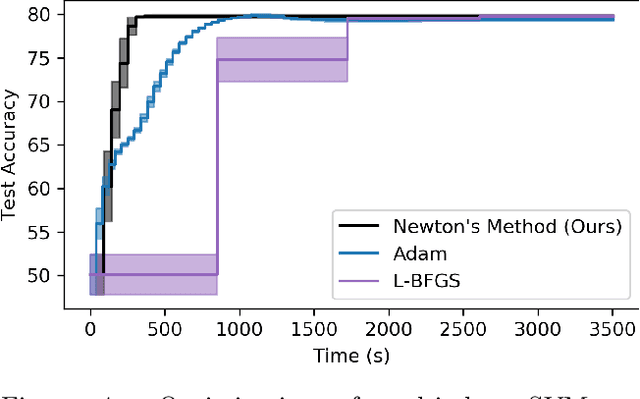

Second-Order Sensitivity Analysis for Bilevel Optimization

May 04, 2022

Abstract: In this work we derive a second-order approach to bilevel optimization, a type of mathematical programming in which the solution to a parameterized optimization problem (the "lower" problem) is itself to be optimized (in the "upper" problem) as a function of the parameters. Many existing approaches to bilevel optimization employ first-order sensitivity analysis, based on the implicit function theorem (IFT), for the lower problem to derive a gradient of the lower problem solution with respect to its parameters; this IFT gradient is then used in a first-order optimization method for the upper problem. This paper extends this sensitivity analysis to provide second-order derivative information of the lower problem (which we call the IFT Hessian), enabling the usage of faster-converging second-order optimization methods at the upper level. Our analysis shows that (i) much of the computation already used to produce the IFT gradient can be reused for the IFT Hessian, (ii) error bounds derived for the IFT gradient readily apply to the IFT Hessian, and (iii) computing IFT Hessians can significantly reduce overall computation by extracting more information from each lower level solve. We corroborate our findings and demonstrate the broad range of applications of our method by applying it to problem instances of least squares hyperparameter auto-tuning, multi-class SVM auto-tuning, and inverse optimal control.

* 16 pages, 6 figures
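As a rough illustration of the IFT gradient and IFT Hessian, the sketch below works through a scalar toy problem. The problem itself (a one-dimensional ridge regression with regularization weight `p`, and a quadratic upper loss) and all names and constants are assumptions chosen for readability; they are not taken from the paper. The lower problem's optimality condition is k(z, p) = a(az - b) + pz = 0. Differentiating it once gives z' = -k_p / k_z, and differentiating it twice gives k_z z'' + 2 k_zp z' = 0 for this problem (k_zz = k_pp = 0, k_zp = 1), so the same k_z is reused for the second derivative.

```python
def lower_solution(p, a=2.0, b=1.0):
    """Minimizer over z of 0.5*(a*z - b)**2 + 0.5*p*z**2 (closed form)."""
    return a * b / (a * a + p)


def upper_loss(z, c=1.0, d=0.3):
    """Upper-level objective evaluated at the lower-level solution."""
    return 0.5 * (c * z - d) ** 2


def ift_gradient_and_hessian(p, a=2.0, b=1.0, c=1.0, d=0.3):
    """First and second derivative of upper_loss(lower_solution(p)) w.r.t. p."""
    z = lower_solution(p, a, b)
    k_z = a * a + p          # dk/dz for k(z, p) = a*(a*z - b) + p*z
    k_p = z                  # dk/dp
    dz = -k_p / k_z          # IFT: first-order sensitivity of z*(p)
    d2z = -2.0 * dz / k_z    # second-order sensitivity, reusing k_z
    f_z = c * (c * z - d)    # derivative of the upper loss w.r.t. z
    f_zz = c * c             # second derivative of the upper loss w.r.t. z
    gradient = f_z * dz
    hessian = f_zz * dz ** 2 + f_z * d2z
    return gradient, hessian


def total_loss(p):
    return upper_loss(lower_solution(p))


# Compare against central finite differences at a sample parameter value.
p0, h = 0.5, 1e-4
gradient, hessian = ift_gradient_and_hessian(p0)
fd_gradient = (total_loss(p0 + h) - total_loss(p0 - h)) / (2 * h)
fd_hessian = (total_loss(p0 + h) - 2 * total_loss(p0) + total_loss(p0 - h)) / h ** 2
```

In higher dimensions, the division by `k_z` becomes a linear solve with the lower problem's Hessian, which is where reusing a factorization between the gradient and Hessian computations would pay off.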

Particle MPC for Uncertain and Learning-Based Control

Apr 13, 2021

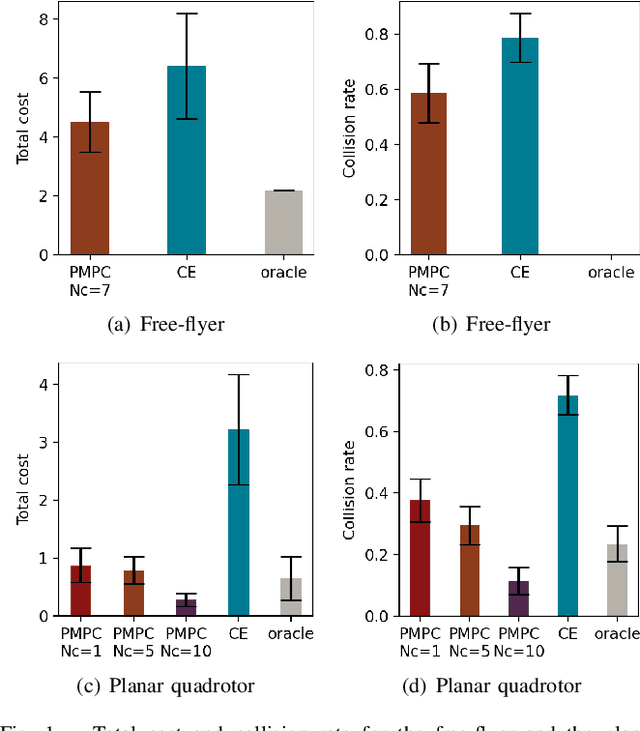

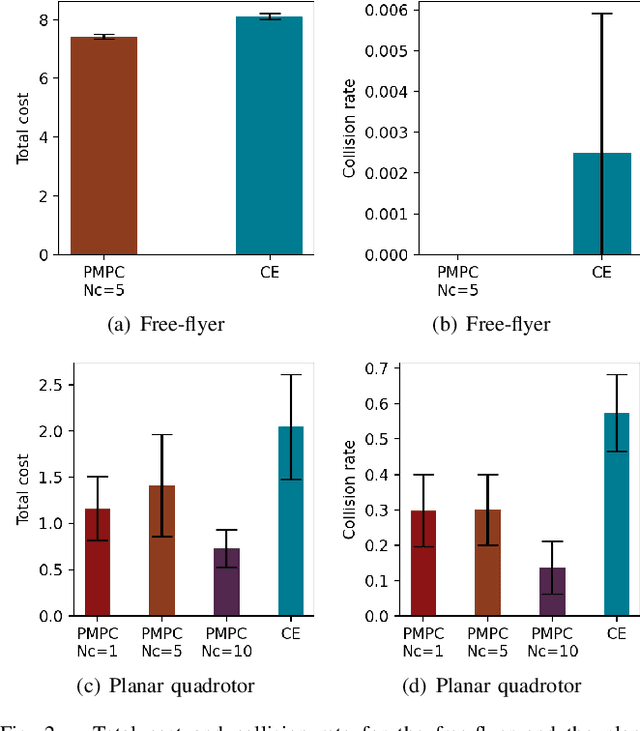

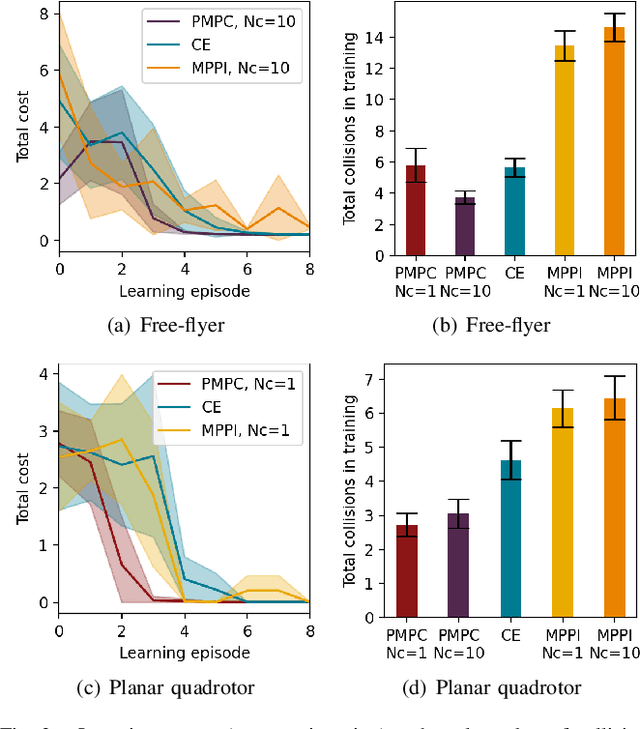

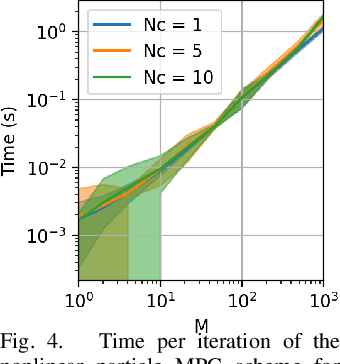

Abstract: As robotic systems move from highly structured environments to open worlds, incorporating uncertainty from dynamics learning or state estimation into the control pipeline is essential for robust performance. In this paper we present a nonlinear particle model predictive control (PMPC) approach to control under uncertainty, which directly incorporates any particle-based uncertainty representation, such as those common in robotics. Our approach builds on scenario methods for MPC, but in contrast to existing approaches, which either constrain all or only the first timestep to share actions across scenarios, we investigate the impact of a *partial consensus horizon*. Implementing this optimization for nonlinear dynamics by leveraging sequential convex optimization, our approach yields an efficient framework that can be tuned to the particular information gain dynamics of a system to mitigate both over-conservatism and over-optimism. We investigate our approach for two robotic systems across three problem settings: time-varying, partially observed dynamics; sensing uncertainty; and model-based reinforcement learning, and show that our approach improves performance over baselines in all settings.
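The partial consensus horizon can be pictured as a choice of which control actions are shared across particles. The sketch below is only a structural illustration under the assumption that the first `consensus_horizon` timesteps use one shared action and every later timestep has a separate action per particle; the function names are hypothetical and no optimization is performed.

```python
def count_action_variables(horizon, num_particles, consensus_horizon):
    """Number of scalar action variables for a single-input system."""
    if not 0 <= consensus_horizon <= horizon:
        raise ValueError("consensus_horizon must lie in [0, horizon]")
    shared = consensus_horizon
    per_particle = num_particles * (horizon - consensus_horizon)
    return shared + per_particle


def assemble_action_plans(shared_prefix, particle_tails):
    """One full action sequence per particle: shared prefix, then its own tail."""
    return [list(shared_prefix) + list(tail) for tail in particle_tails]


# Horizon of 5 steps, 3 particles, consensus over the first 2 steps.
shared_prefix = [0.1, 0.2]
particle_tails = [
    [0.3, 0.3, 0.3],
    [0.0, -0.1, -0.2],
    [0.5, 0.4, 0.3],
]
plans = assemble_action_plans(shared_prefix, particle_tails)
num_variables = count_action_variables(horizon=5, num_particles=3, consensus_horizon=2)
```

Setting `consensus_horizon` equal to the full horizon forces every particle to share all actions, while setting it to 1 shares only the first action; these are the two extremes the abstract contrasts with the partial case.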
