Ram Vasudevan

Can't Touch This: Real-Time, Safe Motion Planning and Control for Manipulators Under Uncertainty

Jan 30, 2023

Abstract:A key challenge to the widespread deployment of robotic manipulators is the need to ensure safety in arbitrary environments while generating new motion plans in real-time. In particular, one must ensure that a manipulator does not collide with obstacles, collide with itself, or exceed its joint torque limits. This challenge is compounded by the need to account for uncertainty in the mass and inertia of manipulated objects, and potentially the robot itself. The present work addresses this challenge by proposing Autonomous Robust Manipulation via Optimization with Uncertainty-aware Reachability (ARMOUR), a provably-safe, receding-horizon trajectory planner and tracking controller framework for serial link manipulators. ARMOUR works by first constructing a robust, passivity-based controller that is proven to enable a manipulator to track desired trajectories with bounded error despite uncertain dynamics. Next, ARMOUR uses a novel variation on the Recursive Newton-Euler Algorithm (RNEA) to compute the set of all possible inputs required to track any trajectory within a continuum of desired trajectories. Finally, the method computes an over-approximation to the swept volume of the manipulator; this enables one to formulate an optimization problem, which can be solved in real-time, to synthesize provably-safe motion. The proposed method is compared to state of the art methods and demonstrated on a variety of challenging manipulation examples in simulation and on real hardware, such as maneuvering a dumbbell with uncertain mass around obstacles.

REFINE: Reachability-based Trajectory Design using Robust Feedback Linearization and Zonotopes

Nov 22, 2022

Abstract:Performing real-time receding horizon motion planning for autonomous vehicles while providing safety guarantees remains difficult. This is because existing methods to accurately predict ego vehicle behavior under a chosen controller use online numerical integration that requires a fine time discretization and thereby adversely affects real-time performance. To address this limitation, several recent papers have proposed to apply offline reachability analysis to conservatively predict the behavior of the ego vehicle. This reachable set can be constructed by utilizing a simplified model whose behavior is assumed a priori to conservatively bound the dynamics of a full-order model. However, guaranteeing that one satisfies this assumption is challenging. This paper proposes a framework named REFINE to overcome the limitations of these existing approaches. REFINE utilizes a parameterized robust controller that partially linearizes the vehicle dynamics even in the presence of modeling error. Zonotope-based reachability analysis is then performed on the closed-loop, full-order vehicle dynamics to compute the corresponding control-parameterized, over-approximate Forward Reachable Sets (FRS). Because reachability analysis is applied to the full-order model, the potential conservativeness introduced by using a simplified model is avoided. The pre-computed, control-parameterized FRS is then used online in an optimization framework to ensure safety. The proposed method is compared to several state of the art methods during a simulation-based evaluation on a full-size vehicle model and is evaluated on a 1/10th race car robot in real hardware testing. In contrast to existing methods, REFINE is shown to enable the vehicle to safely navigate itself through complex environments.
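As a rough illustration of the zonotope-based reachability idea mentioned in the abstract above, the sketch below propagates a zonotope through one step of a discrete-time linear system with bounded disturbance and checks its bounding box against an obstacle. This is not REFINE's implementation: the linear dynamics, the `Zonotope` class, and every function name here are assumptions made for illustration only.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def mat_vec(A: Matrix, x: Vector) -> Vector:
    """Multiply matrix A by vector x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]


@dataclass
class Zonotope:
    """Set {center + sum_i beta_i * generators[i], with each beta_i in [-1, 1]}."""
    center: Vector
    generators: List[Vector]

    def linear_map(self, A: Matrix) -> "Zonotope":
        # A linear map acts on the center and on each generator separately.
        return Zonotope(mat_vec(A, self.center),
                        [mat_vec(A, g) for g in self.generators])

    def minkowski_sum(self, other: "Zonotope") -> "Zonotope":
        # Centers add; generator lists are concatenated.
        center = [a + b for a, b in zip(self.center, other.center)]
        return Zonotope(center, self.generators + other.generators)

    def interval_hull(self) -> Tuple[Vector, Vector]:
        # Tightest axis-aligned box containing the zonotope.
        radius = [sum(abs(g[i]) for g in self.generators)
                  for i in range(len(self.center))]
        lower = [c - r for c, r in zip(self.center, radius)]
        upper = [c + r for c, r in zip(self.center, radius)]
        return lower, upper


def step_reachable(Z: Zonotope, A: Matrix, W: Zonotope) -> Zonotope:
    """One step of x_next = A x + w with x in Z and w in W (hypothetical linear model)."""
    return Z.linear_map(A).minkowski_sum(W)


def boxes_overlap(lo1: Vector, hi1: Vector, lo2: Vector, hi2: Vector) -> bool:
    """True if two axis-aligned boxes intersect."""
    return all(l1 <= h2 and l2 <= h1
               for l1, h1, l2, h2 in zip(lo1, hi1, lo2, hi2))


# Example: a 2D state (position, velocity) with a small initial uncertainty.
Z0 = Zonotope([0.0, 0.0], [[0.1, 0.0], [0.0, 0.1]])
A = [[1.0, 0.1], [0.0, 1.0]]
W = Zonotope([0.0, 0.0], [[0.01, 0.0], [0.0, 0.01]])
Z1 = step_reachable(Z0, A, W)
lower, upper = Z1.interval_hull()
# If the bounding box misses the obstacle, the reachable set does too.
is_safe = not boxes_overlap(lower, upper, [1.0, 1.0], [2.0, 2.0])
```

The bounding-box check is conservative: it may flag a collision that the zonotope itself avoids, but it never misses one, which is the property an over-approximate reachable set is meant to provide.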

LiSnowNet: Real-time Snow Removal for LiDAR Point Cloud

Nov 18, 2022

Abstract:LiDARs have been widely adopted in modern self-driving vehicles, providing 3D information of the scene and surrounding objects. However, adverse weather conditions still pose significant challenges to LiDARs since point clouds captured during snowfall can easily be corrupted. The resulting noisy point clouds degrade downstream tasks such as mapping. Existing works in de-noising point clouds corrupted by snow are based on nearest-neighbor search, and thus do not scale well with modern LiDARs which usually capture $100k$ or more points at 10Hz. In this paper, we introduce an unsupervised de-noising algorithm, LiSnowNet, running 52$\times$ faster than the state-of-the-art methods while achieving superior performance in de-noising. Unlike previous methods, the proposed algorithm is based on a deep convolutional neural network and can be easily deployed to hardware accelerators such as GPUs. In addition, we demonstrate how to use the proposed method for mapping even with corrupted point clouds.

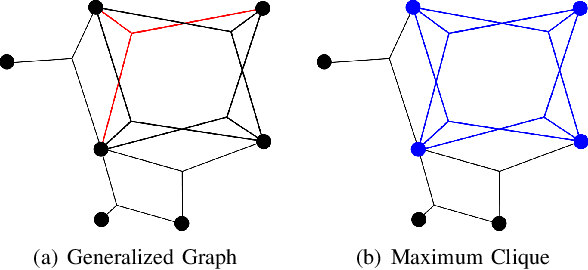

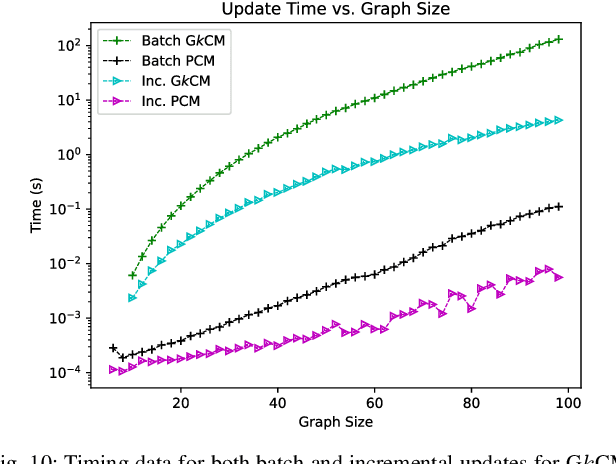

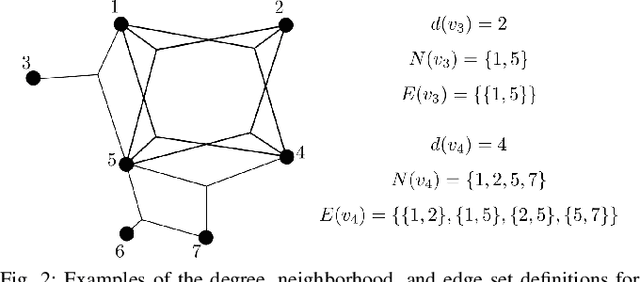

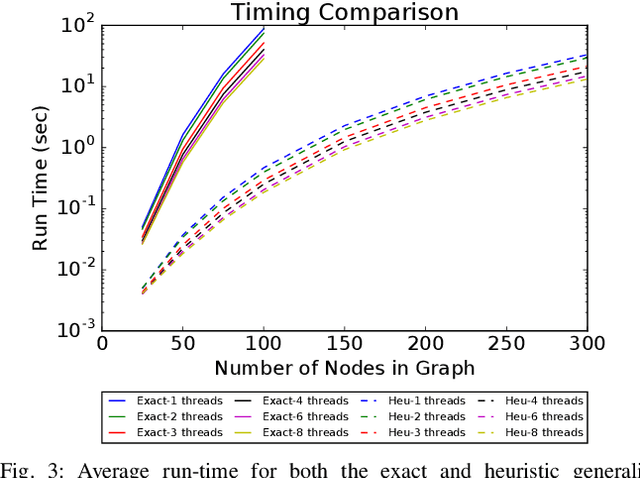

Group-$k$ Consistent Measurement Set Maximization for Robust Outlier Detection

Sep 06, 2022

Abstract:This paper presents a method for the robust selection of measurements in a simultaneous localization and mapping (SLAM) framework. Existing methods check consistency or compatibility on a pairwise basis; however, many measurement types are not sufficiently constrained in a pairwise scenario to determine if either measurement is inconsistent with the other. This paper presents group-$k$ consistency maximization (G$k$CM), which estimates the largest set of measurements that is internally group-$k$ consistent. Solving for the largest set of group-$k$ consistent measurements can be formulated as an instance of the maximum clique problem on generalized graphs and can be solved by adapting current methods. This paper evaluates the performance of G$k$CM using simulated data and compares it to pairwise consistency maximization (PCM) presented in previous work.
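To make the notion of a group-$k$ consistent set concrete, here is a minimal brute-force sketch: it searches for the largest subset of measurements in which every group of $k$ passes a user-supplied consistency check. It is not the G$k$CM algorithm from the paper, which adapts maximum clique solvers on generalized graphs; the exhaustive search, the function name, and the toy consistency test are all assumptions for illustration, and the search is exponential in the number of measurements.

```python
from itertools import combinations
from typing import Callable, List, Sequence, Tuple


def largest_group_k_consistent_set(
    n: int,
    k: int,
    is_consistent: Callable[[Tuple[int, ...]], bool],
) -> List[int]:
    """Return indices of a largest subset in which every k-group is consistent.

    Exhaustive search from the largest candidate size downward. Returns an
    empty list if no subset of size >= k qualifies.
    """
    for size in range(n, k - 1, -1):
        for subset in combinations(range(n), size):
            if all(is_consistent(group) for group in combinations(subset, k)):
                return list(subset)
    return []


# Toy example: scalar measurements, where a group of three is "consistent"
# if its spread stays below a threshold (a hypothetical check).
values: Sequence[float] = [1.0, 1.1, 0.9, 5.0, 1.05]


def spread_check(group: Tuple[int, ...]) -> bool:
    chosen = [values[i] for i in group]
    return max(chosen) - min(chosen) <= 0.3


inliers = largest_group_k_consistent_set(len(values), 3, spread_check)
```

With $k = 2$ the same search reduces to the pairwise case, which corresponds to an ordinary maximum clique problem.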

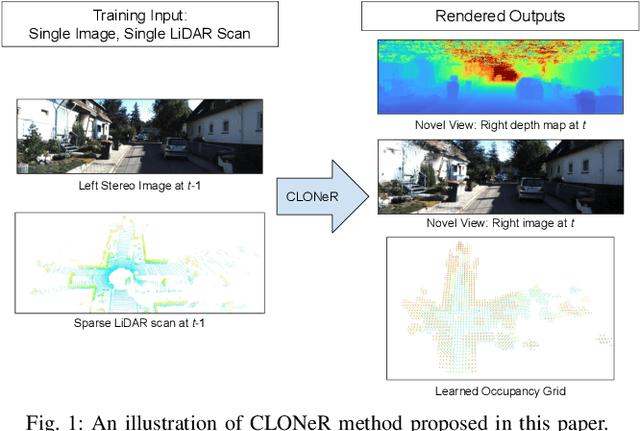

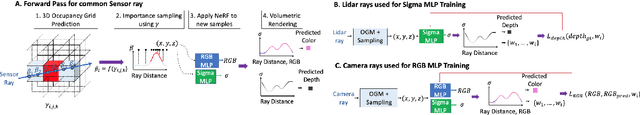

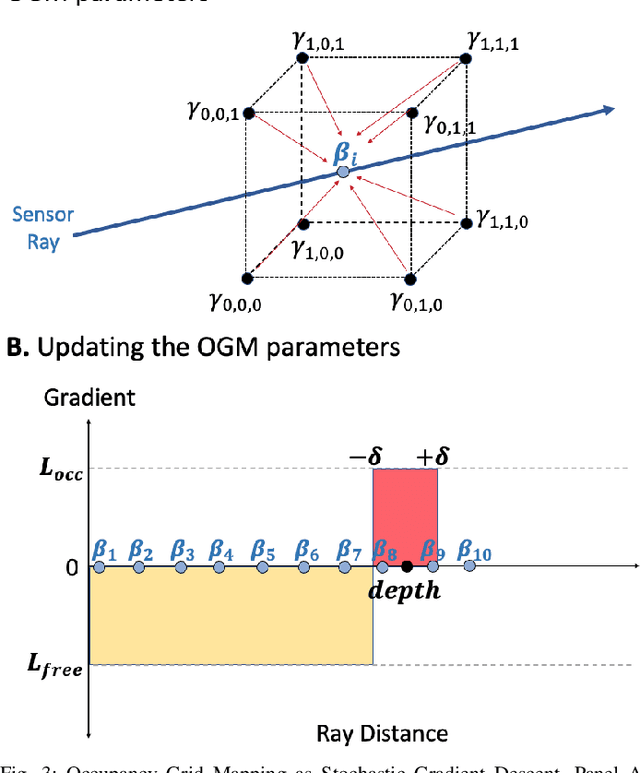

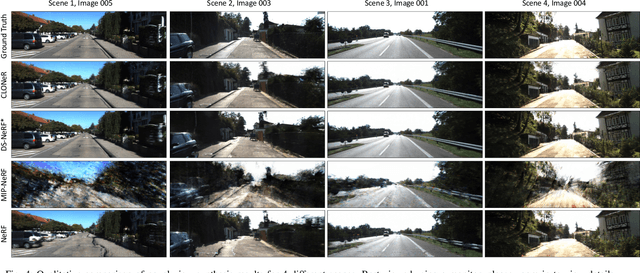

CLONeR: Camera-Lidar Fusion for Occupancy Grid-aided Neural Representations

Sep 06, 2022

Abstract:Recent advances in neural radiance fields (NeRFs) achieve state-of-the-art novel view synthesis and facilitate dense estimation of scene properties. However, NeRFs often fail for large, unbounded scenes that are captured under very sparse views with the scene content concentrated far away from the camera, as is typical for field robotics applications. In particular, NeRF-style algorithms perform poorly: (1) when there are insufficient views with little pose diversity, (2) when scenes contain saturation and shadows, and (3) when finely sampling large unbounded scenes with fine structures becomes computationally intensive. This paper proposes CLONeR, which significantly improves upon NeRF by allowing it to model large outdoor driving scenes that are observed from sparse input sensor views. This is achieved by decoupling occupancy and color learning within the NeRF framework into separate Multi-Layer Perceptrons (MLPs) trained using LiDAR and camera data, respectively. In addition, this paper proposes a novel method to build differentiable 3D Occupancy Grid Maps (OGM) alongside the NeRF model, and leverage this occupancy grid for improved sampling of points along a ray for volumetric rendering in metric space. Through extensive quantitative and qualitative experiments on scenes from the KITTI dataset, this paper demonstrates that the proposed method outperforms state-of-the-art NeRF models on both novel view synthesis and dense depth prediction tasks when trained on sparse input data.

BiPOCO: Bi-Directional Trajectory Prediction with Pose Constraints for Pedestrian Anomaly Detection

Jul 05, 2022

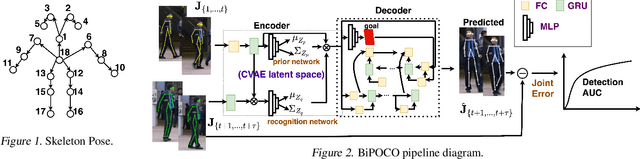

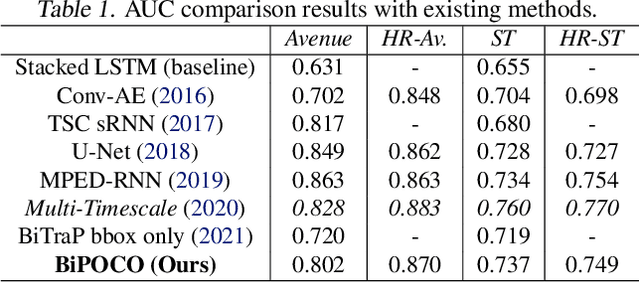

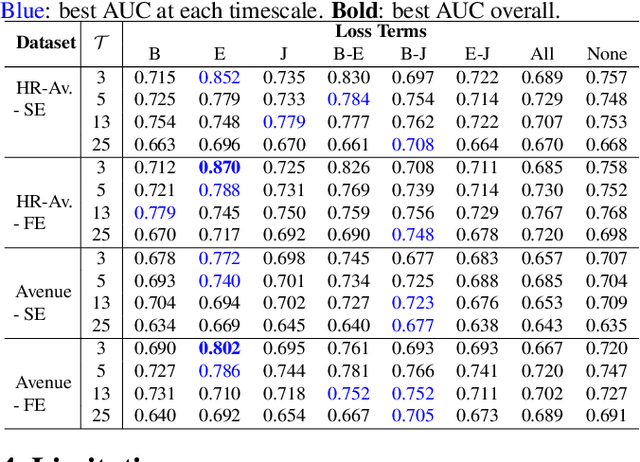

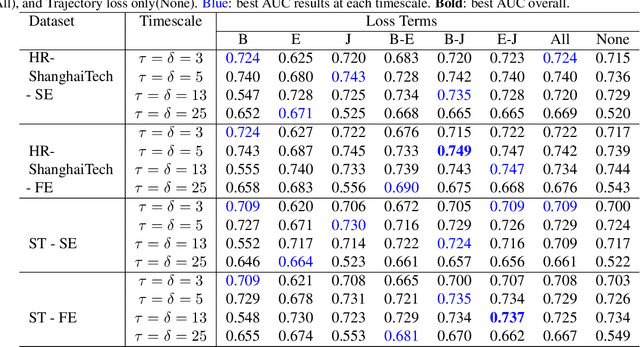

Abstract:We present BiPOCO, a Bi-directional trajectory predictor with POse COnstraints, for detecting anomalous activities of pedestrians in videos. In contrast to prior work based on feature reconstruction, our work identifies pedestrian anomalous events by forecasting their future trajectories and comparing the predictions with their expectations. We introduce a set of novel compositional pose-based losses with our predictor and leverage prediction errors of each body joint for pedestrian anomaly detection. Experimental results show that our BiPOCO approach can detect pedestrian anomalous activities with a high detection rate (up to 87.0%) and incorporating pose constraints helps distinguish normal and anomalous poses in prediction. This work extends current literature of using prediction-based methods for anomaly detection and can benefit safety-critical applications such as autonomous driving and surveillance. Code is available at https://github.com/akanuasiegbu/BiPOCO.

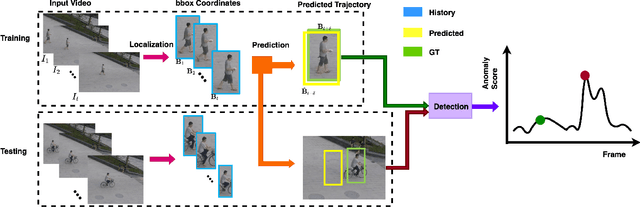

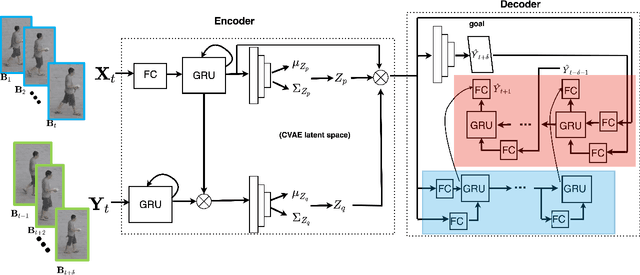

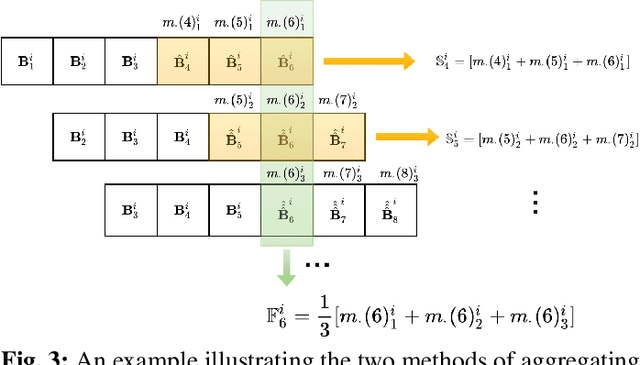

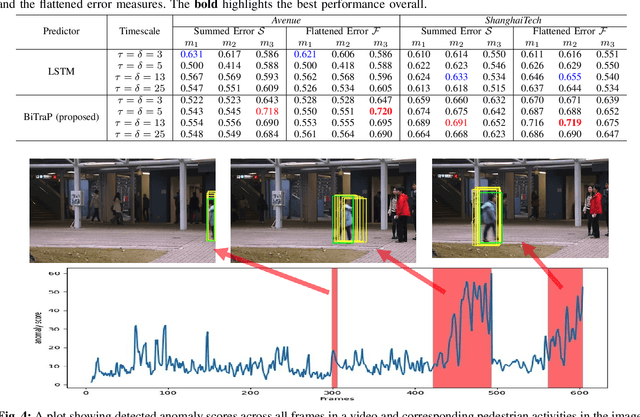

Leveraging Trajectory Prediction for Pedestrian Video Anomaly Detection

Jul 05, 2022

Abstract:Video anomaly detection is a core problem in vision. Correctly detecting and identifying anomalous behaviors in pedestrians from video data will enable safety-critical applications such as surveillance, activity monitoring, and human-robot interaction. In this paper, we propose to leverage trajectory localization and prediction for unsupervised pedestrian anomaly event detection. Unlike previous reconstruction-based approaches, our proposed framework relies on the prediction errors of normal and abnormal pedestrian trajectories to detect anomalies spatially and temporally. We present experimental results on real-world benchmark datasets on varying timescales and show that our proposed trajectory-predictor-based anomaly detection pipeline is effective and efficient at identifying anomalous activities of pedestrians in videos. Code will be made available at https://github.com/akanuasiegbu/Leveraging-Trajectory-Prediction-for-Pedestrian-Video-Anomaly-Detection.

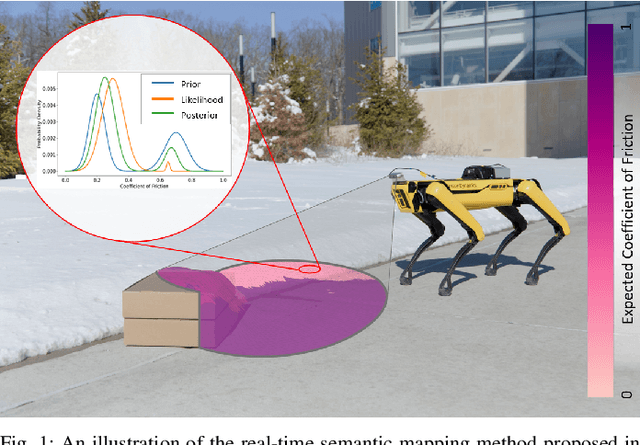

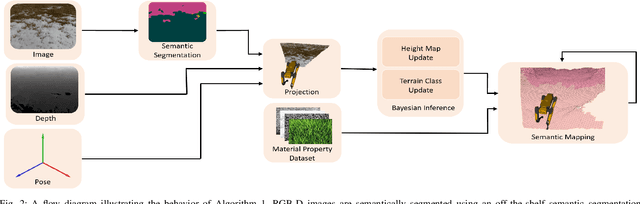

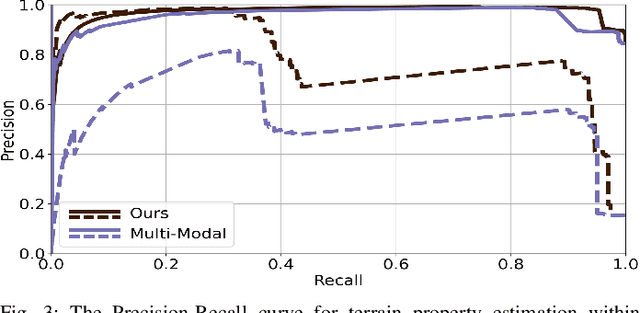

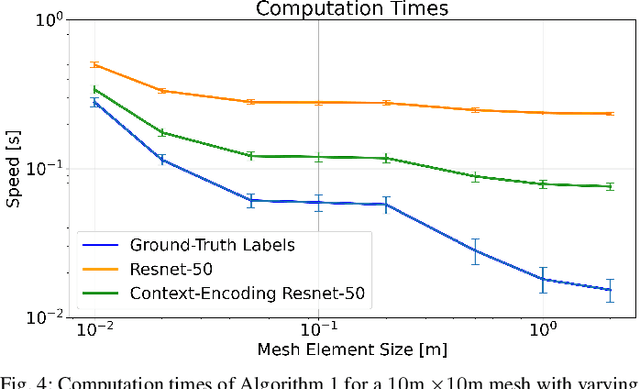

These Maps Are Made For Walking: Real-Time Terrain Property Estimation for Mobile Robots

May 25, 2022

Abstract:The equations of motion governing mobile robots are dependent on terrain properties such as the coefficient of friction, and contact model parameters. Estimating these properties is thus essential for robotic navigation. Ideally any map estimating terrain properties should run in real time, mitigate sensor noise, and provide probability distributions of the aforementioned properties, thus enabling risk-mitigating navigation and planning. This paper addresses these needs and proposes a Bayesian inference framework for semantic mapping which recursively estimates both the terrain surface profile and a probability distribution for terrain properties using data from a single RGB-D camera. The proposed framework is evaluated in simulation against other semantic mapping methods and is shown to outperform these state-of-the-art methods in terms of correctly estimating simulated ground-truth terrain properties when evaluated using a precision-recall curve and the Kullback-Leibler divergence test. Additionally, the proposed method is deployed on a physical legged robotic platform in both indoor and outdoor environments, and we show our method correctly predicts terrain properties in both cases. The proposed framework runs in real-time and includes a ROS interface for easy integration.
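The recursive estimation described above can be pictured with a generic discrete Bayes update for a single map cell: each new observation multiplies the current belief over terrain classes by a likelihood and renormalizes. This is a textbook sketch rather than the paper's framework; the two terrain classes, the likelihood values, and the function names are assumptions for illustration.

```python
from typing import List, Sequence


def bayes_update(prior: Sequence[float], likelihood: Sequence[float]) -> List[float]:
    """Posterior over terrain classes: proportional to prior times likelihood."""
    unnormalized = [p * l for p, l in zip(prior, likelihood)]
    total = sum(unnormalized)
    if total == 0.0:
        raise ValueError("observation has zero probability under the prior")
    return [u / total for u in unnormalized]


def fuse_observations(prior: Sequence[float],
                      likelihoods: Sequence[Sequence[float]]) -> List[float]:
    """Apply the update recursively, one observation at a time."""
    belief = list(prior)
    for likelihood in likelihoods:
        belief = bayes_update(belief, likelihood)
    return belief


# One cell, two hypothetical classes (e.g. "grass", "ice"), uniform prior,
# and two noisy observations that both favor the first class.
belief = fuse_observations([0.5, 0.5], [[0.8, 0.2], [0.8, 0.2]])
```

Keeping a full distribution per cell, rather than a single label, is what lets a planner weigh the risk of a wrong terrain estimate.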

Lie Algebraic Cost Function Design for Control on Lie Groups

Apr 20, 2022

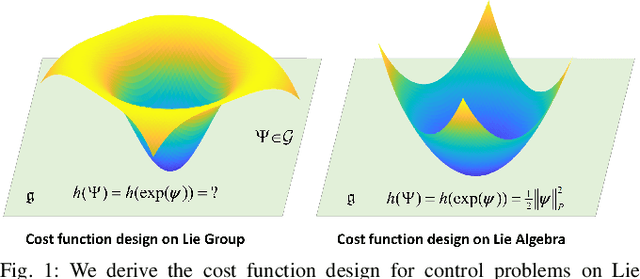

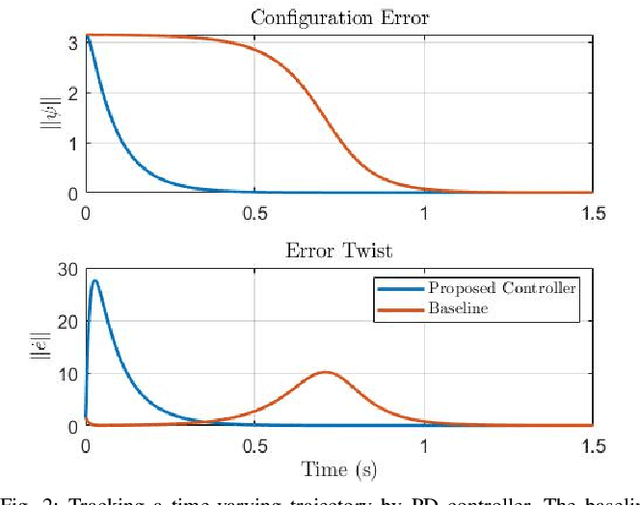

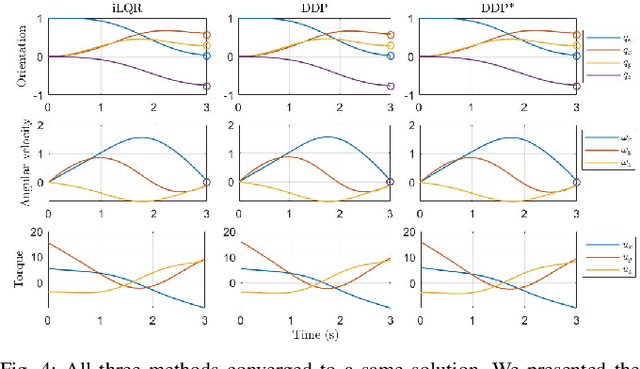

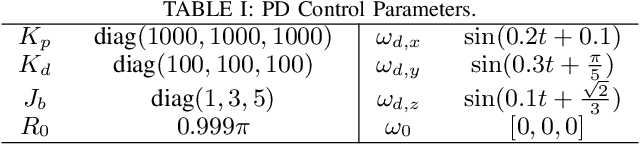

Abstract:This paper presents a control framework on Lie groups by designing the control objective in its Lie algebra. Control on Lie groups is challenging due to its nonlinear nature and difficulties in system parameterization. Existing methods to design the control objective on a Lie group and then derive the gradient for controller design are non-trivial and can result in slow convergence in tracking control. We show that with a proper left-invariant metric, setting the gradient of the cost function as the tracking error in the Lie algebra leads to a quadratic Lyapunov function that enables globally exponential convergence. In the PD control case, we show that our controller can maintain an exponential convergence rate even when the initial error is approaching $\pi$ in SO(3). We also show the merit of this proposed framework in trajectory optimization. The proposed cost function enables the iterative Linear Quadratic Regulator (iLQR) to converge much faster than the Differential Dynamic Programming (DDP) with a well-adopted cost function when the initial trajectory is poorly initialized on SO(3).
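For readers unfamiliar with the idea of measuring tracking error in the Lie algebra, the sketch below computes the error between a desired and an actual rotation on SO(3) via the matrix logarithm, then forms a quadratic cost from it. It is a minimal illustration, not the paper's controller: the identity weighting in the cost, the error convention $R_d^\top R$, and the helper names are assumptions, and the logarithm formula used here is not valid when the rotation angle equals $\pi$.

```python
import math
from typing import List

Matrix = List[List[float]]


def mat_mul(A: Matrix, B: Matrix) -> Matrix:
    """Product of two 3x3 matrices."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def transpose(A: Matrix) -> Matrix:
    """Transpose of a 3x3 matrix."""
    return [[A[j][i] for j in range(3)] for i in range(3)]


def so3_log(R: Matrix) -> List[float]:
    """Map a rotation matrix to its axis-angle vector in the Lie algebra so(3).

    Degenerate at a rotation angle of exactly pi, where sin(theta) vanishes.
    """
    cos_theta = max(-1.0, min(1.0, (R[0][0] + R[1][1] + R[2][2] - 1.0) / 2.0))
    theta = math.acos(cos_theta)
    # theta / (2 sin theta) tends to 1/2 as theta tends to 0.
    factor = 0.5 if theta < 1e-8 else theta / (2.0 * math.sin(theta))
    return [factor * (R[2][1] - R[1][2]),
            factor * (R[0][2] - R[2][0]),
            factor * (R[1][0] - R[0][1])]


def tracking_error(R_desired: Matrix, R: Matrix) -> List[float]:
    """Error vector log(R_desired^T R), expressed in the Lie algebra."""
    return so3_log(mat_mul(transpose(R_desired), R))


def quadratic_cost(error: List[float]) -> float:
    """Quadratic cost 0.5 * ||error||^2 (identity weighting assumed)."""
    return 0.5 * sum(e * e for e in error)


def rot_z(angle: float) -> Matrix:
    """Rotation about the z-axis by the given angle in radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


# Desired and actual attitudes differ by 0.5 rad about the z-axis.
error = tracking_error(rot_z(0.2), rot_z(0.7))
cost = quadratic_cost(error)
```

Because the error lives in a vector space, the cost is an ordinary quadratic form in three numbers, which is the structure the abstract credits for the quadratic Lyapunov function.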

Input Influence Matrix Design for MIMO Discrete-Time Ultra-Local Model

Mar 16, 2022

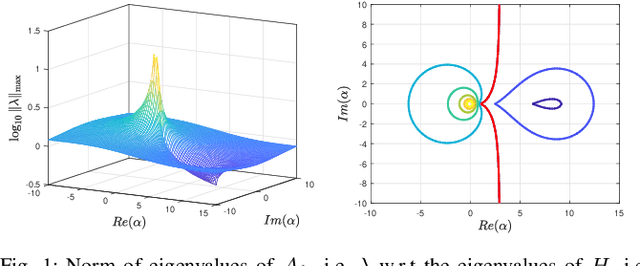

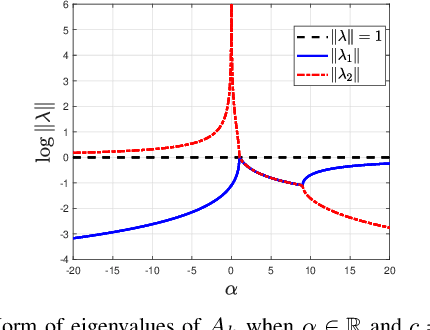

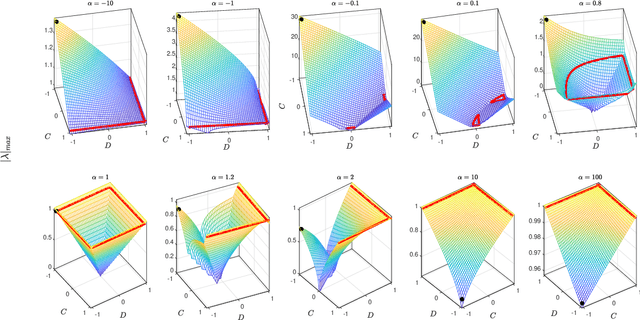

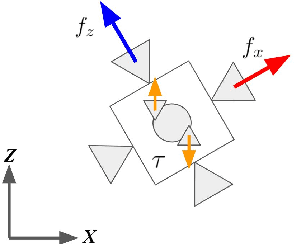

Abstract:Ultra-Local Models (ULM) have been applied to perform model-free control of nonlinear systems with unknown or partially known dynamics. Unfortunately, extending these methods to MIMO systems requires designing a dense input influence matrix, which is challenging. This paper presents guidelines for designing an input influence matrix for discrete-time, control-affine MIMO systems using a ULM-based controller. This paper analyzes the case that uses ULM and a model-free control scheme: the Hölder-continuous Finite-Time Stable (FTS) control. By comparing the ULM with the actual system dynamics, the paper describes how to extract the input-dependent part from the lumped ULM dynamics and finds that the tracking and state estimation errors are coupled. The stability of the ULM-FTS error dynamics is affected by the eigenvalues of the difference (defined by matrix multiplication) between the actual and designed input influence matrix. Finally, the paper shows that a wide range of input influence matrix designs can keep the ULM-FTS error dynamics (at least locally) asymptotically stable. A numerical simulation is included to verify the result. The analysis can also be extended to other ULM-based controllers.
