Qi Hong

Split CNN Inference on Networked Microcontrollers

May 10, 2026

Abstract: Running deep neural networks on microcontroller units (MCUs) is severely constrained by limited memory resources. While TinyML techniques reduce model size and computation, they often fail in practice due to excessive peak Random Access Memory (RAM) usage during inference, dominated by intermediate activations. As a result, many models remain infeasible on standalone MCUs. In this work, we present a fine-grained split inference system for networked MCUs that enables collaborative inference of Convolutional Neural Network (CNN) models across multiple devices. Our key insight is that breaking the memory bottleneck requires splitting inference at sub-layer granularity rather than at layer boundaries. We reinterpret pre-trained models to enable kernel-wise and neuron-wise partitioning, and distribute both model parameters and intermediate activations across multiple MCUs. A lightweight, resource-aware coordinator orchestrates the inference across MCU devices with heterogeneous resources. We implement the proposed system on a real testbed and evaluate it on up to 8 MCUs using MobileNetV2, a representative CNN model. Our experimental results show that CNN models infeasible on a single MCU can be executed across networked MCUs, reducing the per-MCU peak RAM usage while maintaining practical end-to-end inference latency. The source code for this work is available at: https://github.com/shashsuresh/split-inference-on-MCUs.

* 10 pages
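
The kernel-wise partitioning mentioned in the abstract above can be illustrated with a small sketch. This is not the authors' implementation: the function names (`conv2d`, `split_kernels`, `split_inference`) and the proportional assignment rule are assumptions made for illustration, and the sketch only splits kernels and output channels, not the input activations. It shows that when each device computes the feature maps for its own subset of kernels, concatenating the partial results reproduces the output of the full layer.

```python
# Illustrative sketch only: kernel-wise split of one convolution layer.
# Each simulated "device" holds a subset of the kernels and produces the
# matching subset of output channels.

def conv2d(image, kernels):
    """Valid 2D cross-correlation of a single-channel image.

    image:   list of rows (H x W)
    kernels: list of k x k kernels
    returns: one output feature map per kernel
    """
    h, w = len(image), len(image[0])
    outputs = []
    for kernel in kernels:
        k = len(kernel)
        fmap = [
            [
                sum(
                    image[r + i][c + j] * kernel[i][j]
                    for i in range(k)
                    for j in range(k)
                )
                for c in range(w - k + 1)
            ]
            for r in range(h - k + 1)
        ]
        outputs.append(fmap)
    return outputs


def split_kernels(kernels, capacities):
    """Assign contiguous kernel slices in proportion to device capacity.

    The proportional rule is a hypothetical stand-in for a resource-aware
    coordinator; the last device takes whatever remains.
    """
    total = sum(capacities)
    shares, start = [], 0
    for idx, cap in enumerate(capacities):
        if idx == len(capacities) - 1:
            end = len(kernels)
        else:
            end = start + round(len(kernels) * cap / total)
        shares.append(kernels[start:end])
        start = end
    return shares


def split_inference(image, kernels, capacities):
    """Run each kernel share separately, then concatenate the channels."""
    partial = [conv2d(image, share) for share in split_kernels(kernels, capacities)]
    return [fmap for part in partial for fmap in part]


image = [[1, 2, 3, 0], [4, 5, 6, 1], [7, 8, 9, 2], [0, 1, 2, 3]]
kernels = [
    [[1, 0], [0, 1]],
    [[0, 1], [1, 0]],
    [[1, 1], [1, 1]],
    [[1, -1], [-1, 1]],
]

full = conv2d(image, kernels)
split = split_inference(image, kernels, capacities=[2, 1, 1])
assert split == full  # the split layer matches the unsplit layer
```

In this sketch each device stores only its own kernels and output channels, which is the source of the per-device memory saving; every device still reads the whole input image, a simplification relative to the system described in the abstract.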

Let Experts Feel Uncertainty: A Multi-Expert Label Distribution Approach to Probabilistic Time Series Forecasting

Feb 04, 2026

Abstract: Time series forecasting in real-world applications requires both high predictive accuracy and interpretable uncertainty quantification. Traditional point prediction methods often fail to capture the inherent uncertainty in time series data, while existing probabilistic approaches struggle to balance computational efficiency with interpretability. We propose a novel Multi-Expert Learning Distributional Labels (LDL) framework that addresses these challenges through mixture-of-experts architectures with distributional learning capabilities. Our approach introduces two complementary methods: (1) Multi-Expert LDL, which employs multiple experts with different learned parameters to capture diverse temporal patterns, and (2) Pattern-Aware LDL-MoE, which explicitly decomposes time series into interpretable components (trend, seasonality, changepoints, volatility) through specialized sub-experts. Both frameworks extend traditional point prediction to distributional learning, enabling rich uncertainty quantification through Maximum Mean Discrepancy (MMD). We evaluate our methods on aggregated sales data derived from the M5 dataset, demonstrating superior performance compared to baseline approaches. The continuous Multi-Expert LDL achieves the best overall performance, while the Pattern-Aware LDL-MoE provides enhanced interpretability through component-wise analysis. Our frameworks successfully balance predictive accuracy with interpretability, making them suitable for real-world forecasting applications where both performance and actionable insights are crucial.
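
The abstract above names Maximum Mean Discrepancy (MMD) but does not say how it enters the model, so the following is only a minimal sketch of the quantity itself, not of the authors' training objective. It computes the standard biased estimate of squared MMD between two one-dimensional samples with a Gaussian (RBF) kernel; the function names and the `bandwidth` parameter are assumptions chosen for illustration.

```python
import math


def rbf(x, y, bandwidth=1.0):
    """Gaussian (RBF) kernel between two scalars."""
    return math.exp(-((x - y) ** 2) / (2.0 * bandwidth ** 2))


def mmd_squared(xs, ys, bandwidth=1.0):
    """Biased (V-statistic) estimate of squared MMD between two samples.

    MMD^2 = E[k(x, x')] + E[k(y, y')] - 2 E[k(x, y)],
    with each expectation replaced by an average over all sample pairs.
    """
    def mean_kernel(a, b):
        return sum(rbf(u, v, bandwidth) for u in a for v in b) / (len(a) * len(b))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


observed = [0.0, 0.5, 1.0]
close_forecast = [0.1, 0.6, 1.1]
far_forecast = [5.0, 5.5, 6.0]

# A forecast sample that resembles the observations scores a smaller MMD.
assert mmd_squared(observed, close_forecast) < mmd_squared(observed, far_forecast)
```

Because the estimate compares whole samples rather than single values, it can distinguish forecasts that agree in mean but differ in spread, which is one reason MMD is used for distributional predictions.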

Masked Face Recognition Dataset and Application

Mar 23, 2020

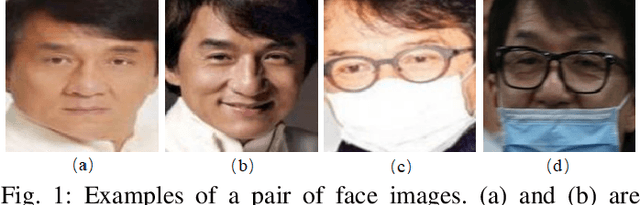

Abstract: To effectively prevent the spread of the COVID-19 virus, almost everyone wears a mask during the coronavirus epidemic. This makes conventional facial recognition technology ineffective in many cases, such as community access control, face access control, facial attendance, and facial security checks at train stations. It is therefore urgent to improve the performance of existing face recognition technology on masked faces. Most current advanced face recognition approaches are based on deep learning, which depends on a large number of face samples. However, at present, there are no publicly available masked face recognition datasets. To this end, this work proposes three types of masked face datasets: the Masked Face Detection Dataset (MFDD), the Real-world Masked Face Recognition Dataset (RMFRD), and the Simulated Masked Face Recognition Dataset (SMFRD). Among them, to the best of our knowledge, RMFRD is currently the world's largest real-world masked face dataset. These datasets are freely available to industry and academia, and various applications on masked faces can be developed based on them. The multi-granularity masked face recognition model we developed achieves 95% accuracy, exceeding the results reported by the industry. Our datasets are available at: https://github.com/X-zhangyang/Real-World-Masked-Face-Dataset.
