Pedro C. Neto

PIC-Score: Probabilistic Interpretable Comparison Score for Optimal Matching Confidence in Single- and Multi-Biometric (Face) Recognition

Nov 22, 2022

Abstract:In the context of biometrics, matching confidence refers to the confidence that a given matching decision is correct. Since many biometric systems operate in critical decision-making processes, such as in forensics investigations, accurately and reliably stating the matching confidence becomes of high importance. Previous works on biometric confidence estimation can well differentiate between high and low confidence, but lack interpretability. Therefore, they do not provide accurate probabilistic estimates of the correctness of a decision. In this work, we propose a probabilistic interpretable comparison (PIC) score that accurately reflects the probability that the score originates from samples of the same identity. We prove that the proposed approach provides optimal matching confidence. Contrary to other approaches, it can also optimally combine multiple samples in a joint PIC score which further increases the recognition and confidence estimation performance. In the experiments, the proposed PIC approach is compared against all biometric confidence estimation methods available on four publicly available databases and five state-of-the-art face recognition systems. The results demonstrate that PIC has a significantly more accurate probabilistic interpretation than similar approaches and is highly effective for multi-biometric recognition. The code is publicly-available.

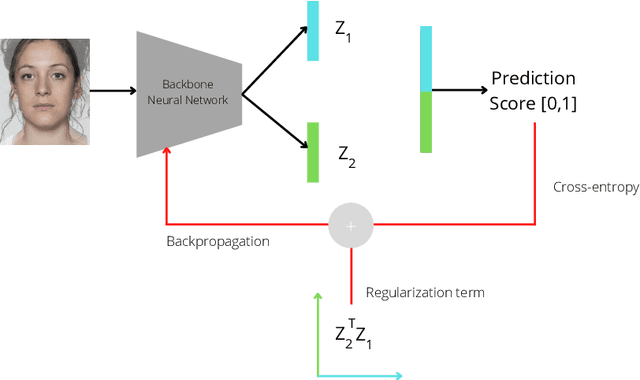

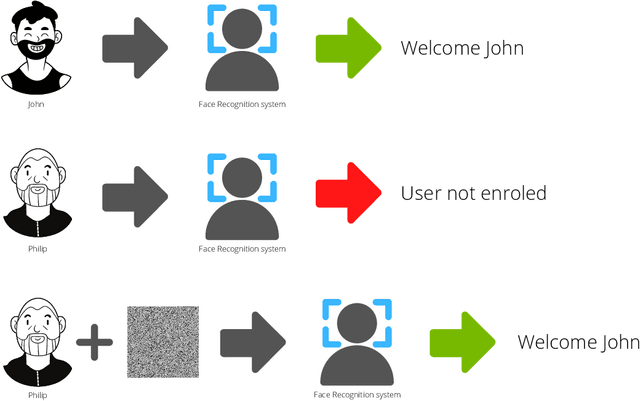

OrthoMAD: Morphing Attack Detection Through Orthogonal Identity Disentanglement

Aug 23, 2022

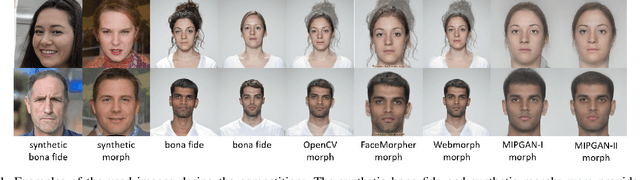

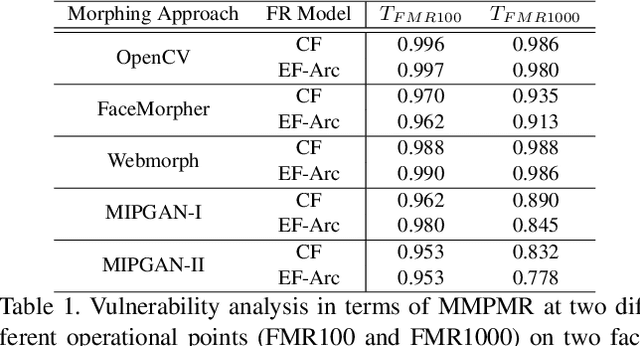

Abstract:Morphing attacks are one of the many threats that are constantly affecting deep face recognition systems. It consists of selecting two faces from different individuals and fusing them into a final image that contains the identity information of both. In this work, we propose a novel regularisation term that takes into account the existent identity information in both and promotes the creation of two orthogonal latent vectors. We evaluate our proposed method (OrthoMAD) in five different types of morphing in the FRLL dataset and evaluate the performance of our model when trained on five distinct datasets. With a small ResNet-18 as the backbone, we achieve state-of-the-art results in the majority of the experiments, and competitive results in the others. The code of this paper will be publicly available.

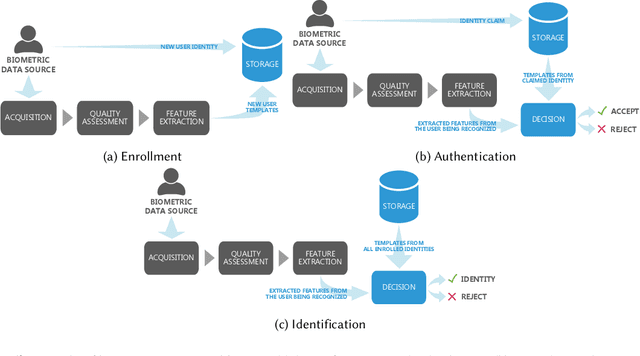

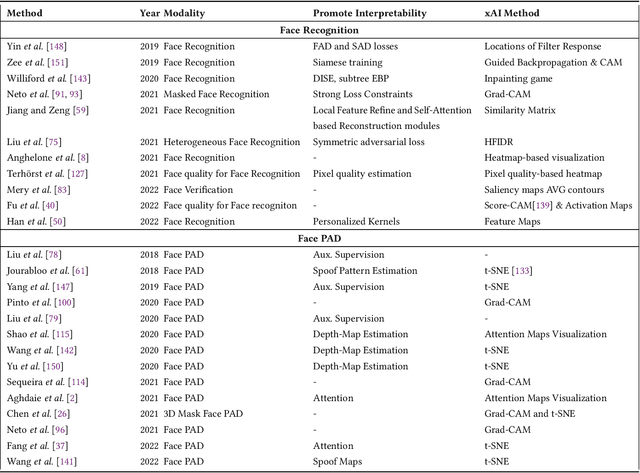

Explainable Biometrics in the Age of Deep Learning

Aug 19, 2022

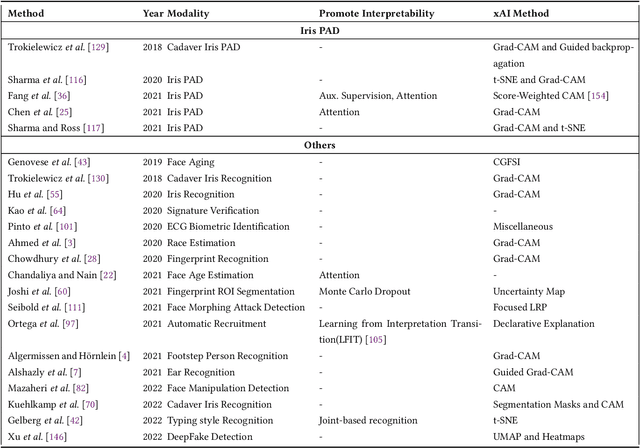

Abstract:Systems capable of analyzing and quantifying human physical or behavioral traits, known as biometrics systems, are growing in use and application variability. Since its evolution from handcrafted features and traditional machine learning to deep learning and automatic feature extraction, the performance of biometric systems increased to outstanding values. Nonetheless, the cost of this fast progression is still not understood. Due to its opacity, deep neural networks are difficult to understand and analyze, hence, hidden capacities or decisions motivated by the wrong motives are a potential risk. Researchers have started to pivot their focus towards the understanding of deep neural networks and the explanation of their predictions. In this paper, we provide a review of the current state of explainable biometrics based on the study of 47 papers and discuss comprehensively the direction in which this field should be developed.

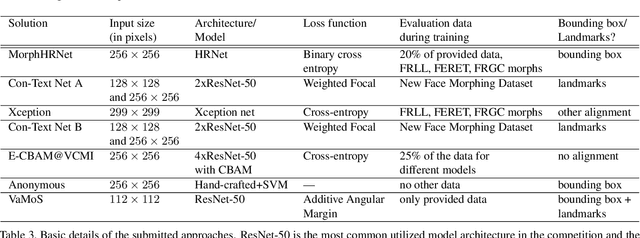

SYN-MAD 2022: Competition on Face Morphing Attack Detection Based on Privacy-aware Synthetic Training Data

Aug 15, 2022

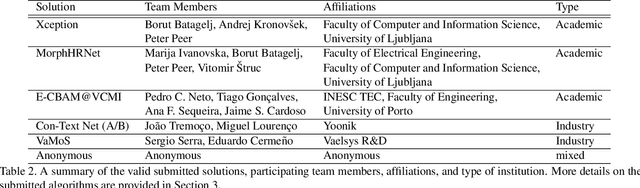

Abstract:This paper presents a summary of the Competition on Face Morphing Attack Detection Based on Privacy-aware Synthetic Training Data (SYN-MAD) held at the 2022 International Joint Conference on Biometrics (IJCB 2022). The competition attracted a total of 12 participating teams, both from academia and industry and present in 11 different countries. In the end, seven valid submissions were submitted by the participating teams and evaluated by the organizers. The competition was held to present and attract solutions that deal with detecting face morphing attacks while protecting people's privacy for ethical and legal reasons. To ensure this, the training data was limited to synthetic data provided by the organizers. The submitted solutions presented innovations that led to outperforming the considered baseline in many experimental settings. The evaluation benchmark is now available at: https://github.com/marcohuber/SYN-MAD-2022.

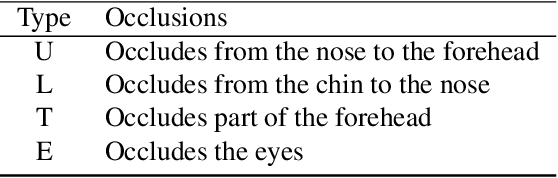

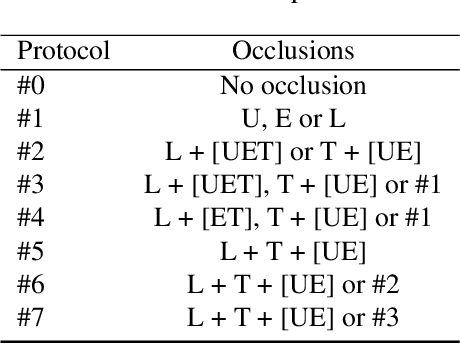

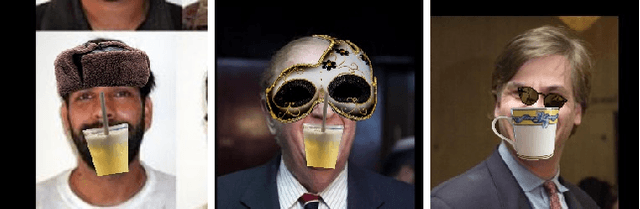

OCFR 2022: Competition on Occluded Face Recognition From Synthetically Generated Structure-Aware Occlusions

Aug 15, 2022

Abstract:This work summarizes the IJCB Occluded Face Recognition Competition 2022 (IJCB-OCFR-2022) embraced by the 2022 International Joint Conference on Biometrics (IJCB 2022). OCFR-2022 attracted a total of 3 participating teams, from academia. Eventually, six valid submissions were submitted and then evaluated by the organizers. The competition was held to address the challenge of face recognition in the presence of severe face occlusions. The participants were free to use any training data and the testing data was built by the organisers by synthetically occluding parts of the face images using a well-known dataset. The submitted solutions presented innovations and performed very competitively with the considered baseline. A major output of this competition is a challenging, realistic, and diverse, and publicly available occluded face recognition benchmark with well defined evaluation protocols.

Myope Models -- Are face presentation attack detection models short-sighted?

Nov 22, 2021

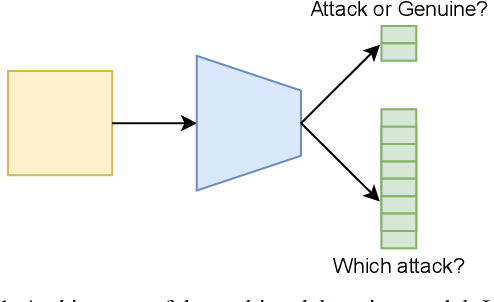

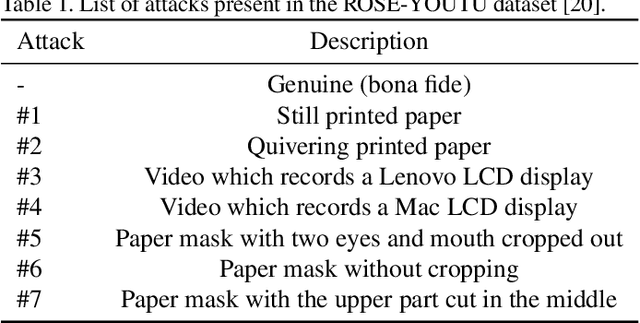

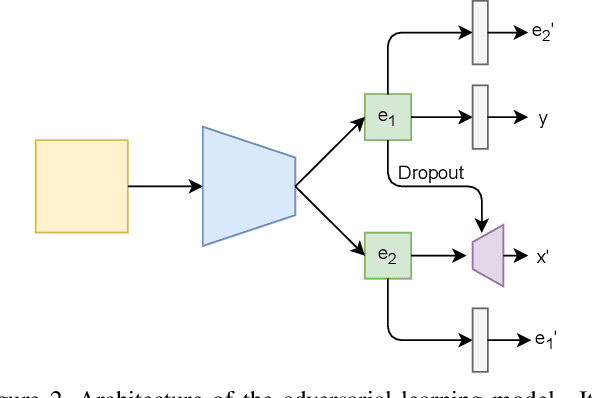

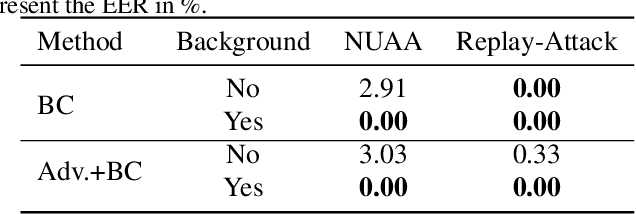

Abstract:Presentation attacks are recurrent threats to biometric systems, where impostors attempt to bypass these systems. Humans often use background information as contextual cues for their visual system. Yet, regarding face-based systems, the background is often discarded, since face presentation attack detection (PAD) models are mostly trained with face crops. This work presents a comparative study of face PAD models (including multi-task learning, adversarial training and dynamic frame selection) in two settings: with and without crops. The results show that the performance is consistently better when the background is present in the images. The proposed multi-task methodology beats the state-of-the-art results on the ROSE-Youtu dataset by a large margin with an equal error rate of 0.2%. Furthermore, we analyze the models' predictions with Grad-CAM++ with the aim to investigate to what extent the models focus on background elements that are known to be useful for human inspection. From this analysis we can conclude that the background cues are not relevant across all the attacks. Thus, showing the capability of the model to leverage the background information only when necessary.

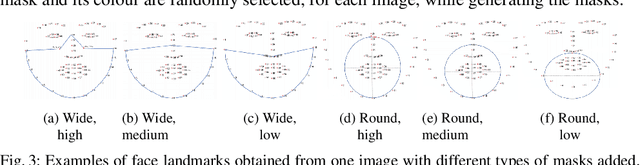

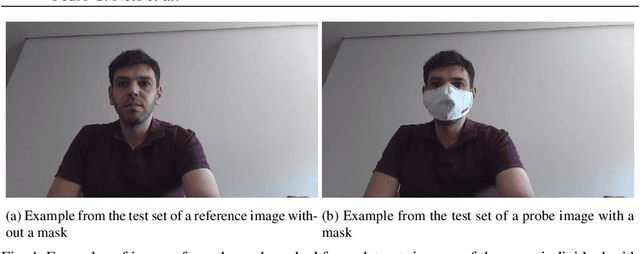

FocusFace: Multi-task Contrastive Learning for Masked Face Recognition

Nov 01, 2021

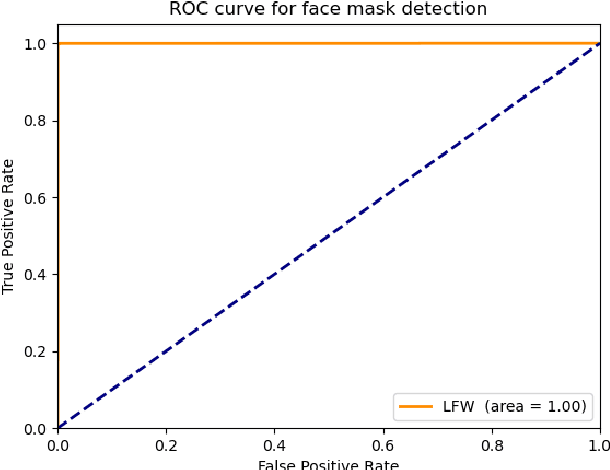

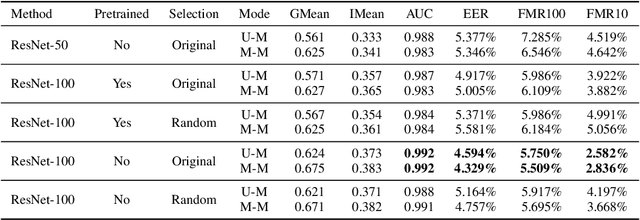

Abstract:SARS-CoV-2 has presented direct and indirect challenges to the scientific community. One of the most prominent indirect challenges advents from the mandatory use of face masks in a large number of countries. Face recognition methods struggle to perform identity verification with similar accuracy on masked and unmasked individuals. It has been shown that the performance of these methods drops considerably in the presence of face masks, especially if the reference image is unmasked. We propose FocusFace, a multi-task architecture that uses contrastive learning to be able to accurately perform masked face recognition. The proposed architecture is designed to be trained from scratch or to work on top of state-of-the-art face recognition methods without sacrificing the capabilities of a existing models in conventional face recognition tasks. We also explore different approaches to design the contrastive learning module. Results are presented in terms of masked-masked (M-M) and unmasked-masked (U-M) face verification performance. For both settings, the results are on par with published methods, but for M-M specifically, the proposed method was able to outperform all the solutions that it was compared to. We further show that when using our method on top of already existing methods the training computational costs decrease significantly while retaining similar performances. The implementation and the trained models are available at GitHub.

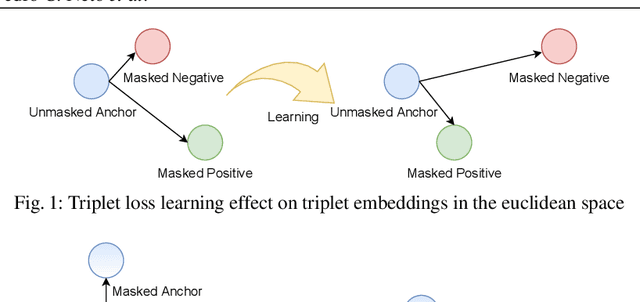

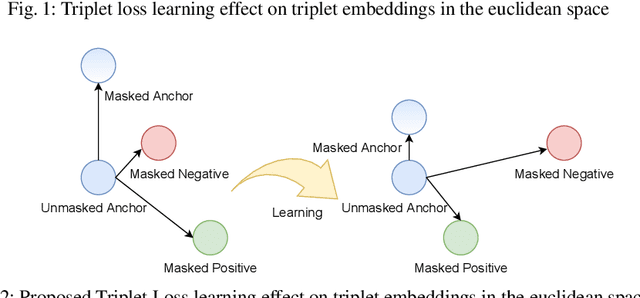

My Eyes Are Up Here: Promoting Focus on Uncovered Regions in Masked Face Recognition

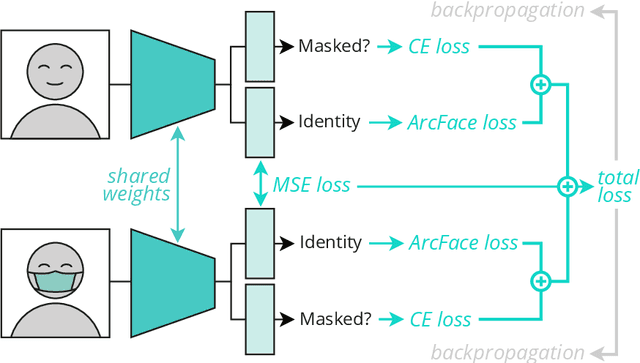

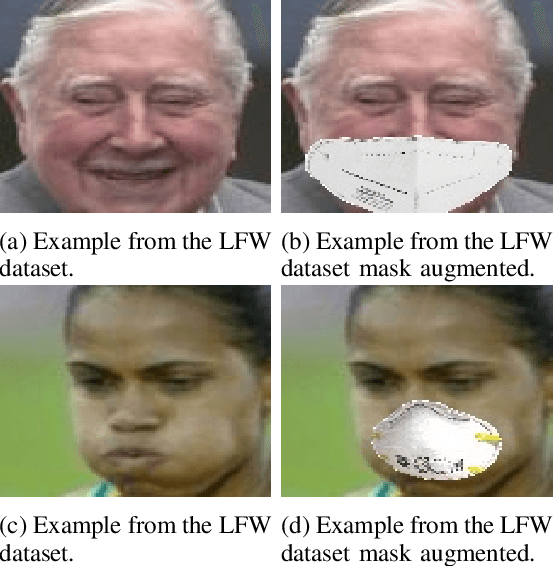

Aug 18, 2021

Abstract:The recent Covid-19 pandemic and the fact that wearing masks in public is now mandatory in several countries, created challenges in the use of face recognition systems (FRS). In this work, we address the challenge of masked face recognition (MFR) and focus on evaluating the verification performance in FRS when verifying masked vs unmasked faces compared to verifying only unmasked faces. We propose a methodology that combines the traditional triplet loss and the mean squared error (MSE) intending to improve the robustness of an MFR system in the masked-unmasked comparison mode. The results obtained by our proposed method show improvements in a detailed step-wise ablation study. The conducted study showed significant performance gains induced by our proposed training paradigm and modified triplet loss on two evaluation databases.

MFR 2021: Masked Face Recognition Competition

Jun 29, 2021

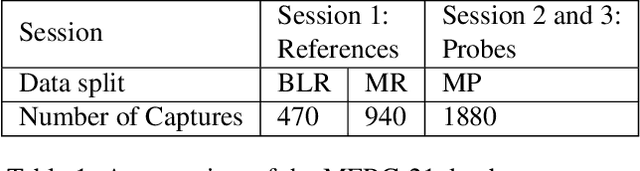

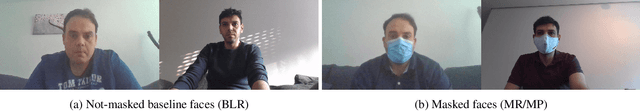

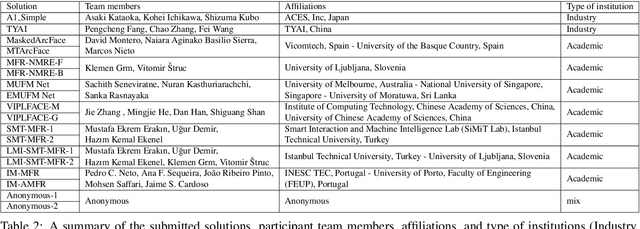

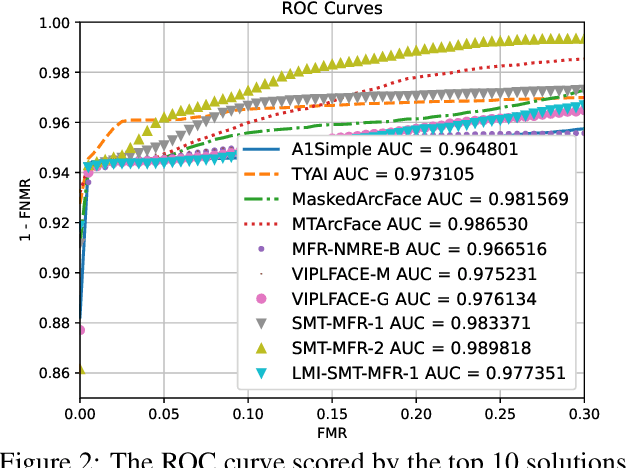

Abstract:This paper presents a summary of the Masked Face Recognition Competitions (MFR) held within the 2021 International Joint Conference on Biometrics (IJCB 2021). The competition attracted a total of 10 participating teams with valid submissions. The affiliations of these teams are diverse and associated with academia and industry in nine different countries. These teams successfully submitted 18 valid solutions. The competition is designed to motivate solutions aiming at enhancing the face recognition accuracy of masked faces. Moreover, the competition considered the deployability of the proposed solutions by taking the compactness of the face recognition models into account. A private dataset representing a collaborative, multi-session, real masked, capture scenario is used to evaluate the submitted solutions. In comparison to one of the top-performing academic face recognition solutions, 10 out of the 18 submitted solutions did score higher masked face verification accuracy.

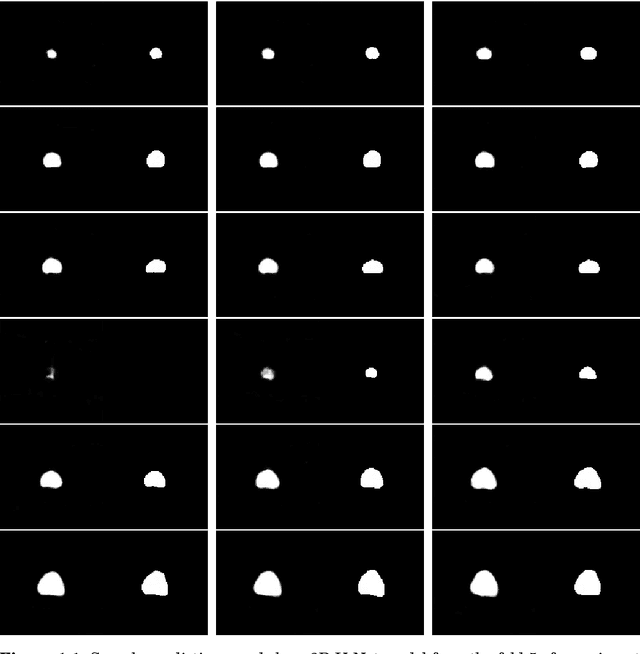

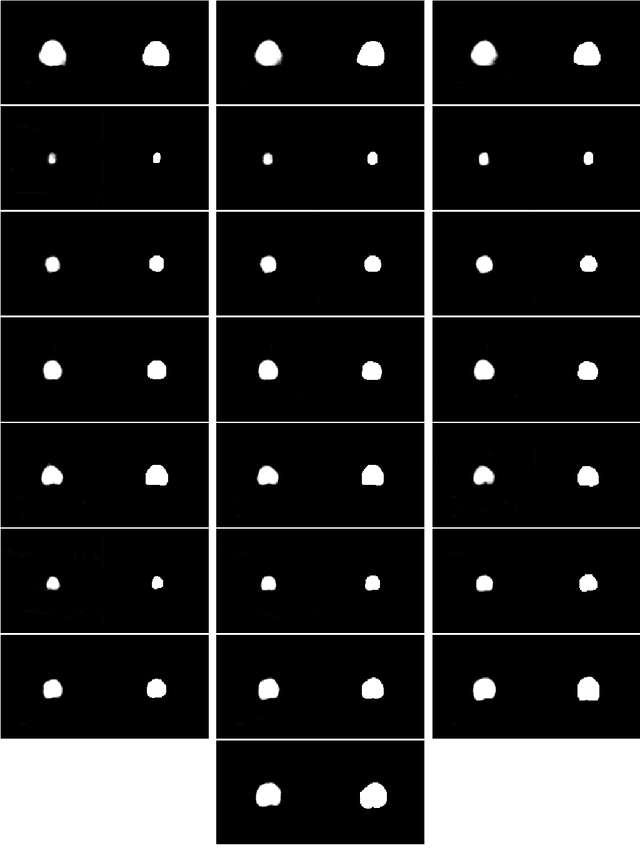

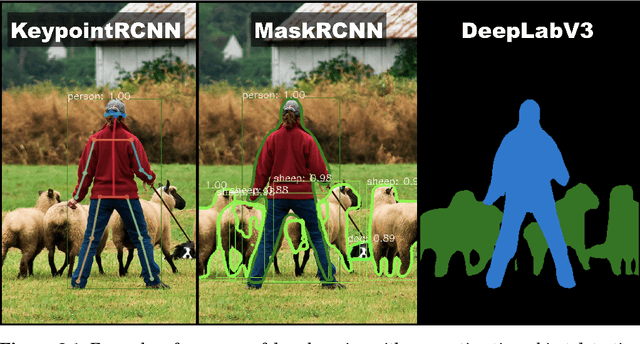

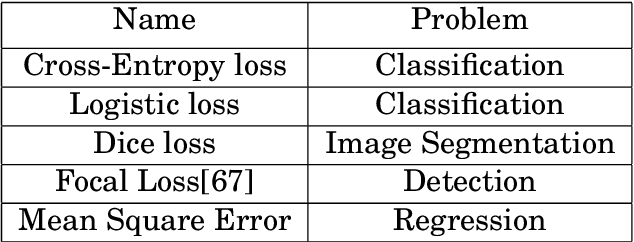

Deep Learning Based Analysis of Prostate Cancer from MP-MRI

Jun 02, 2021

Abstract:The diagnosis of prostate cancer faces a problem with overdiagnosis that leads to damaging side effects due to unnecessary treatment. Research has shown that the use of multi-parametric magnetic resonance images to conduct biopsies can drastically help to mitigate the overdiagnosis, thus reducing the side effects on healthy patients. This study aims to investigate the use of deep learning techniques to explore computer-aid diagnosis based on MRI as input. Several diagnosis problems ranging from classification of lesions as being clinically significant or not to the detection and segmentation of lesions are addressed with deep learning based approaches. This thesis tackled two main problems regarding the diagnosis of prostate cancer. Firstly, XmasNet was used to conduct two large experiments on the classification of lesions. Secondly, detection and segmentation experiments were conducted, first on the prostate and afterward on the prostate cancer lesions. The former experiments explored the lesions through a two-dimensional space, while the latter explored models to work with three-dimensional inputs. For this task, the 3D models explored were the 3D U-Net and a pretrained 3D ResNet-18. A rigorous analysis of all these problems was conducted with a total of two networks, two cropping techniques, two resampling techniques, two crop sizes, five input sizes and data augmentations experimented for lesion classification. While for segmentation two models, two input sizes and data augmentations were experimented. However, while the binary classification of the clinical significance of lesions and the detection and segmentation of the prostate already achieve the desired results (0.870 AUC and 0.915 dice score respectively), the classification of the PIRADS score and the segmentation of lesions still have a large margin to improve (0.664 accuracy and 0.690 dice score respectively).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge