Jingpei Lu

Semantic-SuPer: A Semantic-aware Surgical Perception Framework for Endoscopic Tissue Classification, Reconstruction, and Tracking

Oct 29, 2022

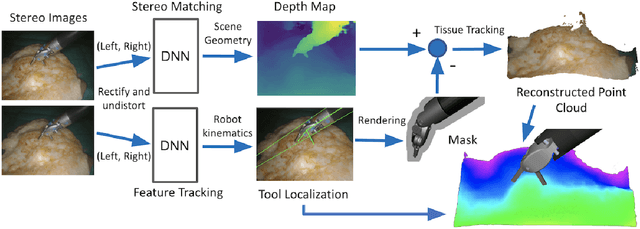

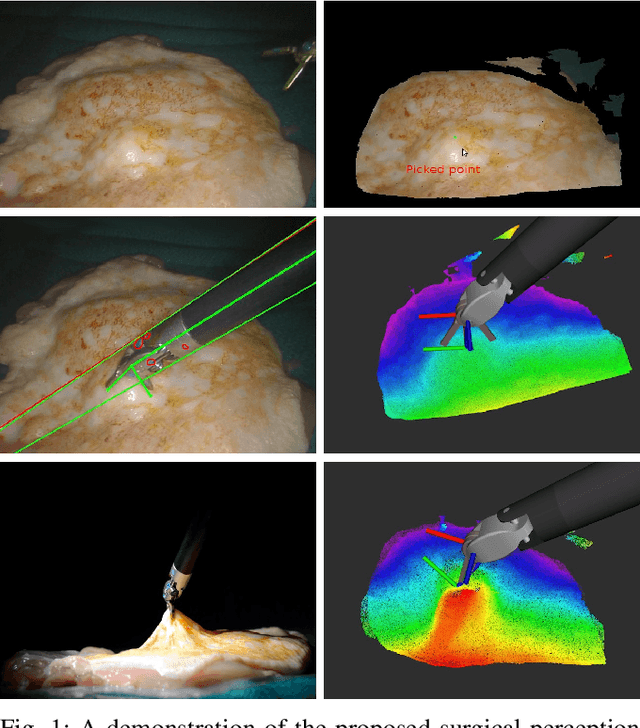

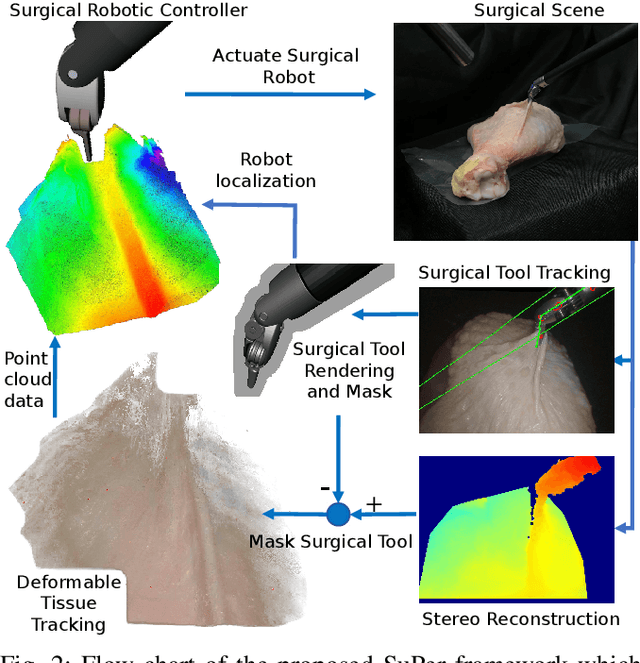

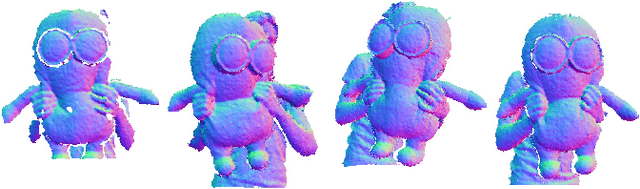

Abstract: Accurate and robust tracking and reconstruction of the surgical scene is a critical enabling technology toward autonomous robotic surgery. Existing algorithms for 3D perception in surgery mainly rely on geometric information, while we propose to also leverage semantic information inferred from the endoscopic video using image segmentation algorithms. In this paper, we present a novel, comprehensive surgical perception framework, Semantic-SuPer, that integrates geometric and semantic information to facilitate data association, 3D reconstruction, and tracking of endoscopic scenes, benefiting downstream tasks like surgical navigation. The proposed framework is demonstrated on challenging endoscopic data with deforming tissue, showing its advantages over our baseline and several other state-of-the-art approaches. Our code and dataset will be available at https://github.com/ucsdarclab/Python-SuPer.

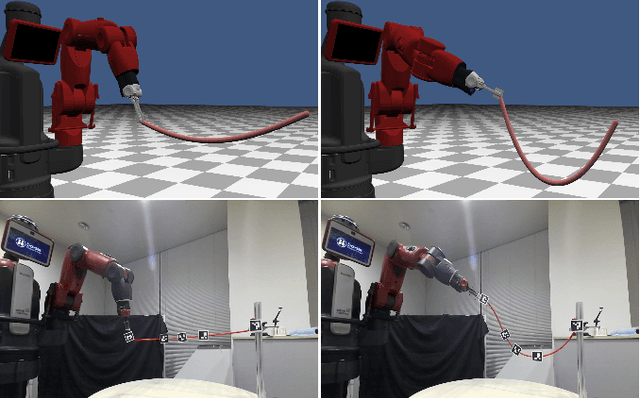

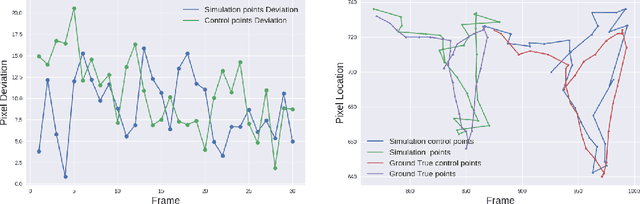

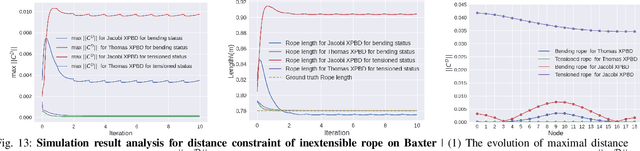

Differentiable Robotic Manipulation of Deformable Rope-like Objects Using Compliant Position-based Dynamics

Feb 20, 2022

Abstract: Robot manipulation of rope-like objects is an interesting problem that has some critical applications, such as autonomous robotic suturing. Solving for and controlling rope is difficult due to the complexity of rope physics and the challenge of building fast and accurate models of deformable materials. While data-driven approaches have become more popular for finding controllers that learn to do a single task, there is still a strong motivation for a model-based method that could be used to solve a large variety of optimization problems. Towards this end, we introduce compliant position-based dynamics (XPBD) to model rope-like objects. Using geometric constraints, the model can represent the coupling of shear/stretch and bend/twist effects. Crucially, our formulation is differentiable, which makes it possible to solve parameter estimation problems and improve the matching of rope physics to real-life scenarios (i.e., the real-to-sim problem). For the generality of rope-like objects, two different solvers are proposed to handle the inextensible and extensible effects of varied material stiffness for the rope. We demonstrate our framework's robustness and accuracy on real-to-sim experimental setups using the Baxter robot and the da Vinci Research Kit (dVRK). Our work leads to a new path for robotic manipulation of deformable rope-like objects, taking advantage of the ready-to-use gradients.
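As a rough illustration of the compliant constraint projection that XPBD is built on, the sketch below applies one standard XPBD update to a single distance (stretch) constraint between two particles. It is not the paper's rope model, which couples shear/stretch and bend/twist constraints; the function name `xpbd_distance_step` and all parameter names are hypothetical, chosen only for this example.

```python
import math


def xpbd_distance_step(x1, x2, w1, w2, rest_length, compliance, dt, lam=0.0):
    """One XPBD iteration for the constraint C = |x1 - x2| - rest_length.

    x1, x2      : particle positions (sequences of floats)
    w1, w2      : inverse masses (0 means the particle is fixed)
    compliance  : inverse stiffness; 0 gives a hard (inextensible) constraint
    lam         : accumulated Lagrange multiplier for this constraint
    Returns the corrected positions and the updated multiplier.
    """
    diff = [a - b for a, b in zip(x1, x2)]
    dist = math.sqrt(sum(d * d for d in diff))
    if dist == 0.0 or (w1 + w2) == 0.0:
        # Degenerate case: no direction to push along, or both particles fixed.
        return list(x1), list(x2), lam

    n = [d / dist for d in diff]           # constraint gradient w.r.t. x1
    c = dist - rest_length                 # constraint violation
    alpha_tilde = compliance / (dt * dt)   # time-step-scaled compliance
    dlam = (-c - alpha_tilde * lam) / (w1 + w2 + alpha_tilde)

    new_x1 = [a + w1 * dlam * ni for a, ni in zip(x1, n)]
    new_x2 = [b - w2 * dlam * ni for b, ni in zip(x2, n)]
    return new_x1, new_x2, lam + dlam
```

With zero compliance the update restores the rest length in a single step for an isolated constraint; a positive compliance leaves some stretch, which is how extensible materials can be represented. Every operation here is smooth in the positions and parameters away from the degenerate case, which is the property a differentiable formulation relies on.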

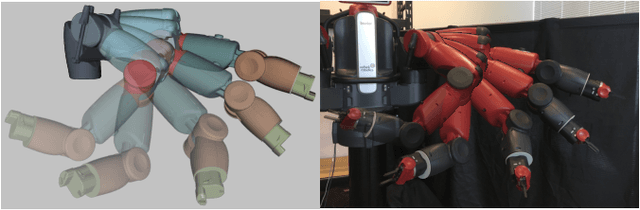

Parameter Identification and Motion Control for Articulated Rigid Body Robots Using Differentiable Position-based Dynamics

Jan 15, 2022

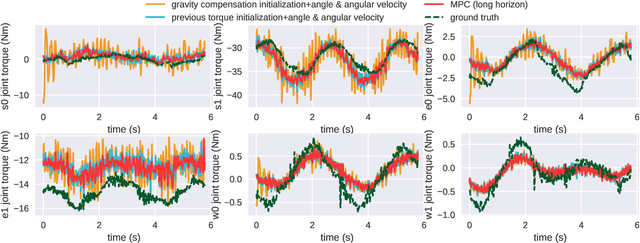

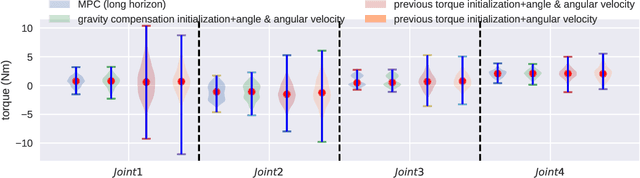

Abstract: Simulation modeling of robots, objects, and environments is the backbone for all model-based control and learning. It is leveraged broadly across dynamic programming and model-predictive control, as well as data generation for imitation, transfer, and reinforcement learning. In addition to fidelity, key features of models in these control and learning contexts are speed, stability, and native differentiability. However, many popular simulation platforms for robotics today lack at least one of the features above. More recently, position-based dynamics (PBD) has become a very popular simulation tool for modeling complex scenes of rigid and non-rigid object interactions, due to its speed and stability, and is starting to gain significant interest in robotics for its potential use in model-based control and learning. Thus, in this paper, we present a mathematical formulation for coupling PBD simulation with optimal robot design, model-based motion control, and system identification. Our framework breaks down PBD definitions and derivations for various types of joint-based articulated rigid bodies. We present a back-propagation method with automatic differentiation, which can integrate both positional and angular geometric constraints. Critically, our framework provides native gradient information and can perform gradient-based optimization tasks. We also propose articulated joint model representations and a simulation workflow for our differentiable framework. We demonstrate the capability of the framework in efficient optimal robot design, accurate trajectory torque estimation, and supporting spring stiffness estimation, achieving small errors. We also implement impedance control on real robots to demonstrate the potential of our differentiable framework in human-in-the-loop applications.

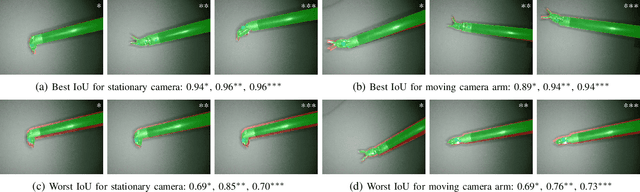

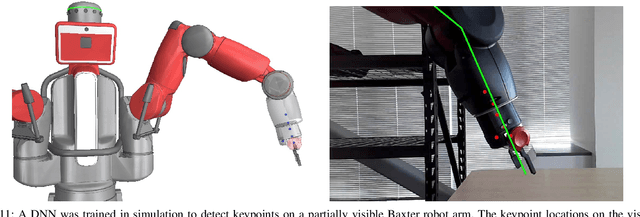

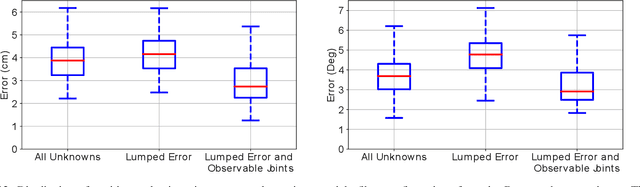

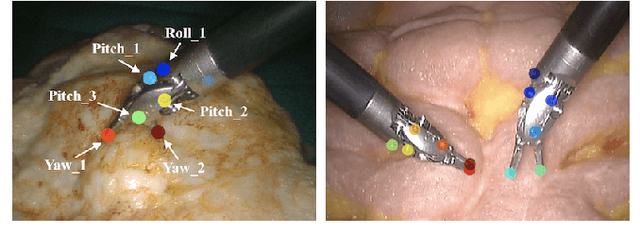

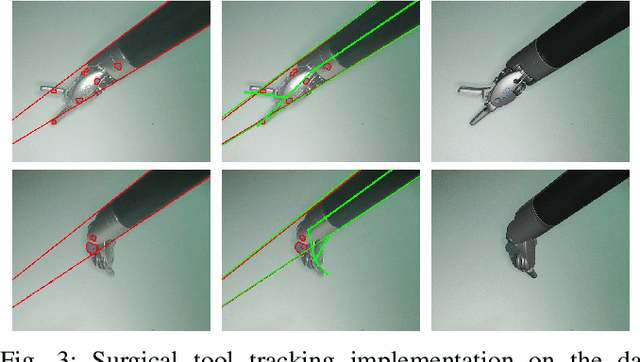

Robotic Tool Tracking under Partially Visible Kinematic Chain: A Unified Approach

Feb 11, 2021

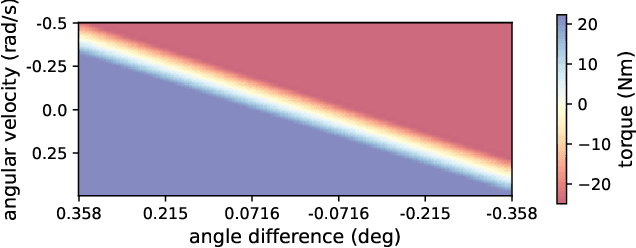

Abstract: Anytime a robot manipulator is controlled via visual feedback, the transformation between the robot and camera frame must be known. However, in the case where cameras can only capture a portion of the robot manipulator in order to better perceive the environment being interacted with, there is greater sensitivity to errors in calibration of the base-to-camera transform. A secondary source of uncertainty during robotic control is inaccuracy in joint angle measurements, which can be caused by biases in positioning and complex transmission effects such as backlash and cable stretch. In this work, we bring together these two sets of unknown parameters into a unified problem formulation when the kinematic chain is partially visible in the camera view. We prove that these parameters are non-identifiable, implying that explicit estimation of them is infeasible. To overcome this, we derive a smaller set of parameters we call Lumped Error, since it lumps together the errors of calibration and joint angle measurements. A particle filter method is presented and tested in simulation and on two real-world robots to estimate the Lumped Error and show the efficiency of this parameter reduction.
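To make the particle filter idea concrete, the following is a minimal, generic sketch that estimates a single constant scalar offset from noisy direct measurements. It is an assumption-laden toy, not the paper's estimator: the actual Lumped Error is a transform-like quantity observed through image features, and the function name `particle_filter_offset`, the Gaussian measurement model, and all default values are hypothetical.

```python
import math
import random


def particle_filter_offset(measurements, n_particles=500, meas_sigma=0.05,
                           jitter_sigma=0.01, seed=0):
    """Estimate a constant scalar offset from noisy direct measurements.

    Each particle is one hypothesis for the offset. Per measurement:
    predict (small random walk), weight by a Gaussian likelihood, resample.
    """
    rng = random.Random(seed)
    # Initial belief: uniform over an assumed plausible range.
    particles = [rng.uniform(-1.0, 1.0) for _ in range(n_particles)]

    for z in measurements:
        # Predict: jitter keeps particle diversity after resampling.
        particles = [p + rng.gauss(0.0, jitter_sigma) for p in particles]
        # Update: likelihood of measurement z under each hypothesis.
        weights = [math.exp(-0.5 * ((z - p) / meas_sigma) ** 2)
                   for p in particles]
        if sum(weights) == 0.0:
            # All likelihoods underflowed; fall back to uniform weights.
            weights = [1.0] * n_particles
        # Resample in proportion to the weights.
        particles = rng.choices(particles, weights=weights, k=n_particles)

    # Point estimate: mean of the resampled (equally weighted) particles.
    return sum(particles) / n_particles
```

Feeding the function a list of measurements scattered around a fixed value should return an estimate close to that value; estimating one reduced quantity rather than many coupled ones is what keeps the particle count manageable.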

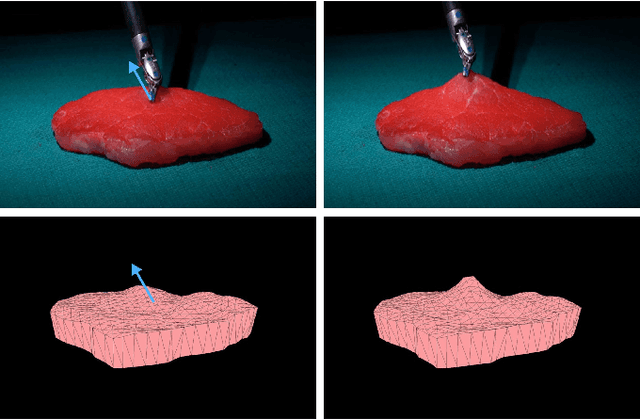

Real-to-Sim Registration of Deformable Soft Tissue with Position-Based Dynamics for Surgical Robot Autonomy

Nov 03, 2020

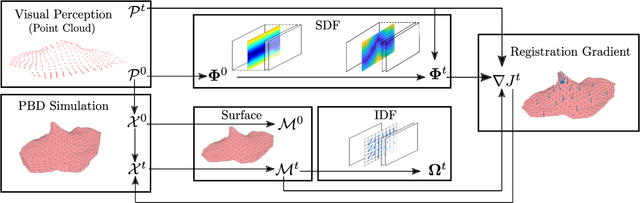

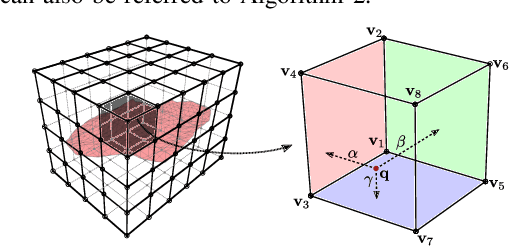

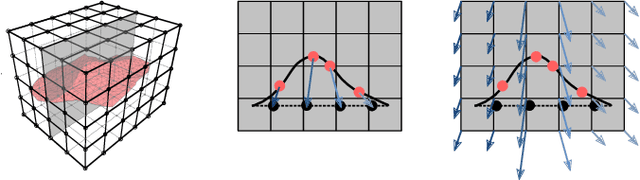

Abstract: Autonomy in robotic surgery is very challenging in unstructured environments, especially when interacting with deformable soft tissues. This creates a challenge for model-based control methods that must account for deformation dynamics during tissue manipulation. Previous works in vision-based perception can capture the geometric changes within the scene; however, integration with dynamic properties to achieve accurate and safe model-based controllers has not been considered before. Considering the mechanical coupling between the robot and the environment, it is crucial to develop a registered, simulated dynamical model. In this work, we propose an online, continuous, real-to-sim registration method to bridge from 3D visual perception to position-based dynamics (PBD) modeling of tissues. The PBD method is employed to simulate soft tissue dynamics as well as rigid tool interactions for model-based control. Meanwhile, a vision-based strategy is used to generate 3D reconstructed point cloud surfaces that can be used to register and update the simulation, accounting for differences between the simulation and the real world. To verify this real-to-sim approach, tissue manipulation experiments have been conducted on the da Vinci Research Kit. Our real-to-sim approach successfully reduced registration errors online, which is especially important for safety during autonomous control. Moreover, the results show higher accuracy in occluded areas than fusion-based reconstruction.

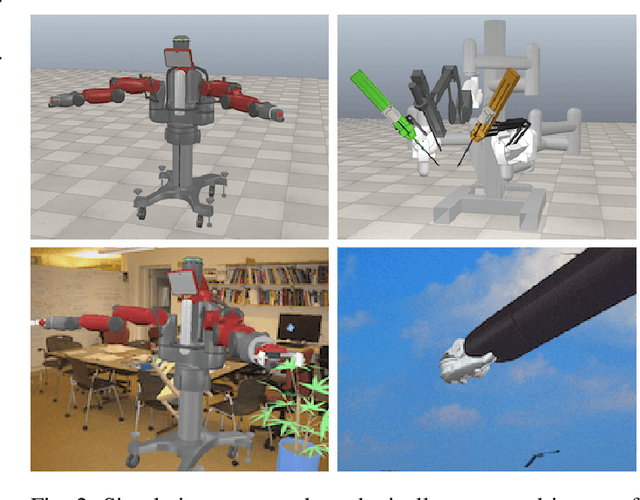

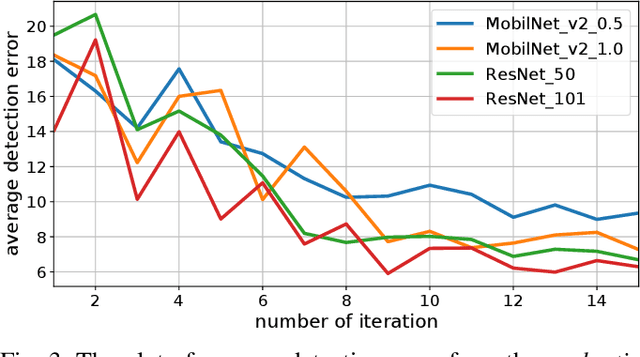

Robust Keypoint Detection and Pose Estimation of Robot Manipulators with Self-Occlusions via Sim-to-Real Transfer

Oct 15, 2020

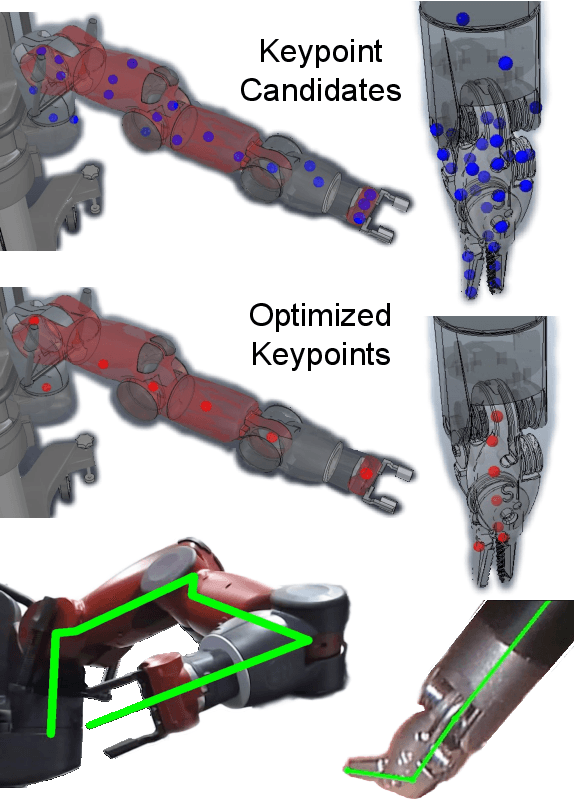

Abstract: Keypoint detection is an essential building block for many robotic applications like motion capture and pose estimation. Historically, keypoints are detected using uniquely engineered markers such as checkerboards, fiducials, or markers. More recently, deep learning methods have been explored as they have the ability to detect user-defined keypoints in a marker-less manner. However, deep neural network (DNN) detectors can have an uneven performance for different manually selected keypoints along the kinematic chain. An example of this can be found on symmetric robotic tools where DNN detectors cannot solve the correspondence problem correctly. In this work, we propose a new and autonomous way to define the keypoint locations that overcomes these challenges. The approach involves finding the optimal set of keypoints on robotic manipulators for robust visual detection. Using a robotic simulator as a medium, our algorithm utilizes synthetic data for DNN training, and the proposed algorithm is used to optimize the selection of keypoints through an iterative approach. The results show that when using the optimized keypoints, the detection performance of the DNNs improved so significantly that the keypoints can be detected even in cases of self-occlusion. We further use the optimized keypoints for real robotic applications by using domain randomization to bridge the reality gap between the simulator and the physical world. The physical world experiments show how the proposed method can be applied to the wide breadth of robotic applications that require visual feedback, such as camera-to-robot calibration, robotic tool tracking, and whole-arm pose estimation.

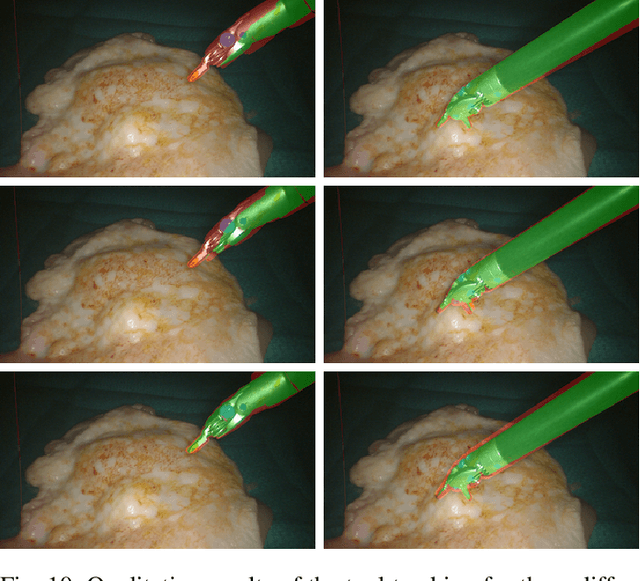

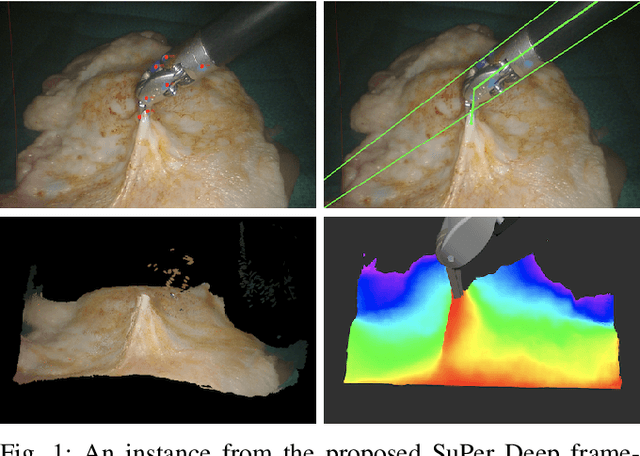

SuPer Deep: A Surgical Perception Framework for Robotic Tissue Manipulation using Deep Learning for Feature Extraction

Mar 07, 2020

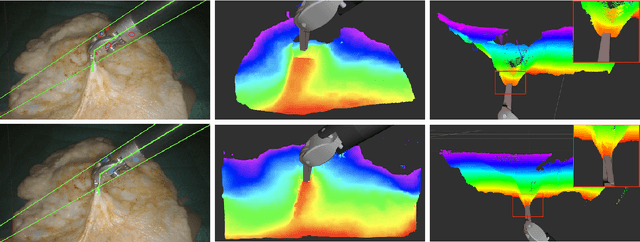

Abstract: Robotic automation in surgery requires precise tracking of surgical tools and mapping of deformable tissue. Previous works on surgical perception frameworks require significant effort in developing features for surgical tool and tissue tracking. In this work, we overcome the challenge by exploiting deep learning methods for surgical perception. We integrated deep neural networks, capable of efficient feature extraction, into the tissue reconstruction and instrument pose estimation processes. By leveraging transfer learning, the deep-learning-based approach requires minimal training data and reduced feature engineering efforts to fully perceive a surgical scene. The framework was tested on three publicly available datasets, which use the da Vinci Surgical System, for comprehensive analysis. Experimental results show that our framework achieves state-of-the-art tracking performance in a surgical environment by utilizing deep learning for feature extraction.

SuPer: A Surgical Perception Framework for Endoscopic Tissue Manipulation with Surgical Robotics

Sep 11, 2019

Abstract: Traditional control and task automation have been successfully demonstrated in a variety of structured, controlled environments through the use of highly specialized modeled robotic systems in conjunction with multiple sensors. However, application of autonomy in endoscopic surgery is very challenging, particularly in soft tissue work, due to the lack of high-quality images and the unpredictable, constantly deforming environment. In this work, we propose a novel surgical perception framework, SuPer, for surgical robotic control. This framework continuously collects 3D geometric information that allows for mapping of a deformable surgical field while tracking rigid instruments within the field. To achieve this, a model-based tracker is employed to localize the surgical tool with a kinematic prior in conjunction with a model-free tracker to reconstruct the deformable environment and provide an estimated point cloud as a mapping of the environment. The proposed framework was implemented on the da Vinci Surgical System in real time with an end-effector controller where the target configurations are set and regulated through the framework. Our proposed framework successfully completed autonomous soft tissue manipulation tasks with high accuracy. The demonstration of this novel framework is promising for the future of surgical autonomy. In addition, we provide our dataset for further surgical research.
