Jingge Wang

Transferable Mask Transformer: Cross-domain Semantic Segmentation with Region-adaptive Transferability Estimation

Apr 08, 2025

Abstract:Recent advances in Vision Transformers (ViTs) have set new benchmarks in semantic segmentation. However, when adapting pretrained ViTs to new target domains, significant performance degradation often occurs due to distribution shifts, resulting in suboptimal global attention. Since self-attention mechanisms are inherently data-driven, they may fail to effectively attend to key objects when source and target domains exhibit differences in texture, scale, or object co-occurrence patterns. While global and patch-level domain adaptation methods provide partial solutions, region-level adaptation with dynamically shaped regions is crucial due to spatial heterogeneity in transferability across different image areas. We present Transferable Mask Transformer (TMT), a novel region-level adaptation framework for semantic segmentation that aligns cross-domain representations through spatial transferability analysis. TMT consists of two key components: (1) An Adaptive Cluster-based Transferability Estimator (ACTE) that dynamically segments images into structurally and semantically coherent regions for localized transferability assessment, and (2) A Transferable Masked Attention (TMA) module that integrates region-specific transferability maps into ViTs' attention mechanisms, prioritizing adaptation in regions with low transferability and high semantic uncertainty. Comprehensive evaluations across 20 cross-domain pairs demonstrate TMT's superiority, achieving an average 2% MIoU improvement over vanilla fine-tuning and a 1.28% increase compared to state-of-the-art baselines. The source code will be publicly available.

Adapting Foundation Models for Few-Shot Medical Image Segmentation: Actively and Sequentially

Feb 03, 2025

Abstract:Recent advances in foundation models have brought promising results in computer vision, including medical image segmentation. Fine-tuning foundation models on specific low-resource medical tasks has become a standard practice. However, ensuring reliable and robust model adaptation when the target task has a large domain gap and few annotated samples remains a challenge. Previous few-shot domain adaptation (FSDA) methods seek to bridge the distribution gap between source and target domains by utilizing auxiliary data. The selection and scheduling of auxiliaries are often based on heuristics, which can easily cause negative transfer. In this work, we propose an Active and Sequential domain AdaPtation (ASAP) framework for dynamic auxiliary dataset selection in FSDA. We formulate FSDA as a multi-armed bandit problem and derive an efficient reward function to prioritize training on auxiliary datasets that align closely with the target task, through a single-round fine-tuning. Empirical validation on diverse medical segmentation datasets demonstrates that our method achieves favorable segmentation performance, significantly outperforming the state-of-the-art FSDA methods, achieving an average gain of 27.75% on MRI and 7.52% on CT datasets in Dice score. Code is available at the git repository: https://github.com/techicoco/ASAP.
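
As a rough illustration of treating auxiliary-dataset selection as a multi-armed bandit, the sketch below uses the generic UCB1 rule, with each arm standing for one auxiliary dataset. It is not the ASAP reward function or algorithm: `toy_reward`, `HIDDEN_MEANS`, and all other names are hypothetical, and the reward is a simulated stand-in for "how much one round of training on this auxiliary dataset helped the target task".

```python
import math
import random


def ucb1_select(counts, mean_rewards, step, c=2.0):
    """Return the index of the arm with the highest UCB1 score."""
    # Play every arm once before trusting the confidence bounds.
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    scores = [
        mean_rewards[arm] + math.sqrt(c * math.log(step) / counts[arm])
        for arm in range(len(counts))
    ]
    return max(range(len(scores)), key=scores.__getitem__)


def run_bandit(reward_fn, n_arms, n_rounds, seed=0):
    """Pick one auxiliary dataset per round and track running mean rewards."""
    rng = random.Random(seed)
    counts = [0] * n_arms
    mean_rewards = [0.0] * n_arms
    for step in range(1, n_rounds + 1):
        arm = ucb1_select(counts, mean_rewards, step)
        reward = reward_fn(arm, rng)
        counts[arm] += 1
        # Incremental update of the running mean for the chosen arm.
        mean_rewards[arm] += (reward - mean_rewards[arm]) / counts[arm]
    return counts, mean_rewards


# Hypothetical hidden usefulness of three auxiliary datasets (assumption).
HIDDEN_MEANS = [0.2, 0.5, 0.8]


def toy_reward(arm, rng):
    """Simulated noisy reward in [0, 1]; a placeholder, not the ASAP reward."""
    return min(1.0, max(0.0, rng.gauss(HIDDEN_MEANS[arm], 0.1)))


counts, means = run_bandit(toy_reward, n_arms=3, n_rounds=300)
```

In this toy setup the selection counts concentrate on the arm with the highest hidden mean, which is the behavior one would want from a scheduler that prioritizes auxiliaries aligned with the target task.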

CCIS-Diff: A Generative Model with Stable Diffusion Prior for Controlled Colonoscopy Image Synthesis

Nov 19, 2024

Abstract:Colonoscopy is crucial for identifying adenomatous polyps and preventing colorectal cancer. However, developing robust models for polyp detection is hindered by the limited size and accessibility of existing colonoscopy datasets. While previous efforts have attempted to synthesize colonoscopy images, current methods suffer from instability and insufficient data diversity. Moreover, these approaches lack precise control over the generation process, resulting in images that fail to meet clinical quality standards. To address these challenges, we propose CCIS-Diff, a Controlled generative model for high-quality Colonoscopy Image Synthesis based on a Diffusion architecture. Our method offers precise control over both the spatial attributes (polyp location and shape) and the clinical characteristics of polyps, so that generated images align with clinical descriptions. Specifically, we introduce a blur mask weighting strategy to seamlessly blend synthesized polyps with the colonic mucosa, and a text-aware attention mechanism to guide the generated images to reflect clinical characteristics. To achieve this, we construct a new multi-modal colonoscopy dataset that integrates images, mask annotations, and corresponding clinical text descriptions. Experimental results demonstrate that our method generates high-quality, diverse colonoscopy images with fine control over both spatial constraints and clinical consistency, offering valuable support for downstream segmentation and diagnostic tasks.

Selecting the Best Sequential Transfer Path for Medical Image Segmentation with Limited Labeled Data

Oct 09, 2024

Abstract:The medical image processing field often encounters the critical issue of scarce annotated data. Transfer learning has emerged as a solution, yet how to select an adequate source task and effectively transfer the knowledge to the target task remains challenging. To address this, we propose a novel sequential transfer scheme with a task affinity metric tailored for medical images. Considering the characteristics of medical image segmentation tasks, we analyze the image and label similarity between tasks and compute the task affinity scores, which assess the relatedness among tasks. Based on this, we select appropriate source tasks and develop an effective sequential transfer strategy by incorporating intermediate source tasks to gradually narrow the domain discrepancy and minimize the transfer cost. Thereby we identify the best sequential transfer path for the given target task. Extensive experiments on three MRI medical datasets, FeTS 2022, iSeg-2019, and WMH, demonstrate the efficacy of our method in finding the best source sequence. Compared with directly transferring from a single source task, the sequential transfer results underline a significant improvement in target task performance, achieving an average of 2.58% gain in terms of segmentation Dice score, notably, 6.00% for FeTS 2022. Code is available at the git repository.

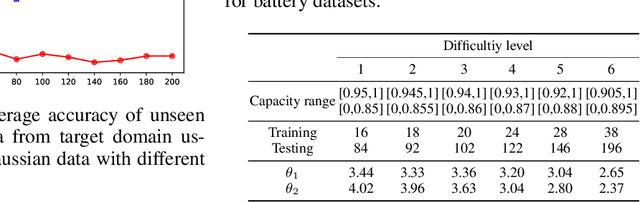

Enhancing Continuous Domain Adaptation with Multi-Path Transfer Curriculum

Feb 26, 2024

Abstract:Addressing the large distribution gap between training and testing data has long been a challenge in machine learning, giving rise to fields such as transfer learning and domain adaptation. Recently, Continuous Domain Adaptation (CDA) has emerged as an effective technique, closing this gap by utilizing a series of intermediate domains. This paper contributes a novel CDA method, W-MPOT, which rigorously addresses the domain ordering and error accumulation problems overlooked by previous studies. Specifically, we construct a transfer curriculum over the source and intermediate domains based on Wasserstein distance, motivated by theoretical analysis of CDA. Then we transfer the source model to the target domain through multiple valid paths in the curriculum using a modified version of continuous optimal transport. A bidirectional path consistency constraint is introduced to mitigate the impact of accumulated mapping errors during continuous transfer. We extensively evaluate W-MPOT on multiple datasets, achieving up to 54.1% accuracy improvement on multi-session Alzheimer MR image classification and 94.7% MSE reduction on battery capacity estimation.
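
To make the idea of ordering domains by Wasserstein distance concrete, here is a minimal sketch that ranks domains by their empirical 1-D Wasserstein-1 distance to a reference sample. It is an illustration under simplifying assumptions (scalar features, equal sample sizes), not the W-MPOT curriculum itself; the abstract does not say which domain serves as the reference, and the function names and toy data are hypothetical.

```python
def wasserstein_1d(xs, ys):
    """Empirical 1-D Wasserstein-1 distance between two equal-size samples."""
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equal-size samples")
    # In one dimension the optimal coupling matches sorted samples in order.
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


def order_domains(reference, domains):
    """Sort domain names by increasing Wasserstein distance from the reference."""
    return sorted(
        domains,
        key=lambda name: wasserstein_1d(reference, domains[name]),
    )


# Toy scalar "features"; names and values are illustrative only.
reference = [0.0, 1.0, 2.0, 3.0]
domains = {
    "far": [4.0, 5.0, 6.0, 7.0],
    "near": [0.5, 1.5, 2.5, 3.5],
    "mid": [2.0, 3.0, 4.0, 5.0],
}
curriculum = order_domains(reference, domains)
```

Here `curriculum` lists the domains from closest to farthest, so a model could be adapted through them in small steps rather than in one large jump.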

Generalizing to Unseen Domains with Wasserstein Distributional Robustness under Limited Source Knowledge

Jul 11, 2022

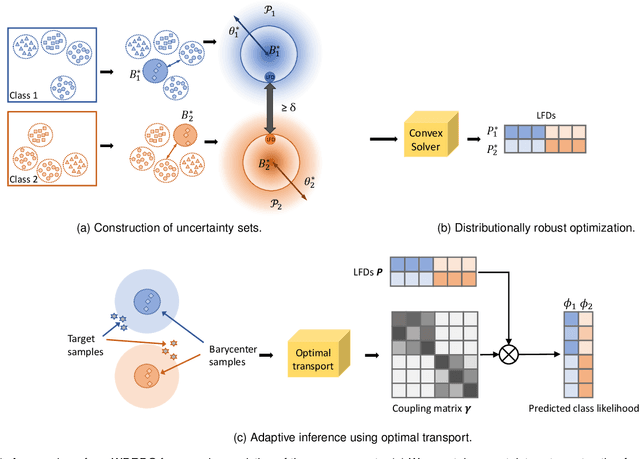

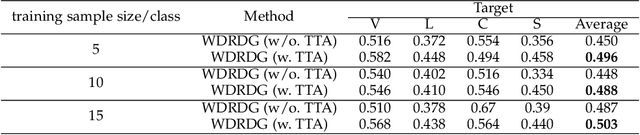

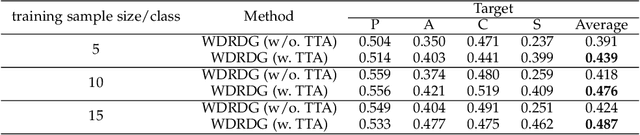

Abstract:Domain generalization aims at learning a universal model that performs well on unseen target domains, incorporating knowledge from multiple source domains. In this research, we consider the scenario where different domain shifts occur among conditional distributions of different classes across domains. When labeled samples in the source domains are limited, existing approaches are not sufficiently robust. To address this problem, we propose a novel domain generalization framework called Wasserstein Distributionally Robust Domain Generalization (WDRDG), inspired by the concept of distributionally robust optimization. We encourage robustness over conditional distributions within class-specific Wasserstein uncertainty sets and optimize the worst-case performance of a classifier over these uncertainty sets. We further develop a test-time adaptation module leveraging optimal transport to quantify the relationship between the unseen target domain and source domains to make adaptive inference for target data. Experiments on the Rotated MNIST, PACS and the VLCS datasets demonstrate that our method could effectively balance the robustness and discriminability in challenging generalization scenarios.

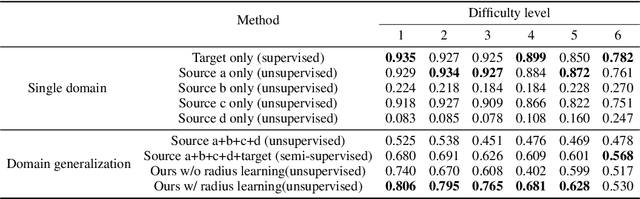

Class-conditioned Domain Generalization via Wasserstein Distributional Robust Optimization

Sep 08, 2021

Abstract:Given multiple source domains, domain generalization aims at learning a universal model that performs well on any unseen but related target domain. In this work, we focus on the domain generalization scenario where domain shifts occur among class-conditional distributions of different domains. Existing approaches are not sufficiently robust when the variation of conditional distributions given the same class is large. In this work, we extend the concept of distributional robust optimization to solve the class-conditional domain generalization problem. Our approach optimizes the worst-case performance of a classifier over class-conditional distributions within a Wasserstein ball centered around the barycenter of the source conditional distributions. We also propose an iterative algorithm for learning the optimal radius of the Wasserstein balls automatically. Experiments show that the proposed framework has better performance on unseen target domain than approaches without domain generalization.
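
For readers unfamiliar with distributionally robust optimization, the worst-case objective described above can be written schematically for a single class $c$. The notation is an assumption introduced here, not taken from the paper: $\bar{P}_c$ denotes the barycenter of the source class-conditional distributions, $\epsilon_c$ the ball radius, $W$ the Wasserstein distance, $\ell$ a loss, and $f$ the classifier. How the per-class terms are combined is not specified in the abstract.

```latex
\min_{f} \; \sup_{Q_c \,:\, W(Q_c, \bar{P}_c) \le \epsilon_c}
  \mathbb{E}_{x \sim Q_c}\bigl[\ell\bigl(f(x), c\bigr)\bigr]
```

The inner supremum searches the Wasserstein ball for the class-conditional distribution on which $f$ performs worst, and the outer minimization chooses the classifier that is best under that worst case.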
