Jiefu Zhang

Towards Reliable LLM Evaluation: Correcting the Winner's Curse in Adaptive Benchmarking

May 07, 2026

Abstract:Adaptive prompt and program search makes LLM evaluation selection-sensitive. Once benchmark items are reused inside tuning, the observed winner's score need not estimate the fresh-data performance of the full tune-then-deploy procedure. We study inference for this procedure-level target under explicit tuning budgets. We propose SIREN, a selection-aware repeated-split reporting protocol that freezes the post-search shortlist, separates splitwise selection from held-out evaluation, and uses an item-level Gaussian multiplier bootstrap for uncertainty quantification. In a fixed-shortlist regime with smooth stabilized selection, the estimator admits a first-order item-level representation, and the bootstrap yields valid simultaneous inference on a finite budget grid. This supports confidence intervals for procedure-performance curves and pre-specified equal-budget and cross-budget comparisons. Controlled simulations and MMLU-Pro tuning experiments show that winner-based reporting can be optimistic and can change deployment conclusions, while SIREN remains close to the finite-sample reporting target.
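
The item-level Gaussian multiplier bootstrap mentioned above can be illustrated with a generic sketch. This is not the SIREN protocol itself: it omits the repeated splits, shortlist freezing, and selection step, and it assumes a hypothetical `scores` matrix of held-out item-level scores with one column per tuning budget. The function name and parameters are illustrative only.

```python
import numpy as np

def multiplier_bootstrap_band(scores, n_boot=2000, alpha=0.05, seed=0):
    """Simultaneous confidence band for column means of `scores`.

    scores: (n_items, n_budgets) array of item-level scores (assumed layout).
    Returns (means, lower, upper), each of shape (n_budgets,).
    """
    scores = np.asarray(scores, dtype=float)
    n = scores.shape[0]
    rng = np.random.default_rng(seed)

    means = scores.mean(axis=0)
    centered = scores - means
    sd = centered.std(axis=0, ddof=1)
    sd = np.where(sd > 0, sd, 1.0)  # guard against constant columns

    # One standard Gaussian multiplier per item and bootstrap draw.
    xi = rng.standard_normal((n_boot, n))
    boot = xi @ centered / np.sqrt(n)          # (n_boot, n_budgets)

    # The max over budgets of the studentized statistic gives
    # a single critical value valid for the whole budget grid.
    max_stat = np.abs(boot / sd).max(axis=1)
    crit = np.quantile(max_stat, 1.0 - alpha)

    half_width = crit * sd / np.sqrt(n)
    return means, means - half_width, means + half_width

# Synthetic example: 500 items, 4 budgets with increasing accuracy.
rng = np.random.default_rng(1)
p = np.array([0.55, 0.60, 0.63, 0.65])
scores = (rng.random((500, 4)) < p).astype(float)
means, lower, upper = multiplier_bootstrap_band(scores)
```

Using the maximum over the budget grid, rather than a separate quantile per budget, is what makes the resulting band simultaneous instead of pointwise.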

Task-Agnostic Exoskeleton Control Supports Elderly Joint Energetics during Hip-Intensive Tasks

Mar 23, 2026

Abstract:Age-related mobility decline is frequently accompanied by a redistribution of joint kinetics, where older adults compensate for reduced ankle function by increasing demand on the hip. Paradoxically, this compensatory shift typically coincides with age-related reductions in maximal hip power. Although robotic exoskeletons can provide immediate energetic benefits, conventional control strategies have limited previous studies in this population to specific tasks such as steady-state walking, which do not fully reflect mobility demands in the home and community. Here, we implement a task-agnostic hip exoskeleton controller that is inherently sensitive to joint power and validate its efficacy in eight older adults. Across a battery of hip-intensive activities that included level walking, ramp ascent, stair climbing, and sit-to-stand transitions, the exoskeleton matched biological power profiles with high accuracy (mean cosine similarity 0.89). Assistance significantly reduced sagittal plane biological positive work by 24.7% at the hip and by 9.3% for the lower limb, while simultaneously augmenting peak total (biological + exoskeleton) hip power and reducing peak biological hip power. These results suggest that hip exoskeletons can potentially enhance endurance through biological work reduction, and increase functional reserve through total power augmentation, serving as a promising biomechanical intervention to support older adults' mobility.
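
The two metrics named in the abstract, cosine similarity between power profiles and positive work, can be computed along the following lines. This is a minimal sketch under assumed conventions (uniformly sampled power in watts, trapezoidal integration); the abstract does not specify the authors' processing pipeline, and the function names are hypothetical.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine of the angle between two equally sampled power profiles."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def positive_work(power, dt):
    """Approximate positive work (J): trapezoidal integral of max(power, 0).

    power: joint power samples in W; dt: sample spacing in s.
    Clipping before integrating is an approximation near zero crossings.
    """
    p = np.maximum(np.asarray(power, dtype=float), 0.0)
    return float(np.sum(0.5 * (p[1:] + p[:-1]) * dt))

# Synthetic example: exoskeleton power as a scaled copy of biological power.
t = np.linspace(0.0, 1.0, 101)
bio = np.sin(2 * np.pi * t)
exo = 0.3 * bio
sim = cosine_similarity(bio, exo)
work = positive_work(bio, dt=t[1] - t[0])
```

Cosine similarity is scale-invariant, so it measures agreement in the shape of the two profiles rather than in their magnitude.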

Optimal Energy Shaping Control for a Backdrivable Hip Exoskeleton

Oct 07, 2022

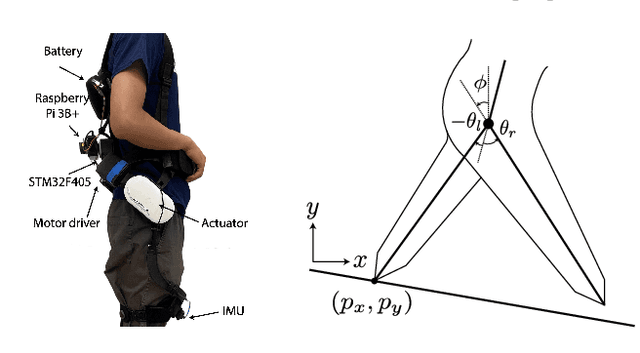

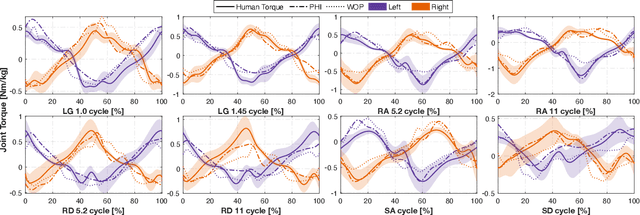

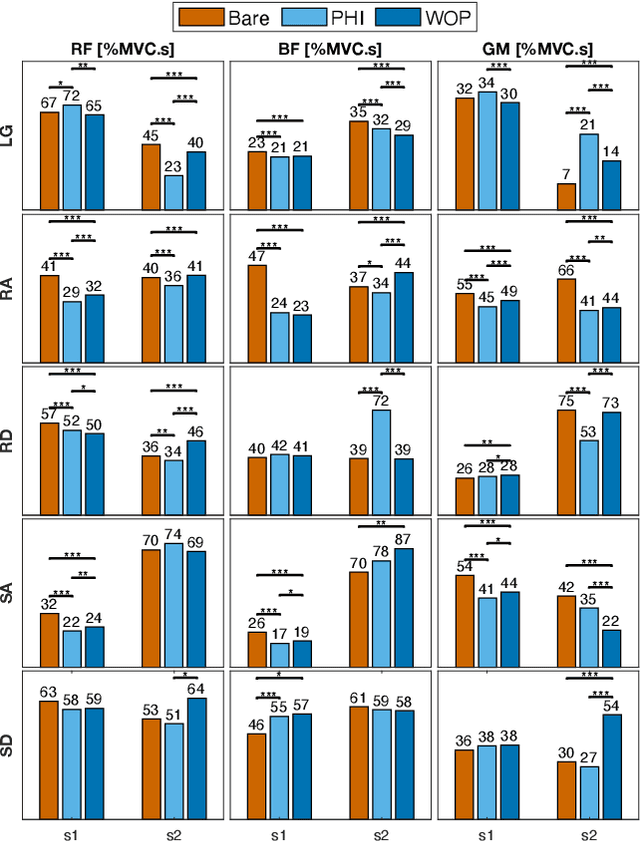

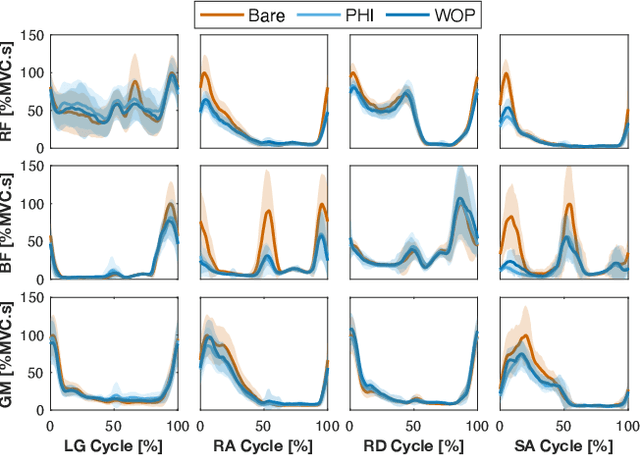

Abstract:Task-dependent controllers widely used in exoskeletons track predefined trajectories, which overly constrain the volitional motion of individuals with remnant voluntary mobility. Energy shaping, on the other hand, provides task-invariant assistance by altering the human body's dynamic characteristics in the closed loop. While human-exoskeleton systems are often modeled using Euler-Lagrange equations, in our previous work we modeled the system as a port-controlled Hamiltonian system, and a task-invariant controller was designed for a knee-ankle exoskeleton using interconnection-damping assignment passivity-based control. In this paper, we extend this framework to design a controller for a backdrivable hip exoskeleton to assist multiple tasks. A set of basis functions that contain kinematic information is selected and the corresponding coefficients are optimized, which allows the controller to provide torque that fits normative human torque for different activities of daily life. Human-subject experiments with two able-bodied subjects demonstrated the controller's capability to reduce muscle effort across different tasks.

Learning the mapping $\mathbf{x}\mapsto \sum_{i=1}^d x_i^2$: the cost of finding the needle in a haystack

Feb 24, 2020

Abstract:The task of using machine learning to approximate the mapping $\mathbf{x}\mapsto\sum_{i=1}^d x_i^2$ with $x_i\in[-1,1]$ seems to be a trivial one. Given the knowledge of the separable structure of the function, one can design a sparse network to represent the function very accurately, or even exactly. When such structural information is not available, and we may only use a dense neural network, the optimization procedure to find the sparse network embedded in the dense network is similar to finding the needle in a haystack, using a given number of samples of the function. We demonstrate that the cost (measured by sample complexity) of finding the needle is directly related to the Barron norm of the function. While only a small number of samples is needed to train a sparse network, the dense network trained with the same number of samples exhibits large test loss and a large generalization gap. In order to control the size of the generalization gap, we find that the use of explicit regularization becomes increasingly important as $d$ increases. The numerically observed sample complexity with explicit regularization scales as $\mathcal{O}(d^{2.5})$, which is in fact better than the theoretically predicted sample complexity that scales as $\mathcal{O}(d^{4})$. Without explicit regularization (that is, relying on implicit regularization alone), the numerically observed sample complexity is significantly higher and is close to $\mathcal{O}(d^{4.5})$.
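
To make the "sparse network" idea concrete, the sketch below hand-builds a separable one-hidden-layer ReLU network in which each coordinate passes through the same piecewise-linear interpolant of $t^2$ on $[-1,1]$. This construction is an illustration chosen here, not necessarily the architecture used in the paper; `n_knots` and the function names are assumptions.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def sparse_sum_of_squares(x, n_knots=32):
    """Separable ReLU approximation of sum_i x_i^2 for x_i in [-1, 1].

    x: array of shape (..., d). Each coordinate uses its own copy of a
    1-D piecewise-linear interpolant of t^2 with n_knots intervals, so
    no hidden unit mixes coordinates.
    """
    x = np.asarray(x, dtype=float)
    knots = np.linspace(-1.0, 1.0, n_knots + 1)
    # Slope of the chord of t^2 on [t_k, t_{k+1}] is t_k + t_{k+1}.
    slopes = knots[:-1] + knots[1:]
    # Output weight of relu(t - t_k) is the slope change at knot k.
    coeffs = np.diff(slopes, prepend=0.0)
    hidden = relu(x[..., None] - knots[:-1])   # (..., d, n_knots)
    per_coord = 1.0 + hidden @ coeffs          # interpolant value, f(-1) = 1
    return per_coord.sum(axis=-1)

# Compare with the exact function on random inputs in [-1, 1]^d.
rng = np.random.default_rng(0)
d, n_knots = 10, 32
x = rng.uniform(-1.0, 1.0, size=(1000, d))
exact = (x ** 2).sum(axis=1)
approx = sparse_sum_of_squares(x, n_knots=n_knots)
h = 2.0 / n_knots
max_err = np.abs(approx - exact).max()
bound = d * h ** 2 / 4.0   # linear-interpolation error bound for t^2, summed over d
```

The network has only `d * n_knots` hidden units and its error grows linearly in $d$ for fixed knot spacing, which contrasts with the dense-network sample complexities quoted in the abstract.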

Deep Density: circumventing the Kohn-Sham equations via symmetry preserving neural networks

Nov 27, 2019

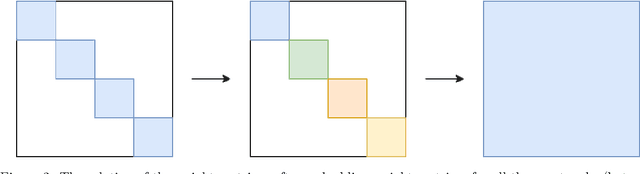

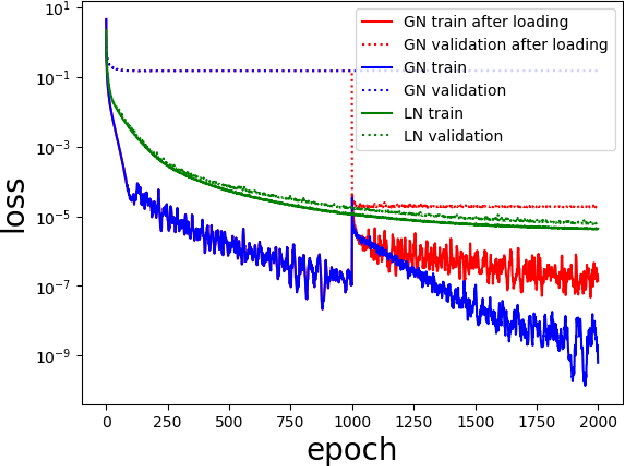

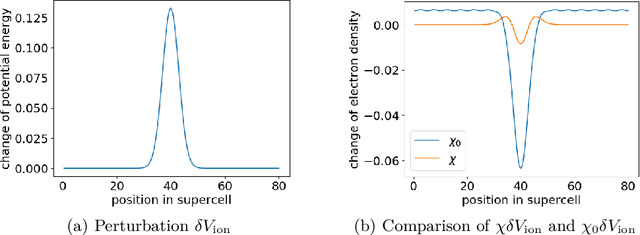

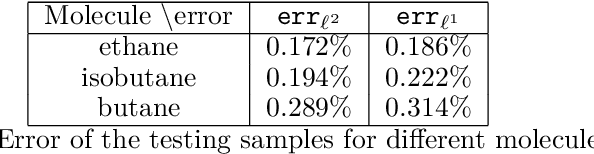

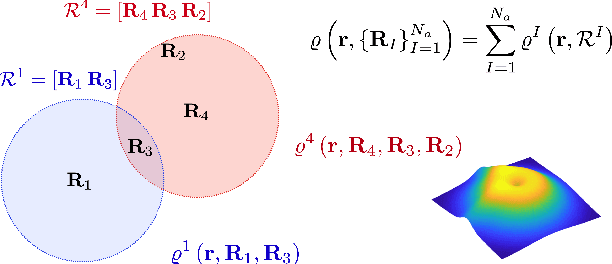

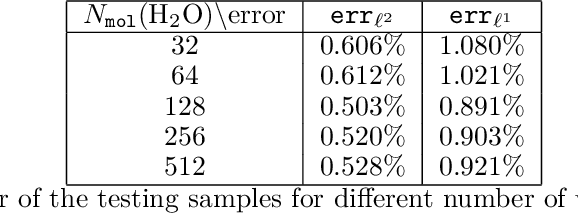

Abstract:The recently developed Deep Potential [Phys. Rev. Lett. 120, 143001, 2018] is a powerful method to represent general inter-atomic potentials using deep neural networks. The success of Deep Potential rests on the proper treatment of locality and symmetry properties of each component of the network. In this paper, we leverage its network structure to effectively represent the mapping from the atomic configuration to the electron density in Kohn-Sham density functional theory (KS-DFT). By directly targeting the self-consistent electron density, we demonstrate that the adapted network architecture, called the Deep Density, can effectively represent the electron density as the linear combination of contributions from many local clusters. The network is constructed to satisfy the translation, rotation, and permutation symmetries, and is designed to be transferable to different system sizes. We demonstrate that using a relatively small number of training snapshots, Deep Density achieves excellent performance for one-dimensional insulating and metallic systems, as well as systems with mixed insulating and metallic characters. We also demonstrate its performance for real three-dimensional systems, including small organic molecules, as well as extended systems such as water (up to $512$ molecules) and aluminum (up to $256$ atoms).
