Ittai Dayan

Development and Validation of a Deep Learning Model for Prediction of Severe Outcomes in Suspected COVID-19 Infection

Mar 29, 2021

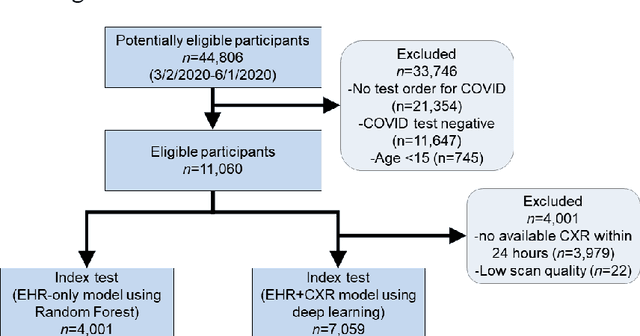

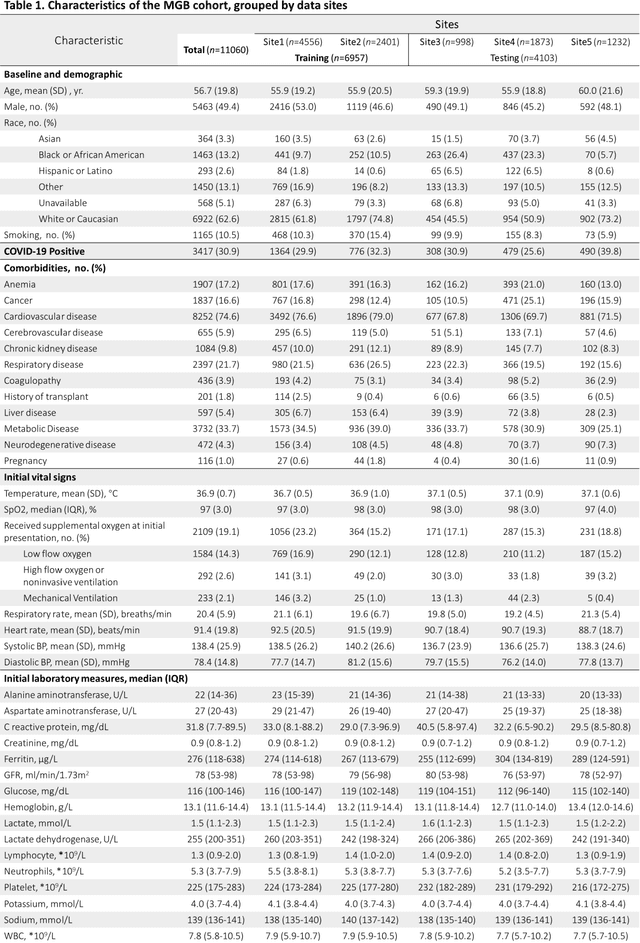

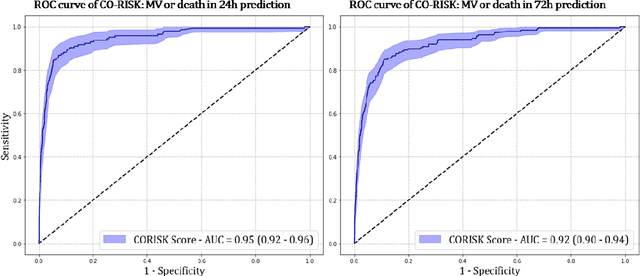

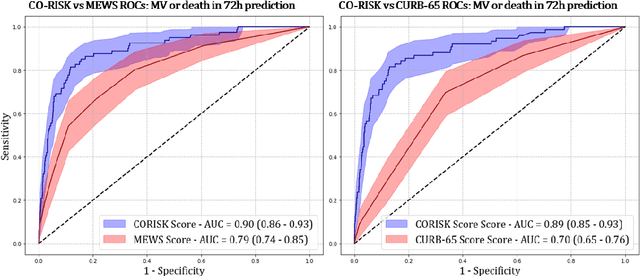

Abstract: Triaging COVID-19 patients by predicted outcome at first presentation to the emergency department (ED) is crucial for improving patient prognosis, managing hospital resources, and controlling cross-infection. We trained a deep feature fusion model to predict patient outcomes. The model inputs were EHR data, including demographic information, comorbidities, vital signs, and laboratory measurements, together with the patient's CXR images. The model output was the patient outcome, defined as the most intensive oxygen therapy required. For patients without CXR images, we used a Random Forest method for the prediction. Predictive risk scores for severe COVID-19 outcomes (the "CO-RISK" score) were derived from the model output, evaluated on the testing dataset, and compared to human performance. The study's dataset (the "MGB COVID Cohort") was constructed from all patients presenting to the Mass General Brigham (MGB) healthcare system from March 1st to June 1st, 2020. ED visits with incomplete or erroneous data were excluded, as were patients with no COVID test order or with confirmed negative test results, and patients under the age of 15. In total, electronic health record (EHR) data from 11,060 patients with confirmed or suspected COVID-19 were used in this study. Chest X-ray (CXR) images were also collected from each patient when available. On the testing dataset, the CO-RISK score achieved an area under the curve (AUC) of 0.95 for predicting MV/death (i.e., severe outcomes) within 24 hours, and 0.92 within 72 hours. The model outperforms the risk scores commonly used in the ED (CURB-65 and MEWS). Compared with physicians' decisions, the CO-RISK score also showed superior performance in making ICU/floor decisions.
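As a rough illustration of the AUC metric reported above, the sketch below computes AUC from risk scores as the fraction of (positive, negative) pairs in which the positive case receives the higher score, with ties counted as one half. The function name `auc_from_scores` and the toy scores are hypothetical and are not taken from the study.

```python
def auc_from_scores(scores, labels):
    """Area under the ROC curve via pairwise comparison.

    scores: risk scores (higher means more likely severe outcome).
    labels: 1 for a severe outcome, 0 otherwise.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")

    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): four patients, two with severe outcomes.
toy_scores = [0.9, 0.8, 0.3, 0.2]
toy_labels = [1, 0, 1, 0]
toy_auc = auc_from_scores(toy_scores, toy_labels)
```

The pairwise form is quadratic in the number of patients; for a cohort of this size a rank-based implementation from a statistics library would be the usual choice.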

Deep Metric Learning-based Image Retrieval System for Chest Radiograph and its Clinical Applications in COVID-19

Nov 26, 2020

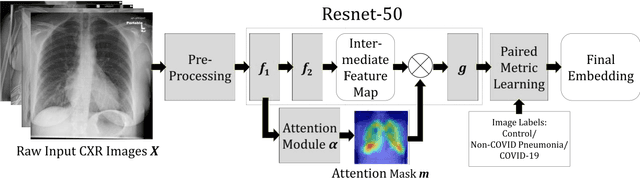

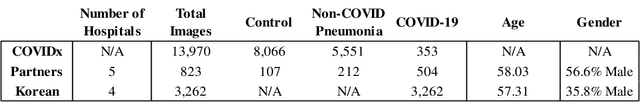

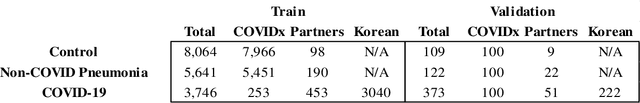

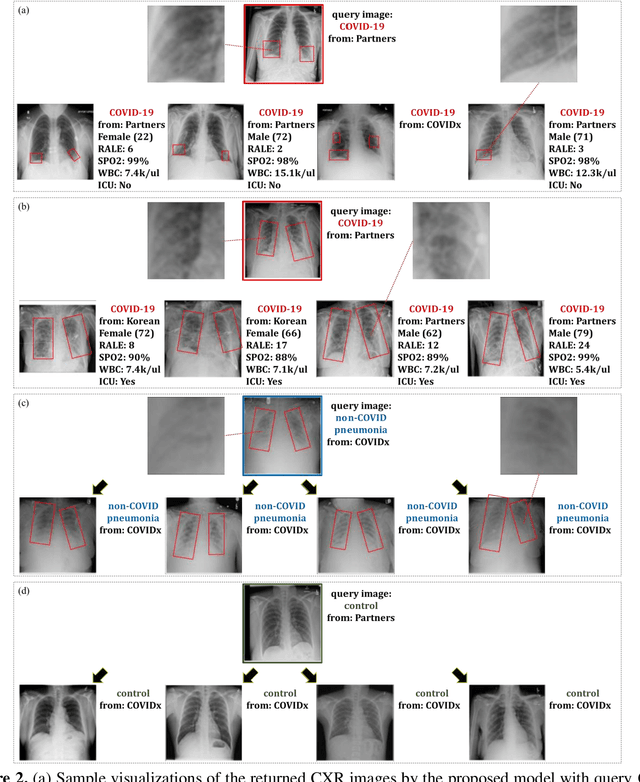

Abstract: In recent years, deep learning-based image analysis methods have been widely applied in computer-aided detection, diagnosis, and prognosis, and have shown their value during the public health crisis of the novel coronavirus disease 2019 (COVID-19) pandemic. Chest radiography (CXR) has played a crucial role in COVID-19 patient triaging, diagnosis, and monitoring, particularly in the United States. Given the mixed and nonspecific signals in CXR, an image retrieval model that provides both similar images and associated clinical information can be more clinically meaningful than a direct image diagnostic model. In this work we develop a novel CXR image retrieval model based on deep metric learning. Unlike traditional diagnostic models, which aim to learn a direct mapping from images to labels, the proposed model aims to learn an optimized embedding space of images, in which images with the same labels and similar contents are pulled together. It uses a multi-similarity loss with a hard-mining sampling strategy and an attention mechanism to learn this embedding space, and returns images similar to the query image. The model is trained and validated on an international multi-site COVID-19 dataset collected from 3 different sources. Experimental results on COVID-19 image retrieval and diagnosis tasks show that the proposed model can serve as a robust solution for CXR analysis and patient management for COVID-19. The model is also tested for transferability to a different clinical decision support task, where the pre-trained model is applied to extract image features from a new dataset without any further training. These results demonstrate that our deep metric learning-based image retrieval model is highly efficient in CXR retrieval, diagnosis, and prognosis, and thus has great clinical value for the treatment and management of COVID-19 patients.
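To make the idea of "pulling together" same-label images more concrete, here is a minimal sketch of a multi-similarity-style loss over a small batch of embeddings. It is a simplified illustration only: it omits the hard-mining step and the attention mechanism mentioned in the abstract, assumes cosine similarity, and uses illustrative hyperparameter values (`alpha`, `beta`, `lam`) that are not taken from the paper.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def multi_similarity_loss(embeddings, labels, alpha=2.0, beta=50.0, lam=0.5):
    """Simplified multi-similarity loss (no pair mining).

    For each anchor, same-label pairs are penalized when their similarity
    falls below the margin `lam`, and different-label pairs are penalized
    when their similarity rises above it.
    """
    n = len(embeddings)
    total = 0.0
    for i in range(n):
        pos_sum = 0.0
        neg_sum = 0.0
        for k in range(n):
            if k == i:
                continue
            s = cosine(embeddings[i], embeddings[k])
            if labels[k] == labels[i]:
                pos_sum += math.exp(-alpha * (s - lam))
            else:
                neg_sum += math.exp(beta * (s - lam))
        total += math.log1p(pos_sum) / alpha + math.log1p(neg_sum) / beta
    return total / n


# Toy 2-D embeddings (hypothetical): two tight clusters.
toy_embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
loss_consistent = multi_similarity_loss(toy_embeddings, [0, 0, 1, 1])
loss_shuffled = multi_similarity_loss(toy_embeddings, [0, 1, 0, 1])
```

When the labels agree with the clusters, the loss is small; assigning labels that cut across the clusters raises it, which is the signal a training loop would use to reshape the embedding space.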

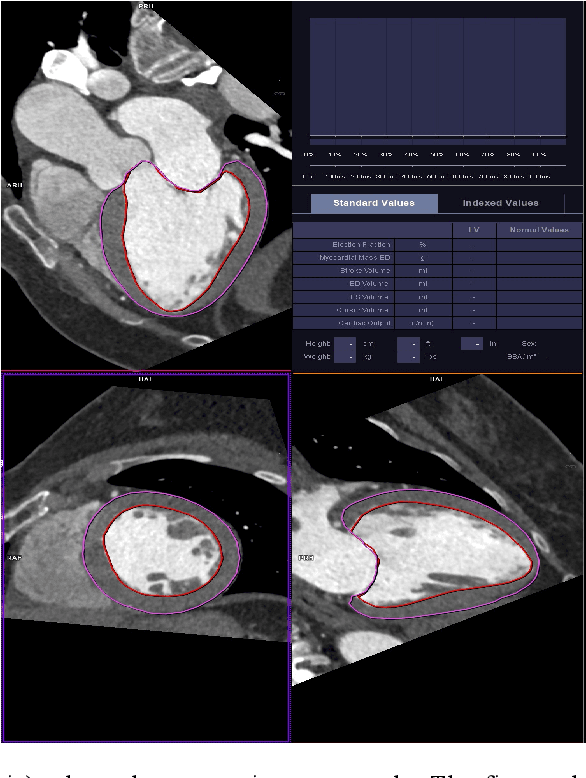

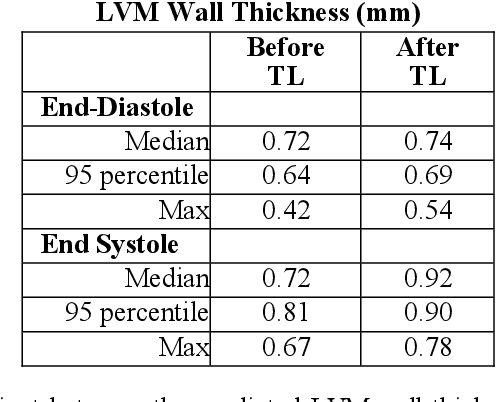

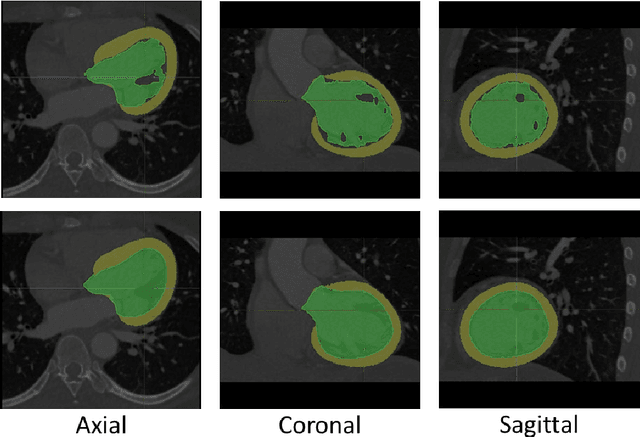

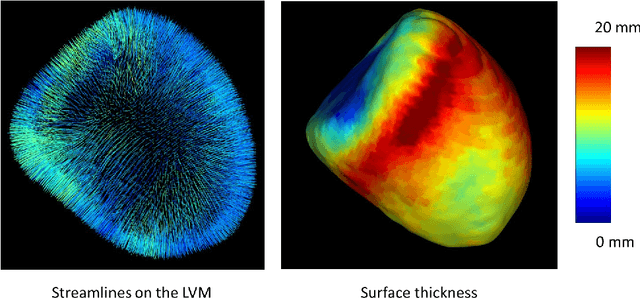

Democratizing Artificial Intelligence in Healthcare: A Study of Model Development Across Two Institutions Incorporating Transfer Learning

Sep 25, 2020

Abstract: Training deep learning models typically requires extensive data, yet large, well-curated medical-image datasets are not readily available for developing artificial intelligence (AI) models in radiology. Recognizing the potential for transfer learning (TL) to allow a fully trained model from one institution to be fine-tuned by another institution using a much smaller local dataset, this report describes the challenges, methodology, and benefits of TL in the context of developing an AI model for a basic use case: segmentation of the left ventricular myocardium (LVM) on images from 4-dimensional coronary computed tomography angiography. Comparing LVM segmentations predicted by a model trained locally from random initialization with those from a model whose training was enhanced by TL, our results showed that a use-case model initialized by TL can be developed from sparse labels with acceptable performance. This process reduces the time required to build a new model in the clinical environment at a different institution.

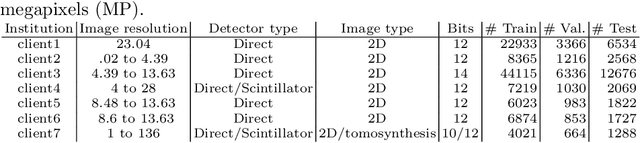

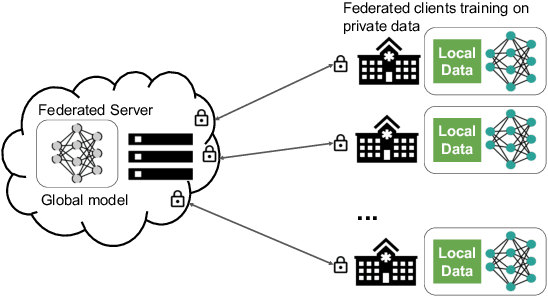

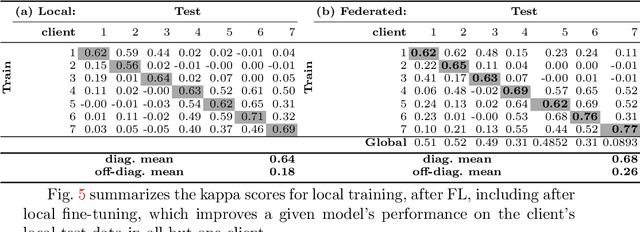

Federated Learning for Breast Density Classification: A Real-World Implementation

Sep 17, 2020

Abstract: Building robust deep learning-based models requires large quantities of diverse training data. In this study, we investigate the use of federated learning (FL) to build medical imaging classification models in a real-world collaborative setting. Seven clinical institutions from across the world joined this FL effort to train a model for breast density classification based on the Breast Imaging, Reporting & Data System (BI-RADS). We show that, despite substantial differences among the datasets from the sites (mammography system, class distribution, and dataset size) and without centralizing data, we can successfully train AI models in federation. The results show that models trained using FL perform on average 6.3% better than their counterparts trained on an institute's local data alone. Furthermore, we show a 45.8% relative improvement in the models' generalizability when evaluated on the other participating sites' testing data.
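The abstract does not say how the sites' model updates were combined. One common scheme is federated averaging, in which a server averages each parameter across sites, weighted by local dataset size, so that raw data never leaves a site. The sketch below shows only that aggregation step; the function name `federated_average` and the toy numbers are hypothetical.

```python
def federated_average(site_weights, site_sizes):
    """Weighted average of per-site model parameters.

    site_weights: one flat list of parameters per site, all the same length.
    site_sizes:   number of local training examples at each site.
    """
    if len(site_weights) != len(site_sizes) or not site_weights:
        raise ValueError("need one size per site and at least one site")
    n_params = len(site_weights[0])
    if any(len(w) != n_params for w in site_weights):
        raise ValueError("all sites must share the same parameter layout")

    total = float(sum(site_sizes))
    averaged = []
    for j in range(n_params):
        # Sites with more data contribute proportionally more.
        weighted_sum = sum(w[j] * n for w, n in zip(site_weights, site_sizes))
        averaged.append(weighted_sum / total)
    return averaged


# Toy round (hypothetical): two sites, two parameters each.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
```

In a full round, each site would start from the current global weights, train locally for a few steps, and send back only its updated parameters for this averaging step.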
