Haoran Fan

SLICE: Semantic Latent Injection via Compartmentalized Embedding for Image Watermarking

Mar 13, 2026

Abstract: Watermarking the initial noise of diffusion models has emerged as a promising approach for image provenance, but content-independent noise patterns can be forged via inversion and regeneration attacks. Recent semantic-aware watermarking methods improve robustness by conditioning verification on image semantics. However, their reliance on a single global semantic binding makes them vulnerable to localized but globally coherent semantic edits. To address this limitation and provide a trustworthy semantic-aware watermark, we propose Semantic Latent Injection via Compartmentalized Embedding (SLICE). Our framework decouples image semantics into four semantic factors (subject, environment, action, and detail) and precisely anchors each of them to a distinct region of the initial Gaussian noise. This fine-grained semantic binding enables advanced watermark verification in which semantic tampering is both detectable and localizable. We theoretically justify why SLICE enables robust and reliable tamper localization and provides statistical guarantees on false-accept rates. Experimental results demonstrate that SLICE significantly outperforms existing baselines against advanced semantic-guided regeneration attacks, substantially reducing attack success while preserving image quality and semantic fidelity. Overall, SLICE offers a practical, training-free provenance solution that is both fine-grained in diagnosis and robust to realistic adversarial manipulations.
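
To make the idea of compartmentalized binding concrete, here is a minimal sketch, not the authors' implementation. It assumes the initial noise can be treated as a flat vector split into four equal regions, that each region is a standard Gaussian pattern seeded by a SHA-256 hash of its factor description, and that verification correlates each region of the recovered noise (for example, obtained by inversion) with the pattern regenerated from the factors re-extracted from the image. The helper names (`embed`, `verify`, `region_pattern`) and the 0.5 threshold are hypothetical, and the paper's statistical false-accept guarantee is not reproduced here.

```python
import hashlib
import math
import random

# Hypothetical fixed ordering of the four semantic factors; factor i owns region i.
FACTORS = ("subject", "environment", "action", "detail")


def seed_from_text(text: str) -> int:
    """Derive a deterministic 64-bit seed from a factor description."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def region_pattern(text: str, size: int) -> list[float]:
    """Standard Gaussian samples for one compartment, keyed by its semantic factor."""
    rng = random.Random(seed_from_text(text))
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


def embed(factors: dict[str, str], region_size: int) -> list[float]:
    """Build the initial noise by concatenating one keyed compartment per factor."""
    noise: list[float] = []
    for name in FACTORS:
        noise.extend(region_pattern(factors[name], region_size))
    return noise


def correlation(a: list[float], b: list[float]) -> float:
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def verify(recovered: list[float], factors: dict[str, str],
           region_size: int, threshold: float = 0.5) -> dict[str, bool]:
    """Per-factor verdicts: a region fails if it no longer matches its factor's pattern."""
    report = {}
    for i, name in enumerate(FACTORS):
        segment = recovered[i * region_size:(i + 1) * region_size]
        expected = region_pattern(factors[name], region_size)
        report[name] = correlation(segment, expected) >= threshold
    return report


original = {"subject": "a red fox", "environment": "snowy forest",
            "action": "leaping", "detail": "golden hour light"}
noise = embed(original, region_size=1024)

# Stand-in for imperfect inversion: add independent Gaussian error to the noise.
jitter = random.Random(0)
recovered = [x + jitter.gauss(0.0, 0.5) for x in noise]

print(verify(recovered, original, 1024))                         # untouched image
print(verify(recovered, dict(original, action="sleeping"), 1024))  # edited action
```

Because each factor owns its own region, an edit that changes only the action leaves the other three correlations high, so the failure is localized to the "action" compartment rather than reported as a single global mismatch.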

Small but Mighty: Enhancing Time Series Forecasting with Lightweight LLMs

Mar 05, 2025

Abstract: While LLMs have demonstrated remarkable potential in time series forecasting, their practical deployment remains constrained by excessive computational demands and memory footprints. Existing LLM-based approaches typically suffer from three critical limitations: inefficient parameter utilization in handling numerical time series patterns; modality misalignment between continuous temporal signals and discrete text embeddings; and inflexibility for real-time expert knowledge integration. We present SMETimes, the first systematic investigation of sub-3B-parameter small language models (SLMs) for efficient and accurate time series forecasting. Our approach centers on three key innovations: a statistically enhanced prompting mechanism that bridges numerical time series with textual semantics through descriptive statistical features; an adaptive fusion embedding architecture that aligns temporal patterns with language model token spaces through learnable parameters; and a dynamic mixture-of-experts framework, enabled by SLMs' computational efficiency, that adaptively combines base predictions with domain-specific models. Extensive evaluations across seven benchmark datasets demonstrate that our 3B-parameter SLM achieves state-of-the-art performance on five primary datasets, with 3.8x faster training and 5.2x lower memory consumption than 7B-parameter LLM baselines. Notably, the proposed model exhibits better learning capabilities, achieving 12.3% lower MSE than conventional LLMs. Ablation studies show that the statistical prompting and cross-modal fusion modules contribute 15.7% and 18.2% error reduction, respectively, in long-horizon forecasting tasks. By redefining the efficiency-accuracy trade-off, this work establishes SLMs as viable alternatives to resource-intensive LLMs for practical time series forecasting. Code and models are available at https://github.com/xiyan1234567/SMETimes.
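
As an illustration of what a statistically enhanced prompt might look like, the sketch below turns a numeric window into text that pairs the raw values with descriptive statistics. The abstract does not specify the actual template or feature set, so the chosen statistics (mean, population standard deviation, min, max, net change as a trend proxy), the wording, and the helper names `describe_series` and `build_prompt` are all assumptions; see the SMETimes repository for the real implementation.

```python
import statistics


def describe_series(values: list[float]) -> dict[str, float]:
    """Descriptive statistics used as textual hints (the feature set is an assumption)."""
    return {
        "mean": statistics.fmean(values),
        "std": statistics.pstdev(values),
        "min": min(values),
        "max": max(values),
        # Net change over the window as a crude trend indicator.
        "trend": values[-1] - values[0],
    }


def build_prompt(values: list[float], horizon: int) -> str:
    """Hypothetical prompt template combining the raw window with its statistics."""
    stats = describe_series(values)
    direction = ("upward" if stats["trend"] > 0
                 else "downward" if stats["trend"] < 0 else "flat")
    summary = ", ".join(f"{k}={v:.3f}" for k, v in stats.items())
    history = " ".join(f"{v:.2f}" for v in values)
    return (
        f"Input series ({len(values)} steps): {history}\n"
        f"Statistics: {summary}; overall trend is {direction}.\n"
        f"Forecast the next {horizon} values."
    )


print(build_prompt([1.0, 2.0, 3.0, 4.0], horizon=2))
```

The point of adding summary statistics in text form is that the language model sees scale and trend information directly as tokens instead of having to infer it from long runs of digits.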

DARNet: Bridging Domain Gaps in Cross-Domain Few-Shot Segmentation with Dynamic Adaptation

Dec 08, 2023

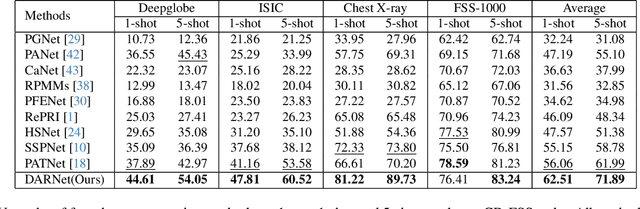

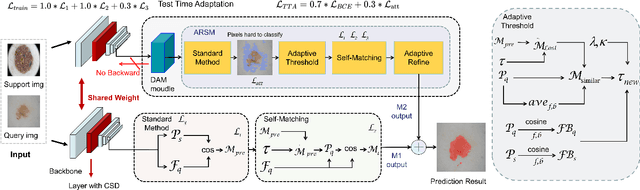

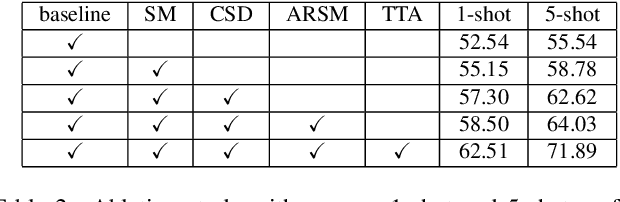

Abstract: Few-shot segmentation (FSS) aims to segment novel classes in a query image by using only a small number of support images from base classes. However, in cross-domain few-shot segmentation (CD-FSS), leveraging features from label-rich domains for resource-constrained domains poses challenges due to domain discrepancies. This work presents a Dynamically Adaptive Refine (DARNet) method that aims to balance generalization and specificity for CD-FSS. Our method includes the Channel Statistics Disruption (CSD) strategy, which perturbs feature channel statistics in the source domain, bolstering generalization to unknown target domains. Moreover, recognizing the variability across target domains, we also propose an Adaptive Refine Self-Matching (ARSM) method that adjusts the matching threshold and dynamically refines the prediction result with self-matching, enhancing accuracy. We also present a Test-Time Adaptation (TTA) method to improve the model's adaptability to diverse feature distributions. Our approach outperforms state-of-the-art methods on CD-FSS tasks.