Fabio Ramos

NVIDIA, University of Sydney

OCTNet: Trajectory Generation in New Environments from Past Experiences

Sep 25, 2019

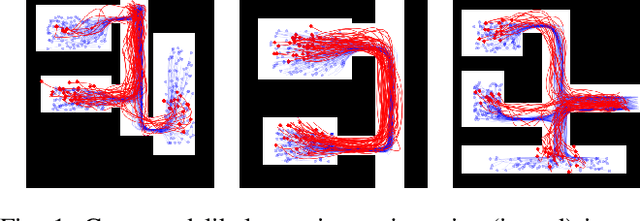

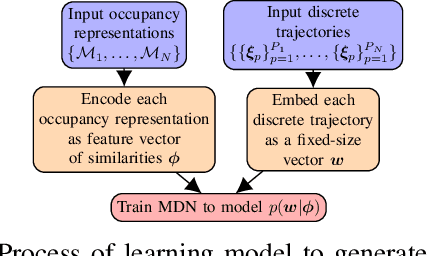

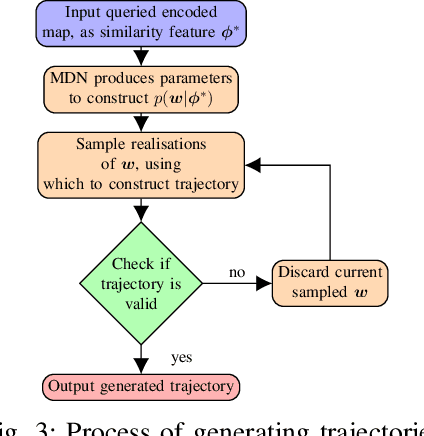

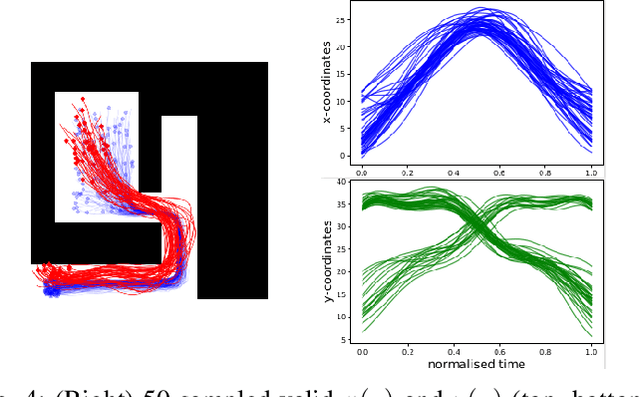

Abstract: Being able to operate safely for extended periods of time in dynamic environments is a critical capability for autonomous systems. This generally involves predicting and understanding the motion patterns of dynamic entities, such as vehicles and people, in the surroundings. Many motion prediction methods in the literature learn a function that maps position and time to potential trajectories taken by people or other dynamic entities. However, these predictions depend only on previously observed trajectories and do not explicitly take the environment into consideration. Trends of motion obtained in one environment are typically specific to that environment and are not used to better predict motion in other environments. In this paper, we address the problem of generating likely motion dynamics conditioned on the environment, represented as an occupancy map. We introduce the Occupancy Conditional Trajectory Network (OCTNet) framework, which generalises previously observed motion in known environments to generate trajectories in new environments where no motion has been observed. OCTNet encodes trajectories as a fixed-size vector of parameters and uses neural networks to learn conditional distributions over these parameters. We empirically demonstrate our method's ability to generate complex multi-modal trajectory patterns in different environments.
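The abstract does not say which parameterisation OCTNet uses. As a minimal sketch of what "a fixed-size vector of parameters" can mean, the hypothetical `encode_trajectory` below resamples a variable-length polyline to `k` points spaced evenly by arc length and flattens them into `2k` numbers. This resampling scheme is an assumed stand-in, not OCTNet's actual encoding.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def encode_trajectory(waypoints: List[Point], k: int) -> List[float]:
    """Hypothetical encoder: resample a polyline to k points equally spaced
    by arc length and flatten them to [x0, y0, x1, y1, ...]."""
    if len(waypoints) < 2 or k < 2:
        raise ValueError("need at least two waypoints and k >= 2")
    # Cumulative arc length at each waypoint.
    cum = [0.0]
    for (x0, y0), (x1, y1) in zip(waypoints, waypoints[1:]):
        cum.append(cum[-1] + math.hypot(x1 - x0, y1 - y0))
    total = cum[-1]
    out: List[float] = []
    seg = 0
    for i in range(k):
        s = total * i / (k - 1)  # target arc length for the i-th sample
        # Advance to the segment that contains arc length s.
        while seg < len(waypoints) - 2 and cum[seg + 1] < s:
            seg += 1
        seg_len = cum[seg + 1] - cum[seg]
        t = 0.0 if seg_len == 0 else (s - cum[seg]) / seg_len
        (x0, y0), (x1, y1) = waypoints[seg], waypoints[seg + 1]
        out.extend([x0 + t * (x1 - x0), y0 + t * (y1 - y0)])
    return out
```

For example, `encode_trajectory([(0, 0), (1, 0), (1, 1)], 3)` gives `[0.0, 0.0, 1.0, 0.0, 1.0, 1.0]`. Every trajectory maps to a vector of the same length, which is the property a network needs in order to output a distribution over trajectory parameters.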

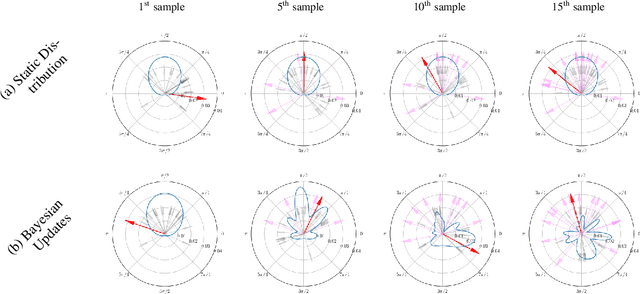

Local Sampling-based Planning with Sequential Bayesian Updates

Sep 08, 2019

Abstract: Sampling-based planners are the predominant motion planning paradigm for robots. The majority of sampling-based planners use a global random sampling scheme to guarantee completeness. However, these schemes are sample-inefficient, as the majority of the samples are wasted in narrow passages. Consequently, information about the local structure is neglected. Local sampling-based motion planners, on the other hand, make sequential random-walk decisions to sample valid trajectories in configuration space. However, current approaches do not adapt their strategies according to the successes and failures of past samples. In this work, we introduce a local sampling-based motion planner with a Bayesian update scheme for modelling a sampling proposal distribution. The proposal distribution is sequentially updated based on previous sample outcomes, thereby shaping it according to local obstacles and constraints in the configuration space. Thus, by learning from past observed outcomes, we can maximise the likelihood of sampling in regions that have a higher probability of forming trajectories within narrow passages.
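The idea of a proposal distribution that is updated from past sample outcomes can be sketched with a conjugate Beta-Bernoulli model. In this sketch each of `k` discrete step directions keeps a Beta posterior over its probability of producing a valid sample, and the proposal is proportional to the posterior means. The class name, the discretisation into directions, and the toy "passage" oracle are all hypothetical; the paper's actual proposal model may differ.

```python
import random
from typing import List


class BetaDirectionProposal:
    """Hypothetical proposal over k discrete step directions.

    Direction j keeps a Beta(a_j, b_j) posterior over the probability that a
    step in that direction yields a valid (collision-free) sample.
    """

    def __init__(self, k: int, a0: float = 1.0, b0: float = 1.0) -> None:
        self.a = [a0] * k  # pseudo-counts of successes
        self.b = [b0] * k  # pseudo-counts of failures

    def probabilities(self) -> List[float]:
        # Proposal is proportional to each direction's posterior mean success rate.
        means = [a / (a + b) for a, b in zip(self.a, self.b)]
        total = sum(means)
        return [m / total for m in means]

    def sample(self, rng: random.Random) -> int:
        return rng.choices(range(len(self.a)), weights=self.probabilities())[0]

    def update(self, j: int, success: bool) -> None:
        # Conjugate Beta-Bernoulli update from the observed outcome.
        if success:
            self.a[j] += 1.0
        else:
            self.b[j] += 1.0


if __name__ == "__main__":
    rng = random.Random(0)
    proposal = BetaDirectionProposal(k=8)
    free = {0, 1}  # hypothetical: only directions 0 and 1 lead through the passage
    for _ in range(1000):
        j = proposal.sample(rng)
        proposal.update(j, success=j in free)
    print([round(p, 3) for p in proposal.probabilities()])
```

After a few hundred outcomes, most of the proposal mass sits on the two directions that keep succeeding. Blocked directions retain a small, shrinking probability rather than zero, so the sampler can still recover if the local structure changes.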

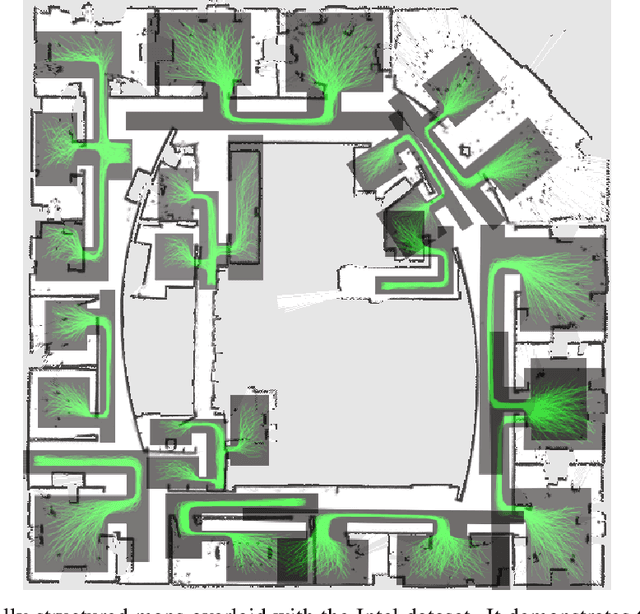

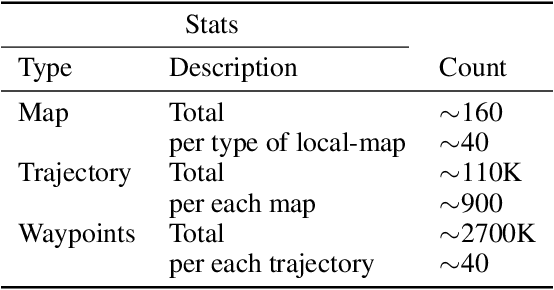

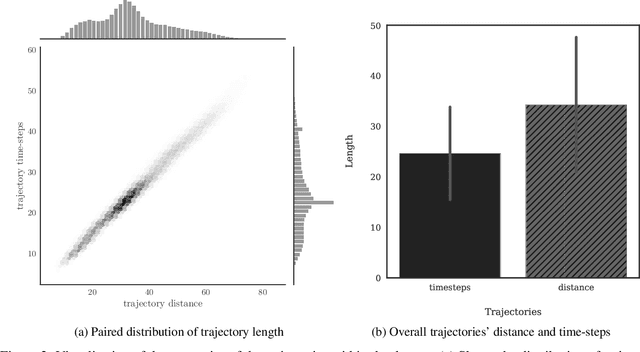

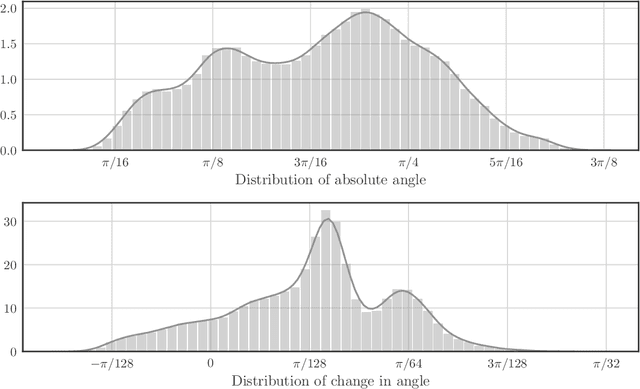

Occ-Traj120: Occupancy Maps with Associated Trajectories

Sep 05, 2019

Abstract: Trajectory modelling has been the principal research area for understanding and anticipating human behaviour. Predicting the dynamic path by observing the agent and its surrounding environment is essential for applications such as autonomous driving and indoor navigation suggestions. However, despite the large body of research presented so far, most available datasets do not contain any information on environmental factors, such as the occupancy representation of the map, which arguably play a significant role in how an agent chooses its trajectory. We present a trajectory dataset with the corresponding occupancy representations of different local maps. The dataset contains more than 120 locally structured maps with occupancy representations and more than 110K trajectories in total. Each map has a few hundred corresponding simulated trajectories that navigate from one spatial location in a room to another. The dataset is freely available online.

Speeding Up Iterative Closest Point Using Stochastic Gradient Descent

Jul 22, 2019

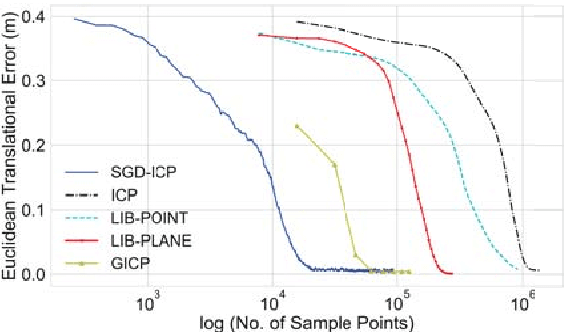

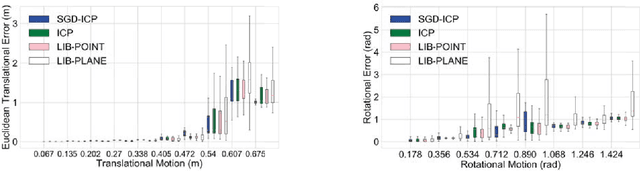

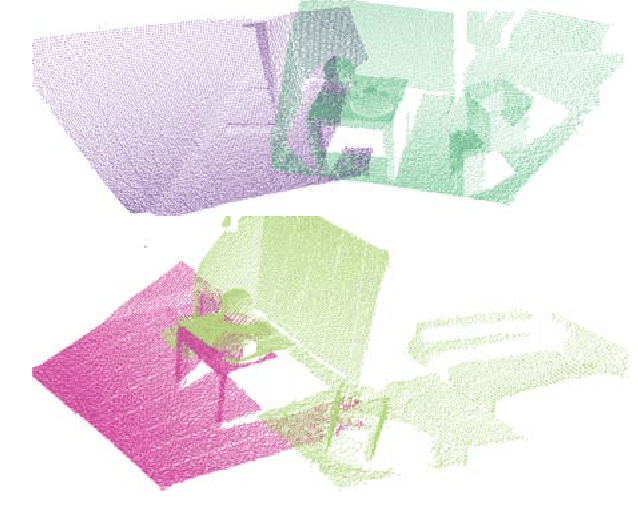

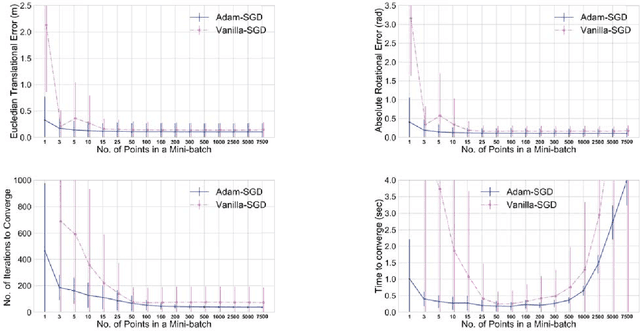

Abstract: Sensors producing 3D point clouds, such as 3D laser scanners and RGB-D cameras, are widely used in robotics, be it for autonomous driving or manipulation. Aligning point clouds produced by these sensors is a vital component of such applications, supporting tasks such as model registration, pose estimation, and SLAM. Iterative closest point (ICP) is the most widely used method for this task, due to its simplicity and efficiency. In this paper, we propose a novel method that solves the optimisation problem posed by ICP using stochastic gradient descent (SGD). Using SGD allows us to improve the convergence speed of ICP without sacrificing solution quality. Experiments using Kinect as well as Velodyne data show that our proposed method is faster than existing methods while obtaining solutions comparable to standard ICP. An additional benefit is robustness to parameters when processing data from different sensors.
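To make "solving the ICP objective with SGD" concrete, here is a deliberately simplified 2D sketch. It assumes point-to-point residuals, brute-force nearest neighbours, a fixed learning rate, and a rotation angle plus translation as the parameters. The paper works with 3D data and its exact parameterisation and step-size schedule are not given in the abstract. At each step a random minibatch of source points is matched to its current nearest target points, and the parameters take one gradient step on the mean squared residual.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, float]


def transform(p: Point, theta: float, tx: float, ty: float) -> Point:
    """Apply a 2D rigid transform: rotate by theta, then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * p[0] - s * p[1] + tx, s * p[0] + c * p[1] + ty)


def nearest(q: Point, target: List[Point]) -> Point:
    """Brute-force nearest neighbour (a k-d tree would be used at scale)."""
    return min(target, key=lambda m: (m[0] - q[0]) ** 2 + (m[1] - q[1]) ** 2)


def icp_sgd(source: List[Point], target: List[Point], iters: int = 500,
            batch: int = 8, lr: float = 0.1, seed: int = 0) -> Tuple[float, float, float]:
    """Minimise the mean of 0.5 * ||R(theta) p + t - nn(p)||^2 with minibatch SGD."""
    rng = random.Random(seed)
    theta = tx = ty = 0.0
    for _ in range(iters):
        c, s = math.cos(theta), math.sin(theta)
        g_theta = g_tx = g_ty = 0.0
        for p in rng.sample(source, batch):
            q = transform(p, theta, tx, ty)
            m = nearest(q, target)  # correspondence recomputed under the current estimate
            rx, ry = q[0] - m[0], q[1] - m[1]
            # Derivative of R(theta) p with respect to theta.
            dx = -s * p[0] - c * p[1]
            dy = c * p[0] - s * p[1]
            g_theta += rx * dx + ry * dy
            g_tx += rx
            g_ty += ry
        theta -= lr * g_theta / batch
        tx -= lr * g_tx / batch
        ty -= lr * g_ty / batch
    return theta, tx, ty


if __name__ == "__main__":
    source = [(float(x), float(y)) for x in range(-2, 3) for y in range(-2, 3)]
    target = [transform(p, 0.1, 0.1, -0.05) for p in source]
    print(icp_sgd(source, target))  # approximately (0.1, 0.1, -0.05)
```

Each iteration touches only `batch` points instead of the whole cloud. This is where the speed-up over full-batch ICP would come from on large scans.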

Kernel Trajectory Maps for Multi-Modal Probabilistic Motion Prediction

Jul 11, 2019

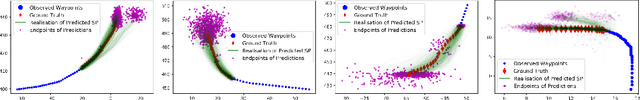

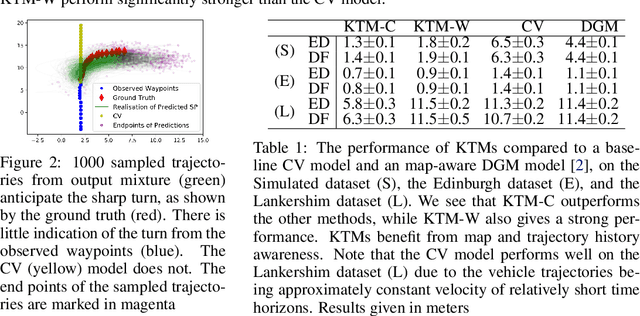

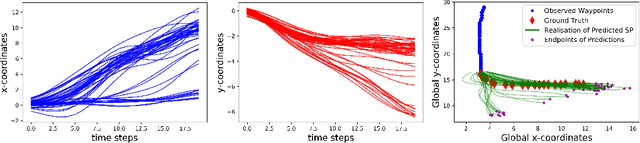

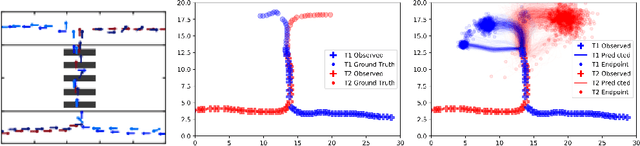

Abstract: Understanding the dynamics of an environment, such as the movement of humans and vehicles, is crucial for agents to achieve long-term autonomy in urban environments. This requires methods that capture the multi-modal and probabilistic nature of motion patterns. We present Kernel Trajectory Maps (KTM) to capture the trajectories of movement in an environment. KTMs leverage the expressiveness of kernels from non-parametric modelling by projecting input trajectories onto a set of representative trajectories; they condition on a sequence of observed waypoint coordinates and predict a multi-modal distribution over possible future trajectories. The output is a mixture of continuous stochastic processes, where each realisation is a continuous functional trajectory that can be queried at arbitrarily fine time steps.
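The projection step described above can be illustrated with a small sketch. It assumes equal-length waypoint sequences and a squared-exponential kernel summed over corresponding waypoints. An observed trajectory is mapped to a feature vector of kernel similarities, one entry per representative trajectory. The kernel choice and lengthscale are assumptions, and the learned mapping from these features to a mixture over future trajectories is not shown.

```python
import math
from typing import List, Sequence, Tuple

Trajectory = Sequence[Tuple[float, float]]


def trajectory_kernel(a: Trajectory, b: Trajectory, lengthscale: float) -> float:
    """Squared-exponential kernel on equal-length waypoint sequences (an assumption)."""
    if len(a) != len(b):
        raise ValueError("trajectories must have the same number of waypoints")
    d2 = sum((ax - bx) ** 2 + (ay - by) ** 2 for (ax, ay), (bx, by) in zip(a, b))
    return math.exp(-d2 / (2.0 * lengthscale ** 2))


def project(observed: Trajectory, representatives: List[Trajectory],
            lengthscale: float = 1.0) -> List[float]:
    """Feature vector: kernel similarity of the observed trajectory to each representative."""
    return [trajectory_kernel(observed, r, lengthscale) for r in representatives]


if __name__ == "__main__":
    reps = [
        [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)],  # straight east
        [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)],  # diagonal
        [(0.0, 0.0), (0.0, 1.0), (0.0, 2.0)],  # straight north
    ]
    print(project([(0.0, 0.0), (1.0, 0.9), (2.0, 2.1)], reps))  # largest entry is the diagonal
```

Because the features are similarities to a fixed set of representatives, trajectories of any origin are embedded in the same finite-dimensional space. A downstream model can then condition on that space.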

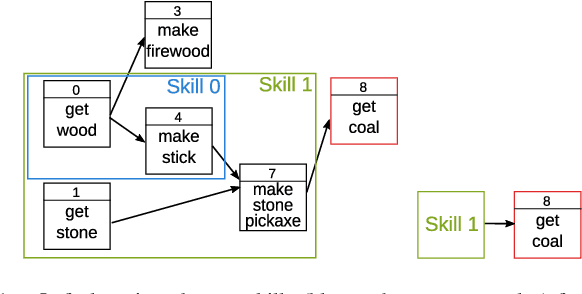

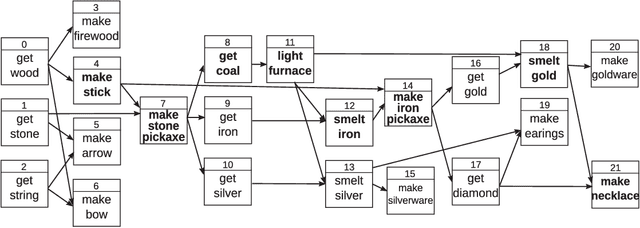

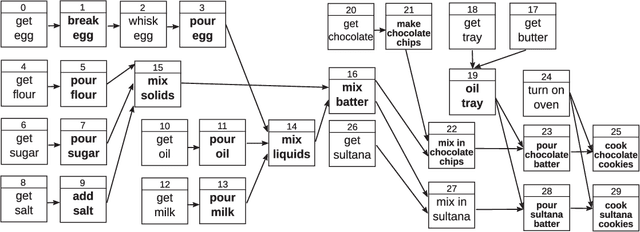

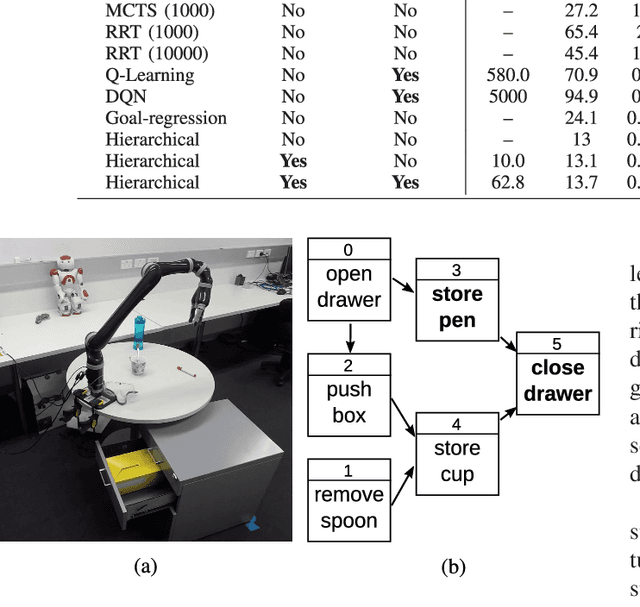

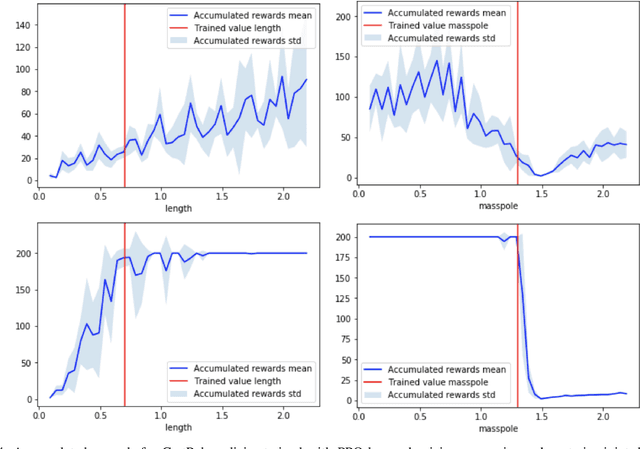

Learning to Plan Hierarchically from Curriculum

Jun 18, 2019

Abstract: We present a framework for learning to plan hierarchically in domains with unknown dynamics. We enhance planning performance by exploiting problem structure in several ways: (i) we simplify the search over plans by leveraging knowledge of skill objectives, (ii) we generate shorter plans by enforcing aggressively hierarchical planning, and (iii) we learn transition dynamics with sparse local models for better generalisation. Our framework decomposes transition dynamics into skill effects and success conditions, which allows fast planning by reasoning on effects while learning conditions from interactions with the world. We propose a simple method for learning new abstract skills using successful trajectories stemming from completing the goals of a curriculum. Learned skills are then refined to leverage other abstract skills and enhance subsequent planning. We show that both conditions and abstract skills can be learned simultaneously while planning, even in stochastic domains. Our method is validated in experiments of increasing complexity, with up to 2^100 states, showing superior planning performance compared with classic non-hierarchical planners or reinforcement learning methods. Applicability to real-world problems is demonstrated in a simulation-to-real transfer experiment on a robotic manipulator.
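The decomposition into skill effects and success conditions can be illustrated with a classical STRIPS-style breadth-first planner over symbolic states. This sketch is a generic stand-in. The skills, facts, and block-moving domain are hypothetical, and here the conditions are hand-written rather than learned from interaction. It does not show the paper's sparse local models or curriculum-driven skill learning.

```python
from collections import deque
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional


@dataclass(frozen=True)
class Skill:
    name: str
    conditions: FrozenSet[str]  # facts required for the skill to succeed
    add: FrozenSet[str]         # facts the skill makes true
    delete: FrozenSet[str]      # facts the skill makes false


def plan(start: Iterable[str], goal: Iterable[str], skills: List[Skill]) -> Optional[List[str]]:
    """Breadth-first search over abstract states, reasoning only on skill effects."""
    start_state, goal_set = frozenset(start), frozenset(goal)
    frontier = deque([(start_state, [])])
    seen = {start_state}
    while frontier:
        state, path = frontier.popleft()
        if goal_set <= state:
            return path
        for skill in skills:
            if skill.conditions <= state:
                nxt = (state - skill.delete) | skill.add
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append((nxt, path + [skill.name]))
    return None


# Hypothetical toy domain: carry a block to a goal location.
SKILLS = [
    Skill("move_to_block", frozenset(), frozenset({"at_block"}), frozenset({"at_goal"})),
    Skill("pick", frozenset({"at_block", "hand_empty"}), frozenset({"holding"}),
          frozenset({"hand_empty"})),
    Skill("move_to_goal", frozenset(), frozenset({"at_goal"}), frozenset({"at_block"})),
    Skill("place", frozenset({"at_goal", "holding"}),
          frozenset({"block_at_goal", "hand_empty"}), frozenset({"holding"})),
]

if __name__ == "__main__":
    print(plan({"hand_empty"}, {"block_at_goal"}, SKILLS))
    # ['move_to_block', 'pick', 'move_to_goal', 'place']
```

Because the search only applies effects to sets of facts, a plan can be found without simulating low-level dynamics. This is the kind of speed-up that reasoning on effects is meant to provide.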

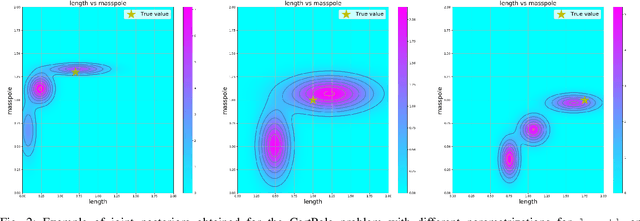

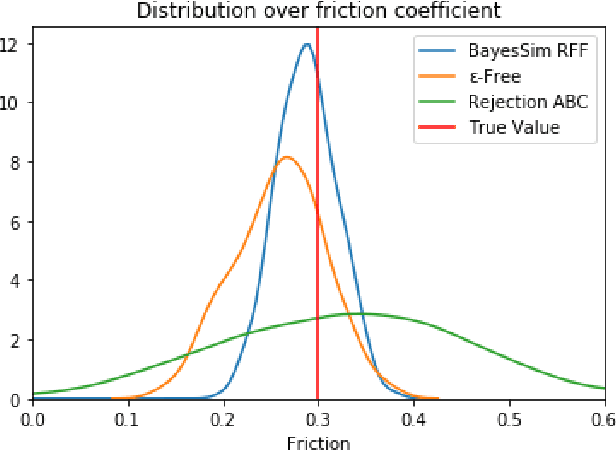

BayesSim: adaptive domain randomization via probabilistic inference for robotics simulators

Jun 04, 2019

Abstract: We introduce BayesSim, a framework for robotics simulations that allows a full Bayesian treatment of the simulator's parameters. As simulators become more sophisticated and able to represent the dynamics more accurately, fundamental problems in robotics such as motion planning and perception can be solved in simulation and the solutions transferred to the physical robot. However, even the most complex simulator may still be unable to represent reality in all its details, due either to inaccurate parametrization or to simplistic assumptions in the dynamic models. BayesSim provides a principled framework to reason about the uncertainty of simulation parameters. Given a black-box simulator (or generative model) that outputs trajectories of state and action pairs from unknown simulation parameters, together with trajectories obtained with a physical robot, we develop a likelihood-free inference method that computes the posterior distribution of simulation parameters. This posterior can then be used in problems where Sim2Real is critical, for example in policy search. We compare the performance of BayesSim in obtaining accurate posteriors on a number of classical control and robotics problems. Results show that the posterior computed by BayesSim can be used for domain randomization, outperforming alternative methods that randomize based on uniform priors.

* Code available at https://github.com/rafaelpossas/bayes_sim
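The abstract describes BayesSim's inference only as "likelihood-free". As a much simpler stand-in that shows the same inputs and outputs (a black-box simulator, real trajectories in, a posterior over simulation parameters out), here is rejection ABC. It keeps the prior draws whose simulated trajectories land closest to the real one. The simulator (a noisy first-order decay with gain `k`), the prior range, the noise level, and the distance are all hypothetical. For the actual method, see the linked repository.

```python
import math
import random
from typing import List, Tuple


def simulate(k: float, rng: random.Random, steps: int = 20, dt: float = 0.05,
             noise: float = 0.005) -> List[float]:
    """Hypothetical black-box simulator: noisy first-order decay x' = -k x."""
    x, traj = 1.0, []
    for _ in range(steps):
        x = x - dt * k * x + rng.gauss(0.0, noise)
        traj.append(x)
    return traj


def distance(a: List[float], b: List[float]) -> float:
    return math.sqrt(sum((u - v) ** 2 for u, v in zip(a, b)))


def rejection_abc(observed: List[float], n_samples: int = 2000, n_keep: int = 50,
                  prior: Tuple[float, float] = (0.5, 5.0), seed: int = 0) -> List[float]:
    """Keep the n_keep uniform-prior draws whose simulations are closest to the observation."""
    rng = random.Random(seed)
    scored = []
    for _ in range(n_samples):
        k = rng.uniform(*prior)
        scored.append((distance(simulate(k, rng), observed), k))
    scored.sort()
    return [k for _, k in scored[:n_keep]]


if __name__ == "__main__":
    real_traj = simulate(2.0, random.Random(123))  # stands in for data from the physical robot
    posterior = rejection_abc(real_traj)
    print(sum(posterior) / len(posterior))  # close to the true gain 2.0
    rng = random.Random(1)
    episode_params = [rng.choice(posterior) for _ in range(5)]  # posterior-based randomization
```

Drawing each training episode's `k` from the accepted samples, rather than uniformly from the prior range, is the contrast the abstract draws between posterior-based and uniform domain randomization.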

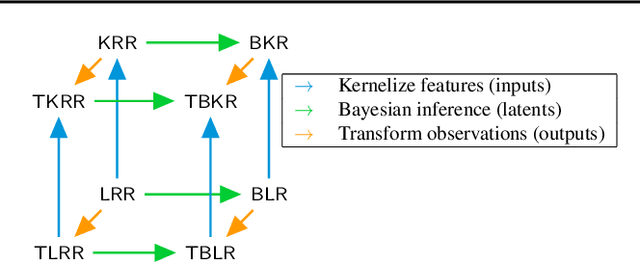

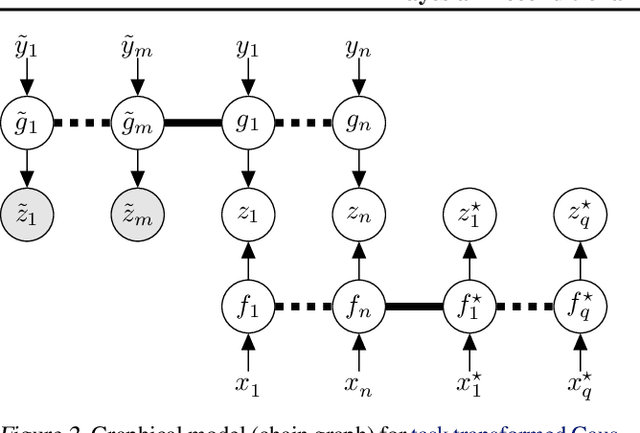

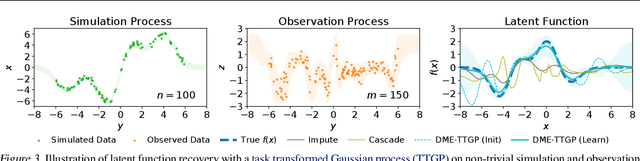

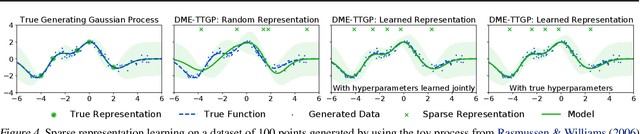

Bayesian Deconditional Kernel Mean Embeddings

Jun 01, 2019

Abstract: Conditional kernel mean embeddings form an attractive nonparametric framework for representing conditional means of functions, describing the observation processes for many complex models. However, the recovery of the original underlying function of interest whose conditional mean was observed is a challenging inference task. We formalize deconditional kernel mean embeddings as a solution to this inverse problem, and show that it can be naturally viewed as a nonparametric Bayes' rule. Critically, we introduce the notion of task transformed Gaussian processes and establish deconditional kernel means as their posterior predictive mean. This connection provides Bayesian interpretations and uncertainty estimates for deconditional kernel mean embeddings, explains their regularization hyperparameters, and reveals a marginal likelihood for kernel hyperparameter learning. These revelations further enable practical applications such as likelihood-free inference and learning sparse representations for big data.
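For reference, here is the standard empirical conditional mean embedding that the deconditional embedding is framed as inverting. The notation is ours, not necessarily the paper's, and the deconditional estimator itself is not reproduced. Given pairs $\{(x_i, y_i)\}_{i=1}^{n}$, kernels $k$ on $\mathcal{X}$ and $\ell$ on $\mathcal{Y}$ with feature maps $\phi$ and $\psi$, Gram matrix $K_{ij} = k(x_i, x_j)$, vector $\mathbf{k}_x = [k(x_1, x), \dots, k(x_n, x)]^\top$, and regularisation $\lambda > 0$:

$$
\hat{\mu}_{Y \mid X = x} = \sum_{i=1}^{n} \beta_i(x)\, \psi(y_i), \qquad \boldsymbol{\beta}(x) = (K + n\lambda I)^{-1} \mathbf{k}_x,
$$

$$
\mathbb{E}[g(Y) \mid X = x] \approx \langle g, \hat{\mu}_{Y \mid X = x} \rangle = \mathbf{g}^\top (K + n\lambda I)^{-1} \mathbf{k}_x, \qquad \mathbf{g} = [g(y_1), \dots, g(y_n)]^\top,
$$

where the second line uses the reproducing property $\langle g, \psi(y_i) \rangle = g(y_i)$. The regulariser $\lambda$ is an example of the kind of hyperparameter that, according to the abstract, receives a Bayesian interpretation in the deconditional setting.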

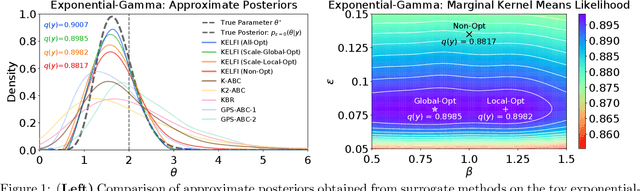

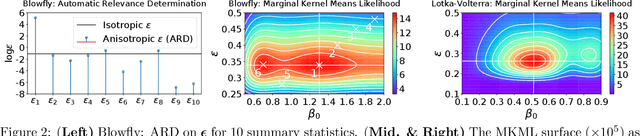

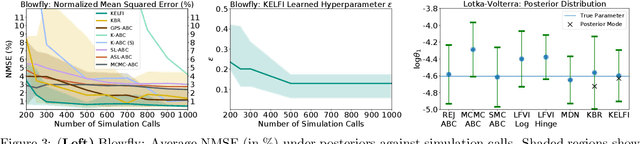

Bayesian Learning of Conditional Kernel Mean Embeddings for Automatic Likelihood-Free Inference

Mar 03, 2019

Abstract: In likelihood-free settings where likelihood evaluations are intractable, approximate Bayesian computation (ABC) addresses the formidable inference task of discovering plausible parameters of simulation programs that explain the observations. However, ABC methods demand large quantities of simulation calls. Critically, hyperparameters that determine measures of simulation discrepancy balance inference accuracy against sample efficiency, yet are difficult to tune. In this paper, we present kernel embedding likelihood-free inference (KELFI), a holistic framework that automatically learns model hyperparameters to improve inference accuracy given a limited simulation budget. By leveraging likelihood smoothness with conditional mean embeddings, we nonparametrically approximate likelihoods and posteriors as surrogate densities and sample from closed-form posterior mean embeddings, whose hyperparameters are learned under their approximate marginal likelihood. Our modular framework demonstrates improved accuracy and efficiency on challenging inference problems in ecology.
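The role of the discrepancy hyperparameter mentioned above can be seen in plain kernel-weighted ABC. This is the kind of baseline whose tolerance KELFI aims to learn automatically; KELFI itself is not shown. The simulator (the sample mean of draws from a Gaussian with unknown mean), the prior, and the chosen `epsilon` values are hypothetical. A small `epsilon` gives a sharp but sample-hungry estimate. A large one accepts nearly everything and collapses toward the prior mean.

```python
import math
import random
from typing import Tuple


def simulate_summary(theta: float, rng: random.Random, n: int = 20) -> float:
    """Hypothetical simulator: the sample mean of n draws from N(theta, 1)."""
    return sum(rng.gauss(theta, 1.0) for _ in range(n)) / n


def kernel_abc_mean(y_obs: float, epsilon: float, n_samples: int = 5000,
                    prior: Tuple[float, float] = (-5.0, 5.0), seed: int = 0) -> float:
    """Posterior-mean estimate with Gaussian-kernel weights on the discrepancy."""
    rng = random.Random(seed)
    num = den = 0.0
    for _ in range(n_samples):
        theta = rng.uniform(*prior)
        d = simulate_summary(theta, rng) - y_obs
        w = math.exp(-d * d / (2.0 * epsilon ** 2))  # epsilon sets the discrepancy tolerance
        num += w * theta
        den += w
    if den == 0.0:
        raise ValueError("all weights underflowed; increase epsilon or n_samples")
    return num / den


if __name__ == "__main__":
    print(kernel_abc_mean(1.3, epsilon=0.1))   # near 1.3
    print(kernel_abc_mean(1.3, epsilon=10.0))  # pulled toward the prior mean 0
```

Tuning `epsilon` by hand trades off these two failure modes. Learning such hyperparameters from a marginal-likelihood objective, as the abstract describes, removes that manual step.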

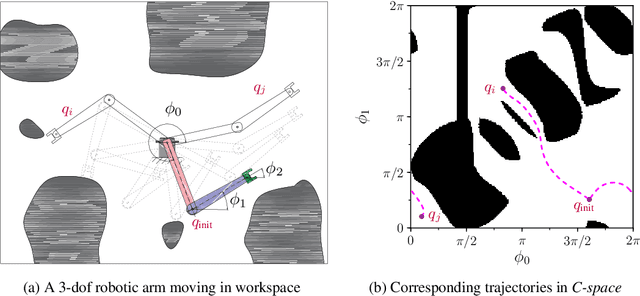

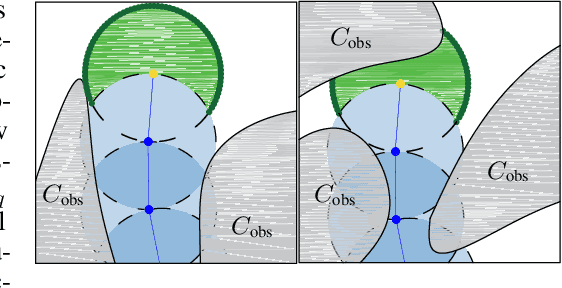

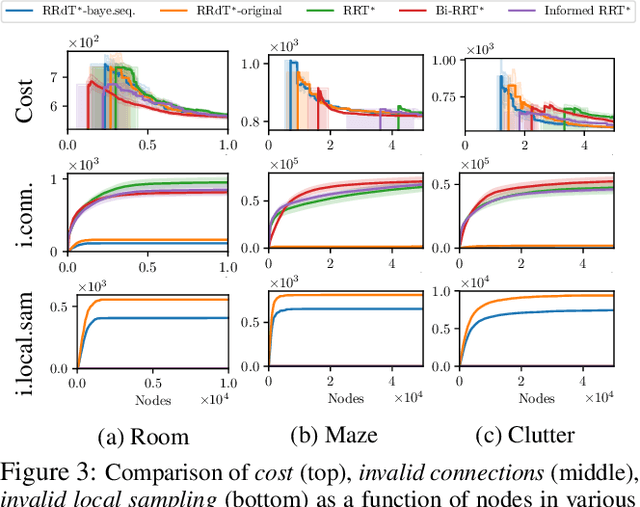

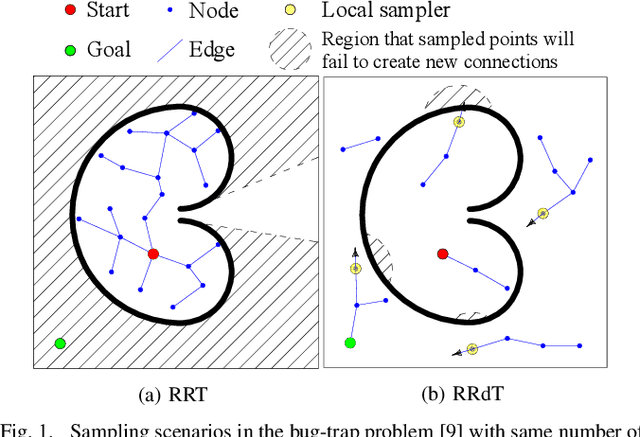

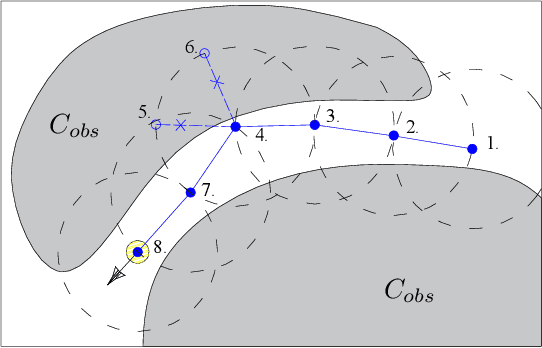

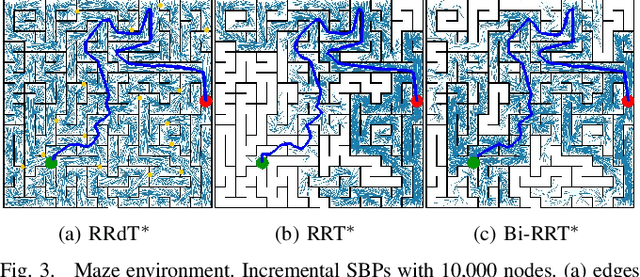

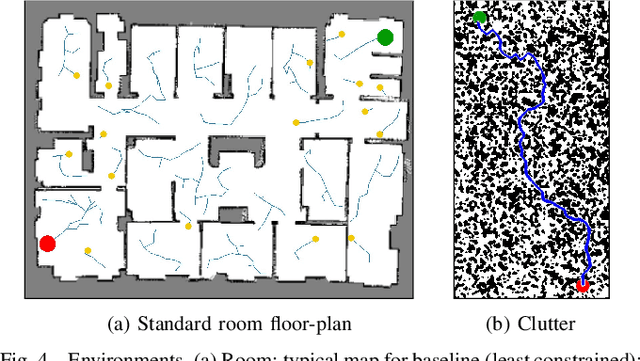

Balancing Global Exploration and Local-connectivity Exploitation with Rapidly-exploring Random disjointed-Trees

Feb 27, 2019

Abstract: Sampling efficiency in highly constrained environments has long been a major challenge for sampling-based planners. In this work, we propose Rapidly-exploring Random disjointed-Trees* (RRdT*), an incremental optimal multi-query planner. RRdT* uses multiple disjointed-trees to exploit the local connectivity of spaces via Markov chain random sampling, which utilises neighbourhood information derived from previous successful and failed samples. To balance local exploitation, RRdT* actively explores unseen global spaces when local-connectivity exploitation is unsuccessful. The active trade-off between local exploitation and global exploration is formulated as a multi-armed bandit problem. We argue that actively balancing global exploration and local exploitation is the key to improving sample efficiency in sampling-based motion planners. We provide rigorous proofs of completeness and optimal convergence for this novel approach. Furthermore, we demonstrate experimentally the effectiveness of RRdT*'s locally exploring trees in granting improved visibility for planning. Consequently, RRdT* outperforms existing state-of-the-art incremental planners, especially in highly constrained environments.
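The exploration/exploitation trade-off framed as a multi-armed bandit can be sketched with UCB1. The abstract does not name the bandit rule RRdT* uses, so UCB1 here is an assumed stand-in. In this hypothetical setup each arm is either "extend local tree j" or "sample globally", a reward of 1 means the chosen action produced a useful valid sample, and the per-arm success probabilities are invented for illustration.

```python
import math
import random
from typing import List


def ucb1(success_probs: List[float], pulls: int, seed: int = 0) -> List[int]:
    """Run UCB1 on Bernoulli arms; return how often each arm was chosen."""
    rng = random.Random(seed)
    k = len(success_probs)
    counts = [0] * k
    values = [0.0] * k  # running mean reward per arm
    for t in range(1, pulls + 1):
        if t <= k:
            arm = t - 1  # play every arm once first
        else:
            # Optimism bonus shrinks as an arm is tried more often.
            arm = max(range(k), key=lambda j: values[j] + math.sqrt(2.0 * math.log(t) / counts[j]))
        reward = 1.0 if rng.random() < success_probs[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]
    return counts


if __name__ == "__main__":
    # Hypothetical arms: local tree 1, local tree 2, global exploration.
    print(ucb1([0.2, 0.3, 0.7], pulls=2000))  # the third arm dominates
```

The confidence bonus keeps every arm occasionally tried, so a stalled local tree is not abandoned forever. Most effort still flows to whichever action is currently most productive.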
