Chao Tan

Halt the Hallucination: Decoupling Signal and Semantic OOD Detection Based on Cascaded Early Rejection

Feb 06, 2026

Abstract:Efficient and robust Out-of-Distribution (OOD) detection is paramount for safety-critical applications. However, existing methods still execute full-scale inference on low-level statistical noise. This computational mismatch not only wastes resources but also induces semantic hallucination, where deep networks forcefully interpret physical anomalies as high-confidence semantic features. To address this, we propose the Cascaded Early Rejection (CER) framework, which realizes hierarchical filtering for anomaly detection via a coarse-to-fine logic. CER comprises two core modules: 1) the Structural Energy Sieve (SES), which establishes a non-parametric barrier at the network entry using the Laplacian operator to efficiently intercept physical signal anomalies; and 2) the Semantically-aware Hyperspherical Energy (SHE) detector, which decouples feature magnitude from direction in intermediate layers to identify fine-grained semantic deviations. Experimental results demonstrate that CER not only reduces computational overhead by 32% but also achieves a significant performance gain on the CIFAR-100 benchmark: the average FPR95 decreases from 33.58% to 22.84%, and AUROC improves to 93.97%. Crucially, in real-world scenarios simulating sensor failures, CER exhibits performance far exceeding state-of-the-art methods. As a universal plugin, CER can be seamlessly integrated into various SOTA models to provide performance gains.
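To make the idea of a Laplacian-based entry filter concrete, here is a minimal sketch. It is not the paper's SES module: the 4-neighbour stencil, the mean-squared-response score, the function names `laplacian_energy` and `early_reject`, and the threshold value are all assumptions chosen for illustration.

```python
import numpy as np


def laplacian_energy(image: np.ndarray) -> float:
    """Mean squared 4-neighbour Laplacian response over interior pixels.

    Assumes a 2-D grayscale image; this score is an illustrative choice,
    not the statistic defined in the paper.
    """
    img = np.asarray(image, dtype=np.float64)
    # Discrete Laplacian via slicing: up + down + left + right - 4 * centre.
    response = (
        img[:-2, 1:-1] + img[2:, 1:-1]
        + img[1:-1, :-2] + img[1:-1, 2:]
        - 4.0 * img[1:-1, 1:-1]
    )
    return float(np.mean(response ** 2))


def early_reject(image: np.ndarray, threshold: float) -> bool:
    """Hypothetical gate: True means the input is rejected before the network runs."""
    return laplacian_energy(image) > threshold


rng = np.random.default_rng(0)
# A linear ramp has zero second derivative, so its Laplacian energy is ~0.
smooth = np.tile(np.linspace(0.0, 1.0, 32), (32, 1))
# Uniform pixel noise has large high-frequency content.
noise = rng.uniform(0.0, 1.0, size=(32, 32))

THRESHOLD = 0.1  # hypothetical value; in practice it would be calibrated on in-distribution data
print(early_reject(smooth, THRESHOLD), early_reject(noise, THRESHOLD))
```

Because the score needs no learned parameters, a gate of this kind can run before any forward pass, which is the intuition behind rejecting signal-level anomalies early.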

MeanCache: From Instantaneous to Average Velocity for Accelerating Flow Matching Inference

Jan 27, 2026

Abstract:We present MeanCache, a training-free caching framework for efficient Flow Matching inference. Existing caching methods reduce redundant computation but typically rely on instantaneous velocity information (e.g., feature caching), which often leads to severe trajectory deviations and error accumulation under high acceleration ratios. MeanCache introduces an average-velocity perspective: by leveraging cached Jacobian-vector products (JVP) to construct interval average velocities from instantaneous velocities, it effectively mitigates local error accumulation. To further improve cache timing and JVP reuse stability, we develop a trajectory-stability scheduling strategy as a practical tool, employing a Peak-Suppressed Shortest Path under budget constraints to determine the schedule. Experiments on FLUX.1, Qwen-Image, and HunyuanVideo demonstrate that MeanCache achieves 4.12X, 4.56X, and 3.59X acceleration, respectively, while consistently outperforming state-of-the-art caching baselines in generation quality. We believe this simple yet effective approach provides a new perspective for Flow Matching inference and will inspire further exploration of stability-driven acceleration in commercial-scale generative models.
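The difference between stepping with an instantaneous velocity and stepping with an interval average velocity can be illustrated on a toy ODE. The sketch below is not MeanCache itself: the field dx/dt = -x, the analytic derivative standing in for a JVP, and all function names are assumptions. It uses the first-order expansion of the interval average, v + (h/2) · Dv/Dt, where Dv/Dt is the derivative of the velocity along the trajectory.

```python
import math


def velocity(x: float, t: float) -> float:
    """Toy instantaneous velocity dx/dt = -x (stand-in for a learned model)."""
    return -x


def total_derivative(x: float, t: float) -> float:
    """Derivative of the velocity along the trajectory.

    (dv/dx) * v + dv/dt = (-1) * (-x) + 0 = x. A real model would obtain
    this with a Jacobian-vector product; here it is written analytically.
    """
    return x


def step_instantaneous(x: float, t: float, h: float) -> float:
    """Reuse the velocity at time t for the whole interval (plain Euler step)."""
    return x + h * velocity(x, t)


def step_average(x: float, t: float, h: float) -> float:
    """Step with a first-order estimate of the average velocity over [t, t + h]."""
    avg_v = velocity(x, t) + 0.5 * h * total_derivative(x, t)
    return x + h * avg_v


x0, h = 1.0, 0.5
exact = x0 * math.exp(-h)  # closed-form solution of dx/dt = -x
err_inst = abs(step_instantaneous(x0, 0.0, h) - exact)
err_avg = abs(step_average(x0, 0.0, h) - exact)
print(f"instantaneous error: {err_inst:.4f}, average-velocity error: {err_avg:.4f}")
```

On this toy problem the average-velocity step lands noticeably closer to the exact solution for the same step size, which is the kind of error reduction the abstract attributes to replacing instantaneous velocities with interval averages.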

ELGAR: Expressive Cello Performance Motion Generation for Audio Rendition

May 07, 2025

Abstract:The art of instrument performance stands as a vivid manifestation of human creativity and emotion. Nonetheless, generating instrument performance motions is a highly challenging task, as it requires not only capturing intricate movements but also reconstructing the complex dynamics of the performer-instrument interaction. While existing works primarily focus on modeling partial body motions, we propose Expressive ceLlo performance motion Generation for Audio Rendition (ELGAR), a state-of-the-art diffusion-based framework for whole-body fine-grained instrument performance motion generation solely from audio. To emphasize the interactive nature of the instrument performance, we introduce Hand Interactive Contact Loss (HICL) and Bow Interactive Contact Loss (BICL), which effectively guarantee the authenticity of the interplay. Moreover, to better evaluate whether the generated motions align with the semantic context of the music audio, we design novel metrics specifically for string instrument performance motion generation, including finger-contact distance, bow-string distance, and bowing score. Extensive evaluations and ablation studies are conducted to validate the efficacy of the proposed methods. In addition, we put forward a motion generation dataset SPD-GEN, collated and normalized from the MoCap dataset SPD. As demonstrated, ELGAR has shown great potential in generating instrument performance motions with complicated and fast interactions, which will promote further development in areas such as animation, music education, interactive art creation, etc.

Investigating Effective Speaker Property Privacy Protection in Federated Learning for Speech Emotion Recognition

Oct 17, 2024

Abstract:Federated Learning (FL) is a privacy-preserving approach that allows servers to aggregate distributed models transmitted from local clients rather than training on user data. More recently, FL has been applied to Speech Emotion Recognition (SER) for secure human-computer interaction applications. Recent research has found that FL is still vulnerable to inference attacks. To this end, this paper focuses on investigating the security of FL for SER concerning property inference attacks. We propose a novel method to protect the property information in speech data by decomposing various properties in the sound and adding perturbations to these properties. Our experiments show that the proposed method offers better privacy-utility trade-offs than existing methods. The trade-offs enable more effective attack prevention while maintaining similar FL utility levels. This work can guide future work on privacy protection methods in speech processing.
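The server-side aggregation step described above can be sketched as a sample-weighted average of client parameters. This is the common federated averaging rule, used here as an assumption; the abstract does not state which aggregation rule the paper uses, and the function name `federated_average` is hypothetical.

```python
import numpy as np


def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighted by local sample counts.

    Only model parameters reach the server; raw user data stays on the clients.
    """
    weights = np.stack([np.asarray(w, dtype=np.float64) for w in client_weights])
    sizes = np.asarray(client_sizes, dtype=np.float64)
    # Broadcast each client's sample count across its parameter vector.
    return (sizes[:, None] * weights).sum(axis=0) / sizes.sum()


# Two hypothetical clients with 1 and 3 local samples respectively.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [1, 3])
print(global_weights)
```

The transmitted parameters are exactly what a property inference attack would examine, which is why the paper perturbs speaker properties before they can leak through model updates.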

Synthetic Test Data Generation Using Recurrent Neural Networks: A Position Paper

Jul 07, 2024

Abstract:Testing in production-like test environments is an essential part of quality assurance processes in many industries. Provisioning such test environments for information-intensive services involves setting up databases that are rich enough to enable simulating a wide variety of user scenarios. While production data is perhaps the gold standard here, many organizations, particularly within the public sector, are not allowed to use production data for testing purposes due to privacy concerns. The alternatives are to use anonymized data or synthetically generated data. In this paper, we elaborate on these alternatives and compare them in an industrial context. Further, we focus on synthetic data generation and investigate the use of recurrent neural networks for this purpose. In our preliminary experiments, we were able to generate representative and highly accurate data using a recurrent neural network. These results open new research questions that we discuss here and plan to investigate in our future research.

* This paper was published in the proceedings of RAISE@ICSE in 2019

Unsupervised Shadow Removal Using Target Consistency Generative Adversarial Network

Oct 03, 2020

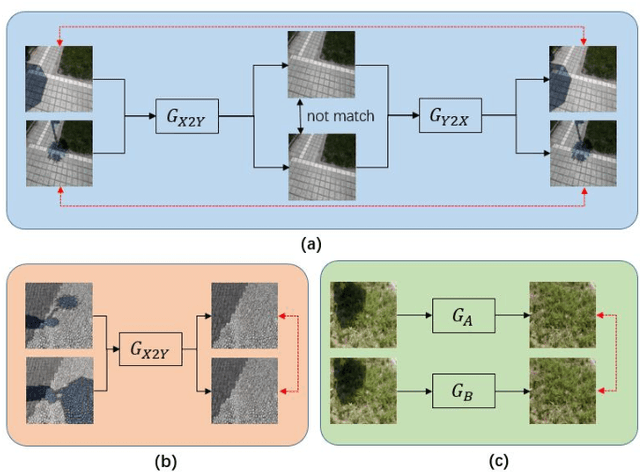

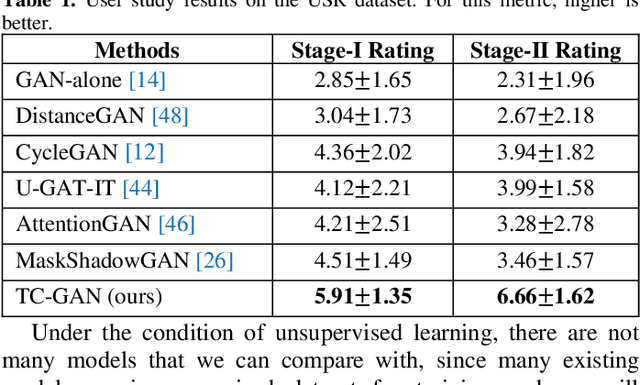

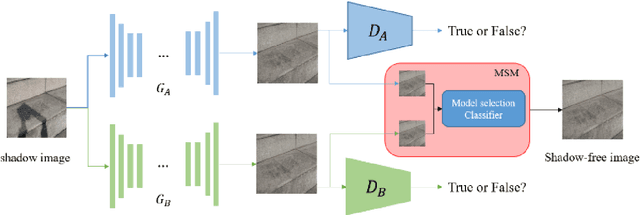

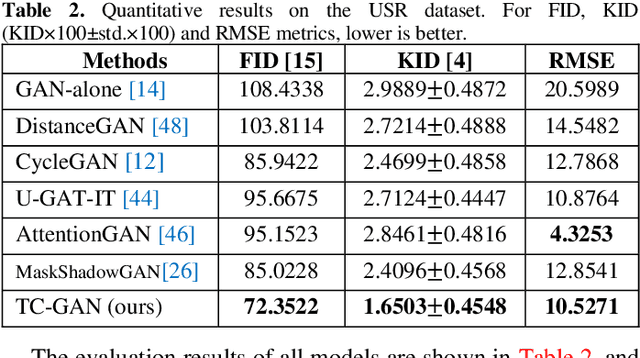

Abstract:Unsupervised shadow removal aims to learn a non-linear function that maps an image from the shadow domain to the non-shadow domain in the absence of paired shadow and non-shadow data. In this paper, we develop a simple yet efficient target-consistency generative adversarial network (TC-GAN) for the shadow removal task in an unsupervised manner. Compared with the bidirectional mapping in cycle-consistency GAN based methods for shadow removal, TC-GAN tries to learn a one-sided mapping to cast shadow images into shadow-free ones. With the proposed target-consistency constraint, the correlations between shadow images and the output shadow-free image are strictly confined. Extensive comparison experiments show that TC-GAN outperforms the state-of-the-art unsupervised shadow removal methods by 14.9% in terms of FID and 31.5% in terms of KID. It is rather remarkable that TC-GAN achieves comparable performance with supervised shadow removal methods.

Generating the Cloud Motion Winds Field from Satellite Cloud Imagery Using Deep Learning Approach

Oct 03, 2020

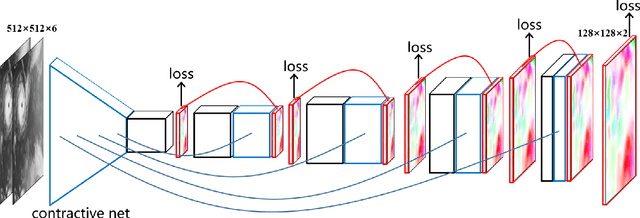

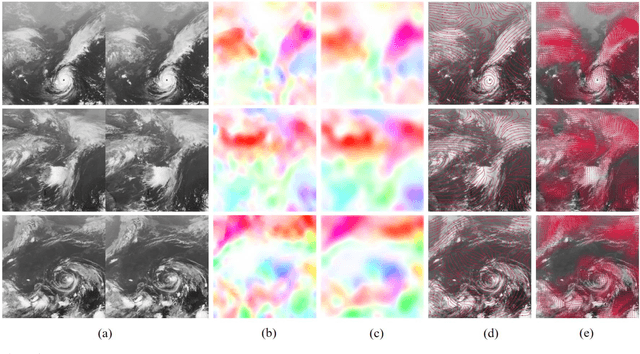

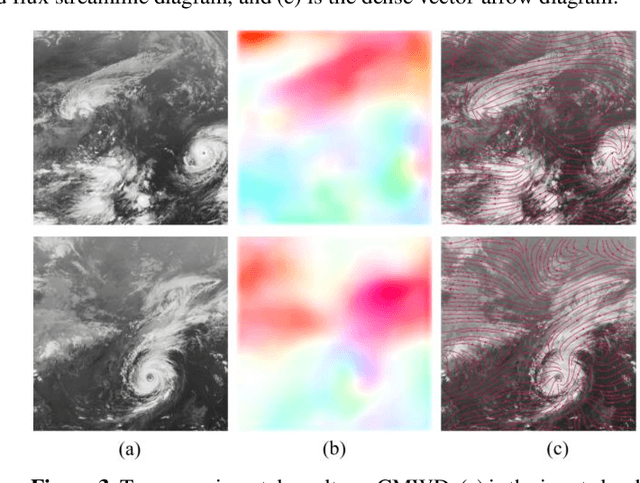

Abstract:Cloud motion winds (CMW) are routinely derived by tracking features in sequential geostationary satellite infrared cloud imagery. In this paper, we explore a cloud motion winds algorithm based on a data-driven deep learning approach. Unlike conventional hand-crafted feature tracking and correlation matching algorithms, we use a deep learning model to automatically learn the motion feature representations and directly output the field of cloud motion winds. In addition, we propose a novel large-scale cloud motion winds dataset (CMWD) for training deep learning models. We also try to use a single cloud image to predict the cloud motion winds field in a fixed region, which is impossible to achieve using traditional algorithms. The experimental results demonstrate that our algorithm can predict the cloud motion winds field efficiently, even with a single cloud image as input.

TCLNet: Learning to Locate Typhoon Center Using Deep Neural Network

Oct 03, 2020

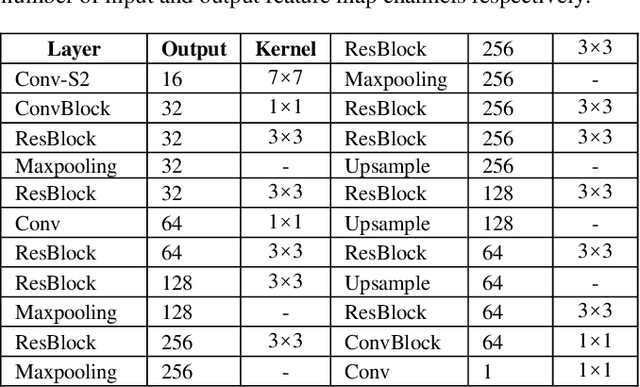

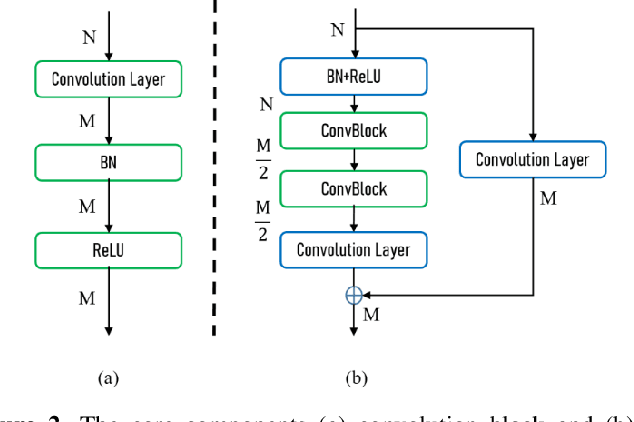

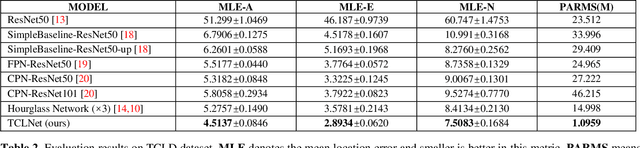

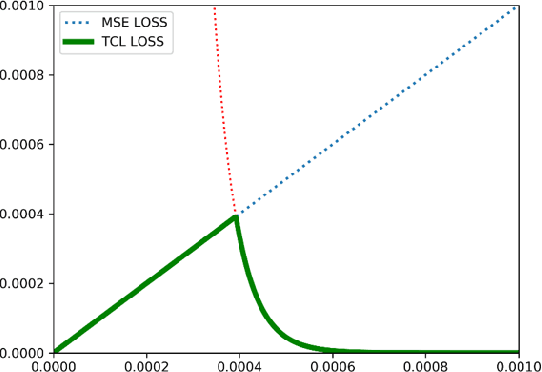

Abstract:The task of typhoon center location plays an important role in typhoon intensity analysis and typhoon path prediction. Conventional typhoon center location algorithms mostly rely on digital image processing and mathematical morphology operations, which achieve limited performance. In this paper, we propose an efficient fully convolutional end-to-end deep neural network named TCLNet to automatically locate the typhoon center position. We design the network structure carefully so that TCLNet can achieve remarkable performance based on its lightweight architecture. In addition, we present a brand new large-scale typhoon center location dataset (TCLD) so that TCLNet can be trained in a supervised manner. Furthermore, we propose a novel TCL+ piecewise loss function to further improve the performance of TCLNet. Extensive experimental results and comparisons demonstrate the performance of our model: TCLNet achieves a 14.4% increase in accuracy with a 92.7% reduction in parameters compared with SOTA deep learning based typhoon center location methods.

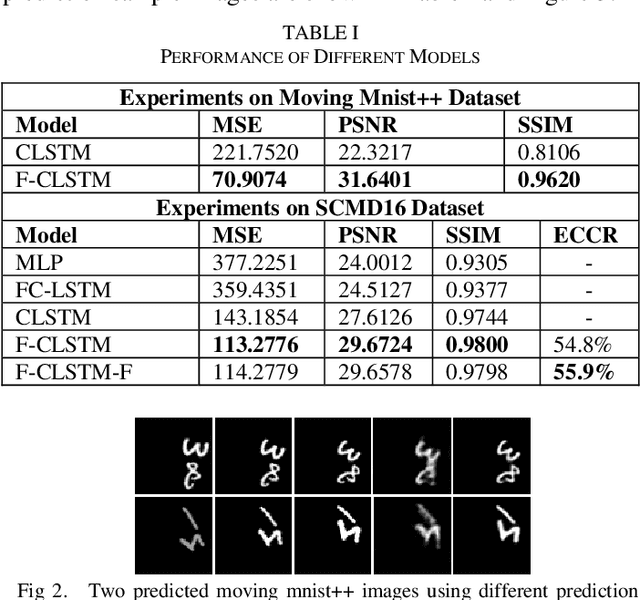

FORECAST-CLSTM: A New Convolutional LSTM Network for Cloudage Nowcasting

May 19, 2019

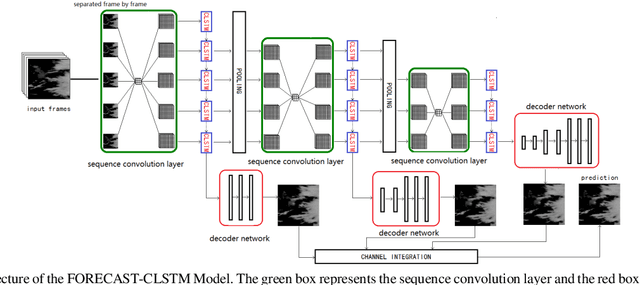

Abstract:With the high demand for large-scale and real-time public weather services, refined short-term cloudage prediction has become an essential part of weather forecast products. To provide weather-service-compliant cloudage nowcasting, in this paper we propose a novel hierarchical Convolutional Long Short-Term Memory network based deep learning model, which we term FORECAST-CLSTM, with a new Forecaster loss function to predict future satellite cloud images. The model is designed to fuse multi-scale features in the hierarchical network structure to predict the pixel value and the morphological movement of the cloudage simultaneously. We also collect about 40K infrared satellite nephograms and create a large-scale Satellite Cloudage Map Dataset (SCMD). The proposed FORECAST-CLSTM model is shown to achieve better prediction performance than the state-of-the-art ConvLSTM model, and the proposed Forecaster loss function is also demonstrated to retain the uncertainty of the real atmospheric conditions better than conventional loss functions.
