Bryan Reimer

CLERA: A Unified Model for Joint Cognitive Load and Eye Region Analysis in the Wild

Jun 26, 2023

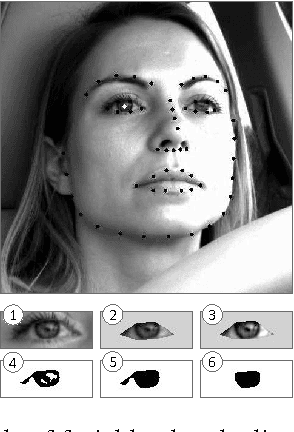

Abstract:Non-intrusive, real-time analysis of the dynamics of the eye region allows us to monitor humans' visual attention allocation and estimate their mental state during the performance of real-world tasks, which can potentially benefit a wide range of human-computer interaction (HCI) applications. While commercial eye-tracking devices have been frequently employed, the difficulty of customizing these devices places unnecessary constraints on the exploration of more efficient, end-to-end models of eye dynamics. In this work, we propose CLERA, a unified model for Cognitive Load and Eye Region Analysis, which achieves precise keypoint detection and spatiotemporal tracking in a joint-learning framework. Our method demonstrates significant efficiency and outperforms prior work on tasks including cognitive load estimation, eye landmark detection, and blink estimation. We also introduce a large-scale dataset of 30k human faces with joint pupil, eye-openness, and landmark annotation, which aims to support future HCI research on human factors and eye-related analysis.

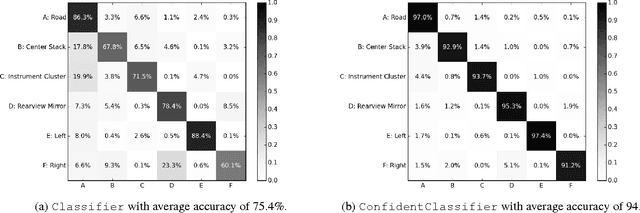

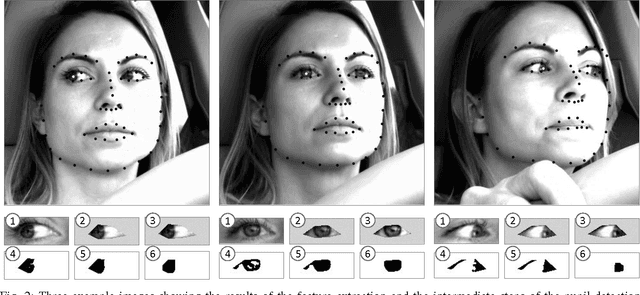

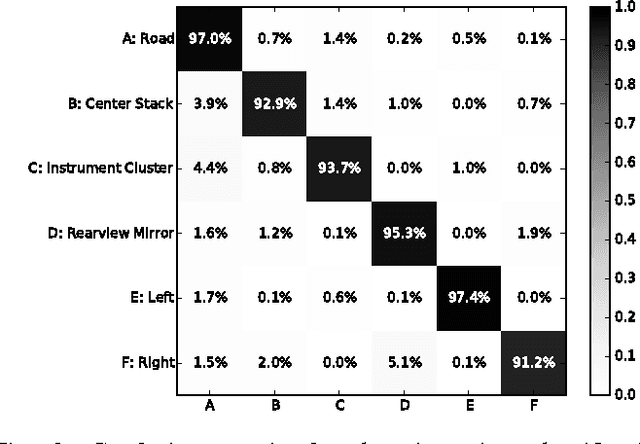

Driver Glance Classification In-the-wild: Towards Generalization Across Domains and Subjects

Dec 05, 2020

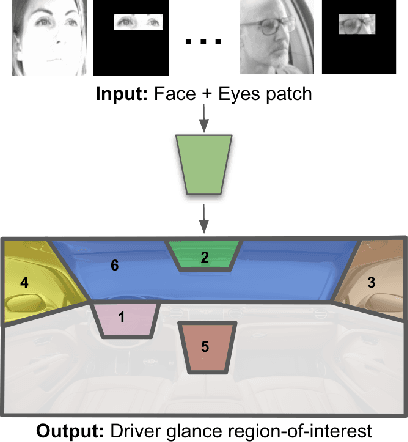

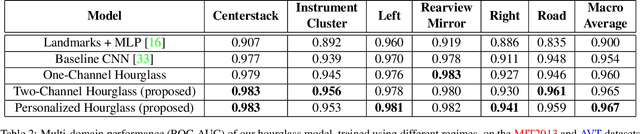

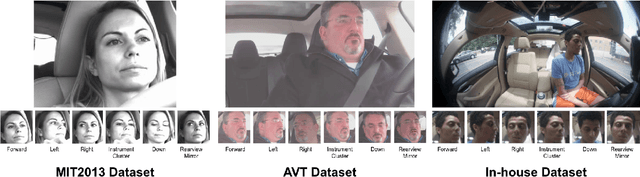

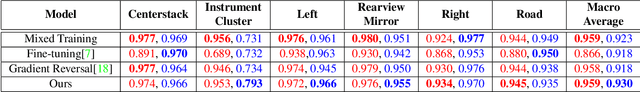

Abstract:Distracted drivers are dangerous drivers. Equipping advanced driver assistance systems (ADAS) with the ability to detect driver distraction can help prevent accidents and improve driver safety. In order to detect driver distraction, an ADAS must be able to monitor their visual attention. We propose a model that takes as input a patch of the driver's face along with a crop of the eye-region and classifies their glance into 6 coarse regions-of-interest (ROIs) in the vehicle. We demonstrate that an hourglass network, trained with an additional reconstruction loss, allows the model to learn stronger contextual feature representations than a traditional encoder-only classification module. To make the system robust to subject-specific variations in appearance and behavior, we design a personalized hourglass model tuned with an auxiliary input representing the driver's baseline glance behavior. Finally, we present a weakly supervised multi-domain training regimen that enables the hourglass to jointly learn representations from different domains (varying in camera type, angle), utilizing unlabeled samples and thereby reducing annotation cost.

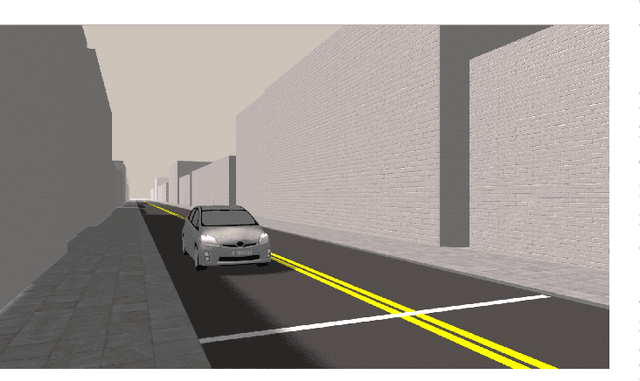

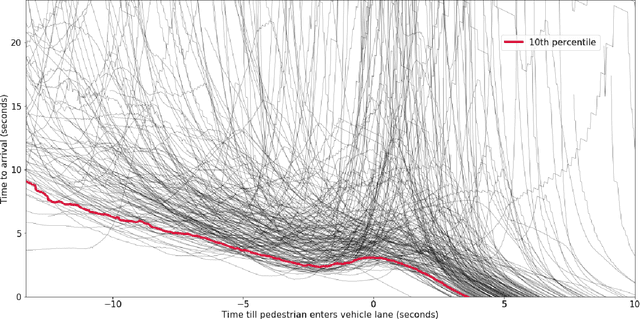

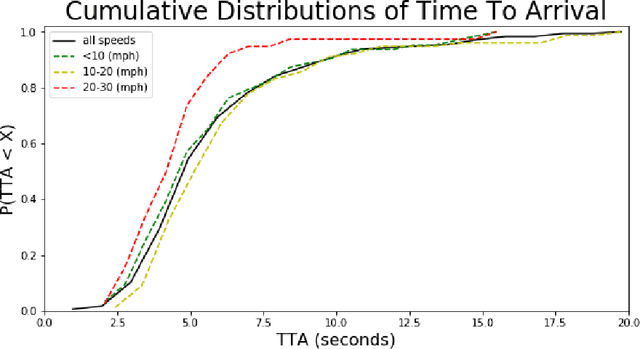

Dynamics of Pedestrian Crossing Decisions Based on Vehicle Trajectories in Large-Scale Simulated and Real-World Data

Apr 08, 2019

Abstract:Humans, as both pedestrians and drivers, generally skillfully navigate traffic intersections. Despite the uncertainty, danger, and the non-verbal nature of communication commonly found in these interactions, there are surprisingly few collisions considering the total number of interactions. As the role of automation technology in vehicles grows, it becomes increasingly critical to understand the relationship between pedestrian and driver behavior: how pedestrians perceive the actions of a vehicle/driver and how pedestrians make crossing decisions. The relationship between time-to-arrival (TTA) and pedestrian gap acceptance (i.e., whether a pedestrian chooses to cross under a given window of time to cross) has been extensively investigated. However, the dynamic nature of vehicle trajectories in the context of non-verbal communication has not been systematically explored. Our work provides evidence that trajectory dynamics, such as changes in TTA, can be powerful signals in the non-verbal communication between drivers and pedestrians. Moreover, we investigate these effects in both simulated and real-world datasets, both larger than have previously been considered in literature to the best of our knowledge.

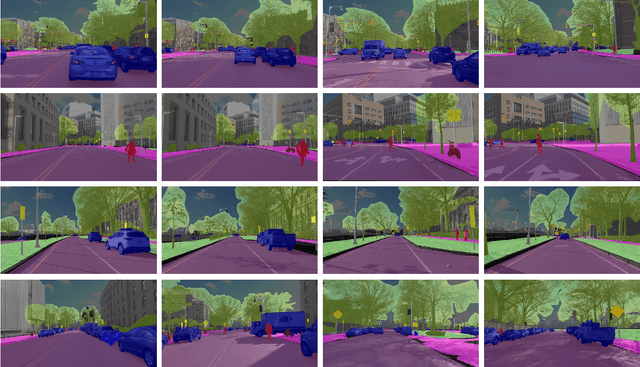

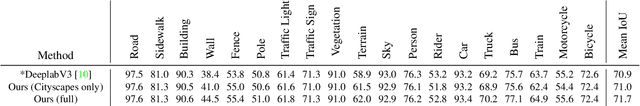

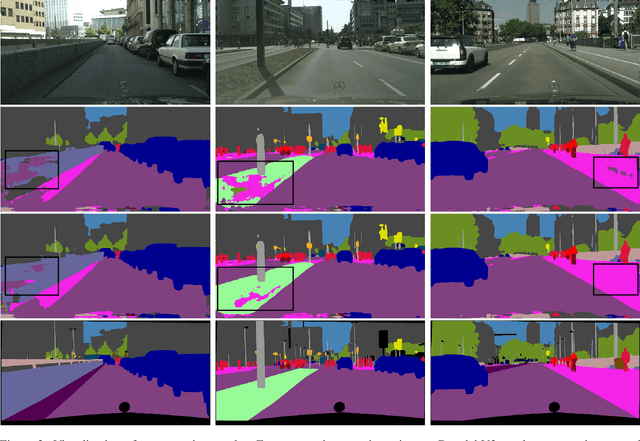

Value of Temporal Dynamics Information in Driving Scene Segmentation

Mar 21, 2019

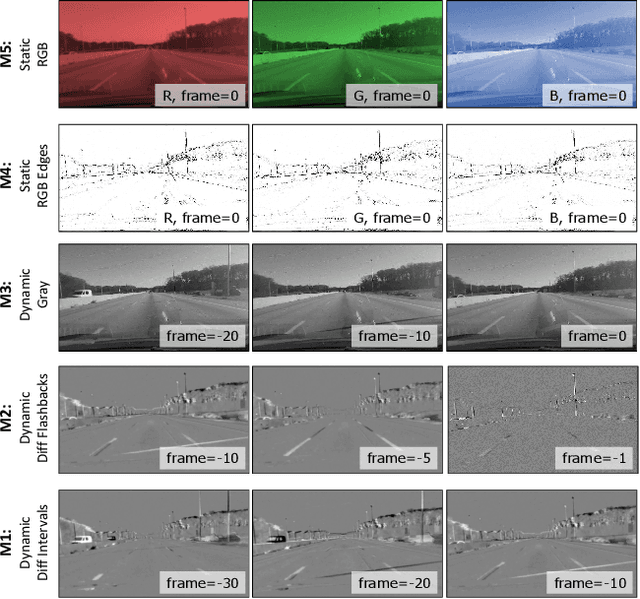

Abstract:Semantic scene segmentation has primarily been addressed by forming representations of single images both with supervised and unsupervised methods. The problem of semantic segmentation in dynamic scenes has begun to recently receive attention with video object segmentation approaches. What is not known is how much extra information the temporal dynamics of the visual scene carries that is complimentary to the information available in the individual frames of the video. There is evidence that the human visual system can effectively perceive the scene from temporal dynamics information of the scene's changing visual characteristics without relying on the visual characteristics of individual snapshots themselves. Our work takes steps to explore whether machine perception can exhibit similar properties by combining appearance-based representations and temporal dynamics representations in a joint-learning problem that reveals the contribution of each toward successful dynamic scene segmentation. Additionally, we provide the MIT Driving Scene Segmentation dataset, which is a large-scale full driving scene segmentation dataset, densely annotated for every pixel and every one of 5,000 video frames. This dataset is intended to help further the exploration of the value of temporal dynamics information for semantic segmentation in video.

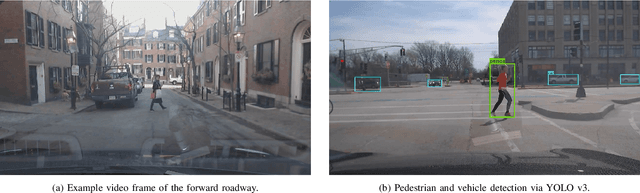

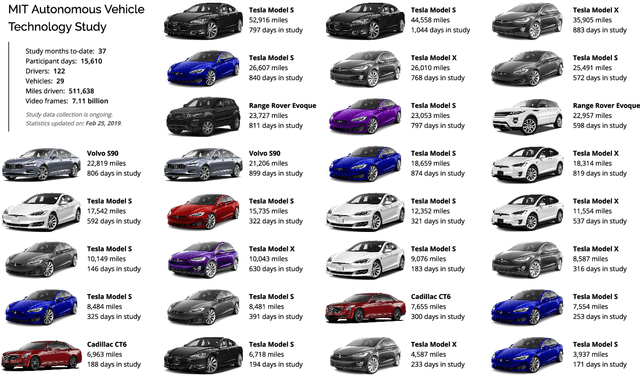

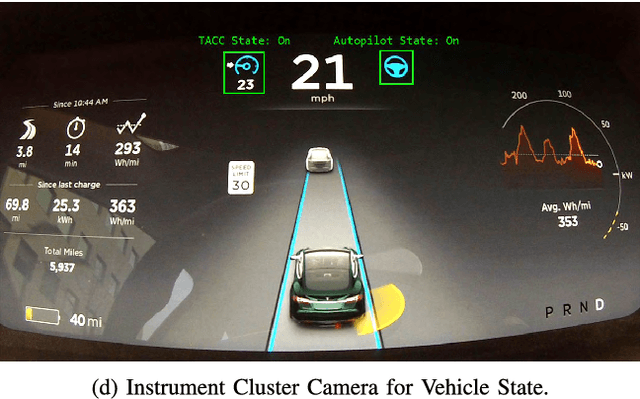

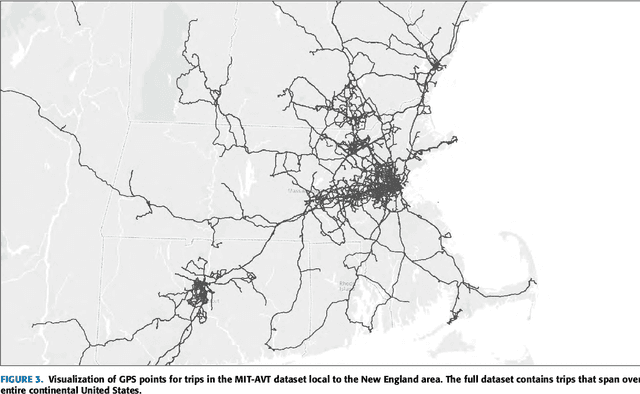

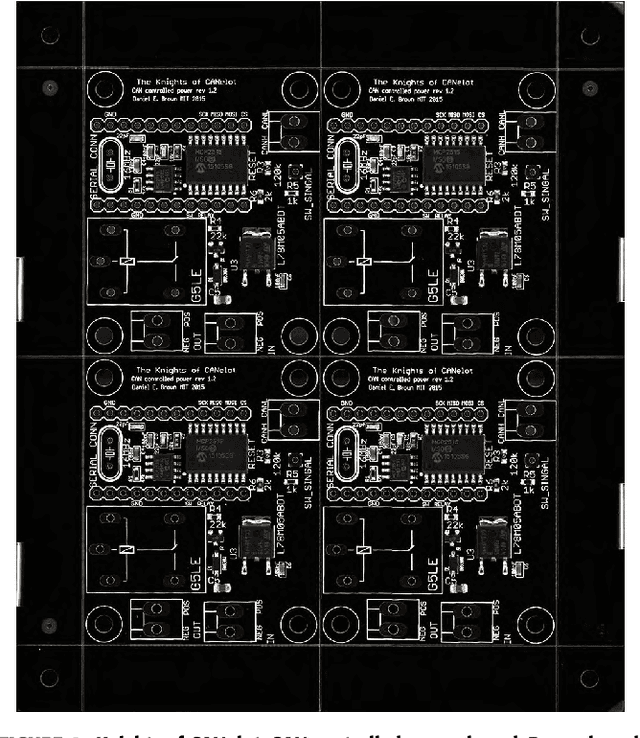

MIT Autonomous Vehicle Technology Study: Large-Scale Deep Learning Based Analysis of Driver Behavior and Interaction with Automation

Sep 30, 2018

Abstract:For the foreseeble future, human beings will likely remain an integral part of the driving task, monitoring the AI system as it performs anywhere from just over 0% to just under 100% of the driving. The governing objectives of the MIT Autonomous Vehicle Technology (MIT-AVT) study are to (1) undertake large-scale real-world driving data collection that includes high-definition video to fuel the development of deep learning based internal and external perception systems, (2) gain a holistic understanding of how human beings interact with vehicle automation technology by integrating video data with vehicle state data, driver characteristics, mental models, and self-reported experiences with technology, and (3) identify how technology and other factors related to automation adoption and use can be improved in ways that save lives. In pursuing these objectives, we have instrumented 21 Tesla Model S and Model X vehicles, 2 Volvo S90 vehicles, 2 Range Rover Evoque, and 2 Cadillac CT6 vehicles for both long-term (over a year per driver) and medium term (one month per driver) naturalistic driving data collection. Furthermore, we are continually developing new methods for analysis of the massive-scale dataset collected from the instrumented vehicle fleet. The recorded data streams include IMU, GPS, CAN messages, and high-definition video streams of the driver face, the driver cabin, the forward roadway, and the instrument cluster (on select vehicles). The study is on-going and growing. To date, we have 99 participants, 11,846 days of participation, 405,807 miles, and 5.5 billion video frames. This paper presents the design of the study, the data collection hardware, the processing of the data, and the computer vision algorithms currently being used to extract actionable knowledge from the data.

Arguing Machines: Human Supervision of Black Box AI Systems That Make Life-Critical Decisions

Sep 24, 2018

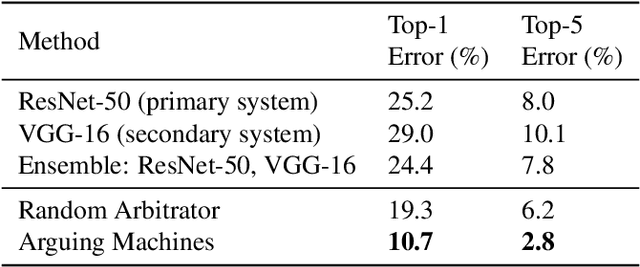

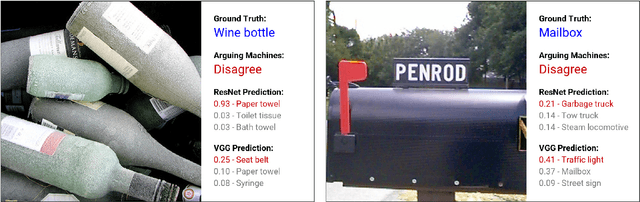

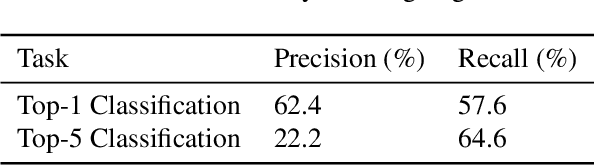

Abstract:We consider the paradigm of a black box AI system that makes life-critical decisions. We propose an "arguing machines" framework that pairs the primary AI system with a secondary one that is independently trained to perform the same task. We show that disagreement between the two systems, without any knowledge of underlying system design or operation, is sufficient to arbitrarily improve the accuracy of the overall decision pipeline given human supervision over disagreements. We demonstrate this system in two applications: (1) an illustrative example of image classification and (2) on large-scale real-world semi-autonomous driving data. For the first application, we apply this framework to image classification achieving a reduction from 8.0% to 2.8% top-5 error on ImageNet. For the second application, we apply this framework to Tesla Autopilot and demonstrate the ability to predict 90.4% of system disengagements that were labeled by human annotators as challenging and needing human supervision.

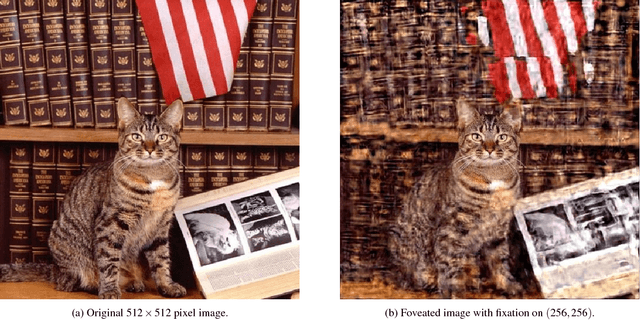

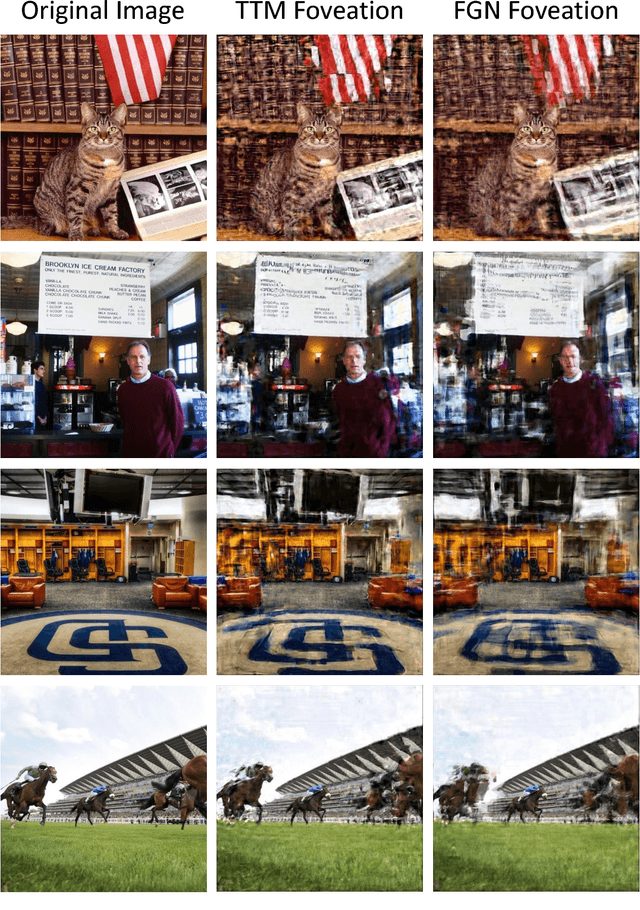

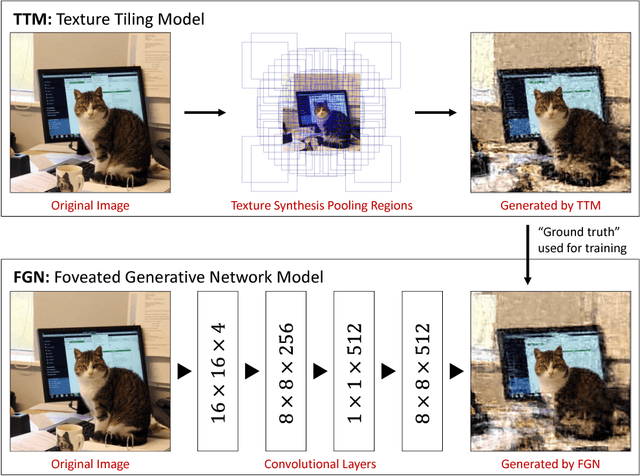

SideEye: A Generative Neural Network Based Simulator of Human Peripheral Vision

Oct 23, 2017

Abstract:Foveal vision makes up less than 1% of the visual field. The other 99% is peripheral vision. Precisely what human beings see in the periphery is both obvious and mysterious in that we see it with our own eyes but can't visualize what we see, except in controlled lab experiments. Degradation of information in the periphery is far more complex than what might be mimicked with a radial blur. Rather, behaviorally-validated models hypothesize that peripheral vision measures a large number of local texture statistics in pooling regions that overlap and grow with eccentricity. In this work, we develop a new method for peripheral vision simulation by training a generative neural network on a behaviorally-validated full-field synthesis model. By achieving a 21,000 fold reduction in running time, our approach is the first to combine realism and speed of peripheral vision simulation to a degree that provides a whole new way to approach visual design: through peripheral visualization.

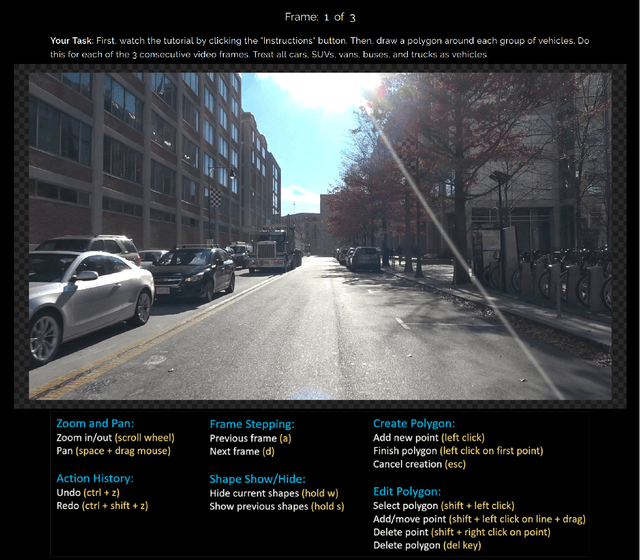

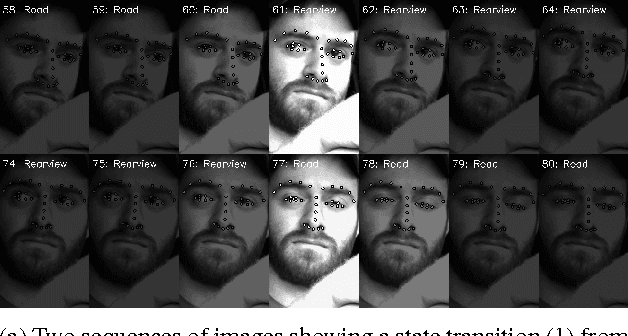

Semi-Automated Annotation of Discrete States in Large Video Datasets

Dec 03, 2016

Abstract:We propose a framework for semi-automated annotation of video frames where the video is of an object that at any point in time can be labeled as being in one of a finite number of discrete states. A Hidden Markov Model (HMM) is used to model (1) the behavior of the underlying object and (2) the noisy observation of its state through an image processing algorithm. The key insight of this approach is that the annotation of frame-by-frame video can be reduced from a problem of labeling every single image to a problem of detecting a transition between states of the underlying objected being recording on video. The performance of the framework is evaluated on a driver gaze classification dataset composed of 16,000,000 images that were fully annotated over 6,000 hours of direct manual annotation labor. On this dataset, we achieve a 13x reduction in manual annotation for an average accuracy of 99.1% and a 84x reduction for an average accuracy of 91.2%.

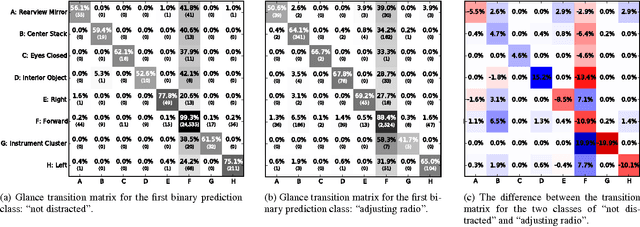

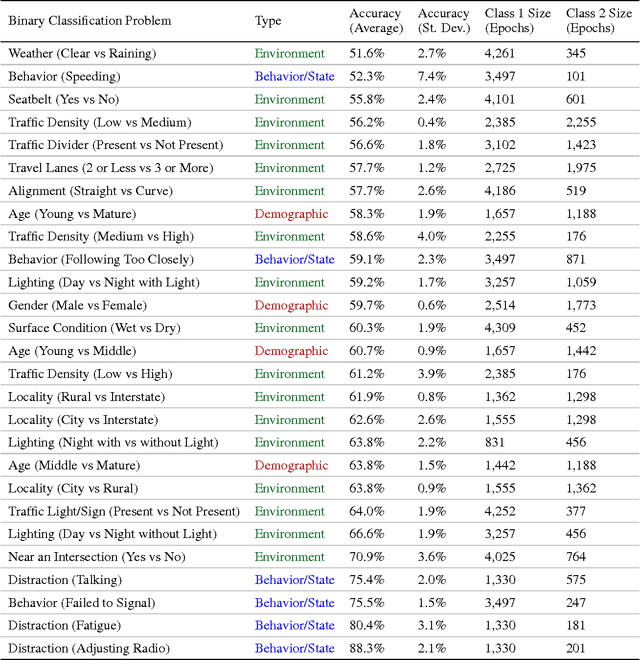

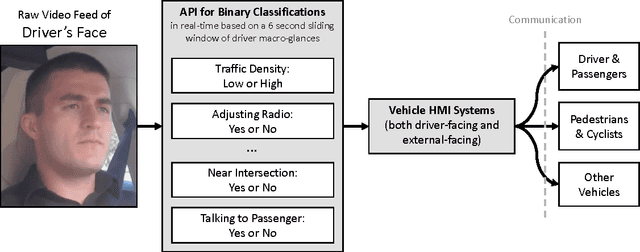

What Can Be Predicted from Six Seconds of Driver Glances?

Nov 26, 2016

Abstract:We consider a large dataset of real-world, on-road driving from a 100-car naturalistic study to explore the predictive power of driver glances and, specifically, to answer the following question: what can be predicted about the state of the driver and the state of the driving environment from a 6-second sequence of macro-glances? The context-based nature of such glances allows for application of supervised learning to the problem of vision-based gaze estimation, making it robust, accurate, and reliable in messy, real-world conditions. So, it's valuable to ask whether such macro-glances can be used to infer behavioral, environmental, and demographic variables? We analyze 27 binary classification problems based on these variables. The takeaway is that glance can be used as part of a multi-sensor real-time system to predict radio-tuning, fatigue state, failure to signal, talking, and several environment variables.

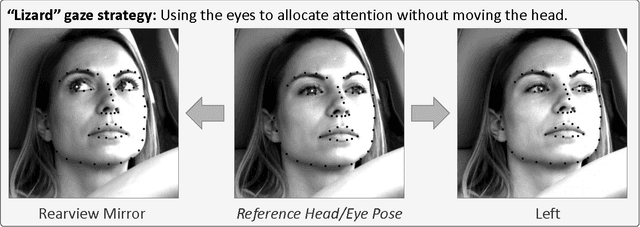

Owl and Lizard: Patterns of Head Pose and Eye Pose in Driver Gaze Classification

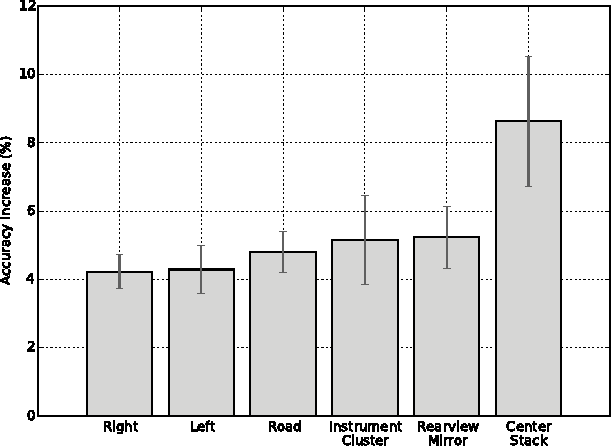

Nov 20, 2016

Abstract:Accurate, robust, inexpensive gaze tracking in the car can help keep a driver safe by facilitating the more effective study of how to improve (1) vehicle interfaces and (2) the design of future Advanced Driver Assistance Systems. In this paper, we estimate head pose and eye pose from monocular video using methods developed extensively in prior work and ask two new interesting questions. First, how much better can we classify driver gaze using head and eye pose versus just using head pose? Second, are there individual-specific gaze strategies that strongly correlate with how much gaze classification improves with the addition of eye pose information? We answer these questions by evaluating data drawn from an on-road study of 40 drivers. The main insight of the paper is conveyed through the analogy of an "owl" and "lizard" which describes the degree to which the eyes and the head move when shifting gaze. When the head moves a lot ("owl"), not much classification improvement is attained by estimating eye pose on top of head pose. On the other hand, when the head stays still and only the eyes move ("lizard"), classification accuracy increases significantly from adding in eye pose. We characterize how that accuracy varies between people, gaze strategies, and gaze regions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge