Bennett Landman

Senior Member, IEEE

BIAS: Transparent reporting of biomedical image analysis challenges

Oct 23, 2019

Abstract: The number of biomedical image analysis challenges organized per year is steadily increasing. These international competitions have the purpose of benchmarking algorithms on common data sets, typically to identify the best method for a given problem. Recent research, however, revealed that common practice related to challenge reporting does not allow for adequate interpretation and reproducibility of results. To address the discrepancy between the impact of challenges and the quality (control), the Biomedical Image Analysis ChallengeS (BIAS) initiative developed a set of recommendations for the reporting of challenges. The BIAS statement aims to improve the transparency of the reporting of a biomedical image analysis challenge regardless of field of application, image modality or task category assessed. This article describes how the BIAS statement was developed and presents a checklist which authors of biomedical image analysis challenges are encouraged to include in their submission when submitting a paper on a challenge for review. The purpose of the checklist is to standardize and facilitate the review process and raise interpretability and reproducibility of challenge results by making relevant information explicit.

Less is More: Simultaneous View Classification and Landmark Detection for Abdominal Ultrasound Images

Jun 04, 2018

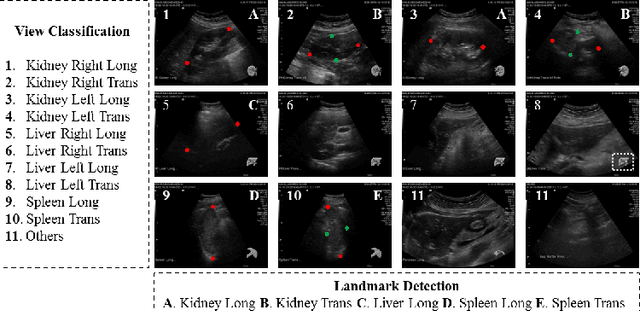

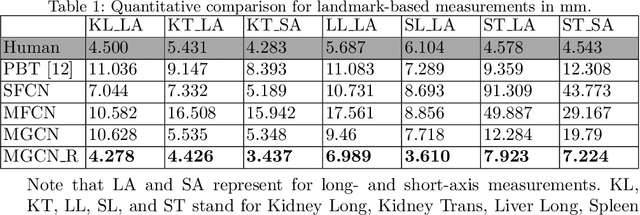

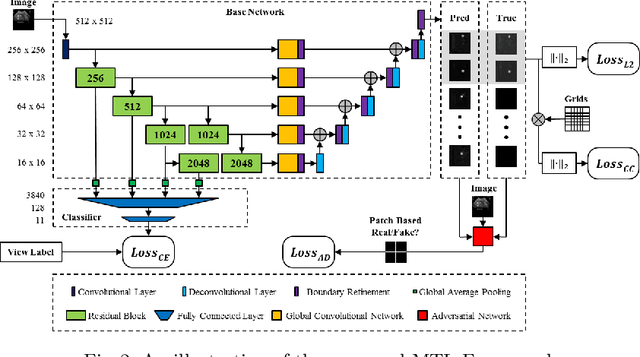

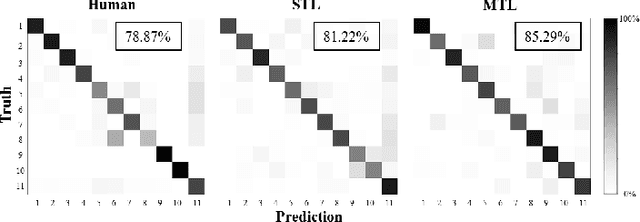

Abstract: An abdominal ultrasound examination, which is the most common ultrasound examination, requires substantial manual effort to acquire standard abdominal organ views, annotate the views in text, and record clinically relevant organ measurements. Hence, automatic view classification and landmark detection of the organs can be instrumental in streamlining the examination workflow. However, this is a challenging problem given not only the inherent difficulties of the ultrasound modality, e.g., low contrast and large variations, but also the heterogeneity across tasks, i.e., one classification task for all views, and then one landmark detection task for each relevant view. While convolutional neural networks (CNNs) have demonstrated more promising outcomes on ultrasound image analytics than traditional machine learning approaches, it becomes impractical to deploy multiple networks (one for each task) due to the limited computational and memory resources on most existing ultrasound scanners. To overcome such limits, we propose a multi-task learning framework that handles all the tasks with a single network. This network performs view classification and landmark detection simultaneously; it is also equipped with global convolutional kernels, coordinate constraints, and a conditional adversarial module to improve performance. In an experimental study based on 187,219 ultrasound images, the proposed simplified approach achieves (1) view classification accuracy better than the agreement between two clinical experts and (2) landmark-based measurement errors on par with inter-user variability. The multi-task approach also benefits from sharing the feature extraction across all tasks during training and, as a result, outperforms approaches that address each task individually.
