Barbara Bruno

ROBOPOL: Social Robotics Meets Vehicular Communications for Cooperative Automated Driving

Dec 30, 2025

Abstract:On the way towards full autonomy, sharing roads between automated vehicles and human actors in so-called mixed traffic is unavoidable. Moreover, even if all vehicles on the road were autonomous, pedestrians would still be crossing the streets. We propose social robots as moderators between autonomous vehicles and vulnerable road users (VRUs). To this end, we identify four enablers requiring integration: (1) advanced perception, allowing the robot to see the environment; (2) vehicular communications, allowing connected vehicles to share intentions and the robot to send guiding commands; (3) social human-robot interaction, allowing the robot to effectively communicate with VRUs and drivers; (4) formal specification, allowing the robot to understand traffic and plan accordingly. This paper presents an overview of the key enablers and reports on a first proof-of-concept integration of the first three enablers, envisioning a social robot advising pedestrians in scenarios with a cooperative automated e-bike.

Augmenting Automatic Speech Recognition Models with Disfluency Detection

Sep 17, 2024

Abstract:Speech disfluency commonly occurs in conversational and spontaneous speech. However, standard Automatic Speech Recognition (ASR) models struggle to accurately recognize these disfluencies because they are typically trained on fluent transcripts. Current research mainly focuses on detecting disfluencies within transcripts, overlooking their exact location and duration in the speech. Additionally, previous work often requires model fine-tuning and addresses limited types of disfluencies. In this work, we present an inference-only approach to augment any ASR model with the ability to detect open-set disfluencies. We first demonstrate that ASR models have difficulty transcribing speech disfluencies. Next, this work proposes a modified Connectionist Temporal Classification (CTC)-based forced alignment algorithm from Kürzinger et al. (2020) to predict word-level timestamps while effectively capturing disfluent speech. Additionally, we develop a model to classify alignment gaps between timestamps as either containing disfluent speech or silence. This model achieves an accuracy of 81.62% and an F1-score of 80.07%. We test the augmentation pipeline of alignment gap detection and classification on a disfluent dataset. Our results show that we captured 74.13% of the words that were initially missed by the transcription, demonstrating the potential of this pipeline for downstream tasks.

Co-designing a Child-Robot Relational Norm Intervention to Regulate Children's Handwriting Posture

Jun 11, 2024

Abstract:Persuasive social robots employ their social influence to modulate children's behaviours in child-robot interaction. In this work, we introduce the Child-Robot Relational Norm Intervention (CRNI) model, leveraging the passive role of social robots and children's reluctance to inconvenience others to influence children's behaviours. Unlike traditional persuasive strategies that employ robots in active roles, CRNI utilizes an indirect approach by generating a disturbance for the robot in response to improper child behaviours, thereby motivating behaviour change through the avoidance of norm violations. The feasibility of CRNI is explored with a focus on improving children's handwriting posture. To this end, as a preliminary work, we conducted two participatory design workshops with 12 children and 1 teacher to identify effective disturbances that can promote posture correction.

The Child Factor in Child-Robot Interaction: Discovering the Impact of Developmental Stage and Individual Characteristics

Apr 20, 2024

Abstract:Social robots, owing to their embodied physical presence in human spaces and the ability to directly interact with the users and their environment, have a great potential to support children in various activities in education, healthcare and daily life. Child-Robot Interaction (CRI), as any domain involving children, inevitably faces the major challenge of designing generalized strategies to work with unique, turbulent and very diverse individuals. Addressing this challenge requires combining the robot-centered perspective, i.e. what robots technically can and are best positioned to do, with the child-centered perspective, i.e. what children may gain from the robot and how the robot should act to best support them in reaching the goals of the interaction. This article aims to help researchers bridge the two perspectives and proposes to address the development of CRI scenarios with insights from child psychology and child development theories. To that end, we review the outcomes of the CRI studies, outline common trends and challenges, and identify two key factors from child psychology that impact child-robot interactions, especially in a long-term perspective: developmental stage and individual characteristics. For both of them we discuss prospective experiment designs which support building naturally engaging and sustainable interactions.

Investigating the role of educational robotics in formal mathematics education: the case of geometry for 15-year-old students

Jun 21, 2021

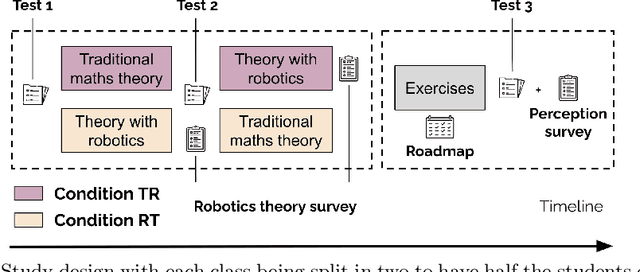

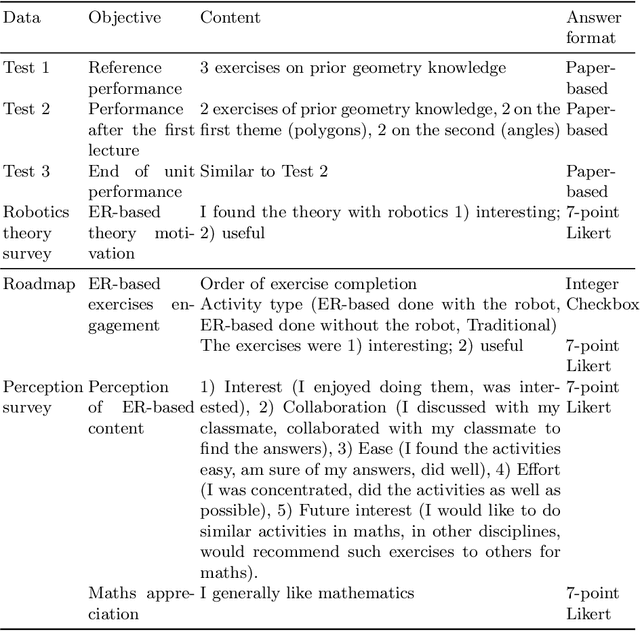

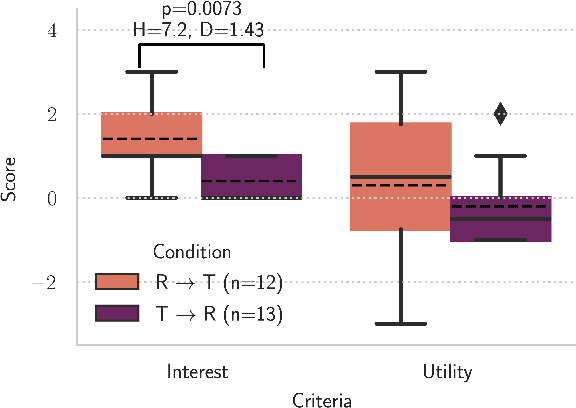

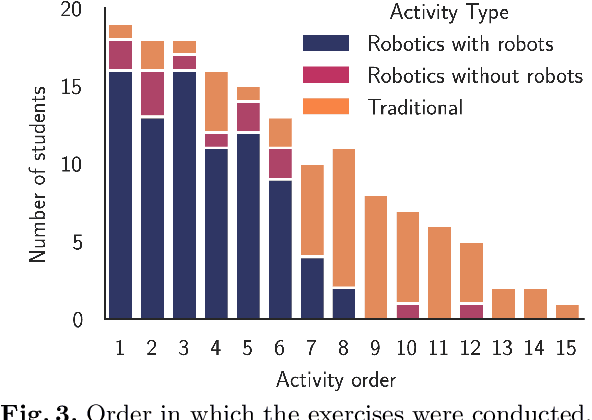

Abstract:Research has shown that Educational Robotics (ER) enhances student performance, interest, engagement and collaboration. However, until now, the adoption of robotics in formal education has remained relatively scarce. Among other causes, this is due to the difficulty of determining the alignment of educational robotic learning activities with the learning outcomes envisioned by the curriculum, as well as their integration with traditional, non-robotics learning activities that are well established in teachers' practices. This work investigates the integration of ER into formal mathematics education, through a quasi-experimental study employing the Thymio robot and Scratch programming to teach geometry to two classes of 15-year-old students, for a total of 26 participants. Three research questions were addressed: (1) Should an ER-based theoretical lecture precede, succeed or replace a traditional theoretical lecture? (2) What is the students' perception of and engagement in the ER-based lecture and exercises? (3) Do the findings differ according to students' prior appreciation of mathematics? The results suggest that ER activities are as valid as traditional ones in helping students grasp the relevant theoretical concepts. Robotics activities seem particularly beneficial during exercise sessions: students freely chose to do exercises that included the robot, rated them as significantly more interesting and useful than their traditional counterparts, and expressed their interest in introducing ER in other mathematics lectures. Finally, results were generally consistent between the students who liked and those who did not like mathematics, suggesting the use of robotics as a means to broaden the number of students engaged in the discipline.

Teachers' perspective on fostering computational thinking through educational robotics

May 11, 2021

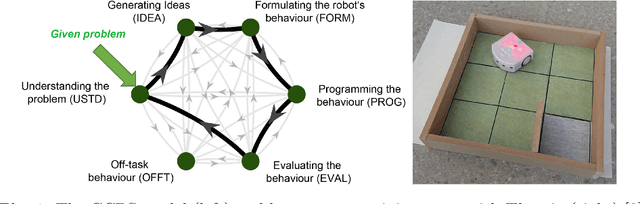

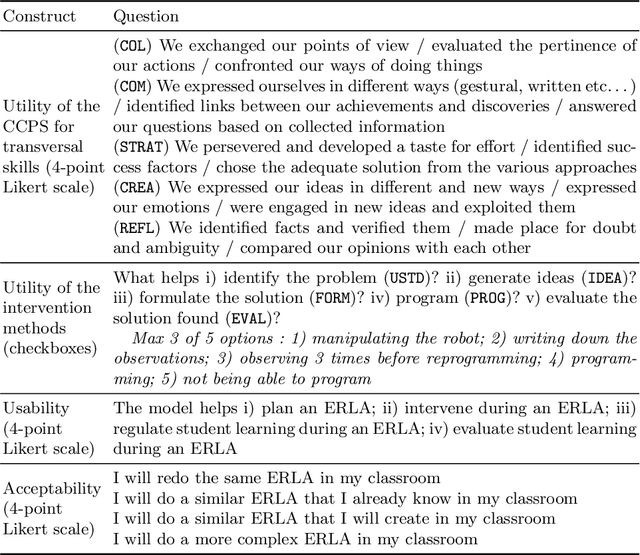

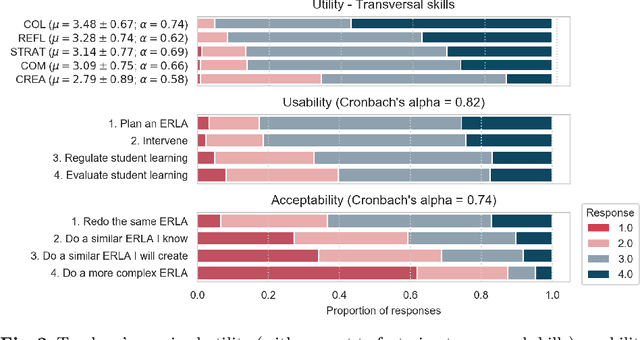

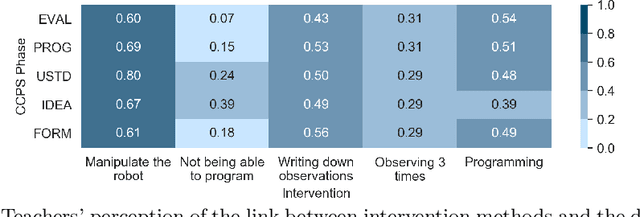

Abstract:With the introduction of educational robotics (ER) and computational thinking (CT) in classrooms, there is a rising need for operational models that help ensure that CT skills are adequately developed. One such model is the Creative Computational Problem Solving Model (CCPS), which can be employed to improve the design of ER learning activities. Following the first validation with students, the objective of the present study is to validate the model with teachers, specifically considering how they may employ the model in their own practices. The Utility, Usability and Acceptability framework was leveraged for the evaluation through a survey analysis with 334 teachers. Teachers found the CCPS model useful to foster transversal skills but could not recognise the impact of specific intervention methods on CT-related cognitive processes. Similarly, teachers perceived the model to be usable for activity design and intervention, although they felt unsure about how to use it to assess student learning and adapt their teaching accordingly. Finally, the teachers accepted the model, as shown by their intent to replicate the activity in their classrooms, but were less willing to modify it or create their own activities, suggesting that they need time to appropriate the model and its underlying tenets.

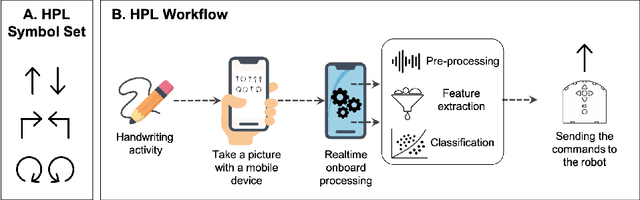

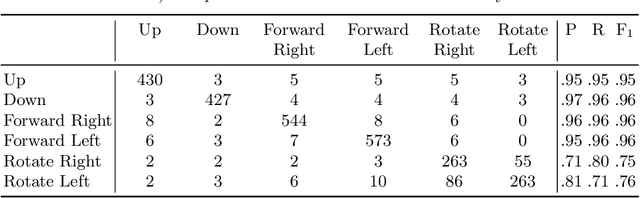

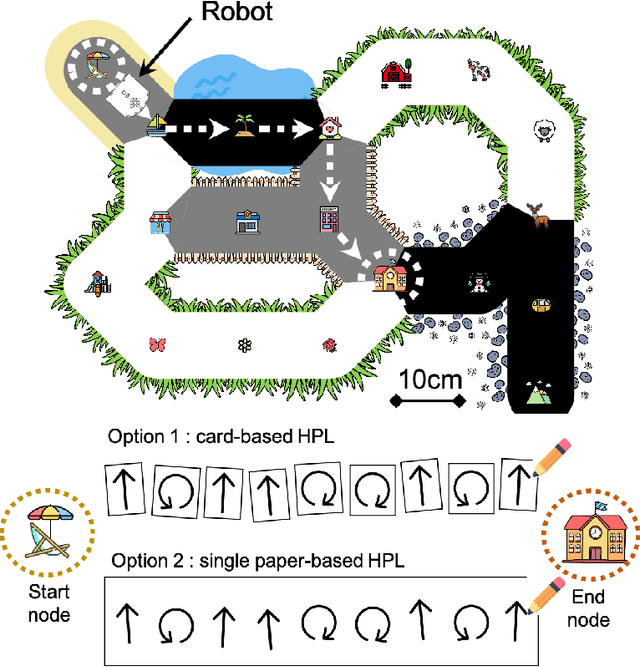

Exploring a Handwriting Programming Language for Educational Robots

May 11, 2021

Abstract:Recently, introducing computer science and educational robots in compulsory education has received increasing attention. However, the use of screens in classrooms is often met with resistance, especially in primary school. To address this issue, this study presents the development of a handwriting-based programming language for educational robots. Aiming to align better with existing classroom practices, it allows students to program a robot by drawing symbols with ordinary pens and paper. Regular smartphones are leveraged to process the hand-drawn instructions using computer vision and machine learning algorithms, and send the commands to the robot for execution. To align with the local computer science curriculum, an appropriate playground and scaffolded learning tasks were designed. The system was evaluated in a preliminary test with eight teachers, developers and educational researchers. While the participants pointed out that some technical aspects could be improved, they also acknowledged the potential of the approach to make computer science education in primary school more accessible.

Studying Alignment in Spontaneous Speech via Automatic Methods: How Do Children Use Task-specific Referents to Succeed in a Collaborative Learning Activity?

Apr 09, 2021

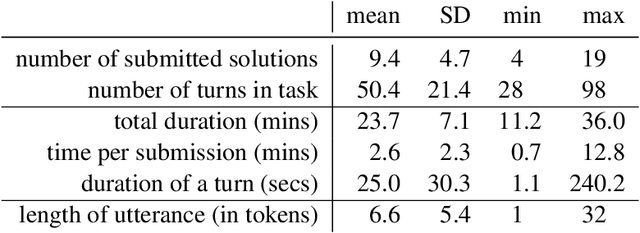

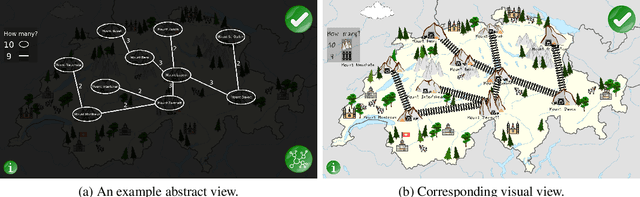

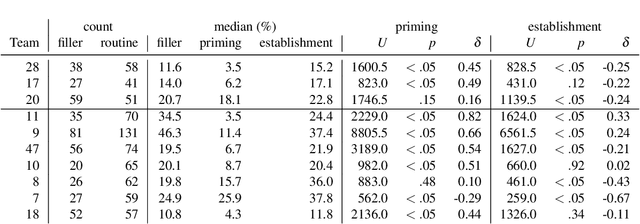

Abstract:A dialogue is successful when there is alignment between the speakers, at different linguistic levels. In this work, we consider the dialogue occurring between interlocutors engaged in a collaborative learning task, and explore how performance and learning (i.e. task success) relate to dialogue alignment processes. The main contribution of this work is to propose new measures to automatically study alignment, to consider completely spontaneous spoken dialogues among children in the context of a collaborative learning activity. Our measures of alignment consider the children's use of expressions that are related to the task at hand, their follow-up actions to these expressions, and how these link to task success. Focusing on expressions related to the task gives us insight into the way children use (potentially unfamiliar) terminology related to the task. A first finding of this work is the discovery that the measures we propose can capture elements of lexical alignment in such a context. Through these measures, we find that teams with bad performance often aligned too late in the dialogue to achieve task success, and that they were late to follow up each other's instructions with actions. We also found that while interlocutors do not exhibit hesitation phenomena (which we measure by looking at fillers) in introducing expressions pertaining to the task, they do exhibit hesitation before accepting the expression, in the role of clarification. Lastly, we show that information management markers (measured by the discourse marker 'oh') occur in the general vicinity of the follow-up actions from (automatically) inferred instructions. However, good performers tend to have this marker closer to these actions. Our measures still reflect some fine-grained aspects of learning in the dialogue, even if we cannot conclude that overall they are linked to the final measure of learning.

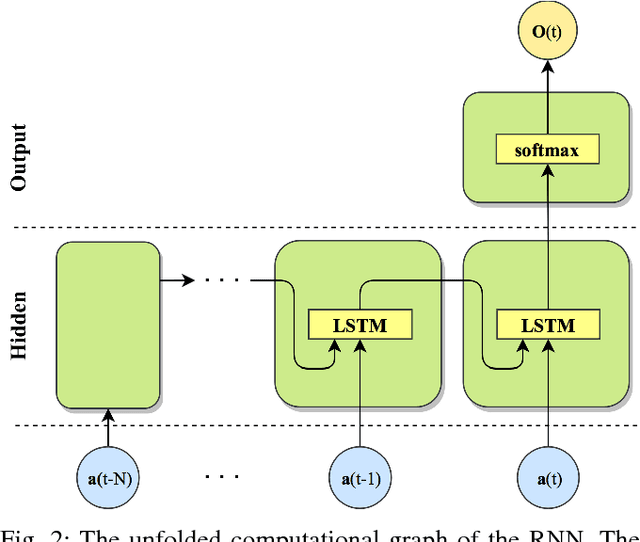

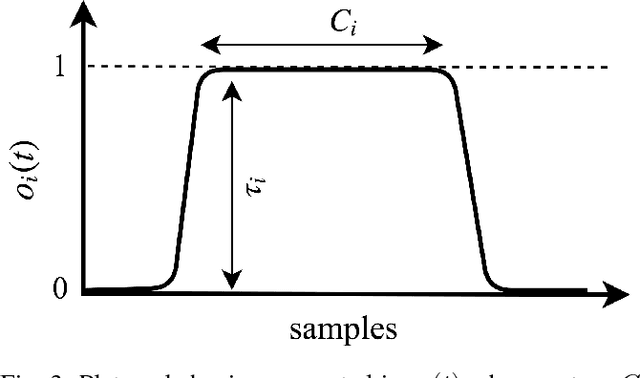

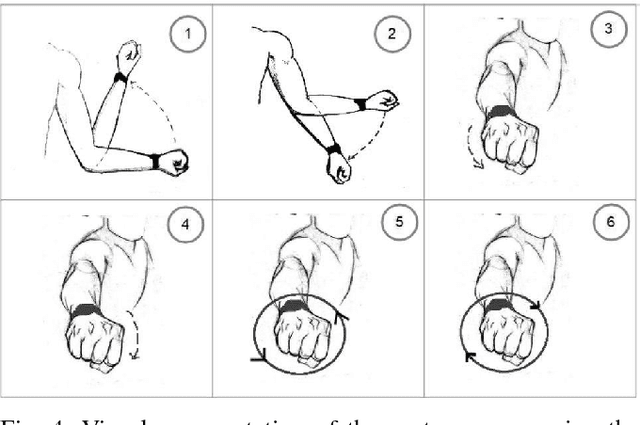

Online Human Gesture Recognition using Recurrent Neural Networks and Wearable Sensors

Oct 26, 2018

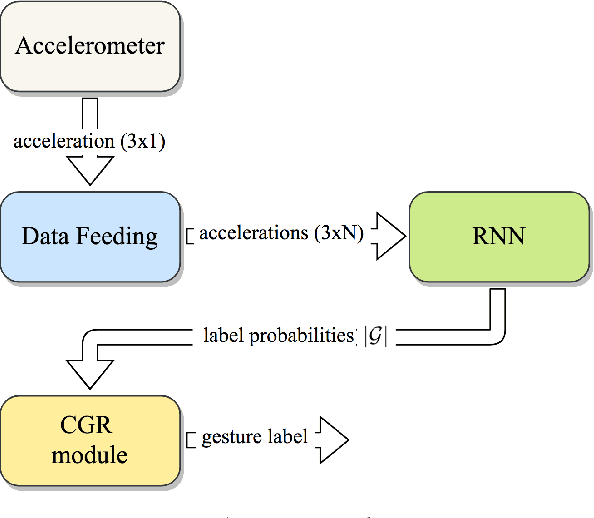

Abstract:Gestures are a natural communication modality for humans. The ability to interpret gestures is fundamental for robots aiming to naturally interact with humans. Wearable sensors are promising for monitoring human activity; in particular, the use of triaxial accelerometers for gesture recognition has been explored. Despite this, the state of the art lacks systems for reliable online gesture recognition using accelerometer data. The article proposes SLOTH, an architecture for online gesture recognition, based on a wearable triaxial accelerometer, a Recurrent Neural Network (RNN) probabilistic classifier and a procedure for continuous gesture detection, relying on modelling gesture probabilities, that guarantees (i) good recognition results in terms of precision and recall, and (ii) immediate system reactivity.
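
To make the idea of continuous detection over a stream of gesture probabilities more concrete, here is a minimal sketch. It is not the SLOTH procedure, whose details the abstract does not give; it assumes a classifier already emits one probability per gesture at each time step, and it reports a gesture once its probability has stayed above a threshold for a few consecutive frames. The names `detect_gestures`, `threshold` and `min_frames` are hypothetical.

```python
from typing import Dict, Iterable, Iterator, Optional, Tuple


def detect_gestures(
    stream: Iterable[Dict[str, float]],
    threshold: float = 0.8,
    min_frames: int = 3,
) -> Iterator[Tuple[int, str]]:
    """Yield (frame index, gesture) once per run of confident frames.

    A run is a sequence of consecutive frames in which the same gesture
    is the most probable one with probability >= threshold.
    """
    run_label: Optional[str] = None
    run_len = 0
    fired = False
    for t, probs in enumerate(stream):
        label, p = max(probs.items(), key=lambda kv: kv[1])
        if p >= threshold and label == run_label:
            run_len += 1  # the current run continues
        elif p >= threshold:
            run_label, run_len, fired = label, 1, False  # a new run starts
        else:
            run_label, run_len, fired = None, 0, False  # no confident gesture
        if run_label is not None and run_len >= min_frames and not fired:
            fired = True  # report each run only once
            yield t, run_label


# Hypothetical classifier outputs for two gestures
stream = [
    {"wave": 0.5, "point": 0.5},
    {"wave": 0.9, "point": 0.1},
    {"wave": 0.95, "point": 0.05},
    {"wave": 0.9, "point": 0.1},
    {"wave": 0.9, "point": 0.1},
    {"wave": 0.2, "point": 0.8},
    {"wave": 0.1, "point": 0.9},
    {"wave": 0.1, "point": 0.9},
]
detections = list(detect_gestures(stream))
print(detections)
```

The `min_frames` parameter exposes the trade-off the abstract alludes to: a smaller value reacts sooner, while a larger one filters out short spurious peaks.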

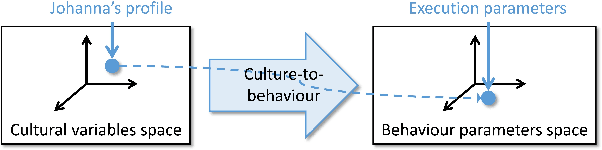

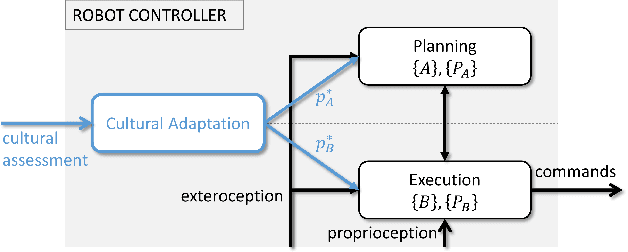

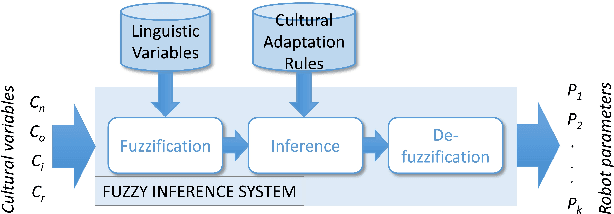

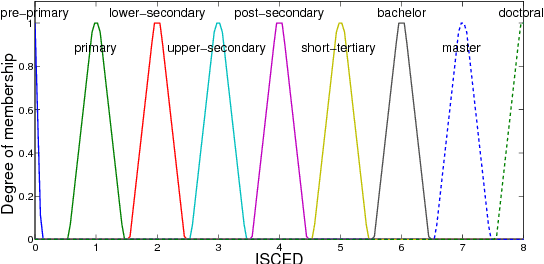

A framework for Culture-aware Robots based on Fuzzy Logic

Mar 22, 2018

Abstract:Cultural adaptation, i.e., the matching of a robot's behaviours to the cultural norms and preferences of its user, is a well-known key requirement for the success of any assistive application. However, culture-dependent robot behaviours are often implicitly set by designers, thus not allowing for an easy and automatic adaptation to different cultures. This paper presents a method for the design of culture-aware robots that can automatically adapt their behaviour to conform to a given culture. We propose a mapping from cultural factors to related parameters of robot behaviours which relies on linguistic variables to encode heterogeneous cultural factors in a uniform formalism, and on fuzzy rules to encode qualitative relations among multiple variables. We illustrate the approach in two practical case studies.

* Presented at: 2017 IEEE International Conference on Fuzzy Systems (FUZZ-IEEE), Naples, Italy, 2017
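
As a rough illustration of how a linguistic variable and fuzzy rules can map a cultural factor to a robot behaviour parameter, consider the sketch below. Everything specific in it is an assumption made for the example: the factor `formality` (scaled to [0, 1]), the output (an interaction distance in metres), the triangular membership functions, the rule consequents, and the weighted-average inference. None of these is taken from the paper.

```python
def tri(x: float, a: float, b: float, c: float) -> float:
    """Triangular membership function with feet at a and c and peak at b."""
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


# Linguistic variable "formality" with three terms (hypothetical shapes)
TERMS = {
    "low": (-0.5, 0.0, 0.5),
    "medium": (0.0, 0.5, 1.0),
    "high": (0.5, 1.0, 1.5),
}

# Rules of the form: IF formality IS <term> THEN distance = <metres> (hypothetical values)
RULES = {"low": 0.6, "medium": 1.0, "high": 1.4}


def interaction_distance(formality: float) -> float:
    """Combine the rule outputs, weighting each by how strongly its term applies."""
    weights = {term: tri(formality, *abc) for term, abc in TERMS.items()}
    total = sum(weights.values())
    if total == 0.0:
        raise ValueError("formality must lie within [0, 1]")
    return sum(weights[term] * RULES[term] for term in RULES) / total


print(interaction_distance(0.75))
```

With a formality of 0.75, the terms "medium" and "high" each apply with degree 0.5, so the result falls halfway between their consequents.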
