"Time": models, code, and papers

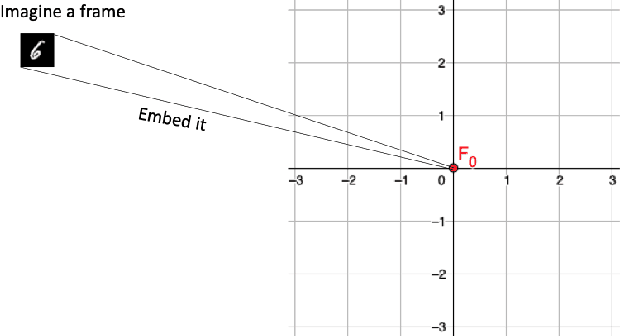

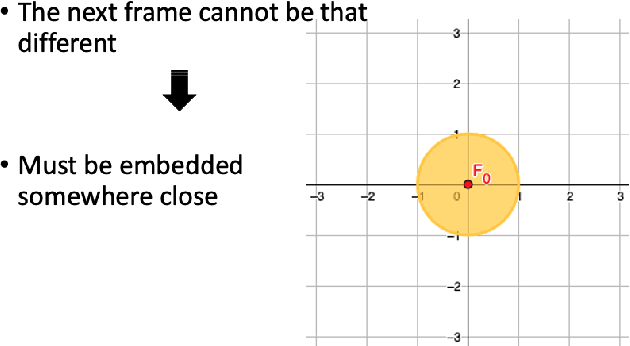

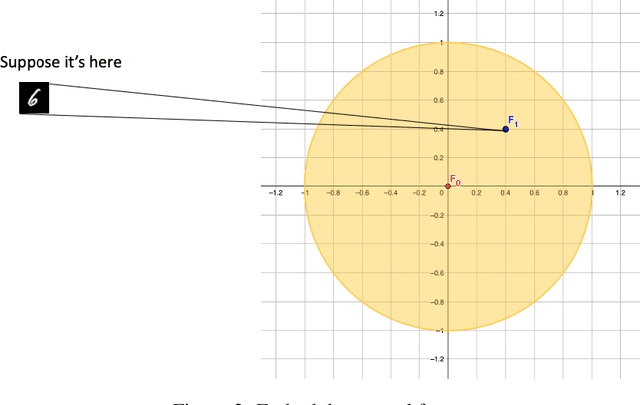

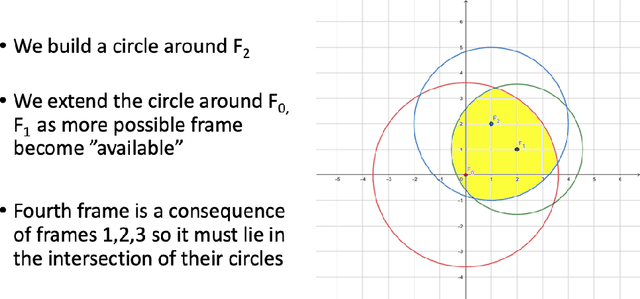

Causal Future Prediction in a Minkowski Space-Time

Aug 30, 2020

Estimating future events is a difficult task. Unlike humans, machine learning approaches are not regularized by a natural understanding of physics. In the wild, a plausible succession of events is governed by the rules of causality, which cannot easily be derived from a finite training set. In this paper we propose a novel theoretical framework to perform causal future prediction by embedding spatiotemporal information on a Minkowski space-time. We utilize the concept of a light cone from special relativity to restrict and traverse the latent space of an arbitrary model. We demonstrate successful applications in causal image synthesis and future video frame prediction on a dataset of images. Our framework is architecture- and task-independent and comes with strong theoretical guarantees of causal capabilities.
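
The light-cone restriction mentioned in the abstract can be illustrated with a minimal sketch. It only shows the textbook special-relativity test for whether one event lies in the future light cone of another; it is not the paper's latent-space construction. The function names, the `(t, (x, y, ...))` event layout, and the choice of natural units (`c = 1`) are assumptions made for illustration.

```python
# Illustrative sketch only: textbook light-cone test, not the paper's model.
# Assumption: an event is a tuple (t, spatial_coords) and c = 1 (natural units).

def interval_squared(event_a, event_b, c=1.0):
    """Minkowski interval s^2 = -(c*dt)^2 + |dx|^2, signature (-, +, ..., +)."""
    (t_a, x_a), (t_b, x_b) = event_a, event_b
    dt = t_b - t_a
    dx2 = sum((q - p) ** 2 for p, q in zip(x_a, x_b))
    return -(c * dt) ** 2 + dx2


def in_future_light_cone(event_a, event_b, c=1.0):
    """True if event_b is later than event_a and time-like or light-like separated from it."""
    (t_a, _), (t_b, _) = event_a, event_b
    return t_b > t_a and interval_squared(event_a, event_b, c) <= 0.0


origin = (0.0, (0.0, 0.0))
reachable = (2.0, (1.0, 1.0))    # s^2 = -4 + 2 = -2, inside the cone
unreachable = (1.0, (3.0, 0.0))  # s^2 = -1 + 9 = 8, outside the cone
```

Only events that pass this test can be causally influenced by `origin`, which is the kind of constraint the abstract describes imposing on latent-space traversal.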

An Object Aware Hybrid U-Net for Breast Tumour Annotation

Feb 22, 2022

In clinical settings, during digital examination of histopathological slides, the pathologist annotates the slides by marking a rough boundary around the suspected tumour region. The annotation is generally represented as a polygonal boundary that covers the extent of the tumour in the slide. These polygonal markings are difficult to imitate through CAD techniques, since the tumour regions are heterogeneous and segmenting them would therefore require exhaustive pixel-wise ground-truth annotation. For CAD analysis, the ground truths are therefore generally annotated by pathologists explicitly for research purposes. However, this kind of annotation, which is generally required for semantic or instance segmentation, is time-consuming and tedious. In this work, we therefore imitate pathologist-like annotation by segmenting tumour extents with polygonal boundaries. For polygon-like annotation or segmentation, we use Active Contours, whose vertices (snake points) move towards the boundary of the object of interest to find the region of minimum energy. To penalize the Active Contour, we use a modified U-Net architecture that learns the penalization values. The proposed hybrid model fuses a modern deep learning segmentation algorithm with the traditional Active Contours segmentation technique. The model is evaluated against both state-of-the-art semantic segmentation models and hybrid models from contemporary work. The results show that pathologist-like annotation can be achieved by developing hybrid models that integrate domain knowledge through classical segmentation methods such as Active Contours and global knowledge through semantic segmentation deep learning models.

Second-Order Mirror Descent: Convergence in Games Beyond Averaging and Discounting

Nov 18, 2021

In this paper, we propose a second-order extension of the continuous-time game-theoretic mirror descent (MD) dynamics, referred to as MD2, which converges to mere (but not necessarily strict) variationally stable states (VSS) without using common auxiliary techniques such as averaging or discounting. We show that MD2 enjoys no-regret as well as exponential rate of convergence towards a strong VSS upon a slight modification. Furthermore, MD2 can be used to derive many novel primal-space dynamics. Lastly, using stochastic approximation techniques, we provide a convergence guarantee of discrete-time MD2 with noisy observations towards interior mere VSS. Selected simulations are provided to illustrate our results.

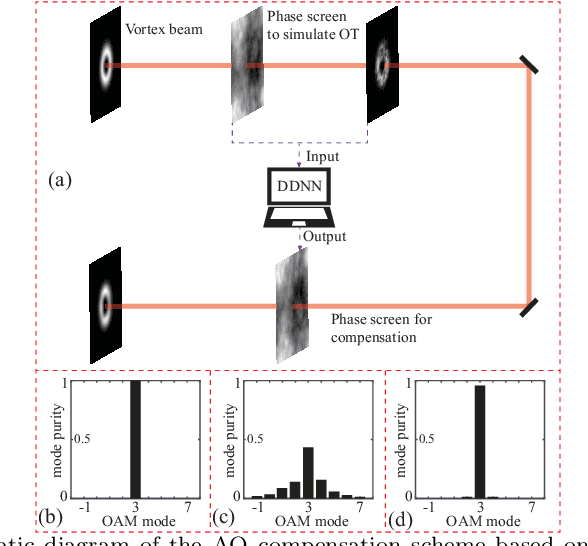

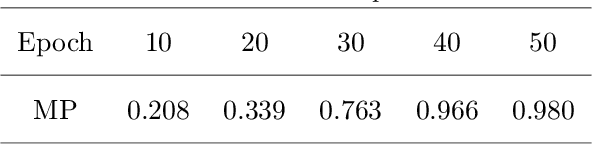

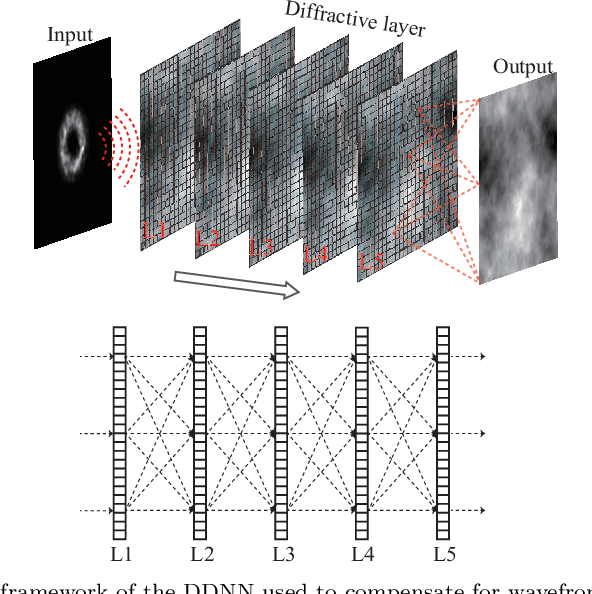

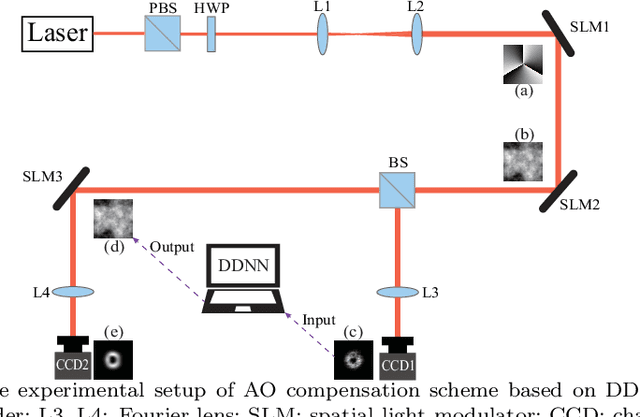

Diffractive deep neural network based adaptive optics scheme for vortex beam in oceanic turbulence

Feb 06, 2022

A vortex beam carrying orbital angular momentum (OAM) is disturbed by oceanic turbulence (OT) when propagating in an underwater wireless optical communication (UWOC) system. Adaptive optics (AO) is used to compensate for distortion and improve the performance of the UWOC system. In this work, we propose a diffractive deep neural network (DDNN) based AO scheme to compensate for the distortion caused by OT, where the DDNN is trained to obtain the mapping between the intensity distribution of the distorted vortex beam and its corresponding phase screen representing OT. The intensity pattern of the distorted vortex beam obtained in the experiment is input to the DDNN model, and the predicted phase screen can be used to compensate for the distortion in real time. The experimental results show that the proposed scheme can quickly extract the characteristics of the intensity pattern of the distorted vortex beam and accurately output the predicted phase screen. The mode purity of the compensated vortex beam is significantly improved, even under strong OT. Our scheme may provide a new avenue for AO techniques and is expected to improve the communication quality of UWOC systems.

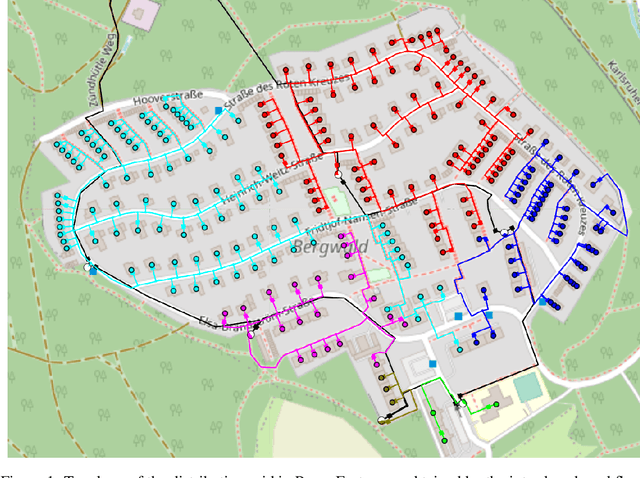

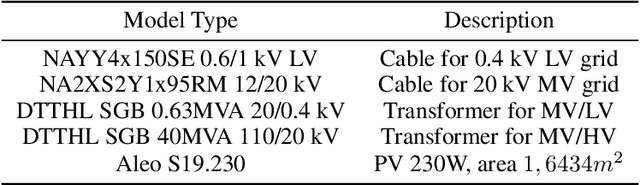

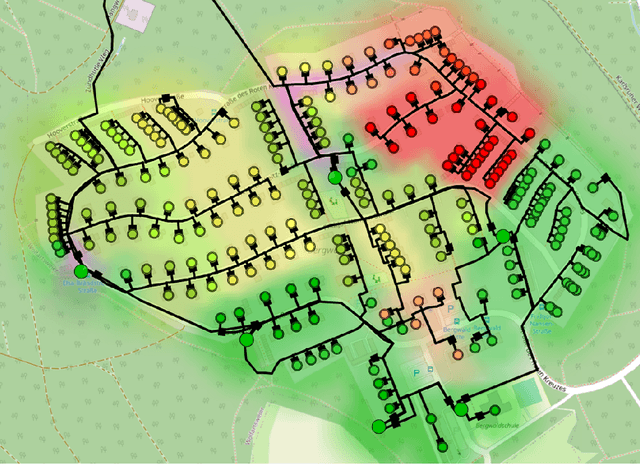

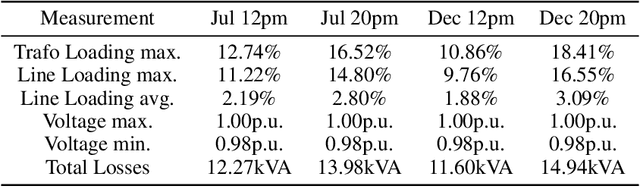

Automated generation of large-scale distribution grid models based on open data and open source software using an optimization approach

Feb 28, 2022

The increasing share of renewable energy sources at the distribution grid level, together with the emerging active role of prosumers, leads to both higher distribution grid utilization and greater unpredictability of energy generation and consumption. This poses major problems for grid operators with respect to, e.g., voltage stability and line (over)loading. Detailed and comprehensive simulation models are therefore essential for planning future distribution grid expansion in view of the expected strong electrification of society. In this context, the contribution of the present paper is a new, more refined method for the automated creation of large-scale, detailed distribution grid models based solely on publicly available GIS and statistical data. Using the street layouts in OpenStreetMap as potential cable routes, a graph representation is created and complemented by residential units that are extracted from the same data source. This graph structure is adjusted to match the electrical low-voltage grid topology by solving a variation of the minimum cost flow linear optimization problem with provided data on secondary substations. In a final step, the generated grid representation is transferred to a DIgSILENT PowerFactory model with photovoltaic systems. The presented workflow uses open source software and is fully automated and scalable, which allows the generation of ready-to-use distribution grid simulation models for given 20 kV substation locations; additional data on residential unit properties can be supplied for improved results. The performance of the developed method with respect to grid utilization is presented for a selected suburban residential area, with power flow simulations for eight scenarios including the current residential PV installation and a future scenario with full PV expansion. Furthermore, the suitability of the generated models for quasi-dynamic simulations is shown.

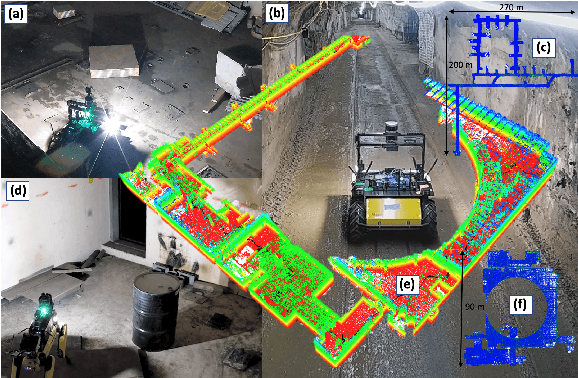

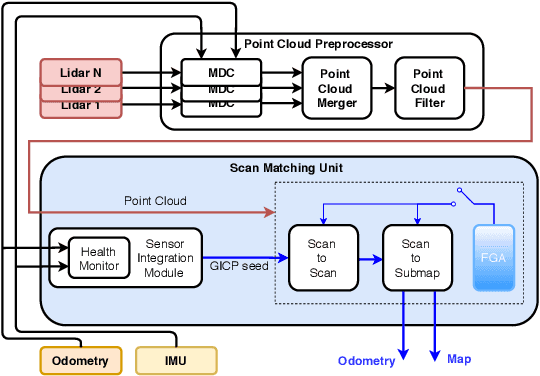

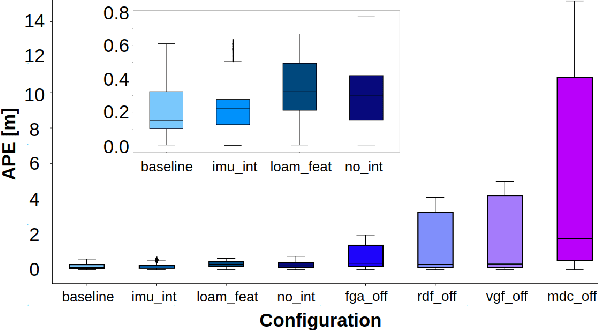

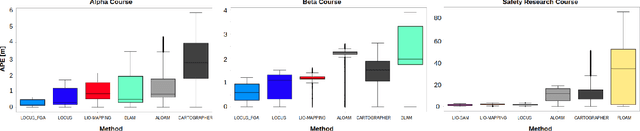

LOCUS: A Multi-Sensor Lidar-Centric Solution for High-Precision Odometry and 3D Mapping in Real-Time

Dec 28, 2020

A reliable odometry source is a prerequisite for enabling complex autonomy behaviour in next-generation robots operating in extreme environments. In this work, we present a high-precision lidar odometry system that achieves robust, real-time operation under challenging perceptual conditions. LOCUS (Lidar Odometry for Consistent operation in Uncertain Settings) provides an accurate multi-stage scan matching unit equipped with a health-aware sensor integration module for seamless fusion of additional sensing modalities. We evaluate the performance of the proposed system against state-of-the-art techniques in perceptually challenging environments, and demonstrate top-class localization accuracy along with substantial improvements in robustness to sensor failures. We then demonstrate the real-time performance of LOCUS on various types of robotic mobility platforms involved in the autonomous exploration of the Satsop power plant in Elma, WA, where the proposed system was a key element of the CoSTAR team's solution that won first place in the Urban Circuit of the DARPA Subterranean Challenge.

Analysis of Evolutionary Algorithms on Fitness Function with Time-linkage Property

Apr 29, 2020

In real-world applications, many optimization problems have the time-linkage property, that is, the objective function value depends on the current solution as well as on historical solutions. Although rigorous theoretical analysis of evolutionary algorithms has developed rapidly in the last two decades, it remains an open problem to theoretically understand the behavior of evolutionary algorithms on time-linkage problems. This paper takes the first step towards rigorously analyzing evolutionary algorithms on time-linkage functions. Based on the basic OneMax function, we propose a time-linkage function where the first bit value of the last time step is integrated but has a different preference from the current first bit. We prove that with probability $1-o(1)$, randomized local search and $(1+1)$ EA cannot find the optimum, and with probability $1-o(1)$, $(\mu+1)$ EA is able to reach the optimum.
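
For readers unfamiliar with the baseline setting, the following is a minimal sketch of the standard $(1+1)$ EA on plain OneMax (the number of one-bits in a bit string). It deliberately does not implement the time-linkage function proposed in the paper, whose exact definition is not given in the abstract. The function names, the seed handling, and the iteration budget are assumptions made for illustration.

```python
import random

# Illustrative sketch only: standard (1+1) EA on plain OneMax.
# The paper's time-linkage variant is NOT reproduced here.

def onemax(bits):
    """Fitness = number of one-bits."""
    return sum(bits)


def one_plus_one_ea(n, max_iters, seed=0):
    """Run the (1+1) EA with bit-flip probability 1/n; return (best, fitness history)."""
    rng = random.Random(seed)
    parent = [rng.randint(0, 1) for _ in range(n)]
    history = [onemax(parent)]
    for _ in range(max_iters):
        if history[-1] == n:  # optimum (all ones) reached
            break
        # Flip each bit independently with probability 1/n.
        child = [1 - b if rng.random() < 1.0 / n else b for b in parent]
        # Elitist selection: accept the child if it is at least as good.
        if onemax(child) >= onemax(parent):
            parent = child
        history.append(onemax(parent))
    return parent, history


best, fitness_history = one_plus_one_ea(n=20, max_iters=20000, seed=1)
```

On plain OneMax the fitness history is non-decreasing because selection is elitist. The abstract's negative result concerns the proposed time-linkage function, where the fitness also depends on the previous time step.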

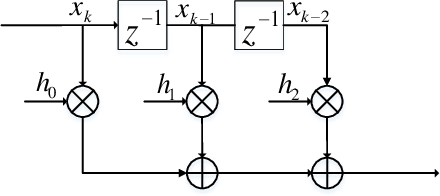

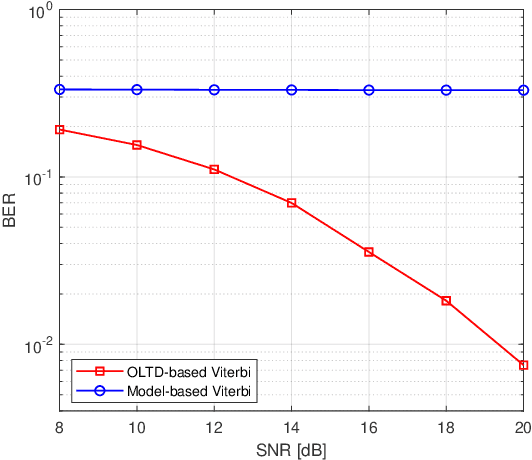

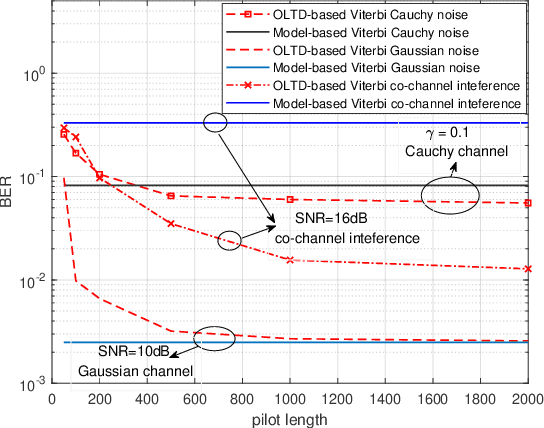

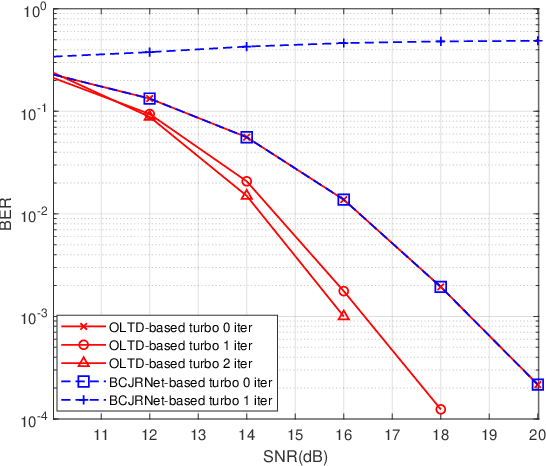

Online Learning of Trellis Diagram Using Neural Network for Robust Detection and Decoding

Feb 22, 2022

This paper studies machine learning-assisted maximum likelihood (ML) and maximum a posteriori (MAP) receivers for a communication system with memory, which can be modelled by a trellis diagram. The prerequisite of the ML/MAP receiver is to obtain the likelihood of the received samples under different state transitions of the trellis diagram, which relies on the channel state information (CSI) and the distribution of the channel noise. We propose to learn the trellis diagram in real time using an artificial neural network (ANN) trained by a pilot sequence. This approach, termed online learning of trellis diagram (OLTD), requires neither the CSI nor the statistics of the noise, and can be incorporated into the classic Viterbi and BCJR algorithms. It is shown to significantly outperform the model-based methods in non-Gaussian channels. It requires much less training overhead than the state-of-the-art methods, and hence is more feasible for real implementations. As an illustrative example, the OLTD-based BCJR is applied to a Bluetooth low energy (BLE) receiver trained only by a 256-sample pilot sequence. Moreover, the OLTD-based BCJR can accommodate turbo equalization, while the state-of-the-art BCJRNet/ViterbiNet cannot. As an interesting by-product, we propose an enhancement to the BLE standard by introducing a bit interleaver to its physical layer; the resulting improvement in receiver sensitivity can make it a better fit for some Internet of Things (IoT) communications.
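
To make the role of the per-transition likelihoods concrete, here is a minimal sketch of the classic Viterbi recursion over a trellis. It takes the log-likelihood of each received sample under each state transition as a plain input table; in the abstract's setting those values would come from the trained ANN, which is not modelled here. The function name, the `log_branch[t][prev][next]` layout, and the uniform prior over the initial state are assumptions made for illustration.

```python
# Illustrative sketch only: classic Viterbi over a trellis.
# Assumption: log_branch[t][p][s] is the log-likelihood of received sample t
# under the transition p -> s (hypothetical input layout).

def viterbi(log_branch, n_states):
    """Return the most likely state sequence (length T + 1) for T received samples."""
    score = [0.0] * n_states  # uniform prior over the initial state (assumption)
    backptr = []
    for branch_t in log_branch:
        new_score, ptr = [], []
        for s in range(n_states):
            # Best predecessor of state s at this trellis stage.
            best_prev = max(range(n_states), key=lambda p: score[p] + branch_t[p][s])
            new_score.append(score[best_prev] + branch_t[best_prev][s])
            ptr.append(best_prev)
        score = new_score
        backptr.append(ptr)
    # Trace back from the best final state.
    state = max(range(n_states), key=lambda s: score[s])
    path = [state]
    for ptr in reversed(backptr):
        state = ptr[state]
        path.append(state)
    path.reverse()
    return path


# Toy two-state trellis: each sample strongly favours entering one target state.
targets = [1, 0, 1]
toy_branch = [[[0.0 if s == tgt else -5.0 for s in range(2)] for _ in range(2)]
              for tgt in targets]
decoded = viterbi(toy_branch, n_states=2)
```

The first entry of `decoded` is the inferred initial state; the remaining entries are the states entered after each received sample.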

Clipped DeepControl: deep neural network two-dimensional pulse design with an amplitude constraint layer

Jan 21, 2022

Advanced radio-frequency pulse design for magnetic resonance imaging has recently been demonstrated with deep learning of (convolutional) neural networks and with reinforcement learning. For two-dimensionally selective radio-frequency pulses, the (convolutional) neural network pulse prediction time (a few milliseconds) was more than three orders of magnitude faster than the conventional optimal control computation. Owing to the supervised training, the network pulses were capable of compensating scan-subject-dependent inhomogeneities of the $B_0$ and $B_1^+$ fields. Unfortunately, the network produced a non-negligible percentage of pulse amplitude overshoots in the test subset, even though the optimal control pulses used in training were fully constrained. Here, we have extended the convolutional neural network with a custom-made clipping layer that completely eliminates the risk of pulse amplitude overshoots, while preserving the ability to compensate for the inhomogeneous field conditions.
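
The abstract does not specify how the clipping layer operates. The sketch below shows one plausible form of an amplitude constraint, assumed purely for illustration: each complex pulse sample whose magnitude exceeds a limit is rescaled to that limit while its phase is kept. The function name and the representation of the pulse as a list of Python complex numbers are hypothetical.

```python
# Illustrative sketch only: one plausible amplitude constraint, NOT the
# paper's layer. Assumption: the pulse is a list of complex samples.

def clip_amplitude(pulse, max_amplitude):
    """Rescale samples with |sample| > max_amplitude onto the limit, preserving phase."""
    clipped = []
    for sample in pulse:
        magnitude = abs(sample)
        if magnitude > max_amplitude:
            sample = sample * (max_amplitude / magnitude)
        clipped.append(sample)
    return clipped


raw_pulse = [3 + 4j, 0.5 + 0j, -2j]  # magnitudes 5, 0.5, 2
safe_pulse = clip_amplitude(raw_pulse, max_amplitude=1.0)
```

Samples already within the limit pass through unchanged, so a constraint of this kind only alters the pulse where an overshoot would otherwise occur.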

Trying to Outrun Causality with Machine Learning: Limitations of Model Explainability Techniques for Identifying Predictive Variables

Feb 25, 2022

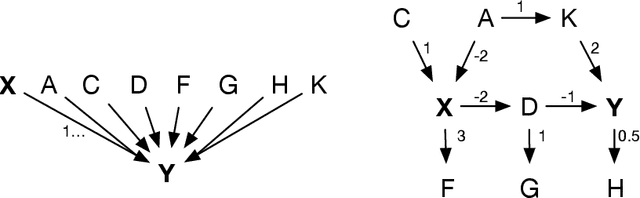

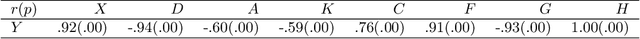

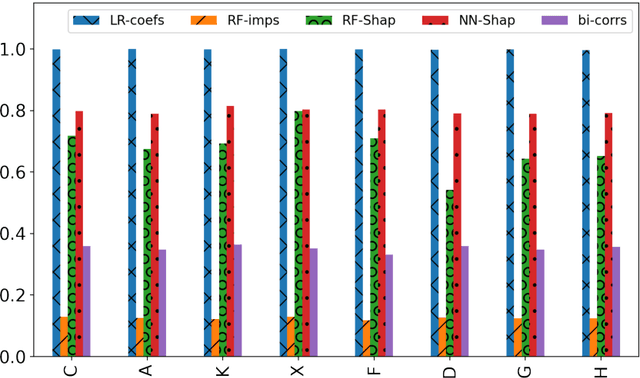

Machine learning explainability techniques have been proposed as a means of 'explaining' or interrogating a model in order to understand why a particular decision or prediction has been made. Such an ability is especially important at a time when machine learning is being used to automate decision processes which concern sensitive factors and legal outcomes. Indeed, it is even a requirement according to EU law. Furthermore, researchers concerned about imposing an overly restrictive functional form (e.g., as would be the case in a linear regression) may be motivated to use machine learning algorithms in conjunction with explainability techniques, as part of exploratory research, with the goal of identifying important variables which are associated with an outcome of interest. For example, epidemiologists might be interested in identifying 'risk factors' (i.e., factors which affect recovery from disease) by using random forests and assessing variable relevance using importance measures. However, as we demonstrate, machine learning algorithms are not as flexible as they might seem, and are instead incredibly sensitive to the underlying causal structure in the data. As a consequence, predictors which are, in fact, critical to a causal system and highly correlated with the outcome may nonetheless be deemed by explainability techniques to be unrelated to, unimportant for, or unpredictive of the outcome. Rather than being a limitation of explainability techniques per se, we show that this is a consequence of the mathematical implications of regression and the interaction of these implications with the conditional independencies of the underlying causal structure. We provide some alternative recommendations for researchers wanting to explore the data for important variables.
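
The phenomenon described in the abstract can be reproduced in a small simulation. The sketch below assumes a hypothetical fully mediated chain `x -> m -> y` with linear effects and Gaussian noise; the coefficients, sample size, and function names are illustrative choices and are not taken from the paper. It uses ordinary least squares rather than a random forest, to keep the example dependency-free.

```python
import random

# Illustrative sketch only: hypothetical causal chain x -> m -> y.
# Coefficients (2 and 3), noise, and sample size are assumptions.

def simulate_mediation(n, seed=0):
    rng = random.Random(seed)
    x = [rng.gauss(0, 1) for _ in range(n)]
    m = [2 * xi + rng.gauss(0, 1) for xi in x]  # m depends on x
    y = [3 * mi + rng.gauss(0, 1) for mi in m]  # y depends on x only through m
    return x, m, y


def _centered(values):
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    ac, bc = _centered(a), _centered(b)
    cov = sum(p * q for p, q in zip(ac, bc))
    return cov / (sum(p * p for p in ac) * sum(q * q for q in bc)) ** 0.5


def ols_two_predictors(x, m, y):
    """Least-squares slopes (b_x, b_m) for y ~ intercept + x + m via the 2x2 normal equations."""
    xc, mc, yc = _centered(x), _centered(m), _centered(y)
    sxx = sum(a * a for a in xc)
    smm = sum(a * a for a in mc)
    sxm = sum(a * b for a, b in zip(xc, mc))
    sxy = sum(a * b for a, b in zip(xc, yc))
    smy = sum(a * b for a, b in zip(mc, yc))
    det = sxx * smm - sxm * sxm
    return (smm * sxy - sxm * smy) / det, (sxx * smy - sxm * sxy) / det


x, m, y = simulate_mediation(n=5000, seed=0)
corr_xy = pearson(x, y)
b_x, b_m = ols_two_predictors(x, m, y)
```

In this setup `x` is strongly correlated with `y` and is the root cause in the chain, yet its fitted coefficient is close to zero once `m` is included, because `y` is conditionally independent of `x` given `m`. An importance measure derived from such a model would therefore rank `x` as unimportant.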
