"Time": models, code, and papers

A Hybrid Deep Learning Anomaly Detection Framework for Intrusion Detection

Dec 02, 2022

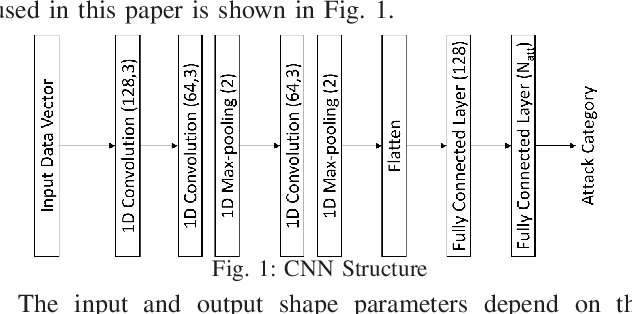

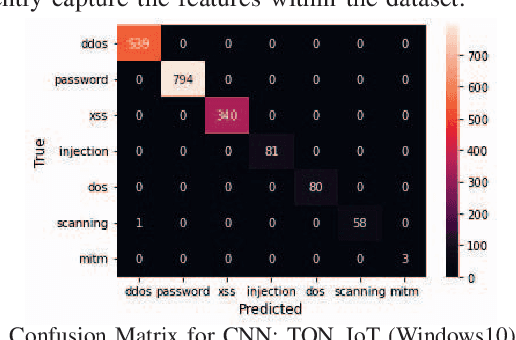

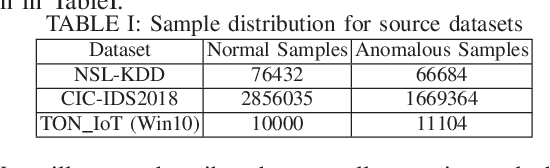

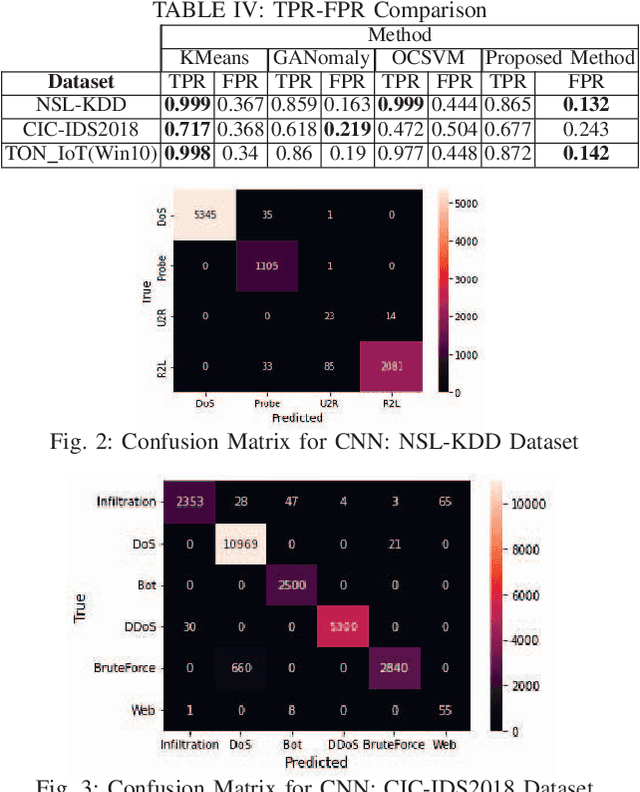

Cyber intrusion attacks that compromise the users' critical and sensitive data are escalating in volume and intensity, especially with the growing connections between our daily life and the Internet. The large volume and high complexity of such intrusion attacks have impeded the effectiveness of most traditional defence techniques. While at the same time, the remarkable performance of the machine learning methods, especially deep learning, in computer vision, had garnered research interests from the cyber security community to further enhance and automate intrusion detections. However, the expensive data labeling and limitation of anomalous data make it challenging to train an intrusion detector in a fully supervised manner. Therefore, intrusion detection based on unsupervised anomaly detection is an important feature too. In this paper, we propose a three-stage deep learning anomaly detection based network intrusion attack detection framework. The framework comprises an integration of unsupervised (K-means clustering), semi-supervised (GANomaly) and supervised learning (CNN) algorithms. We then evaluated and showed the performance of our implemented framework on three benchmark datasets: NSL-KDD, CIC-IDS2018, and TON_IoT.

* Keywords: Cybersecurity, Anomaly Detection, Intrusion Detection, Deep Learning, Unsupervised Learning, Neural Networks; https://ieeexplore.ieee.org/document/9799486

On the Limit of Explaining Black-box Temporal Graph Neural Networks

Dec 02, 2022

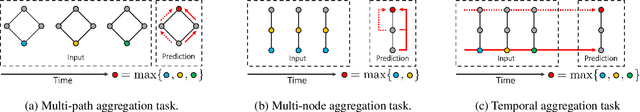

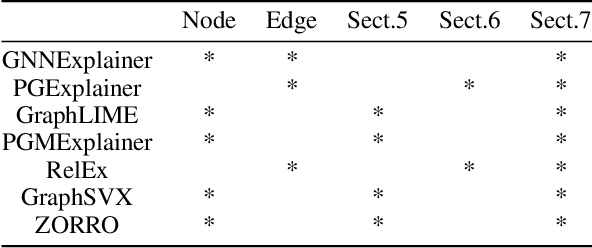

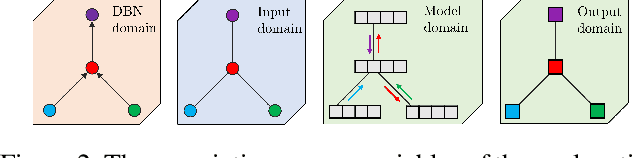

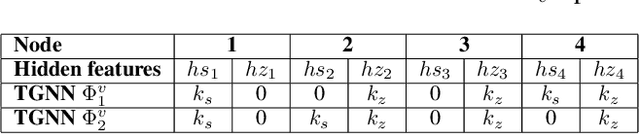

Temporal Graph Neural Network (TGNN) has been receiving a lot of attention recently due to its capability in modeling time-evolving graph-related tasks. Similar to Graph Neural Networks, it is also non-trivial to interpret predictions made by a TGNN due to its black-box nature. A major approach tackling this problems in GNNs is by analyzing the model' responses on some perturbations of the model's inputs, called perturbation-based explanation methods. While these methods are convenient and flexible since they do not need internal access to the model, does this lack of internal access prevent them from revealing some important information of the predictions? Motivated by that question, this work studies the limit of some classes of perturbation-based explanation methods. Particularly, by constructing some specific instances of TGNNs, we show (i) node-perturbation cannot reliably identify the paths carrying out the prediction, (ii) edge-perturbation is not reliable in determining all nodes contributing to the prediction and (iii) perturbing both nodes and edges does not reliably help us identify the graph's components carrying out the temporal aggregation in TGNNs.

Mode decomposition-based time-varying phase synchronization for fMRI Data

Mar 26, 2022

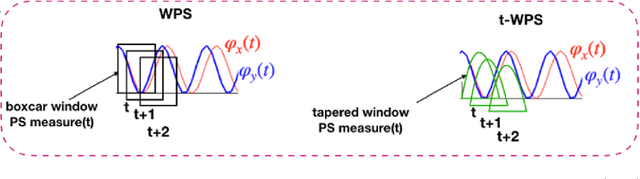

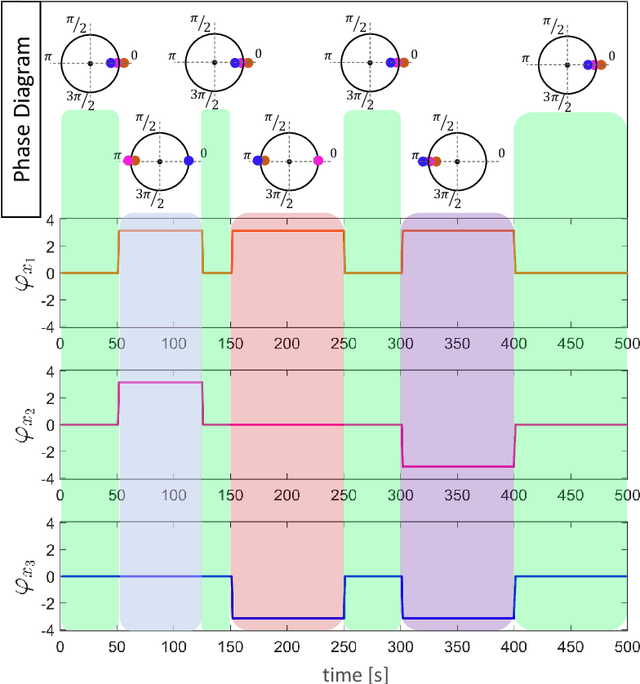

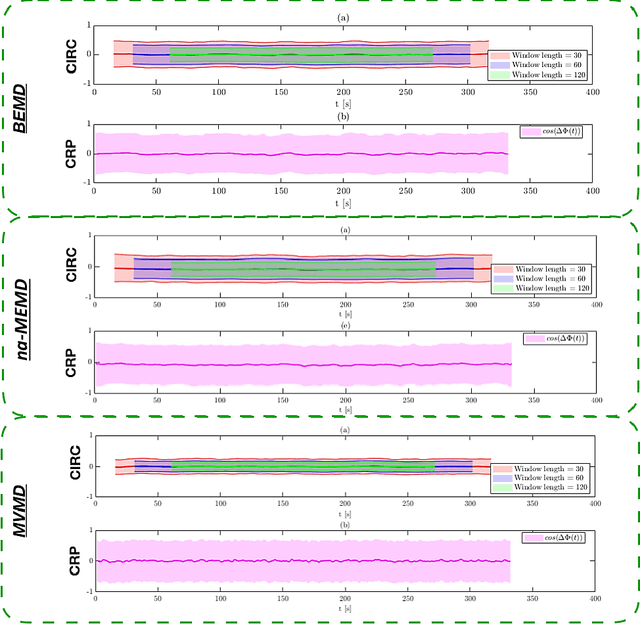

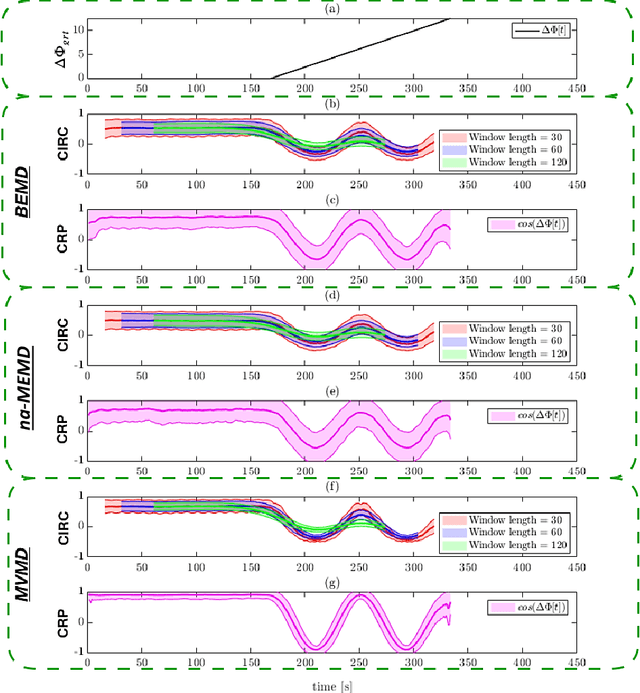

Recently there has been significant interest in measuring time-varying functional connectivity (TVC) between different brain regions using resting-state functional magnetic resonance imaging (rs-fMRI) data. One way to assess the relationship between signals from different brain regions is to measure their phase synchronization (PS) across time. However, this requires the \textit{a priori} choice of type and cut-off frequencies for the bandpass filter needed to perform the analysis. Here we explore alternative approaches based on the use of various mode decomposition (MD) techniques that circumvent this issue. These techniques allow for the data driven decomposition of signals jointly into narrow-band components at different frequencies, thus fulfilling the requirements needed to measure PS. We explore several variants of MD, including empirical mode decomposition (EMD), bivariate EMD (BEMD), noise-assisted multivariate EMD (na-MEMD), and introduce the use of multivariate variational mode decomposition (MVMD) in the context of estimating time-varying PS. We contrast the approaches using a series of simulations and application to rs-fMRI data. Our results show that MVMD outperforms other evaluated MD approaches, and further suggests that this approach can be used as a tool to reliably investigate time-varying PS in rs-fMRI data.

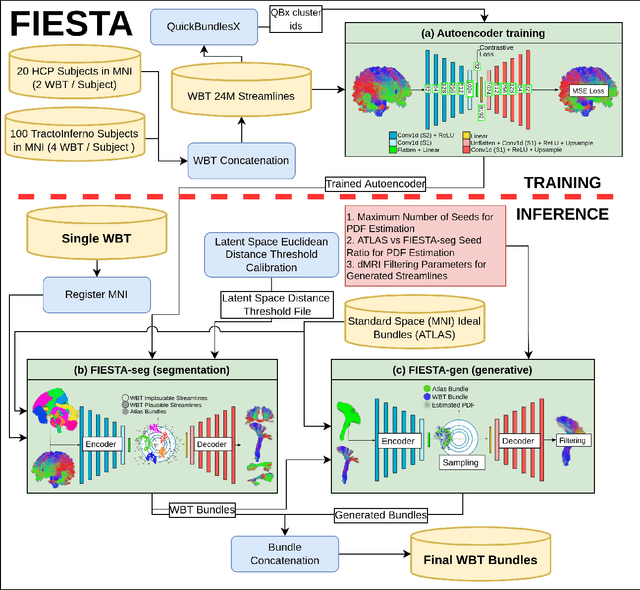

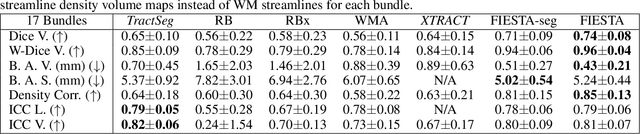

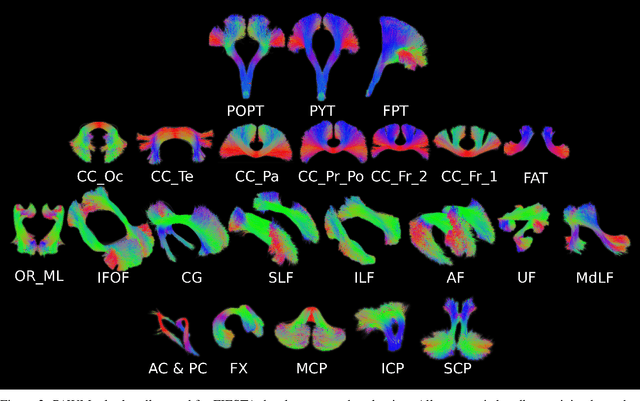

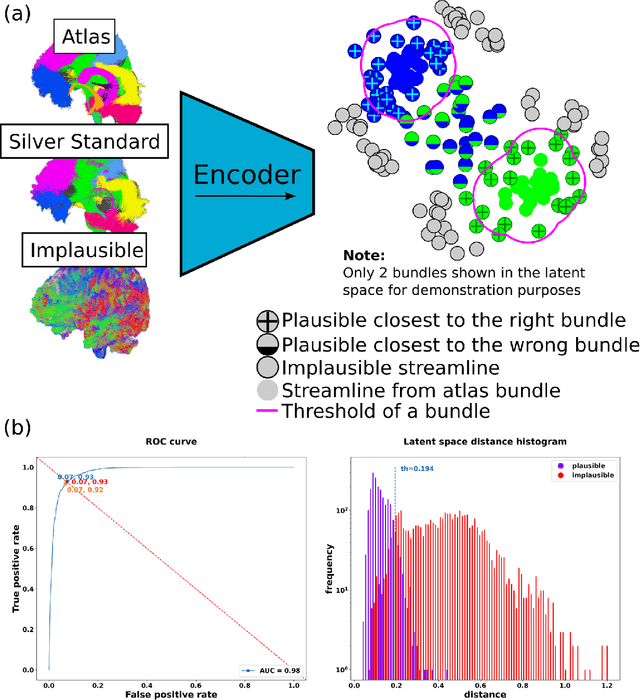

FIESTA: Autoencoders for accurate fiber segmentation in tractography

Dec 12, 2022

White matter bundle segmentation is a cornerstone of modern tractography to study the brain's structural connectivity in domains such as neurological disorders, neurosurgery, and aging. In this study, we present FIESTA (FIbEr Segmentation in Tractography using Autoencoders), a reliable and robust, fully automated, and easily semi-automatically calibrated pipeline based on deep autoencoders that can dissect and fully populate WM bundles. Our framework allows the transition from one anatomical bundle definition to another with marginal calibrating time. This pipeline is built upon FINTA, CINTA, and GESTA methods that demonstrated how autoencoders can be used successfully for streamline filtering, bundling, and streamline generation in tractography. Our proposed method improves bundling coverage by recovering hard-to-track bundles with generative sampling through the latent space seeding of the subject bundle and the atlas bundle. A latent space of streamlines is learned using autoencoder-based modeling combined with contrastive learning. Using an atlas of bundles in standard space (MNI), our proposed method segments new tractograms using the autoencoder latent distance between each tractogram streamline and its closest neighbor bundle in the atlas of bundles. Intra-subject bundle reliability is improved by recovering hard-to-track streamlines, using the autoencoder to generate new streamlines that increase each bundle's spatial coverage while remaining anatomically meaningful. Results show that our method is more reliable than state-of-the-art automated virtual dissection methods such as RecoBundles, RecoBundlesX, TractSeg, White Matter Analysis and XTRACT. Overall, these results show that our framework improves the practicality and usability of current state-of-the-art bundling framework

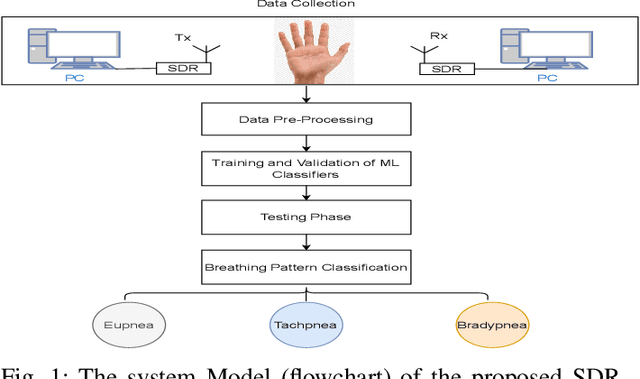

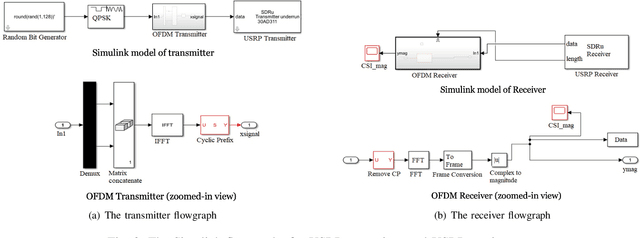

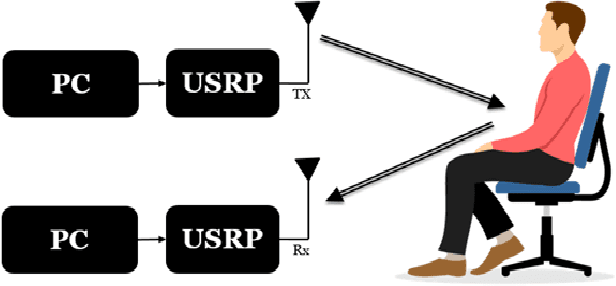

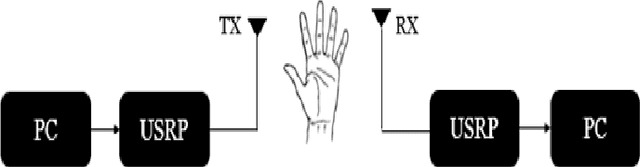

Hand-breathe: Non-Contact Monitoring of Breathing Abnormalities from Hand Palm

Dec 12, 2022

In post-covid19 world, radio frequency (RF)-based non-contact methods, e.g., software-defined radios (SDR)-based methods have emerged as promising candidates for intelligent remote sensing of human vitals, and could help in containment of contagious viruses like covid19. To this end, this work utilizes the universal software radio peripherals (USRP)-based SDRs along with classical machine learning (ML) methods to design a non-contact method to monitor different breathing abnormalities. Under our proposed method, a subject rests his/her hand on a table in between the transmit and receive antennas, while an orthogonal frequency division multiplexing (OFDM) signal passes through the hand. Subsequently, the receiver extracts the channel frequency response (basically, fine-grained wireless channel state information), and feeds it to various ML algorithms which eventually classify between different breathing abnormalities. Among all classifiers, linear SVM classifier resulted in a maximum accuracy of 88.1\%. To train the ML classifiers in a supervised manner, data was collected by doing real-time experiments on 4 subjects in a lab environment. For label generation purpose, the breathing of the subjects was classified into three classes: normal, fast, and slow breathing. Furthermore, in addition to our proposed method (where only a hand is exposed to RF signals), we also implemented and tested the state-of-the-art method (where full chest is exposed to RF radiation). The performance comparison of the two methods reveals a trade-off, i.e., the accuracy of our proposed method is slightly inferior but our method results in minimal body exposure to RF radiation, compared to the benchmark method.

Refining time-space traffic diagrams: A multiple linear regression model

Apr 09, 2022

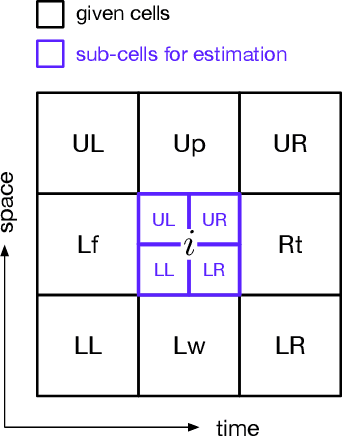

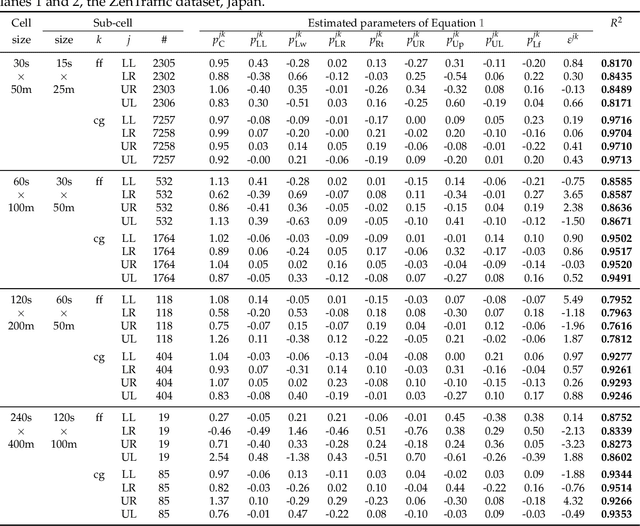

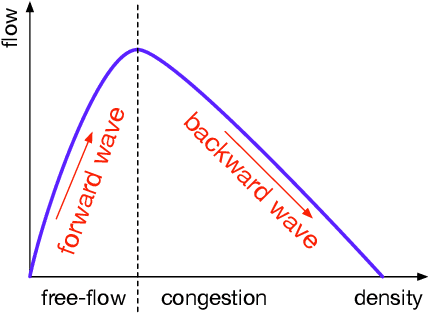

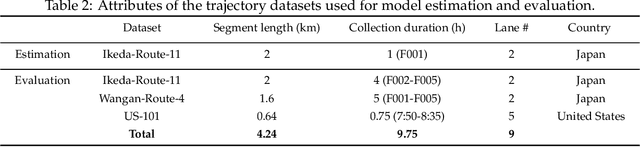

A time-space traffic (TS) diagram that presents traffic states in time-space cells with colors is one of the most important traffic analysis and visualization tools. Despite its importance for transportation research and engineering, most TS diagrams that have already existed or are being produced are too coarse to exhibit detailed traffic dynamics due to the limitation of the current information technology and traffic infrastructure investment. To increase the resolution of a TS diagram and make it present more traffic details, this paper introduces a TS diagram refinement problem and proposes a multiple linear regression-based model to solve the problem. Two tests, which attempt to increase the resolution of a TS diagram for 4 and 16 times, respectively, are carried out to evaluate the performance of the proposed model. The data collected from different time, different location and even different country is involved to thoroughly evaluate the accuracy and transferability of the proposed model. The strict tests with diverse data show that the proposed model, although it is simple in form, is able to refine a TS diagram with a promising accuracy and reliable transferability. The proposed refinement model will "save" those widely-existing TS diagrams from their blurry "faces" and make it possible to learn more traffic details from those TS diagrams.

JAX-Accelerated Neuroevolution of Physics-informed Neural Networks: Benchmarks and Experimental Results

Dec 15, 2022

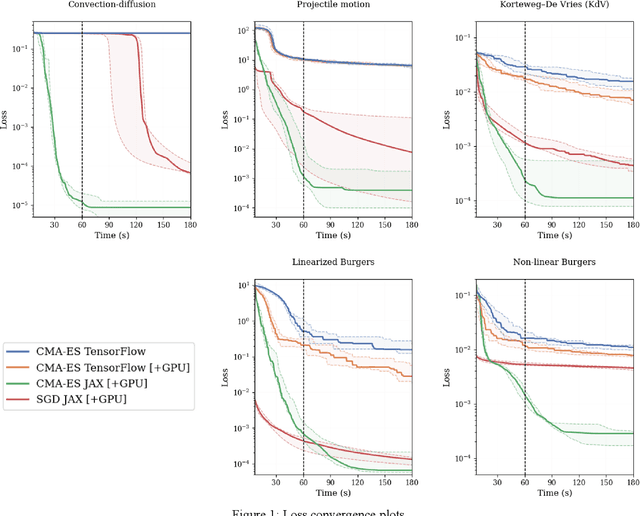

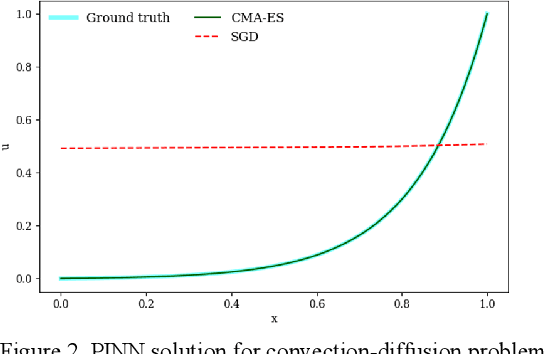

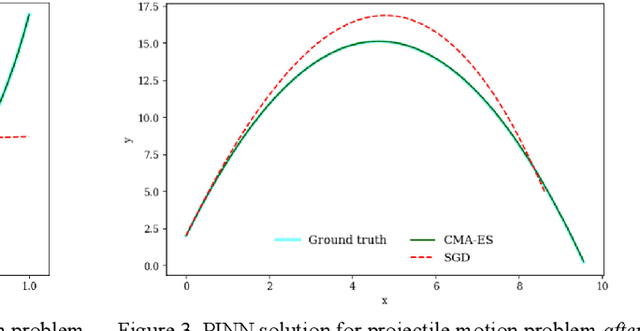

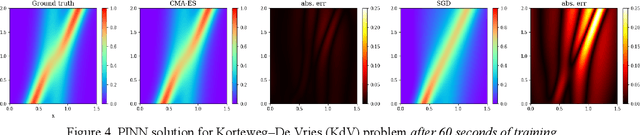

This paper introduces the use of evolutionary algorithms for solving differential equations. The solution is obtained by optimizing a deep neural network whose loss function is defined by the residual terms from the differential equations. Recent studies have used stochastic gradient descent (SGD) variants to train these physics-informed neural networks (PINNs), but these methods can struggle to find accurate solutions due to optimization challenges. When solving differential equations, it is important to find the globally optimum parameters of the network, rather than just finding a solution that works well during training. SGD only searches along a single gradient direction, so it may not be the best approach for training PINNs with their accompanying complex optimization landscapes. In contrast, evolutionary algorithms perform a parallel exploration of different solutions in order to avoid getting stuck in local optima and can potentially find more accurate solutions. However, evolutionary algorithms can be slow, which can make them difficult to use in practice. To address this, we provide a set of five benchmark problems with associated performance metrics and baseline results to support the development of evolutionary algorithms for enhanced PINN training. As a baseline, we evaluate the performance and speed of using the widely adopted Covariance Matrix Adaptation Evolution Strategy (CMA-ES) for solving PINNs. We provide the loss and training time for CMA-ES run on TensorFlow, and CMA-ES and SGD run on JAX (with GPU acceleration) for the five benchmark problems. Our results show that JAX-accelerated evolutionary algorithms, particularly CMA-ES, can be a useful approach for solving differential equations. We hope that our work will support the exploration and development of alternative optimization algorithms for the complex task of optimizing PINNs.

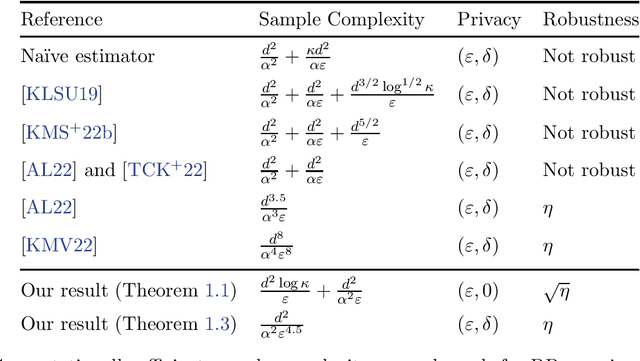

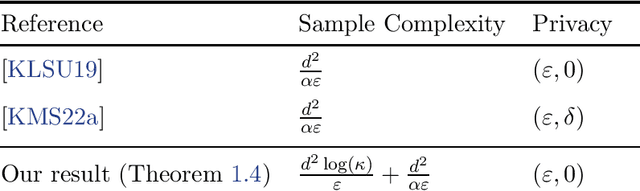

Privately Estimating a Gaussian: Efficient, Robust and Optimal

Dec 15, 2022

In this work, we give efficient algorithms for privately estimating a Gaussian distribution in both pure and approximate differential privacy (DP) models with optimal dependence on the dimension in the sample complexity. In the pure DP setting, we give an efficient algorithm that estimates an unknown $d$-dimensional Gaussian distribution up to an arbitrary tiny total variation error using $\widetilde{O}(d^2 \log \kappa)$ samples while tolerating a constant fraction of adversarial outliers. Here, $\kappa$ is the condition number of the target covariance matrix. The sample bound matches best non-private estimators in the dependence on the dimension (up to a polylogarithmic factor). We prove a new lower bound on differentially private covariance estimation to show that the dependence on the condition number $\kappa$ in the above sample bound is also tight. Prior to our work, only identifiability results (yielding inefficient super-polynomial time algorithms) were known for the problem. In the approximate DP setting, we give an efficient algorithm to estimate an unknown Gaussian distribution up to an arbitrarily tiny total variation error using $\widetilde{O}(d^2)$ samples while tolerating a constant fraction of adversarial outliers. Prior to our work, all efficient approximate DP algorithms incurred a super-quadratic sample cost or were not outlier-robust. For the special case of mean estimation, our algorithm achieves the optimal sample complexity of $\widetilde O(d)$, improving on a $\widetilde O(d^{1.5})$ bound from prior work. Our pure DP algorithm relies on a recursive private preconditioning subroutine that utilizes the recent work on private mean estimation [Hopkins et al., 2022]. Our approximate DP algorithms are based on a substantial upgrade of the method of stabilizing convex relaxations introduced in [Kothari et al., 2022].

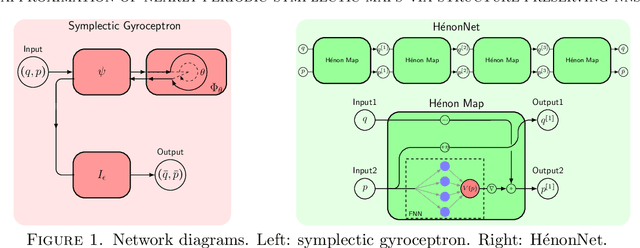

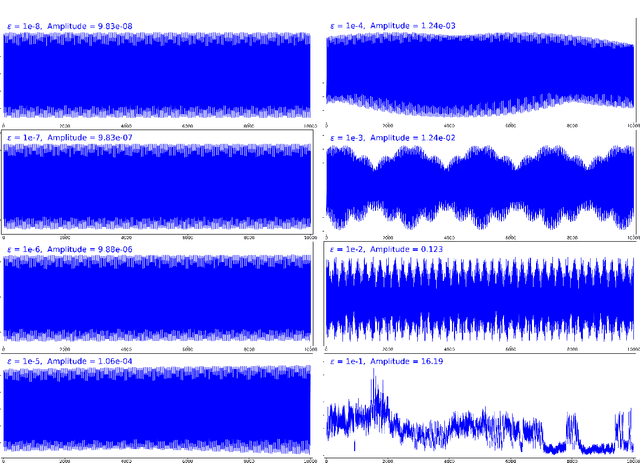

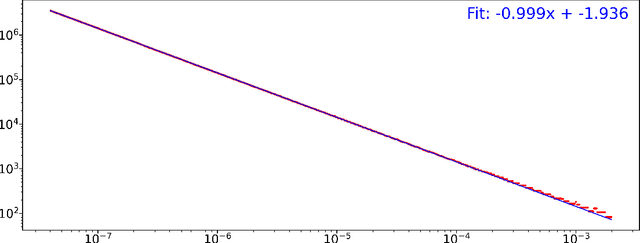

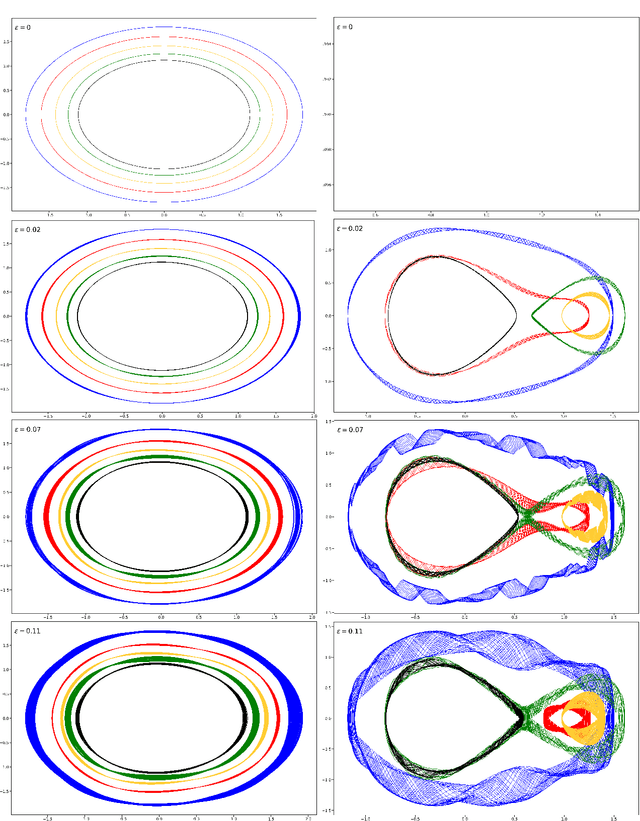

Approximation of nearly-periodic symplectic maps via structure-preserving neural networks

Oct 11, 2022

A continuous-time dynamical system with parameter $\varepsilon$ is nearly-periodic if all its trajectories are periodic with nowhere-vanishing angular frequency as $\varepsilon$ approaches 0. Nearly-periodic maps are discrete-time analogues of nearly-periodic systems, defined as parameter-dependent diffeomorphisms that limit to rotations along a circle action, and they admit formal $U(1)$ symmetries to all orders when the limiting rotation is non-resonant. For Hamiltonian nearly-periodic maps on exact presymplectic manifolds, the formal $U(1)$ symmetry gives rise to a discrete-time adiabatic invariant. In this paper, we construct a novel structure-preserving neural network to approximate nearly-periodic symplectic maps. This neural network architecture, which we call symplectic gyroceptron, ensures that the resulting surrogate map is nearly-periodic and symplectic, and that it gives rise to a discrete-time adiabatic invariant and a long-time stability. This new structure-preserving neural network provides a promising architecture for surrogate modeling of non-dissipative dynamical systems that automatically steps over short timescales without introducing spurious instabilities.

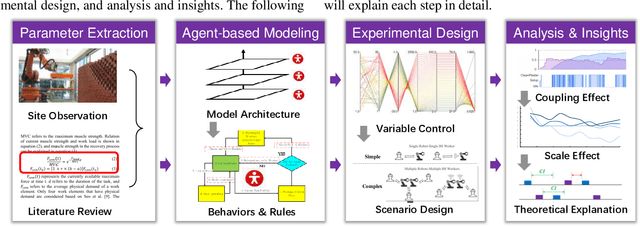

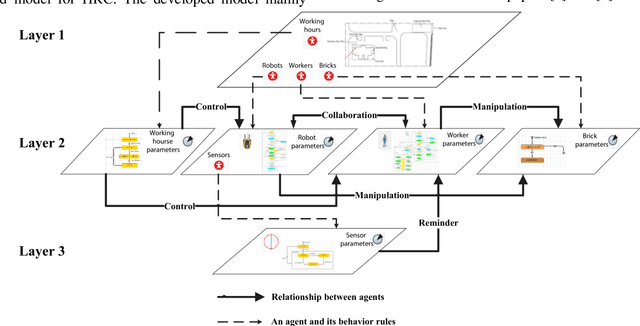

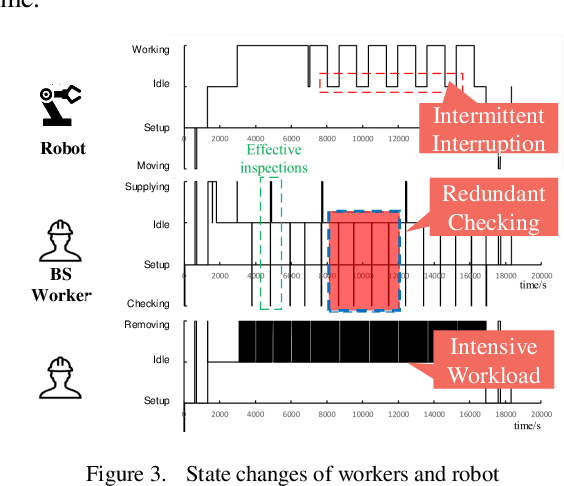

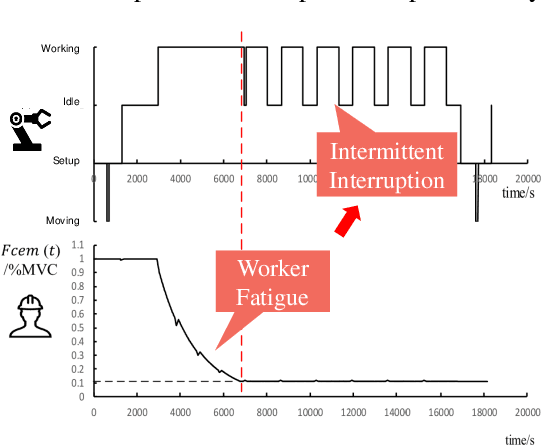

Exploiting the Power of Human-Robot Collaboration: Coupling and Scale Effects in Bricklaying

Dec 10, 2022

As an important contributor to GDP growth, the construction industry is suffering from labor shortage due to population ageing, COVID-19 pandemic, and harsh environments. Considering the complexity and dynamics of construction environment, it is still challenging to develop fully automated robots. For a long time in the future, workers and robots will coexist and collaborate with each other to build or maintain a facility efficiently. As an emerging field, human-robot collaboration (HRC) still faces various open problems. To this end, this pioneer research introduces an agent-based modeling approach to investigate the coupling effect and scale effect of HRC in the bricklaying process. With multiple experiments based on simulation, the dynamic and complex nature of HRC is illustrated in two folds: 1) agents in HRC are interdependent due to human factors of workers, features of robots, and their collaboration behaviors; 2) different parameters of HRC are correlated and have significant impacts on construction productivity (CP). Accidentally and interestingly, it is discovered that HRC has a scale effect on CP, which means increasing the number of collaborated human-robot teams will lead to higher CP even if the human-robot ratio keeps unchanged. Overall, it is argued that more investigations in HRC are needed for efficient construction, occupational safety, etc.; and this research can be taken as a stepstone for developing and evaluating new robots, optimizing HRC processes, and even training future industrial workers in the construction industry.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge