Zhenhua Zou

Anonymization-Enhanced Privacy Protection for Mobile GUI Agents: Available but Invisible

Feb 14, 2026Abstract:Mobile Graphical User Interface (GUI) agents have demonstrated strong capabilities in automating complex smartphone tasks by leveraging multimodal large language models (MLLMs) and system-level control interfaces. However, this paradigm introduces significant privacy risks, as agents typically capture and process entire screen contents, thereby exposing sensitive personal data such as phone numbers, addresses, messages, and financial information. Existing defenses either reduce UI exposure, obfuscate only task-irrelevant content, or rely on user authorization, but none can protect task-critical sensitive information while preserving seamless agent usability. We propose an anonymization-based privacy protection framework that enforces the principle of available-but-invisible access to sensitive data: sensitive information remains usable for task execution but is never directly visible to the cloud-based agent. Our system detects sensitive UI content using a PII-aware recognition model and replaces it with deterministic, type-preserving placeholders (e.g., PHONE_NUMBER#a1b2c) that retain semantic categories while removing identifying details. A layered architecture comprising a PII Detector, UI Transformer, Secure Interaction Proxy, and Privacy Gatekeeper ensures consistent anonymization across user instructions, XML hierarchies, and screenshots, mediates all agent actions over anonymized interfaces, and supports narrowly scoped local computations when reasoning over raw values is necessary. Extensive experiments on the AndroidLab and PrivScreen benchmarks show that our framework substantially reduces privacy leakage across multiple models while incurring only modest utility degradation, achieving the best observed privacy-utility trade-off among existing methods. Code available at: https://github.com/one-step-beh1nd/gui_privacy_protection

Blind Gods and Broken Screens: Architecting a Secure, Intent-Centric Mobile Agent Operating System

Feb 13, 2026Abstract:The evolution of Large Language Models (LLMs) has shifted mobile computing from App-centric interactions to system-level autonomous agents. Current implementations predominantly rely on a "Screen-as-Interface" paradigm, which inherits structural vulnerabilities and conflicts with the mobile ecosystem's economic foundations. In this paper, we conduct a systematic security analysis of state-of-the-art mobile agents using Doubao Mobile Assistant as a representative case. We decompose the threat landscape into four dimensions - Agent Identity, External Interface, Internal Reasoning, and Action Execution - revealing critical flaws such as fake App identity, visual spoofing, indirect prompt injection, and unauthorized privilege escalation stemming from a reliance on unstructured visual data. To address these challenges, we propose Aura, an Agent Universal Runtime Architecture for a clean-slate secure agent OS. Aura replaces brittle GUI scraping with a structured, agent-native interaction model. It adopts a Hub-and-Spoke topology where a privileged System Agent orchestrates intent, sandboxed App Agents execute domain-specific tasks, and the Agent Kernel mediates all communication. The Agent Kernel enforces four defense pillars: (i) cryptographic identity binding via a Global Agent Registry; (ii) semantic input sanitization through a multilayer Semantic Firewall; (iii) cognitive integrity via taint-aware memory and plan-trajectory alignment; and (iv) granular access control with non-deniable auditing. Evaluation on MobileSafetyBench shows that, compared to Doubao, Aura improves low-risk Task Success Rate from roughly 75% to 94.3%, reduces high-risk Attack Success Rate from roughly 40% to 4.4%, and achieves near-order-of-magnitude latency gains. These results demonstrate Aura as a viable, secure alternative to the "Screen-as-Interface" paradigm.

Stochastic Online Shortest Path Routing: The Value of Feedback

Jan 18, 2017

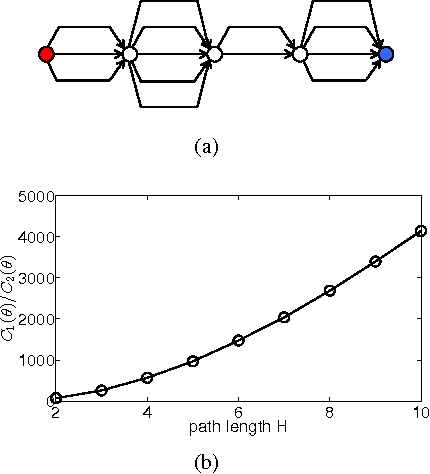

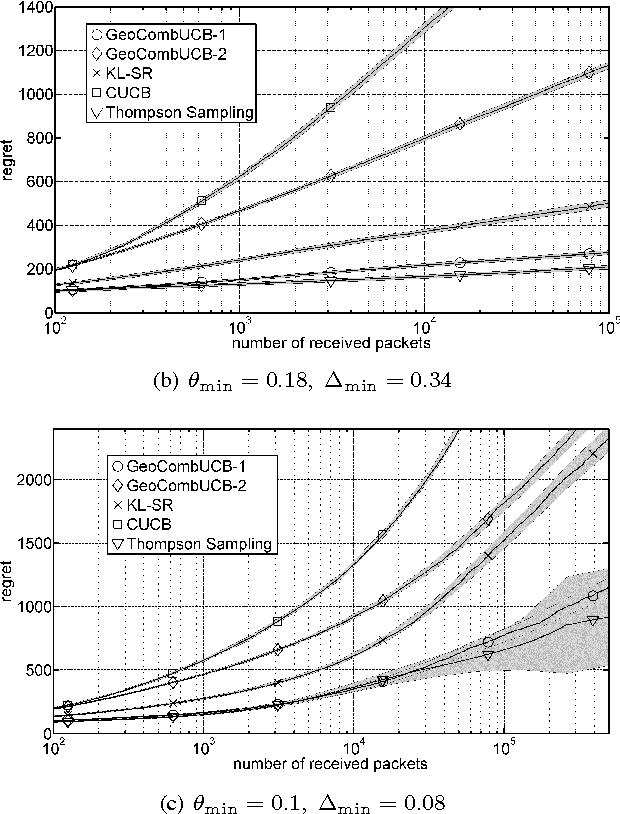

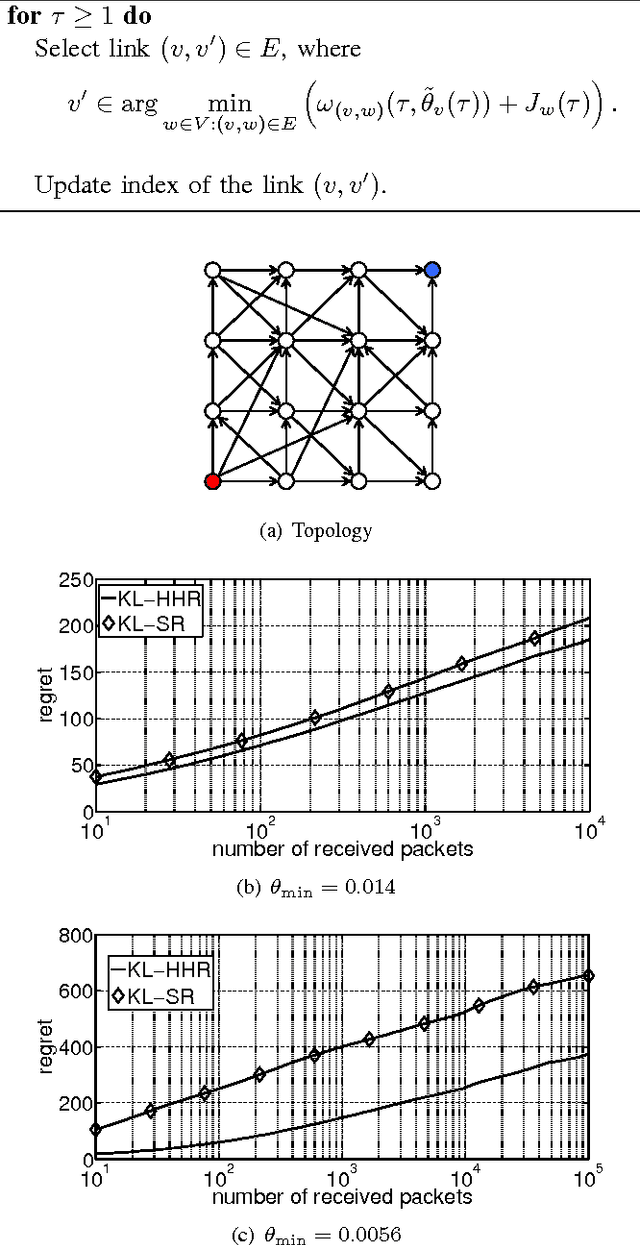

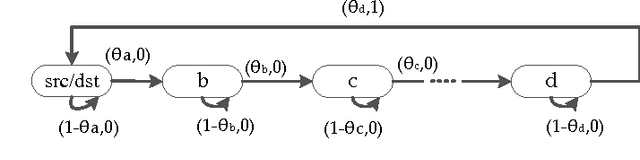

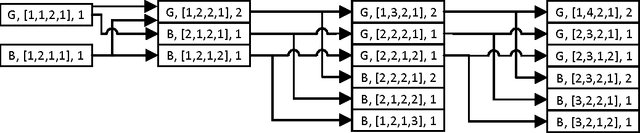

Abstract:This paper studies online shortest path routing over multi-hop networks. Link costs or delays are time-varying and modeled by independent and identically distributed random processes, whose parameters are initially unknown. The parameters, and hence the optimal path, can only be estimated by routing packets through the network and observing the realized delays. Our aim is to find a routing policy that minimizes the regret (the cumulative difference of expected delay) between the path chosen by the policy and the unknown optimal path. We formulate the problem as a combinatorial bandit optimization problem and consider several scenarios that differ in where routing decisions are made and in the information available when making the decisions. For each scenario, we derive a tight asymptotic lower bound on the regret that has to be satisfied by any online routing policy. These bounds help us to understand the performance improvements we can expect when (i) taking routing decisions at each hop rather than at the source only, and (ii) observing per-link delays rather than end-to-end path delays. In particular, we show that (i) is of no use while (ii) can have a spectacular impact. Three algorithms, with a trade-off between computational complexity and performance, are proposed. The regret upper bounds of these algorithms improve over those of the existing algorithms, and they significantly outperform state-of-the-art algorithms in numerical experiments.

Optimal Radio Frequency Energy Harvesting with Limited Energy Arrival Knowledge

Aug 02, 2015

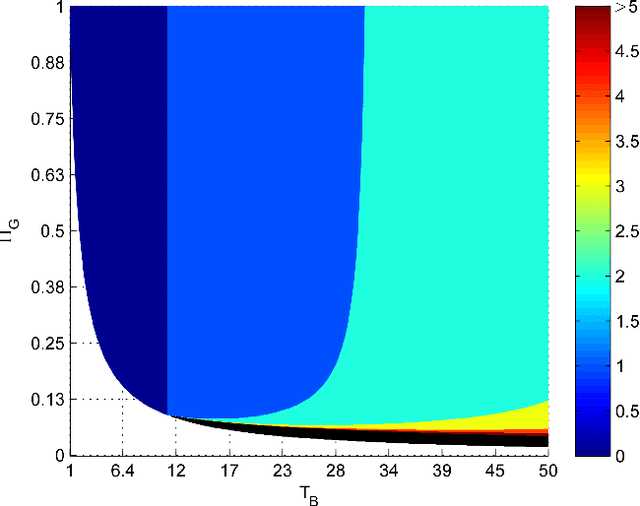

Abstract:In this paper, we develop optimal policies for deciding when a wireless node with radio frequency (RF) energy harvesting (EH) capabilities should try and harvest ambient RF energy. While the idea of RF-EH is appealing, it is not always beneficial to attempt to harvest energy; in environments where the ambient energy is low, nodes could consume more energy being awake with their harvesting circuits turned on than what they can extract from the ambient radio signals; it is then better to enter a sleep mode until the ambient RF energy increases. Towards this end, we consider a scenario with intermittent energy arrivals and a wireless node that wakes up for a period of time (herein called the time-slot) and harvests energy. If enough energy is harvested during the time-slot, then the harvesting is successful and excess energy is stored; however, if there does not exist enough energy the harvesting is unsuccessful and energy is lost. We assume that the ambient energy level is constant during the time-slot, and changes at slot boundaries. The energy level dynamics are described by a two-state Gilbert-Elliott Markov chain model, where the state of the Markov chain can only be observed during the harvesting action, and not when in sleep mode. Two scenarios are studied under this model. In the first scenario, we assume that we have knowledge of the transition probabilities of the Markov chain and formulate the problem as a Partially Observable Markov Decision Process (POMDP), where we find a threshold-based optimal policy. In the second scenario, we assume that we don't have any knowledge about these parameters and formulate the problem as a Bayesian adaptive POMDP; to reduce the complexity of the computations we also propose a heuristic posterior sampling algorithm. The performance of our approaches is demonstrated via numerical examples.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge