Zhen Fu

Multi-Loco: Unifying Multi-Embodiment Legged Locomotion via Reinforcement Learning Augmented Diffusion

Jun 13, 2025Abstract:Generalizing locomotion policies across diverse legged robots with varying morphologies is a key challenge due to differences in observation/action dimensions and system dynamics. In this work, we propose Multi-Loco, a novel unified framework combining a morphology-agnostic generative diffusion model with a lightweight residual policy optimized via reinforcement learning (RL). The diffusion model captures morphology-invariant locomotion patterns from diverse cross-embodiment datasets, improving generalization and robustness. The residual policy is shared across all embodiments and refines the actions generated by the diffusion model, enhancing task-aware performance and robustness for real-world deployment. We evaluated our method with a rich library of four legged robots in both simulation and real-world experiments. Compared to a standard RL framework with PPO, our approach -- replacing the Gaussian policy with a diffusion model and residual term -- achieves a 10.35% average return improvement, with gains up to 13.57% in wheeled-biped locomotion tasks. These results highlight the benefits of cross-embodiment data and composite generative architectures in learning robust, generalized locomotion skills.

Learning Whole-Body Loco-Manipulation for Omni-Directional Task Space Pose Tracking with a Wheeled-Quadrupedal-Manipulator

Dec 04, 2024

Abstract:In this paper, we study the whole-body loco-manipulation problem using reinforcement learning (RL). Specifically, we focus on the problem of how to coordinate the floating base and the robotic arm of a wheeled-quadrupedal manipulator robot to achieve direct six-dimensional (6D) end-effector (EE) pose tracking in task space. Different from conventional whole-body loco-manipulation problems that track both floating-base and end-effector commands, the direct EE pose tracking problem requires inherent balance among redundant degrees of freedom in the whole-body motion. We leverage RL to solve this challenging problem. To address the associated difficulties, we develop a novel reward fusion module (RFM) that systematically integrates reward terms corresponding to different tasks in a nonlinear manner. In such a way, the inherent multi-stage and hierarchical feature of the loco-manipulation problem can be carefully accommodated. By combining the proposed RFM with the a teacher-student RL training paradigm, we present a complete RL scheme to achieve 6D EE pose tracking for the wheeled-quadruped manipulator robot. Extensive simulation and hardware experiments demonstrate the significance of the RFM. In particular, we enable smooth and precise tracking performance, achieving state-of-the-art tracking position error of less than 5 cm, and rotation error of less than 0.1 rad. Please refer to https://clearlab-sustech.github.io/RFM_loco_mani/ for more experimental videos.

Force-feedback based Whole-body Stabilizer for Position-Controlled Humanoid Robots

Aug 15, 2021

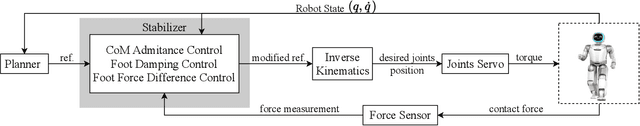

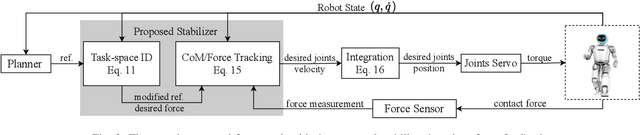

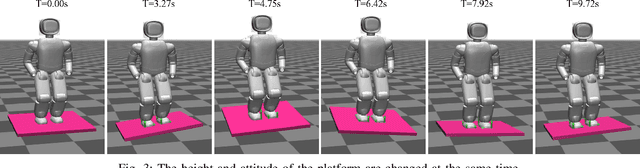

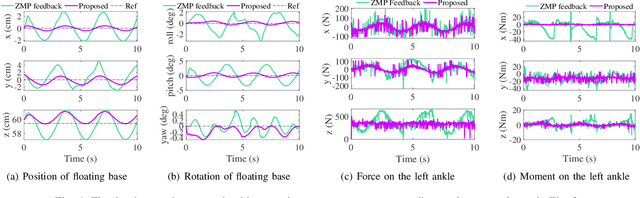

Abstract:This paper studies stabilizer design for position-controlled humanoid robots. Stabilizers are an essential part for position-controlled humanoids, whose primary objective is to adjust the control input sent to the robot to assist the tracking controller to better follow the planned reference trajectory. To achieve this goal, this paper develops a novel force-feedback based whole-body stabilizer that fully exploits the six-dimensional force measurement information and the whole-body dynamics to improve tracking performance. Relying on rigorous analysis of whole-body dynamics of position-controlled humanoids under unknown contact, the developed stabilizer leverages quadratic-programming based technique that allows cooperative consideration of both the center-of-mass tracking and contact force tracking. The effectiveness of the proposed stabilizer is demonstrated on the UBTECH Walker robot in the MuJoCo simulator. Simulation validations show a significant improvement in various scenarios as compared to commonly adopted stabilizers based on the zero-moment-point feedback and the linear inverted pendulum model.

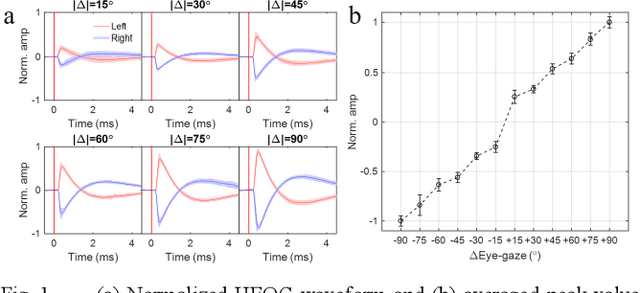

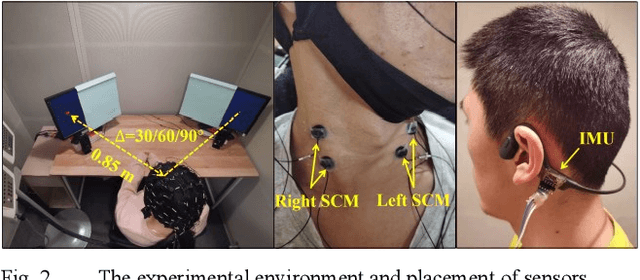

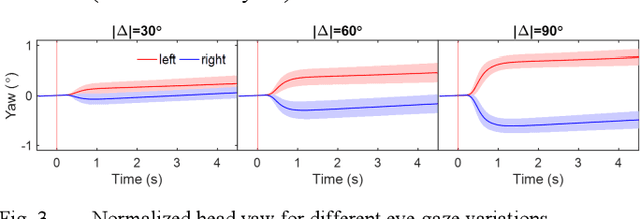

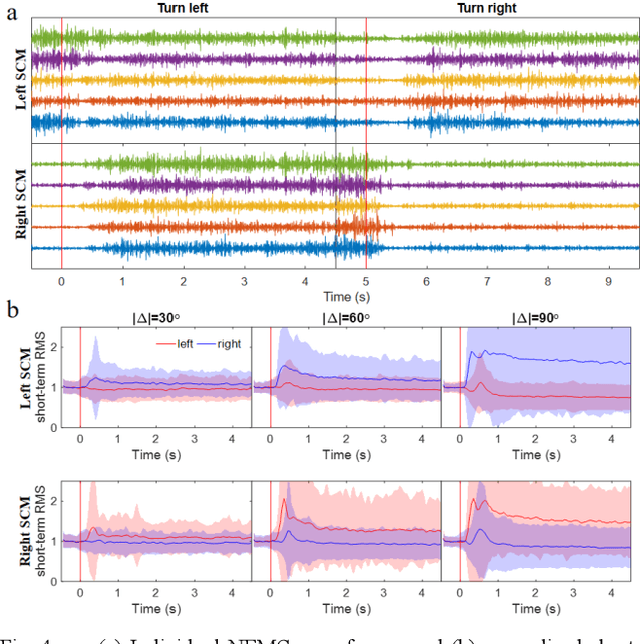

Eye-gaze Estimation with HEOG and Neck EMG using Deep Neural Networks

Mar 03, 2021

Abstract:Hearing-impaired listeners usually have troubles attending target talker in multi-talker scenes, even with hearing aids (HAs). The problem can be solved with eye-gaze steering HAs, which requires listeners eye-gazing on the target. In a situation where head rotates, eye-gaze is subject to both behaviors of saccade and head rotation. However, existing methods of eye-gaze estimation did not work reliably, since the listener's strategy of eye-gaze varies and measurements of the two behaviors were not properly combined. Besides, existing methods were based on hand-craft features, which could overlook some important information. In this paper, a head-fixed and a head-free experiments were conducted. We used horizontal electrooculography (HEOG) and neck electromyography (NEMG), which separately measured saccade and head rotation to commonly estimate eye-gaze. Besides traditional classifier and hand-craft features, deep neural networks (DNN) were introduced to automatically extract features from intact waveforms. Evaluation results showed that when the input was HEOG with inertial measurement unit, the best performance of our proposed DNN classifiers achieved 93.3%; and when HEOG was with NEMG together, the accuracy reached 72.6%, higher than that with HEOG (about 71.0%) or NEMG (about 35.7%) alone. These results indicated the feasibility to estimate eye-gaze with HEOG and NEMG.

Auditory Attention Decoding from EEG using Convolutional Recurrent Neural Network

Mar 03, 2021

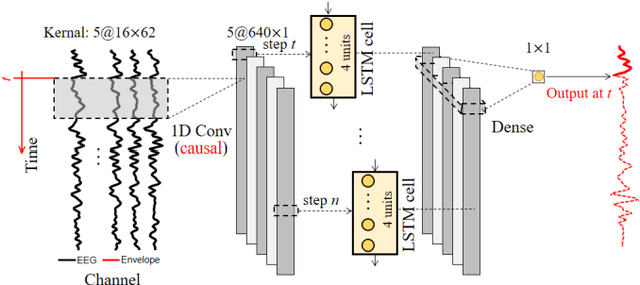

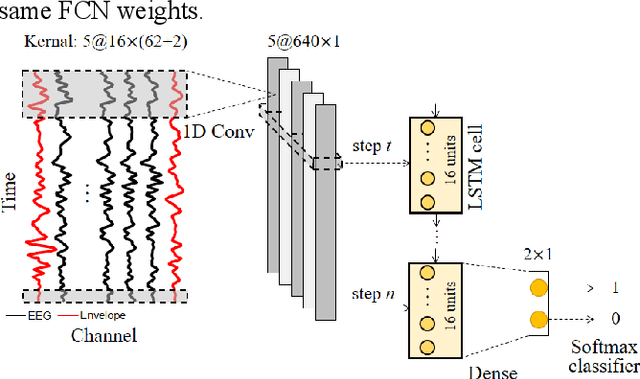

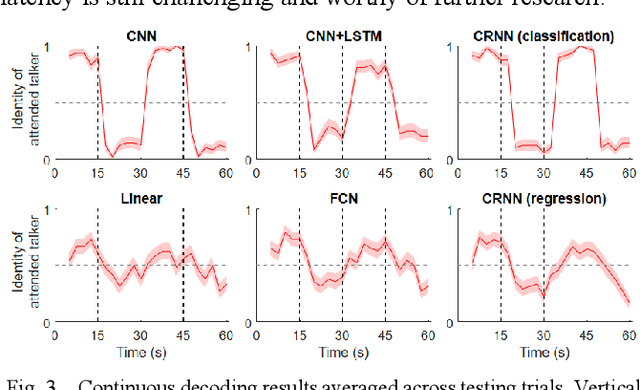

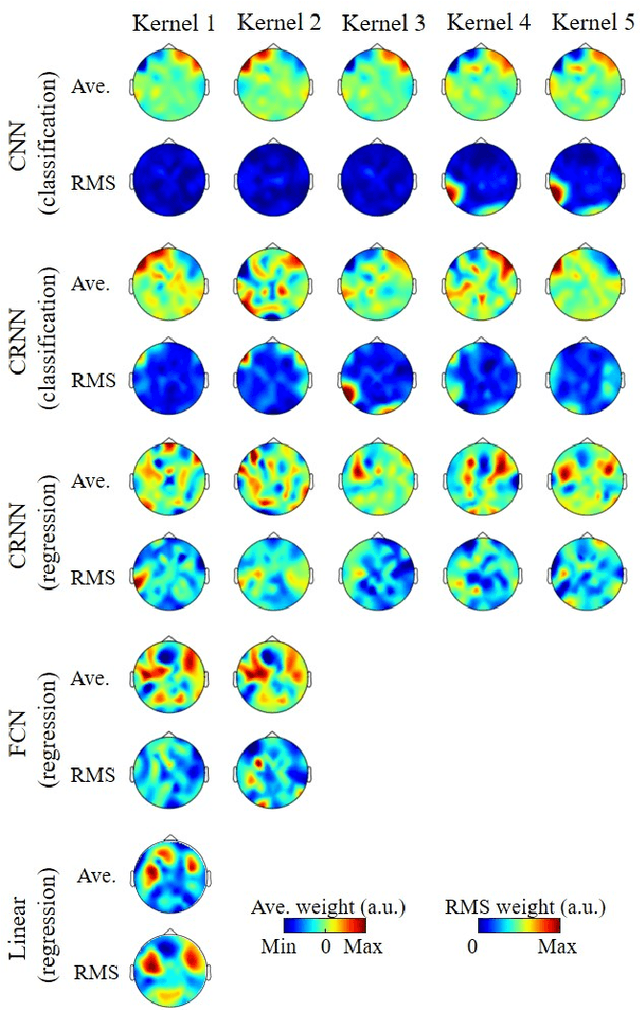

Abstract:The auditory attention decoding (AAD) approach was proposed to determine the identity of the attended talker in a multi-talker scenario by analyzing electroencephalography (EEG) data. Although the linear model-based method has been widely used in AAD, the linear assumption was considered oversimplified and the decoding accuracy remained lower for shorter decoding windows. Recently, nonlinear models based on deep neural networks (DNN) have been proposed to solve this problem. However, these models did not fully utilize both the spatial and temporal features of EEG, and the interpretability of DNN models was rarely investigated. In this paper, we proposed novel convolutional recurrent neural network (CRNN) based regression model and classification model, and compared them with both the linear model and the state-of-the-art DNN models. Results showed that, our proposed CRNN-based classification model outperformed others for shorter decoding windows (around 90% for 2 s and 5 s). Although worse than classification models, the decoding accuracy of the proposed CRNN-based regression model was about 5% greater than other regression models. The interpretability of DNN models was also investigated by visualizing layers' weight.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge