Yitian Li

PhysInOne: Visual Physics Learning and Reasoning in One Suite

Apr 10, 2026

Abstract:We present PhysInOne, a large-scale synthetic dataset addressing the critical scarcity of physically-grounded training data for AI systems. Unlike existing datasets limited to merely hundreds or thousands of examples, PhysInOne provides 2 million videos across 153,810 dynamic 3D scenes, covering 71 basic physical phenomena in mechanics, optics, fluid dynamics, and magnetism. Distinct from previous works, our scenes feature multi-object interactions against complex backgrounds, with comprehensive ground-truth annotations including 3D geometry, semantics, dynamic motion, physical properties, and text descriptions. We demonstrate PhysInOne's efficacy across four emerging applications: physics-aware video generation, long-/short-term future frame prediction, physical property estimation, and motion transfer. Experiments show that fine-tuning foundation models on PhysInOne significantly enhances physical plausibility, while also exposing critical gaps in modeling complex physical dynamics and estimating intrinsic properties. As the largest dataset of its kind, orders of magnitude larger than prior works, PhysInOne establishes a new benchmark for advancing physics-grounded world models in generation, simulation, and embodied AI.

Hypothesis Testing Prompting Improves Deductive Reasoning in Large Language Models

May 09, 2024

Abstract:Combining different forms of prompts with pre-trained large language models has yielded remarkable results on reasoning tasks (e.g., Chain-of-Thought prompting). However, when tested on more complex reasoning, these methods also expose problems such as invalid reasoning and fictional reasoning paths. In this paper, we develop Hypothesis Testing Prompting, which adds conclusion assumptions, backward reasoning, and fact verification to the intermediate reasoning steps. Hypothesis Testing Prompting considers multiple assumptions and reversely validates the conclusions, leading to a unique correct answer. Experiments on two challenging deductive reasoning datasets, ProofWriter and RuleTaker, show that Hypothesis Testing Prompting not only significantly improves performance but also generates a more reasonable and standardized reasoning process.
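The abstract names three additions to the intermediate steps: assuming a conclusion, reasoning backward from it, and verifying facts. A minimal sketch of how such a prompt might be laid out for a ProofWriter-style problem follows; the function name `hypothesis_testing_prompt` and all of the wording are hypothetical illustrations, not the authors' actual template.

```python
def hypothesis_testing_prompt(facts, rules, statement):
    """Build a prompt asking the model to assume each truth value of the
    statement, reason backward to what it requires, and verify those facts.
    The wording here is a hypothetical illustration."""
    lines = ["Facts:"]
    lines += [f"- {fact}" for fact in facts]
    lines.append("Rules:")
    lines += [f"- {rule}" for rule in rules]
    lines.append(f"Statement: {statement}")
    # One hypothesis per candidate conclusion, each followed by verification.
    for value in ("True", "False"):
        lines.append(
            f"Hypothesis: assume the statement is {value}. "
            "Reason backward from this conclusion to the rules and facts it requires."
        )
        lines.append("Verification: check each required fact against the lists above.")
    lines.append("Answer: the hypothesis whose requirements are all verified is")
    return "\n".join(lines)
```

Enumerating both hypotheses before answering mirrors the abstract's "multiple assumptions"; the model is then expected to keep only the one that survives verification.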

Logical Negation Augmenting and Debiasing for Prompt-based Methods

May 08, 2024

Abstract:Prompt-based methods have gained increasing attention in NLP and have proven effective on many downstream tasks. Many works have focused on mining these methods' potential for knowledge extraction, but few explore their ability to perform logical reasoning. In this work, we focus on the effectiveness of prompt-based methods on first-order logical reasoning and find that the bottleneck lies in logical negation. Our analysis shows that logical negation tends to induce spurious correlations with negative answers, while propositions without logical negation correlate with positive answers. To solve this problem, we propose a simple but effective method, Negation Augmenting and Negation Debiasing (NAND), which introduces negative propositions to prompt-based methods without updating parameters. Specifically, these negative propositions counteract spurious correlations by providing "not" for all instances, so that models cannot make decisions based solely on whether an expression contains a logical negation. Experiments on three datasets show that NAND not only solves the problem of calibrating logical negation but also significantly enhances prompt-based methods of logical reasoning without model retraining.

Comparable Demonstrations are Important in In-Context Learning: A Novel Perspective on Demonstration Selection

Dec 12, 2023

Abstract:In-Context Learning (ICL) is an important paradigm for adapting Large Language Models (LLMs) to downstream tasks through a few demonstrations. Despite the great success of ICL, the limited number of demonstrations may lead to demonstration bias, i.e., the input-label mapping induced by LLMs misrepresents the essence of the task. Inspired by human experience, we attempt to mitigate such bias from the perspective of the inter-demonstration relationship. Specifically, we construct Comparable Demonstrations (CDs) by minimally editing the texts to flip the corresponding labels, in order to highlight the task's essence and eliminate potential spurious correlations through inter-demonstration comparison. Through a series of experiments on CDs, we find that (1) demonstration bias does exist in LLMs, and CDs can significantly reduce such bias; and (2) CDs perform well in ICL, especially in out-of-distribution scenarios. In summary, this study explores ICL mechanisms from a novel perspective, providing deeper insight into demonstration selection strategies for ICL.
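To make the construction concrete: a comparable pair is an original demonstration plus a minimally edited copy whose label is flipped, and the two are shown side by side so the model can contrast them. The sketch below assumes a simple "Input/Label" prompt layout; the names `Demo`, `format_demo`, and `build_cd_prompt` and the layout itself are hypothetical, not the paper's code.

```python
from dataclasses import dataclass


@dataclass
class Demo:
    text: str
    label: str


def format_demo(text, label=""):
    # One demonstration block; an empty label leaves the slot for the model.
    return f"Input: {text}\nLabel:" + (f" {label}" if label else "")


def build_cd_prompt(pairs, query):
    """Place each demonstration directly next to its minimally edited,
    label-flipped counterpart, then append the unlabeled query."""
    blocks = []
    for original, edited in pairs:
        if original.label == edited.label:
            raise ValueError("a comparable pair must have different labels")
        blocks.append(format_demo(original.text, original.label))
        blocks.append(format_demo(edited.text, edited.label))
    blocks.append(format_demo(query))
    return "\n\n".join(blocks)


pairs = [(Demo("The movie was wonderful.", "positive"),
          Demo("The movie was dreadful.", "negative"))]
print(build_cd_prompt(pairs, "A dull, lifeless film."))
```

Because the two texts in a pair differ only in the edited span, the flipped label can be attributed to that span rather than to incidental surface features.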

Chain-of-Thought Tuning: Masked Language Models can also Think Step By Step in Natural Language Understanding

Oct 18, 2023

Abstract:Chain-of-Thought (CoT) is a technique that guides Large Language Models (LLMs) to decompose complex tasks into multi-step reasoning through intermediate steps in natural language form. Briefly, CoT enables LLMs to think step by step. However, although many Natural Language Understanding (NLU) tasks also require thinking step by step, LLMs perform less well than small-scale Masked Language Models (MLMs). To migrate CoT from LLMs to MLMs, we propose Chain-of-Thought Tuning (CoTT), a two-step reasoning framework based on prompt tuning, to implement step-by-step thinking for MLMs on NLU tasks. From the perspective of CoT, CoTT's two-step framework enables MLMs to implement task decomposition, and CoTT's prompt tuning allows intermediate steps to be used in natural language form. In this way, the success of CoT can be extended to NLU tasks through MLMs. To verify the effectiveness of CoTT, we conduct experiments on two NLU tasks, hierarchical classification and relation extraction, and the results show that CoTT outperforms baselines and achieves state-of-the-art performance.

Accurate Use of Label Dependency in Multi-Label Text Classification Through the Lens of Causality

Oct 11, 2023

Abstract:Multi-Label Text Classification (MLTC) aims to assign the most relevant labels to each given text. Existing methods demonstrate that label dependency can help improve the model's performance. However, introducing label dependency may cause the model to suffer from unwanted prediction bias. In this study, we attribute the bias to the model's misuse of label dependency, i.e., the model tends to exploit the correlation shortcut in label dependency rather than fusing text information and label dependency for prediction. Motivated by causal inference, we propose a CounterFactual Text Classifier (CFTC) to eliminate the correlation bias and make causality-based predictions. Specifically, CFTC first adopts a predict-then-modify backbone to extract precise label information embedded in label dependency, then blocks the correlation shortcut through a counterfactual de-bias technique with the help of the human causal graph. Experimental results on three datasets demonstrate that CFTC significantly outperforms the baselines and effectively eliminates the correlation bias in the datasets.
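The abstract does not give CFTC's formulas. One generic way counterfactual inference "blocks" a shortcut is to subtract the prediction obtained from the shortcut path alone (here, label dependency without the text) from the full, factual prediction, and then threshold each label independently. The sketch below illustrates only that generic idea; `counterfactual_debias`, `alpha`, and the example logits are hypothetical and should not be read as the authors' implementation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def counterfactual_debias(factual_logits, shortcut_logits, alpha=1.0, threshold=0.5):
    """Subtract the shortcut-only (counterfactual) logits, scaled by the
    hypothetical weight `alpha`, from the factual logits, then apply an
    independent sigmoid threshold per label (multi-label decision)."""
    if len(factual_logits) != len(shortcut_logits):
        raise ValueError("logit vectors must have the same length")
    debiased = [f - alpha * s for f, s in zip(factual_logits, shortcut_logits)]
    labels = [i for i, z in enumerate(debiased) if sigmoid(z) > threshold]
    return debiased, labels


# Label 1 scores high mainly because it co-occurs with label 0 (shortcut).
debiased, labels = counterfactual_debias([2.0, 1.5, -1.0], [0.5, 2.0, 0.0])
print(debiased, labels)
```

Without the subtraction, both label 0 and label 1 would pass the threshold; after it, only label 0 does, because label 1's score was carried mostly by the shortcut path.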

Unlock the Potential of Counterfactually-Augmented Data in Out-Of-Distribution Generalization

Oct 10, 2023

Abstract:Counterfactually-Augmented Data (CAD), i.e., minimal editing of sentences to flip the corresponding labels, has the potential to improve the Out-Of-Distribution (OOD) generalization capability of language models, as CAD induces language models to exploit domain-independent causal features and exclude spurious correlations. However, CAD's empirical OOD generalization results are not as effective as anticipated. In this study, we attribute this shortfall to the myopia phenomenon caused by CAD: language models focus only on the causal features that are edited in the augmentation operation and exclude other, non-edited causal features. As a result, the potential of CAD is not fully exploited. To address this issue, we analyze the myopia phenomenon in feature space from the perspective of Fisher's Linear Discriminant, and then introduce two additional constraints based on CAD's structural properties (dataset-level and sentence-level) to help language models extract more complete causal features from CAD, thereby mitigating the myopia phenomenon and improving OOD generalization capability. We evaluate our method on two tasks, Sentiment Analysis and Natural Language Inference, and the experimental results demonstrate that our method can unlock the potential of CAD and improve the OOD generalization performance of language models by 1.0% to 5.9%.
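For readers unfamiliar with the analysis tool named above: the two-class Fisher's Linear Discriminant picks the projection direction w that maximizes between-class separation relative to within-class scatter, J(w) = (w·(m1 − m0))² / (wᵀ S_W w), whose maximizer is proportional to S_W⁻¹(m1 − m0). The sketch below computes only this textbook criterion on toy 2-D points; it does not reproduce the paper's feature-space analysis or its dataset-level and sentence-level constraints, and all names are illustrative.

```python
def mean(points):
    n = len(points)
    return [sum(p[0] for p in points) / n, sum(p[1] for p in points) / n]


def within_scatter(class0, class1):
    """S_W: sum over both classes of (x - m_c)(x - m_c)^T for 2-D points."""
    sw = [[0.0, 0.0], [0.0, 0.0]]
    for points in (class0, class1):
        m = mean(points)
        for p in points:
            d = (p[0] - m[0], p[1] - m[1])
            for i in range(2):
                for j in range(2):
                    sw[i][j] += d[i] * d[j]
    return sw


def fisher_criterion(w, class0, class1):
    """J(w) = (w . (m1 - m0))^2 / (w^T S_W w)."""
    m0, m1 = mean(class0), mean(class1)
    sw = within_scatter(class0, class1)
    between = (w[0] * (m1[0] - m0[0]) + w[1] * (m1[1] - m0[1])) ** 2
    within = sum(w[i] * sw[i][j] * w[j] for i in range(2) for j in range(2))
    return between / within


def fisher_direction(class0, class1):
    """w* proportional to S_W^{-1} (m1 - m0), the maximizer of J."""
    m0, m1 = mean(class0), mean(class1)
    (a, b), (c, d) = within_scatter(class0, class1)
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("within-class scatter matrix is singular")
    dx, dy = m1[0] - m0[0], m1[1] - m0[1]
    return [(d * dx - b * dy) / det, (-c * dx + a * dy) / det]
```

In the myopia framing, a projection that relies on a single edited feature (one axis here) scores a lower J than one that combines all discriminative features.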

Improving the Out-Of-Distribution Generalization Capability of Language Models: Counterfactually-Augmented Data is not Enough

Feb 18, 2023

Abstract:Counterfactually-Augmented Data (CAD) has the potential to improve language models' Out-Of-Distribution (OOD) generalization capability, as CAD induces language models to exploit causal features and exclude spurious correlations. However, the empirical OOD generalization results of CAD are not as effective as expected. In this paper, we attribute this shortfall to the myopia phenomenon caused by CAD: language models focus only on the causal features that are edited in the augmentation and exclude other, non-edited causal features. As a result, the potential of CAD is not fully exploited. Based on the structural properties of CAD, we design two additional constraints to help language models extract more complete causal features from CAD, thus improving OOD generalization capability. We evaluate our method on two tasks, Sentiment Analysis and Natural Language Inference, and the experimental results demonstrate that our method can unlock CAD's potential and improve language models' OOD generalization capability.

MaxGNR: A Dynamic Weight Strategy via Maximizing Gradient-to-Noise Ratio for Multi-Task Learning

Feb 18, 2023

Abstract:When modeling related tasks in computer vision, Multi-Task Learning (MTL) can outperform Single-Task Learning (STL) due to its ability to capture intrinsic relatedness among tasks. However, MTL may encounter the insufficient training problem, i.e., some tasks in MTL may reach non-optimal solutions compared with STL. Prior studies show that excessive gradient noise can degrade performance in STL; in the MTL scenario, Inter-Task Gradient Noise (ITGN) is an additional source of gradient noise for each task, which can also affect the optimization process. In this paper, we identify ITGN as a key factor leading to the insufficient training problem. We define the Gradient-to-Noise Ratio (GNR) to measure the relative magnitude of gradient noise and design the MaxGNR algorithm to alleviate the ITGN interference on each task by maximizing the GNR of each task. We carefully evaluate MaxGNR on two standard image MTL datasets, NYUv2 and Cityscapes. The results show that our algorithm outperforms the baselines under identical experimental conditions.
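The abstract introduces GNR without giving its formula. A natural reading, stated here purely as an assumption, is the squared norm of the mean gradient (signal) divided by the sample variance of per-mini-batch gradients around that mean (noise), so larger noise yields a smaller ratio. The sketch implements only that assumed definition; it is not the paper's GNR, and it does not implement the MaxGNR weighting.

```python
import math


def gradient_to_noise_ratio(grads):
    """Assumed GNR: ||mean gradient||^2 divided by the sample variance
    (summed over dimensions) of per-mini-batch gradients around the mean.
    `grads` is a list of equal-length gradient vectors."""
    k = len(grads)
    if k < 2:
        raise ValueError("need at least two gradient samples")
    dim = len(grads[0])
    mean = [sum(g[i] for g in grads) / k for i in range(dim)]
    signal = sum(m * m for m in mean)
    noise = sum((g[i] - mean[i]) ** 2 for g in grads for i in range(dim)) / (k - 1)
    return math.inf if noise == 0 else signal / noise


# Same mean gradient, but the second set of samples is noisier.
print(gradient_to_noise_ratio([[1.0, 0.0], [3.0, 0.0]]))
print(gradient_to_noise_ratio([[0.0, 0.0], [4.0, 0.0]]))
```

Under this reading, gradient contributions that other tasks add to shared parameters would show up as extra spread in a task's per-batch gradients, lowering its GNR.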

ReadNet: Towards Accurate ReID with Limited and Noisy Samples

May 12, 2020

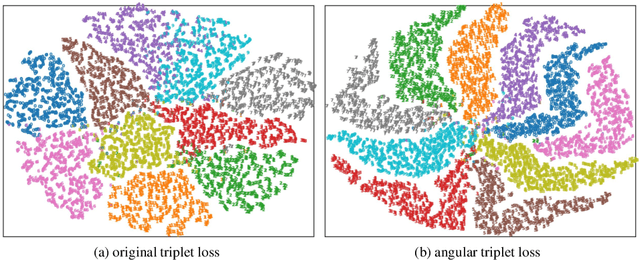

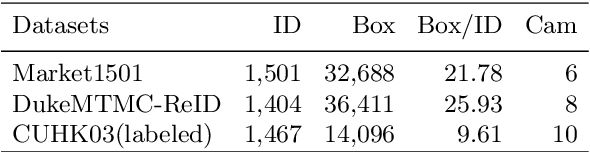

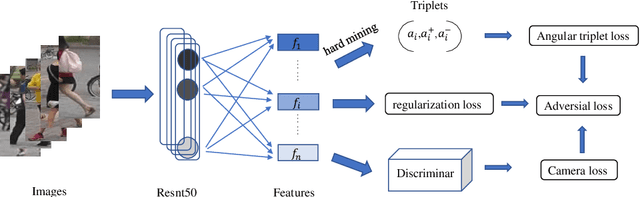

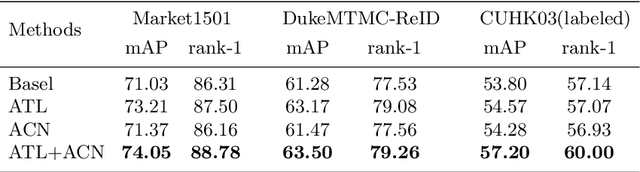

Abstract:Person re-identification (ReID) is an essential cross-camera retrieval task for identifying pedestrians. However, the number of photos per pedestrian usually differs drastically, so data limitation and imbalance greatly hinder prediction accuracy. Additionally, in real-world applications, pedestrian images are captured by different surveillance cameras, so noisy camera-related information, such as lighting, perspective, and resolution, results in inevitable domain gaps for ReID algorithms. These challenges make it difficult for current deep learning methods with triplet loss to cope. To address them, this paper proposes ReadNet, an adversarial camera network (ACN) with an angular triplet loss (ATL). In detail, ATL focuses on learning the angular distance among different identities to mitigate the effect of data imbalance, and also guarantees a linear decision boundary, while ACN treats the camera discriminator as a game opponent of the feature extractor to filter out camera-related information and bridge the multi-camera gaps. ReadNet is designed to be flexible, so that ATL and ACN can be deployed either independently or simultaneously. Experimental results on various benchmark datasets show that ReadNet delivers better prediction performance than current state-of-the-art methods.
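The abstract says ATL learns angular distances among identities but does not spell out the loss. A minimal sketch, assuming a standard triplet hinge applied to angles instead of Euclidean distances, is shown below; the function names and the `margin` value are hypothetical, and the paper's exact formulation may differ.

```python
import math


def angle(u, v):
    """Angle in radians between two non-zero embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    # Clamp to [-1, 1] to guard acos against floating-point rounding.
    cos = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.acos(cos)


def angular_triplet_loss(anchor, positive, negative, margin=0.1):
    """Hinge loss: the anchor-positive angle should be smaller than the
    anchor-negative angle by at least `margin` radians."""
    return max(0.0, angle(anchor, positive) - angle(anchor, negative) + margin)
```

Because only angles enter the loss, rescaling any embedding leaves it unchanged, so feature magnitudes (which may vary with how many images an identity has) do not affect the comparison.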
