Wang Gao

TaxPraBen: A Scalable Benchmark for Structured Evaluation of LLMs in Chinese Real-World Tax Practice

Apr 10, 2026

Abstract: While Large Language Models (LLMs) excel in various general domains, they exhibit notable gaps in the highly specialized, knowledge-intensive, and legally regulated Chinese tax domain. Tax-related benchmarks are gaining attention, but many focus on isolated NLP tasks and neglect real-world practical capabilities. To address this issue, we introduce TaxPraBen, the first dedicated benchmark for Chinese taxation practice. It combines 10 traditional application tasks with 3 pioneering real-world scenarios (tax risk prevention, tax inspection analysis, and tax strategy planning), sourced from 14 datasets totaling 7.3K instances. TaxPraBen features a scalable structured evaluation paradigm built as a process of structured parsing, field alignment extraction, and numerical and textual matching, enabling end-to-end tax practice assessment while being extensible to other domains. We evaluate 19 LLMs based on Bloom's taxonomy. The results indicate significant performance disparities: all closed-source large-parameter LLMs excel, and Chinese LLMs like Qwen2.5 generally exceed multilingual LLMs, while the YaYi2 LLM, fine-tuned with some tax data, shows only limited improvement. TaxPraBen serves as a vital resource for advancing evaluations of LLMs in practical applications.
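The abstract names the three stages of the structured evaluation paradigm but does not describe how they are implemented. The following is a minimal sketch of what such a pipeline might look like, assuming the model answers with a JSON object and the reference answer is a flat dictionary of fields. The function names, the JSON answer format, the 1% numeric tolerance, and the example field names are all illustrative assumptions, not details taken from TaxPraBen.

```python
import json
import re


def parse_structured(output: str) -> dict:
    """Structured parsing (assumed format): extract a JSON object from the response."""
    match = re.search(r"\{.*\}", output, flags=re.DOTALL)
    if match is None:
        return {}
    try:
        parsed = json.loads(match.group(0))
    except json.JSONDecodeError:
        return {}
    return parsed if isinstance(parsed, dict) else {}


def align_fields(parsed: dict, reference: dict) -> dict:
    """Field alignment: keep only the reference fields, matching keys case-insensitively."""
    lowered = {str(k).strip().lower(): v for k, v in parsed.items()}
    return {key: lowered.get(key.strip().lower()) for key in reference}


def field_matches(predicted, expected, rel_tol: float = 1e-2) -> bool:
    """Numerical matching within a tolerance, otherwise normalized text matching."""
    if predicted is None:
        return False
    if isinstance(expected, (int, float)) and not isinstance(expected, bool):
        try:
            value = float(str(predicted).replace(",", ""))
        except ValueError:
            return False
        return abs(value - expected) <= rel_tol * max(abs(expected), 1.0)

    def normalize(text) -> str:
        return " ".join(str(text).lower().split())

    return normalize(predicted) == normalize(expected)


def score(output: str, reference: dict) -> float:
    """Fraction of reference fields that the model output reproduces."""
    if not reference:
        return 0.0
    aligned = align_fields(parse_structured(output), reference)
    hits = sum(field_matches(aligned[key], value) for key, value in reference.items())
    return hits / len(reference)


if __name__ == "__main__":
    # Hypothetical model response and reference answer.
    response = 'Answer: {"Tax_Due": "1,250.00", "risk_level": "High ", "advice": "file late"}'
    gold = {"tax_due": 1250.0, "risk_level": "high", "advice": "amend the return"}
    print(score(response, gold))
```

Scoring per field rather than per answer is one possible design choice here: it gives partial credit when a model gets the computed amount right but the free-text recommendation wrong.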

Regularized Densely-connected Pyramid Network for Salient Instance Segmentation

Aug 28, 2020

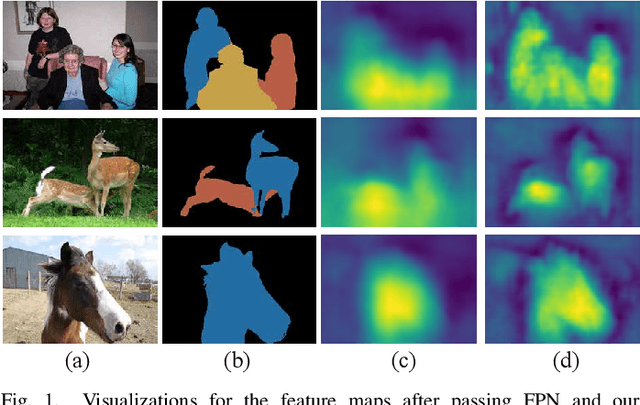

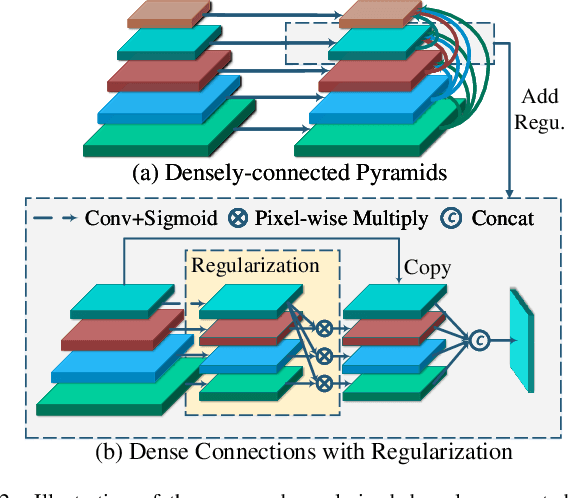

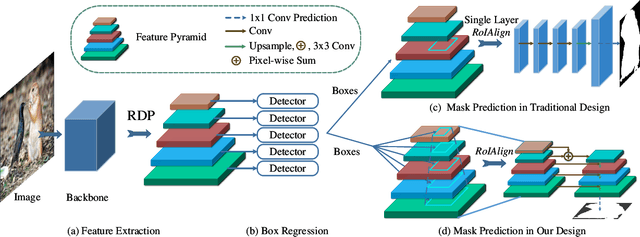

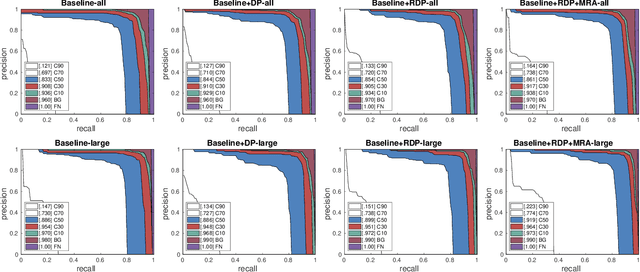

Abstract: Much of the recent effort on salient object detection (SOD) has been devoted to producing accurate saliency maps without regard to their instance labels. To address this, we propose a new pipeline for end-to-end salient instance segmentation (SIS) that predicts a class-agnostic mask for each detected salient instance. To make better use of the rich feature hierarchies in deep networks, we propose regularized dense connections, which attentively promote informative features and suppress non-informative ones from all feature pyramids, to enhance the side predictions. A novel multi-level RoIAlign-based decoder is also introduced to adaptively aggregate multi-level features for better mask predictions. These strategies can be readily encapsulated into the Mask-RCNN pipeline. Extensive experiments on popular benchmarks demonstrate that our design significantly outperforms existing state-of-the-art competitors by 6.3% (58.6% vs 52.3%) in terms of the AP metric. The code is available at https://github.com/yuhuan-wu/RDPNet.
