Tuhin Chakrabarty

It's not Rocket Science : Interpreting Figurative Language in Narratives

Aug 31, 2021

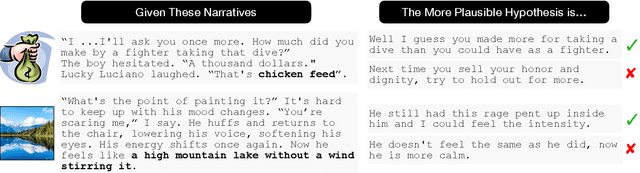

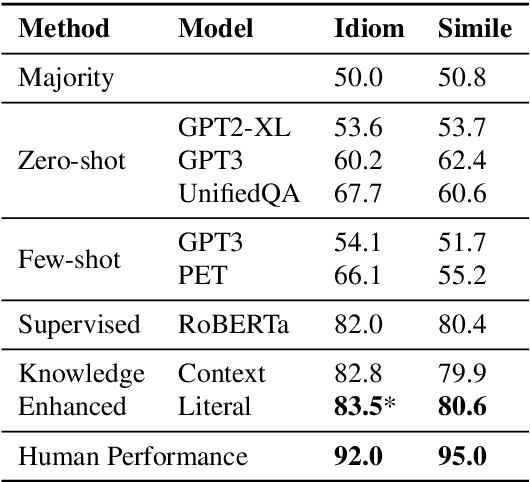

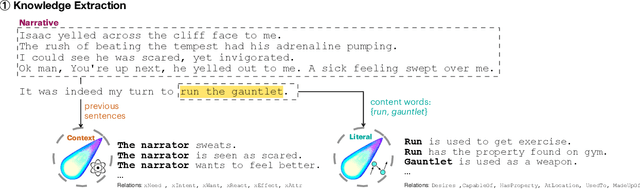

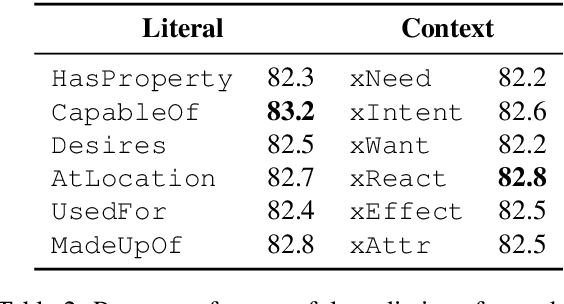

Abstract:Figurative language is ubiquitous in English. Yet, the vast majority of NLP research focuses on literal language. Existing text representations by design rely on compositionality, while figurative language is often non-compositional. In this paper, we study the interpretation of two non-compositional figurative languages (idioms and similes). We collected datasets of fictional narratives containing a figurative expression along with crowd-sourced plausible and implausible continuations relying on the correct interpretation of the expression. We then trained models to choose or generate the plausible continuation. Our experiments show that models based solely on pre-trained language models perform substantially worse than humans on these tasks. We additionally propose knowledge-enhanced models, adopting human strategies for interpreting figurative language: inferring meaning from the context and relying on the constituent word's literal meanings. The knowledge-enhanced models improve the performance on both the discriminative and generative tasks, further bridging the gap from human performance.

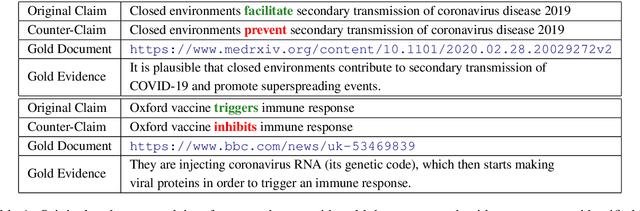

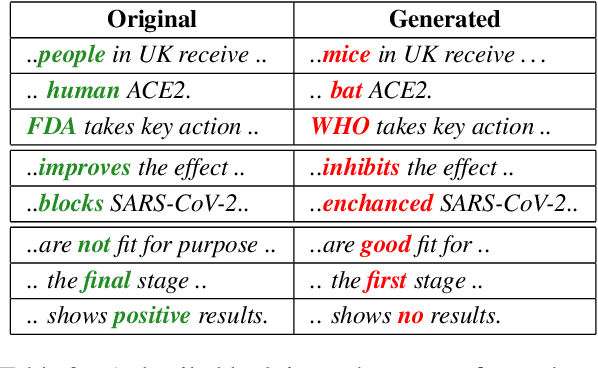

COVID-Fact: Fact Extraction and Verification of Real-World Claims on COVID-19 Pandemic

Jun 07, 2021

Abstract:We introduce a FEVER-like dataset COVID-Fact of $4,086$ claims concerning the COVID-19 pandemic. The dataset contains claims, evidence for the claims, and contradictory claims refuted by the evidence. Unlike previous approaches, we automatically detect true claims and their source articles and then generate counter-claims using automatic methods rather than employing human annotators. Along with our constructed resource, we formally present the task of identifying relevant evidence for the claims and verifying whether the evidence refutes or supports a given claim. In addition to scientific claims, our data contains simplified general claims from media sources, making it better suited for detecting general misinformation regarding COVID-19. Our experiments indicate that COVID-Fact will provide a challenging testbed for the development of new systems and our approach will reduce the costs of building domain-specific datasets for detecting misinformation.

Figurative Language in Recognizing Textual Entailment

Jun 03, 2021

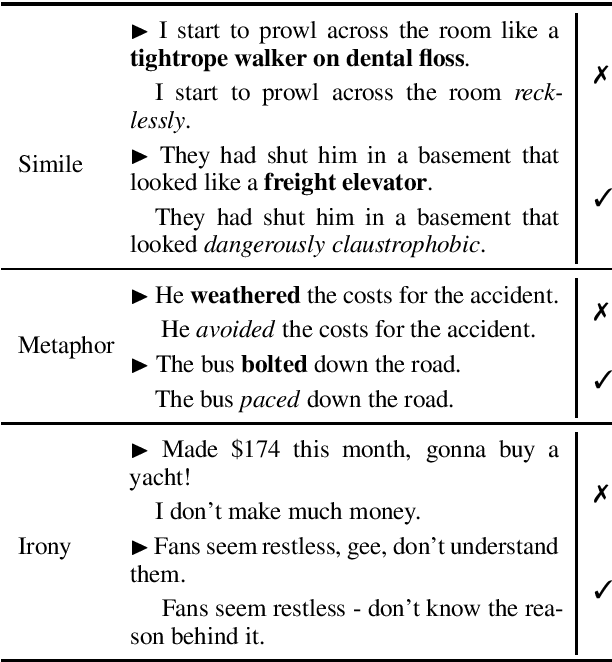

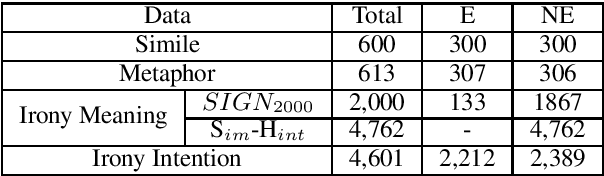

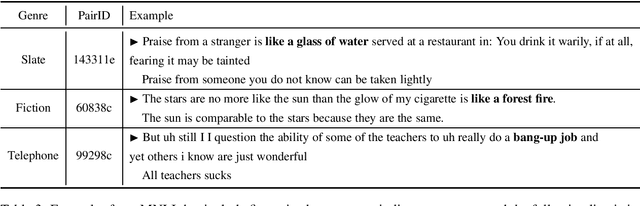

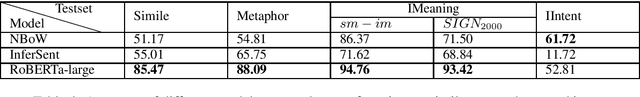

Abstract:We introduce a collection of recognizing textual entailment (RTE) datasets focused on figurative language. We leverage five existing datasets annotated for a variety of figurative language -- simile, metaphor, and irony -- and frame them into over 12,500 RTE examples.We evaluate how well state-of-the-art models trained on popular RTE datasets capture different aspects of figurative language. Our results and analyses indicate that these models might not sufficiently capture figurative language, struggling to perform pragmatic inference and reasoning about world knowledge. Ultimately, our datasets provide a challenging testbed for evaluating RTE models.

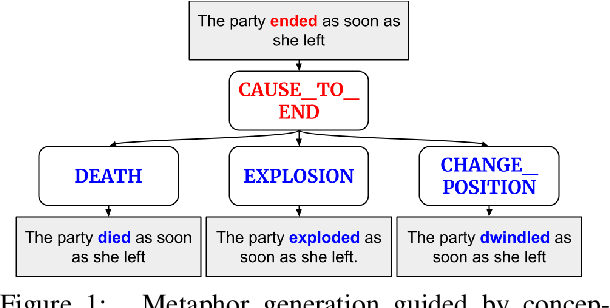

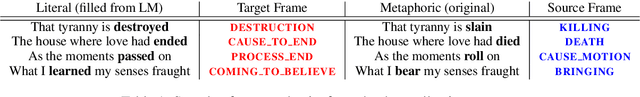

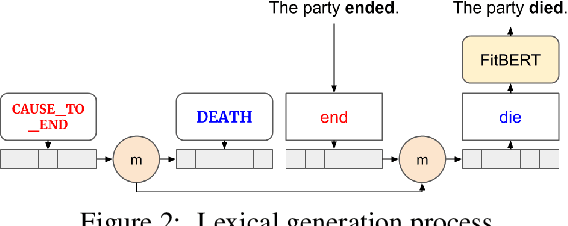

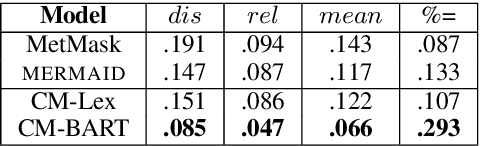

Metaphor Generation with Conceptual Mappings

Jun 02, 2021

Abstract:Generating metaphors is a difficult task as it requires understanding nuanced relationships between abstract concepts. In this paper, we aim to generate a metaphoric sentence given a literal expression by replacing relevant verbs. Guided by conceptual metaphor theory, we propose to control the generation process by encoding conceptual mappings between cognitive domains to generate meaningful metaphoric expressions. To achieve this, we develop two methods: 1) using FrameNet-based embeddings to learn mappings between domains and applying them at the lexical level (CM-Lex), and 2) deriving source/target pairs to train a controlled seq-to-seq generation model (CM-BART). We assess our methods through automatic and human evaluation for basic metaphoricity and conceptual metaphor presence. We show that the unsupervised CM-Lex model is competitive with recent deep learning metaphor generation systems, and CM-BART outperforms all other models both in automatic and human evaluations.

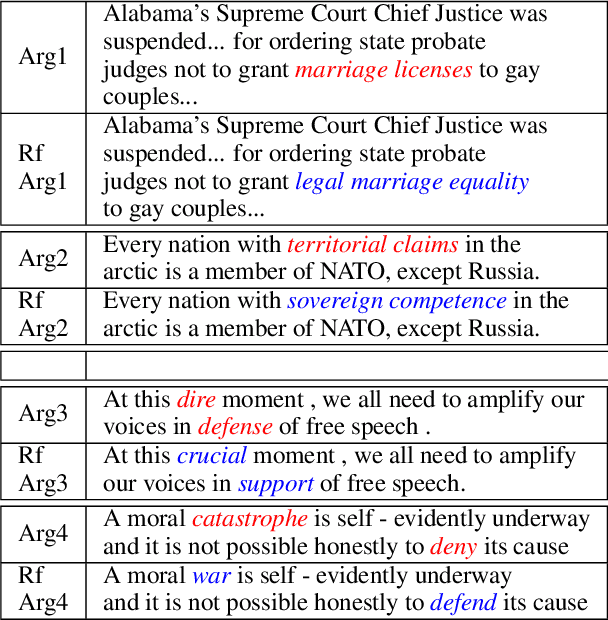

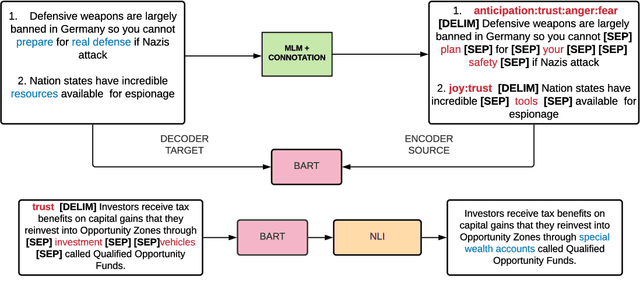

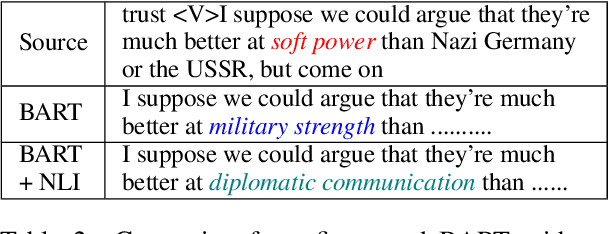

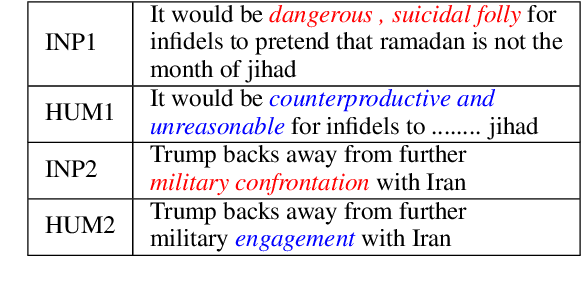

ENTRUST: Argument Reframing with Language Models and Entailment

Apr 11, 2021

Abstract:Framing involves the positive or negative presentation of an argument or issue depending on the audience and goal of the speaker (Entman 1983). Differences in lexical framing, the focus of our work, can have large effects on peoples' opinions and beliefs. To make progress towards reframing arguments for positive effects, we create a dataset and method for this task. We use a lexical resource for "connotations" to create a parallel corpus and propose a method for argument reframing that combines controllable text generation (positive connotation) with a post-decoding entailment component (same denotation). Our results show that our method is effective compared to strong baselines along the dimensions of fluency, meaning, and trustworthiness/reduction of fear.

MERMAID: Metaphor Generation with Symbolism and Discriminative Decoding

Apr 11, 2021

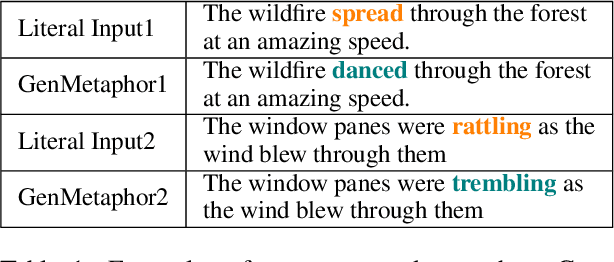

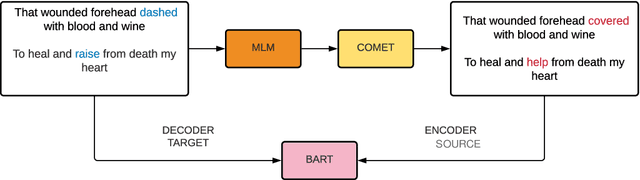

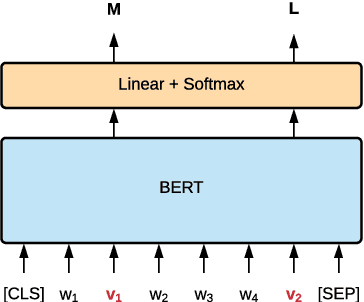

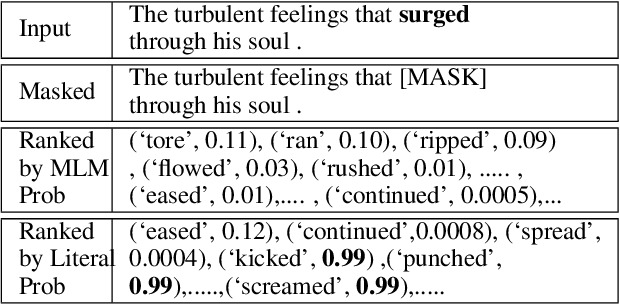

Abstract:Generating metaphors is a challenging task as it requires a proper understanding of abstract concepts, making connections between unrelated concepts, and deviating from the literal meaning. In this paper, we aim to generate a metaphoric sentence given a literal expression by replacing relevant verbs. Based on a theoretically-grounded connection between metaphors and symbols, we propose a method to automatically construct a parallel corpus by transforming a large number of metaphorical sentences from the Gutenberg Poetry corpus (Jacobs, 2018) to their literal counterpart using recent advances in masked language modeling coupled with commonsense inference. For the generation task, we incorporate a metaphor discriminator to guide the decoding of a sequence to sequence model fine-tuned on our parallel data to generate high-quality metaphors. Human evaluation on an independent test set of literal statements shows that our best model generates metaphors better than three well-crafted baselines 66% of the time on average. A task-based evaluation shows that human-written poems enhanced with metaphors proposed by our model are preferred 68% of the time compared to poems without metaphors.

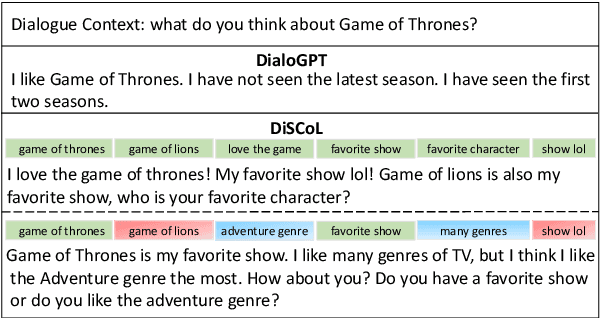

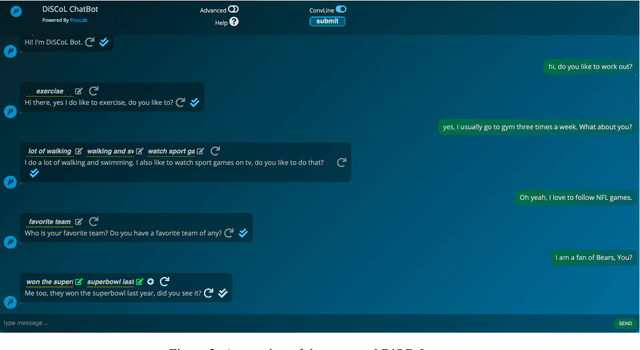

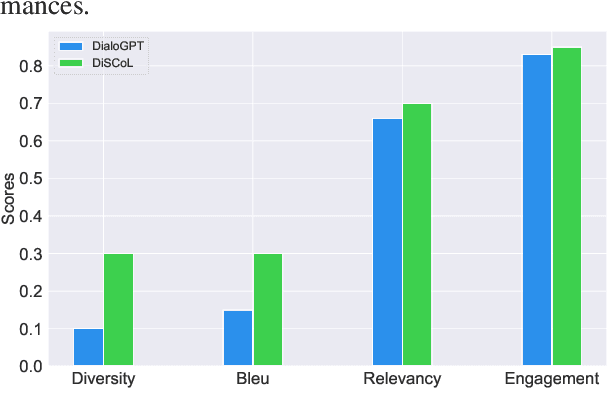

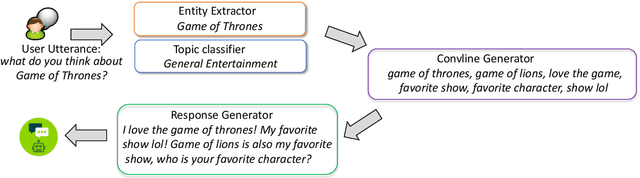

DiSCoL: Toward Engaging Dialogue Systems through Conversational Line Guided Response Generation

Feb 03, 2021

Abstract:Having engaging and informative conversations with users is the utmost goal for open-domain conversational systems. Recent advances in transformer-based language models and their applications to dialogue systems have succeeded to generate fluent and human-like responses. However, they still lack control over the generation process towards producing contentful responses and achieving engaging conversations. To achieve this goal, we present \textbf{DiSCoL} (\textbf{Di}alogue \textbf{S}ystems through \textbf{Co}versational \textbf{L}ine guided response generation). DiSCoL is an open-domain dialogue system that leverages conversational lines (briefly \textbf{convlines}) as controllable and informative content-planning elements to guide the generation model produce engaging and informative responses. Two primary modules in DiSCoL's pipeline are conditional generators trained for 1) predicting relevant and informative convlines for dialogue contexts and 2) generating high-quality responses conditioned on the predicted convlines. Users can also change the returned convlines to \textit{control} the direction of the conversations towards topics that are more interesting for them. Through automatic and human evaluations, we demonstrate the efficiency of the convlines in producing engaging conversations.

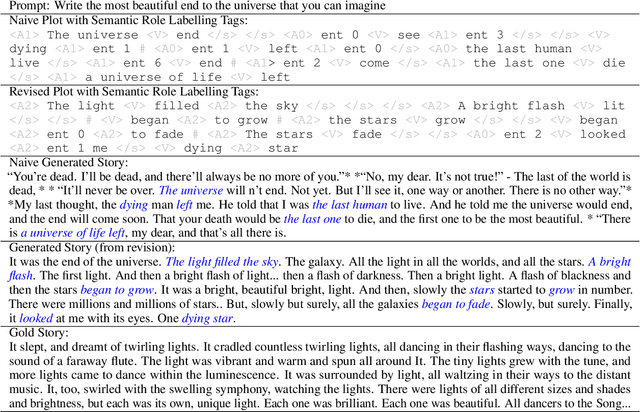

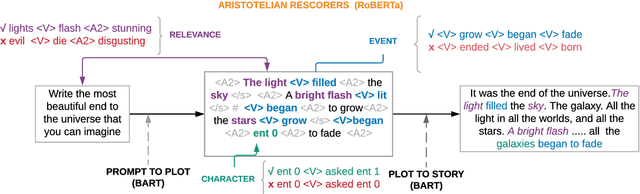

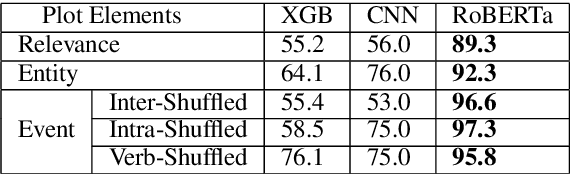

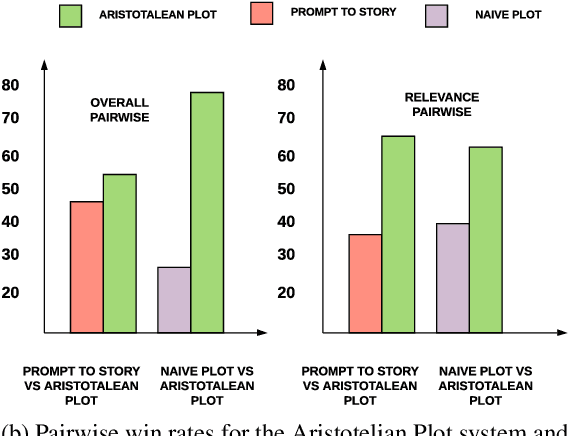

Content Planning for Neural Story Generation with Aristotelian Rescoring

Oct 09, 2020

Abstract:Long-form narrative text generated from large language models manages a fluent impersonation of human writing, but only at the local sentence level, and lacks structure or global cohesion. We posit that many of the problems of story generation can be addressed via high-quality content planning, and present a system that focuses on how to learn good plot structures to guide story generation. We utilize a plot-generation language model along with an ensemble of rescoring models that each implement an aspect of good story-writing as detailed in Aristotle's Poetics. We find that stories written with our more principled plot-structure are both more relevant to a given prompt and higher quality than baselines that do not content plan, or that plan in an unprincipled way.

Generating similes effortlessly like a Pro: A Style Transfer Approach for Simile Generation

Oct 03, 2020

Abstract:Literary tropes, from poetry to stories, are at the crux of human imagination and communication. Figurative language such as a simile go beyond plain expressions to give readers new insights and inspirations. In this paper, we tackle the problem of simile generation. Generating a simile requires proper understanding for effective mapping of properties between two concepts. To this end, we first propose a method to automatically construct a parallel corpus by transforming a large number of similes collected from Reddit to their literal counterpart using structured common sense knowledge. We then propose to fine-tune a pretrained sequence to sequence model, BART~\cite{lewis2019bart}, on the literal-simile pairs to gain generalizability, so that we can generate novel similes given a literal sentence. Experiments show that our approach generates $88\%$ novel similes that do not share properties with the training data. Human evaluation on an independent set of literal statements shows that our model generates similes better than two literary experts \textit{37\%}\footnote{We average 32.6\% and 41.3\% for 2 humans.} of the times, and three baseline systems including a recent metaphor generation model \textit{71\%}\footnote{We average 82\% ,63\% and 68\% for three baselines.} of the times when compared pairwise.\footnote{The simile in the title is generated by our best model. Input: Generating similes effortlessly, output: Generating similes \textit{like a Pro}.} We also show how replacing literal sentences with similes from our best model in machine generated stories improves evocativeness and leads to better acceptance by human judges.

$R^3$: Reverse, Retrieve, and Rank for Sarcasm Generation with Commonsense Knowledge

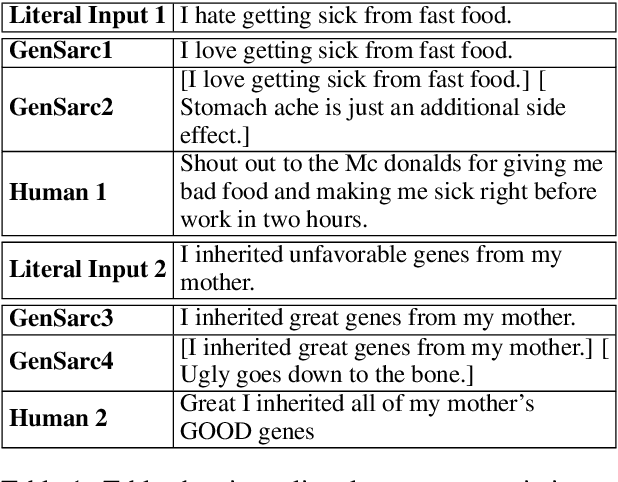

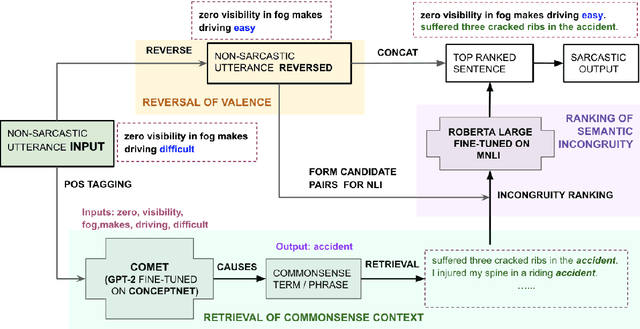

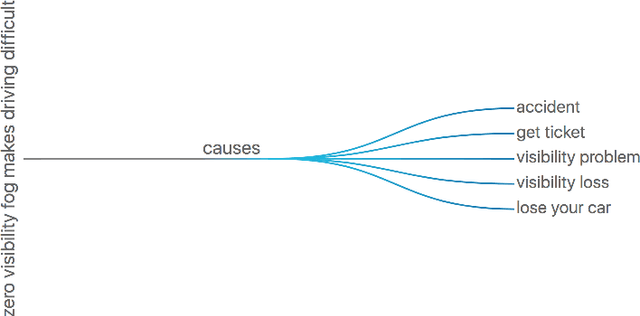

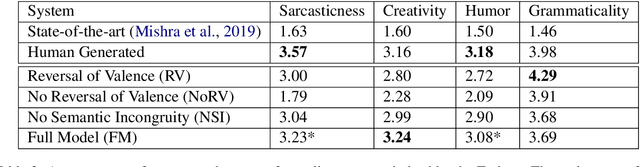

May 28, 2020

Abstract:We propose an unsupervised approach for sarcasm generation based on a non-sarcastic input sentence. Our method employs a retrieve-and-edit framework to instantiate two major characteristics of sarcasm: reversal of valence and semantic incongruity with the context which could include shared commonsense or world knowledge between the speaker and the listener. While prior works on sarcasm generation predominantly focus on context incongruity, we show that combining valence reversal and semantic incongruity based on the commonsense knowledge generates sarcasm of higher quality. Human evaluation shows that our system generates sarcasm better than human annotators 34% of the time, and better than a reinforced hybrid baseline 90% of the time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge