Toby Walsh

NICTA and UNSW

A Pebble in the AI Race

Mar 30, 2020

Abstract:Bhutan is sometimes described as "a pebble between two boulders", a small country caught between the two most populous nations on earth: India and China. This pebble is, however, about to be caught up in a vortex: the transformation of our economic, political and social orders by new technologies like Artificial Intelligence. What can a small nation like Bhutan hope to do in the face of such change? What should the nation do, not just to weather this storm, but to become a better place in which to live?

Partial Queries for Constraint Acquisition

Mar 14, 2020

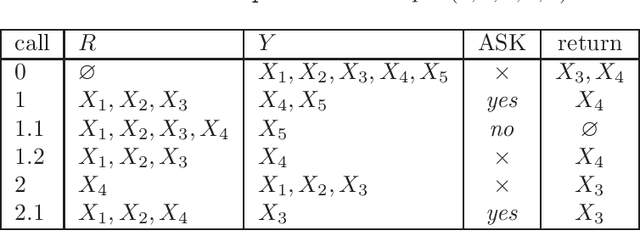

Abstract:Learning constraint networks is known to require a number of membership queries exponential in the number of variables. In this paper, we learn constraint networks by asking the user partial queries. That is, we ask the user to classify assignments to subsets of the variables as positive or negative. We provide an algorithm, called QUACQ, that, given a negative example, focuses onto a constraint of the target network in a number of queries logarithmic in the size of the example. The whole constraint network can then be learned with a polynomial number of partial queries. We give information theoretic lower bounds for learning some simple classes of constraint networks and show that our generic algorithm is optimal in some cases.
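The focusing step described in this abstract can be illustrated with a dichotomic search over the variables of a negative example. The following is only a minimal sketch in the spirit of that idea, not the published QUACQ algorithm: it omits the learner's bias of candidate constraints, and the names `find_scope`, `ask`, and the toy target constraint `b != d` are assumptions made for illustration.

```python
from typing import Callable, Dict, List

Assignment = Dict[str, int]


def find_scope(
    example: Assignment,
    ask: Callable[[Assignment], bool],
    background: List[str],
    candidates: List[str],
    do_ask: bool = False,
) -> List[str]:
    """Return the candidate variables needed to explain why `example` is negative.

    Precondition: `example` restricted to background + candidates is negative.
    `ask` is a hypothetical partial-query oracle: it returns True when the
    partial assignment is classified as positive by the user.
    """
    if do_ask and background:
        partial = {v: example[v] for v in background}
        if not ask(partial):
            # The background alone is already negative: no candidate is needed.
            return []
    if len(candidates) == 1:
        return list(candidates)
    mid = len(candidates) // 2
    first, second = candidates[:mid], candidates[mid:]
    # Which variables of the second half are needed, given the first half?
    s1 = find_scope(example, ask, background + first, second, True)
    # Which variables of the first half are needed, given what was found?
    s2 = find_scope(example, ask, background + s1, first, bool(s1))
    return s1 + s2


def ask(partial: Assignment) -> bool:
    # Toy user (assumption): the target network has the single constraint b != d.
    if "b" in partial and "d" in partial:
        return partial["b"] != partial["d"]
    return True


example = {"a": 1, "b": 2, "c": 3, "d": 2}  # negative: b == d
scope = find_scope(example, ask, [], list(example))
print(sorted(scope))  # ['b', 'd']
```

Each recursive call halves the candidate set, which is what keeps the number of partial queries small relative to the size of the example in this simplified setting.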

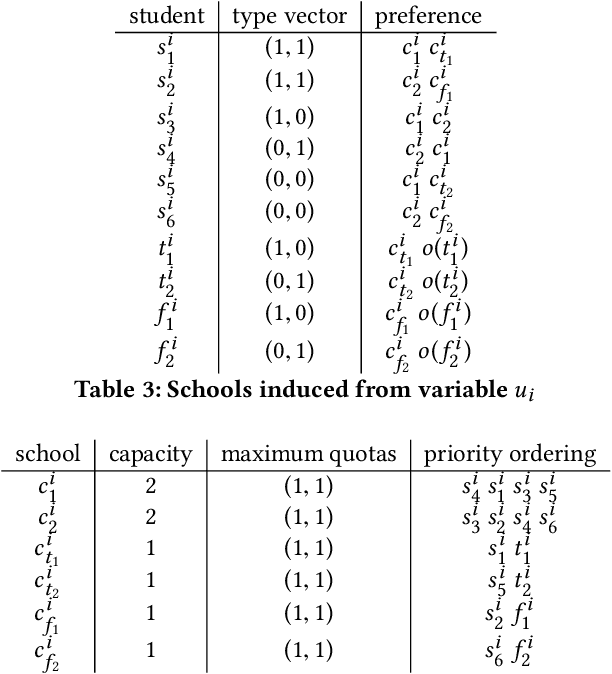

From Matching with Diversity Constraints to Matching with Regional Quotas

Feb 17, 2020

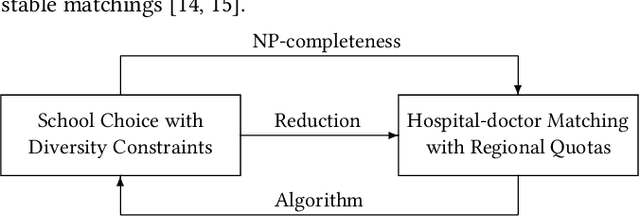

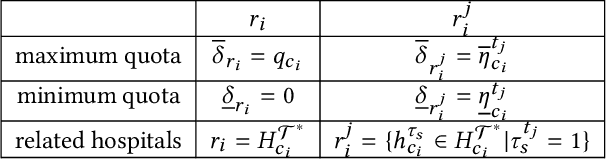

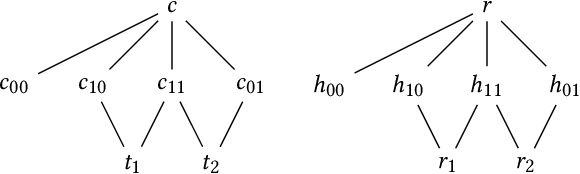

Abstract:In the past few years, several new matching models have been proposed and studied that take into account complex distributional constraints. Relevant lines of work include (1) school choice with diversity constraints where students have (possibly overlapping) types and (2) hospital-doctor matching where various regional quotas are imposed. In this paper, we present a polynomial-time reduction to transform an instance of (1) to an instance of (2) and we show how the feasibility and stability of corresponding matchings are preserved under the reduction. Our reduction provides a formal connection between two important strands of work on matching with distributional constraints. We then apply the reduction in two ways. Firstly, we show that it is NP-complete to check whether a feasible and stable outcome for (1) exists. Due to our reduction, these NP-completeness results carry over to setting (2). In view of this, we help unify some of the results that have been presented in the literature. Secondly, if we have positive results for (2), then we have corresponding results for (1). One key conclusion of our results is that further developments on axiomatic and algorithmic aspects of hospital-doctor matching with regional quotas will result in corresponding results for school choice with diversity constraints.

Greedy Algorithms for Fair Division of Mixed Manna

Dec 17, 2019

Abstract:We consider a multi-agent model for fair division of mixed manna (i.e. items for which agents can have positive, zero or negative utilities), in which agents have additive utilities for bundles of items. For this model, we give several general impossibility results and special possibility results for three common fairness concepts (i.e. EF1, EFX, EFX3) and one popular efficiency concept (i.e. PO). We also study how these interact with common welfare objectives such as the Nash, disutility Nash and egalitarian welfares. For example, we show that maximizing the Nash welfare with mixed manna (or minimizing the disutility Nash welfare) does not ensure an EF1 allocation whereas with goods and the Nash welfare it does. We also prove that an EFX3 allocation may not exist even with identical utilities. By comparison, with tertiary utilities, EFX and PO allocations, or EFX3 and PO allocations always exist. Also, with identical utilities, EFX and PO allocations always exist. For these cases, we give polynomial-time algorithms, returning such allocations and approximating further the Nash, disutility Nash and egalitarian welfares in special cases.
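To make the EF1 notion concrete, here is a small checker for additive utilities over mixed items. It assumes one common formulation of EF1 for mixed manna (envy from agent i towards agent j must vanish after removing a single item from either bundle); the abstract does not spell out its definition, so this formulation, the function names, and the toy utilities are assumptions for illustration.

```python
from typing import Dict, Hashable, Set

Utilities = Dict[Hashable, Dict[str, int]]   # agent -> item -> utility
Allocation = Dict[Hashable, Set[str]]        # agent -> bundle of items


def bundle_utility(utils: Utilities, agent: Hashable, bundle: Set[str]) -> int:
    """Additive utility of `bundle` from the point of view of `agent`."""
    return sum(utils[agent][item] for item in bundle)


def is_ef1(utils: Utilities, allocation: Allocation) -> bool:
    """Check envy-freeness up to one item for goods, bads, or a mixture."""
    for i in allocation:
        for j in allocation:
            if i == j:
                continue
            own = bundle_utility(utils, i, allocation[i])
            other = bundle_utility(utils, i, allocation[j])
            if own >= other:
                continue  # i does not envy j at all
            # Try removing one item from either bundle (assumed definition).
            envy_removed = any(
                bundle_utility(utils, i, allocation[i] - {o})
                >= bundle_utility(utils, i, allocation[j] - {o})
                for o in allocation[i] | allocation[j]
            )
            if not envy_removed:
                return False
    return True


# Toy instance with identical utilities: one good, one bad, one small good.
utils = {
    0: {"a": 3, "b": -2, "c": 1},
    1: {"a": 3, "b": -2, "c": 1},
}
print(is_ef1(utils, {0: {"b"}, 1: {"a", "c"}}))   # False
print(is_ef1(utils, {0: {"a", "b"}, 1: {"c"}}))   # True
```

In the first allocation, agent 0 holds only the bad item and still envies agent 1 after any single removal; in the second, both agents value both bundles at 1, so there is no envy.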

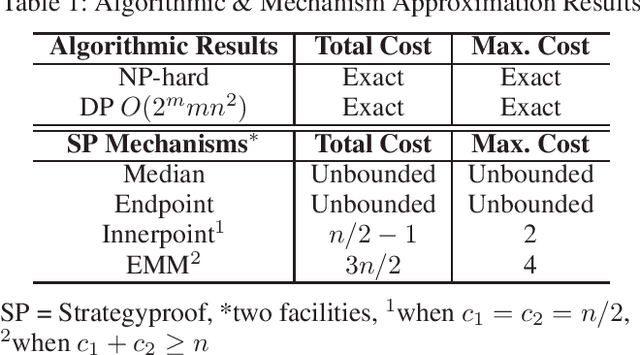

Facility Location Problem with Capacity Constraints: Algorithmic and Mechanism Design Perspectives

Nov 22, 2019

Abstract:We consider the facility location problem in the one-dimensional setting where each facility can serve a limited number of agents from the algorithmic and mechanism design perspectives. From the algorithmic perspective, we prove that the corresponding optimization problem, where the goal is to locate facilities to minimize either the total cost to all agents or the maximum cost of any agent, is NP-hard. However, we show that the problem is fixed-parameter tractable, and the optimal solution can be computed in polynomial time whenever the number of facilities is bounded, or when all facilities have identical capacities. We then consider the problem from a mechanism design perspective where the agents are strategic and need not reveal their true locations. We show that several natural mechanisms studied in the uncapacitated setting either lose strategyproofness or a bound on the solution quality for the total or maximum cost objective. We then propose new mechanisms that are strategyproof and achieve approximation guarantees that almost match the lower bounds.

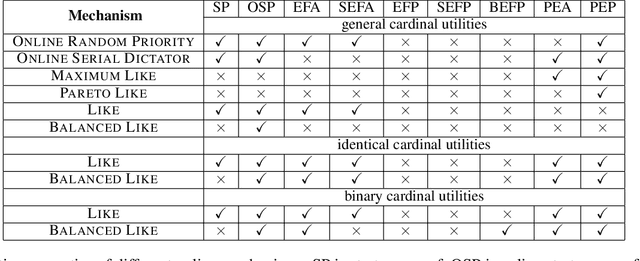

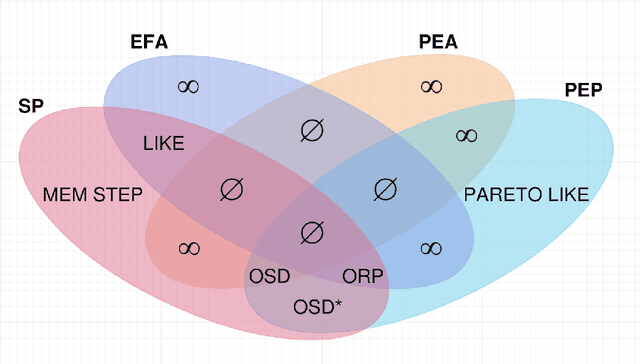

Online Fair Division: A Survey

Nov 21, 2019

Abstract:We survey a burgeoning and promising new research area that considers the online nature of many practical fair division problems. We identify a wide variety of such online fair division problems, and discuss new mechanisms and normative properties that apply to this online setting. The online nature of such fair division problems provides both opportunities and challenges, such as the possibility to develop new online mechanisms as well as the difficulty of dealing with an uncertain future.

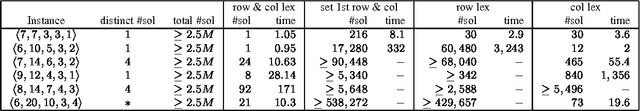

A Commentary on "Breaking Row and Column Symmetries in Matrix Models"

Oct 03, 2019

Abstract:The CP 2002 paper entitled "Breaking Row and Column Symmetries in Matrix Models" by Flener et al. (https://link.springer.com/chapter/10.1007%2F3-540-46135-3_31) describes some of the first work for identifying and analyzing row and column symmetry in matrix models and for efficiently and effectively dealing with such symmetry using static symmetry-breaking ordering constraints. This commentary provides a retrospective on that work and highlights some of the subsequent work on the topic.

CSPLib: Twenty Years On

Sep 30, 2019

Abstract:In 1999, we introduced CSPLib, a benchmark library for the constraints community. Our CP-1999 poster paper about CSPLib discussed the advantages and disadvantages of building such a library. Unlike some other domains such as theorem proving, or machine learning, representation was then and remains today a major issue in the success or failure to solve problems. Benchmarks in CSPLib are therefore specified in natural language as this allows users to find good representations for themselves. The community responded positively and CSPLib has become a valuable resource but, as we discuss here, we cannot rest.

SAT vs CSP: a commentary

Sep 27, 2019

Abstract:In 2000, I published a relatively comprehensive study of mappings between propositional satisfiability (SAT) and constraint satisfaction problems (CSPs) [Wal00]. I analysed four different mappings of SAT problems into CSPs, and two of CSPs into SAT problems. For each mapping, I compared the impact of achieving arc-consistency on the CSP with unit propagation on the corresponding SAT problems, and lifted these results to CSP algorithms that maintain (some level of) arc-consistency during search like FC and MAC, and to the Davis-Putnam procedure (which performs unit propagation at each search node). These results helped provide some insight into the relationship between propositional satisfiability and constraint satisfaction that set the scene for an important and valuable body of work that followed. I discuss here what prompted the paper, and what followed.
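As an illustration of what a mapping from a CSP into SAT looks like, the sketch below implements the so-called direct encoding, a standard textbook mapping. The abstract does not name the mappings it refers to, so treating the direct encoding as an example is an assumption, as are the function name `direct_encoding` and the nogood-based input format.

```python
from itertools import combinations
from typing import Dict, Hashable, List, Tuple

Literal = Tuple[str, Hashable]           # (CSP variable, value)
Nogood = Tuple[Literal, Literal]         # a forbidden pair of assignments


def direct_encoding(
    domains: Dict[str, List[Hashable]],
    nogoods: List[Nogood],
) -> Tuple[Dict[Literal, int], List[List[int]]]:
    """Encode a binary CSP as CNF clauses over DIMACS-style integer literals.

    One Boolean variable is created per (CSP variable, value) pair.
    """
    var_id: Dict[Literal, int] = {}
    for v, dom in domains.items():
        for d in dom:
            var_id[(v, d)] = len(var_id) + 1

    clauses: List[List[int]] = []
    for v, dom in domains.items():
        # At-least-one: each CSP variable takes some value.
        clauses.append([var_id[(v, d)] for d in dom])
        # At-most-one: no CSP variable takes two values.
        for d1, d2 in combinations(dom, 2):
            clauses.append([-var_id[(v, d1)], -var_id[(v, d2)]])
    # Conflict clauses: one binary clause per forbidden pair of assignments.
    for lit1, lit2 in nogoods:
        clauses.append([-var_id[lit1], -var_id[lit2]])
    return var_id, clauses


# Toy CSP (assumption): x, y in {1, 2} with the constraint x != y.
domains = {"x": [1, 2], "y": [1, 2]}
nogoods = [(("x", 1), ("y", 1)), (("x", 2), ("y", 2))]
var_id, clauses = direct_encoding(domains, nogoods)
for clause in clauses:
    print(clause)
```

With this encoding, assigning a value to a CSP variable corresponds to setting one Boolean variable true, and unit propagation on the conflict clauses then rules out the incompatible values of neighbouring variables.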

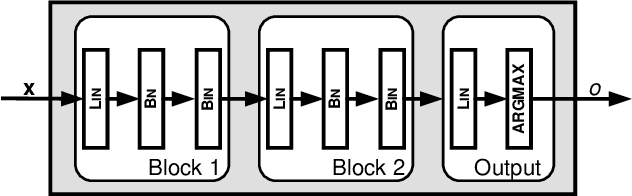

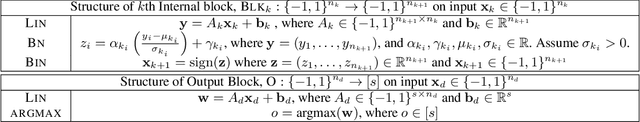

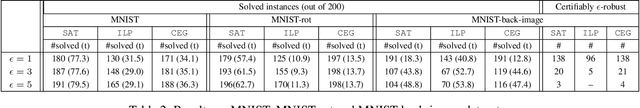

Verifying Properties of Binarized Deep Neural Networks

May 31, 2018

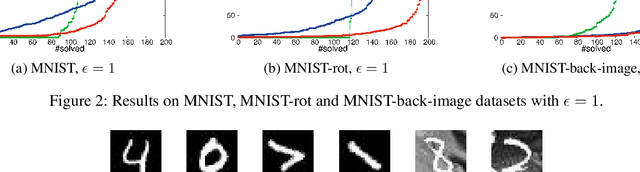

Abstract:Understanding properties of deep neural networks is an important challenge in deep learning. In this paper, we take a step in this direction by proposing a rigorous way of verifying properties of a popular class of neural networks, Binarized Neural Networks, using the well-developed means of Boolean satisfiability. Our main contribution is a construction that creates a representation of a binarized neural network as a Boolean formula. Our encoding is the first exact Boolean representation of a deep neural network. Using this encoding, we leverage the power of modern SAT solvers along with a proposed counterexample-guided search procedure to verify various properties of these networks. A particular focus will be on the critical property of robustness to adversarial perturbations. For this property, our experimental results demonstrate that our approach scales to medium-size deep neural networks used in image classification tasks. To the best of our knowledge, this is the first work on verifying properties of deep neural networks using an exact Boolean encoding of the network.
