Tasbolat Taunyazov

Survey on Vision-Language-Action Models

Feb 07, 2025

Abstract:This paper presents an AI-generated review of Vision-Language-Action (VLA) models, summarizing key methodologies, findings, and future directions. The content is produced using large language models (LLMs) and is intended only for demonstration purposes. This work does not represent original research, but highlights how AI can help automate literature reviews. As AI-generated content becomes more prevalent, ensuring accuracy, reliability, and proper synthesis remains a challenge. Future research will focus on developing a structured framework for AI-assisted literature reviews, exploring techniques to enhance citation accuracy, source credibility, and contextual understanding. By examining the potential and limitations of LLMs in academic writing, this study aims to contribute to the broader discussion of integrating AI into research workflows. This work serves as a preliminary step toward establishing systematic approaches for leveraging AI in literature review generation, making academic knowledge synthesis more efficient and scalable.

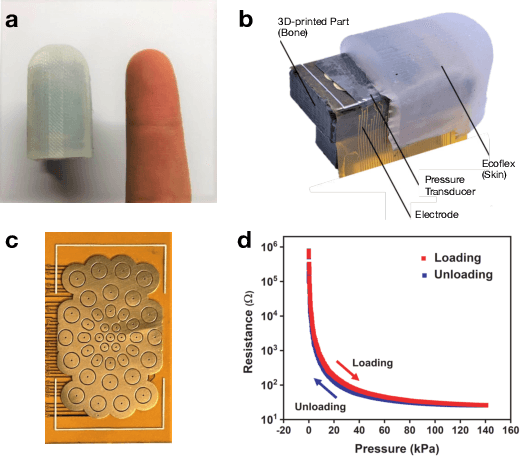

NUSense: Robust Soft Optical Tactile Sensor

Oct 30, 2024

Abstract:While most tactile sensors rely on measuring pressure, insights from continuum mechanics suggest that measuring shear strain provides critical information for tactile sensing. In this work, we introduce an optical tactile sensing principle based on shear strain detection. A silicone rubber layer, dyed with color inks, is used to quantify the shear magnitude of the sensing layer. This principle was validated using the NUSense camera-based tactile sensor. The wide-angle camera captures the elongation of the soft pad under mechanical load, a phenomenon attributed to the Poisson effect. The physical and optical properties of the inked pad are essential and should ideally remain stable over time. We tested the robustness of the sensor by subjecting the outermost layer to multiple load cycles using a robot arm. Additionally, we discussed potential applications of this sensor in force sensing and contact localization.

GRaCE: Optimizing Grasps to Satisfy Ranked Criteria in Complex Scenarios

Oct 02, 2023

Abstract:This paper addresses the multi-faceted problem of robot grasping, where multiple criteria may conflict and differ in importance. We introduce Grasp Ranking and Criteria Evaluation (GRaCE), a novel approach that employs hierarchical rule-based logic and a rank-preserving utility function to optimize grasps based on various criteria such as stability, kinematic constraints, and goal-oriented functionalities. Additionally, we propose GRaCE-OPT, a hybrid optimization strategy that combines gradient-based and gradient-free methods to effectively navigate the complex, non-convex utility function. Experimental results in both simulated and real-world scenarios show that GRaCE requires fewer samples to achieve comparable or superior performance relative to existing methods. The modular architecture of GRaCE allows for easy customization and adaptation to specific application needs.
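The idea of a rank-preserving utility in the GRaCE abstract can be pictured with a small sketch. It is not the utility function from the paper: it assumes binary pass/fail criteria and power-of-two weights, and the function name `rank_preserving_utility` is hypothetical. It only illustrates the property that satisfying a higher-ranked group of criteria outweighs satisfying every lower-ranked group combined.

```python
def rank_preserving_utility(satisfied_by_rank):
    """Score a grasp from per-rank criteria outcomes (illustrative assumption).

    satisfied_by_rank[0] holds the booleans for the highest-priority rank,
    satisfied_by_rank[1] the next rank, and so on.
    """
    n_ranks = len(satisfied_by_rank)
    utility = 0
    for i, rank_outcomes in enumerate(satisfied_by_rank):
        # A rank contributes only if all of its criteria hold.
        if all(rank_outcomes):
            # Weight 2^(n-1-i) exceeds the sum of all lower-rank weights.
            utility += 2 ** (n_ranks - 1 - i)
    return utility


# Grasp A meets only the top-ranked criterion (e.g. stability).
grasp_a = [[True], [False, True], [False]]
# Grasp B misses the top rank but meets everything below it.
grasp_b = [[False], [True, True], [True]]

print(rank_preserving_utility(grasp_a))  # 4
print(rank_preserving_utility(grasp_b))  # 3
```

With this weighting, grasp A still ranks above grasp B, which is the ordering behavior a rank-preserving utility is meant to guarantee.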

Refining 6-DoF Grasps with Context-Specific Classifiers

Aug 14, 2023

Abstract:In this work, we present GraspFlow, a refinement approach for generating context-specific grasps. We formulate the problem of grasp synthesis as a sampling problem: we seek to sample from a context-conditioned probability distribution of successful grasps. However, this target distribution is unknown. As a solution, we devise a discriminator gradient-flow method to evolve grasps obtained from a simpler distribution in a manner that mimics sampling from the desired target distribution. Unlike existing approaches, GraspFlow is modular, allowing grasps that satisfy multiple criteria to be obtained simply by incorporating the relevant discriminators. It is also simple to implement, requiring minimal code given existing auto-differentiation libraries and suitable discriminators. Experiments show that GraspFlow generates stable and executable grasps on a real-world Panda robot for a diverse range of objects. In particular, in 60 trials on 20 different household objects, the first attempted grasp was successful 94% of the time, and 100% grasp success was achieved by the second grasp. Moreover, incorporating a functional discriminator for robot-human handover improved the functional aspect of the grasp by up to 33%.
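The refinement idea in the GraspFlow abstract, evolving samples from a simple distribution along discriminator gradients and combining criteria by adding discriminators, can be sketched roughly as follows. This is a simplified gradient-ascent stand-in with optional noise, not the paper's exact flow: the 2-D "grasp" vectors, the `BallDiscriminator` class, and the `refine` function are all hypothetical, and the gradients are written by hand rather than obtained from an auto-differentiation library.

```python
import numpy as np


def sigmoid(z):
    # tanh form avoids overflow for large |z|.
    return 0.5 * (1.0 + np.tanh(0.5 * z))


def log_sigmoid(z):
    # Numerically stable log(sigmoid(z)).
    return -np.logaddexp(0.0, -z)


class BallDiscriminator:
    """Toy classifier (assumption): the logit is high near `center`."""

    def __init__(self, center, radius):
        self.center = np.asarray(center, dtype=float)
        self.radius = float(radius)

    def logit(self, x):
        return self.radius ** 2 - np.sum((x - self.center) ** 2, axis=-1)

    def grad_log_prob(self, x):
        # d/dx log sigmoid(logit) = (1 - sigmoid(logit)) * d(logit)/dx
        w = 1.0 - sigmoid(self.logit(x))
        return w[..., None] * (-2.0 * (x - self.center))


def refine(x0, discriminators, steps=200, step_size=0.05,
           noise_scale=0.0, seed=0):
    """Move samples uphill on the summed discriminator log-probabilities."""
    rng = np.random.default_rng(seed)
    x = np.array(x0, dtype=float)
    for _ in range(steps):
        # Modularity: each extra criterion just adds one more gradient term.
        grad = sum(d.grad_log_prob(x) for d in discriminators)
        x = x + step_size * grad + noise_scale * rng.standard_normal(x.shape)
    return x


# Two hypothetical criteria, e.g. "stable" and "reachable".
criteria = [BallDiscriminator([1.0, 0.0], 1.0),
            BallDiscriminator([0.0, 1.0], 1.0)]
initial = np.random.default_rng(1).normal(scale=2.0, size=(16, 2))
refined = refine(initial, criteria)


def score(x):
    return sum(log_sigmoid(d.logit(x)) for d in criteria)


print(score(initial).mean(), score(refined).mean())
```

Adding a third criterion, such as a handover-specific classifier, would only require appending another discriminator object to `criteria`.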

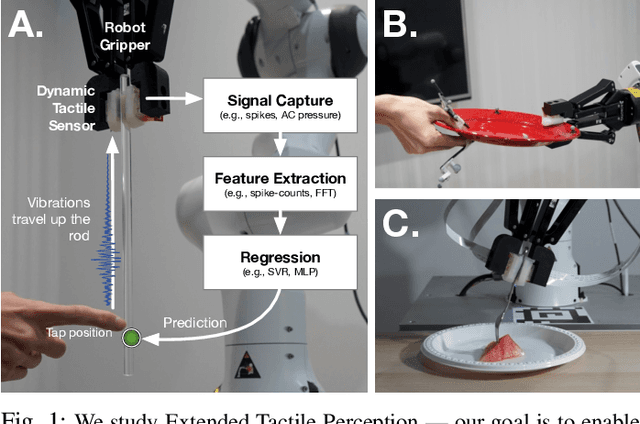

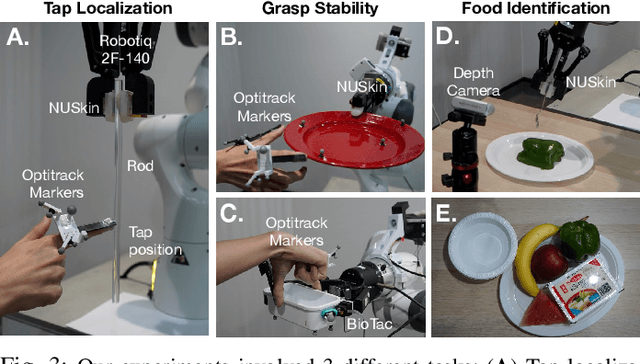

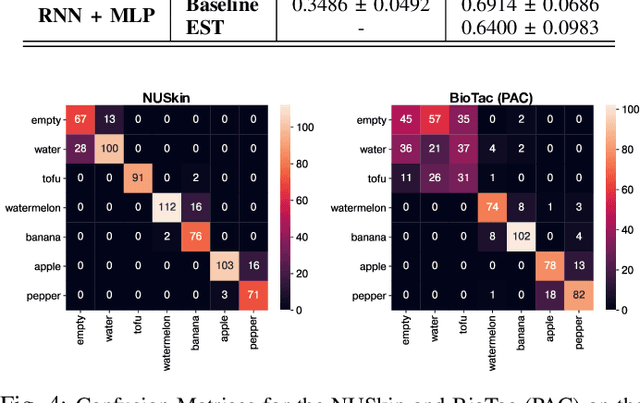

Extended Tactile Perception: Vibration Sensing through Tools and Grasped Objects

Jun 01, 2021

Abstract:Humans display the remarkable ability to sense the world through tools and other held objects. For example, we are able to pinpoint impact locations on a held rod and tell apart different textures using a rigid probe. In this work, we consider how we can enable robots to have a similar capacity, i.e., to embody tools and extend perception using standard grasped objects. We propose that vibro-tactile sensing using dynamic tactile sensors on the robot fingers, along with machine learning models, enables robots to decipher contact information that is transmitted as vibrations along rigid objects. This paper reports on extensive experiments using the BioTac micro-vibration sensor and a new event dynamic sensor, the NUSkin, capable of multi-taxel sensing at 4 kHz. We demonstrate that fine localization on a held rod is possible using our approach (with errors less than 1 cm on a 20 cm rod). Next, we show that vibro-tactile perception can lead to reasonable grasp stability prediction during object handover, and accurate food identification using a standard fork. We find that multi-taxel vibro-tactile sensing at a sufficiently high sampling rate (above 2 kHz) led to the best performance across the various tasks and objects. Taken together, our results provide both evidence and guidelines for using vibro-tactile perception to extend tactile perception, which we believe will lead to enhanced competency with tools and better physical human-robot interaction.

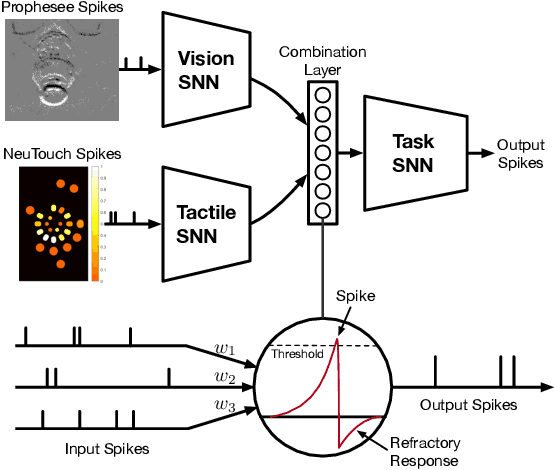

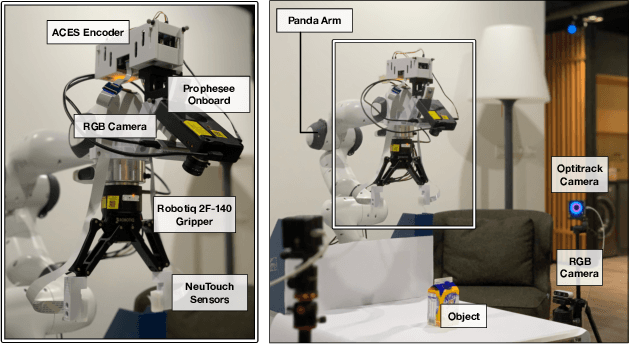

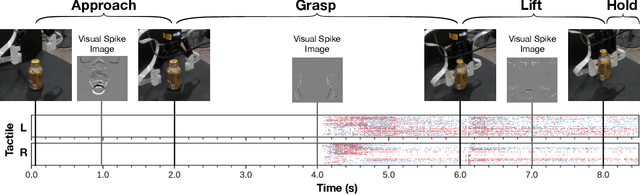

Event-Driven Visual-Tactile Sensing and Learning for Robots

Sep 15, 2020

Abstract:This work contributes an event-driven visual-tactile perception system, comprising a novel biologically inspired tactile sensor and multi-modal spike-based learning. Our neuromorphic fingertip tactile sensor, NeuTouch, scales well with the number of taxels thanks to its event-based nature. Likewise, our Visual-Tactile Spiking Neural Network (VT-SNN) enables fast perception when coupled with event sensors. We evaluate our visual-tactile system (using the NeuTouch and Prophesee event camera) on two robot tasks: container classification and rotational slip detection. On both tasks, we observe good accuracies relative to standard deep learning methods. We have made our visual-tactile datasets freely available to encourage research on multi-modal event-driven robot perception, which we believe is a promising approach towards intelligent power-efficient robot systems.

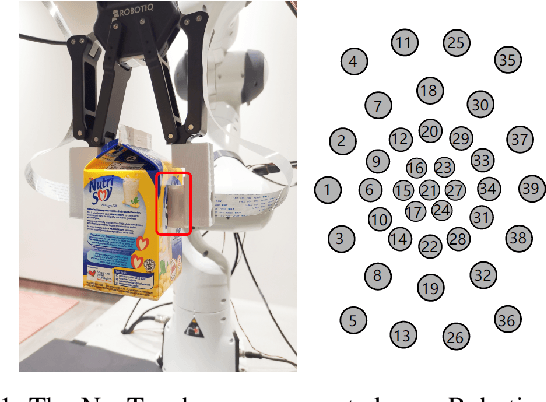

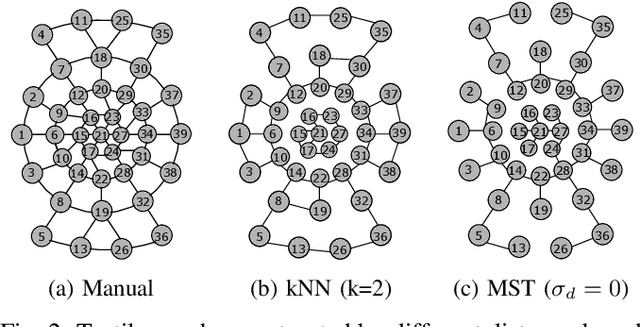

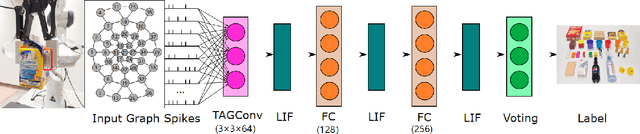

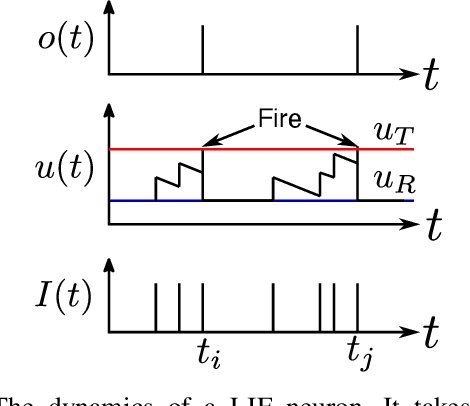

TactileSGNet: A Spiking Graph Neural Network for Event-based Tactile Object Recognition

Aug 01, 2020

Abstract:Tactile perception is crucial for a variety of robot tasks including grasping and in-hand manipulation. New advances in flexible, event-driven, electronic skins may soon endow robots with touch perception capabilities similar to humans. These electronic skins respond asynchronously to changes (e.g., in pressure, temperature), and can be laid out irregularly on the robot's body or end-effector. However, these unique features may render current deep learning approaches such as convolutional feature extractors unsuitable for tactile learning. In this paper, we propose a novel spiking graph neural network for event-based tactile object recognition. To make use of local connectivity of taxels, we present several methods for organizing the tactile data in a graph structure. Based on the constructed graphs, we develop a spiking graph convolutional network. The event-driven nature of spiking neural networks makes them arguably more suitable for processing event-based data. Experimental results on two tactile datasets show that the proposed method outperforms other state-of-the-art spiking methods, achieving high accuracies of approximately 90% when classifying a variety of different household objects.
