Sushma Venkatesh

Vulnerability of Face Morphing Attacks: A Case Study on Lookalike and Identical Twins

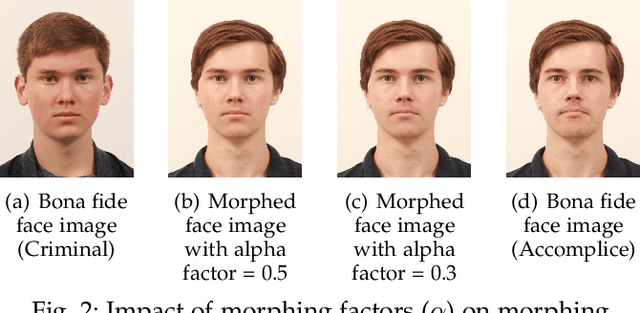

Mar 24, 2023

Abstract:Face morphing attacks have emerged as a potential threat, particularly in automatic border control scenarios. Morphing attacks permit more than one individual to use the same travel document to cross borders through automatic border control gates. The potential for morphing attacks depends on the selection of data subjects (accomplice and malicious actors). This work investigates lookalike and identical twins as the source of face morphing generation. We present a systematic study on benchmarking the vulnerability of Face Recognition Systems (FRS) to lookalike and identical twin morphing images. To this end, we constructed new face morphing datasets using 16 pairs of identical twin and lookalike data subjects. Morphing images from lookalike and identical twins are generated using a landmark-based method. Extensive experiments are carried out to benchmark the attack potential of lookalike and identical twins. Furthermore, experiments are designed to provide insights into how the vulnerability to normal face morphing compares with the vulnerability to lookalike and identical twin face morphing.

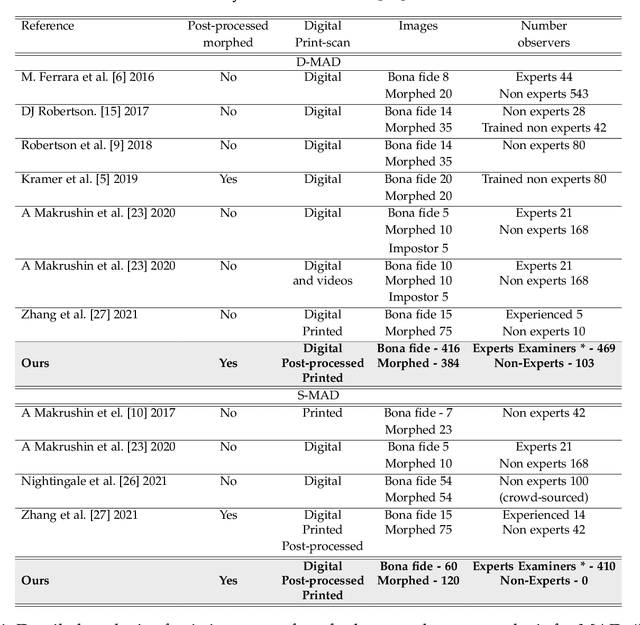

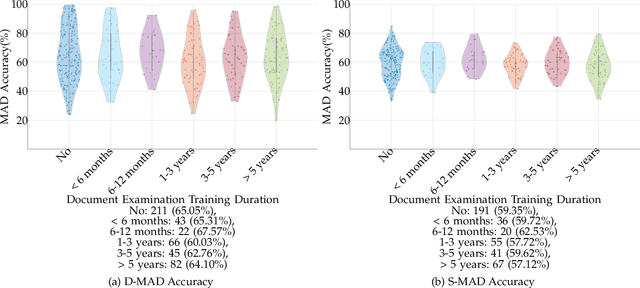

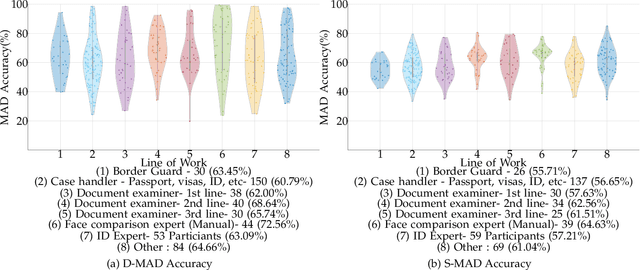

Analyzing Human Observer Ability in Morphing Attack Detection -- Where Do We Stand?

Mar 04, 2022

Abstract:While several works have studied the vulnerability of automated FRS and have proposed morphing attack detection (MAD) methods, very few have focused on studying the human ability to detect morphing attacks. The examiner/observer's face morph detection ability is based on their observation, domain knowledge, experience, and familiarity with the problem, and no works report the detailed findings from observers who check identity documents as a part of their everyday professional life. This work creates a new benchmark database of realistic morphing attacks from 48 unique subjects leading to 400 morphed images presented to the observers in a Differential-MAD (D-MAD) setting. Unlike the existing databases, the newly created morphed image database has been created with careful consideration of age, gender and ethnicity to create realistic morph attacks. Further, unlike the previous works, we also capture ten images from Automated Border Control (ABC) gates to mimic the realistic D-MAD setting leading to 400 probe images in border crossing scenarios. The newly created dataset is further used to study the ability of human observers to detect morphed images. In addition, a new dataset of 180 morphed images is also created using the FRGCv2 dataset under the Single Image-MAD (S-MAD) setting. Further, to benchmark the human ability in detecting morphs, a new evaluation platform is created to conduct S-MAD and D-MAD analysis. The benchmark study employs 469 observers for D-MAD and 410 observers for S-MAD who are primarily governmental employees from more than 40 countries. The analysis provides interesting insights and points to expert observers' missing competence and failure to detect a considerable amount of morphing attacks. Human observers tend to detect morphed images with lower accuracy than the automated MAD algorithms evaluated in this work.

ReGenMorph: Visibly Realistic GAN Generated Face Morphing Attacks by Attack Re-generation

Aug 20, 2021

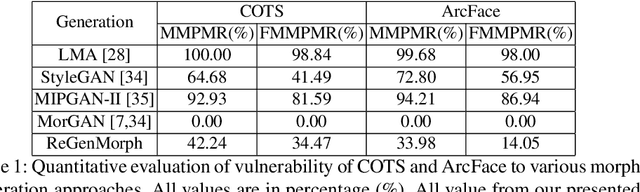

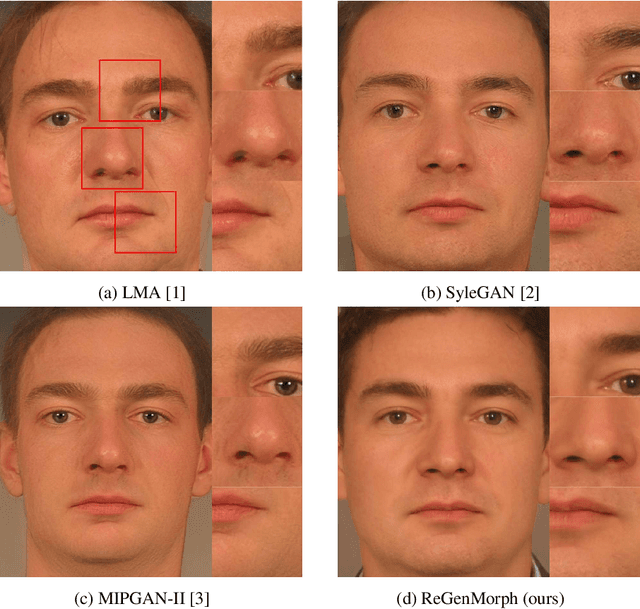

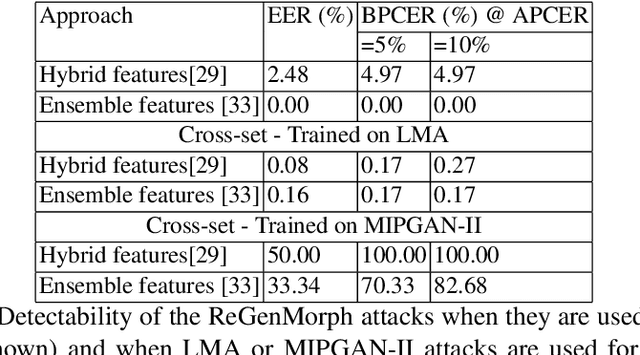

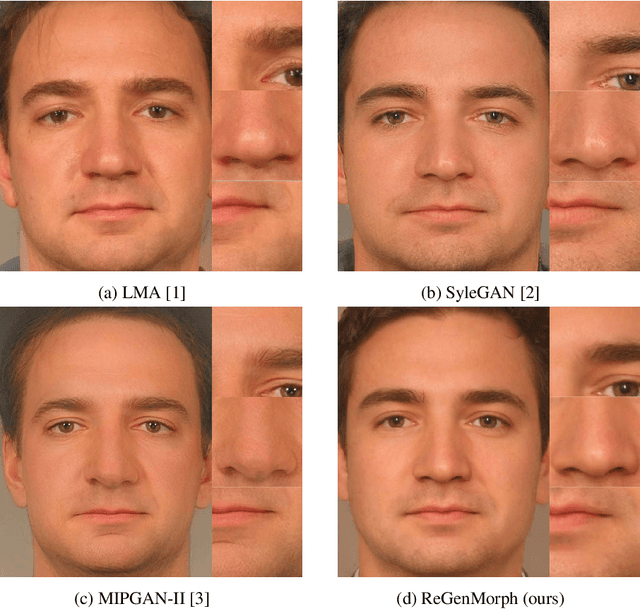

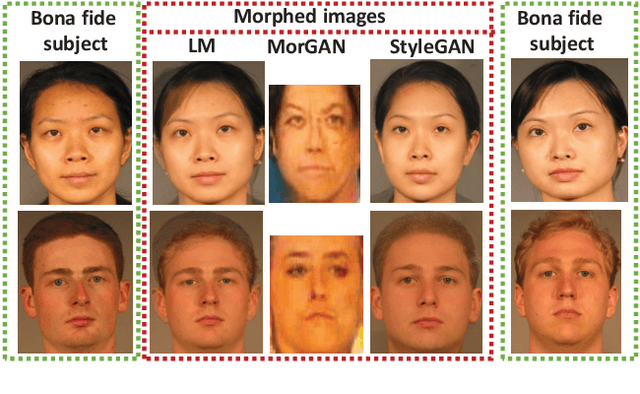

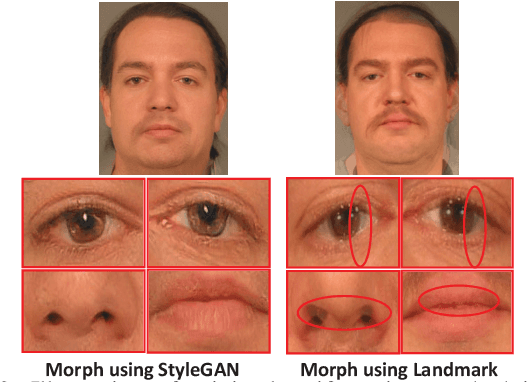

Abstract:Face morphing attacks aim at creating face images that are verifiable to be the face of multiple identities, which can lead to building faulty identity links in operations like border checks. While creating a morphed face detector (MFD), training on all possible attack types is essential to achieve good detection performance. Therefore, investigating new methods of creating morphing attacks drives the generalizability of MADs. Morphing attacks have so far been created either on the image level, by landmark interpolation, or on the latent-space level, by manipulating latent vectors in a generative adversarial network. The former results in varying blending artifacts and the latter results in synthetic-like striping artifacts. This work presents a novel morphing pipeline, ReGenMorph, that eliminates the LMA blending artifacts by using GAN-based generation and also eliminates the manipulation in the latent space, resulting in visibly realistic morphed images compared to previous works. The generated ReGenMorph appearance is compared to recent morphing approaches and evaluated for face recognition vulnerability and attack detectability, whether as known or unknown attacks.

Face Morphing Attack Generation & Detection: A Comprehensive Survey

Nov 03, 2020

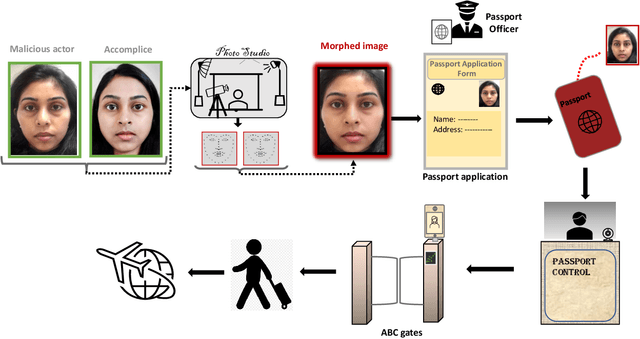

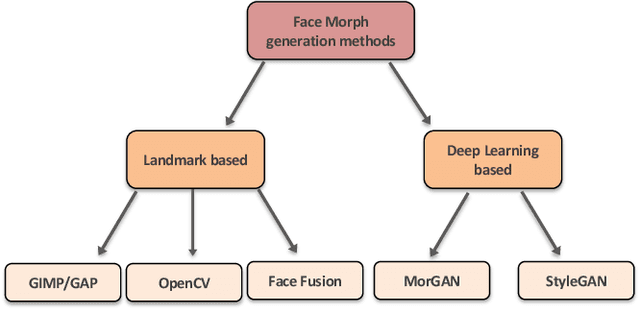

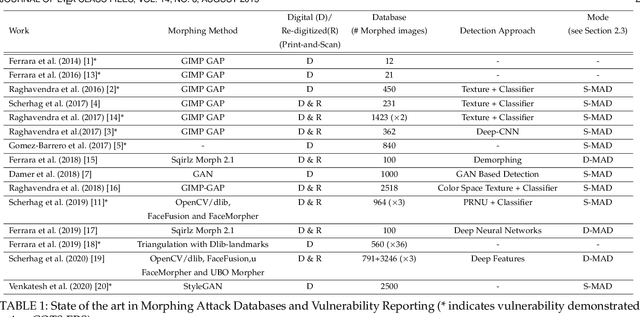

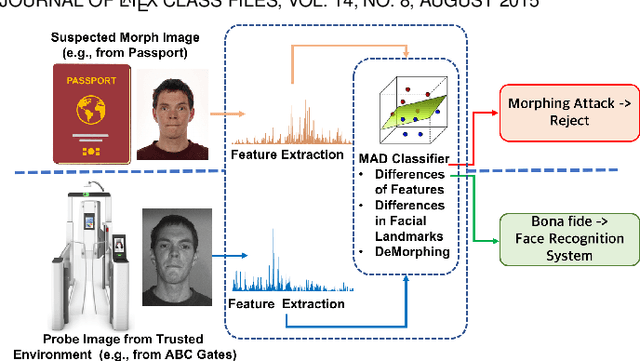

Abstract:The vulnerability of Face Recognition Systems (FRS) to various kinds of attacks (both direct and indirect attacks) and to face morphing attacks has received great interest from the biometric community. The goal of a morphing attack is to subvert the FRS at Automatic Border Control (ABC) gates by presenting an Electronic Machine Readable Travel Document (eMRTD) or e-passport that is obtained based on the morphed face image. Since the application process for the e-passport in the majority of countries requires a passport photo to be presented by the applicant, a malicious actor and the accomplice can generate the morphed face image and obtain the e-passport. An e-passport with a morphed face image can be used by both the malicious actor and the accomplice to cross the border, as the morphed face image can be verified against both of them. This can result in a significant threat, as a malicious actor can cross the border without revealing the track of his/her criminal background while the details of the accomplice are recorded in the log of the access control system. This survey aims to present a systematic overview of the progress made in the area of face morphing in terms of both morph generation and morph detection. In this paper, we describe and illustrate various aspects of face morphing attacks, including different techniques for generating morphed face images, the state of the art regarding Morph Attack Detection (MAD) algorithms based on a stringent taxonomy, and finally the availability of public databases, which allow new MAD algorithms to be benchmarked in a reproducible manner. The outcomes of competitions/benchmarking, vulnerability assessments and performance evaluation metrics are also provided in a comprehensive manner. Furthermore, we discuss the open challenges and potential future works that need to be addressed in this evolving field of biometrics.

MIPGAN -- Generating Robust and High Quality Morph Attacks Using Identity Prior Driven GAN

Sep 04, 2020

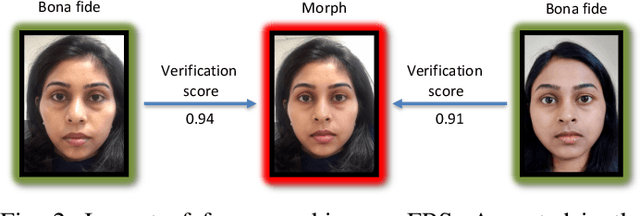

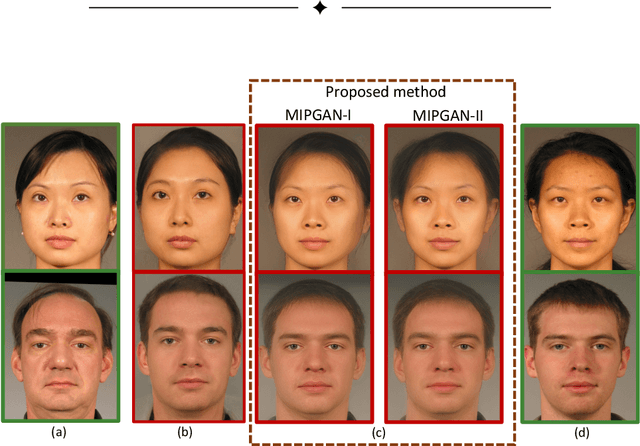

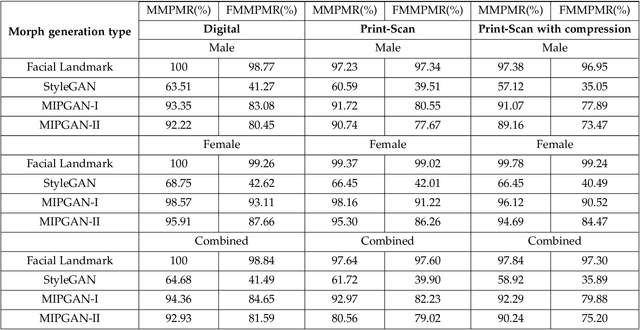

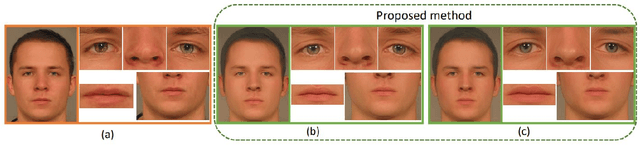

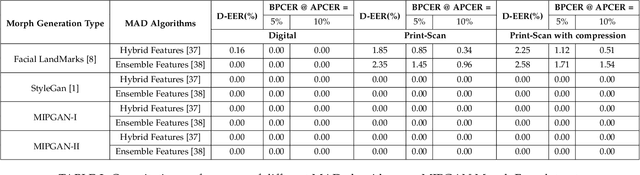

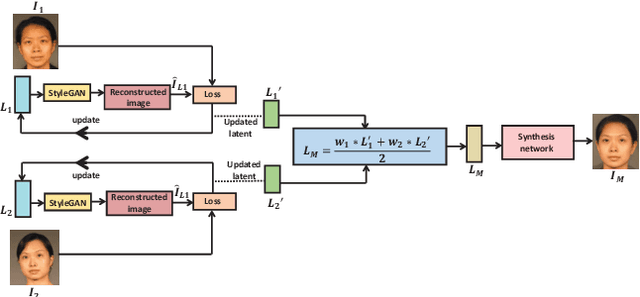

Abstract:Face morphing attacks aim to circumvent Face Recognition Systems (FRS) by employing face images derived from multiple data subjects (e.g., accomplices and malicious actors). Morphed images can verify against contributing data subjects with a reasonable success rate, given they have a high degree of identity resemblance. The success of the morphing attacks is directly dependent on the quality of the generated morph images. We present a new approach for generating robust attacks extending our earlier framework for generating face morphs. The approach uses an Identity Prior Driven Generative Adversarial Network, which we refer to as MIPGAN (Morphing through Identity Prior driven GAN). The proposed MIPGAN is derived from the StyleGAN with a newly formulated loss function exploiting perceptual quality and identity factor to generate a high quality morphed face image with minimal artifacts and with higher resolution. We demonstrate the proposed approach's applicability to generate robust morph attacks by evaluating it against a commercial Face Recognition System (FRS) and demonstrate the success rate of attacks. Extensive experiments are carried out to assess the FRS's vulnerability against the proposed morphed face generation technique on three types of data, namely digital images, re-digitized (printed and scanned) images, and compressed images after re-digitization, from the newly generated MIPGAN Face Morph Dataset. The obtained results demonstrate that the proposed approach of morph generation profoundly threatens the FRS.

Can GAN Generated Morphs Threaten Face Recognition Systems Equally as Landmark Based Morphs? -- Vulnerability and Detection

Jul 07, 2020

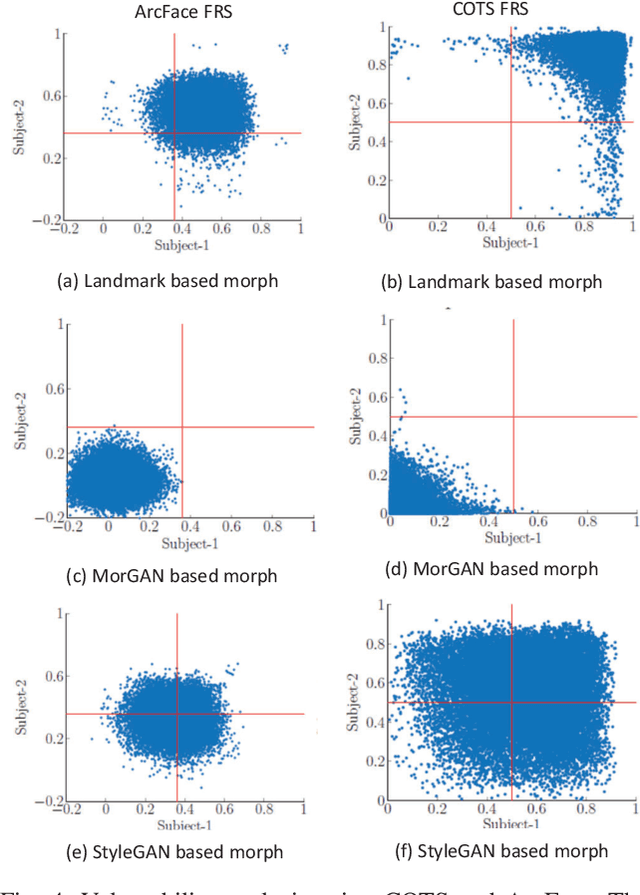

Abstract:The primary objective of face morphing is to combine face images of different data subjects (e.g. a malicious actor and an accomplice) to generate a face image that can be equally verified for both contributing data subjects. In this paper, we propose a new framework for generating face morphs using a newer Generative Adversarial Network (GAN) - StyleGAN. In contrast to earlier works, we generate realistic morphs of both high-quality and high resolution of 1024×1024 pixels. With the newly created morphing dataset of 2500 morphed face images, we pose a critical question in this work: (i) Can GAN generated morphs threaten Face Recognition Systems (FRS) equally as Landmark based morphs? Seeking an answer, we benchmark the vulnerability of a Commercial-Off-The-Shelf FRS (COTS) and a deep learning-based FRS (ArcFace). This work also benchmarks the detection approaches for both GAN generated morphs against the landmark based morphs using established Morphing Attack Detection (MAD) schemes.

On the Influence of Ageing on Face Morph Attacks: Vulnerability and Detection

Jul 06, 2020

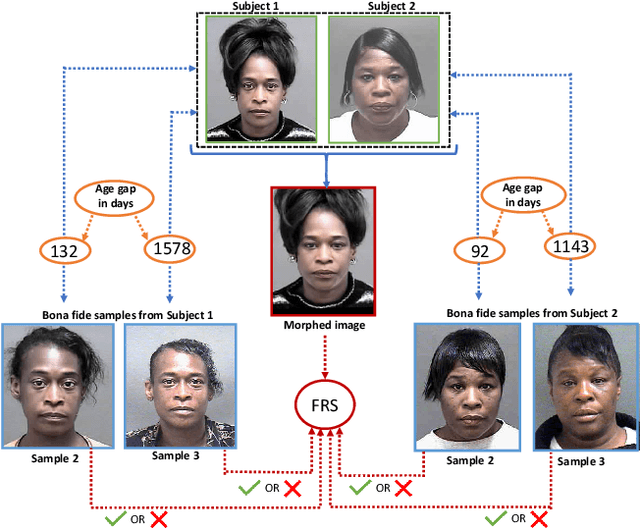

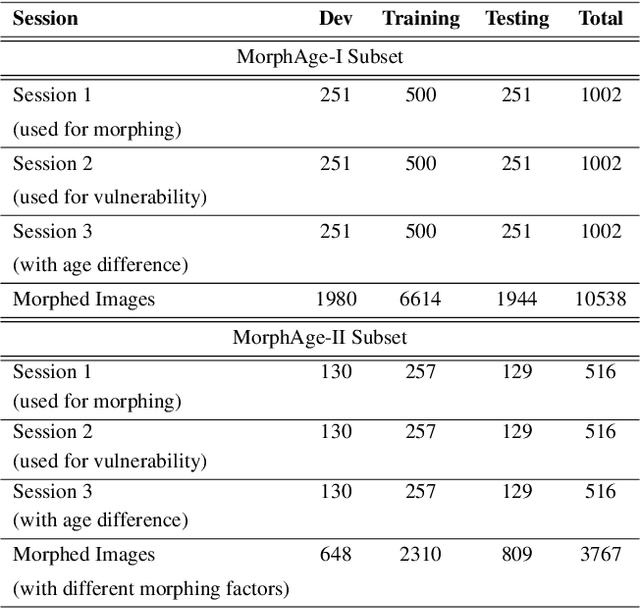

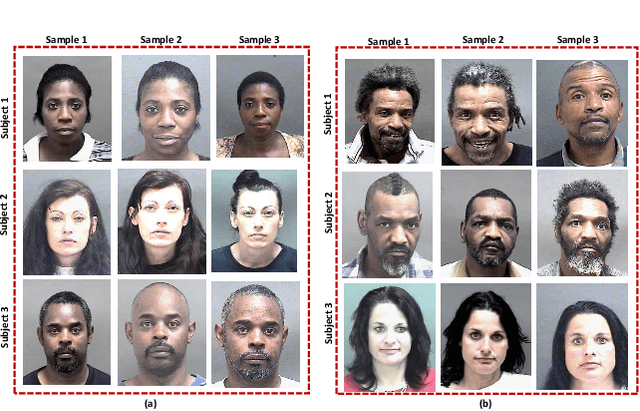

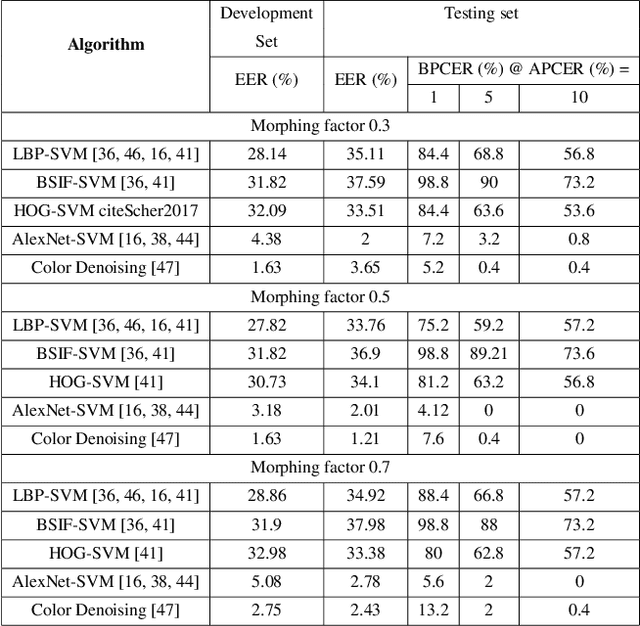

Abstract:Face morphing attacks have raised critical concerns as they demonstrate a new vulnerability of Face Recognition Systems (FRS), which are widely deployed in border control applications. The face morphing process uses the images from multiple data subjects and performs an image blending operation to generate a morphed image of high quality. The generated morphed image exhibits visual characteristics similar to the biometric characteristics of the data subjects that contributed to the composite image, thus making it difficult for both humans and FRS to detect such attacks. In this paper, we report a systematic investigation on the vulnerability of the Commercial-Off-The-Shelf (COTS) FRS when morphed images under the influence of ageing are presented. To this end, we have introduced a new morphed face dataset with ageing derived from the publicly available MORPH II face dataset, which we refer to as the MorphAge dataset. The dataset has two bins based on age intervals: the first bin, the MorphAge-I dataset, has 1002 unique data subjects with an age variation of 1 year to 2 years, while the MorphAge-II dataset consists of 516 data subjects whose age intervals are from 2 years to 5 years. To evaluate the vulnerability to morphing attacks, we also introduce a new evaluation metric, namely the Fully Mated Morphed Presentation Match Rate (FMMPMR), to quantify the vulnerability in a realistic scenario. Extensive experiments are carried out by using two different COTS FRS (COTS I - Cognitec and COTS II - Neurotechnology) to quantify the vulnerability with ageing. Further, we also evaluate five different Morph Attack Detection (MAD) techniques to benchmark their detection performance with ageing.
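As a rough illustration of what a "fully mated" vulnerability measure can look like, the sketch below counts a morph attempt as successful only when the comparison scores against every contributing data subject reach the verification threshold. This is one reading of the idea, not the paper's definition: the function name, the example scores, the threshold of 0.7, and the use of similarity scores (higher means more similar) with `>=` are all assumptions made for illustration.

```python
from typing import Sequence


def fully_mated_match_rate(scores: Sequence[Sequence[float]], threshold: float) -> float:
    """Fraction of morph attempts that match ALL contributing subjects.

    scores[i][j] is a hypothetical similarity score between morph attempt i
    and a probe image of contributing subject j (higher = more similar).
    """
    if not scores:
        raise ValueError("scores must contain at least one morph attempt")
    # An attempt counts only if every contributing subject is accepted.
    hits = sum(1 for row in scores if all(s >= threshold for s in row))
    return hits / len(scores)


# Hypothetical scores for four morph attempts, two contributors each.
example_scores = [
    [0.82, 0.79],  # both contributors verify
    [0.91, 0.40],  # only the first contributor verifies
    [0.30, 0.35],  # neither contributor verifies
    [0.75, 0.88],  # both contributors verify
]
rate = fully_mated_match_rate(example_scores, threshold=0.7)
print(rate)
```

Requiring all contributors to match is stricter than counting each contributor separately, which is why such a measure is described as closer to a realistic attack scenario, where the same document must work for every person sharing it.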

Morphing Attack Detection -- Database, Evaluation Platform and Benchmarking

Jun 16, 2020

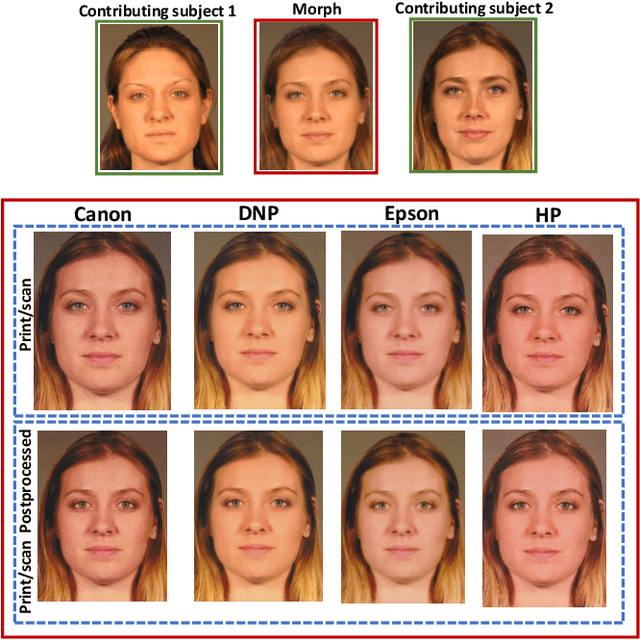

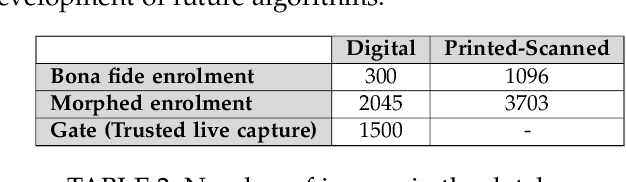

Abstract:Morphing attacks have posed a severe threat to Face Recognition Systems (FRS). Despite the number of advancements reported in recent works, we note serious open issues that are not addressed. Morphing Attack Detection (MAD) algorithms are often prone to generalization challenges as they are database dependent. The existing databases, mostly of semi-public nature, lack diversity in terms of ethnicity, morphing processes and post-processing pipelines. Further, they do not reflect a realistic operational scenario for Automated Border Control (ABC) and do not provide a basis to test MAD on unseen data, in order to benchmark the robustness of algorithms. In this work, we present a new sequestered dataset for facilitating the advancements of MAD where the algorithms can be tested on unseen data in an effort to better generalize. The newly constructed dataset consists of facial images from 150 subjects from various ethnicities, age-groups and both genders. In order to challenge the existing MAD algorithms, the morphed images are created with careful subject pre-selection and further post-processed to remove the morphing artifacts. The images are also printed and scanned to remove all digital cues and to simulate a realistic challenge for MAD algorithms. Further, we present a new online evaluation platform to test algorithms on sequestered data. With the platform we can benchmark the morph detection performance and study the generalization ability. This work also presents a detailed analysis on various subsets of sequestered data and outlines open challenges for future directions in MAD research.

Morton Filters for Superior Template Protection for Iris Recognition

Jan 15, 2020

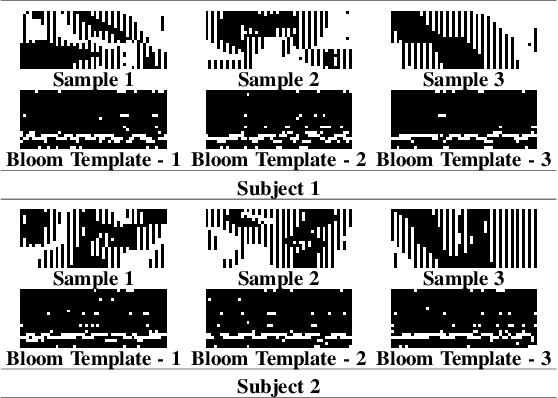

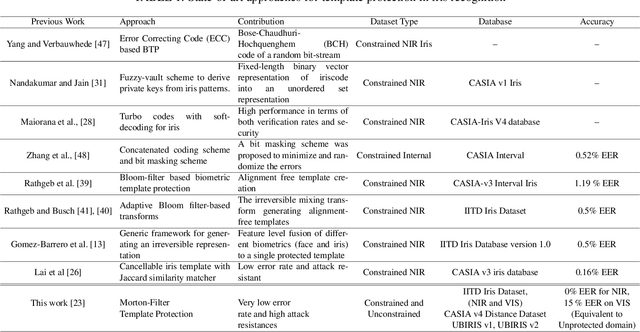

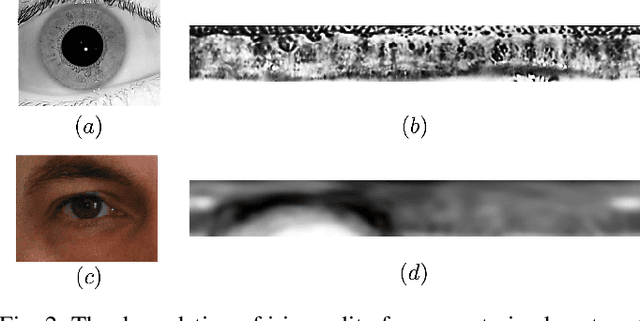

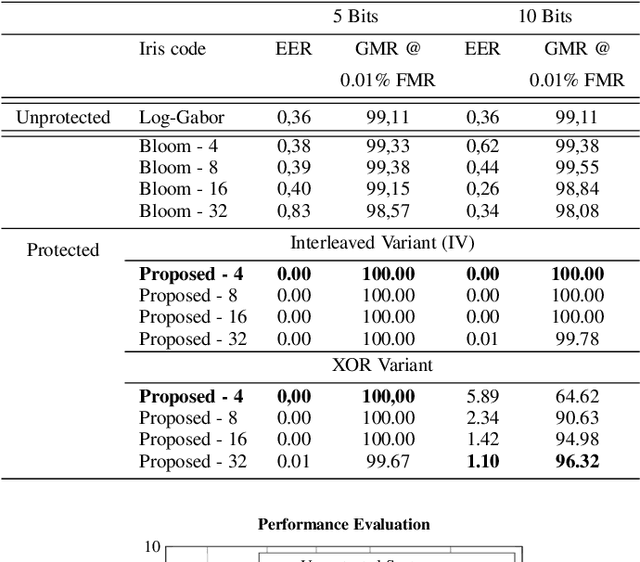

Abstract:We address the fundamental performance issues of template protection (TP) for iris verification. We base our work on the popular Bloom-filter template protection and address key challenges such as sub-optimal performance and low unlinkability. Specifically, we focus on cases where Bloom-filter templates result in non-ideal performance due to the presence of large degradations within iris images. Iris recognition is challenged by a number of occluding factors, such as the presence of eyelashes within the captured image, occlusion due to eyelids, and low-quality iris images due to motion blur. All such degrading factors result in unreliable iris codes and thereby non-ideal biometric performance. These factors directly impact the protected templates derived from iris images when classical Bloom-filters are employed. To this end, we propose and extend our earlier ideas of Morton-filters for obtaining better and more reliable templates for iris. Morton-filter based TP for iris codes leverages the intra- and inter-class distribution by exploiting low-rank iris codes to derive the stable bits across iris images for a particular subject and by analyzing the discriminable bits across various subjects. Such low-rank, non-noisy iris codes enable superior template protection that can be used not only in constrained settings but also in relaxed iris imaging. We further extend the work to analyze the applicability to VIS iris images by employing a large-scale public iris image database, UBIRIS (v1 & v2), captured in an unconstrained setting. Through a set of experiments, we demonstrate the applicability of the proposed approach and vet its strengths and weaknesses. Yet another contribution of this work lies in assessing the security of the proposed approach, where factors of unlinkability are studied to indicate the antagonistic nature to relaxed iris imaging scenarios.

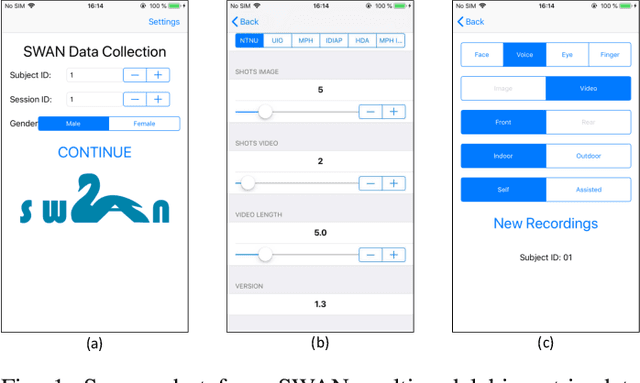

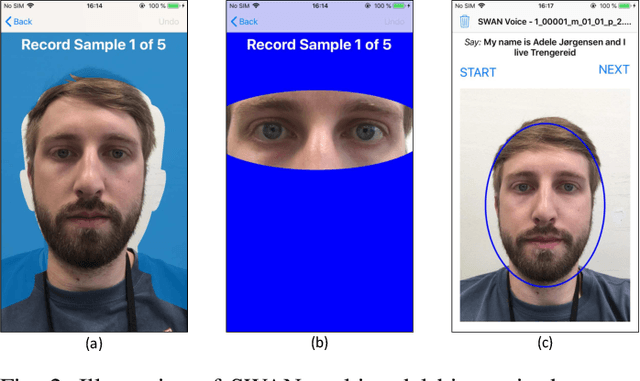

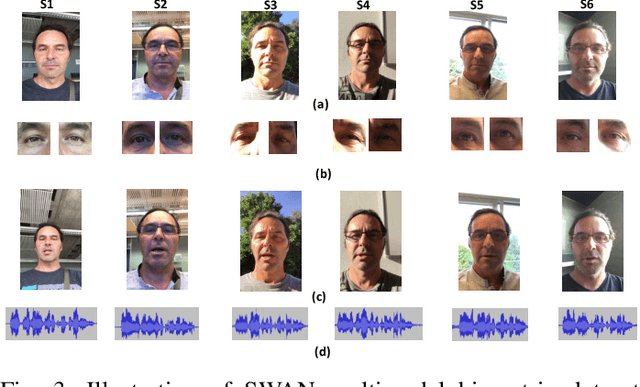

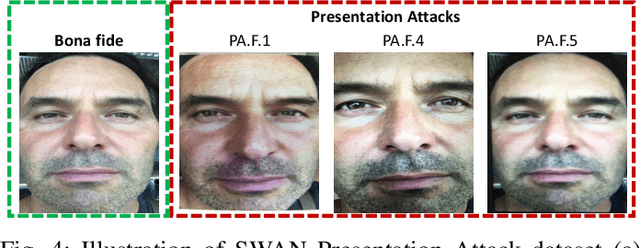

Smartphone Multi-modal Biometric Authentication: Database and Evaluation

Dec 05, 2019

Abstract:Biometric-based verification is widely employed on smartphones for various applications, including financial transactions. In this work, we present a new multimodal biometric dataset (face, voice, and periocular) acquired using a smartphone. The new dataset comprises 150 subjects captured in six different sessions reflecting real-life scenarios of smartphone-assisted authentication. One of the unique features of this dataset is that it is collected in four different geographic locations representing a diverse population and ethnicity. Additionally, we also present a multimodal Presentation Attack (PA) or spoofing dataset using low-cost Presentation Attack Instruments (PAI) such as print and electronic display attacks. The novel acquisition protocols and the diversity of the data subjects collected from different geographic locations will allow developing novel algorithms for either unimodal or multimodal biometrics. Further, we also report the performance evaluation of the baseline biometric verification and Presentation Attack Detection (PAD) on the newly collected dataset.
