Steven R. Spurgeon

Revealing the Hidden Third Dimension of Point Defects in Two-Dimensional MXenes

Nov 11, 2025

Abstract:Point defects govern many important functional properties of two-dimensional (2D) materials. However, resolving the three-dimensional (3D) arrangement of these defects in multi-layer 2D materials remains a fundamental challenge, hindering rational defect engineering. Here, we overcome this limitation using an artificial intelligence-guided electron microscopy workflow to map the 3D topology and clustering of atomic vacancies in Ti$_3$C$_2$T$_X$ MXene. Our approach reconstructs the 3D coordinates of vacancies across hundreds of thousands of lattice sites, generating robust statistical insight into their distribution that can be correlated with specific synthesis pathways. This large-scale data enables us to classify a hierarchy of defect structures, from isolated vacancies to nanopores, revealing their preferred formation and interaction mechanisms, as corroborated by molecular dynamics simulations. This work provides a generalizable framework for understanding and ultimately controlling point defects across large volumes, paving the way for the rational design of defect-engineered functional 2D materials.

Mic-hackathon 2024: Hackathon on Machine Learning for Electron and Scanning Probe Microscopy

Jun 10, 2025

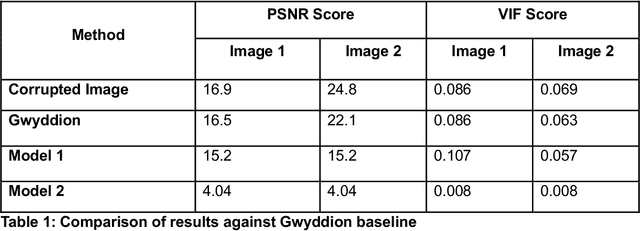

Abstract:Microscopy is a primary source of information on materials structure and functionality at nanometer and atomic scales. The data generated is often well-structured, enriched with metadata and sample histories, though not always consistent in detail or format. The adoption of Data Management Plans (DMPs) by major funding agencies promotes preservation and access. However, deriving insights remains difficult due to the lack of standardized code ecosystems, benchmarks, and integration strategies. As a result, data usage is inefficient and analysis time is extensive. In addition to post-acquisition analysis, new APIs from major microscope manufacturers enable real-time, ML-based analytics for automated decision-making and ML-agent-controlled microscope operation. Yet, a gap remains between the ML and microscopy communities, limiting the impact of these methods on physics, materials discovery, and optimization. Hackathons help bridge this divide by fostering collaboration between ML researchers and microscopy experts. They encourage the development of novel solutions that apply ML to microscopy, while preparing a future workforce for instrumentation, materials science, and applied ML. This hackathon produced benchmark datasets and digital twins of microscopes to support community growth and standardized workflows. All related code is available at GitHub: https://github.com/KalininGroup/Mic-hackathon-2024-codes-publication/tree/1.0.0.1

Mind the Gap: Bridging the Divide Between AI Aspirations and the Reality of Autonomous Characterization

Feb 25, 2025

Abstract:What does materials science look like in the "Age of Artificial Intelligence?" Each materials domain (synthesis, characterization, and modeling) has a different answer to this question, motivated by unique challenges and constraints. This work focuses on the tremendous potential of autonomous characterization within electron microscopy. We present our recent advancements in developing domain-aware, multimodal models for microscopy analysis capable of describing complex atomic systems. We then address the critical gap between the theoretical promise of autonomous microscopy and its current practical limitations, showcasing recent successes while highlighting the necessary developments to achieve robust, real-world autonomy.

Revealing the Evolution of Order in Materials Microstructures Using Multi-Modal Computer Vision

Nov 15, 2024

Abstract:The development of high-performance materials for microelectronics, energy storage, and extreme environments depends on our ability to describe and direct property-defining microstructural order. Our present understanding is typically derived from laborious manual analysis of imaging and spectroscopy data, which is difficult to scale, challenging to reproduce, and lacks the ability to reveal latent associations needed for mechanistic models. Here, we demonstrate a multi-modal machine learning (ML) approach to describe order from electron microscopy analysis of the complex oxide La$_{1-x}$Sr$_x$FeO$_3$. We construct a hybrid pipeline based on fully and semi-supervised classification, allowing us to evaluate both the characteristics of each data modality and the value each modality adds to the ensemble. We observe distinct differences in the performance of uni- and multi-modal models, from which we draw general lessons in describing crystal order using computer vision.

Deep Learning for Automated Experimentation in Scanning Transmission Electron Microscopy

Apr 04, 2023

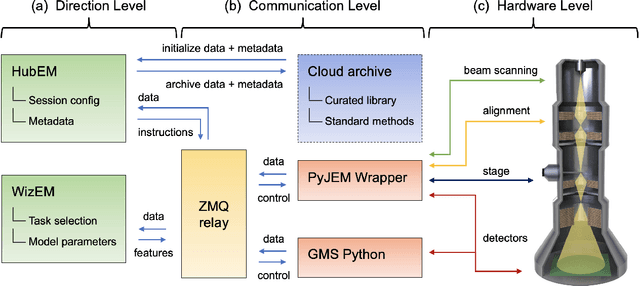

Abstract:Machine learning (ML) has become critical for post-acquisition data analysis in (scanning) transmission electron microscopy, (S)TEM, imaging and spectroscopy. An emerging trend is the transition to real-time analysis and closed-loop microscope operation. The effective use of ML in electron microscopy now requires the development of strategies for microscopy-centered experiment workflow design and optimization. Here, we discuss the challenges associated with the transition to active ML, including sequential data analysis and out-of-distribution drift effects, the requirements for edge operation, local and cloud data storage, and theory-in-the-loop operations. Specifically, we discuss the relative contributions of human scientists and ML agents in the ideation, orchestration, and execution of experimental workflows and the need to develop universal hyper languages that can apply across multiple platforms. These considerations will collectively inform the operationalization of ML in next-generation experimentation.

Deep-learning-based prediction of nanoparticle phase transitions during in situ transmission electron microscopy

May 23, 2022

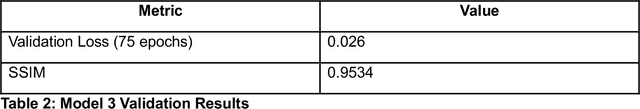

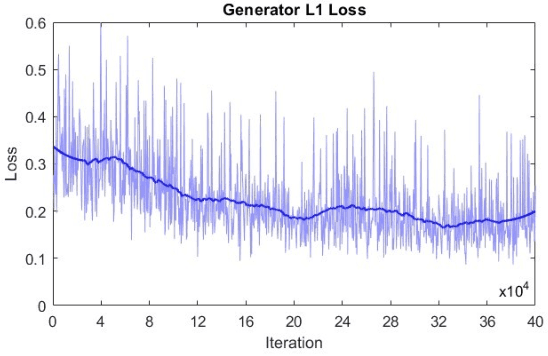

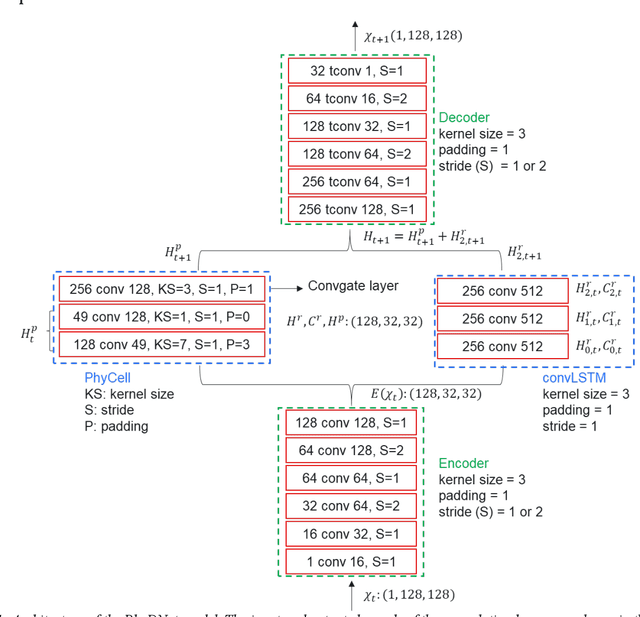

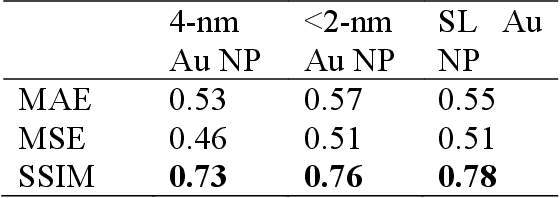

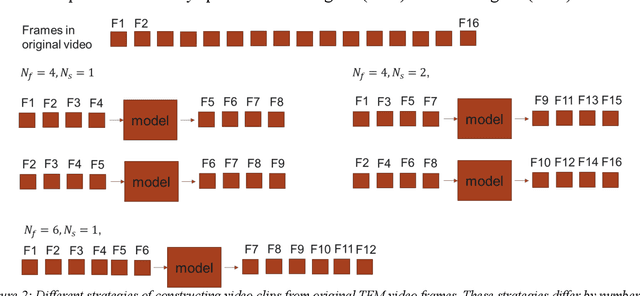

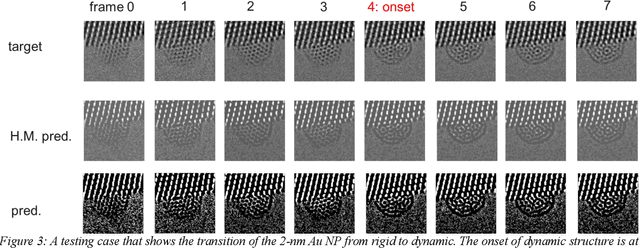

Abstract:We develop a machine learning capability to predict a time sequence of in situ transmission electron microscopy (TEM) video frames by combining the long short-term memory (LSTM) algorithm with a feature de-entanglement method. We train deep learning models to predict a sequence of future video frames from an input sequence of previous frames. This capability provides insight into size-dependent structural changes in Au nanoparticles under dynamic reaction conditions using in situ environmental TEM data, informing models of morphological evolution and catalytic properties. The model performance and prediction accuracy are satisfactory given the limited size of the training data sets, which is characteristic of scientific data. The model convergence and the values of the mean square error loss function depend on the training strategy, and the structural similarity measure between predicted structure images and the ground truth reaches a value of about 0.7. Although this computed structural similarity is smaller than the values obtained when the deep learning architecture is trained on much larger benchmark data sets, it is sufficient to show the structural transition of Au nanoparticles. While the performance parameters of our model applied to scientific data fall short of those achieved for non-scientific big data sets, we demonstrate the model's ability to predict the evolution of Au nanoparticles, including the particle structural phase transformation, as catalysts for CO oxidation under chemical reaction conditions. Using this approach, it may be possible to anticipate the next steps of a chemical reaction for emerging automated experimentation platforms.

An Automated Scanning Transmission Electron Microscope Guided by Sparse Data Analytics

Sep 30, 2021

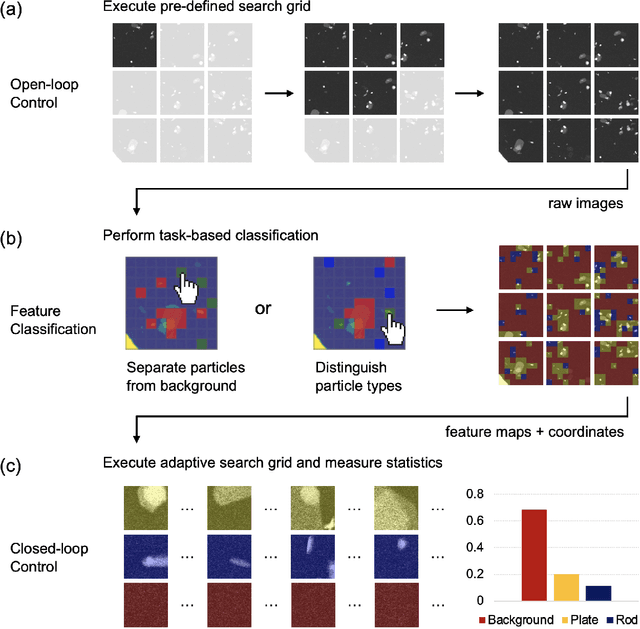

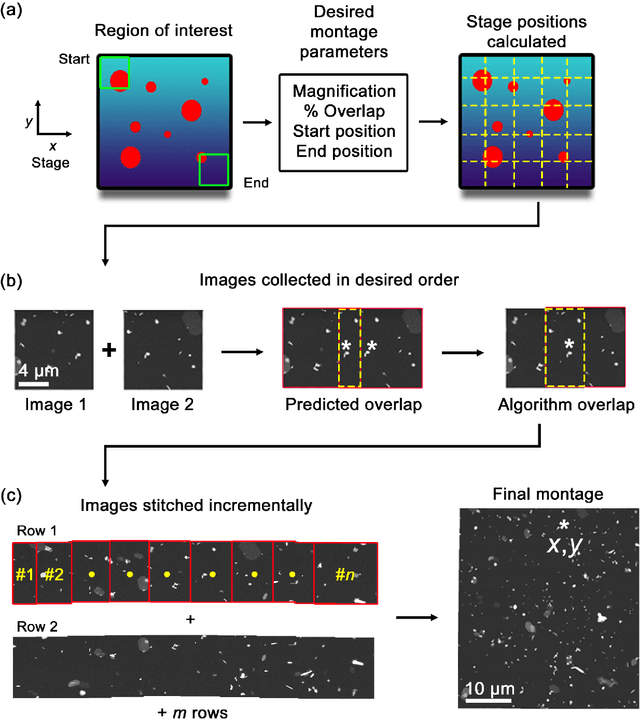

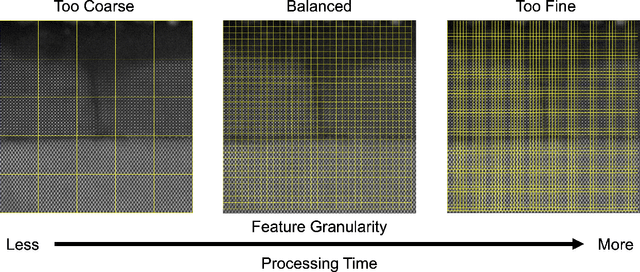

Abstract:Artificial intelligence (AI) promises to reshape scientific inquiry and enable breakthrough discoveries in areas such as energy storage, quantum computing, and biomedicine. Scanning transmission electron microscopy (STEM), a cornerstone of the study of chemical and materials systems, stands to benefit greatly from AI-driven automation. However, present barriers to low-level instrument control, as well as generalizable and interpretable feature detection, make truly automated microscopy impractical. Here, we discuss the design of a closed-loop instrument control platform guided by emerging sparse data analytics. We demonstrate how a centralized controller, informed by machine learning combining limited a priori knowledge and task-based discrimination, can drive on-the-fly experimental decision-making. This platform unlocks practical, automated analysis of a variety of material features, enabling new high-throughput and statistical studies.

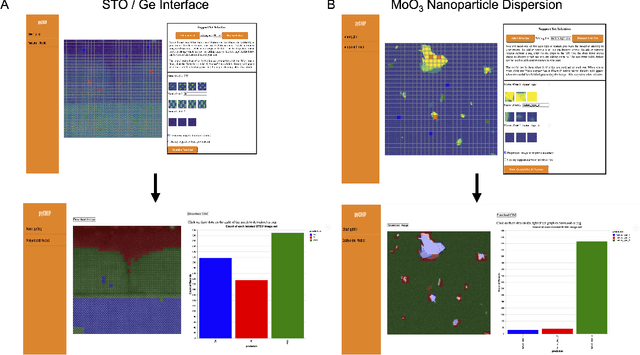

Design of a Graphical User Interface for Few-Shot Machine Learning Classification of Electron Microscopy Data

Jul 21, 2021

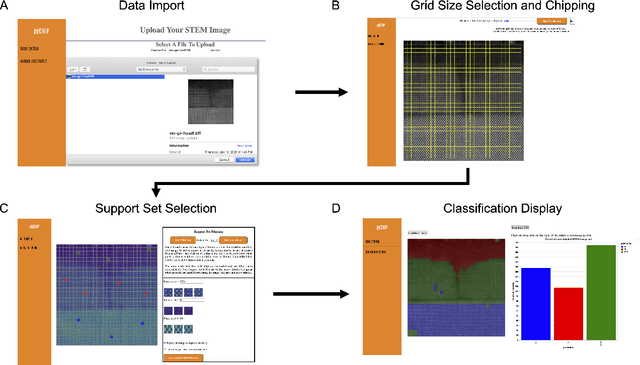

Abstract:The recent growth in data volumes produced by modern electron microscopes requires rapid, scalable, and flexible approaches to image segmentation and analysis. Few-shot machine learning, which can richly classify images from a handful of user-provided examples, is a promising route to high-throughput analysis. However, current command-line implementations of such approaches can be slow and unintuitive to use, lacking the real-time feedback necessary to perform effective classification. Here we report on the development of a Python-based graphical user interface that enables end users to easily conduct and visualize the output of few-shot learning models. This interface is lightweight and can be hosted locally or on the web, providing the opportunity to reproducibly conduct, share, and crowd-source few-shot analyses.
