Stefan Gerber

COGITAO: A Visual Reasoning Framework To Study Compositionality & Generalization

Sep 05, 2025

Abstract: The ability to compose learned concepts and apply them in novel settings is key to human intelligence, but it remains a persistent limitation of state-of-the-art machine learning models. To address this issue, we introduce COGITAO, a modular and extensible data-generation framework and benchmark designed to systematically study compositionality and generalization in visual domains. Drawing inspiration from ARC-AGI's problem setting, COGITAO constructs rule-based tasks that apply a set of transformations to objects in grid-like environments. It supports composition, at adjustable depth, over a set of 28 interoperable transformations, along with extensive control over grid parametrization and object properties. This flexibility enables the creation of millions of unique task rules (surpassing concurrent datasets by several orders of magnitude) across a wide range of difficulties, while allowing virtually unlimited sample generation per rule. We provide baseline experiments with state-of-the-art vision models, highlighting their consistent failure to generalize to novel combinations of familiar elements despite strong in-domain performance. COGITAO is fully open-sourced, including all code and datasets, to support continued research in this field.
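To make "composition at adjustable depth" concrete, here is a minimal Python sketch, assuming grids are lists of integer color codes and a task rule is an ordered chain of grid-to-grid transformations. The transforms shown (`rotate90`, `flip_horizontal`, `recolor`) and the helpers `compose` and `count_rules` are hypothetical illustrations, not COGITAO's API, and for brevity they act on the whole grid rather than on individual objects as COGITAO does. If a rule were simply any ordered sequence of d transformations drawn with repetition from 28, there would be 28^d rules, e.g. 28^5 = 17,210,368 at depth 5; COGITAO's actual rule counting may differ.

```python
from typing import Callable, List, Sequence

# Hypothetical representation: 0 = background, other ints = object colors.
Grid = List[List[int]]
Transform = Callable[[Grid], Grid]


def rotate90(grid: Grid) -> Grid:
    """Rotate the whole grid 90 degrees clockwise (hypothetical transform)."""
    return [list(row) for row in zip(*grid[::-1])]


def flip_horizontal(grid: Grid) -> Grid:
    """Mirror the grid left-to-right (hypothetical transform)."""
    return [row[::-1] for row in grid]


def recolor(src: int, dst: int) -> Transform:
    """Return a transform that repaints every cell of color `src` with `dst`."""
    def apply(grid: Grid) -> Grid:
        return [[dst if cell == src else cell for cell in row] for row in grid]
    return apply


def compose(transforms: Sequence[Transform]) -> Transform:
    """Chain transforms left to right; len(transforms) is the composition depth."""
    def apply(grid: Grid) -> Grid:
        for t in transforms:
            grid = t(grid)
        return grid
    return apply


def count_rules(n_transforms: int, depth: int) -> int:
    """Number of ordered sequences of `depth` transforms, repetition allowed."""
    return n_transforms ** depth
```

For example, `compose([rotate90, recolor(1, 2)])([[1, 0], [0, 0]])` returns `[[0, 2], [0, 0]]`: the object moves to the top-right corner and is then repainted. Representing rules as plain function chains keeps every transform interoperable, so any sequence of them is itself a valid rule.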

Cost-efficient Active Illumination Camera For Hyper-spectral Reconstruction

Jun 27, 2024

Abstract: Hyperspectral imaging has recently gained increasing attention in a range of applications, including agricultural investigation, ground tracking, remote sensing, and many others. However, the high cost, large physical size, and complicated operation of hyperspectral cameras prevent their adoption in many applications and research fields. In this paper, we introduce a cost-efficient, compact, and easy-to-use active illumination camera that may benefit many applications. We developed a fully functional prototype of such a camera and, with the aim of supporting agricultural research, tested it for plant root imaging. In addition, we trained a U-Net model for spectral reconstruction, using a reference hyperspectral camera's data as ground truth and our camera's data as input. We demonstrated our camera's ability to obtain additional information over a typical RGB camera. Moreover, the ability to reconstruct hyperspectral data from multispectral input makes our device compatible with models and algorithms developed for hyperspectral applications, with no modifications required.

Neuromorphic implementation of ECG anomaly detection using delay chains

Sep 02, 2022

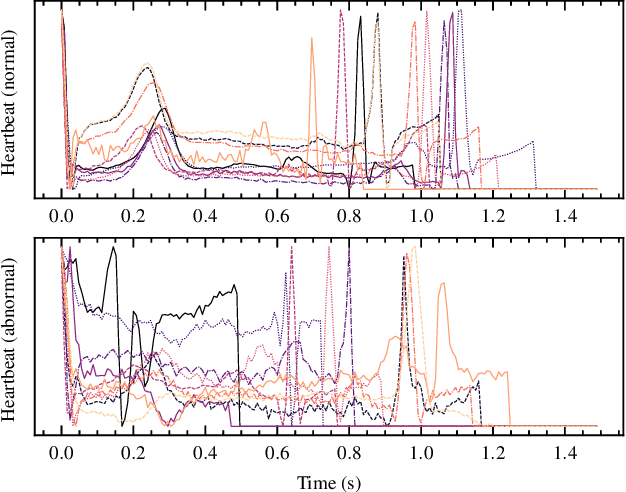

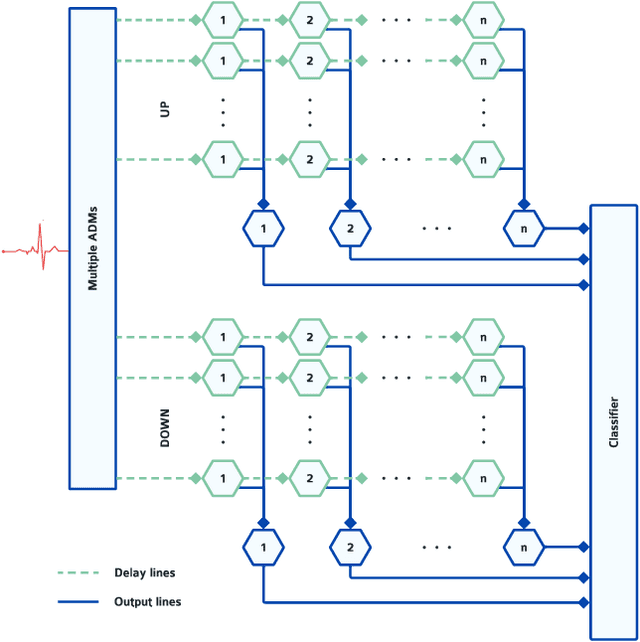

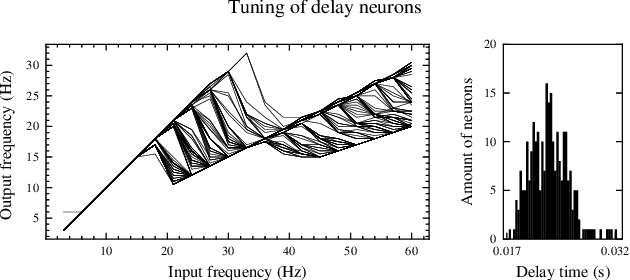

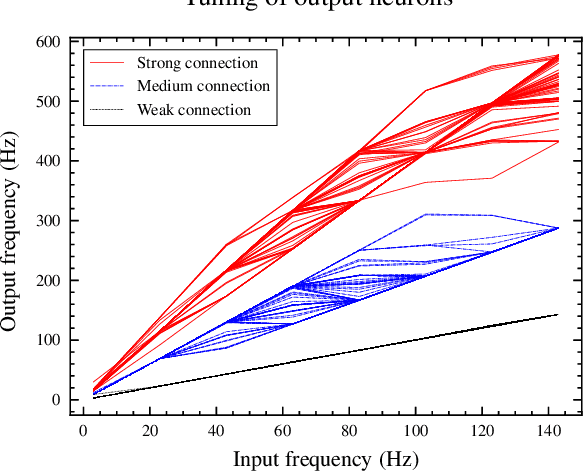

Abstract: Real-time analysis and classification of bio-signals measured using wearable devices is computationally costly and requires dedicated low-power hardware. One promising approach is to use spiking neural networks implemented using in-memory computing architectures and neuromorphic electronic circuits. However, as these circuits process data in streaming mode without the possibility of storing it in external buffers, a major challenge lies in processing spatio-temporal signals that last longer than the time constants of the network's synapses and neurons. Here we propose to extend the memory capacity of a spiking neural network by using parallel delay chains. We show that it is possible to map temporal signals lasting multiple seconds into spiking activity distributed across multiple neurons with time constants of a few milliseconds. We validate this approach on an ECG anomaly detection task and present experimental results demonstrating that temporal information is properly preserved in the network activity.
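The delay-chain idea can be illustrated with a deliberately simplified, discrete-time Python sketch: each input channel drives its own chain of neurons, and at every time step activity advances one neuron along the chain, so the chain's state at time t is a spatial snapshot of that channel's last `chain_length` steps. This abstracts away the spiking dynamics and circuit details of the paper; the function `run_delay_chains` and its parameters are hypothetical. Under this model, the memory window equals the number of stages times the per-stage delay, so, for example, a hypothetical 400-stage chain with a 5 ms delay per stage would cover 2 s.

```python
from typing import List, Sequence


def run_delay_chains(spikes: Sequence[Sequence[int]], chain_length: int) -> List[List[List[int]]]:
    """Simulate parallel delay chains in discrete time (simplified, non-spiking model).

    spikes[t][c] is 1 if input channel c spiked at time step t.
    Each channel drives its own chain of `chain_length` neurons; one time step
    corresponds to one neuron's delay. Returns the chain states at every step:
    states[t][c][k] == 1 means channel c spiked exactly k steps before step t.
    """
    if chain_length < 1:
        raise ValueError("chain_length must be at least 1")
    n_channels = len(spikes[0]) if spikes else 0
    chains = [[0] * chain_length for _ in range(n_channels)]
    states = []
    for frame in spikes:
        for c in range(n_channels):
            # New input enters neuron 0; existing activity shifts one neuron
            # down the chain, and the last neuron's activity is dropped.
            chains[c] = [frame[c]] + chains[c][:-1]
        # Copy each chain so later steps do not mutate recorded snapshots.
        states.append([chain[:] for chain in chains])
    return states
```

For instance, `run_delay_chains([[1], [0], [0], [1], [0], [0]], 4)[3]` is `[[1, 0, 0, 1]]`: at step 3 the chain holds both the current spike and the one from three steps earlier, even though no single stage "remembers" longer than one step.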