Ryuichiro Hataya

Will Large-scale Generative Models Corrupt Future Datasets?

Nov 15, 2022

Abstract:Recently proposed large-scale text-to-image generative models such as DALL$\cdot$E 2, Midjourney, and StableDiffusion can generate high-quality and realistic images from users' prompts. Not limited to the research community, ordinary Internet users enjoy these generative models, and consequently a tremendous amount of generated images have been shared on the Internet. Meanwhile, today's success of deep learning in the computer vision field owes a lot to images collected from the Internet. These trends lead us to a research question: "will such generated images impact the quality of future datasets and the performance of computer vision models positively or negatively?" This paper empirically answers this question by simulating contamination. Namely, we generate ImageNet-scale and COCO-scale datasets using a state-of-the-art generative model and evaluate models trained on ``contaminated'' datasets on various tasks including image classification and image generation. Throughout experiments, we conclude that generated images negatively affect downstream performance, while the significance depends on tasks and the amount of generated images. The generated datasets are available via https://github.com/moskomule/dataset-contamination.

Decomposing Normal and Abnormal Features of Medical Images into Discrete Latent Codes for Content-Based Image Retrieval

Mar 23, 2021

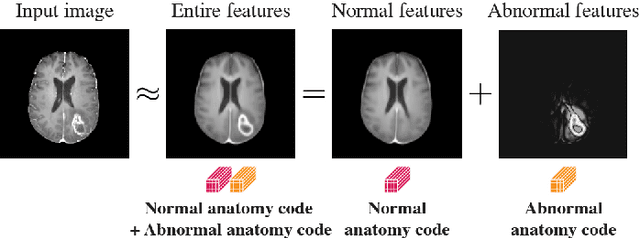

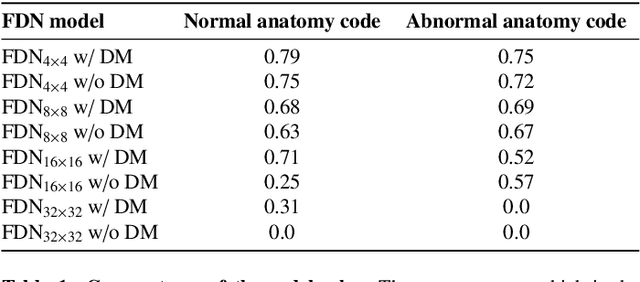

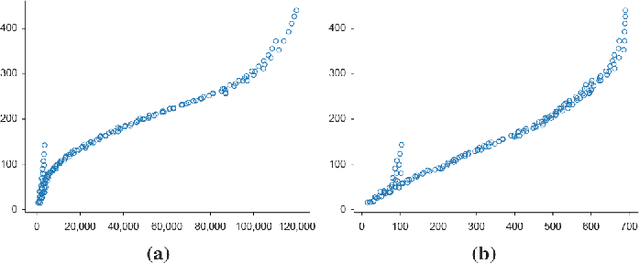

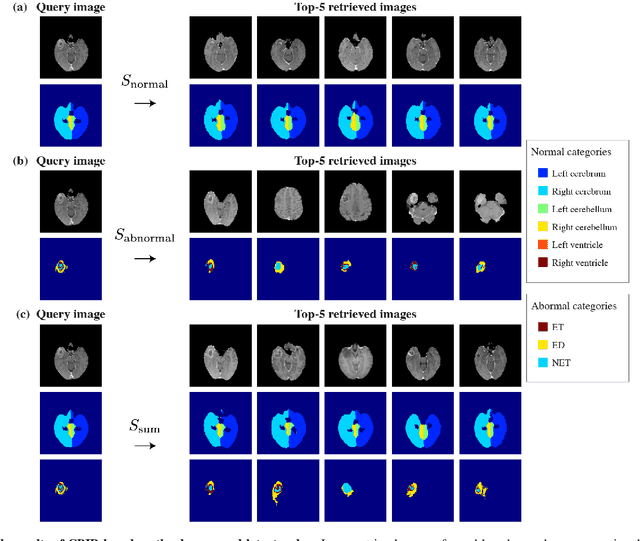

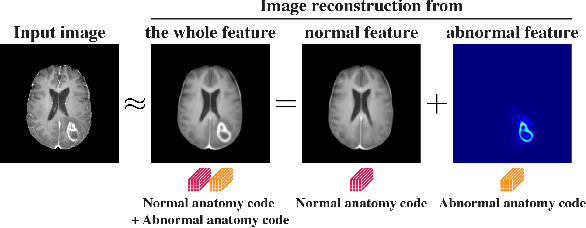

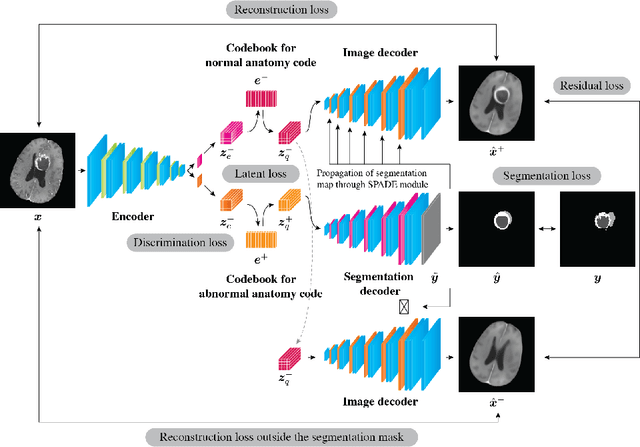

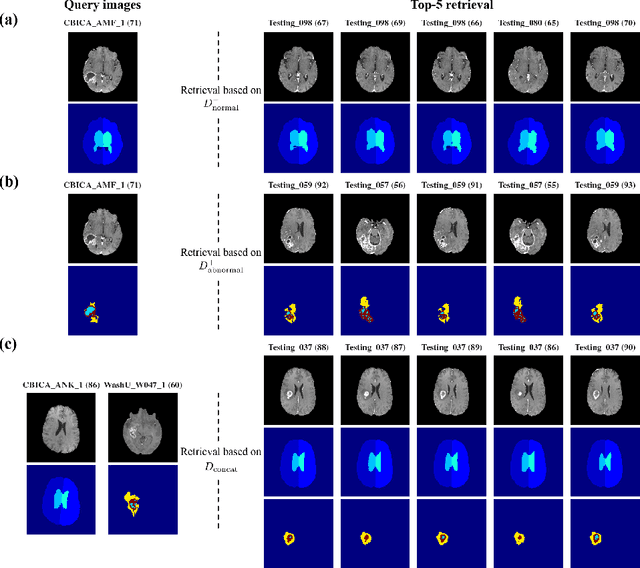

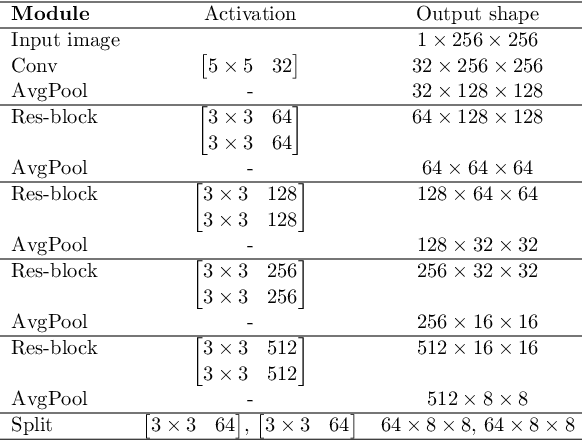

Abstract:In medical imaging, the characteristics purely derived from a disease should reflect the extent to which abnormal findings deviate from the normal features. Indeed, physicians often need corresponding images without abnormal findings of interest or, conversely, images that contain similar abnormal findings regardless of normal anatomical context. This is called comparative diagnostic reading of medical images, which is essential for a correct diagnosis. To support comparative diagnostic reading, content-based image retrieval (CBIR), which can selectively utilize normal and abnormal features in medical images as two separable semantic components, will be useful. Therefore, we propose a neural network architecture to decompose the semantic components of medical images into two latent codes: normal anatomy code and abnormal anatomy code. The normal anatomy code represents normal anatomies that should have existed if the sample is healthy, whereas the abnormal anatomy code attributes to abnormal changes that reflect deviation from the normal baseline. These latent codes are discretized through vector quantization to enable binary hashing, which can reduce the computational burden at the time of similarity search. By calculating the similarity based on either normal or abnormal anatomy codes or the combination of the two codes, our algorithm can retrieve images according to the selected semantic component from a dataset consisting of brain magnetic resonance images of gliomas. Our CBIR system qualitatively and quantitatively achieves remarkable results.

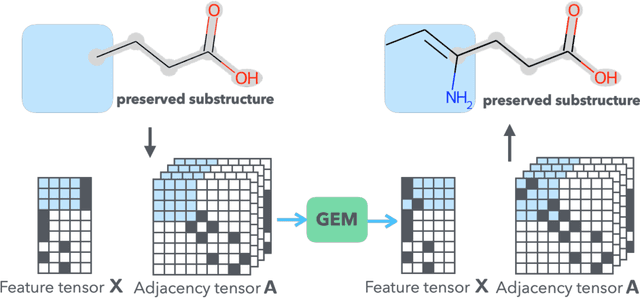

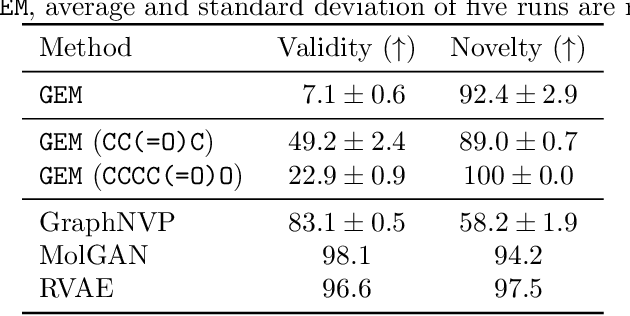

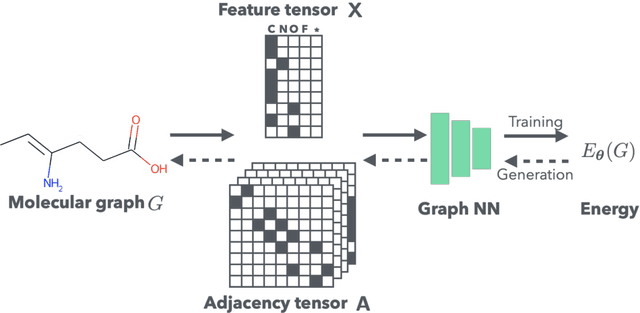

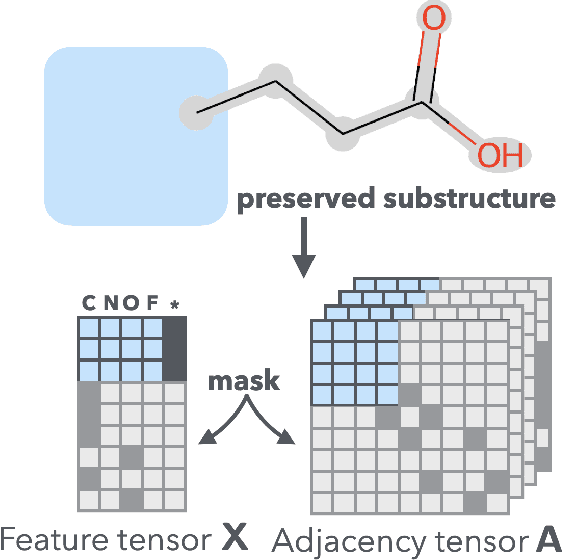

Graph Energy-based Model for Substructure Preserving Molecular Design

Feb 09, 2021

Abstract:It is common practice for chemists to search chemical databases based on substructures of compounds for finding molecules with desired properties. The purpose of de novo molecular generation is to generate instead of search. Existing machine learning based molecular design methods have no or limited ability in generating novel molecules that preserves a target substructure. Our Graph Energy-based Model, or GEM, can fix substructures and generate the rest. The experimental results show that the GEMs trained from chemistry datasets successfully generate novel molecules while preserving the target substructures. This method would provide a new way of incorporating the domain knowledge of chemists in molecular design.

Decomposing Normal and Abnormal Features of Medical Images for Content-based Image Retrieval

Nov 12, 2020

Abstract:Medical images can be decomposed into normal and abnormal features, which is considered as the compositionality. Based on this idea, we propose an encoder-decoder network to decompose a medical image into two discrete latent codes: a normal anatomy code and an abnormal anatomy code. Using these latent codes, we demonstrate a similarity retrieval by focusing on either normal or abnormal features of medical images.

Meta Approach to Data Augmentation Optimization

Jun 14, 2020

Abstract:Data augmentation policies drastically improve the performance of image recognition tasks, especially when the policies are optimized for the target data and tasks. In this paper, we propose to optimize image recognition models and data augmentation policies simultaneously to improve the performance using gradient descent. Unlike prior methods, our approach avoids using proxy tasks or reducing search space, and can directly improve the validation performance. Our method achieves efficient and scalable training by approximating the gradient of policies by implicit gradient with Neumann series approximation. We demonstrate that our approach can improve the performance of various image classification tasks, including ImageNet classification and fine-grained recognition, without using dataset-specific hyperparameter tuning.

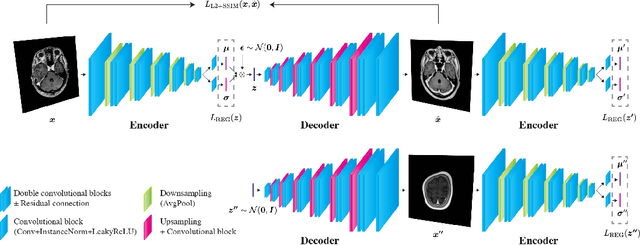

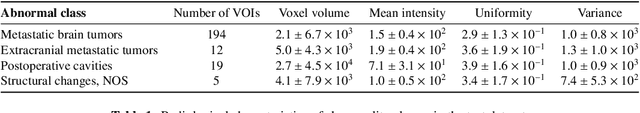

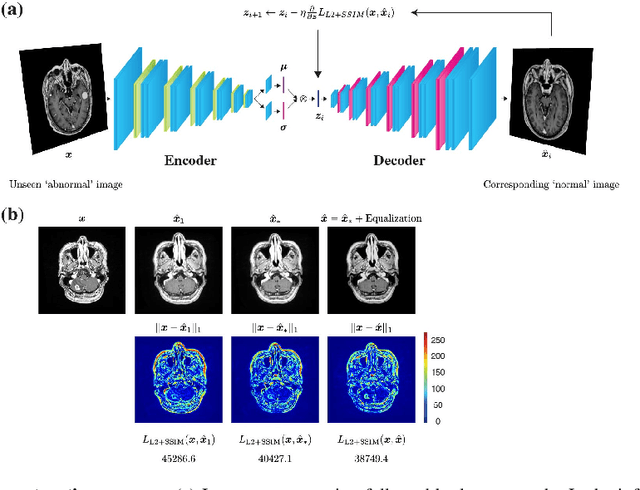

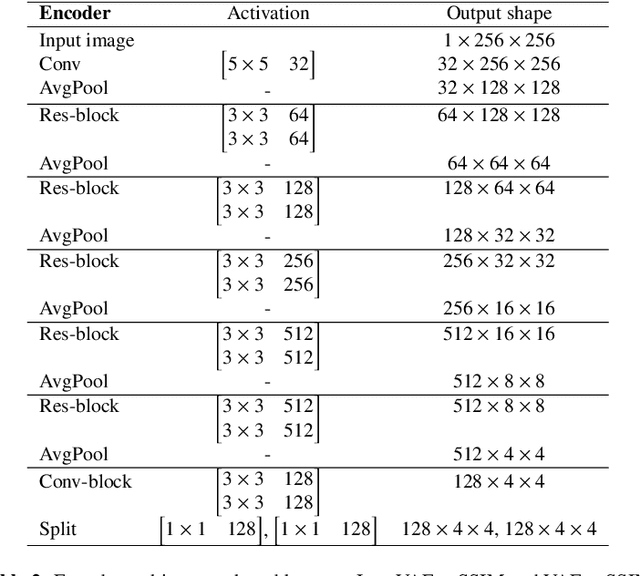

Unsupervised Brain Abnormality Detection Using High Fidelity Image Reconstruction Networks

Jun 02, 2020

Abstract:Recent advances in deep learning have facilitated near-expert medical image analysis. Supervised learning is the mainstay of current approaches, though its success requires the use of large, fully labeled datasets. However, in real-world medical practice, previously unseen disease phenotypes are encountered that have not been defined a priori in finite-size datasets. Unsupervised learning, a hypothesis-free learning framework, may play a complementary role to supervised learning. Here, we demonstrate a novel framework for voxel-wise abnormality detection in brain magnetic resonance imaging (MRI), which exploits an image reconstruction network based on an introspective variational autoencoder trained with a structural similarity constraint. The proposed network learns a latent representation for "normal" anatomical variation using a series of images that do not include annotated abnormalities. After training, the network can map unseen query images to positions in the latent space, and latent variables sampled from those positions can be mapped back to the image space to yield normal-looking replicas of the input images. Finally, the network considers abnormality scores, which are designed to reflect differences at several image feature levels, in order to locate image regions that may contain abnormalities. The proposed method is evaluated on a comprehensively annotated dataset spanning clinically significant structural abnormalities of the brain parenchyma in a population having undergone radiotherapy for brain metastasis, demonstrating that it is particularly effective for contrast-enhanced lesions, i.e., metastatic brain tumors and extracranial metastatic tumors.

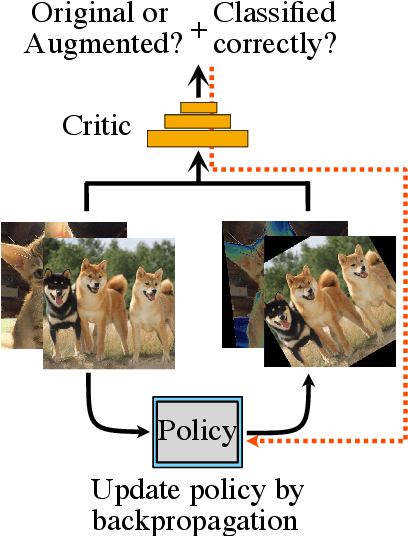

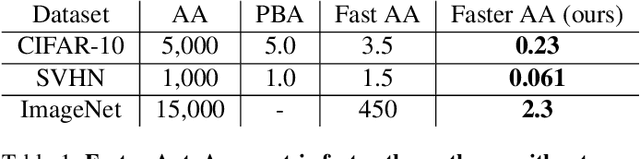

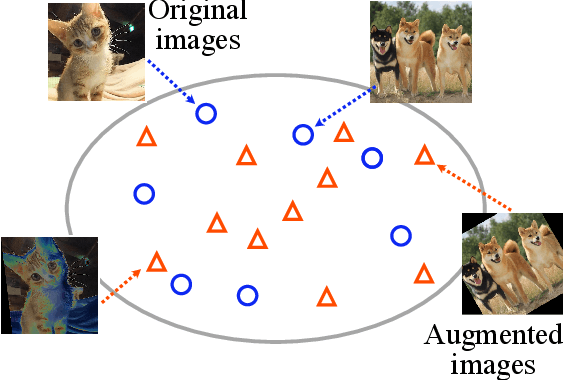

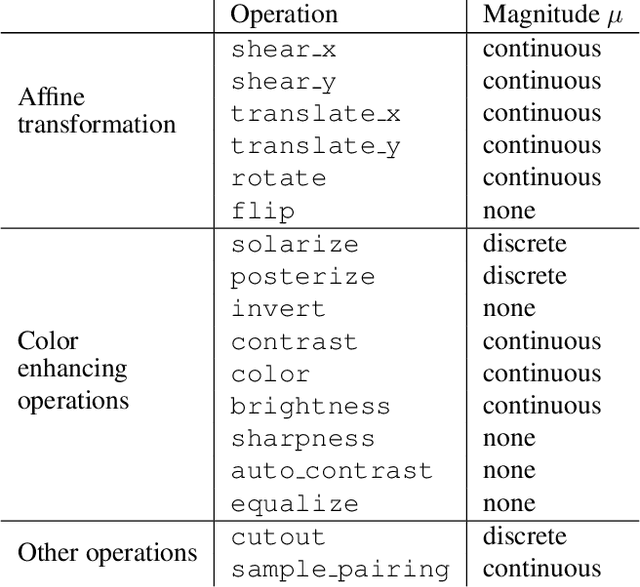

Faster AutoAugment: Learning Augmentation Strategies using Backpropagation

Nov 16, 2019

Abstract:Data augmentation methods are indispensable heuristics to boost the performance of deep neural networks, especially in image recognition tasks. Recently, several studies have shown that augmentation strategies found by search algorithms outperform hand-made strategies. Such methods employ black-box search algorithms over image transformations with continuous or discrete parameters and require a long time to obtain better strategies. In this paper, we propose a differentiable policy search pipeline for data augmentation, which is much faster than previous methods. We introduce approximate gradients for several transformation operations with discrete parameters as well as the differentiable mechanism for selecting operations. As the objective of training, we minimize the distance between the distributions of augmented data and the original data, which can be differentiated. We show that our method, Faster AutoAugment, achieves significantly faster searching than prior work without a performance drop.

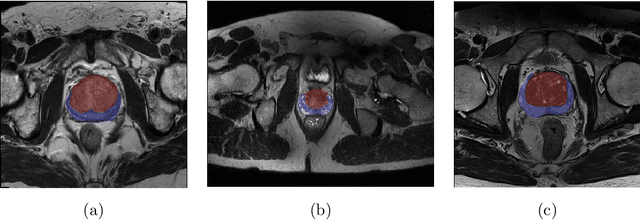

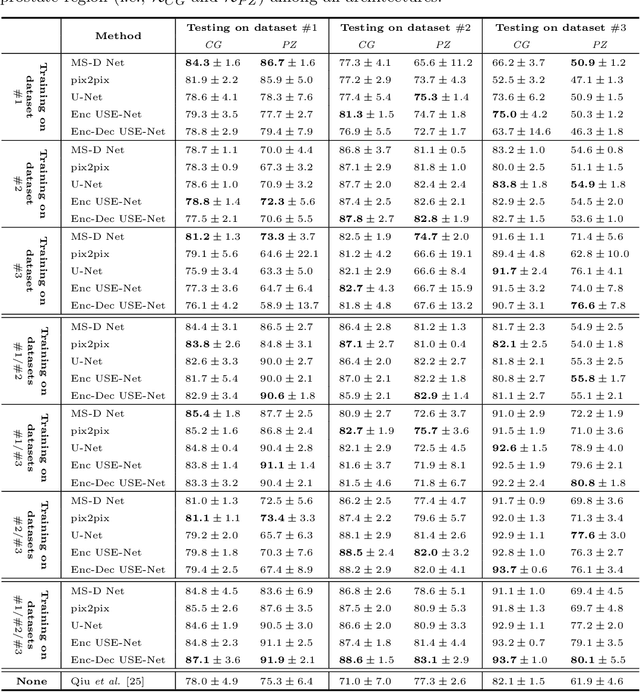

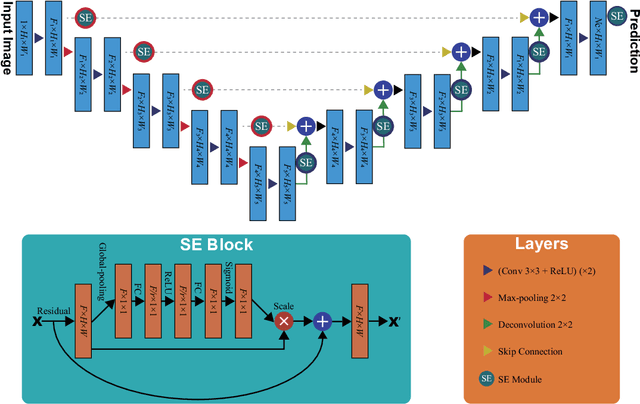

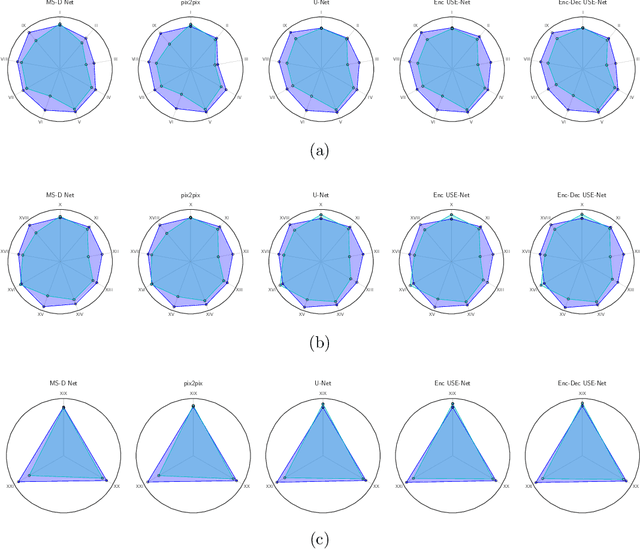

USE-Net: incorporating Squeeze-and-Excitation blocks into U-Net for prostate zonal segmentation of multi-institutional MRI datasets

Apr 17, 2019

Abstract:Prostate cancer is the most common malignant tumors in men but prostate Magnetic Resonance Imaging (MRI) analysis remains challenging. Besides whole prostate gland segmentation, the capability to differentiate between the blurry boundary of the Central Gland (CG) and Peripheral Zone (PZ) can lead to differential diagnosis, since tumor's frequency and severity differ in these regions. To tackle the prostate zonal segmentation task, we propose a novel Convolutional Neural Network (CNN), called USE-Net, which incorporates Squeeze-and-Excitation (SE) blocks into U-Net. Especially, the SE blocks are added after every Encoder (Enc USE-Net) or Encoder-Decoder block (Enc-Dec USE-Net). This study evaluates the generalization ability of CNN-based architectures on three T2-weighted MRI datasets, each one consisting of a different number of patients and heterogeneous image characteristics, collected by different institutions. The following mixed scheme is used for training/testing: (i) training on either each individual dataset or multiple prostate MRI datasets and (ii) testing on all three datasets with all possible training/testing combinations. USE-Net is compared against three state-of-the-art CNN-based architectures (i.e., U-Net, pix2pix, and Mixed-Scale Dense Network), along with a semi-automatic continuous max-flow model. The results show that training on the union of the datasets generally outperforms training on each dataset separately, allowing for both intra-/cross-dataset generalization. Enc USE-Net shows good overall generalization under any training condition, while Enc-Dec USE-Net remarkably outperforms the other methods when trained on all datasets. These findings reveal that the SE blocks' adaptive feature recalibration provides excellent cross-dataset generalization when testing is performed on samples of the datasets used during training.

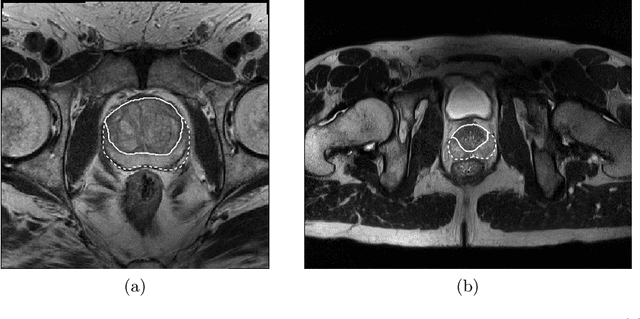

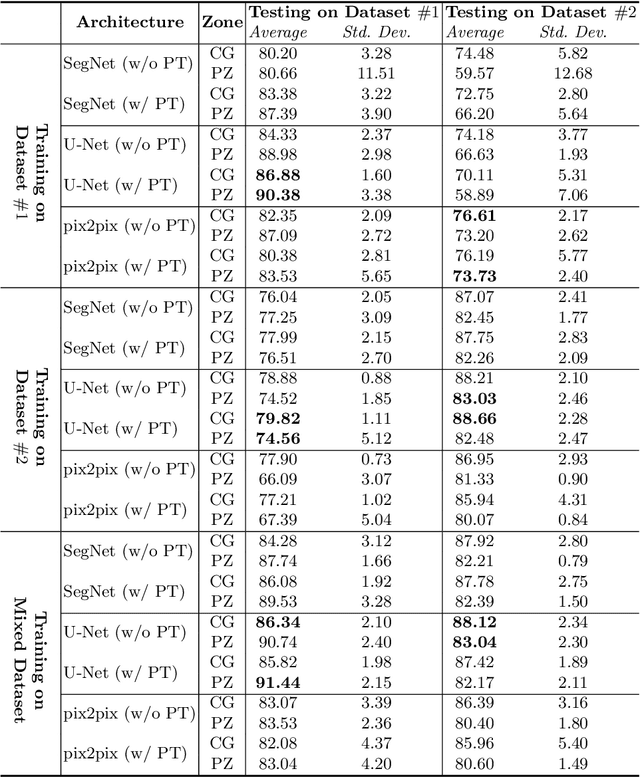

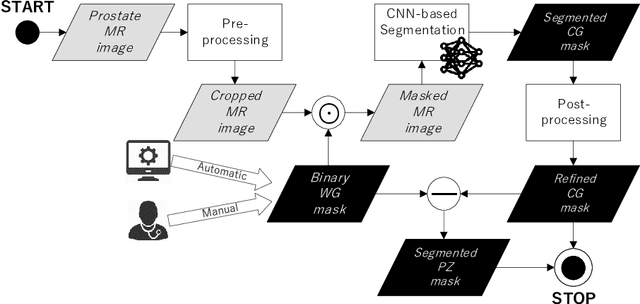

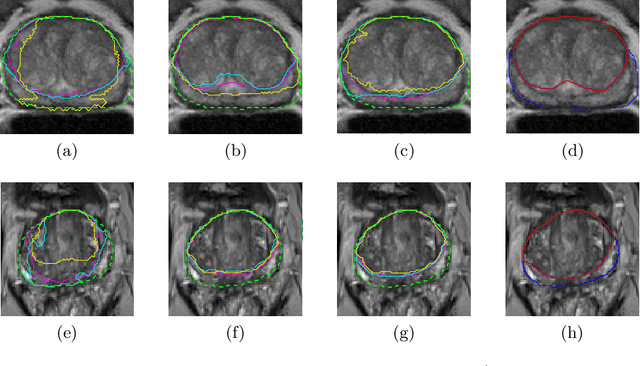

CNN-based Prostate Zonal Segmentation on T2-weighted MR Images: A Cross-dataset Study

Mar 29, 2019

Abstract:Prostate cancer is the most common cancer among US men. However, prostate imaging is still challenging despite the advances in multi-parametric Magnetic Resonance Imaging (MRI), which provides both morphologic and functional information pertaining to the pathological regions. Along with whole prostate gland segmentation, distinguishing between the Central Gland (CG) and Peripheral Zone (PZ) can guide towards differential diagnosis, since the frequency and severity of tumors differ in these regions; however, their boundary is often weak and fuzzy. This work presents a preliminary study on Deep Learning to automatically delineate the CG and PZ, aiming at evaluating the generalization ability of Convolutional Neural Networks (CNNs) on two multi-centric MRI prostate datasets. Especially, we compared three CNN-based architectures: SegNet, U-Net, and pix2pix. In such a context, the segmentation performances achieved with/without pre-training were compared in 4-fold cross-validation. In general, U-Net outperforms the other methods, especially when training and testing are performed on multiple datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge