Ruixiang Cao

OmniWheg: An Omnidirectional Wheel-Leg Transformable Robot

Mar 04, 2022

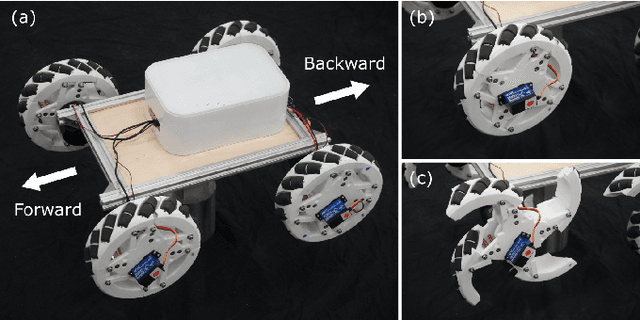

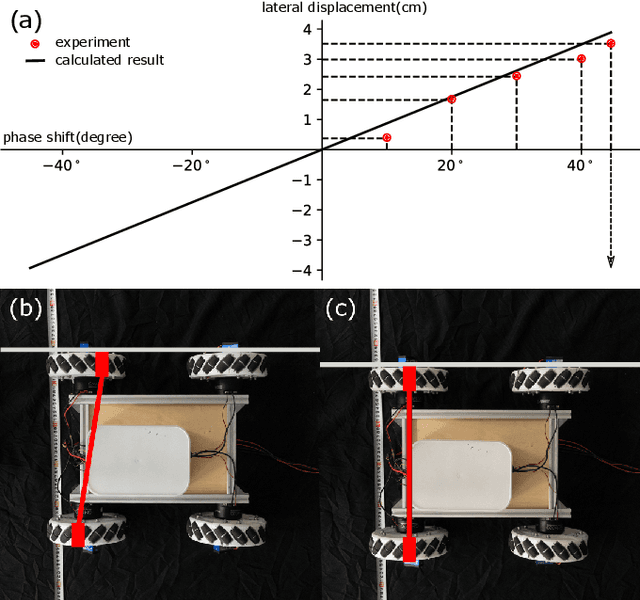

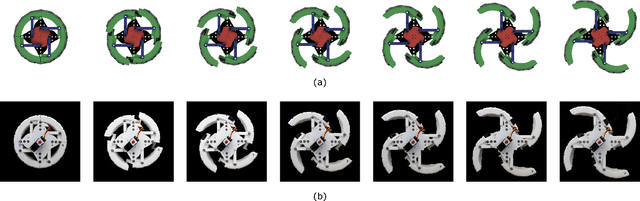

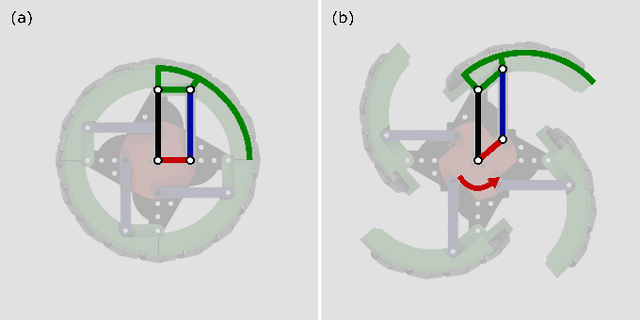

Abstract:This paper presents the design, analysis, and performance evaluation of an omnidirectional transformable wheel-leg robot called OmniWheg. We design a novel mechanism consisting of a separable Omni-wheel and 4-bar linkages, allowing the robot to transform between Omni-wheeled and legged modes smoothly. On wheeled mode, the robot can move in all directions and efficiently adjust the relative position of its wheels, while it can overcome common obstacles in legged mode, such as stairs and steps. Unlike other articles studying whegs, this implementation with omnidirectional wheels allows the correction of misalignments between right and left wheels before traversing obstacles, which effectively improves the success rate and simplifies the preparation process before the wheel-leg transformation. We describe the design concept, mechanism, and the dynamic characteristic of the wheel-leg structure. We then evaluate its performance in various scenarios, including passing obstacles, climbing steps of different heights, and turning/moving omnidirectionally. Our results confirm that this mobile platform can overcome common indoor obstacles and move flexibly on the flat ground with the new transformable wheel-leg mechanism, while keeping a high degree of stability.

Video-driven Neural Physically-based Facial Asset for Production

Feb 18, 2022

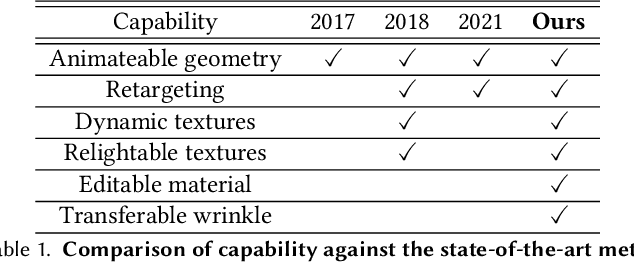

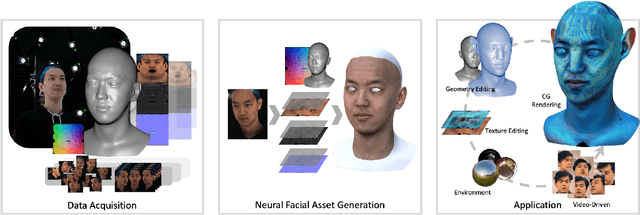

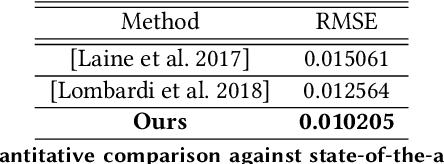

Abstract:Production-level workflows for producing convincing 3D dynamic human faces have long relied on a disarray of labor-intensive tools for geometry and texture generation, motion capture and rigging, and expression synthesis. Recent neural approaches automate individual components but the corresponding latent representations cannot provide artists explicit controls as in conventional tools. In this paper, we present a new learning-based, video-driven approach for generating dynamic facial geometries with high-quality physically-based assets. Two key components are well-structured latent spaces due to dense temporal samplings from videos and explicit facial expression controls to regulate the latent spaces. For data collection, we construct a hybrid multiview-photometric capture stage, coupling with an ultra-fast video camera to obtain raw 3D facial assets. We then model the facial expression, geometry and physically-based textures using separate VAEs with a global MLP-based expression mapping across the latent spaces, to preserve characteristics across respective attributes while maintaining explicit controls over geometry and texture. We also introduce to model the delta information as wrinkle maps for physically-base textures, achieving high-quality rendering of dynamic textures. We demonstrate our approach in high-fidelity performer-specific facial capture and cross-identity facial motion retargeting. In addition, our neural asset along with fast adaptation schemes can also be deployed to handle in-the-wild videos. Besides, we motivate the utility of our explicit facial disentangle strategy by providing promising physically-based editing results like geometry and material editing or winkle transfer with high realism. Comprehensive experiments show that our technique provides higher accuracy and visual fidelity than previous video-driven facial reconstruction and animation methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge