Qijun Zhao

Camouflage-aware Image-Text Retrieval via Expert Collaboration

Apr 01, 2026Abstract:Camouflaged scene understanding (CSU) has attracted significant attention due to its broad practical implications. However, in this field, robust image-text cross-modal alignment remains under-explored, hindering deeper understanding of camouflaged scenarios and their related applications. To this end, we focus on the typical image-text retrieval task, and formulate a new task dubbed ``camouflage-aware image-text retrieval'' (CA-ITR). We first construct a dedicated camouflage image-text retrieval dataset (CamoIT), comprising $\sim$10.5K samples with multi-granularity textual annotations. Benchmark results conducted on CamoIT reveal the underlying challenges of CA-ITR for existing cutting-edge retrieval techniques, which are mainly caused by objects' camouflage properties as well as those complex image contents. As a solution, we propose a camouflage-expert collaborative network (CECNet), which features a dual-branch visual encoder: one branch captures holistic image representations, while the other incorporates a dedicated model to inject representations of camouflaged objects. A novel confidence-conditioned graph attention (C\textsuperscript{2}GA) mechanism is incorporated to exploit the complementarity across branches. Comparative experiments show that CECNet achieves $\sim$29% overall CA-ITR accuracy boost, surpassing seven representative retrieval models. The dataset and code will be available at https://github.com/jiangyao-scu/CA-ITR.

Dehallu3D: Hallucination-Mitigated 3D Generation from Single Image via Cyclic View Consistency Refinement

Mar 02, 2026Abstract:Large 3D reconstruction models have revolutionized the 3D content generation field, enabling broad applications in virtual reality and gaming. Just like other large models, large 3D reconstruction models suffer from hallucinations as well, introducing structural outliers (e.g., odd holes or protrusions) that deviate from the input data. However, unlike other large models, hallucinations in large 3D reconstruction models remain severely underexplored, leading to malformed 3D-printed objects or insufficient immersion in virtual scenes. Such hallucinations majorly originate from that existing methods reconstruct 3D content from sparsely generated multi-view images which suffer from large viewpoint gaps and discontinuities. To mitigate hallucinations by eliminating the outliers, we propose Dehallu3D for 3D mesh generation. Our key idea is to design a balanced multi-view continuity constraint to enforce smooth transitions across dense intermediate viewpoints, while avoiding over-smoothing that could erase sharp geometric features. Therefore, Dehallu3D employs a plug-and-play optimization module with two key constraints: (i) adjacent consistency to ensure geometric continuity across views, and (ii) adaptive smoothness to retain fine details.We further propose the Outlier Risk Measure (ORM) metric to quantify geometric fidelity in 3D generation from the perspective of outliers. Extensive experiments show that Dehallu3D achieves high-fidelity 3D generation by effectively preserving structural details while removing hallucinated outliers.

Samba+: General and Accurate Salient Object Detection via A More Unified Mamba-based Framework

Feb 02, 2026Abstract:Existing salient object detection (SOD) models are generally constrained by the limited receptive fields of convolutional neural networks (CNNs) and quadratic computational complexity of Transformers. Recently, the emerging state-space model, namely Mamba, has shown great potential in balancing global receptive fields and computational efficiency. As a solution, we propose Saliency Mamba (Samba), a pure Mamba-based architecture that flexibly handles various distinct SOD tasks, including RGB/RGB-D/RGB-T SOD, video SOD (VSOD), RGB-D VSOD, and visible-depth-thermal SOD. Specifically, we rethink the scanning strategy of Mamba for SOD, and introduce a saliency-guided Mamba block (SGMB) that features a spatial neighborhood scanning (SNS) algorithm to preserve the spatial continuity of salient regions. A context-aware upsampling (CAU) method is also proposed to promote hierarchical feature alignment and aggregation by modeling contextual dependencies. As one step further, to avoid the "task-specific" problem as in previous SOD solutions, we develop Samba+, which is empowered by training Samba in a multi-task joint manner, leading to a more unified and versatile model. Two crucial components that collaboratively tackle challenges encountered in input of arbitrary modalities and continual adaptation are investigated. Specifically, a hub-and-spoke graph attention (HGA) module facilitates adaptive cross-modal interactive fusion, and a modality-anchored continual learning (MACL) strategy alleviates inter-modal conflicts together with catastrophic forgetting. Extensive experiments demonstrate that Samba individually outperforms existing methods across six SOD tasks on 22 datasets with lower computational cost, whereas Samba+ achieves even superior results on these tasks and datasets by using a single trained versatile model. Additional results further demonstrate the potential of our Samba framework.

CapeNext: Rethinking and refining dynamic support information for category-agnostic pose estimation

Nov 17, 2025

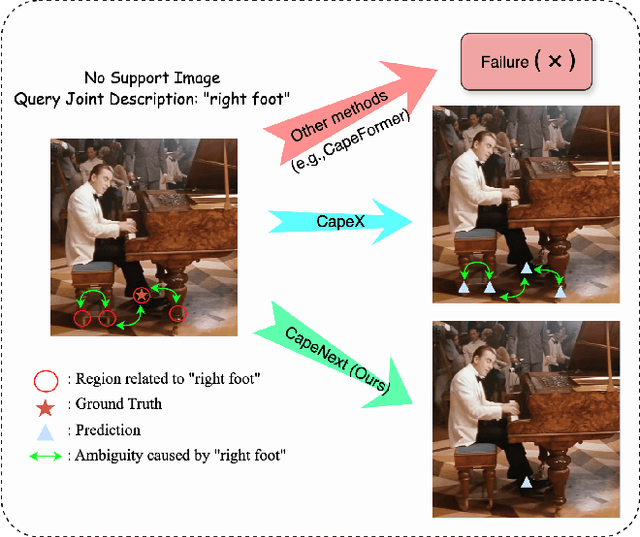

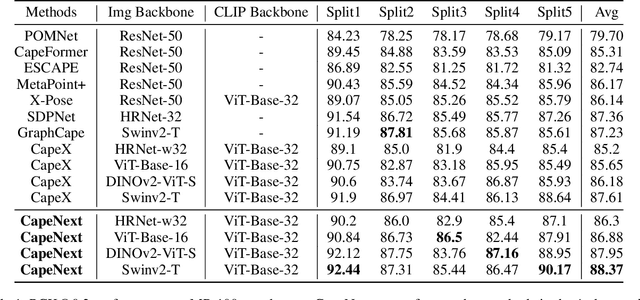

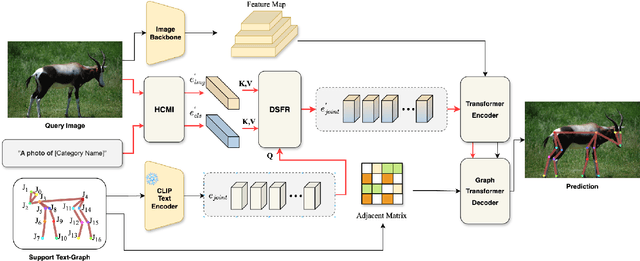

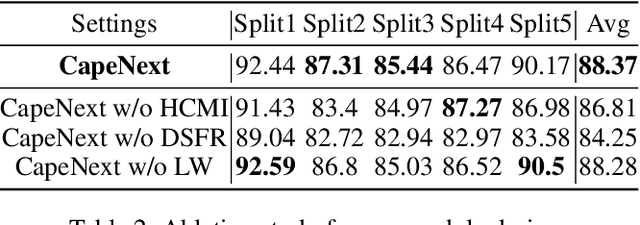

Abstract:Recent research in Category-Agnostic Pose Estimation (CAPE) has adopted fixed textual keypoint description as semantic prior for two-stage pose matching frameworks. While this paradigm enhances robustness and flexibility by disentangling the dependency of support images, our critical analysis reveals two inherent limitations of static joint embedding: (1) polysemy-induced cross-category ambiguity during the matching process(e.g., the concept "leg" exhibiting divergent visual manifestations across humans and furniture), and (2) insufficient discriminability for fine-grained intra-category variations (e.g., posture and fur discrepancies between a sleeping white cat and a standing black cat). To overcome these challenges, we propose a new framework that innovatively integrates hierarchical cross-modal interaction with dual-stream feature refinement, enhancing the joint embedding with both class-level and instance-specific cues from textual description and specific images. Experiments on the MP-100 dataset demonstrate that, regardless of the network backbone, CapeNext consistently outperforms state-of-the-art CAPE methods by a large margin.

Mamba-based Efficient Spatio-Frequency Motion Perception for Video Camouflaged Object Detection

Jul 31, 2025Abstract:Existing video camouflaged object detection (VCOD) methods primarily rely on spatial appearance features to perceive motion cues for breaking camouflage. However, the high similarity between foreground and background in VCOD results in limited discriminability of spatial appearance features (e.g., color and texture), restricting detection accuracy and completeness. Recent studies demonstrate that frequency features can not only enhance feature representation to compensate for appearance limitations but also perceive motion through dynamic variations in frequency energy. Furthermore, the emerging state space model called Mamba, enables efficient perception of motion cues in frame sequences due to its linear-time long-sequence modeling capability. Motivated by this, we propose a novel visual camouflage Mamba (Vcamba) based on spatio-frequency motion perception that integrates frequency and spatial features for efficient and accurate VCOD. Specifically, we propose a receptive field visual state space (RFVSS) module to extract multi-scale spatial features after sequence modeling. For frequency learning, we introduce an adaptive frequency component enhancement (AFE) module with a novel frequency-domain sequential scanning strategy to maintain semantic consistency. Then we propose a space-based long-range motion perception (SLMP) module and a frequency-based long-range motion perception (FLMP) module to model spatio-temporal and frequency-temporal sequences in spatial and frequency phase domains. Finally, the space and frequency motion fusion module (SFMF) integrates dual-domain features for unified motion representation. Experimental results show that our Vcamba outperforms state-of-the-art methods across 6 evaluation metrics on 2 datasets with lower computation cost, confirming the superiority of Vcamba. Our code is available at: https://github.com/BoydeLi/Vcamba.

Unleashing the Power of Motion and Depth: A Selective Fusion Strategy for RGB-D Video Salient Object Detection

Jul 29, 2025Abstract:Applying salient object detection (SOD) to RGB-D videos is an emerging task called RGB-D VSOD and has recently gained increasing interest, due to considerable performance gains of incorporating motion and depth and that RGB-D videos can be easily captured now in daily life. Existing RGB-D VSOD models have different attempts to derive motion cues, in which extracting motion information explicitly from optical flow appears to be a more effective and promising alternative. Despite this, there remains a key issue that how to effectively utilize optical flow and depth to assist the RGB modality in SOD. Previous methods always treat optical flow and depth equally with respect to model designs, without explicitly considering their unequal contributions in individual scenarios, limiting the potential of motion and depth. To address this issue and unleash the power of motion and depth, we propose a novel selective cross-modal fusion framework (SMFNet) for RGB-D VSOD, incorporating a pixel-level selective fusion strategy (PSF) that achieves optimal fusion of optical flow and depth based on their actual contributions. Besides, we propose a multi-dimensional selective attention module (MSAM) to integrate the fused features derived from PSF with the remaining RGB modality at multiple dimensions, effectively enhancing feature representation to generate refined features. We conduct comprehensive evaluation of SMFNet against 19 state-of-the-art models on both RDVS and DVisal datasets, making the evaluation the most comprehensive RGB-D VSOD benchmark up to date, and it also demonstrates the superiority of SMFNet over other models. Meanwhile, evaluation on five video benchmark datasets incorporating synthetic depth validates the efficacy of SMFNet as well. Our code and benchmark results are made publicly available at https://github.com/Jia-hao999/SMFNet.

QEMesh: Employing A Quadric Error Metrics-Based Representation for Mesh Generation

Apr 08, 2025Abstract:Mesh generation plays a crucial role in 3D content creation, as mesh is widely used in various industrial applications. Recent works have achieved impressive results but still face several issues, such as unrealistic patterns or pits on surfaces, thin parts missing, and incomplete structures. Most of these problems stem from the choice of shape representation or the capabilities of the generative network. To alleviate these, we extend PoNQ, a Quadric Error Metrics (QEM)-based representation, and propose a novel model, QEMesh, for high-quality mesh generation. PoNQ divides the shape surface into tiny patches, each represented by a point with its normal and QEM matrix, which preserves fine local geometry information. In our QEMesh, we regard these elements as generable parameters and design a unique latent diffusion model containing a novel multi-decoder VAE for PoNQ parameters generation. Given the latent code generated by the diffusion model, three parameter decoders produce several PoNQ parameters within each voxel cell, and an occupancy decoder predicts which voxel cells containing parameters to form the final shape. Extensive evaluations demonstrate that our method generates results with watertight surfaces and is comparable to state-of-the-art methods in several main metrics.

CamoSAM2: Motion-Appearance Induced Auto-Refining Prompts for Video Camouflaged Object Detection

Apr 01, 2025Abstract:The Segment Anything Model 2 (SAM2), a prompt-guided video foundation model, has remarkably performed in video object segmentation, drawing significant attention in the community. Due to the high similarity between camouflaged objects and their surroundings, which makes them difficult to distinguish even by the human eye, the application of SAM2 for automated segmentation in real-world scenarios faces challenges in camouflage perception and reliable prompts generation. To address these issues, we propose CamoSAM2, a motion-appearance prompt inducer (MAPI) and refinement framework to automatically generate and refine prompts for SAM2, enabling high-quality automatic detection and segmentation in VCOD task. Initially, we introduce a prompt inducer that simultaneously integrates motion and appearance cues to detect camouflaged objects, delivering more accurate initial predictions than existing methods. Subsequently, we propose a video-based adaptive multi-prompts refinement (AMPR) strategy tailored for SAM2, aimed at mitigating prompt error in initial coarse masks and further producing good prompts. Specifically, we introduce a novel three-step process to generate reliable prompts by camouflaged object determination, pivotal prompting frame selection, and multi-prompts formation. Extensive experiments conducted on two benchmark datasets demonstrate that our proposed model, CamoSAM2, significantly outperforms existing state-of-the-art methods, achieving increases of 8.0% and 10.1% in mIoU metric. Additionally, our method achieves the fastest inference speed compared to current VCOD models.

Patch-Depth Fusion: Dichotomous Image Segmentation via Fine-Grained Patch Strategy and Depth Integrity-Prior

Mar 08, 2025Abstract:Dichotomous Image Segmentation (DIS) is a high-precision object segmentation task for high-resolution natural images. The current mainstream methods focus on the optimization of local details but overlook the fundamental challenge of modeling the integrity of objects. We have found that the depth integrity-prior implicit in the the pseudo-depth maps generated by Depth Anything Model v2 and the local detail features of image patches can jointly address the above dilemmas. Based on the above findings, we have designed a novel Patch-Depth Fusion Network (PDFNet) for high-precision dichotomous image segmentation. The core of PDFNet consists of three aspects. Firstly, the object perception is enhanced through multi-modal input fusion. By utilizing the patch fine-grained strategy, coupled with patch selection and enhancement, the sensitivity to details is improved. Secondly, by leveraging the depth integrity-prior distributed in the depth maps, we propose an integrity-prior loss to enhance the uniformity of the segmentation results in the depth maps. Finally, we utilize the features of the shared encoder and, through a simple depth refinement decoder, improve the ability of the shared encoder to capture subtle depth-related information in the images. Experiments on the DIS-5K dataset show that PDFNet significantly outperforms state-of-the-art non-diffusion methods. Due to the incorporation of the depth integrity-prior, PDFNet achieves or even surpassing the performance of the latest diffusion-based methods while using less than 11% of the parameters of diffusion-based methods. The source code at https://github.com/Tennine2077/PDFNet.

Learning Adaptive and View-Invariant Vision Transformer with Multi-Teacher Knowledge Distillation for Real-Time UAV Tracking

Dec 28, 2024

Abstract:Visual tracking has made significant strides due to the adoption of transformer-based models. Most state-of-the-art trackers struggle to meet real-time processing demands on mobile platforms with constrained computing resources, particularly for real-time unmanned aerial vehicle (UAV) tracking. To achieve a better balance between performance and efficiency, we introduce AVTrack, an adaptive computation framework designed to selectively activate transformer blocks for real-time UAV tracking. The proposed Activation Module (AM) dynamically optimizes the ViT architecture by selectively engaging relevant components, thereby enhancing inference efficiency without significant compromise to tracking performance. Furthermore, to tackle the challenges posed by extreme changes in viewing angles often encountered in UAV tracking, the proposed method enhances ViTs' effectiveness by learning view-invariant representations through mutual information (MI) maximization. Two effective design principles are proposed in the AVTrack. Building on it, we propose an improved tracker, dubbed AVTrack-MD, which introduces the novel MI maximization-based multi-teacher knowledge distillation (MD) framework. It harnesses the benefits of multiple teachers, specifically the off-the-shelf tracking models from the AVTrack, by integrating and refining their outputs, thereby guiding the learning process of the compact student network. Specifically, we maximize the MI between the softened feature representations from the multi-teacher models and the student model, leading to improved generalization and performance of the student model, particularly in noisy conditions. Extensive experiments on multiple UAV tracking benchmarks demonstrate that AVTrack-MD not only achieves performance comparable to the AVTrack baseline but also reduces model complexity, resulting in a significant 17\% increase in average tracking speed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge