Prasad Tadepalli

Oregon State University

Entity-aware ELMo: Learning Contextual Entity Representation for Entity Disambiguation

Aug 22, 2019

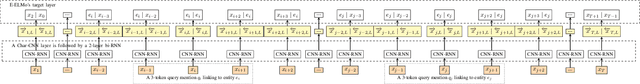

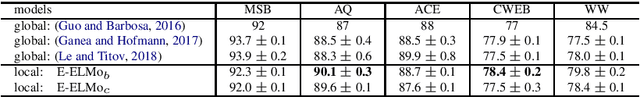

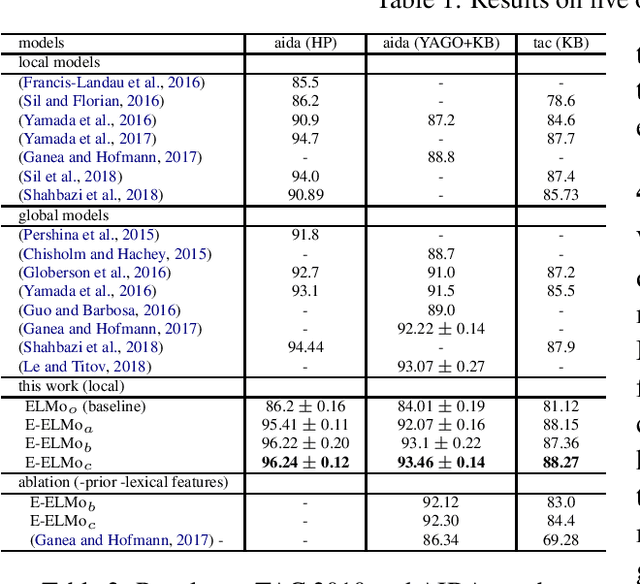

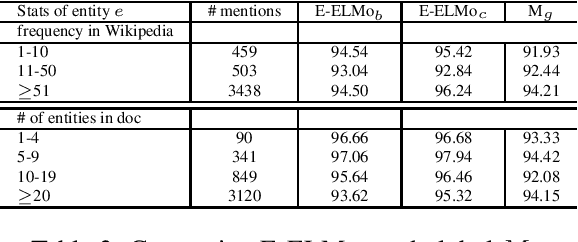

Abstract:We present a new local entity disambiguation system. The key to our system is a novel approach for learning entity representations. In our approach we learn an entity aware extension of Embedding for Language Model (ELMo) which we call Entity-ELMo (E-ELMo). Given a paragraph containing one or more named entity mentions, each mention is first defined as a function of the entire paragraph (including other mentions), then they predict the referent entities. Utilizing E-ELMo for local entity disambiguation, we outperform all of the state-of-the-art local and global models on the popular benchmarks by improving about 0.5\% on micro average accuracy for AIDA test-b with Yago candidate set. The evaluation setup of the training data and candidate set are the same as our baselines for fair comparison.

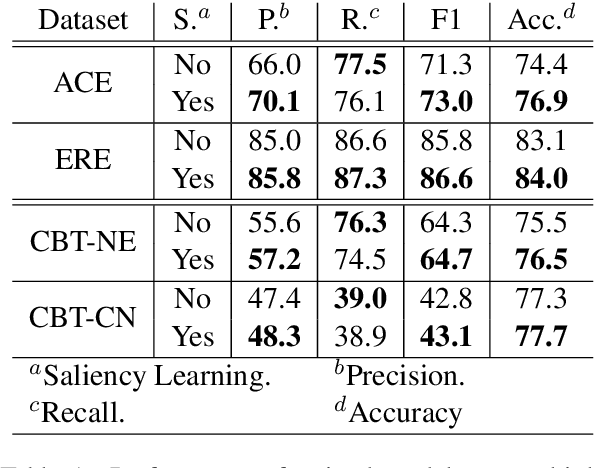

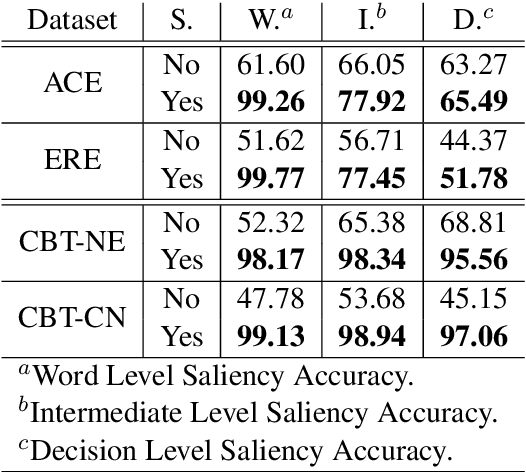

Saliency Learning: Teaching the Model Where to Pay Attention

Apr 04, 2019

Abstract:Deep learning has emerged as a compelling solution to many NLP tasks with remarkable performances. However, due to their opacity, such models are hard to interpret and trust. Recent work on explaining deep models has introduced approaches to provide insights toward the model's behaviour and predictions, which are helpful for assessing the reliability of the model's predictions. However, such methods do not improve the model's reliability. In this paper, we aim to teach the model to make the right prediction for the right reason by providing explanation training and ensuring the alignment of the model's explanation with the ground truth explanation. Our experimental results on multiple tasks and datasets demonstrate the effectiveness of the proposed method, which produces more reliable predictions while delivering better results compared to traditionally trained models.

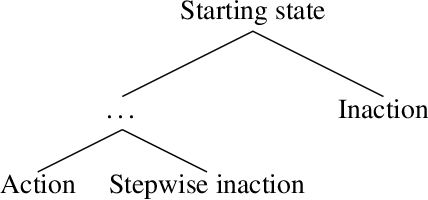

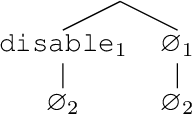

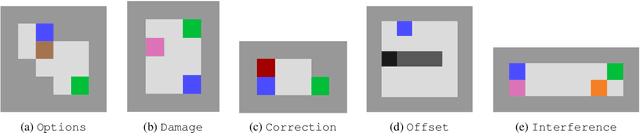

Conservative Agency via Attainable Utility Preservation

Feb 26, 2019

Abstract:Reward functions are often misspecified. An agent optimizing an incorrect reward function can change its environment in large, undesirable, and potentially irreversible ways. Work on impact measurement seeks a means of identifying (and thereby avoiding) large changes to the environment. We propose a novel impact measure which induces conservative, effective behavior across a range of situations. The approach attempts to preserve the attainable utility of auxiliary objectives. We evaluate our proposal on an array of benchmark tasks and show that it matches or outperforms relative reachability, the state-of-the-art in impact measurement.

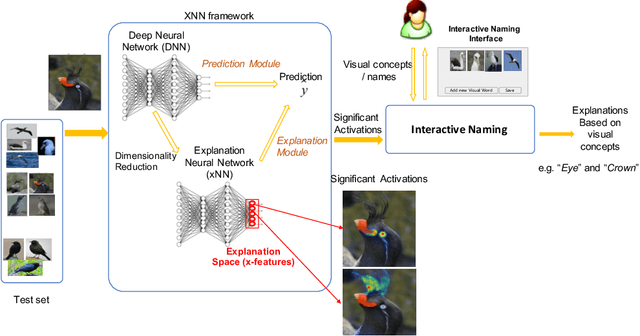

Interactive Naming for Explaining Deep Neural Networks: A Formative Study

Dec 20, 2018

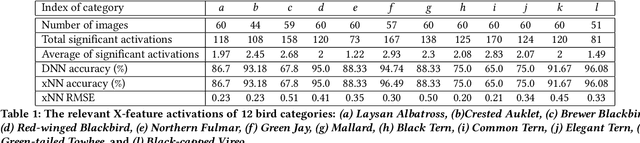

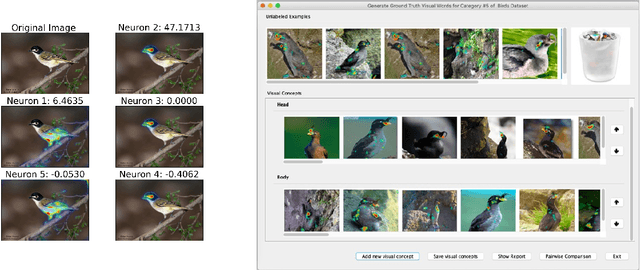

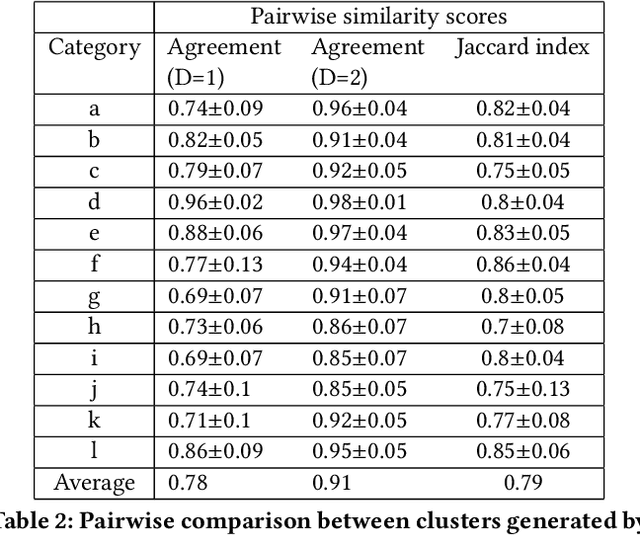

Abstract:We consider the problem of explaining the decisions of deep neural networks for image recognition in terms of human-recognizable visual concepts. In particular, given a test set of images, we aim to explain each classification in terms of a small number of image regions, or activation maps, which have been associated with semantic concepts by a human annotator. This allows for generating summary views of the typical reasons for classifications, which can help build trust in a classifier and/or identify example types for which the classifier may not be trusted. For this purpose, we developed a user interface for "interactive naming," which allows a human annotator to manually cluster significant activation maps in a test set into meaningful groups called "visual concepts". The main contribution of this paper is a systematic study of the visual concepts produced by five human annotators using the interactive naming interface. In particular, we consider the adequacy of the concepts for explaining the classification of test-set images, correspondence of the concepts to activations of individual neurons, and the inter-annotator agreement of visual concepts. We find that a large fraction of the activation maps have recognizable visual concepts, and that there is significant agreement between the different annotators about their denotations. Our work is an exploratory study of the interplay between machine learning and human recognition mediated by visualizations of the results of learning.

Learning Scripts as Hidden Markov Models

Sep 11, 2018

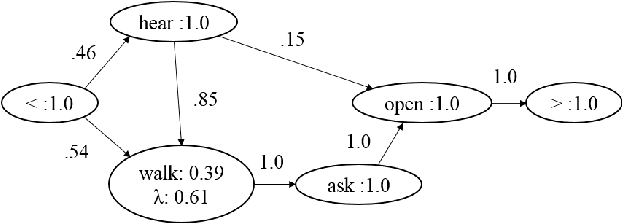

Abstract:Scripts have been proposed to model the stereotypical event sequences found in narratives. They can be applied to make a variety of inferences including filling gaps in the narratives and resolving ambiguous references. This paper proposes the first formal framework for scripts based on Hidden Markov Models (HMMs). Our framework supports robust inference and learning algorithms, which are lacking in previous clustering models. We develop an algorithm for structure and parameter learning based on Expectation Maximization and evaluate it on a number of natural datasets. The results show that our algorithm is superior to several informed baselines for predicting missing events in partial observation sequences.

Attentional Multi-Reading Sarcasm Detection

Sep 09, 2018

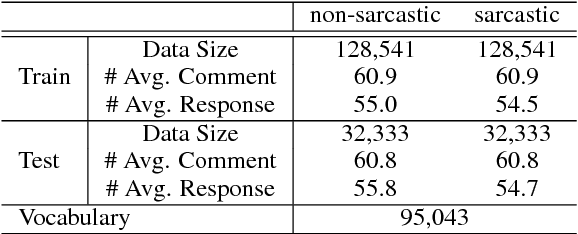

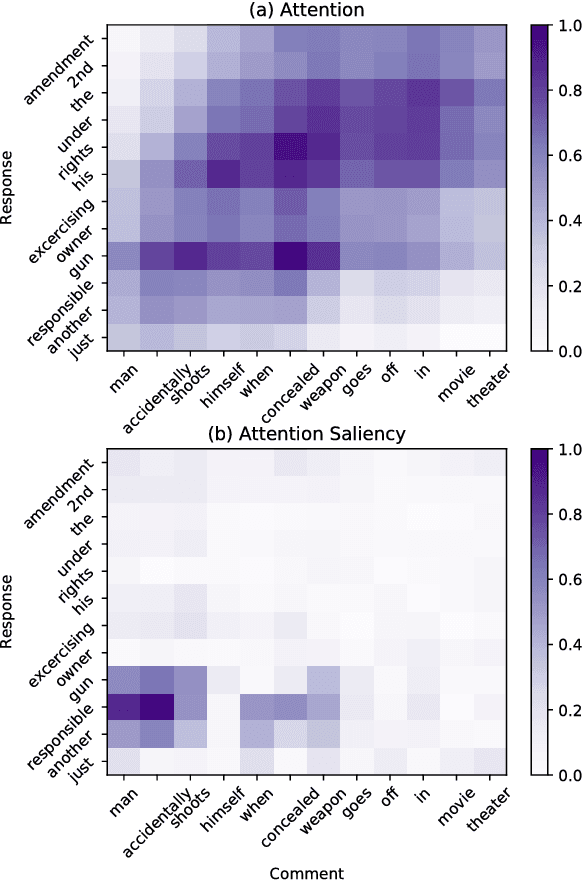

Abstract:Recognizing sarcasm often requires a deep understanding of multiple sources of information, including the utterance, the conversational context, and real world facts. Most of the current sarcasm detection systems consider only the utterance in isolation. There are some limited attempts toward taking into account the conversational context. In this paper, we propose an interpretable end-to-end model that combines information from both the utterance and the conversational context to detect sarcasm, and demonstrate its effectiveness through empirical evaluations. We also study the behavior of the proposed model to provide explanations for the model's decisions. Importantly, our model is capable of determining the impact of utterance and conversational context on the model's decisions. Finally, we provide an ablation study to illustrate the impact of different components of the proposed model.

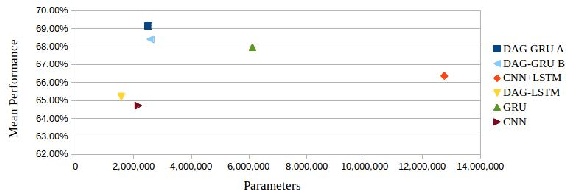

Event Detection with Neural Networks: A Rigorous Empirical Evaluation

Aug 26, 2018

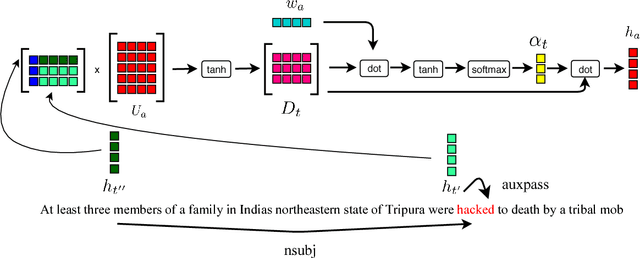

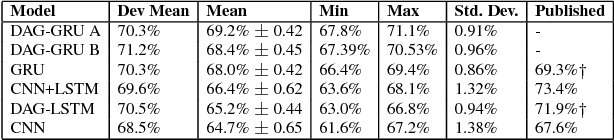

Abstract:Detecting events and classifying them into predefined types is an important step in knowledge extraction from natural language texts. While the neural network models have generally led the state-of-the-art, the differences in performance between different architectures have not been rigorously studied. In this paper we present a novel GRU-based model that combines syntactic information along with temporal structure through an attention mechanism. We show that it is competitive with other neural network architectures through empirical evaluations under different random initializations and training-validation-test splits of ACE2005 dataset.

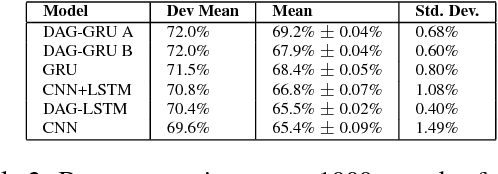

Interpreting Recurrent and Attention-Based Neural Models: a Case Study on Natural Language Inference

Aug 12, 2018

Abstract:Deep learning models have achieved remarkable success in natural language inference (NLI) tasks. While these models are widely explored, they are hard to interpret and it is often unclear how and why they actually work. In this paper, we take a step toward explaining such deep learning based models through a case study on a popular neural model for NLI. In particular, we propose to interpret the intermediate layers of NLI models by visualizing the saliency of attention and LSTM gating signals. We present several examples for which our methods are able to reveal interesting insights and identify the critical information contributing to the model decisions.

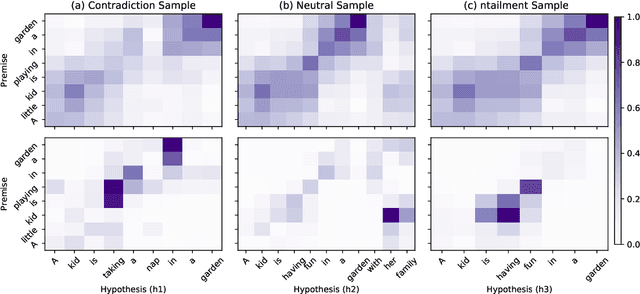

Joint Neural Entity Disambiguation with Output Space Search

Jun 19, 2018

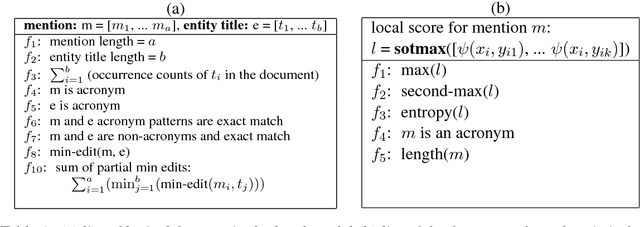

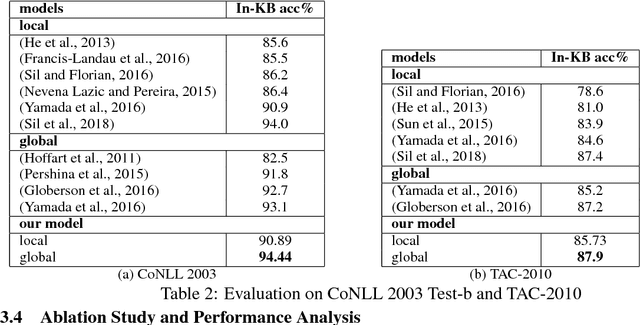

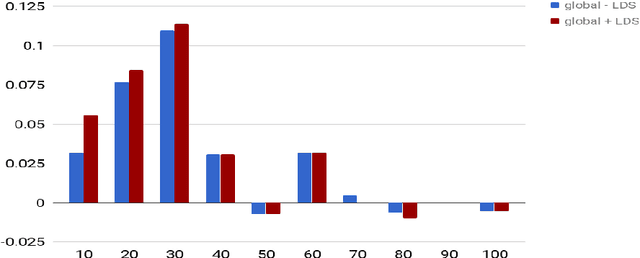

Abstract:In this paper, we present a novel model for entity disambiguation that combines both local contextual information and global evidences through Limited Discrepancy Search (LDS). Given an input document, we start from a complete solution constructed by a local model and conduct a search in the space of possible corrections to improve the local solution from a global view point. Our search utilizes a heuristic function to focus more on the least confident local decisions and a pruning function to score the global solutions based on their local fitness and the global coherences among the predicted entities. Experimental results on CoNLL 2003 and TAC 2010 benchmarks verify the effectiveness of our model.

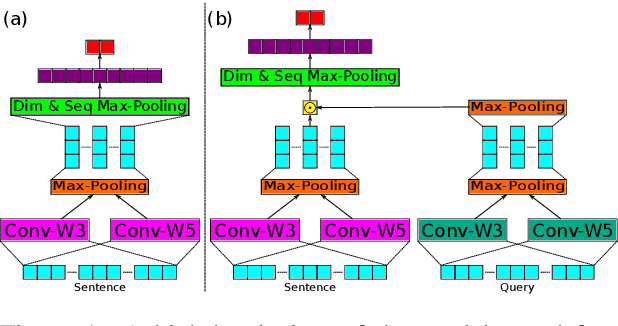

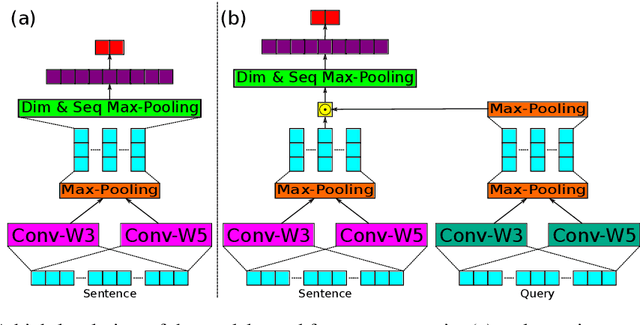

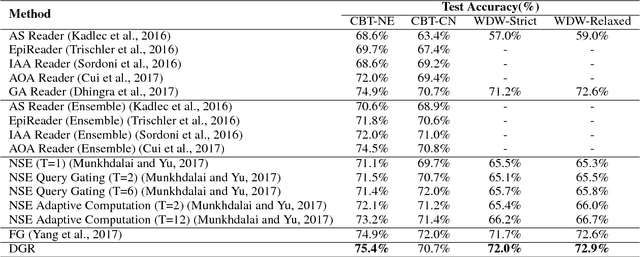

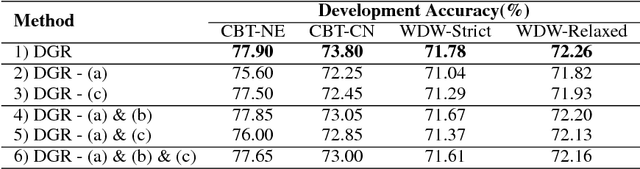

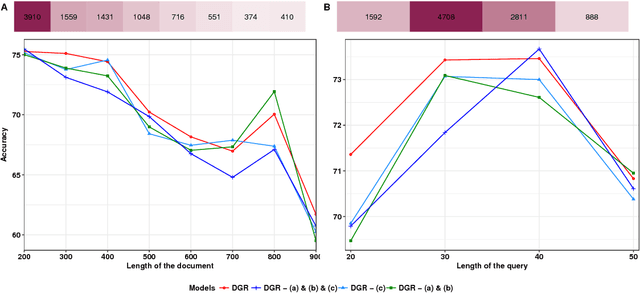

Dependent Gated Reading for Cloze-Style Question Answering

Jun 01, 2018

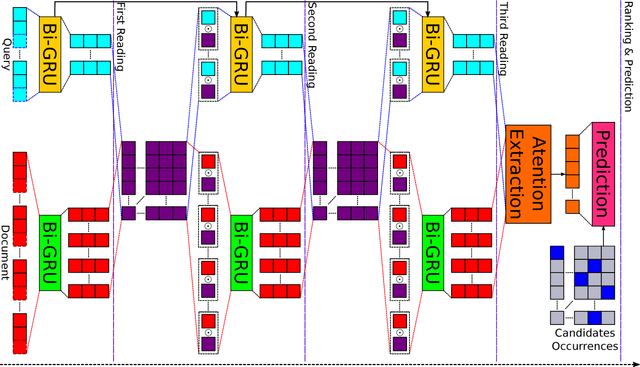

Abstract:We present a novel deep learning architecture to address the cloze-style question answering task. Existing approaches employ reading mechanisms that do not fully exploit the interdependency between the document and the query. In this paper, we propose a novel \emph{dependent gated reading} bidirectional GRU network (DGR) to efficiently model the relationship between the document and the query during encoding and decision making. Our evaluation shows that DGR obtains highly competitive performance on well-known machine comprehension benchmarks such as the Children's Book Test (CBT-NE and CBT-CN) and Who DiD What (WDW, Strict and Relaxed). Finally, we extensively analyze and validate our model by ablation and attention studies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge