Pou-Chun Kung

HumanSplatHMR: Closing the Loop Between Human Mesh Recovery and Gaussian Splatting Avatar

May 04, 2026

Abstract:Accurately recovering human pose and appearance from video is an essential component of scene reconstruction, with applications to motion capture, motion prediction, virtual reality, and digital twinning. Despite significant interest in building realistic human avatars from video, this paper demonstrates that existing methods do not accurately recover the 3D geometry of humans. ViT-based approaches are not consistently reliable and can overfit to 2D views, while NeRF- and Gaussian Splatting-based avatars treat pose and appearance separately, limiting rendering generalization to new poses. To resolve these shortcomings, this paper proposes HumanSplatHMR, a joint optimization framework that refines 3D human poses while simultaneously learning a high-fidelity avatar for novel-view and novel-pose synthesis. Our key insight is to close the loop between geometric pose estimation and differentiable rendering. Unlike prior human avatar methods that rely on accurate human pose obtained through motion capture systems or offline refinement, which are impractical in in-the-wild scenarios, our approach uses only human mesh estimates from a state-of-the-art human pose estimator to better reflect real-world conditions. Therefore, instead of using the human pose only as a deformation prior, HumanSplatHMR backpropagates photometric, segmentation, and depth losses through a differentiable renderer to the pose parameters and global position. This coupling refines the global 3D pose over time, improving accuracy and alignment while producing better renderings from novel views. Experiments show consistent improvements over pose recovery baselines that omit image-level refinement and avatar baselines that decouple pose estimation from avatar reconstruction.

RadarSplat-RIO: Indoor Radar-Inertial Odometry with Gaussian Splatting-Based Radar Bundle Adjustment

Apr 15, 2026

Abstract:Radar is more resilient to adverse weather and lighting conditions than visual and Lidar simultaneous localization and mapping (SLAM). However, most radar SLAM pipelines still rely heavily on frame-to-frame odometry, which leads to substantial drift. While loop closure can correct long-term errors, it requires revisiting places and relies on robust place recognition. In contrast, visual odometry methods typically leverage bundle adjustment (BA) to jointly optimize poses and map within a local window. However, an equivalent BA formulation for radar has remained largely unexplored. We present the first radar BA framework enabled by Gaussian Splatting (GS), a dense and differentiable scene representation. Our method jointly optimizes radar sensor poses and scene geometry using full range-azimuth-Doppler data, bringing the benefits of multi-frame BA to radar for the first time. When integrated with an existing radar-inertial odometry frontend, our approach significantly reduces pose drift and improves robustness. Across multiple indoor scenes, our radar BA achieves substantial gains over the prior radar-inertial odometry, reducing average absolute translational and rotational errors by 90% and 80%, respectively.

SonarSplat: Novel View Synthesis of Imaging Sonar via Gaussian Splatting

Mar 31, 2025

Abstract:In this paper, we present SonarSplat, a novel Gaussian splatting framework for imaging sonar that demonstrates realistic novel view synthesis and models acoustic streaking phenomena. Our method represents the scene as a set of 3D Gaussians with acoustic reflectance and saturation properties. We develop a novel method to efficiently rasterize learned Gaussians to produce a range/azimuth image that is faithful to the acoustic image formation model of imaging sonar. In particular, we develop a novel approach to model azimuth streaking in a Gaussian splatting framework. We evaluate SonarSplat using real-world datasets of sonar images collected from an underwater robotic platform in a controlled test tank and in a real-world river environment. Compared to the state-of-the-art, SonarSplat offers improved image synthesis capabilities (+2.5 dB PSNR). We also demonstrate that SonarSplat can be leveraged for azimuth streak removal and 3D scene reconstruction.

LiHi-GS: LiDAR-Supervised Gaussian Splatting for Highway Driving Scene Reconstruction

Dec 19, 2024

Abstract:Photorealistic 3D scene reconstruction plays an important role in autonomous driving, enabling the generation of novel data from existing datasets to simulate safety-critical scenarios and expand training data without additional acquisition costs. Gaussian Splatting (GS) facilitates real-time, photorealistic rendering with an explicit 3D Gaussian representation of the scene, providing faster processing and more intuitive scene editing than the implicit Neural Radiance Fields (NeRFs). While extensive GS research has yielded promising advancements in autonomous driving applications, existing methods overlook two critical aspects. First, they mainly focus on low-speed and feature-rich urban scenes and ignore the fact that highway scenarios play a significant role in autonomous driving. Second, while LiDARs are commonplace in autonomous driving platforms, existing methods learn primarily from images and use LiDAR only for initial estimates or without precise sensor modeling, thus missing out on the rich depth information LiDAR offers and limiting the ability to synthesize LiDAR data. In this paper, we propose a novel GS method for dynamic scene synthesis and editing with improved scene reconstruction through LiDAR supervision and support for LiDAR rendering. Unlike prior works that are tested mostly on urban datasets, to the best of our knowledge, we are the first to focus on the more challenging and highly relevant highway scenes for autonomous driving, with sparse sensor views and monotone backgrounds.

LONER: LiDAR Only Neural Representations for Real-Time SLAM

Sep 12, 2023

Abstract:This paper proposes LONER, the first real-time LiDAR SLAM algorithm that uses a neural implicit scene representation. Existing implicit mapping methods for LiDAR show promising results in large-scale reconstruction, but either require groundtruth poses or run slower than real-time. In contrast, LONER uses LiDAR data to train an MLP to estimate a dense map in real-time, while simultaneously estimating the trajectory of the sensor. To achieve real-time performance, this paper proposes a novel information-theoretic loss function that accounts for the fact that different regions of the map may be learned to varying degrees throughout online training. The proposed method is evaluated qualitatively and quantitatively on two open-source datasets. This evaluation illustrates that the proposed loss function converges faster and leads to more accurate geometry reconstruction than other loss functions used in depth-supervised neural implicit frameworks. Finally, this paper shows that LONER estimates trajectories competitively with state-of-the-art LiDAR SLAM methods, while also producing dense maps competitive with existing real-time implicit mapping methods that use groundtruth poses.

Radar Occupancy Prediction with Lidar Supervision while Preserving Long-Range Sensing and Penetrating Capabilities

Dec 08, 2021

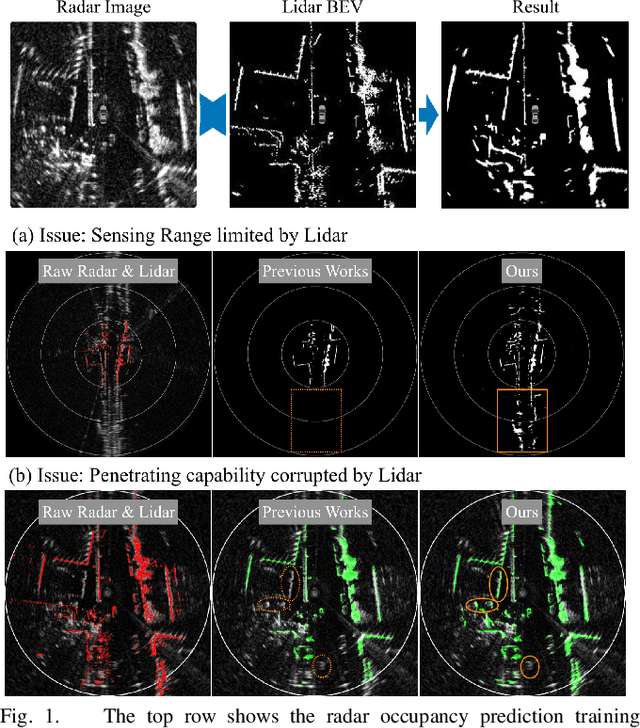

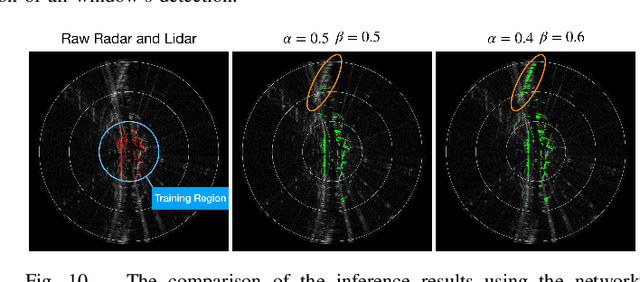

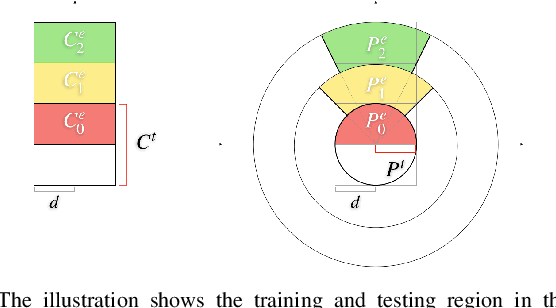

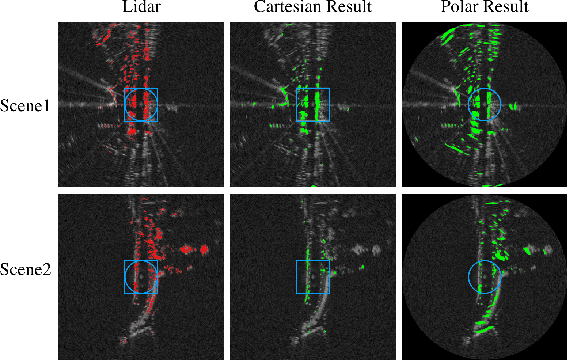

Abstract:Radar shows great potential for autonomous driving by accomplishing long-range sensing under diverse weather conditions. But radar is also a particularly challenging sensing modality because of radar noise. Recent works have made enormous progress in classifying free and occupied spaces in radar images by leveraging lidar label supervision. However, several issues remain unsolved. Firstly, the sensing distance of the results is limited by the sensing range of lidar. Secondly, lidar supervision degrades the performance of the results because of the physical sensing discrepancies between the two sensors. For example, some objects visible to lidar are invisible to radar, and some objects occluded in lidar scans are visible in radar images because of the radar's penetrating capability. These sensing differences cause false positives and degrade the penetrating capability, respectively. In this paper, we propose training data preprocessing and polar sliding window inference to solve these issues. The data preprocessing aims to reduce the effect of radar-invisible measurements in lidar scans. The polar sliding window inference aims to solve the limited sensing range issue by applying a near-range trained network to the long-range region. Instead of using the common Cartesian representation, we propose to use a polar representation to reduce the shape dissimilarity between long-range and near-range data. We find that extending a near-range trained network to long-range region inference in polar space yields 4.2 times higher IoU than in Cartesian space. In addition, the polar sliding window inference can preserve the radar penetrating capability by changing the viewpoint of the inference region, which makes some occluded measurements appear non-occluded to a pretrained network.
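The IoU figure quoted above compares predicted and labeled occupancy. As a minimal sketch of that metric only (not the paper's evaluation code), the following computes intersection-over-union for two binary occupancy grids. The function name `occupancy_iou` and the nested-list grid layout are assumptions made for illustration.

```python
def occupancy_iou(pred, label):
    """Intersection-over-union of two same-shaped binary grids.

    Both grids are nested lists of 0/1 values (1 = occupied).
    This layout is an illustrative assumption, not the paper's format.
    """
    intersection = 0
    union = 0
    for pred_row, label_row in zip(pred, label):
        for p, l in zip(pred_row, label_row):
            if p and l:
                intersection += 1
            if p or l:
                union += 1
    # Two empty grids agree perfectly, so define their IoU as 1.0.
    return intersection / union if union else 1.0


# Hypothetical 2x3 grids: 2 cells overlap, 4 cells are occupied in either grid.
predicted = [[1, 1, 0],
             [0, 1, 0]]
labeled = [[1, 0, 0],
           [0, 1, 1]]
iou = occupancy_iou(predicted, labeled)
```

The same function applies whether the grid cells index Cartesian (x, y) positions or polar (azimuth, range) bins, since it only compares cells at matching indices.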

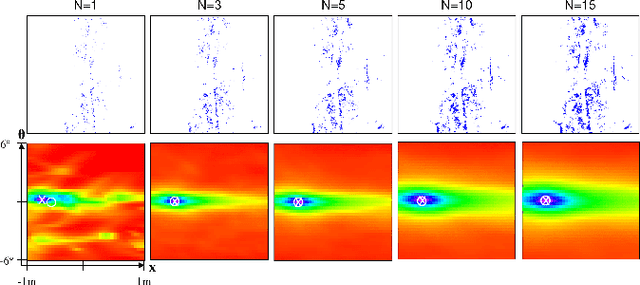

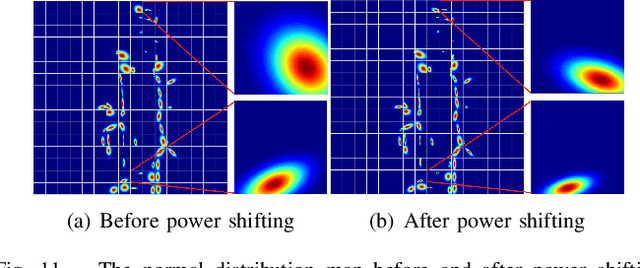

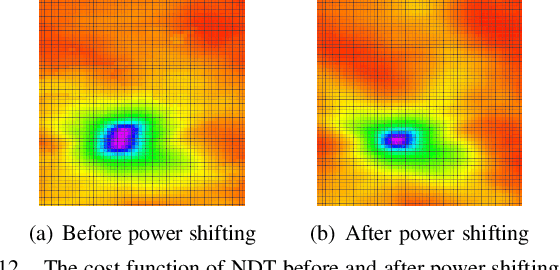

A Normal Distribution Transform-Based Radar Odometry Designed For Scanning and Automotive Radars

Mar 16, 2021

Abstract:Existing radar sensors can be classified into automotive and scanning radars. While most radar odometry (RO) methods are designed for only one specific type of radar, our RO method adapts to both scanning and automotive radars. Our RO pipeline is simple yet effective, consisting of thresholding, probabilistic submap building, and NDT-based radar scan matching. The proposed RO has been tested on two public radar datasets: the Oxford Radar RobotCar dataset and the nuScenes dataset, which provide scanning and automotive radar data, respectively. The results show that our approach surpasses state-of-the-art RO using either automotive or scanning radar by reducing translational error by 51% and 30%, respectively, and rotational error by 17% and 29%, respectively. In addition, we show that our RO achieves centimeter-level accuracy, as lidar odometry does, and that automotive and scanning RO have similar accuracy.
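For readers unfamiliar with the normal distribution transform (NDT), the core idea can be sketched as follows: the points falling in a map cell are summarized by a Gaussian, and a new point is scored by its likelihood under that Gaussian. This is a generic 2D illustration under stated assumptions, not the paper's implementation; the function names `fit_cell` and `ndt_score` and the regularization constant `eps` are hypothetical.

```python
import math


def fit_cell(points):
    """Fit a 2D Gaussian (mean, covariance) to the points in one NDT cell.

    Requires at least two points. Returns the mean (mx, my) and the
    covariance as (sxx, sxy, syy).
    """
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points) / (n - 1)
    syy = sum((y - my) ** 2 for _, y in points) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in points) / (n - 1)
    return (mx, my), (sxx, sxy, syy)


def ndt_score(point, mean, cov, eps=1e-6):
    """Unnormalized Gaussian score exp(-0.5 * d^T Sigma^-1 d) of a point.

    eps is added to the diagonal so near-degenerate cells stay invertible
    (an illustrative choice, not taken from the paper).
    """
    sxx, sxy, syy = cov
    sxx += eps
    syy += eps
    det = sxx * syy - sxy * sxy
    dx = point[0] - mean[0]
    dy = point[1] - mean[1]
    # Mahalanobis distance using the closed-form inverse of a 2x2 matrix.
    mahalanobis_sq = (dx * dx * syy - 2.0 * dx * dy * sxy + dy * dy * sxx) / det
    return math.exp(-0.5 * mahalanobis_sq)


# Hypothetical cell contents: four points at the corners of a square.
cell_mean, cell_cov = fit_cell([(0.0, 0.0), (2.0, 0.0), (0.0, 2.0), (2.0, 2.0)])
near = ndt_score((1.1, 0.9), cell_mean, cell_cov)
far = ndt_score((4.0, 4.0), cell_mean, cell_cov)
```

In a scan matcher, the candidate transform that maximizes the summed score of all transformed scan points against the submap cells would be selected; that optimization step is omitted here.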
