Peng Meng

Improving Machine Reading Comprehension with Single-choice Decision and Transfer Learning

Nov 06, 2020

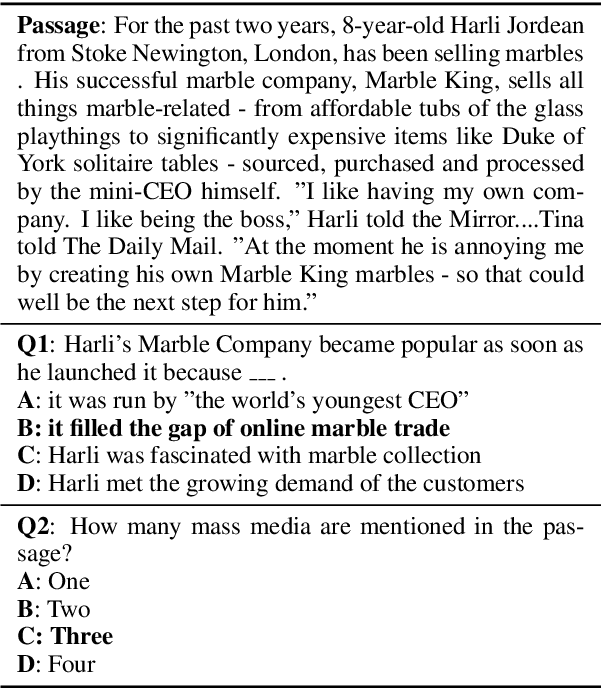

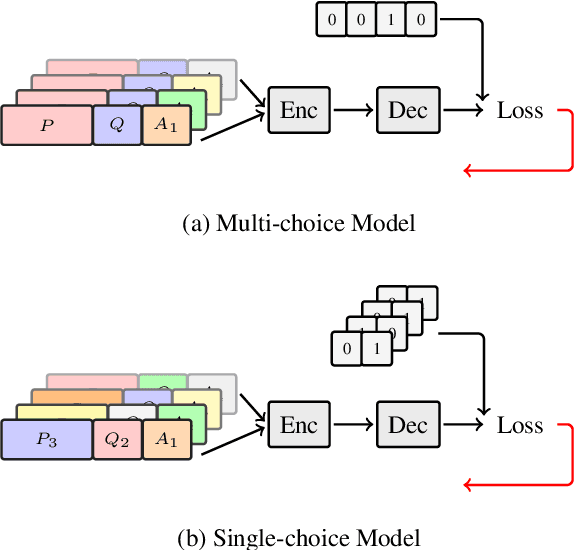

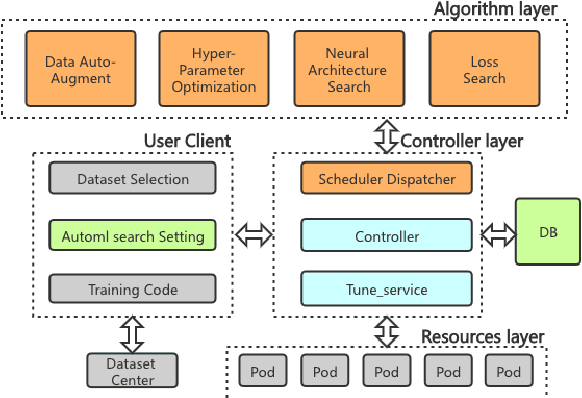

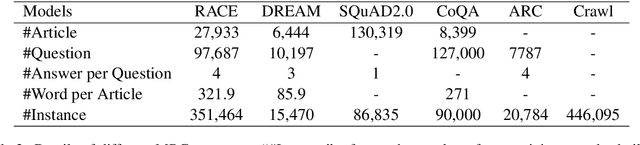

Abstract:Multi-choice Machine Reading Comprehension (MMRC) aims to select the correct answer from a set of options based on a given passage and question. Due to task specific of MMRC, it is no-trivial to transfer knowledge from other MRC tasks such as SQuAD, Dream. In this paper, we simply reconstruct multi-choice to single-choice by training a binary classification to distinguish whether a certain answer is correct. Then select the option with the highest confidence score. We construct our model upon ALBERT-xxlarge model and estimate it on the RACE dataset. During training, We adopt AutoML strategy to tune better parameters. Experimental results show that the single-choice is better than multi-choice. In addition, by transferring knowledge from other kinds of MRC tasks, our model achieves the new state of the art results in both single and ensemble settings.

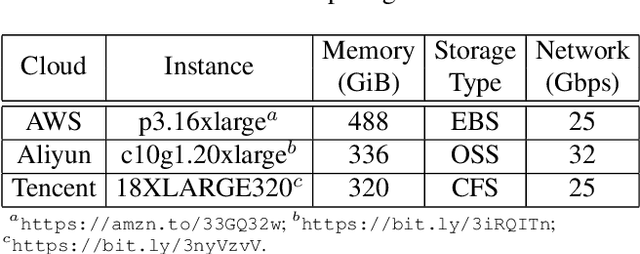

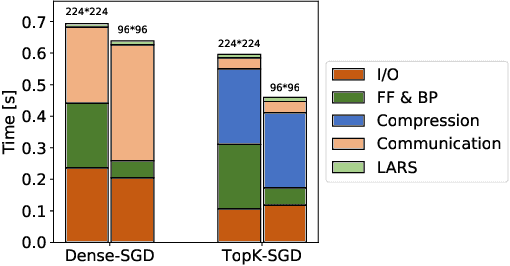

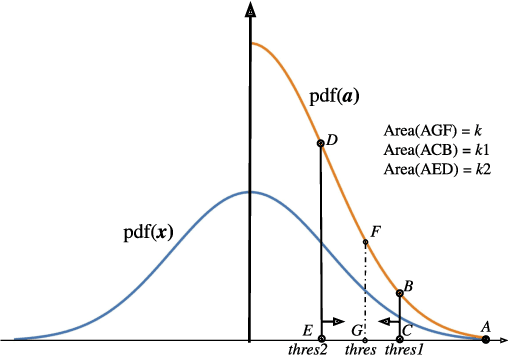

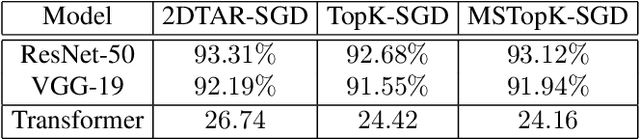

Towards Scalable Distributed Training of Deep Learning on Public Cloud Clusters

Oct 20, 2020

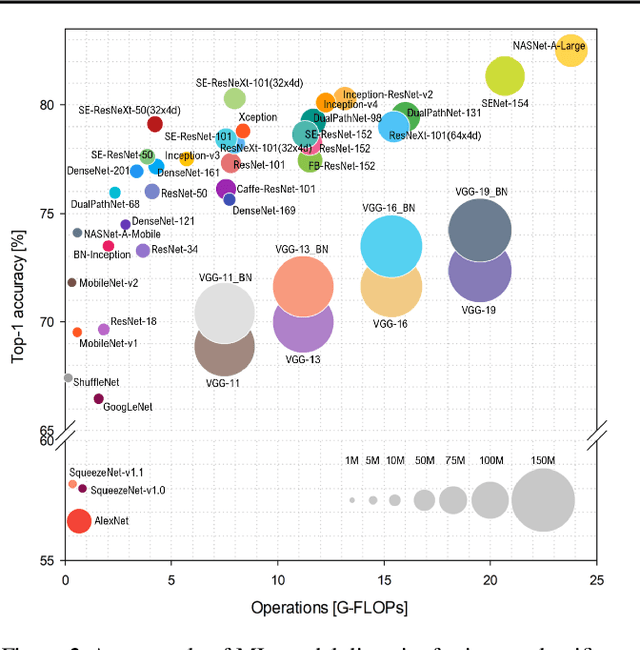

Abstract:Distributed training techniques have been widely deployed in large-scale deep neural networks (DNNs) training on dense-GPU clusters. However, on public cloud clusters, due to the moderate inter-connection bandwidth between instances, traditional state-of-the-art distributed training systems cannot scale well in training large-scale models. In this paper, we propose a new computing and communication efficient top-k sparsification communication library for distributed training. To further improve the system scalability, we optimize I/O by proposing a simple yet efficient multi-level data caching mechanism and optimize the update operation by introducing a novel parallel tensor operator. Experimental results on a 16-node Tencent Cloud cluster (each node with 8 Nvidia Tesla V100 GPUs) show that our system achieves 25%-40% faster than existing state-of-the-art systems on CNNs and Transformer. We finally break the record on DAWNBench on training ResNet-50 to 93% top-5 accuracy on ImageNet.

MLPerf Inference Benchmark

Nov 06, 2019

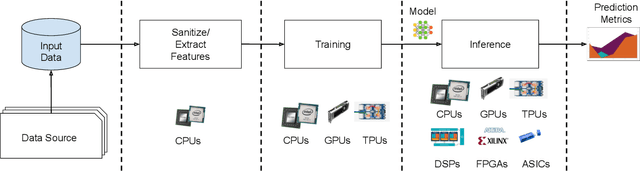

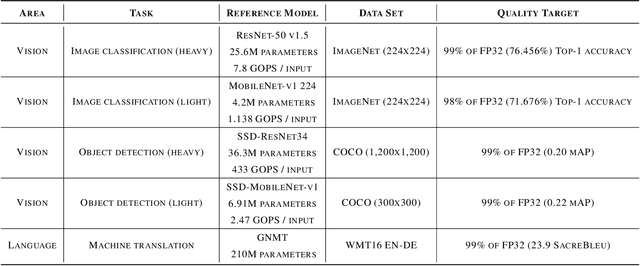

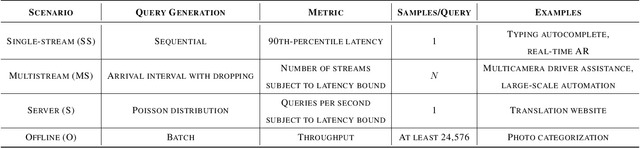

Abstract:Machine-learning (ML) hardware and software system demand is burgeoning. Driven by ML applications, the number of different ML inference systems has exploded. Over 100 organizations are building ML inference chips, and the systems that incorporate existing models span at least three orders of magnitude in power consumption and four orders of magnitude in performance; they range from embedded devices to data-center solutions. Fueling the hardware are a dozen or more software frameworks and libraries. The myriad combinations of ML hardware and ML software make assessing ML-system performance in an architecture-neutral, representative, and reproducible manner challenging. There is a clear need for industry-wide standard ML benchmarking and evaluation criteria. MLPerf Inference answers that call. Driven by more than 30 organizations as well as more than 200 ML engineers and practitioners, MLPerf implements a set of rules and practices to ensure comparability across systems with wildly differing architectures. In this paper, we present the method and design principles of the initial MLPerf Inference release. The first call for submissions garnered more than 600 inference-performance measurements from 14 organizations, representing over 30 systems that show a range of capabilities.

More than Word Frequencies: Authorship Attribution via Natural Frequency Zoned Word Distribution Analysis

Aug 15, 2012

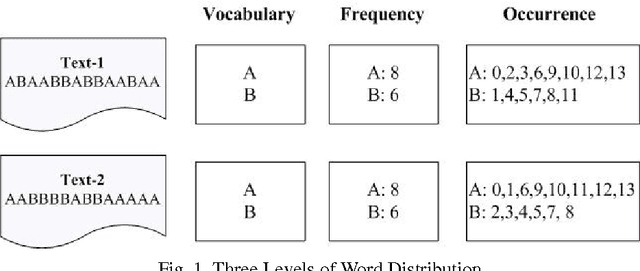

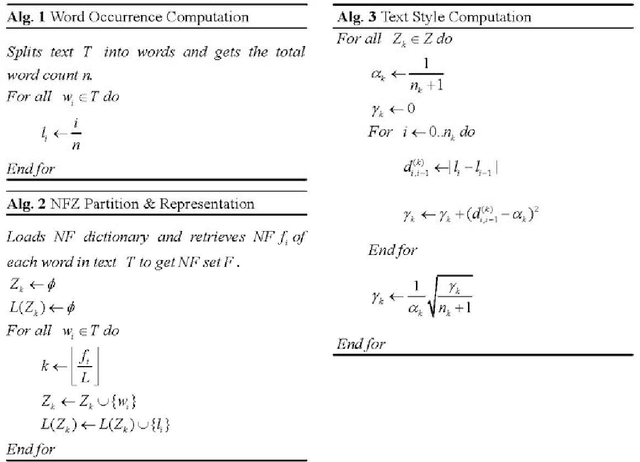

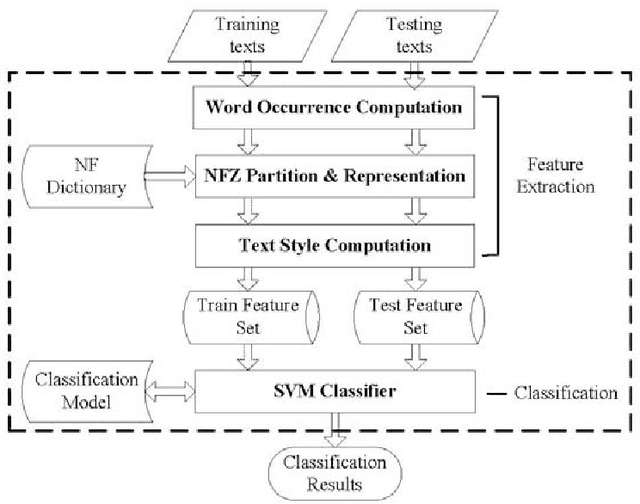

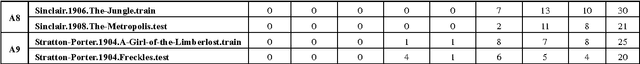

Abstract:With such increasing popularity and availability of digital text data, authorships of digital texts can not be taken for granted due to the ease of copying and parsing. This paper presents a new text style analysis called natural frequency zoned word distribution analysis (NFZ-WDA), and then a basic authorship attribution scheme and an open authorship attribution scheme for digital texts based on the analysis. NFZ-WDA is based on the observation that all authors leave distinct intrinsic word usage traces on texts written by them and these intrinsic styles can be identified and employed to analyze the authorship. The intrinsic word usage styles can be estimated through the analysis of word distribution within a text, which is more than normal word frequency analysis and can be expressed as: which groups of words are used in the text; how frequently does each group of words occur; how are the occurrences of each group of words distributed in the text. Next, the basic authorship attribution scheme and the open authorship attribution scheme provide solutions for both closed and open authorship attribution problems. Through analysis and extensive experimental studies, this paper demonstrates the efficiency of the proposed method for authorship attribution.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge