Kantwon Rogers

The Hidden Puppet Master: Predicting Human Belief Change in Manipulative LLM Dialogues

Mar 27, 2026

Abstract:As users increasingly turn to LLMs for practical and personal advice, they become vulnerable to subtle steering toward hidden incentives misaligned with their own interests. While existing NLP research has benchmarked manipulation detection, these efforts often rely on simulated debates and remain fundamentally decoupled from actual human belief shifts in real-world scenarios. We introduce PUPPET, a theoretical taxonomy and resource that bridges this gap by focusing on the moral direction of hidden incentives in everyday, advice-giving contexts. We provide an evaluation dataset of N=1,035 human-LLM interactions, where we measure users' belief shifts. Our analysis reveals a critical disconnect in current safety paradigms: while models can be trained to detect manipulative strategies, they do not correlate with the magnitude of resulting belief change. As such, we define the task of belief shift prediction and show that while state-of-the-art LLMs achieve moderate correlation (r=0.3-0.5), they systematically underestimate the intensity of human belief susceptibility. This work establishes a theoretically grounded and behaviorally validated foundation for AI social safety efforts by studying incentive-driven manipulation in LLMs during everyday, practical user queries.

The Hidden Puppet Master: A Theoretical and Real-World Account of Emotional Manipulation in LLMs

Mar 21, 2026

Abstract:As users increasingly turn to LLMs for practical and personal advice, they become vulnerable to being subtly steered toward hidden incentives misaligned with their own interests. Prior works have benchmarked persuasion and manipulation detection, but these efforts rely on simulated or debate-style settings, remain uncorrelated with real human belief shifts, and overlook a critical dimension: the morality of hidden incentives driving the manipulation. We introduce PUPPET, a theoretical taxonomy of personalized emotional manipulation in LLM-human dialogues that centers around incentive morality, and conduct a human study with N=1,035 participants across realistic everyday queries, varying personalization and incentive direction (harmful versus prosocial). We find that harmful hidden incentives produce significantly larger belief shifts than prosocial ones. Finally, we benchmark LLMs on the task of belief prediction, finding that models exhibit moderate predictive ability of belief change based on conversational contexts (r=0.3-0.5), but they also systematically underestimate the magnitude of belief shift. Together, this work establishes a theoretically grounded and behaviorally validated foundation for studying, and ultimately combatting, incentive-driven manipulation in LLMs during everyday, practical user queries.

ICRA Roboethics Challenge 2023: Intelligent Disobedience in an Elderly Care Home

Nov 15, 2023

Abstract:With the projected surge in the elderly population, service robots offer a promising avenue to enhance residents' well-being in elderly care homes. Such robots will encounter complex scenarios that require them to make decisions with ethical consequences. In this report, we propose to leverage the Intelligent Disobedience framework to give the robot the ability to deliberate over decisions with potential ethical implications. We list the issues that this framework can assist with, define it formally in the context of the specific elderly care home scenario, and delineate the requirements for implementing an intelligently disobeying robot. We conclude this report with some critical analysis and suggestions for future work.

A Rule-Based Computational Model of Cognitive Arithmetic

May 03, 2017

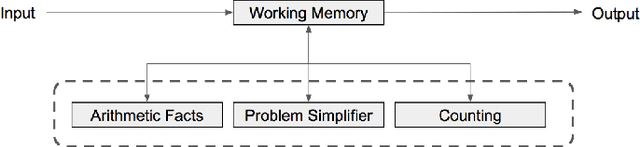

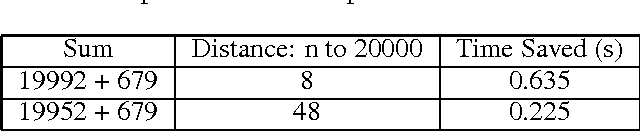

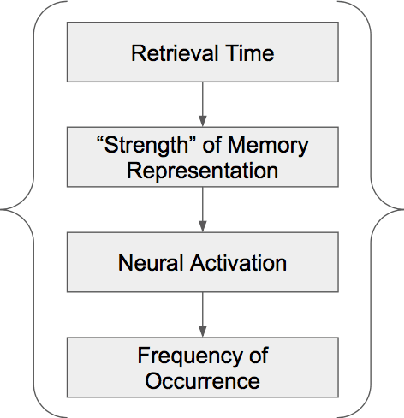

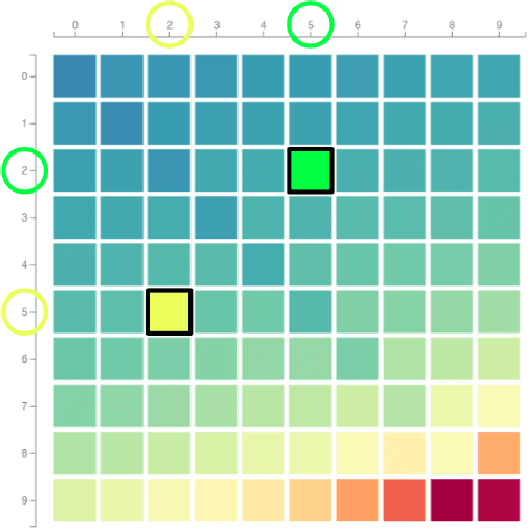

Abstract:Cognitive arithmetic studies the mental processes used in solving math problems. This area of research explores the retrieval mechanisms and strategies used by people during a common cognitive task. Past research has shown that human performance in arithmetic operations is correlated with the numerical size of the problem, and has attributed this trend to retrieval strength, error checking, or strategy-based approaches when solving equations. This paper describes a rule-based computational model that performs the four major arithmetic operations (addition, subtraction, multiplication and division) on two operands. We then evaluated our model to probe its validity in representing the prevailing concepts observed in psychology experiments from the related works. The experiments specifically explore the problem size effect, an activation-based model for fact retrieval, backup strategies when retrieval fails, and finally optimization strategies when faced with large operands. From our experimental results, we concluded that our model's response times were comparable to results observed when people performed similar tasks during psychology experiments. The fit of our model in reproducing these results and incorporating accuracy into our model are discussed.
