Jing Chi

TRACER: Verifiable Generative Provenance for Multimodal Tool-Using Agents

May 11, 2026

Abstract: Multimodal large language models increasingly solve vision-centric tasks by calling external tools for visual inspection, OCR, retrieval, calculation, and multi-step reasoning. Current tool-using agents usually expose the executed tool trajectory and the final answer, but they rarely specify which tool observation supports each generated claim. We call this missing claim-level dependency structure the provenance gap. The gap makes tool use hard to verify and hard to optimize, because useful evidence, redundant exploration, and unsupported reasoning are mixed in the same trajectory. We introduce TRACER, a framework for verifiable generative provenance in multimodal tool-using agents. Instead of adding citations after generation, TRACER generates each answer sentence together with a structured provenance record that identifies the supporting tool turn, evidence unit, and semantic support relation. Its relation space contains Quotation, Compression, and Inference, covering direct reuse, faithful condensation, and grounded derivation. TRACER verifies each record through schema checking, tool-turn alignment, source authenticity, and relation rationality, and then converts verified provenance into traceability constraints and provenance-derived local credit for reinforcement learning. We further construct TRACE-Bench, a benchmark for sentence-level provenance reconstruction from coarse multimodal tool trajectories. On TRACE-Bench, simply adding tools often introduces noise. With Qwen3-VL-8B, TRACER reaches 78.23% answer accuracy and 95.72% summary accuracy, outperforming the strongest closed-source tool-augmented baseline by 23.80 percentage points. Compared with tool-only supervised fine-tuning, it also reduces total test-set tool calls from 4949 to 3486. These results show that reliable multimodal tool reasoning depends on provenance-aware use of observations, not on more tool calls alone.
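To make the idea of a per-sentence provenance record concrete, here is a minimal Python sketch. It is not TRACER's implementation: the class name `ProvenanceRecord`, its field names, and the `check_record` helper are all hypothetical, and the substring test is only a crude stand-in for source authenticity. Only the three relation names come from the abstract. The fourth check, relation rationality, needs semantic judgment and is not attempted here.

```python
from dataclasses import dataclass
from enum import Enum


class Relation(Enum):
    # The three support relations named in the abstract.
    QUOTATION = "Quotation"      # direct reuse
    COMPRESSION = "Compression"  # faithful condensation
    INFERENCE = "Inference"      # grounded derivation


@dataclass(frozen=True)
class ProvenanceRecord:
    # Hypothetical field names, chosen for illustration only.
    sentence: str        # generated answer sentence
    tool_turn: int       # index of the supporting tool turn
    evidence: str        # evidence unit taken from that turn's observation
    relation: Relation   # semantic support relation


def check_record(record, observations):
    """Return a list of problems; an empty list means these checks passed.

    `observations` is a list of tool-observation strings, one per tool turn.
    """
    problems = []
    # Schema check: field types must match the record definition.
    if not isinstance(record.relation, Relation):
        problems.append("schema: unknown relation")
    if not isinstance(record.tool_turn, int):
        problems.append("schema: tool_turn must be an integer")
        return problems
    # Tool-turn alignment: the cited turn must exist in the trajectory.
    if not 0 <= record.tool_turn < len(observations):
        problems.append("alignment: tool turn does not exist")
        return problems
    # Source authenticity (assumed here to mean a verbatim substring match).
    if record.evidence not in observations[record.tool_turn]:
        problems.append("authenticity: evidence not found in cited observation")
    return problems
```

For example, with `observations = ["OCR: Total due 42.00 USD"]`, a record that cites turn 0 with evidence `"Total due 42.00 USD"` passes, while one that cites turn 5 is flagged as misaligned.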

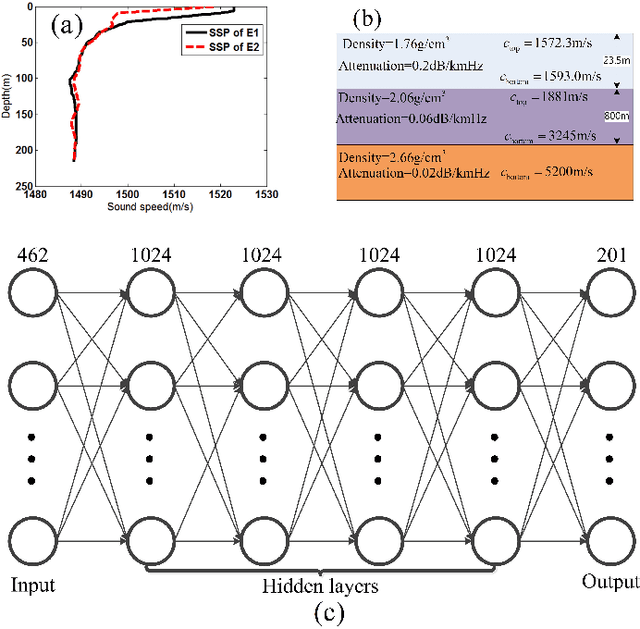

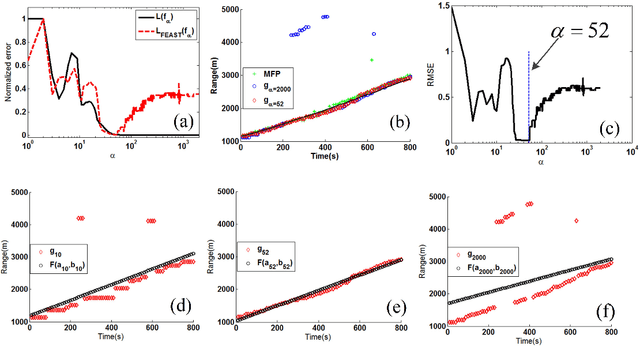

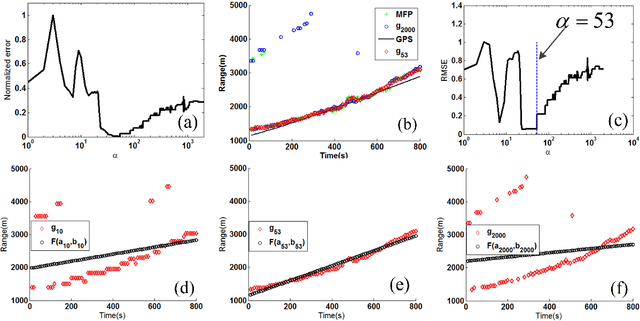

Sound source ranging using a feed-forward neural network with fitting-based early stopping

Apr 01, 2019

Abstract: When a feed-forward neural network (FNN) is trained for source ranging in an ocean waveguide, it is difficult to evaluate the range accuracy of the FNN on unlabeled test data. A fitting-based early stopping (FEAST) method is introduced to evaluate the range error of the FNN on test data where the source distance is unknown. With FEAST, training is stopped when the evaluated range error of the FNN on the test data reaches its minimum, which helps improve the ranging accuracy of the FNN on that data. FEAST is demonstrated on simulated and experimental data.
